Validating LSER Models with Independent Data Sets: A Strategic Framework for Pharmaceutical and Biomedical Research

This article provides a comprehensive guide to the validation of Linear Solvation-Energy Relationship (LSER) models using independent data sets, a critical step for ensuring reliability in pharmaceutical and biomedical applications.

Abstract

This article provides a comprehensive guide to the validation of Linear Solvation-Energy Relationship (LSER) models using independent data sets, a critical step for ensuring reliability in pharmaceutical and biomedical applications. We explore the foundational thermodynamics of the LSER model and its rich database of molecular descriptors. A detailed methodological framework is presented for applying LSER to real-world problems, followed by targeted troubleshooting and optimization strategies to overcome common challenges like model overfitting and data scarcity. Finally, the article establishes rigorous protocols for external validation and comparative analysis against other QSPR approaches, aligning with regulatory standards such as ICH Q2(R1) to build confidence in model predictions for drug development.

Demystifying the LSER Model: Thermodynamic Principles and the Treasure Trove of Solvation Data

The Abraham Solvation Parameter Model is a linear free-energy relationship (LFER) that quantitatively predicts the partitioning behavior of neutral compounds in various chemical and biological systems. By decomposing solvation interactions into distinct, quantifiable parameters, this model provides a powerful framework for predicting solute transfer between phases, making it invaluable for fields ranging from chromatography and environmental chemistry to pharmaceutical research and drug development. This guide explores the model's core equations, its validation against independent datasets, and its practical application in modern scientific research.

Model Foundations and Core Equations

The Abraham model is grounded in the cavity theory of solvation, which describes the process of a solute dissolving in a solvent in three fundamental steps: (1) the creation of a cavity within the solvent to accommodate the solute molecule; (2) the insertion of the solute into the cavity; and (3) the establishment of solute-solvent interactions [1]. The model uses a set of descriptors to quantify a solute's capability for specific intermolecular interactions [2].

Two principal equations form the basis of the model, each applicable to a different type of phase transfer process [2] [1].

- For transfer from the gas phase to a condensed liquid phase:

log SP = c + eE + sS + aA + bB + lL [2] [1]

- For transfer between two condensed phases:

log SP = c + eE + sS + aA + bB + vV [2] [1]

Definition of Equation Terms:

| Term Type | Symbol | Meaning |
|---|---|---|
| Dependent Variable | SP | Free-energy related solute property (e.g., log K or log P for partition coefficients, retention factors in chromatography) [2]. |
| System Constants (Solvent Properties) | c | Model intercept (a constant for the system) [2]. |
| System Constants (Solvent Properties) | e, s, a, b, l, v | System constants representing the complementary properties of the solvent phase (solvent coefficients) [2] [1]. |
| Solute Descriptors | E | Excess molar refraction, which models polarizability contributions from n- and π-electrons [2]. |
| Solute Descriptors | S | Solute dipolarity/polarizability [2]. |
| Solute Descriptors | A | Solute overall hydrogen-bond acidity [2]. |
| Solute Descriptors | B | Solute overall hydrogen-bond basicity [2]. |
| Solute Descriptors | L | The logarithm of the gas-hexadecane partition coefficient at 298 K [2] [1]. |
| Solute Descriptors | V | McGowan's characteristic volume (in units of cm³ mol⁻¹/100), which can be calculated entirely from molecular structure [2]. |
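As a minimal sketch of how the two equations are evaluated, the snippet below combines a set of solute descriptors with a set of system constants. All numerical values, and the names `Solute`, `log_sp_condensed`, and `log_sp_gas`, are hypothetical and chosen only for illustration; they do not describe a real solute or solvent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Solute:
    """Abraham solute descriptors for one neutral compound."""
    E: float
    S: float
    A: float
    B: float
    V: float = 0.0  # McGowan volume, used for transfer between condensed phases
    L: float = 0.0  # log gas-hexadecane partition coefficient, used for gas-to-liquid transfer


def log_sp_condensed(c, e, s, a, b, v, solute):
    """log SP = c + eE + sS + aA + bB + vV."""
    return c + e * solute.E + s * solute.S + a * solute.A + b * solute.B + v * solute.V


def log_sp_gas(c, e, s, a, b, l, solute):
    """log SP = c + eE + sS + aA + bB + lL."""
    return c + e * solute.E + s * solute.S + a * solute.A + b * solute.B + l * solute.L


# Hypothetical descriptors and system constants (illustration only).
example = Solute(E=0.8, S=0.9, A=0.3, B=0.4, V=1.0, L=4.0)
print(log_sp_condensed(0.1, 0.5, -1.0, -2.0, -4.0, 3.5, example))
print(log_sp_gas(-0.2, 0.1, 1.0, 2.0, 0.5, 0.8, example))
```

Keeping the descriptors in one object and the system constants in the function arguments mirrors the model's separation between solute properties and solvent properties.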

Experimental Protocols for Determining Descriptors and Constants

A critical strength of the Abraham model is its foundation in experimentally measured data. The following protocols outline established methods for determining system constants and solute descriptors.

Determining System Constants for a Solvent Phase

To characterize a specific solvent system (e.g., a chromatographic stationary phase or an extraction solvent), its system constants (e, s, a, b, v/l, c) must be determined. This is achieved through multiple linear regression analysis [2].

- Step 1: Selection of Calibration Compounds: A suitable training set of 30-60 neutral compounds is selected. These compounds should be chemically diverse and cover a wide range of descriptor values to ensure a robust model. A reasonable range of retention factors or partition constants (e.g., one order of magnitude for chromatography) is recommended [2].

- Step 2: Measurement of Dependent Variable (SP): The solute property (e.g., partition coefficient, retention factor) is measured with high precision for every calibration compound in the system of interest [2].

- Step 3: Multiple Linear Regression Analysis: The measured log SP values for the calibration set are regressed against their known solute descriptors (E, S, A, B, V/L). The output of the regression provides the values of the system constants and the model intercept [2].

- Step 4: Model Assessment: The quality of the derived model is evaluated using statistical criteria including the coefficient of determination (R²), the standard error of the estimate, and the Fisher statistic (F). A plot of experimental versus model-predicted values is also used to visually assess the fit [2].
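The regression and assessment steps can be sketched with ordinary least squares in NumPy. The calibration set here is synthetic: the descriptor ranges, the "true" system constants, and the noise level are all invented so that the fit can be checked, and they stand in for real measurements.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic calibration set: 40 hypothetical solutes with descriptors E, S, A, B, V.
n = 40
descriptors = rng.uniform(low=[0.0, 0.0, 0.0, 0.0, 0.5],
                          high=[2.0, 2.0, 1.0, 1.5, 2.5],
                          size=(n, 5))

# Invented system constants (c, e, s, a, b, v) used to simulate the measurements.
true_constants = np.array([0.2, 0.5, -1.0, -2.0, -4.0, 3.5])

# Design matrix with a leading column of ones for the intercept c.
X = np.column_stack([np.ones(n), descriptors])
log_sp = X @ true_constants + rng.normal(scale=0.05, size=n)  # simulated noise

# Step 3: multiple linear regression by ordinary least squares.
fitted, *_ = np.linalg.lstsq(X, log_sp, rcond=None)

# Step 4: goodness-of-fit statistics.
predicted = X @ fitted
ss_res = np.sum((log_sp - predicted) ** 2)
ss_tot = np.sum((log_sp - log_sp.mean()) ** 2)
p = descriptors.shape[1]                       # number of descriptors
r2 = 1.0 - ss_res / ss_tot
std_error = np.sqrt(ss_res / (n - p - 1))      # standard error of the estimate
f_stat = ((ss_tot - ss_res) / p) / (ss_res / (n - p - 1))

print("fitted constants:", np.round(fitted, 3))
print(f"R2 = {r2:.4f}, SE = {std_error:.3f}, F = {f_stat:.0f}")
```

With real data, `descriptors` and `log_sp` would come from a descriptor database and from the measurements in Step 2.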

Determining Solute Descriptors via Chromatographic Methods

For new compounds, especially pharmaceuticals, experimental determination of descriptors can be efficiently performed using High-Performance Liquid Chromatography (HPLC) [3].

- Step 1: Chromatographic Profiling: The retention times of the solute are measured across a panel of 5-8 HPLC columns with different stationary phases (e.g., reversed-phase, diol, nitrile, immobilized artificial membrane). The retention factor k' is calculated for each system [3].

- Step 2: System Constants of HPLC Columns: The system constants (e, s, a, b, v, c) for each HPLC column in the panel must be pre-established using a calibration set of compounds with known descriptors [3].

- Step 3: Descriptor Calculation via Solver Method: The solute's descriptors (A, B, S, etc.) become the unknown variables in a set of simultaneous equations (one for each HPLC column). An optimization algorithm, like the Solver add-in in Microsoft Excel, is used to find the descriptor values that provide the best agreement between the calculated and experimental retention factors across all chromatographic systems [2] [3].

- Ionizable Compounds: For drug-like molecules that may be ionized, the mobile phase pH must be controlled to ensure the analyte is in its neutral form, as the original Abraham model applies only to neutral species [3].
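The descriptor calculation can be sketched as a small least-squares problem. This example uses NumPy's linear least-squares solver as a stand-in for the Excel Solver mentioned above, and it assumes that E and V are already known so that only S, A, and B are fitted. The six sets of column constants and the solute values are hypothetical.

```python
import numpy as np

# Hypothetical system constants (c, e, s, a, b, v) for six pre-calibrated HPLC columns.
columns = np.array([
    [-0.30, 0.20, -0.60, -0.40, -1.80, 1.90],
    [-0.50, 0.10, -0.20, -0.10, -1.00, 1.20],
    [ 0.10, 0.30,  0.40,  0.90, -0.50, 0.60],
    [-0.20, 0.05,  0.70,  1.20,  0.30, 0.20],
    [ 0.00, 0.25, -0.90,  0.20, -2.20, 2.40],
    [-0.40, 0.15,  0.10, -0.70, -0.30, 1.00],
])
c, e, s, a, b, v = columns.T

# Assumption: E and V are known in advance; S, A, and B are the unknowns.
E_known, V_known = 1.10, 1.45
true_sab = np.array([1.30, 0.45, 0.90])  # used only to simulate "measured" log k'

# Simulated retention data, one log k' value per column (no noise added).
design = np.column_stack([s, a, b])
log_k = c + e * E_known + v * V_known + design @ true_sab

# Move the known terms to the left-hand side and solve the
# overdetermined system (six equations, three unknowns) for S, A, B.
rhs = log_k - (c + e * E_known + v * V_known)
sab, residuals, rank, _ = np.linalg.lstsq(design, rhs, rcond=None)

print("S, A, B =", np.round(sab, 3))
```

Using more columns than unknowns is what lets measurement noise average out; with real, noisy retention factors the recovered values would only approximate the underlying descriptors.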

Model Validation with Independent Data

The predictive power and robustness of the Abraham model are rigorously tested by its performance on independent validation datasets not used in model training.

Validation in Polymer-Water Partitioning

A key application is predicting partition coefficients for environmental and leaching studies. An LSER model for low-density polyethylene (LDPE)-water partitioning was recently validated with a large independent dataset [4].

- Model: log K_i,LDPE/W = −0.529 + 1.098E − 1.557S − 2.991A − 4.617B + 3.886V

- Validation Result: When applied to an independent validation set of 52 compounds, the model demonstrated high predictive accuracy with R² = 0.985 and a Root Mean Square Error (RMSE) = 0.352 log units, using experimental solute descriptors [4].
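A short sketch of how this LDPE-water equation would be applied, and how R² and RMSE are computed on a validation set, is given below. The system constants are those quoted above; the four validation compounds, including their "observed" values, are invented placeholders and not data from [4].

```python
import math

# System constants of the LDPE-water model quoted above [4].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557, "a": -2.991, "b": -4.617, "v": 3.886}


def log_k_ldpe_water(E, S, A, B, V):
    """Predict log K(LDPE/water) from Abraham solute descriptors."""
    k = LDPE_WATER
    return k["c"] + k["e"] * E + k["s"] * S + k["a"] * A + k["b"] * B + k["v"] * V


def rmse(observed, predicted):
    """Root mean square error between observed and predicted values."""
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))


def r_squared(observed, predicted):
    """Coefficient of determination of the predictions against the observations."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical validation compounds: (E, S, A, B, V, observed log K).
validation = [
    (0.50, 0.50, 0.00, 0.10, 1.00, 2.80),
    (0.80, 0.90, 0.30, 0.40, 1.20, 1.10),
    (0.20, 0.10, 0.00, 0.00, 0.80, 2.50),
    (1.20, 1.00, 0.00, 0.30, 1.50, 3.90),
]
observed = [row[5] for row in validation]
predicted = [log_k_ldpe_water(*row[:5]) for row in validation]

print("R2 =", round(r_squared(observed, predicted), 3))
print("RMSE =", round(rmse(observed, predicted), 3))
```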

Validation and Refinement for Polydimethylsiloxane (PDMS)

The model's evolution for PDMS, a common polymer in microextraction, showcases the importance of dataset quality and size.

- Early Model (Sprunger et al., 2008): Based on 170 data points for log P_PDMS/water, this model achieved an R² of 0.993 and a standard error of 0.171 [5].

- Revised Model (2023): An updated correlation was developed using data for more than 220 different compounds. This model back-calculates the observed partitioning to within a standard deviation of 0.206 log units, confirming the model's robustness when based on a large and chemically diverse training set [5].

- Contrasting Findings: A study by Zhu and Tao (2023) reported a model with a much higher RMSE of 0.532 log units. This discrepancy was attributed to potential issues with the curation of the experimental database and the use of estimated solute descriptors, highlighting that predictive accuracy is strongly correlated with the quality of the input data [5].

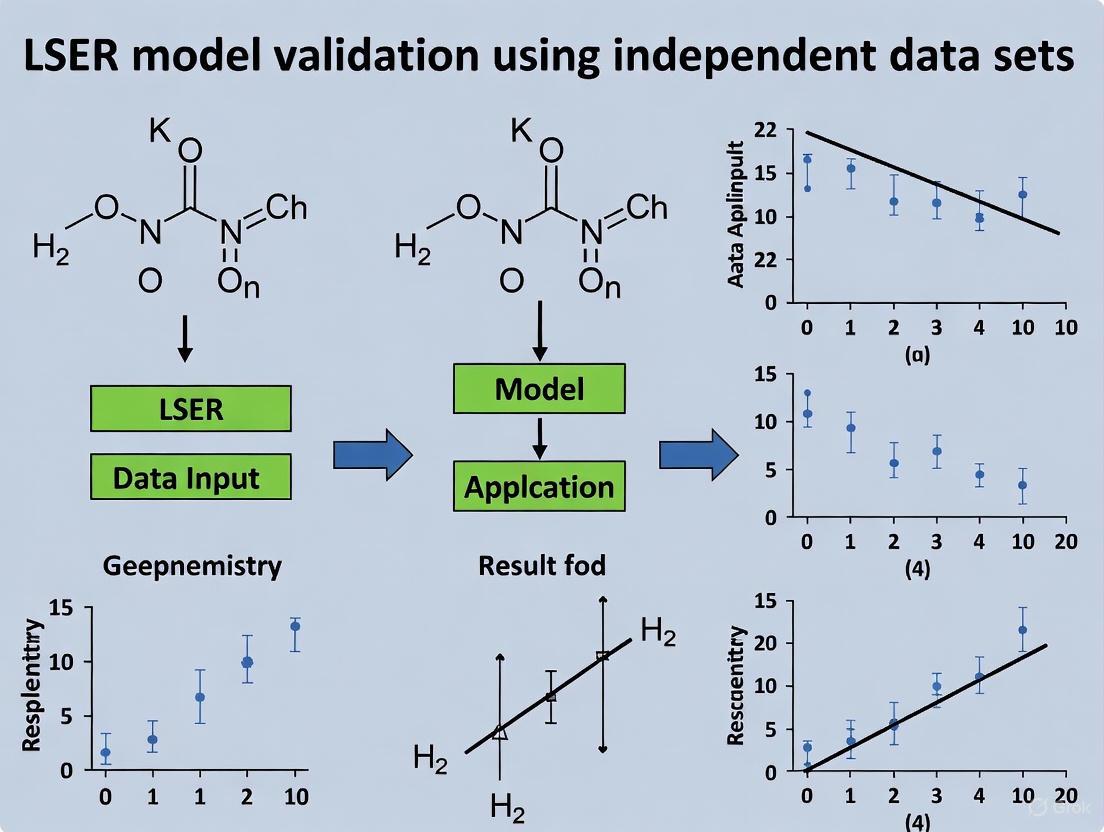

The following workflow diagrams the process of developing and validating an Abraham model, from data collection to its application in prediction.

Performance Comparison with Other Predictive Frameworks

The Abraham model's performance is competitive with and often complementary to other computational approaches.

- Comparison with Group Contribution/Machine Learning: Group contribution and machine learning methods can provide initial estimates of solute descriptors. However, they often fail to account for complex molecular phenomena like intramolecular hydrogen bonding, leading to inaccurate predictions. For example, for 4,5-dihydroxyanthraquinone-2-carboxylic acid, estimation software predicted an A descriptor (hydrogen-bond acidity) of 1.11-1.44, while experimental data suggested a value near zero due to intramolecular H-bonding, rendering the phenolic hydrogens unavailable for solvent interaction [6].

- Comparison with Pure Quantum Chemical Calculations: A recent quantum chemistry-based model was developed to predict the hydrophobicity of polymer repeating units, achieving an RMSE of 0.48 for log K_OW predictions. While this shows promise, the Abraham model, when used with experimental descriptors, often achieves lower errors (e.g., RMSE of 0.352 for LDPE-water partitioning) for specific systems, though it requires experimental input for calibration [7].

System Constants for Select Polymers and Solvents

The system constants reveal the unique interaction properties of different phases. The table below compares these constants for several common polymers and a classic organic solvent (chloroform) used in partitioning, demonstrating how the model quantifies extraction selectivity [4] [5] [1].

Table: Abraham Model System Constants for Selected Phases

| Phase | Equation Type | c | e | s | a | b | l | v |
|---|---|---|---|---|---|---|---|---|
| Low-Density Polyethylene (LDPE) [4] | log P (vs. Water) | -0.529 | 1.098 | -1.557 | -2.991 | -4.617 | - | 3.886 |
| Polydimethylsiloxane (PDMS) - Wet/Dry [5] | log P (vs. Water) | 0.268 | 0.601 | -1.416 | -2.523 | -4.107 | - | 3.637 |
| Polydimethylsiloxane (PDMS) - Wet/Dry [5] | log K (vs. Air) | -0.041 | 0.012 | 0.543 | 1.143 | 0.578 | 0.792 | - |
| Chloroform [1] | log P (vs. Water) | 0.166 | 0.0 | -0.426 | -3.202 | -4.436 | - | 3.720 |

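Interpretation: For the log P (vs. Water) rows, each system constant reflects the difference in a property between the organic phase and water. A large positive v coefficient indicates that cavity formation and dispersion interactions are more favorable in the organic phase than in water, favoring larger molecules. A large negative b coefficient indicates that the phase is a much weaker hydrogen-bond donor than water, so hydrogen-bond bases (high B descriptor) are held back in the aqueous phase; a large negative a coefficient likewise indicates a weaker hydrogen-bond acceptor than water. Chloroform's highly negative a and b constants therefore show that, relative to water, it is both a poor hydrogen-bond acceptor and a weaker hydrogen-bond donor.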
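To see how the tabulated constants translate into selectivity, the sketch below breaks a predicted log P into its individual terms for each water-based row of the table. The system constants are copied from the table; the solute descriptors are hypothetical and serve only to make the comparison concrete.

```python
# System constants from the table above (log P versus water).
PHASES = {
    "LDPE": dict(c=-0.529, e=1.098, s=-1.557, a=-2.991, b=-4.617, v=3.886),
    "PDMS": dict(c=0.268, e=0.601, s=-1.416, a=-2.523, b=-4.107, v=3.637),
    "Chloroform": dict(c=0.166, e=0.0, s=-0.426, a=-3.202, b=-4.436, v=3.720),
}

# Hypothetical solute descriptors (illustration only).
SOLUTE = dict(E=0.80, S=0.90, A=0.30, B=0.40, V=1.00)


def contributions(phase):
    """Return each term of log P = c + eE + sS + aA + bB + vV for one phase."""
    k = PHASES[phase]
    return {
        "c": k["c"],
        "eE": k["e"] * SOLUTE["E"],
        "sS": k["s"] * SOLUTE["S"],
        "aA": k["a"] * SOLUTE["A"],
        "bB": k["b"] * SOLUTE["B"],
        "vV": k["v"] * SOLUTE["V"],
    }


for name in PHASES:
    terms = contributions(name)
    rounded = {label: round(value, 3) for label, value in terms.items()}
    print(name, rounded, "log P =", round(sum(terms.values()), 3))
```

For this example solute the bB term is the most negative contribution in every phase, while vV is the largest positive one, which is the pattern the constants in the table would lead one to expect.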

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful application of the Abraham model in a research setting relies on a set of well-characterized reagents and materials.

Table: Key Reagents and Materials for Abraham Model Research

| Item | Function in Research |
|---|---|
| Calibration Compound Sets | A chemically diverse set of 30-60 compounds with pre-established Abraham descriptors. Used to determine the system constants of new solvents or chromatographic columns [2]. |
| Characterized HPLC Columns | A panel of 5-8 columns with different stationary phases (e.g., C18, IAM, HILIC, Cyano) whose system constants are known. Essential for the rapid chromatographic determination of solute descriptors [3]. |
| Polydimethylsiloxane (PDMS) | A common polymeric solvent used in solid-phase microextraction (SPME). Its well-characterized Abraham model equations allow for the prediction of analyte extraction efficiency from water or air [5]. |
| UFZ-LSER Database | A curated, publicly available database containing Abraham solute descriptors for thousands of compounds. It is a primary resource for obtaining descriptor values for calibration compounds and other solutes [6]. |

The Abraham Solvation Parameter Model remains a robust and highly validated predictive framework within LSER research. Its core equations effectively distill complex solvation phenomena into chemically intuitive parameters. Validation and refinement studies, such as those for LDPE-water and PDMS-water partitioning, show that the model can achieve high predictive accuracy (R² > 0.98, RMSE < 0.4 log units) when based on high-quality, chemically diverse experimental data. While computational descriptor estimation methods are improving, the model's performance is most reliable when paired with experimental inputs. The continued refinement of model correlations and expansion of solute descriptor databases ensure its enduring relevance for predicting partition coefficients, chromatographic retention, and biological uptake in pharmaceutical and environmental science.

Table of Contents

- Introduction to the LSER Framework and its Descriptors

- A Detailed Look at the Six Core Descriptors

- Experimental Determination and Methodologies

- Model Validation with Independent Data Sets

- Comparative Performance in Practical Applications

- Essential Research Reagents and Materials

The Linear Solvation Energy Relationship (LSER) model, often called the Abraham model, is a cornerstone of molecular thermodynamics, providing a robust framework for predicting solute transfer between phases. Its remarkable success across chemical, biomedical, and environmental applications stems from its ability to distill complex intermolecular interactions into a simple linear equation using six molecular descriptors [8] [9]. The model operates on two primary equations for solute partitioning: one for transfer between two condensed phases (Equation 1) and another for gas-to-solvent partitioning (Equation 2) [8] [9].

The power of the LSER model lies in its decomposition of a molecule's behavior into specific, chemically intuitive contributions. These six descriptors—Vx, E, S, A, B, and L—quantify a molecule's intrinsic potential for different types of intermolecular interactions, independent of any specific solvent or environment. This decomposition allows researchers to predict a vast array of properties, from partition coefficients and solubility to biological activity, by simply combining these invariant solute descriptors with solvent-specific coefficients [8] [10]. The framework is exceptionally rich in thermodynamic information, and when extracted properly, this information can be leveraged for various thermodynamic developments and applications [8].

A Detailed Look at the Six Core Descriptors

Each LSER descriptor captures a distinct aspect of a molecule's structure and its potential for specific interactions. The following table provides a comprehensive overview of these six core parameters.

Table 1: The Six Core LSER Molecular Descriptors and Their Physicochemical Significance

| Descriptor | Full Name | Physicochemical Interpretation | Role in Intermolecular Interactions |
|---|---|---|---|
| Vx | McGowan's Characteristic Volume | Molecular volume, reflecting the size of the solute [9]. | Quantifies the energy cost of forming a cavity in the solvent to accommodate the solute; dominant for dispersive interactions in apolar systems [9] [4]. |
| E | Excess Molar Refraction | Measure of a solute's polarizability due to π- and n-electrons [9]. | Captures dispersion interactions that are stronger than those predicted by size alone, often relevant for aromatic compounds or those with heavy atoms [10]. |
| S | Dipolarity/Polarizability | Overall measure of a solute's dipole moment and ability to stabilize a charge [9]. | Represents the solute's ability to engage in dipole-dipole (Keesom) and dipole-induced dipole (Debye) interactions [8]. |
| A | Hydrogen-Bond Acidity | Measure of the solute's ability to donate a hydrogen bond [9]. | Quantifies the strength of the solute's acidic (proton-donor) sites in forming hydrogen bonds with solvent basic sites [8] [11]. |
| B | Hydrogen-Bond Basicity | Measure of the solute's ability to accept a hydrogen bond [9]. | Quantifies the strength of the solute's basic (proton-acceptor) sites in forming hydrogen bonds with solvent acidic sites [8] [11]. |
| L | Gas-Hexadecane Partition Coefficient | The logarithm of the gas-liquid partition coefficient in n-hexadecane at 298 K [9]. | Describes the solute's volatility and its general dispersive interaction potential with a very non-polar reference solvent [9]. |

The mathematical framework of the LSER model integrates these descriptors into predictive linear equations. For partition coefficient P between two condensed phases, the model is expressed as (Equation 1):

log(P) = cₚ + eₚE + sₚS + aₚA + bₚB + vₚVx [8]

For gas-to-solvent partitioning, described by the gas-to-solvent partition coefficient Kₛ, the equation uses L instead of Vx (Equation 2):

log(Kₛ) = cₖ + eₖE + sₖS + aₖA + bₖB + lₖL [8]

In these equations, the lower-case letters (e.g., sₚ, aₚ) are the solvent-specific (system) LSER coefficients, which represent the complementary properties of the phases between which the solute is transferring [8].

Experimental Determination and Methodologies

The accurate experimental determination of LSER descriptors is crucial for the model's predictive power, especially for complex, polar molecules. The descriptors are typically determined through a multi-system chromatographic approach, in which the retention behavior of a solute is measured across multiple HPLC systems with different stationary and mobile phases [11].

Table 2: Key Experimental Protocols for LSER Descriptor Determination

| Method | Core Principle | Typical Application & Notes |
|---|---|---|
| Reversed-Phase HPLC | Measures retention factors on a non-polar stationary phase (e.g., C18) with aqueous-organic mobile phases. | Sensitive to Vx, S, A, and B descriptors. Used for a wide range of solutes [11]. |
| Normal-Phase HPLC | Measures retention on a polar stationary phase (e.g., silica) with non-polar organic mobile phases. | Particularly sensitive to S, A, and B descriptors. Useful for polar compounds [11]. |
| Hydrophilic Interaction LC (HILIC) | Operates with a polar stationary phase and a largely organic, water-miscible mobile phase (e.g., acetonitrile-rich with a small aqueous fraction). | Highly effective for characterizing polar and ionizable species, providing strong data for A and B descriptors [11]. |
| Gas-Liquid Chromatography (GLC) | Measures retention on a coated capillary column to determine the gas-hexadecane partition coefficient (L). | Directly used to determine the L descriptor for a solute [9]. |

A landmark study by Tülp et al. demonstrated this multi-system approach by using a set of eight reversed-phase, normal-phase, and HILIC HPLC systems to determine the A, S, and B descriptors for 76 diverse pesticides and pharmaceuticals [11]. These compounds often contain multiple functional groups, resulting in descriptor values that are "high and lie at the very upper end of the numerical range of currently known substance descriptors" [11]. The study highlighted the importance of using a chemically diverse set of chromatographic systems to deconvolute the complex interactions of such molecules reliably. The plausibility of the newly determined descriptors was cross-validated by comparing predicted versus literature values of octanol-water (Kow) and air-water (Kaw) partition coefficients [11].

The following diagram illustrates the general workflow for the experimental determination and validation of LSER descriptors.

Figure 1: Workflow for experimental determination and validation of LSER descriptors, involving multiple HPLC systems and external validation.

Model Validation with Independent Data Sets

A critical test for any predictive model is its performance on independent data not used in its development. Robust validation is a central theme in modern LSER research, ensuring models are reliable for real-world applications. This involves two key strategies: internal validation using hold-out test sets and external validation against entirely independent experimental data or other models.

In a comprehensive study on predicting partition coefficients between low-density polyethylene (LDPE) and water, the authors rigorously evaluated their LSER model. They ascribed approximately 33% (n=52) of their total observations to an independent validation set [4]. When using experimentally determined solute descriptors, the model achieved excellent predictive performance on this unseen data (R² = 0.985, RMSE = 0.352), confirming its accuracy and precision [4]. This demonstrates the internal consistency of the LSER framework when high-quality experimental descriptors are available.

Furthermore, the study benchmarked the model's robustness by using LSER solute descriptors predicted from a compound's chemical structure via a QSPR tool, rather than experimental values. This simulates a real-world scenario for new compounds with no experimental descriptors. The correlation remained high (R² = 0.984), but the RMSE increased from 0.352 to 0.511 log units, indicating the critical impact of descriptor quality on prediction uncertainty [4].

Cross-validation with other independent datasets is also paramount. The Tülp et al. study cross-compared their newly determined descriptors for pesticides and pharmaceuticals against literature partition coefficients, confirming plausibility for most compounds [11]. However, they also found a "systematic deviation" for some polar, multifunctional compounds, indicating that "existing LSER equations might have problems when applied to complex compounds with high A, S, and B values" [11]. This finding underscores the need for continuous model refinement and validation with expanding chemical datasets.

Comparative Performance in Practical Applications

The LSER model's utility is proven by its predictive accuracy across diverse fields. Its performance is often benchmarked against other computational approaches, including modern machine learning (ML) methods. A key advantage of LSER is its interpretability; each term in the equation has a clear physicochemical meaning.

Table 3: Comparative Performance of LSER and Machine Learning Models for Solubility and Partitioning Prediction

| Application Context | Model/Descriptor Type | Reported Performance | Key Advantage |
|---|---|---|---|
| Drug Solubility in Lipids [10] | LSER (Abraham Parameters) | High predictive accuracy (RMSE = 0.50 logS units on test set). | Strong interpretability; descriptors relate directly to H-bonding, size, and polarity. |
| Drug Solubility in Lipids [10] | Machine Learning (SOAP descriptor) | High predictive accuracy (RMSE = 0.50 logS units on test set). | Atom-level interpretability; can rank molecular motifs influencing solubility. |
| Drug Solubility in Lipids [10] | Machine Learning (ECFP4 fingerprint) | Inferior predictive accuracy compared to LSER and SOAP. | Captures molecular connectivity but is less directly related to physicochemical forces. |
| LDPE-Water Partitioning [4] | LSER (Experimental Descriptors) | R² = 0.985, RMSE = 0.352 (Independent Validation Set). | High accuracy and user-friendly approach for estimating polymer-water partitioning. |

As shown in the table, a study comparing descriptor sets for predicting drug solubility in medium-chain triglycerides (MCTs) found that models based on Abraham solvation parameters performed on par with complex geometrical ML descriptors (SOAP) and outperformed fingerprint-based methods (ECFP4) [10]. This demonstrates that LSER descriptors effectively capture the essential molecular features governing solubility in lipids. The LSER model's parameters provide immediate chemical insight, indicating that solubility in MCTs is favorably influenced by solute volume (Vx) and negatively impacted by hydrogen-bonding acidity (A) and basicity (B) [10] [4].

Beyond solubility, the LSER model accurately predicts partition coefficients in environmental systems. The LDPE-water partition model is a prime example, with its system parameters revealing that sorption is driven primarily by dispersive interactions (positive coefficient for Vx) and is strongly opposed by solute hydrogen-bonding (large negative coefficients for A and B) [4]. This allows for direct comparison of sorption behavior between different polymers.

Essential Research Reagents and Materials

Successful experimental determination of LSER descriptors and model validation relies on specific reagents and computational tools. The following table details key materials and their functions in this field.

Table 4: Key Research Reagents and Tools for LSER Studies

| Category | Item / Tool | Specification / Function |
|---|---|---|
| Chromatography Systems | Reversed-Phase HPLC Columns | e.g., C18-bonded silica; for separating solutes based on hydrophobicity. |
| Chromatography Systems | Normal-Phase HPLC Columns | e.g., Unmodified silica; for separating solutes based on polarity and H-bonding. |
| Chromatography Systems | HILIC Columns | e.g., Diol, amide, or cyano phases; for retaining polar solutes. |
| Reference Solvents & Materials | n-Hexadecane | The standard solvent for determining the L descriptor via gas-liquid chromatography [9]. |
| Reference Solvents & Materials | Certified Reference Materials (CRMs) | e.g., GBW series; homogeneous powders compressed into tablets for calibration, as used in LIBS studies mimicking the methodology [12]. |
| Computational & Data Resources | LSER Database | A freely accessible, comprehensive database rich in thermodynamic information and molecular descriptors [8] [9]. |
| Computational & Data Resources | COSMO-RS / Quantum Chemical Suites | Software for quantum chemical calculations; used to derive new molecular descriptors and obtain hydrogen-bonding information for LSER development [9]. |
| Computational & Data Resources | QSPR Prediction Tools | Software for predicting LSER solute descriptors directly from chemical structure, useful for preliminary screening [4]. |

The experimental workflow often begins with a set of certified reference materials to ensure consistency and accuracy. For chromatographic measurements, a diverse set of HPLC systems—reversed-phase, normal-phase, and HILIC—is essential to deconvolute the various interactions (Vx, S, A, B) for a solute [11]. Computationally, the freely available LSER Database is an invaluable resource, while tools like COSMO-RS are being explored to generate thermodynamically consistent descriptors and overcome the limitation of being restricted to solvents with abundant experimental data [9].

Linear Solvation Energy Relationships (LSERs), also known as the Abraham solvation parameter model, represent one of the most successful predictive frameworks in modern molecular thermodynamics [8]. These models employ a simple linear equation to correlate free-energy-related properties of solutes with their molecular descriptors, enabling remarkably accurate predictions of partition coefficients and solvation energies across diverse chemical, biomedical, and environmental applications [4] [13]. The core LSER equation for solute transfer between two condensed phases takes the form:

log(P) = cₚ + eₚE + sₚS + aₚA + bₚB + vₚVx

where the uppercase letters (E, S, A, B, Vx) represent solute-specific molecular descriptors, and the lowercase letters (cₚ, eₚ, sₚ, aₚ, bₚ, vₚ) are system-specific complementary coefficients that contain chemical information about the solvent or phase in question [4] [14]. The predictive power and the very linearity of these relationships have long been recognized empirically, but their rigorous thermodynamic foundation has only recently been systematically explored [13]. This exploration is particularly crucial within the context of model validation using independent datasets, a fundamental requirement for establishing LSERs as reliable tools in critical applications such as drug development, where predicting partition coefficients directly impacts bioavailability and toxicity assessments.

Theoretical Foundation of LSER Linearity

The Thermodynamic Basis of LFER Linearity

The remarkable linearity observed in LSER models, even for strong specific interactions like hydrogen bonding, finds its explanation at the intersection of equation-of-state solvation thermodynamics and the statistical thermodynamics of hydrogen bonding [13]. Recent research has demonstrated that the division of the system Gibbs energy into a hydrogen-bonding term (ΔG_hb) and a non-hydrogen-bonding term (ΔG_LF) provides a robust framework for understanding this linearity [14]. The non-hydrogen-bonding component arises from all types of intermolecular interactions except hydrogen bonding, while the hydrogen-bonding component is formulated based on Veytsman's statistics, which can be equivalently handled by both LFHB (Lattice-Fluid with Hydrogen-Bonding) and SAFT (Statistical Associating Fluid Theory) approaches [14].

This thermodynamic framework verifies that the linear relationships in LSER models are not merely empirical curiosities but have sound theoretical underpinnings. The successful separation of interaction contributions allows for the linear combination of terms representing different physical interaction mechanisms, including dispersion forces, dipolarity/polarizability, and hydrogen bonding [13]. This explains why the product of solute descriptors (A, B) and system coefficients (a, b) can effectively quantify the hydrogen bonding contribution to solvation free energy, despite the inherent complexity and cooperativity of such interactions [8].
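Because LSER equations correlate free-energy-related quantities, a difference in log units can be read as a Gibbs energy through the standard relation ΔG = −RT ln K. The sketch below performs only that unit conversion; the temperature of 298.15 K and the function name `log_units_to_kj_per_mol` are assumptions made for illustration, and the sign of ΔG depends on the direction of transfer and the standard states chosen.

```python
import math

R = 8.314462618   # gas constant, J mol^-1 K^-1
T = 298.15        # temperature in K (assumed; close to the 298 K reference used for L)


def log_units_to_kj_per_mol(delta_log, temperature=T):
    """Magnitude of the Gibbs energy (kJ/mol) equivalent to a change in log10 K."""
    return math.log(10) * R * temperature * delta_log / 1000.0


print(round(log_units_to_kj_per_mol(1.0), 2))    # one log unit
print(round(log_units_to_kj_per_mol(0.352), 2))  # an error of 0.352 log units
```

At this temperature one log unit corresponds to roughly 5.7 kJ/mol, so an RMSE of 0.352 log units is equivalent to an uncertainty of about 2 kJ/mol in the transfer free energy.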

LSER Equations and Molecular Descriptors

The LSER model utilizes two primary equations for different phase transfer processes. For solute transfer between two condensed phases, the model uses:

log(P) = cₚ + eₚE + sₚS + aₚA + bₚB + vₚVx [14]

For gas-to-solvent partitioning, the equation becomes:

log(Kₛ) = cₖ + eₖE + sₖS + aₖA + bₖB + lₖL [8]

The molecular descriptors in these equations represent specific solute properties:

- Vx: McGowan's characteristic volume

- L: Gas-liquid partition coefficient in n-hexadecane at 298 K

- E: Excess molar refraction

- S: Dipolarity/polarizability

- A: Hydrogen bond acidity

- B: Hydrogen bond basicity [8] [14]

These descriptors comprehensively characterize a molecule's potential for various intermolecular interactions, providing the structural basis for the predictive capability of LSER models.

Experimental Validation with Independent Datasets

Validation Protocols for LSER Models

Robust validation of LSER models requires rigorous experimental protocols involving independent datasets not used in model development. The standard methodology involves:

Model Training: LSER system coefficients are determined via multilinear regression of experimentally determined partition coefficients or solvation energies for a training set of chemically diverse compounds [4].

Independent Validation Set: Approximately 25-33% of the total available observations are typically ascribed to an independent validation set [4]. This set must encompass sufficient chemical diversity to adequately challenge the model.

Descriptor Sourcing: For the validation set, calculations can be performed using either experimentally determined LSER solute descriptors or descriptors predicted from chemical structure using Quantitative Structure-Property Relationship (QSPR) tools [4].

Performance Metrics: Model predictions are compared against experimental values through linear regression analysis, with R² (coefficient of determination) and RMSE (root mean square error) serving as primary metrics of predictive accuracy [4].

Benchmarking: The validated model is compared against existing LSER models from literature, with particular attention to the quality of underlying experimental data and chemical diversity of training sets [4].
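
The protocol above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in [4]: the descriptor matrix and log K values are synthetic stand-ins, the "true" coefficients are hypothetical, and R² is computed as 1 - SS_res/SS_tot rather than from a regression of predicted on observed values. All function names are illustrative.

```python
import numpy as np

def fit_lser(X, y):
    """Least-squares fit of log K = c + eE + sS + aA + bB + vV.

    X has one row per compound and columns E, S, A, B, V.
    Returns the coefficient vector [c, e, s, a, b, v].
    """
    design = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def predict_lser(coef, X):
    """Apply fitted system coefficients to a descriptor matrix."""
    return coef[0] + X @ coef[1:]

def r2_rmse(y_obs, y_pred):
    """Return (R², RMSE) for observed vs. predicted values."""
    resid = y_obs - y_pred
    rmse = float(np.sqrt(np.mean(resid ** 2)))
    r2 = 1.0 - float(np.sum(resid ** 2) / np.sum((y_obs - y_obs.mean()) ** 2))
    return r2, rmse

# Synthetic stand-in data (assumption: NOT real measurements).
rng = np.random.default_rng(0)
n = 156
X = rng.uniform(0.0, 2.0, size=(n, 5))                      # E, S, A, B, V
true_coef = np.array([-0.5, 1.1, -1.6, -3.0, -4.6, 3.9])    # hypothetical
y = predict_lser(true_coef, X) + rng.normal(0.0, 0.3, size=n)

# Hold out roughly one third of the observations as the validation set.
idx = rng.permutation(n)
n_val = n // 3
val_idx, train_idx = idx[:n_val], idx[n_val:]

coef = fit_lser(X[train_idx], y[train_idx])
r2_train, rmse_train = r2_rmse(y[train_idx], predict_lser(coef, X[train_idx]))
r2_val, rmse_val = r2_rmse(y[val_idx], predict_lser(coef, X[val_idx]))

print(f"training:   n={len(train_idx)}  R2={r2_train:.3f}  RMSE={rmse_train:.3f}")
print(f"validation: n={n_val}  R2={r2_val:.3f}  RMSE={rmse_val:.3f}")
```

A random split is the simplest choice; when the validation set must span a particular region of chemical space, a stratified or diversity-based split may be more appropriate.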

Performance Evaluation with Independent Data

A recent comprehensive study on partition coefficients between low-density polyethylene (LDPE) and water demonstrated the rigorous application of this validation protocol [4]. The researchers developed an LSER model based on experimental partition coefficients for 156 chemically diverse compounds, achieving impressive statistics (n = 156, R² = 0.991, RMSE = 0.264) [4]. For validation, approximately 33% (n = 52) of the total observations were assigned to an independent validation set.

Table 1: LSER Model Validation Performance for LDPE/Water Partitioning

| Descriptor Type | Sample Size | R² | RMSE | Application Context |

|---|---|---|---|---|

| Experimental LSER descriptors | 52 | 0.985 | 0.352 | Gold standard validation |

| QSPR-predicted descriptors | 52 | 0.984 | 0.511 | Practical application for new compounds |

| Full training set | 156 | 0.991 | 0.264 | Model development |

The increase in RMSE from 0.352 to 0.511 when using QSPR-predicted descriptors rather than experimental descriptors, with R² essentially unchanged, reflects the additional uncertainty introduced by descriptor prediction. It represents a more realistic scenario for practical applications where experimental descriptors are unavailable [4].

Comparative Analysis of LSER with Alternative Approaches

LSER versus COSMO-RS and Equation-of-State Models

The predictive performance of LSER models can be better understood through comparison with alternative thermodynamic approaches. Recent research has conducted extensive comparisons between LSER and COSMO-RS (Conductor-like Screening Model for Real Solvents) for predicting hydrogen-bonding contributions to solvation enthalpy [14].

Table 2: Comparison of Thermodynamic Prediction Approaches

| Model | Basis | Strengths | Limitations | HB Contribution |

|---|---|---|---|---|

| LSER | Empirical linear free-energy relationships | Excellent predictability within chemical space of descriptors; Simple implementation | Limited predictive scope for new chemical spaces; Dependent on experimental data | Calculated from a_h A + b_h B terms |

| COSMO-RS | Quantum mechanics-based | A priori predictive without experimental input; Broad applicability | Computational intensity; Parameterization dependent | Can be calculated but not directly separable |

| Equation-of-State (LFHB/SAFT) | Statistical thermodynamics | Broad range of conditions; Firm theoretical foundation | Cannot predict HB strength without external data | Based on Veytsman statistics |

The comparison revealed generally good agreement between COSMO-RS and LSER predictions for hydrogen-bonding contributions to solvation enthalpy across most solute-solvent systems, with discrepancies in specific cases providing insights for model improvement [14].

Inter-Polymer Comparison Using LSER System Parameters

LSER system parameters enable direct comparison of sorption behavior across different polymeric phases. A recent study compared LDPE to polydimethylsiloxane (PDMS), polyacrylate (PA), and polyoxymethylene (POM) using this approach [4]. The analysis revealed that polymers with heteroatomic building blocks (PA, POM) sorb polar, non-hydrophobic compounds more strongly than LDPE does, up to a logK_{i,LDPE/W} of about 3-4, while all four polymers show similar sorption behavior for more hydrophobic compounds above this range [4].

Research Reagents and Materials for LSER Studies

Table 3: Essential Research Reagents and Materials for LSER Experiments

| Reagent/Material | Function/Application | Specification Examples |

|---|---|---|

| Reference Solvents | For determining solute descriptors and system coefficients | n-Hexadecane (for L descriptor), water, various organic solvents |

| Polymer Phases | For partitioning studies involving polymeric materials | Low-density polyethylene (LDPE), polydimethylsiloxane (PDMS), polyacrylate (PA) |

| Chemical Standards | Chemically diverse compounds for training and validation sets | 150-200 compounds covering varied functional groups and properties |

| QSPR Prediction Tools | For estimating LSER descriptors when experimental values unavailable | Commercial and open-source software implementing group contribution methods |

Methodological Workflow

[Diagram: thermodynamic relationships and experimental validation workflow underlying LSER models]

The thermodynamic basis of LSER linearity finds robust validation through rigorous testing with independent datasets, confirming the model's predictive power for partition coefficients and solvation energies. The integration of equation-of-state thermodynamics with the statistical thermodynamics of hydrogen bonding provides a solid theoretical foundation for the empirical success of LSER models [13]. For researchers in drug development and related fields, LSER models offer a validated, practical tool for predicting partition coefficients with known accuracy bounds, particularly when using experimentally determined molecular descriptors.

The future of LSER methodology points toward enhanced integration with quantum-chemical approaches like COSMO-RS and equation-of-state models, potentially leading to a unified COSMO-LSER equation-of-state framework [14]. Such integration would combine the predictive power of LSER with the ability to extrapolate across temperature and pressure conditions, further expanding the utility of these models in pharmaceutical and environmental applications.

The Linear Solvation-Energy Relationships (LSER) database, maintained by the Helmholtz Centre for Environmental Research (UFZ), represents a foundational resource for researchers investigating solute-solvent interactions across chemical, biomedical, and environmental domains. This database implements the Abraham solvation parameter model, a highly successful predictive framework that correlates free-energy-related properties of compounds with their molecular descriptors [8]. For researchers engaged in LSER model validation with independent datasets, this database provides the critical experimental data and standardized parameters necessary for rigorous comparative analyses.

The theoretical underpinning of the LSER model lies in its two primary linear free-energy relationships that quantify solute transfer between phases. For partitioning between two condensed phases, the model uses log(P) = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x, while for gas-to-solvent partitioning, it employs log(K_S) = c_k + e_k E + s_k S + a_k A + b_k B + l_k L [8]. In these equations, the capital letters represent solute-specific molecular descriptors, while the lowercase coefficients are system-specific descriptors that characterize the solvent environment. This sophisticated mathematical framework allows researchers to predict a wide array of thermodynamic properties relevant to drug development, environmental fate modeling, and pharmaceutical sciences.

Architectural Framework of the LSER Database

Core Components and Data Structure

The LSER database architecture centers on several interconnected modules that facilitate both data retrieval and computational forecasting. The system contains a comprehensive chemical library with associated molecular descriptors essential for LSER calculations [15]. These descriptors include:

- McGowan's characteristic volume (Vx)

- Gas-liquid partition coefficient in n-hexadecane (L)

- Excess molar refraction (E)

- Dipolarity/polarizability (S)

- Hydrogen bond acidity (A)

- Hydrogen bond basicity (B)

The computational infrastructure enables researchers to predict biopartitioning behavior across multiple biological phases, including muscle protein, lipids, carbohydrates, and minerals [15]. Additional functionality allows for calculating sorbed concentrations, extraction efficiencies, and freely dissolved analyte concentrations specifically for neutral molecules—a critical limitation noted in the database documentation [15].

Specialized Research Tools and Calculators

Beyond its repository function, the LSER database incorporates specialized calculation modules for specific experimental scenarios relevant to pharmaceutical research:

- Solvent fraction calculations: Determines solute distribution across single or multiple solvent volumes

- Thermodesorption optimization: Computes optimal parameters for thermal desorption experiments

- Solute loss estimation: Quantifies maximal solute loss during solvent blow-down with nitrogen

- Membrane permeability prediction: Forecasts compound permeability through Caco-2/MDCK monolayers with input for fraction of neutral species at experimental pH [15]

These integrated tools provide drug development professionals with actionable parameters for experimental design while creating opportunities for validation against independent datasets.
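
Because the LSER framework applies to neutral molecules, the permeability tool asks for the fraction of neutral species at the experimental pH. A minimal sketch of how that input could be estimated for a monoprotic compound, using the Henderson-Hasselbalch relationship, is shown here. The function name and the pKa value in the example are illustrative assumptions, and polyprotic or zwitterionic compounds need a fuller speciation model.

```python
def neutral_fraction(pH, pKa, kind):
    """Fraction of a monoprotic compound present as the neutral species.

    kind = "acid": HA <-> A- + H+, neutral form is HA
    kind = "base": BH+ <-> B + H+, neutral form is B
    """
    if kind == "acid":
        return 1.0 / (1.0 + 10.0 ** (pH - pKa))
    if kind == "base":
        return 1.0 / (1.0 + 10.0 ** (pKa - pH))
    raise ValueError("kind must be 'acid' or 'base'")

# Hypothetical weak acid with pKa 4.4 at pH 7.4: about 0.1% neutral.
print(f"{neutral_fraction(7.4, 4.4, 'acid'):.4f}")
```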

Experimental Protocols for LSER Database Utilization

Protocol for Partition Coefficient Determination and Validation

Objective: Determine and validate water-to-organic solvent partition coefficients using LSER database predictions.

Materials:

- UFZ-LSER database access (version 4.0 or newer)

- Standard solvent systems (n-octanol, alkanes, ethyl acetate)

- Chemical standards with known partition coefficients

- HPLC system with UV detection for concentration verification

Methodology:

- Query construction: Identify target compounds in the LSER database using systematic name or database ID

- Descriptor verification: Confirm availability of all six molecular descriptors (Vx, L, E, S, A, B)

- System selection: Choose appropriate solvent system for partitioning calculation

- Calculation execution: Implement the LSER equation for partition coefficient prediction

- Experimental validation: Measure partition coefficients experimentally using shake-flask method

- Statistical comparison: Calculate correlation coefficients and mean absolute error between predicted and observed values

This protocol enables researchers to quantitatively assess the predictive accuracy of the LSER database for specific compound classes while generating independent validation data.
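
The statistical comparison in the final step can be sketched with the standard library alone. The predicted and observed log P values here are hypothetical placeholders, and the function names are illustrative.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def mean_absolute_error(predicted, observed):
    """Mean absolute difference between predicted and observed values."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(predicted)

# Hypothetical log P values (NOT real measurements).
predicted = [2.13, 0.90, 3.40, 1.25]
observed = [2.10, 1.00, 3.30, 1.45]

print(f"r   = {pearson_r(predicted, observed):.3f}")
print(f"MAE = {mean_absolute_error(predicted, observed):.4f}")
```

A high correlation coefficient alone can hide a systematic offset, so reporting the mean absolute error (or RMSE) alongside it gives a more complete picture.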

Protocol for Biopartitioning Prediction in Drug Development

Objective: Predict and validate compound distribution in biological systems for pharmacokinetic modeling.

Materials:

- LSER database with biopartitioning module

- Physiological media (plasma, artificial digestive fluids)

- Equilibrium dialysis apparatus

- LC-MS/MS for compound quantification

Methodology:

- Physiological parameter input: Define composition of biological phases (protein, lipid, carbohydrate percentages)

- Calculation setup: Configure the LSER biopartitioning module with appropriate physiological parameters

- Sorbed concentration prediction: Calculate the fraction of compound in each biological phase

- Experimental measurement: Conduct equilibrium dialysis experiments with physiological media

- Mass balance verification: Confirm recovery of administered compound across all phases

- Model refinement: Adjust physiological parameters if systematic prediction errors are observed

This systematic approach enables drug development professionals to evaluate the utility of LSER predictions for in vivo distribution forecasting while highlighting potential limitations for specific chemical classes.
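
The phase-distribution and mass-balance steps can be illustrated with a generic equilibrium calculation. This sketch is not the database's biopartitioning module, whose internals are not described here. It assumes each biological phase has a known phase/water partition coefficient and volume, and that the compound is fully equilibrated. All names and numbers are hypothetical.

```python
def phase_fractions(log_k, volumes, water_volume):
    """Equilibrium fraction of compound in each phase.

    log_k:        dict phase -> log10 of the phase/water partition coefficient
    volumes:      dict phase -> phase volume (same units as water_volume)
    water_volume: volume of the aqueous phase
    The sorption capacity of a phase is K * V; water has K = 1 by definition.
    """
    capacity = {phase: (10.0 ** log_k[phase]) * volumes[phase] for phase in log_k}
    capacity["water"] = water_volume
    total = sum(capacity.values())
    return {phase: cap / total for phase, cap in capacity.items()}

def mass_balance_recovery(measured_amounts, dosed_amount):
    """Fraction of the dosed compound recovered across all measured phases."""
    return sum(measured_amounts.values()) / dosed_amount

# Hypothetical tissue: 5% lipid, 15% protein, 80% water by volume.
fractions = phase_fractions(
    log_k={"lipid": 3.0, "protein": 1.0},
    volumes={"lipid": 0.05, "protein": 0.15},
    water_volume=0.80,
)
for phase, frac in fractions.items():
    print(f"{phase:8s} {frac:.3f}")

recovery = mass_balance_recovery({"water": 0.20, "lipid": 0.75}, dosed_amount=1.0)
print(f"recovery {recovery:.2f}")
```

Recoveries well below 1 would point to losses (adsorption to the apparatus, degradation, volatilization) that should be resolved before adjusting any model parameters.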

Data Presentation and Comparative Analysis

LSER Molecular Descriptors for Representative Compounds

Table 1: Experimentally-Derived LSER Molecular Descriptors for Selected Compounds

| Compound Name | Database ID | Vx | L | E | S | A | B |

|---|---|---|---|---|---|---|---|

| 1,2-dichloroethane | 1 | 0.661 | - | 0.416 | 0.647 | 0.105 | 0.127 |

| Benzene | 9 | 0.716 | 2.786 | 0.610 | 0.520 | 0.000 | 0.140 |

| Aniline | 8 | 0.816 | 3.934 | 0.955 | 0.818 | 0.260 | 0.410 |

| Chloroform | 16 | 0.616 | 2.448 | 0.425 | 0.490 | 0.150 | 0.020 |

| Ethyl acetate | 25 | 0.858 | 2.314 | 0.228 | 0.706 | 0.000 | 0.516 |

| Butan-1-ol | 12 | 0.687 | 2.601 | 0.224 | 0.420 | 0.370 | 0.480 |

Data sourced from the UFZ-LSER database v4.0 [15]. Note that some L values require calculation from gas-chromatographic retention data.

System Parameters for Common Partitioning Systems

Table 2: LSER System Coefficients for Selected Partitioning Systems

| Partitioning System | c_p | e_p | s_p | a_p | b_p | v_p |

|---|---|---|---|---|---|---|

| Water/Octanol | 0.088 | 0.562 | -1.054 | 0.034 | -3.460 | 3.814 |

| Water/Hexane | 0.326 | 0.427 | -1.007 | -3.389 | -4.855 | 4.260 |

| Water/Ethyl Acetate | 0.222 | 0.492 | -0.945 | -0.032 | -3.632 | 3.704 |

| Blood/Air | -0.548 | 0.736 | 1.846 | 2.509 | 3.204 | 0.643 |

Representative system parameters demonstrating how solvent environments are characterized in the LSER framework [8].
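
As a worked example, the condensed-phase equation can be evaluated by combining the benzene descriptors and the Water/Octanol coefficients listed in the two tables above. The class and function names are illustrative choices, not part of any LSER software.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SoluteDescriptors:
    E: float   # excess molar refraction
    S: float   # dipolarity/polarizability
    A: float   # hydrogen bond acidity
    B: float   # hydrogen bond basicity
    Vx: float  # McGowan's characteristic volume

@dataclass(frozen=True)
class SystemCoefficients:
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float

def predict_log_p(solute, system):
    """Evaluate log P = c + e*E + s*S + a*A + b*B + v*Vx."""
    return (system.c
            + system.e * solute.E
            + system.s * solute.S
            + system.a * solute.A
            + system.b * solute.B
            + system.v * solute.Vx)

# Values copied from the descriptor and system-coefficient tables above.
benzene = SoluteDescriptors(E=0.610, S=0.520, A=0.000, B=0.140, Vx=0.716)
water_octanol = SystemCoefficients(c=0.088, e=0.562, s=-1.054,
                                   a=0.034, b=-3.460, v=3.814)

log_p = predict_log_p(benzene, water_octanol)
print(f"Predicted log P (benzene, water/octanol): {log_p:.2f}")  # 2.13
```

The individual products (for example v*Vx = 2.73 and b*B = -0.48 here) show which interactions drive the partitioning: cavity formation and dispersion favor octanol, while hydrogen-bond basicity favors water.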

Visualization of LSER Database Workflows

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for LSER Database Validation Studies

| Reagent/Material | Specifications | Research Function | Application Context |

|---|---|---|---|

| n-Octanol | HPLC grade, ≥99% purity | Reference solvent for lipophilicity determination | Water/octanol partition coefficient studies |

| n-Hexadecane | Analytical standard, ≥99% purity | Reference solvent for L descriptor determination | Gas-liquid partition coefficient measurements |

| Chemical Standards | Certified reference materials | Method validation and calibration | Quality control for experimental measurements |

| Equilibrium Dialysis Membranes | Molecular weight cutoff 500-1000 Da | Separation of aqueous and organic phases | Biopartitioning studies |

| Buffer Systems | pH 2.0, 7.4, physiological | Simulate biological conditions | Physiologically-relevant partitioning |

| HPLC System | UV/Vis and MS detection | Compound quantification | Experimental validation of predicted values |

| Gas Chromatograph | Flame ionization detector | Determination of gas-liquid partition coefficients | Experimental L descriptor determination |

Comparative Analysis with Alternative Databases

When contextualizing the LSER database within the research ecosystem, it's valuable to recognize alternative database structures with different specialized functions. The LASER (Learning Assisted Strain EngineeRing) database represents a specialized repository for metabolic engineering designs, cataloging 417 genetically-defined strain designs from 310 published studies [16]. This database contains 2,661 documented genetic modifications across E. coli and S. cerevisiae strains, providing a standardized framework for metabolic engineering but serving a fundamentally different research purpose than the solvation-focused LSER database.

For researchers validating LSER models, understanding this distinction is critical when searching for relevant datasets. While both databases employ formalized standards for data curation and deposition, the LASER metabolic engineering database focuses on biological strain design, whereas the UFZ-LSER database specializes in physicochemical properties and partitioning behavior. This differentiation highlights the importance of domain-specific database selection when designing validation studies.

The UFZ-LSER database provides an extensively characterized platform for predicting solute partitioning behavior across diverse chemical and biological systems. Its structured organization around the six Abraham molecular descriptors creates a consistent framework for forecasting thermodynamic properties relevant to drug development. For researchers engaged in LSER model validation with independent datasets, the database offers comprehensive reference values and calculation tools that facilitate rigorous comparative analysis.

Successful implementation of the LSER database in validation workflows requires careful attention to its domain of applicability—particularly its restriction to neutral molecules—and systematic execution of the experimental protocols outlined herein. By leveraging the database's computational tools alongside independent experimental measurements, researchers can quantitatively assess prediction accuracy, identify potential limitations for specific compound classes, and contribute to the ongoing refinement of these valuable predictive models in pharmaceutical and environmental research.

Linear Solvation Energy Relationships (LSERs) represent a cornerstone predictive model in environmental chemistry, pharmaceutical sciences, and chemical engineering for estimating partition coefficients that dictate solute transfer between phases. The Abraham LSER model, a particularly successful implementation, quantifies the complex interplay of intermolecular forces governing solvation through a mathematically elegant framework [8]. These models transform the challenge of predicting free-energy-related properties—such as partition coefficients, solubility, and chromatographic retention—into manageable linear equations based on molecular descriptors [14]. The robustness of LSER predictions stems from their grounding in solution thermodynamics, providing a physically meaningful basis for applications ranging from environmental fate modeling to drug delivery optimization [8] [17].

The core LSER formalism operates on a simple yet powerful principle: the partitioning of a solute between two phases can be described by linear combinations of molecular descriptors that capture the solute's capacity for specific intermolecular interactions [14]. Two primary equations form the backbone of this approach for different partitioning scenarios. For solute transfer between two condensed phases (such as octanol-water or polymer-water systems), the appropriate LSER equation takes the form: log(P) = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x [8] [14]. In this equation, the uppercase letters represent solute-specific molecular descriptors, while the lowercase coefficients characterize the complementary properties of the solvent system or partitioning phases.

For characterizing gas-to-solvent partitioning behavior, a slightly different equation is employed: log(K_S) = c_k + e_k E + s_k S + a_k A + b_k B + l_k L [8]. The variables in these equations represent well-defined molecular properties: E represents the excess molar refraction, S characterizes dipolarity/polarizability, A and B quantify hydrogen-bond acidity and basicity respectively, V_x is McGowan's characteristic molecular volume, and L represents the gas-hexadecane partition coefficient at 298 K [8] [14]. The system parameters (c, e, s, a, b, v, l) are determined through multilinear regression of experimental partitioning data and remain constant for a given phase system, enabling prediction for any solute with known descriptors [8].

LSER Performance and Benchmarking Analysis

Quantitative Performance Metrics

The predictive accuracy of LSER models has been extensively validated across diverse chemical systems and application domains. Independent benchmarking studies demonstrate that LSERs achieve remarkable precision when applied to partition coefficient prediction, even when leveraging predicted rather than experimentally determined solute descriptors. The following table summarizes key performance metrics reported in recent rigorous evaluations:

Table 1: Performance Metrics of LSER Models in Partition Coefficient Prediction

| Application Context | Dataset Size | Validation Approach | R² | RMSE | Reference |

|---|---|---|---|---|---|

| LDPE-Water Partitioning | 156 compounds | Full dataset | 0.991 | 0.264 | [4] |

| LDPE-Water Partitioning | 52 compounds | Independent validation with experimental descriptors | 0.985 | 0.352 | [4] |

| LDPE-Water Partitioning | 52 compounds | Validation with predicted descriptors | 0.984 | 0.511 | [4] |

| HPLC Retention Prediction | Variable | QSPR-based LSER | Comparable to conventional methods | Not reported | [18] |

The LSER model for Low-Density Polyethylene (LDPE)-water partitioning performs very well across 156 chemically diverse compounds (R² = 0.991, RMSE = 0.264) [4]. Performance degrades only slightly on the independent validation set (R² = 0.985, RMSE = 0.352), which indicates good generalizability beyond the training data [4]. With predicted rather than experimental descriptors, RMSE rises to 0.511 while R² stays at 0.984, so the model remains usable for extractables that have no experimentally determined LSER solute descriptors [4].

Comparative Analysis with Alternative Methods

LSER models occupy a unique position in the landscape of partition coefficient prediction methods, offering a balance of theoretical foundation, predictive accuracy, and interpretability that distinguishes them from both purely empirical and fully mechanistic approaches. The following table compares LSER against other common prediction methodologies:

Table 2: Comparison of Partition Coefficient Prediction Methods

| Method Type | Representative Examples | Required Input | RMSE Range | Strengths | Limitations |

|---|---|---|---|---|---|

| LSER Models | Abraham LSER | Molecular descriptors (E, S, A, B, V, L) | 0.264-0.511 | Strong theoretical basis; interpretable parameters; wide applicability | Requires descriptor availability |

| Fragment-Based Methods | CLOGP, XLOGP2 | Molecular structure | 1.23-1.80 | Does not require experimental data; interpretable contributions | Limited fragment libraries; missing fragments problematic |

| Molecular Simulation Approaches | iLOGP, ALOGPS | Molecular structure | 1.02-2.03 | Pure computational prediction; no experimental data needed | Computationally intensive; complex parameterization |

| Molecular Formula-Based | MF-LOGP | Molecular formula only | 0.77 | Minimal input requirements; rapid screening | Limited structural information; lower accuracy |

When compared with the molecular formula-based MF-LOGP method, which achieves RMSE = 0.77 using only compositional information [19], the LSER models summarized above report lower errors but require more detailed molecular characterization. Because these RMSE values come from different partitioning systems and data sets, the comparison is indicative rather than a head-to-head benchmark. A further advantage of LSER approaches is the insight they provide into the specific intermolecular interactions driving partitioning behavior, unlike black-box machine learning models or fragment-based methods that offer limited physicochemical interpretation [8] [14].

Experimental Protocols and Methodologies

Core Partition Coefficient Determination

The experimental foundation for LSER model development relies on rigorous measurement of partition coefficients across well-defined chemical systems. For polymer-water partitioning—particularly relevant to pharmaceutical container compatibility studies—the standard protocol involves equilibrium partitioning followed by chemical analysis [4]. The experimental workflow begins with preparation of polymer specimens (e.g., Low-Density Polyethylene) of standardized dimensions and surface area, which are thoroughly pre-cleaned to remove manufacturing residues or contaminants [4]. These specimens are immersed in aqueous solutions containing precisely known concentrations of test solutes, covering a structurally diverse range of chemicals to ensure broad model applicability.

The experimental systems are maintained at constant temperature (typically 25°C or 37°C for pharmaceutical applications) with continuous agitation to ensure efficient mass transfer without causing mechanical degradation of the polymer matrix [4]. Equilibrium establishment is confirmed through time-course measurements, with typical equilibration periods ranging from 24 hours to several days depending on solute properties and polymer characteristics. Following equilibration, the solute concentration in the aqueous phase is quantified using appropriate analytical methods (most commonly High-Performance Liquid Chromatography with UV or mass spectrometric detection), while the concentration in the polymer phase is determined either by direct extraction and analysis or by mass balance calculation [4]. The partition coefficient is then calculated as K = Cpolymer / Cwater, where Cpolymer represents the equilibrium concentration in the polymer phase and Cwater the equilibrium concentration in the aqueous phase, with final results typically reported as log(K) values [4].
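
When the polymer-phase concentration is obtained by mass balance rather than direct extraction, the calculation can be sketched as follows. The sketch assumes that all solute lost from the aqueous phase has been taken up by the polymer (no losses to vessel walls, air, or degradation) and expresses the polymer concentration per unit mass, so K carries units of L/kg. The function name and example numbers are hypothetical.

```python
import math

def log_k_polymer_water(c0_mg_per_l, c_eq_mg_per_l, water_volume_l, polymer_mass_kg):
    """log10 of K = C_polymer / C_water from aqueous depletion.

    C_polymer = (C0 - C_eq) * V_water / m_polymer   [mg/kg]
    C_water   = C_eq                                [mg/L]
    """
    if not 0.0 < c_eq_mg_per_l < c0_mg_per_l:
        raise ValueError("require 0 < C_eq < C0 for a depletion-based estimate")
    c_polymer = (c0_mg_per_l - c_eq_mg_per_l) * water_volume_l / polymer_mass_kg
    return math.log10(c_polymer / c_eq_mg_per_l)

# Hypothetical experiment: 1.0 mg/L initial, 0.2 mg/L at equilibrium,
# 100 mL of water and 1 g of polymer.
log_k = log_k_polymer_water(1.0, 0.2, 0.100, 0.001)
print(f"log K = {log_k:.2f}")  # K = 400 L/kg
```

Depletion-based estimates become unreliable when C_eq is very close to C0 or to the detection limit, which is one reason direct extraction is preferred for weakly or very strongly sorbing solutes.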

LSER Model Calibration Protocol

The transformation of experimental partition coefficient data into predictive LSER models follows a standardized computational protocol. The process begins with compilation of experimentally determined log(K) values for a training set of compounds, ideally encompassing 100+ chemically diverse solutes to ensure robust parameter estimation [4]. For each compound in the training set, the six Abraham solute descriptors (E, S, A, B, V, and L) are obtained from experimental measurements or curated databases such as the UFZ-LSER database [15]. These data are organized into a matrix format with compounds as rows and descriptors as columns, with the corresponding log(K) values as the dependent variable.

Multiple linear regression is performed using the equation log(K) = c + eE + sS + aA + bB + vV for transfer between condensed phases (with the vV term replaced by lL for gas-to-solvent systems), where the system-specific coefficients (c, e, s, a, b, and v or l) are determined through least-squares minimization [4] [8]. The statistical significance of each coefficient is evaluated through t-tests, with non-significant terms (typically p > 0.05) potentially excluded from the final model to reduce overparameterization. Model performance is quantified using R² (coefficient of determination), RMSE (root mean square error), and leave-one-out cross-validation metrics to assess predictive ability [4]. For independent validation, the calibrated model is applied to a separate test set of compounds not included in the training process, with performance metrics compared against the training set results to evaluate generalizability [4].
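
The coefficient t-statistics and the leave-one-out metric can be computed directly from an ordinary least-squares fit. The sketch below uses the five-descriptor condensed-phase form with synthetic data, and one coefficient is deliberately set to zero to mimic a non-significant term. The leave-one-out residuals use the exact identity e_i / (1 - h_i), where h_i is the leverage, so no refitting loop is needed. All names and numbers are illustrative assumptions.

```python
import numpy as np

def ols_diagnostics(X, y):
    """Return (coefficients, t-statistics, R², leave-one-out Q²) for an OLS fit."""
    n = len(y)
    design = np.column_stack([np.ones(n), X])          # intercept + descriptors
    p = design.shape[1]
    xtx_inv = np.linalg.inv(design.T @ design)
    coef = xtx_inv @ design.T @ y
    resid = y - design @ coef

    # Standard errors and t-statistics of each coefficient.
    sigma2 = resid @ resid / (n - p)
    t_stats = coef / np.sqrt(sigma2 * np.diag(xtx_inv))

    # Leverages h_i and exact leave-one-out residuals e_i / (1 - h_i).
    leverage = np.einsum("ij,jk,ik->i", design, xtx_inv, design)
    loo_resid = resid / (1.0 - leverage)

    ss_tot = np.sum((y - y.mean()) ** 2)
    r2 = 1.0 - resid @ resid / ss_tot
    q2 = 1.0 - loo_resid @ loo_resid / ss_tot
    return coef, t_stats, r2, q2

# Synthetic training set (assumption: NOT real data); columns E, S, A, B, V.
rng = np.random.default_rng(1)
X = rng.uniform(0.0, 2.0, size=(120, 5))
true_coef = np.array([0.3, 1.0, -1.5, 0.0, -4.0, 3.5])   # 'a' is truly zero
y = true_coef[0] + X @ true_coef[1:] + rng.normal(0.0, 0.3, size=120)

coef, t_stats, r2, q2 = ols_diagnostics(X, y)
for name, c, t in zip(["c", "e", "s", "a", "b", "v"], coef, t_stats):
    print(f"{name}: {c:+.3f}  (t = {t:+.1f})")
print(f"R2 = {r2:.3f}, Q2(LOO) = {q2:.3f}")
```

Converting t-statistics to p-values requires the t distribution with n - p degrees of freedom (for example via a statistics library). A Q² that falls far below R² is a common warning sign of overfitting.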

In Silico HPLC Method Development

A specialized application of LSER modeling combines quantitative structure-property relationships (QSPR) with LSER and linear solvent strength (LSS) theory to predict high-performance liquid chromatography (HPLC) retention factors without experimental measurements [18]. The protocol begins with obtaining molecular descriptors from simplified molecular input line entry system (SMILES) string representations of analyte molecules [18]. These descriptors serve as inputs to QSPR models that predict solute-dependent parameters for subsequent LSER analysis.

The core LSER equation for chromatographic systems takes the form: log(k) = c + eE + sS + aA + bB + vV, where the system parameters (c, e, s, a, b, v) depend on both stationary phase characteristics and mobile phase composition [18]. These system parameters are pre-determined for various chromatographic conditions through calibration with known analyte mixtures. For retention prediction across gradient conditions, the LSER model is integrated with LSS theory, which describes the relationship between retention factor (k) and mobile phase composition (ϕ) as: log(k) = log(kw) - Sϕ, where kw is the extrapolated retention factor in pure water and S is the solvent strength parameter [18]. This combined approach enables prediction of retention times for novel compounds without experimental measurements, significantly accelerating HPLC method development [18].
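
For a single isocratic mobile phase composition, the LSS relationship can be evaluated directly. In this minimal sketch, log(kw) is taken as a given input (in the combined approach it would come from the LSER equation), and the retention time follows from the standard relation t_R = t0(1 + k), where t0 is the column dead time. Gradient elution requires integrating k over the changing composition and is not covered. The numbers are hypothetical.

```python
def retention_factor(log_kw, solvent_strength, phi):
    """LSS model: log10(k) = log10(kw) - S * phi, with phi as a volume fraction."""
    if not 0.0 <= phi <= 1.0:
        raise ValueError("phi must be a volume fraction between 0 and 1")
    return 10.0 ** (log_kw - solvent_strength * phi)

def retention_time(dead_time_min, k):
    """Isocratic retention time t_R = t0 * (1 + k)."""
    return dead_time_min * (1.0 + k)

# Hypothetical analyte: log(kw) = 3.0, S = 4.0, 50% organic modifier, t0 = 1 min.
k = retention_factor(log_kw=3.0, solvent_strength=4.0, phi=0.5)
print(f"k = {k:.1f}, t_R = {retention_time(1.0, k):.1f} min")
```

Note that the solvent strength parameter S in the LSS equation is unrelated to the dipolarity/polarizability descriptor S in the LSER equation, so the two are best given distinct names in code.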

Visualization of LSER Workflow

[Diagram: LSER Model Development and Application Workflow, covering the integrated experimental and computational steps]

Successful implementation of LSER models requires access to specialized databases, computational tools, and chemical resources. The following table details essential components of the LSER research toolkit:

Table 3: Essential Research Resources for LSER Applications

| Resource Category | Specific Tool/Resource | Key Functionality | Access Information |

|---|---|---|---|

| Solute Descriptor Databases | UFZ-LSER Database | Curated repository of experimental solute descriptors | Freely available at https://www.ufz.de/lserd/ [15] |

| Partition Coefficient Prediction | LSER Database Calculation Tools | Online calculation of partition coefficients for user-defined systems | Integrated with UFZ-LSER database [15] |

| Descriptor Prediction Tools | QSPR Prediction Software | In silico estimation of LSER descriptors from molecular structure | Various commercial and academic packages [4] |

| Chemical Standards | Certified Reference Materials | Experimental determination of partition coefficients | Commercial suppliers (e.g., Sigma-Aldrich, Merck) |

| Chromatographic Applications | HPLC-LSER Parameters | System-specific coefficients for retention prediction | Research literature and specialized databases [18] |

| Polymer Characterization | Standard Polymer Films | Well-characterized substrates for partitioning studies | Commercial manufacturers (e.g., Goodfellow, American Polymer Standards) |

The UFZ-LSER database represents a particularly critical resource, providing freely accessible curated descriptors for thousands of compounds in a web-based interface that enables direct calculation of partition coefficients for user-defined systems [15]. For applications involving complex biological partitioning, the database includes specialized tools for predicting biopartitioning behavior, plasma protein binding, and permeability through cell monolayers [15]. Complementing this experimental data, QSPR prediction tools provide estimated LSER descriptors for novel compounds not yet included in experimental databases, extending the applicability of LSER models to emerging contaminants or newly synthesized pharmaceuticals [4].

LSER models have established themselves as indispensable tools for predicting partition coefficients across diverse chemical, pharmaceutical, and environmental applications. The robust theoretical foundation of these models in solvation thermodynamics distinguishes them from purely empirical approaches, while their linear formalism ensures practical implementation without excessive computational demands [8] [14]. Benchmarking studies consistently demonstrate exceptional predictive accuracy, with R² values exceeding 0.98 for validated polymer-water partitioning systems and maintained performance even when employing predicted rather than experimentally determined molecular descriptors [4].

The comparative analysis presented in this guide reveals that LSER approaches offer a unique combination of accuracy, interpretability, and versatility unmatched by fragment-based methods, molecular simulation techniques, or simplified formula-based predictions [4] [19]. The ongoing integration of LSER with complementary thermodynamic frameworks like COSMO-RS and equation-of-state models promises further enhancements to predictive capability across extended temperature and pressure ranges [8] [14]. For researchers and product developers requiring reliable partition coefficient predictions, LSER models represent the optimal balance of physical meaningfulness and practical utility, particularly when supported by the extensive descriptor databases and computational tools now available to the scientific community [15].

A Practical Workflow for Building and Applying Robust LSER Models

The Critical Role of Data Curation in LSER Model Validation

The predictive accuracy of Linear Solvation Energy Relationship (LSER) models is fundamentally constrained by the quality of the experimental data used for their calibration. Robust validation with independent data sets is paramount, as models cannot be more reliable than the underlying data from which they are derived. Recent analyses reaffirm that poor data reproducibility and quality are significant challenges in chemical and toxicological research, directly impacting the confidence in model predictions [20]. Data curation is not a preliminary step but a critical, integral process that ensures the removal of inconsistencies, duplicates, and errors that otherwise lead to over-optimistic and non-generalizable models [20]. This guide provides a structured, step-by-step protocol for curating a high-quality initial training data set, specifically framed within LSER model validation research.

Step 1: Data Collection and Sourcing

The first step involves gathering a comprehensive and chemically diverse set of experimental data from reliable sources.

- Objective: Assemble a broad data pool that adequately represents the chemical space and endpoint of interest (e.g., partition coefficients, solvation energies) for your LSER model.

- Protocol:

- Identify Reputable Databases: Source data from curated databases such as the LSER database [8], ChEMBL (which standardizes units to nanomolar) [20], and other peer-reviewed literature.

- Prioritize Experimental Data: Give precedence to data from guideline studies. Be cautious of data predicted by other QSAR models or read-across in regulatory databases like REACH, as these can introduce circularity and inflate perceived accuracy [20].

- Capture Metadata: Collect all relevant metadata, including experimental conditions, assay protocols, and chemical purity. For LSER models, this includes the solute descriptors (Vx, E, S, A, B, L) and the corresponding measured property (e.g., log P) [4] [8].

Step 2: Data Curation and Harmonization

This step focuses on cleaning and standardizing the collected data to ensure consistency and reliability.

- Objective: Eliminate data errors and standardize formats to create a coherent and high-fidelity dataset.

- Protocol:

- Identify and Handle Duplicates: Systematically identify duplicate entries for the same chemical. Analyze them to estimate assay reproducibility and retain a consensus value or the value from the most reliable study [20].

- Standardize Units: Convert all reported values to consistent units. As exemplified by the ChEMBL database, standardizing to molar units (e.g., nanomolar) is critical, as biological effects depend on the number of molecules [20].

- Harmonize Chemical Identifiers: Standardize chemical structures, names, and identifiers (e.g., SMILES, InChIKeys) to resolve representation inconsistencies.

- Annotate Data Quality: Flag data points marked as "not reliable" by source databases [20] or those with incomplete metadata for later review.
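The duplicate-handling step above can be sketched as follows. This is a minimal illustration, assuming records are keyed by a standardized identifier such as an InChIKey and that the median is an acceptable consensus value. The function name `consolidate`, the `max_spread` threshold of 0.5 log units, and all record values are hypothetical placeholders, not taken from the cited sources.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (identifier, measured log K). Placeholder values, not real data.
records = [
    ("KEY-A", 2.10),
    ("KEY-A", 2.30),
    ("KEY-B", 4.00),
    ("KEY-C", 1.00),
    ("KEY-C", 3.50),  # replicate that disagrees strongly with the first
]

def consolidate(records, max_spread=0.5):
    """Group measurements by identifier and split them into two dictionaries.

    consensus: identifier -> median value, for groups whose range is <= max_spread.
    flagged:   identifier -> sorted values, for groups that need manual review.
    The max_spread threshold is an assumed, user-chosen tolerance.
    """
    groups = defaultdict(list)
    for key, value in records:
        groups[key].append(value)

    consensus, flagged = {}, {}
    for key, values in groups.items():
        spread = max(values) - min(values)
        if spread <= max_spread:
            consensus[key] = median(values)
        else:
            flagged[key] = sorted(values)
    return consensus, flagged

consensus, flagged = consolidate(records)
print("consensus:", consensus)
print("flagged for review:", flagged)
```

The spread within each duplicate group also gives a rough estimate of assay reproducibility, which is one reason to analyze the duplicates before collapsing them.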

Step 3: Data Quality Assessment and Filtering

Evaluate the curated dataset to identify and address remaining quality issues.

- Objective: Scrutinize the data for outliers, activity cliffs, and potential errors.

- Protocol:

- Analyze Activity Cliffs: Identify groups of structurally similar compounds with large differences in measured activity. These pose significant challenges for LSER and QSAR models and require careful verification [20].

- Investigate Discordant Results: For chemicals tested in multiple studies, investigate the sources of discordance (e.g., differences in study design, dosing). Set aside compounds with irreconcilable differences for consensus prediction [20].

- Apply LLM-Driven Curation (Advanced): For large datasets, leverage Large Language Models (LLMs) as a cost-effective quality rating system. To mitigate LLM inaccuracies and biases, implement a curation method like DS2 (Diversity-aware Score curation for Data Selection). DS2 uses a learned score transition matrix to correct LLM-rated scores and promotes diversity in the selected data [21].
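The activity-cliff check in the protocol above could be sketched like this. The sketch assumes that Euclidean distance in solute-descriptor space is an acceptable stand-in for structural similarity; in practice similarity is often computed from structural fingerprints instead. The function name `find_cliffs`, both thresholds, and all compound values are hypothetical.

```python
from itertools import combinations
from math import dist

# Hypothetical compounds: name -> ((E, S, A, B, V), measured log K). Placeholder values.
compounds = {
    "cmpd_1": ((0.60, 0.50, 0.00, 0.10, 0.70), 2.0),
    "cmpd_2": ((0.62, 0.52, 0.00, 0.12, 0.72), 4.5),
    "cmpd_3": ((1.50, 1.20, 0.50, 0.60, 1.40), 0.5),
}

def find_cliffs(compounds, max_distance=0.1, min_gap=1.0):
    """Return pairs that are close in descriptor space but far apart in response.

    max_distance and min_gap are assumed thresholds that would need tuning
    for a real data set.
    """
    cliffs = []
    for (name_a, (desc_a, y_a)), (name_b, (desc_b, y_b)) in combinations(compounds.items(), 2):
        similar = dist(desc_a, desc_b) <= max_distance
        discordant = abs(y_a - y_b) >= min_gap
        if similar and discordant:
            cliffs.append((name_a, name_b))
    return cliffs

cliffs = find_cliffs(compounds)
print(cliffs)
```

Flagged pairs are candidates for verification against the original studies, not automatic removals.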

Step 4: Dataset Splitting for Validation

Partition the final curated dataset to enable rigorous model training and validation.

- Objective: Create independent training and validation sets to assess the model's predictive performance on unseen data objectively.

- Protocol:

- Apply a Structured Split: Allocate a significant portion of the data (e.g., ~33%) to an independent validation set. The remaining data is used for model training/calibration [4].

- Ensure Representativeness: The validation set must be chemically diverse and representative of the entire data pool to provide a meaningful test of the model's generalizability [4].
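One simple way to obtain a roughly one-third validation set that spans the full response range is to sort the compounds by measured value and assign every third one to validation. The source does not state how its split was drawn, so this systematic scheme is an assumption; the function name `split_every_third` and the data are hypothetical.

```python
def split_every_third(data, offset=1):
    """Split (identifier, value) pairs into training and validation lists.

    Items are sorted by value, then every third item (starting at `offset`)
    goes to validation, so both sets cover the whole range of the response.
    """
    ordered = sorted(data, key=lambda item: item[1])
    training, validation = [], []
    for index, item in enumerate(ordered):
        if index % 3 == offset:
            validation.append(item)
        else:
            training.append(item)
    return training, validation

# Hypothetical data set of nine compounds with placeholder log K values.
data = [(f"cmpd_{i}", float(i)) for i in range(9)]
training, validation = split_every_third(data)
print(len(training), "training /", len(validation), "validation")
```

Sorting on the response alone does not guarantee chemical diversity. A stricter variant would stratify on compound class or on descriptor ranges as well.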

Experimental Comparison of Curation Methodologies

The table below summarizes the performance outcomes of different data selection and curation strategies, highlighting the impact on model validation.

| Curation Method | Dataset Size | Key Metrics | Performance Outcome | Implications for LSER Validation |
|---|---|---|---|---|
| Baseline (Uncurated Data) | Full dataset (Variable) | Correct Classification Rate (CCR) | 7-24% higher but inflated due to duplicates [20] | Perceived performance is unreliable; high risk of overfitting. |
| Standard Curation | Reduced, variable | R², RMSE | Improved generalizability and reduced error [20] | Essential for establishing a reliable baseline model performance. |
| LLM Rating (GPT-4, LLaMA) | Subset of original | Benchmark task performance | Competitive but sub-optimal due to model-specific biases and rating inaccuracies [21] | A cost-effective but noisy selector; requires further correction. |
| DS2 Curation Pipeline | 3.3% (10k of 300k) | Various alignment benchmarks | Outperformed full dataset training and matched/exceeded human-curated data (LIMA) [21] | Challenges data scaling laws; enables highly efficient, high-quality LSER training sets. |

Detailed Experimental Protocols

Protocol 1: Building a Curated LSER Dataset for Partition Coefficients

This protocol details the creation of a dataset for predicting log Ki,LDPE/W (low-density polyethylene/water partition coefficients) [4].

- Application: LSER model development for polymer-water partitioning.

- Methodology:

- Data Compilation: Collect experimental partition coefficients for a chemically diverse set of compounds.

- Descriptor Acquisition: Obtain experimental LSER solute descriptors (E, S, A, B, Vi) for each compound.

- Data Splitting: Ascribe approximately 33% of the total observations to an independent validation set. The model is calibrated on the remaining training data using the LSER equation:

log Ki,LDPE/W = −0.529 + 1.098 Ei − 1.557 Si − 2.991 Ai − 4.617 Bi + 3.886 Vi [4]

- Validation: Calculate log Ki,LDPE/W for the independent validation set and perform linear regression against experimental values to obtain R² and RMSE [4].
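The validation step can be sketched as below, using the coefficients of the LDPE/water equation quoted above [4]. R² is computed here as the squared Pearson correlation between experimental and predicted values, which equals the R² of a simple linear regression of one on the other. The function names and all validation rows are hypothetical placeholders chosen only to make the example run.

```python
from math import sqrt

# System coefficients from the LDPE/water LSER equation quoted above [4].
COEFFS = {"c": -0.529, "e": 1.098, "s": -1.557, "a": -2.991, "b": -4.617, "v": 3.886}

def predict_log_k(E, S, A, B, V):
    """Evaluate log K(LDPE/W) = c + eE + sS + aA + bB + vV."""
    return (COEFFS["c"] + COEFFS["e"] * E + COEFFS["s"] * S
            + COEFFS["a"] * A + COEFFS["b"] * B + COEFFS["v"] * V)

def rmse(observed, predicted):
    """Root-mean-square error between observed and predicted values."""
    return sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))

def r_squared(observed, predicted):
    """Squared Pearson correlation between observed and predicted values."""
    n = len(observed)
    mean_o = sum(observed) / n
    mean_p = sum(predicted) / n
    cov = sum((o - mean_o) * (p - mean_p) for o, p in zip(observed, predicted))
    var_o = sum((o - mean_o) ** 2 for o in observed)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    return cov ** 2 / (var_o * var_p)

# Hypothetical validation rows: (E, S, A, B, V, experimental log K). Not real data.
validation = [
    (0.0, 0.0, 0.0, 0.0, 1.0, 3.40),
    (0.5, 0.5, 0.0, 0.2, 1.0, 2.10),
    (0.8, 0.9, 0.3, 0.4, 1.2, 0.50),
]
observed = [row[5] for row in validation]
predicted = [predict_log_k(*row[:5]) for row in validation]
print("RMSE:", round(rmse(observed, predicted), 3))
print("R2:  ", round(r_squared(observed, predicted), 3))
```

Reporting RMSE alongside R² matters because a high correlation can coexist with a systematic offset between predicted and experimental values.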

Protocol 2: The DS2 Pipeline for LLM-Driven Data Curation

This modern protocol uses LLMs to select high-quality data for instruction tuning, a principle transferable to LSER data curation [21].

- Application: Efficiently curating a small, high-quality dataset from a large, noisy data pool.

- Methodology:

- LLM Rating: Use pre-trained LLMs (e.g., GPT-4, LLaMA) to generate quality scores for each data sample (e.g., based on rarity, complexity, informativeness).

- Error Modeling: Analyze the LLM-rated scores to learn a "score transition matrix" that models the probability of score errors without needing ground-truth labels.

- Score Curation: Use the learned transition matrix to refine and correct the raw LLM scores.

- Diversity-Aware Selection: Select the final data subset by prioritizing both the curated quality scores and the diversity of the samples to ensure broad coverage [21].
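The final selection step can be illustrated with a simple greedy heuristic that trades quality score against distance to already-selected samples. This is not the DS2 implementation: the score transition matrix and score correction are omitted, the quality scores are assumed to be already curated, and the function `select_diverse`, the `diversity_weight` parameter, and the sample data are all hypothetical.

```python
from math import dist

def select_diverse(samples, k, diversity_weight=1.0):
    """Greedily pick k sample names from (name, quality_score, feature_vector) tuples.

    The first pick is the highest-scoring sample. Each later pick maximizes
    quality_score + diversity_weight * (distance to the nearest selected sample).
    """
    remaining = list(samples)
    first = max(remaining, key=lambda s: s[1])
    selected = [first]
    remaining.remove(first)

    while remaining and len(selected) < k:
        def gain(sample):
            nearest = min(dist(sample[2], chosen[2]) for chosen in selected)
            return sample[1] + diversity_weight * nearest

        best = max(remaining, key=gain)
        selected.append(best)
        remaining.remove(best)
    return [s[0] for s in selected]

# Hypothetical samples: (name, curated quality score, feature vector).
samples = [
    ("a", 0.9, (0.0, 0.0)),
    ("b", 0.8, (0.1, 0.0)),  # high score, but nearly identical to "a"
    ("c", 0.6, (2.0, 2.0)),  # lower score, far from "a"
]
picked = select_diverse(samples, k=2)
print(picked)
```

With `diversity_weight=0` the heuristic reduces to picking the top-scoring samples, which makes the effect of the diversity term easy to inspect.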

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function in Data Curation & LSER Modeling |
|---|---|
| LSER Database | A freely accessible, curated database providing solute descriptors and partition coefficients for building predictive models [8]. |
| ChEMBL Database | A large-scale bioactivity database that standardizes data to nanomolar units, serving as a model for data harmonization [20]. |
| DS2 (Diversity-aware Score Curation) | An advanced computational pipeline that corrects errors in LLM-based data quality ratings and ensures diversity in the selected data [21]. |
| Score Transition Matrix | A core component of the DS2 method that models the probability of rating errors, enabling automated score correction [21]. |
| Abraham Solute Descriptors (Vx, E, S, A, B, L) | The six molecular descriptors that form the basis of the LSER model, quantifying properties like volume, polarity, and hydrogen-bonding [8]. |

Workflow Visualization

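The curation workflow runs through four stages in order: data collection and sourcing, curation and harmonization, quality assessment and filtering, and dataset splitting for validation. (The original workflow diagram is not reproduced here.)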

Key Curation Strategies

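- Prioritize Quality Over Quantity: In LLM instruction-tuning benchmarks, models trained on a small, carefully curated subset (as little as 3.3% of the original data) outperformed models trained on the full, noisy dataset, challenging traditional data-scaling laws [21]. The same principle is proposed here for LSER training sets.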

- Systematically Address Duplicates: The presence of duplicate measurements is a primary source of performance inflation in uncurated models. Their identification and consolidation are non-negotiable [20].

- Validate with Independent Data: Always validate the final LSER model on a completely independent data set that was not used during the model calibration process. This is the gold standard for evaluating true predictive power [4].

- Embrace Modern Curation Tools: Leverage advanced methods like the DS2 pipeline to enhance traditional curation, mitigating human and machine-generated biases in data quality assessment [21].

Feature Selection and Pre-processing of LSER Molecular Descriptors

Linear Solvation Energy Relationship (LSER) models are a cornerstone of quantitative structure-property relationship (QSPR) studies, providing a robust framework for predicting solvation-related physicochemical properties critical to drug development, environmental science, and materials research [18]. The predictive accuracy and interpretability of these models depend fundamentally on the selection and pre-processing of molecular descriptors that quantify key solute-solvent interactions. As the field moves toward greater validation with independent data sets, the methodological rigor applied to descriptor handling becomes increasingly important for ensuring model reliability and translational utility.

This guide provides a comparative analysis of contemporary approaches for feature selection and pre-processing of LSER molecular descriptors, evaluating their performance against experimental benchmarks and emerging computational alternatives. By objectively examining the empirical evidence supporting different methodologies, we aim to equip researchers with practical frameworks for optimizing LSER model development and validation.

LSER Descriptor Frameworks: Comparative Analysis

Traditional LSER Descriptors

The established Abraham LSER framework uses five core solute descriptors that capture distinct molecular interaction properties, as summarized in Table 1. A sixth descriptor, L (the logarithm of the gas-hexadecane partition coefficient), takes the place of V in models of gas-to-condensed-phase transfer. These descriptors have been extensively validated across numerous chemical systems and form the basis for most historical LSER applications in pharmaceutical research [18].

Table 1: Traditional Abraham LSER Solute Descriptors

| Descriptor | Symbol | Molecular Property Represented | Typical Range |
|---|---|---|---|
| Excess molar refraction | E | Electron lone pair interactions and refractivity | 0-2.5 |
| Dipolarity/Polarizability | S | Dipole-dipole and dipole-induced dipole interactions | 0-2 |
| Hydrogen-bond acidity | A | Solute's ability to donate a hydrogen bond | 0-1 |
| Hydrogen-bond basicity | B | Solute's ability to accept a hydrogen bond | 0-1 |
| McGowan's molecular volume | V | Molecular size and dispersion interactions | 0-4 |

The traditional LSER model predicts free-energy-related properties, such as chromatographic retention factors, using the linear form log k = c + eE + sS + aA + bB + vV, where the lowercase letters are system-specific coefficients that do not depend on the solute [18]. This framework has demonstrated strong predictive capability, with reported R² values exceeding 0.99 for low-density polyethylene/water partition coefficients [4].
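Because the model is linear in the system coefficients, they can be obtained by ordinary least squares from a set of solutes with known descriptors and measured log k. The sketch below solves the normal equations in plain Python, assuming more solutes than coefficients and linearly independent descriptor columns. The function name `fit_lser`, the synthetic solutes, and the "true" coefficients are hypothetical and serve only to show that the fit recovers known values.

```python
def fit_lser(descriptors, log_k):
    """Least-squares fit of log k = c + eE + sS + aA + bB + vV.

    descriptors: list of (E, S, A, B, V) tuples; log_k: list of measured values.
    Returns the coefficients as [c, e, s, a, b, v].
    """
    rows = [(1.0, *d) for d in descriptors]  # leading 1.0 is the intercept column
    n = len(rows[0])
    # Normal equations (X^T X) beta = X^T y, stored as an augmented matrix.
    aug = [[sum(r[i] * r[j] for r in rows) for j in range(n)]
           + [sum(r[i] * y for r, y in zip(rows, log_k))] for i in range(n)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * p for x, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]

# Synthetic solutes (E, S, A, B, V): placeholder values, not real compounds.
solutes = [
    (0.0, 0.0, 0.0, 0.0, 0.5),
    (1.0, 0.0, 0.0, 0.0, 1.0),
    (0.0, 1.0, 0.0, 0.0, 1.5),
    (0.0, 0.0, 1.0, 0.0, 2.0),
    (0.0, 0.0, 0.0, 1.0, 2.5),
    (1.0, 1.0, 1.0, 1.0, 1.0),
    (0.5, 0.2, 0.1, 0.3, 3.0),
]
true_coeffs = [0.5, 1.0, -1.5, -3.0, -4.5, 4.0]  # assumed c, e, s, a, b, v
log_k = [true_coeffs[0] + sum(c * x for c, x in zip(true_coeffs[1:], d)) for d in solutes]

fitted = fit_lser(solutes, log_k)
print([round(value, 6) for value in fitted])
```

For real calibration sets a library routine based on QR or SVD is usually preferable to the normal equations, which become numerically fragile when descriptors are strongly correlated.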

Emerging QC-LSER Descriptors

Recent advancements have introduced quantum chemical-based LSER descriptors (QC-LSER) that leverage molecular surface charge distributions (σ-profiles) obtained from density functional theory (DFT) calculations [22]. These descriptors offer a more fundamental approach to characterizing hydrogen-bonding interactions:

- Ah: HB acidity descriptor representing proton donor capacity

- Bh: HB basicity descriptor representing proton acceptor capacity

For molecules with single interaction sites, the HB interaction free energy is calculated as: -ΔG₁₂ʰᵇ = 5.71(α₁β₂ + β₁α₂) kJ/mol at 25°C, where α and β are effective acidity and basicity descriptors [22]. This approach provides a theoretically grounded alternative to the empirically derived A and B parameters in traditional LSER.
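A short numerical sketch of the expression above follows. It also checks that the 5.71 kJ/mol prefactor coincides numerically with 2.303RT at 298.15 K. The function name `hb_free_energy` and the α and β values used in the example are hypothetical, not taken from [22].

```python
from math import log

R = 8.314462618  # gas constant in J/(mol K)
T = 298.15       # 25 °C expressed in kelvin

# ln(10) * R * T in kJ/mol; this evaluates to about 5.708, matching the 5.71 prefactor.
PREFACTOR = log(10) * R * T / 1000.0

def hb_free_energy(alpha1, beta1, alpha2, beta2, prefactor=5.71):
    """Return -ΔG(hb) in kJ/mol for two single-site molecules at 25 °C.

    alpha and beta are the effective acidity and basicity of molecules 1 and 2.
    """
    return prefactor * (alpha1 * beta2 + beta1 * alpha2)

# Hypothetical pair: molecule 1 is a pure donor, molecule 2 a pure acceptor.
donor_acceptor = hb_free_energy(alpha1=1.0, beta1=0.0, alpha2=0.0, beta2=1.0)
print(round(PREFACTOR, 3), donor_acceptor)
```

The two cross terms mean that a molecule acting as both donor and acceptor contributes through each role separately, so a self-associating pair counts both α₁β₂ and β₁α₂.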

Feature Selection Methodologies: Performance Comparison

Effective feature selection is critical for developing interpretable LSER models without sacrificing predictive accuracy. We compare three prominent approaches in Table 2, with supporting experimental performance metrics.

Table 2: Performance Comparison of Feature Selection Methods for Molecular Descriptor Analysis

| Method | Key Principles | Reported Performance | Advantages | Limitations |

|---|---|---|---|---|