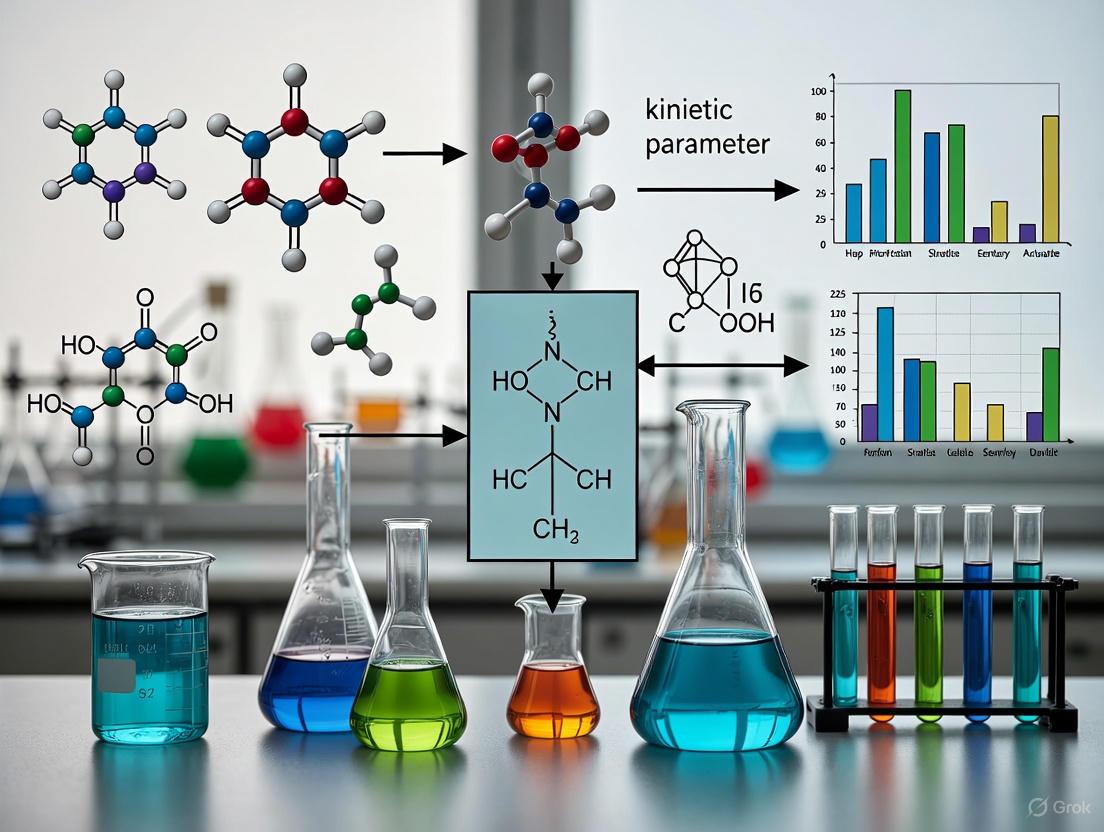

Sustainable Chemistry and Kinetic Parameter Analysis: Optimizing Drug Discovery for a Greener Future

This article explores the critical intersection of sustainable chemistry principles and kinetic parameter analysis, a frontier in modern drug discovery.

Abstract

This article explores the critical intersection of sustainable chemistry principles and kinetic parameter analysis, a frontier in modern drug discovery. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive guide from foundational concepts to advanced applications. We first establish why integrating kinetics is essential for understanding in vivo drug efficacy beyond traditional affinity measures. The discussion then progresses to methodological advances, including high-throughput kinetic screening and AI-driven optimization, that align with green chemistry goals by reducing waste and resource consumption. A dedicated section addresses troubleshooting common challenges and optimizing processes for both performance and sustainability. Finally, we cover the latest validation frameworks and comparative tools, such as the Red Analytical Performance Index (RAPI), ensuring methods are not only analytically sound but also environmentally responsible. This synthesis offers a strategic roadmap for developing more effective therapeutics through sustainable and kinetically-informed R&D processes.

Why Kinetics Matter: Moving Beyond Equilibrium for Sustainable Drug Discovery

The equilibrium dissociation constant (KD) is a fundamental parameter in drug development and molecular biology, used to quantify the affinity of ligand-receptor interactions. Traditionally determined under idealized in vitro conditions, KD assumes that binding reactions have reached a steady state. However, mounting evidence reveals that this equilibrium assumption frequently fails in physiological environments, where dynamic conditions prevent the establishment of true equilibrium. This whitepaper examines the fundamental limitations of KD by exploring the kinetic principles governing molecular interactions in vivo. We analyze how biological barriers, temporal constraints, and non-equilibrium environments distort affinity predictions, and present emerging methodologies that provide more physiologically relevant binding assessments. Framed within sustainable chemistry and kinetic parameter analysis, this analysis advocates for a paradigm shift from purely thermodynamic binding models to kinetic-aware frameworks for accurate in vivo prediction.

The Fundamental Disconnect: Equilibrium Assumptions vs. Dynamic Reality

The Theoretical Foundation of KD and Its Inherent Assumptions

The equilibrium dissociation constant (KD) is defined as the ratio of the dissociation and association rate constants (KD = koff/kon), representing the ligand concentration at which half of the receptors are occupied at equilibrium [1]. This relationship is mathematically described by the Langmuir isotherm, which assumes a simple bimolecular interaction reaching steady state where association and dissociation rates are equal. The standard protocol for determining KD involves incubating a fixed receptor concentration with varying ligand concentrations until equilibrium is established, then measuring bound complexes [1].

The determination of KD relies on several critical assumptions: (1) the system is closed and at equilibrium, (2) all receptors are identical and non-interacting, (3) ligand binding does not deplete free ligand concentration, and (4) measurements are performed under steady-state conditions. While these assumptions are reasonably achievable in controlled in vitro settings, they rarely reflect the complex, dynamic reality of living systems [1] [2].

The Kinetic Basis of Molecular Interactions

At the molecular level, binding is governed by stochastic processes where ligands and receptors continuously associate and dissociate. The rate of equilibration (keq) is given by keq = kon[T] + koff, where [T] is the target concentration [2]. High-affinity interactions (with low KD values) typically feature slow dissociation rates (koff), as KD = koff/kon and kon approaches a diffusion-limited upper bound of 10⁶ to 10⁸ M⁻¹s⁻¹ [2]. This relationship creates a fundamental kinetic barrier: high-affinity binders take substantially longer to reach equilibrium, making them particularly vulnerable to non-equilibrium conditions in vivo.

Table 1: Fundamental Relationships Governing Receptor-Ligand Binding

| Parameter | Symbol | Relationship | Biological Implication |
|---|---|---|---|
| Equilibrium Dissociation Constant | KD | KD = koff/kon | Measure of affinity; lower KD indicates tighter binding |
| Equilibration Rate Constant | keq | keq = kon[T] + koff | Determines time required to reach equilibrium |
| Bound Fraction at Equilibrium | y | y = ([T]/KD)/(1 + [T]/KD) | Langmuir isotherm; assumes equilibrium conditions |
| Dissociation Half-life | t1/2 | t1/2 = ln(2)/koff | Time for half of complexes to dissociate |
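The relationships in Table 1 can be evaluated directly to see how affinity and equilibration time trade off. The sketch below is illustrative only: the function name, the rate constants, and the choice of 95% of equilibrium as a practical endpoint are assumptions, and it treats the target concentration as constant (pseudo-first-order binding).

```python
import math

def binding_summary(k_on, k_off, target_conc):
    """Evaluate the Table 1 relationships for a 1:1 interaction.

    k_on in M^-1 s^-1, k_off in s^-1, target_conc in M (hypothetical values).
    """
    k_d = k_off / k_on                        # KD = koff / kon
    k_eq = k_on * target_conc + k_off         # keq = kon[T] + koff
    ratio = target_conc / k_d
    y_eq = ratio / (1.0 + ratio)              # Langmuir isotherm
    t_half = math.log(2) / k_off              # dissociation half-life
    t95 = -math.log(0.05) / k_eq              # time to reach 95% of equilibrium
    return {"KD": k_d, "keq": k_eq, "y_eq": y_eq, "t_half_s": t_half, "t95_s": t95}

# Two hypothetical binders with the same kon, each probed at [T] = KD
weak = binding_summary(k_on=1e6, k_off=1e-1, target_conc=1e-7)    # KD = 100 nM
tight = binding_summary(k_on=1e6, k_off=1e-4, target_conc=1e-10)  # KD = 100 pM

for name, result in (("weak", weak), ("tight", tight)):
    print(f"{name}: KD = {result['KD']:.1e} M, t95 = {result['t95_s']:.0f} s")
```

With these assumed values the tight binder needs about a thousand times longer to approach equilibrium than the weak one, which is the kinetic barrier described above.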

Biological Barriers to Achieving Equilibrium In Vivo

Temporal Constraints in Physiological Processes

Many biological processes operate on timescales too brief for high-affinity interactions to reach equilibrium. A compelling example is the FcRn-mediated recycling of IgG antibodies, which protects them from intracellular catabolism and contributes to their long half-life [3]. The FcRn-IgG binding is pH-dependent, with high affinity occurring at acidic pH (5.5-6.0) within endosomes. However, the endosomal transit time is remarkably brief—approximately 7.5 minutes based on transferrin receptor recycling studies—while IgG-FcRn complexes exhibit dissociation half-lives ranging from 6-58 minutes [3]. This temporal mismatch means binding cannot reach equilibrium before endosomal sorting occurs, rendering traditional KD measurements physiologically irrelevant.

The implications are profound: while engineering mAbs for increased FcRn binding affinity at pH 6.0 was expected to extend half-life, experimental results have been inconsistent. Some mAbs with 10-100 fold increased affinity show minimal half-life improvement, contradicting equilibrium-based predictions [3]. A catenary physiologically-based pharmacokinetic (PBPK) model that accounts for non-equilibrium binding during endosomal transit predicts much more moderate changes in half-life (<2.5-fold for 10-fold affinity increase) compared to equilibrium models (~8-fold increase) [3], aligning better with experimental observations.

Microenvironmental Factors Disrupting Binding Equilibria

The cellular microenvironment introduces multiple variables that disrupt idealized binding conditions. Buffer composition and temperature significantly impact affinity measurements, yet in vivo conditions feature fluctuating pH, ionic strength, and molecular crowding effects absent in vitro [1]. For transcription factor-DNA interactions, the nuclear concentration of transcription factors constantly changes based on cellular state, preventing establishment of stable equilibrium [4]. Additionally, receptors often exist in different conformational states or complex with various co-factors in vivo, creating heterogeneous binding populations that violate the assumption of identical binding sites [5].

Table 2: Comparative Analysis of Equilibrium vs. Non-Equilibrium Conditions

| Parameter | In Vitro (Equilibrium) Conditions | In Vivo (Non-Equilibrium) Conditions |
|---|---|---|
| Time Available | Sufficient incubation time (hours to days) | Brief co-localization (minutes) in cellular compartments |
| Receptor Homogeneity | Purified, homogeneous preparation | Heterogeneous populations with modifications |
| Ligand Availability | Constant, well-defined concentration | Fluctuating concentrations with gradients |
| Environmental Stability | Controlled pH, temperature, buffer | Dynamic microenvironment with crowding effects |
| Measurement Context | Isolated binary interactions | Competitive binding in complex mixtures |

Ligand Depletion and Receptor Concentration Effects

Traditional KD determination assumes free ligand concentration remains essentially constant, but this assumption fails when receptor concentration approaches or exceeds KD [1] [5]. In flow cytometry experiments using live cells, the high receptor density (R0) on cell surfaces can lead to R0-driven interactions where R0 ≫ KD, causing significant ligand depletion and distorting KD measurements [5]. This effect is particularly problematic for tight binders with sub-nanomolar KD values, where even modest receptor expression can consume substantial free ligand.
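The size of the depletion effect can be estimated by comparing the simple Langmuir occupancy with the exact 1:1 mass-balance (quadratic) solution. This is a minimal sketch under assumed conditions: the function names and concentrations are hypothetical, and it considers a single homogeneous receptor population at equilibrium.

```python
import math

def occupancy_langmuir(l_total, k_d):
    """Receptor occupancy if free ligand is assumed equal to total ligand."""
    return l_total / (k_d + l_total)

def occupancy_with_depletion(l_total, r_total, k_d):
    """Receptor occupancy from the exact 1:1 mass balance (quadratic solution)."""
    s = l_total + r_total + k_d
    complex_conc = (s - math.sqrt(s * s - 4.0 * l_total * r_total)) / 2.0
    return complex_conc / r_total

# Hypothetical tight binder, all concentrations in nM: KD = 0.1, R0 = 1 (R0 = 10 x KD)
k_d, r0 = 0.1, 1.0
for l_total in (0.1, 0.6, 5.0):
    naive = occupancy_langmuir(l_total, k_d)
    exact = occupancy_with_depletion(l_total, r0, k_d)
    print(f"L0 = {l_total} nM: Langmuir {naive:.2f}, with depletion {exact:.2f}")
```

Under these assumptions, half-occupancy is reached at a total ligand concentration of KD + R0/2 = 0.6 nM rather than at KD = 0.1 nM, so a titration read against total ligand would report an apparent KD several-fold too high.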

Methodological Approaches for Non-Equilibrium Binding Assessment

Pre-Equilibrium Biosensing

A groundbreaking approach challenges the fundamental necessity of reaching equilibrium for accurate concentration measurements. Pre-equilibrium biosensing leverages the kinetic response of receptors to quantify ligand concentration before reaching steady state [2]. Rather than measuring only the bound fraction at a single timepoint, this method monitors both the bound fraction (y(t)) and its rate of change (dy/dt) to instantaneously determine target concentration using the relationship:

T(t) = [dy/dt + koff · y(t)] / [kon · (1 - y(t))]

This approach effectively eliminates the kinetic limitations of equilibrium-based sensing, enabling real-time monitoring of low-abundance analytes like insulin, where high-affinity receptors would normally require impractically long equilibration times [2]. The theoretical framework demonstrates that in noise-free systems, any receptor could instantaneously determine target concentration irrespective of kinetics, though real-world applications must optimize signal-to-noise ratios for specific concentration ranges and rates of change.
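For readers who want to see where the pre-equilibrium expression comes from, the following sketch derives it from 1:1 mass-action kinetics. It assumes a single receptor population, no mass-transport limitation, and a target concentration that is not depleted by binding; the symbols match those used above.

```latex
\begin{align}
\frac{dy}{dt} &= k_{on}\,T(t)\,\bigl[1 - y(t)\bigr] - k_{off}\,y(t) \\
\Rightarrow\quad T(t) &= \frac{dy/dt + k_{off}\,y(t)}{k_{on}\,\bigl[1 - y(t)\bigr]} \\
\left.T\right|_{dy/dt = 0} &= K_D\,\frac{y}{1 - y}, \qquad K_D = \frac{k_{off}}{k_{on}}
\end{align}
```

The last line shows that when the bound fraction stops changing, the expression reduces to the inverted Langmuir isotherm, so equilibrium sensing is the special case dy/dt = 0.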

Time-Resolved Live-Cell Binding Measurements

Technologies like LigandTracer enable real-time monitoring of ligand-receptor interactions on live cells, capturing binding kinetics without requiring equilibrium [5]. This methodology offers several advantages: it preserves native receptor conformation and membrane environment, provides both kinetic parameters (kon, koff) and affinity (KD = koff/kon), and accommodates slowly-dissociating complexes through extended monitoring periods. Comparative studies reveal that endpoint flow cytometry measurements often underestimate affinity (that is, overestimate KD) when incubation times are too short for equilibrium to be reached, while time-resolved measurements provide more accurate characterization of tight-binding interactions [5].

Advanced In Vitro Platforms for Kinetic Analysis

Novel high-throughput platforms have been developed specifically to characterize binding under non-equilibrium conditions. The inverted MITOMI (iMITOMI) assay reconfigures traditional binding geometry by immobilizing DNA targets containing binding site clusters while exposing them to solution-phase transcription factors [4]. This enables quantitative measurement of transcription factor occupancy across complex cluster configurations, revealing that clusters of low-affinity binding sites can achieve substantial occupancy at physiologically relevant transcription factor concentrations. Similarly, High-Performance Fluorescence Anisotropy (HiP-FA) incorporates a controlled delivery system within a porous agarose gel matrix to measure full competitive titration curves in single wells, enabling sensitive determination of binding affinities at equilibrium in solution [6].

The Scientist's Toolkit: Essential Research Reagents and Methodologies

Table 3: Research Reagent Solutions for Non-Equilibrium Binding Studies

| Reagent/Technology | Function/Benefit | Application Context |
|---|---|---|
| LigandTracer | Real-time monitoring of ligand-receptor interactions on live cells | Preserves native membrane environment; determines kinetics and affinity simultaneously [5] |
| Fluorescent Protein Fusions (e.g., GFP, mRFP) | Genetically-encoded tags for visualizing molecular interactions in live cells | Enables FRET and fluorescence cross-correlation spectroscopy (FCCS) for in vivo KD determination [7] |
| iMITOMI Assay | High-throughput characterization of binding site cluster occupancy | Quantitative analysis of transcription factor binding to multiple proximal sites; reveals occupancy of low-affinity clusters [4] |
| HiP-FA | High-sensitivity fluorescence anisotropy measurements in solution | Determines binding affinities at equilibrium with high sensitivity; measures full titration curves in single wells [6] |
| Catenary PBPK Models | Mathematical models incorporating non-equilibrium binding during cellular transit | Predicts pharmacokinetic outcomes more accurately than equilibrium models [3] |

Experimental Protocols for Non-Equilibrium Binding Analysis

Protocol: Time-Resolved Binding Measurements with LigandTracer

This protocol characterizes therapeutic antibody binding to cell-surface receptors on live cells, providing kinetic parameters and affinity without requiring equilibrium [5].

Cell Preparation: Seed adherent cells (e.g., SKBR3) on tilted cell culture-treated Petri dishes at 3.3×10⁵ cells/mL in complete medium. Allow cells to adhere for 4 hours at 37°C, replace medium, and incubate overnight horizontally. Use cells 2-3 days post-seeding.

Ligand Labeling: Label antibodies (e.g., Trastuzumab) with fluorescent dyes (e.g., CF 488A, CF 647) using commercial labeling kits according to manufacturer instructions. Purify labeled proteins using buffer exchange columns and store aliquots at -20°C.

Binding Measurement: Place prepared cell dish in LigandTracer instrument. Add increasing concentrations of labeled antibody to the medium while continuously monitoring cell-associated fluorescence. Maintain temperature at 37°C throughout measurement.

Dissociation Phase: Replace ligand solution with ligand-free medium to monitor complex dissociation. Continue measurement until sufficient dissociation data is collected (may require several hours for tight binders).

Data Analysis: Globally fit association and dissociation phases using appropriate binding models to extract kon, koff, and calculate KD = koff/kon.
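The data-analysis step can be illustrated on a synthetic trace. The sketch below is not the instrument software's global fit: it swaps in a simpler linearised analysis of a single noise-free 1:1 trace, and all function names and rate constants are hypothetical. Dissociation is fitted as ln y versus t, and association uses the fact that dy/dt is linear in y with slope -(kon·C + koff).

```python
import numpy as np

def simulate_trace(k_on, k_off, conc, t_assoc, t_dissoc):
    """Noise-free 1:1 bound fraction: association at `conc`, then washout."""
    k_obs = k_on * conc + k_off
    y_eq = k_on * conc / k_obs
    y_assoc = y_eq * (1.0 - np.exp(-k_obs * t_assoc))
    y_dissoc = y_assoc[-1] * np.exp(-k_off * t_dissoc)
    return y_assoc, y_dissoc

def estimate_rates(t_assoc, y_assoc, t_dissoc, y_dissoc, conc):
    """Linearised estimate of kon, koff and KD from one trace."""
    # Dissociation: ln y = ln y0 - koff * t
    k_off = -np.polyfit(t_dissoc, np.log(y_dissoc), 1)[0]
    # Association: dy/dt = k_obs * (y_eq - y), so the slope of dy/dt vs y is -k_obs
    dydt = np.gradient(y_assoc, t_assoc)
    k_obs = -np.polyfit(y_assoc[1:-1], dydt[1:-1], 1)[0]
    k_on = (k_obs - k_off) / conc
    return k_on, k_off, k_off / k_on

# Hypothetical antibody-like kinetics: kon = 1e5 M^-1 s^-1, koff = 1e-4 s^-1, 10 nM ligand
t_a = np.arange(0.0, 3600.0, 1.0)   # 1 h association, 1 s sampling
t_d = np.arange(0.0, 7200.0, 1.0)   # 2 h dissociation
y_a, y_d = simulate_trace(1e5, 1e-4, 10e-9, t_a, t_d)
k_on_est, k_off_est, k_d_est = estimate_rates(t_a, y_a, t_d, y_d, 10e-9)
print(f"kon = {k_on_est:.3g} M^-1 s^-1, koff = {k_off_est:.3g} s^-1, KD = {k_d_est:.3g} M")
```

Real traces are noisy and usually span several concentrations, which is why a global nonlinear fit across all phases is preferred in practice; the linearised version only makes the underlying relationships visible.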

Protocol: Pre-equilibrium Biosensing Implementation

This protocol outlines the implementation of pre-equilibrium sensing for continuous molecular monitoring [2].

Receptor Immobilization: Immobilize molecular receptors (antibodies, aptamers) on sensor surface using appropriate conjugation chemistry. Ensure minimal mass transport limitations through microfluidic design or chaotic mixing.

System Calibration: Characterize receptor kinetic parameters (kon, koff) in controlled conditions using standard solutions. Verify that mass transport effects do not limit kinetic response.

Real-Time Monitoring: Continuously monitor bound fraction (y(t)) with high temporal resolution while exposing sensor to changing analyte concentrations.

Signal Processing: Calculate the rate of change of bound fraction (dy/dt) using appropriate numerical differentiation methods. Apply noise reduction algorithms optimized for the expected frequency range of concentration changes.

Target Estimation: Compute target concentration T(t) in real-time using the pre-equilibrium equation: T(t) = [dy/dt + koff · y(t)] / [kon · (1 - y(t))].

Signal-to-Noise Optimization: Adjust measurement parameters (temporal resolution, filtering) to maximize SNR for the specific target concentration range and expected rates of change.
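The signal-processing and target-estimation steps above can be sketched on simulated data. Everything here is an assumption for illustration: the rate constants, the noise level, the moving-average filter (a simple stand-in for whatever noise reduction a real system would use), and the function name `estimate_target`.

```python
import numpy as np

def estimate_target(t, y, k_on, k_off, window=21):
    """Estimate T(t) from a noisy bound-fraction trace before equilibrium."""
    kernel = np.ones(window) / window
    y_smooth = np.convolve(y, kernel, mode="same")    # moving-average noise reduction
    dydt = np.gradient(y_smooth, t)                   # numerical differentiation
    target = (dydt + k_off * y_smooth) / (k_on * (1.0 - y_smooth))
    # Drop samples near both ends, where zero padding distorts the smoothed trace
    return t[window:-window], target[window:-window]

# Hypothetical sensor: kon = 1e6 M^-1 s^-1, koff = 1e-3 s^-1, true target = 1 nM
k_on, k_off, true_target = 1e6, 1e-3, 1e-9
t = np.arange(0.0, 600.0, 1.0)                        # 10 min at 1 s resolution
k_eq = k_on * true_target + k_off
y_clean = (k_on * true_target / k_eq) * (1.0 - np.exp(-k_eq * t))
rng = np.random.default_rng(0)
y_noisy = y_clean + rng.normal(0.0, 1e-4, size=t.size)

t_est, target_est = estimate_target(t, y_noisy, k_on, k_off)
print(f"median estimate: {np.median(target_est):.2e} M (true {true_target:.1e} M)")
```

In this example the trace covers roughly one equilibration time constant (1/keq = 500 s), so the sensor is still far from steady state, yet the concentration is recovered throughout. The window length trades noise suppression against the ability to follow fast concentration changes, which is the optimisation described in the final step.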

Implications for Sustainable Chemistry and Kinetic Parameter Analysis

The limitations of equilibrium-based affinity measurements have profound implications for sustainable chemistry and drug development. The failure of KD to predict in vivo efficacy contributes to high attrition rates in therapeutic development, representing substantial resource waste and environmental impact through synthesized compounds that never reach clinical use. Embracing kinetic-aware binding assessments aligns with green chemistry principles by enabling more predictive screening and reducing failed development campaigns.

Framed within kinetic parameter analysis research, the move beyond KD represents an essential evolution in molecular interaction characterization. Rather than discarding affinity measurements entirely, the field must adopt integrated parameters that account for both thermodynamic and kinetic aspects of binding. This includes reporting both KD and residence time (1/koff), developing standardized assays for time-resolved binding measurements, and creating computational models that incorporate non-equilibrium conditions.

The International Symposium on Green Chemistry (ISGC) 2025 will feature cutting-edge research in sustainable chemistry, including analytical approaches that reduce resource consumption while improving predictive power [8]. Similarly, the 30th Annual Green Chemistry & Engineering Conference (2026) will highlight innovations in sustainable measurement technologies [9]. These forums provide critical venues for disseminating kinetic-aware binding methodologies that enhance therapeutic efficacy predictions while aligning with green chemistry principles.

The equilibrium dissociation constant KD remains a valuable parameter for comparing molecular interactions under controlled conditions, but its limitations in predicting in vivo behavior are substantial and systematic. Biological systems operate dynamically, with temporal, spatial, and environmental constraints that prevent establishment of the equilibrium state required for meaningful KD interpretation. The disconnect between in vitro affinity and in vivo efficacy stems from these fundamental limitations rather than methodological deficiencies in KD determination itself.

Emerging methodologies—including pre-equilibrium biosensing, time-resolved live-cell binding measurements, and advanced in vitro platforms—provide pathways to more physiologically relevant binding assessment. By embracing kinetic parameters and non-equilibrium frameworks, researchers can develop more predictive models of therapeutic behavior while advancing sustainable chemistry principles through reduced attrition and more efficient development processes. The future of molecular interaction analysis lies not in abandoning affinity measurements, but in contextualizing them within the kinetic realities of biological systems.

The optimization of drug-receptor interactions has traditionally focused on binding affinity, a thermodynamic property. However, the kinetic parameters governing the binding event—the association rate constant (kon), the dissociation rate constant (koff), and the derived residence time (RT)—are increasingly recognized as critical determinants of in vivo drug efficacy, safety, and duration of action. This whitepaper provides an in-depth technical guide to these core kinetic parameters, framing their analysis within the principles of sustainable chemistry by emphasizing strategies that enhance lead compound optimization and reduce attrition in drug development. We detail the definitions, quantitative relationships, and experimental methodologies for kinetic parameter determination, supported by structured data and visual workflows to aid researchers and drug development professionals in leveraging kinetic selectivity for superior therapeutic outcomes.

In the context of sustainable chemistry, the goal is not only to create effective therapeutics but also to optimize the research and development process to minimize wasted resources and late-stage failures. The binding of a drug to its biological target is a dynamic process, not a static event. While the equilibrium dissociation constant (K_D) has long been the primary metric for evaluating drug-receptor interactions, it provides an incomplete picture. Binding kinetics, the study of the rates at which these interactions form and dissociate, offers a more comprehensive view that can better predict in vivo efficacy [10] [11].

The paradigm is shifting from a purely affinity-driven approach to one that also considers kinetic selectivity. This is because the human body is an open system where drug concentrations constantly change due to absorption, distribution, metabolism, and excretion (ADME) [10]. Under these non-equilibrium conditions, the time-dependent parameters—kon, koff, and RT—can be more informative than the equilibrium affinity constant alone. Incorporating kinetic profiling early in drug discovery aligns with sustainable practices by enabling the prioritization of compounds with a higher probability of clinical success, thereby reducing costly late-stage attritions [12]. This guide delves into the core kinetic parameters that define these dynamic interactions.

Defining the Core Kinetic Parameters

Association Rate Constant (k_on)

The association rate constant (k_on) is a second-order rate constant that quantifies the rate at which a drug (L) and its receptor (R) form a complex (RL). It is a measure of binding efficiency.

- Definition and Units: kon, with units of M⁻¹min⁻¹ (or M⁻¹s⁻¹), describes the bimolecular collision and complex formation between the drug and its target [10] [11].

- Governed by: The rate of association is influenced by factors such as molecular diffusion, electrostatic steering, and the steric compatibility required for the drug to assume the correct binding conformation [13].

- Functional Implication: A higher kon value typically leads to a faster onset of pharmacological action, as the drug-target complex forms more rapidly [10].

Dissociation Rate Constant (k_off)

The dissociation rate constant (k_off) is a first-order rate constant that quantifies the rate at which the drug-receptor complex (RL) breaks down into free drug and receptor.

- Definition and Units: koff, with units of min⁻¹ (or s⁻¹), is a measure of the stability of the drug-receptor complex [10] [11].

- Governed by: The koff is largely determined by the strength and number of non-covalent interactions (e.g., hydrogen bonds, van der Waals forces) that must be disrupted simultaneously for the drug to dissociate [13].

- Functional Implication: A lower koff value indicates a more stable complex and is a key driver for a prolonged duration of pharmacological effect, often extending beyond the presence of the free drug in the plasma [10] [13].

Residence Time (RT) and Dissociation Half-Life

Residence Time (RT) and dissociation half-life (t₁/₂) are derived parameters that offer an intuitive understanding of the longevity of the drug-receptor complex.

- Residence Time (RT): Defined as the reciprocal of the dissociation rate constant, RT = 1/koff. It represents the average time a drug remains bound to its receptor before dissociating [11] [13].

- Dissociation Half-Life (t₁/₂): Calculated as t₁/₂ = ln(2)/koff ≈ 0.693/koff. This is the time required for half of the drug-receptor complexes to dissociate [10] [13]. A long dissociation half-life can result in a prolonged drug action, allowing for less frequent dosing and improved trough efficacy, as exemplified by the bronchodilator tiotropium (t₁/₂ = 7.7 h) compared to ipratropium (t₁/₂ = 0.17 h) [13].
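The two definitions above are related by a constant factor, RT = t₁/₂ / ln(2). As a small worked example, the sketch below converts the dissociation half-lives quoted for tiotropium and ipratropium into koff and residence time; the function name is hypothetical and the half-lives are the only inputs taken from the text.

```python
import math

def kinetics_from_half_life(t_half_h):
    """Return (koff in h^-1, residence time in h) for a dissociation half-life in hours."""
    k_off = math.log(2) / t_half_h    # t1/2 = ln(2) / koff
    residence_time = 1.0 / k_off      # RT = 1 / koff
    return k_off, residence_time

for name, t_half in (("tiotropium", 7.7), ("ipratropium", 0.17)):
    k_off, rt = kinetics_from_half_life(t_half)
    print(f"{name}: koff = {k_off:.3f} h^-1, RT = {rt:.2f} h")
```

This gives a residence time of roughly 11 h for tiotropium against roughly 15 minutes for ipratropium, a ratio of about 45.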

The Relationship between Kinetics and Equilibrium Affinity

The kinetic parameters kon and koff are intrinsically linked to the thermodynamic equilibrium dissociation constant (K_D):

KD = koff / kon

This equation reveals that KD, the concentration required to occupy 50% of the receptors at equilibrium, is a ratio of the kinetic rates. Two drugs can have identical KD values but achieve them through vastly different kinetic mechanisms: one with fast association and fast dissociation, and another with slow association and very slow dissociation [13]. This concept, known as kinetic selectivity, can lead to profound differences in in vivo efficacy and safety profiles, even for drugs with similar affinities [13].

Table 1: Summary of Core Kinetic Parameters and Their Characteristics

| Parameter | Symbol | Definition | Units | Key Influences |
|---|---|---|---|---|
| Association Rate Constant | k_on | Rate of drug-receptor complex formation | M⁻¹min⁻¹ | Diffusion, molecular steering, conformational changes |
| Dissociation Rate Constant | k_off | Rate of drug-receptor complex breakdown | min⁻¹ | Strength of non-covalent bonds in the complex |
| Residence Time | RT | Average time a drug remains bound to its receptor | min | RT = 1 / k_off |
| Dissociation Half-Life | t₁/₂ | Time for half of drug-receptor complexes to dissociate | min | t₁/₂ = 0.693 / k_off |
| Equilibrium Dissociation Constant | K_D | Ratio of dissociation to association rates | M | K_D = k_off / k_on |
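To make the point about identical affinities concrete, the sketch below compares two hypothetical compounds with the same K_D but rate constants that differ by three orders of magnitude. It assumes both are first equilibrated at the same concentration, that free drug is then removed completely, and that no rebinding occurs; the names and values are illustrative, not taken from the cited work.

```python
import math

def occupancy_after_washout(k_on, k_off, conc, t_wash):
    """Equilibrate at `conc` (M), then remove free drug for `t_wash` seconds."""
    k_d = k_off / k_on
    occupancy_eq = conc / (conc + k_d)               # Langmuir occupancy before washout
    return occupancy_eq * math.exp(-k_off * t_wash)  # first-order decay, no rebinding

# Hypothetical compounds sharing KD = 10 nM (kon in M^-1 s^-1, koff in s^-1)
fast = {"k_on": 1e7, "k_off": 1e-1}
slow = {"k_on": 1e4, "k_off": 1e-4}

occ_fast = occupancy_after_washout(conc=10e-9, t_wash=600.0, **fast)
occ_slow = occupancy_after_washout(conc=10e-9, t_wash=600.0, **slow)
print(f"occupancy 10 min after washout: fast {occ_fast:.3g}, slow {occ_slow:.3g}")
```

Both compounds start at 50% occupancy, yet ten minutes after washout the fast compound has essentially vanished from the target while the slow one still occupies close to half of it.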

Quantitative Analysis and Data Presentation

A critical step in leveraging binding kinetics is the accurate quantification of parameters and the interpretation of the resulting data within a physiological context.

Calculating Target Occupancy and Drug Effect

The pharmacological effect of a drug is often directly linked to the concentration of the drug-receptor complex (RC). The rate of change of RC is governed by the law of mass action [13]:

d[RC]/dt = kon · [Ct] · ([Rt] - [RC]) - koff · [RC]

where [Ct] is the free drug concentration at the target site and [Rt] is the total receptor concentration.

When the drug effect (ΔE) is assumed to be proportional to RC, and the maximum effect (Emax) occurs when all receptors are occupied, the effect at the peak concentration (Cm) can be described by [13]:

ΔEm = Emax / (1 + KD / Cm)

This equation highlights that at high dose levels where Cm >> KD, the maximum effect (ΔEm) approaches Emax.
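The mass-action equation can be integrated numerically to see how occupancy behaves when drug concentration is falling rather than constant. This is a rough sketch under stated assumptions: occupancy is expressed as the fraction [RC]/[Rt], the free drug concentration follows a hypothetical mono-exponential decay and is not depleted by binding, integration uses a simple forward-Euler step, and all parameter values and function names are invented for illustration.

```python
import math

def simulate_occupancy(k_on, k_off, c0, k_el, t_end, dt=1.0):
    """Forward-Euler integration of d(occ)/dt = kon*C(t)*(1 - occ) - koff*occ,
    with C(t) = c0 * exp(-k_el * t). Returns a list of (time_s, occupancy)."""
    occ, t, trace = 0.0, 0.0, []
    while t <= t_end:
        trace.append((t, occ))
        conc = c0 * math.exp(-k_el * t)
        occ += dt * (k_on * conc * (1.0 - occ) - k_off * occ)
        t += dt
    return trace

def peak_effect(e_max, k_d, c_peak):
    """Effect at peak concentration: dEm = Emax / (1 + KD / Cm)."""
    return e_max / (1.0 + k_d / c_peak)

# Two hypothetical drugs with the same KD (1 nM) but different kinetics
k_el = math.log(2) / 3600.0        # assumed 1 h elimination half-life
day = 24 * 3600.0
drug_a = simulate_occupancy(k_on=1e6, k_off=1e-3, c0=100e-9, k_el=k_el, t_end=day)
drug_b = simulate_occupancy(k_on=1e4, k_off=1e-5, c0=100e-9, k_el=k_el, t_end=day)
occ_a, occ_b = drug_a[-1][1], drug_b[-1][1]
print(f"occupancy at 24 h: A = {occ_a:.2g}, B = {occ_b:.2g}")
```

Both drugs have the same K_D, so the equilibrium expression for ΔEm predicts the same peak effect for each, but in this simulation only the slowly dissociating drug retains substantial occupancy a day after dosing.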

The Impact of Kinetic Parameters on Efficacy and Safety

Simulations and clinical observations have demonstrated how kon and koff shape therapeutic outcomes.

- Prolonged Duration of Action: Drugs with a slow koff (long RT) can maintain target occupancy even after systemic concentrations have fallen below effective levels. This prolonged effect can enable more convenient dosing regimens and improved patient compliance [10] [13].

- Implications for Drug Safety: Kinetic parameters can also influence a drug's safety profile. For example, typical antipsychotics like haloperidol have a long residence time at the D2 dopamine receptor, which is associated with extrapyramidal side effects. In contrast, atypical antipsychotics like clozapine have a much shorter RT, allowing them to rapidly dissociate in response to surges of endogenous dopamine, thereby reducing side effects [13].

Table 2: Impact of Kinetic Parameters on Drug Profile

| Kinetic Profile | Impact on Onset | Impact on Duration | Therapeutic Utility |
|---|---|---|---|
| High kon, Low koff | Fast | Long | Ideal for sustained efficacy; may risk prolonged toxicity. |
| Low kon, Low koff | Slow | Long | Suitable for chronic conditions requiring long-lasting action. |
| High kon, High koff | Fast | Short | Suitable for acute conditions requiring rapid, short-lived intervention. |
| Low kon, High koff | Slow | Short | Generally therapeutically undesirable. |

Experimental Protocols for Measuring Binding Kinetics

Accurate measurement of kon and koff is foundational to kinetic analysis. The following section details a generalized protocol for determining these parameters using label-free biosensors, a common modern approach.

Methodology: Determining kon and koff using Bio-Layer Interferometry (BLI)

Principle: BLI measures binding kinetics in real-time by analyzing interference patterns of white light reflected from a biosensor tip. A shift in the interference pattern corresponds to a change in optical thickness upon binding of molecules to the sensor surface [11].

Workflow:

- Immobilization: The purified target receptor is immobilized onto the surface of a biosensor.

- Association Phase (kon measurement): The sensor is immersed in a solution containing the drug ligand. The binding of the ligand to the receptor causes a measurable increase in optical thickness. The rate of this signal increase over time, at a known ligand concentration, is used to determine the association rate constant (kon).

- Dissociation Phase (koff measurement): The sensor is then transferred to a buffer solution without the ligand. The dissociation of the ligand from the receptor causes a decrease in optical thickness. The rate of this signal decay is used to determine the dissociation rate constant (koff) [11].

Data Analysis: The real-time association and dissociation data are fitted to a 1:1 binding model using the instrument's software. The model directly outputs the kon and koff values, from which the KD (koff/kon) and Residence Time (1/koff) are computed [11].
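One common way to cross-check a 1:1 fit is to measure the observed association rate (k_obs) at several ligand concentrations and use the relationship k_obs = kon·C + koff, so that a straight-line fit gives kon as the slope and koff as the intercept. The sketch below illustrates this with synthetic values; it is not the instrument software's algorithm, and the function name, concentrations, and rate constants are assumptions.

```python
import numpy as np

def rates_from_kobs(conc_nm, k_obs):
    """Fit k_obs = kon * C + koff across ligand concentrations (C in nM, k_obs in s^-1).

    Returns kon (M^-1 s^-1), koff (s^-1), KD (M) and residence time (s).
    """
    slope_per_nm, k_off = np.polyfit(conc_nm, k_obs, 1)
    k_on = slope_per_nm * 1e9                 # convert nM^-1 s^-1 to M^-1 s^-1
    return k_on, k_off, k_off / k_on, 1.0 / k_off

# Synthetic k_obs values for a hypothetical ligand: kon = 2e5 M^-1 s^-1, koff = 5e-4 s^-1
conc_nm = np.array([5.0, 10.0, 20.0, 40.0, 80.0])
k_obs = 2e5 * conc_nm * 1e-9 + 5e-4

k_on, k_off, k_d, residence_time = rates_from_kobs(conc_nm, k_obs)
print(f"kon = {k_on:.3g} M^-1 s^-1, koff = {k_off:.3g} s^-1, "
      f"KD = {k_d:.3g} M, RT = {residence_time:.0f} s")
```

A clearly non-linear k_obs versus concentration plot would suggest that the simple 1:1 model does not describe the interaction, which is worth checking before reporting KD or residence time.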

Diagram 1: BLI kinetic measurement workflow.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Binding Kinetic Assays

| Item | Function in Experiment |
|---|---|
| Purified Target Protein | The biological receptor of interest; must be highly pure and functional for reliable immobilization and binding. |
| Biosensor Tips | Functionalized surfaces (e.g., with Ni-NTA for his-tagged proteins, streptavidin for biotinylated proteins) that capture the target. |
| Label-Free Drug Ligands | Compounds to be tested; must be soluble and stable in the assay buffer to avoid experimental artifacts. |
| Assay Buffer | A physiologically relevant buffer that maintains protein stability and activity, often containing additives to minimize non-specific binding. |
| Bio-Layer Interferometry (BLI) Instrument | The core analytical platform that performs real-time, high-throughput kinetic measurements. |

Advanced Concepts and the Role of Rebinding

Moving beyond simple in vitro systems, the complex morphology of cells and tissues introduces phenomena that modulate observed binding kinetics, most notably drug rebinding.

The Phenomenon of Rebinding

Rebinding is a "hindered diffusion" process where a drug molecule, after dissociating from its target, repeatedly encounters the same or a nearby target before it can diffuse away from the local target environment [10]. This can occur due to physical barriers like cell membranes, synaptic clefts, or a high local density of receptors.

Functional Consequences of Rebinding

Rebinding has significant implications for translating in vitro kinetics to in vivo effects:

- Prolonged Apparent Target Occupancy: Rebinding leads to a much longer apparent residence time on the target than the intrinsic koff value would suggest [10]. This can explain why a drug with a seemingly fast in vitro koff still produces a sustained therapeutic effect in vivo.

- Contribution to Kinetic Selectivity: The extent of rebinding is influenced by the association rate constant (kon). A high kon facilitates more efficient recapture of a dissociated drug, meaning that optimizing for a fast kon can, under certain conditions, produce a similar outcome to optimizing for a slow koff [10].

- Challenges in Measurement: Rebinding is often intentionally suppressed in classic in vitro washout experiments by adding high concentrations of competing ligands to prevent the radioligand from re-associating. This allows for the measurement of the "genuine" koff, but may not reflect the physiological reality [10].

Diagram 2: Drug rebinding process between nearby receptors.

Emerging Tools and Sustainable Applications

The integration of kinetic parameter analysis into drug discovery is being accelerated by new computational and experimental tools that align with the goals of sustainable chemistry.

Computational Advances

Computational prediction of residence time has historically been challenging due to the long timescales required for molecular dynamics simulations. Emerging technologies like Koffee Unbinding Kinetics aim to address this by performing scalable ligand residence time screenings in approximately one minute per complex on standard hardware [12]. This represents a speed-up of 3-5 orders of magnitude compared to state-of-the-art methods, allowing for the early-stage computational prioritization of compounds based on kinetics, thereby reducing the need for costly synthetic and experimental work on compounds with poor kinetic profiles [12].

Sustainable Chemistry Perspective

Framing kinetic analysis within sustainable chemistry highlights its role in creating a more efficient and less wasteful drug development pipeline.

- Reducing Late-Stage Attrition: By providing a more accurate prediction of in vivo efficacy and duration, kinetic profiling helps select better drug candidates earlier, avoiding the immense resource consumption associated with the failure of late-stage clinical trials [10] [12].

- Enabling Differentiated Therapeutics: Kinetic selectivity allows for the design of drugs that better mimic natural physiology or minimize off-target effects, leading to safer medicines and reduced environmental burden from manufacturing and waste [13].

- Informing Dosing Regimens: Understanding residence time can lead to optimized dosing schedules with less frequent administration, improving patient adherence and reducing the total amount of API (Active Pharmaceutical Ingredient) needed over a treatment course, which has positive life-cycle assessment implications [10] [13].

The kinetic parameters kon, koff, and Residence Time are indispensable for a modern, sophisticated understanding of drug-receptor interactions. They provide critical insights into the onset, duration, and selectivity of drug action that cannot be derived from equilibrium affinity alone. As the field moves towards more predictive and efficient drug discovery, the integration of kinetic profiling—supported by robust experimental protocols and emerging computational tools—represents a cornerstone of sustainable chemistry practices. By prioritizing compounds with optimal binding kinetics, researchers can de-risk development pipelines, conserve resources, and ultimately deliver safer, more effective therapeutics to patients.

Linking Kinetic Profiles to Therapeutic Efficacy and Safety

The quantitative analysis of kinetic profiles is emerging as a critical discipline in modern drug development, enabling researchers to bridge molecular-level reaction rates with macroscopic therapeutic outcomes. This technical guide examines advanced methodologies for linking kinetic parameters to efficacy and safety endpoints, framed within the context of sustainable chemistry principles. By integrating Model-Informed Drug Development (MIDD) approaches, artificial intelligence, and environmentally-conscious experimental protocols, researchers can accelerate the development of safer, more effective therapeutics while reducing resource consumption. The following sections provide a comprehensive framework featuring structured data tables, detailed experimental protocols, and specialized visualization tools to support researchers in this evolving field.

Kinetic profiling provides crucial insights into the temporal behavior of drug substances, encompassing their absorption, distribution, metabolic transformation, and elimination (ADME) within biological systems. In the context of sustainable chemistry, understanding these kinetic parameters enables researchers to design drugs with optimized therapeutic windows while minimizing environmental impact throughout the product lifecycle. The principles of green chemistry align closely with kinetic optimization in drug development, as compounds with favorable kinetic profiles often require lower dosing, generate fewer metabolites, and exhibit reduced environmental persistence [14].

The pharmaceutical industry faces increasing pressure to adopt sustainable practices while maintaining rigorous safety and efficacy standards. Kinetic parameter analysis serves as a bridge between these objectives, enabling the development of drugs that are not only therapeutically superior but also environmentally responsible. This whitepaper outlines practical methodologies for generating, analyzing, and applying kinetic data within this dual framework, with particular emphasis on techniques that reduce resource consumption while enhancing predictive accuracy [15].

Methodologies for Kinetic Parameter Analysis

Experimental Approaches

UV-Vis Spectroscopy for Photochemical Kinetics

A sustainable experimental approach for determining kinetic parameters utilizes standard UV-vis spectroscopy equipment commonly available in teaching laboratories, significantly reducing both cost and environmental impact compared to specialized instrumentation. The procedure involves three key stages: (1) acquisition of absorption spectra for carbonyl compounds in dilute cyclohexane solutions, which provides a "quasi-gas-phase" environment; (2) data analysis and determination of absorption cross-sections; and (3) evaluation of atmospheric impact through photolysis rate calculations [14].

This methodology offers particular advantages for sustainable chemistry applications, as it minimizes solvent waste through micro-scale experimentation and utilizes cyclohexane, which provides spectra within 20% of true gas-phase values without the environmental concerns associated with fluorinated solvents. The resulting data enables researchers to predict environmental transformation rates of pharmaceutical compounds while generating minimal hazardous waste [14].
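As a rough illustration of stages (2) and (3), the sketch below converts decadic molar absorptivities into absorption cross-sections and sums a photolysis rate over wavelength bins. The conversion σ = 1000 ln(10) ε / N_A is the standard relation between the two quantities; the function names, wavelength bins, absorptivities, quantum yields, and actinic flux values are hypothetical placeholders and are not taken from [14].

```python
import math

AVOGADRO = 6.02214076e23  # molecules per mole

def cross_section_cm2(epsilon_l_mol_cm):
    """Convert a decadic molar absorptivity (L mol^-1 cm^-1) into a
    base-e absorption cross-section (cm^2 molecule^-1)."""
    return 1000.0 * math.log(10.0) * epsilon_l_mol_cm / AVOGADRO

def photolysis_rate(sigmas, quantum_yields, actinic_flux, bin_width_nm):
    """Approximate J = sum(sigma * phi * F * d_lambda) over wavelength bins (s^-1)."""
    return sum(s * q * f * bin_width_nm
               for s, q, f in zip(sigmas, quantum_yields, actinic_flux))

# Hypothetical 10 nm bins; every number below is an illustrative placeholder.
epsilons = [15.0, 12.0, 8.0, 3.0]        # L mol^-1 cm^-1
quantum_yields = [0.5, 0.4, 0.3, 0.2]    # dimensionless
actinic_flux = [1e13, 5e13, 1e14, 2e14]  # photons cm^-2 s^-1 nm^-1

sigmas = [cross_section_cm2(e) for e in epsilons]
j_value = photolysis_rate(sigmas, quantum_yields, actinic_flux, bin_width_nm=10.0)
print(f"J = {j_value:.3e} s^-1, photolysis lifetime = {1.0 / j_value / 3600:.1f} h")
```

In practice the quantum yields and actinic flux would come from reference databases and radiation models rather than hand-entered lists.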

Molecular Dynamics Simulation Protocols

Molecular dynamics (MD) simulations provide a computational alternative to experimental kinetic studies, offering atomic-level insights into molecular behavior without consuming chemical reagents, although they do require computing resources. The MD process involves several critical stages: (1) defining the simulation box boundaries; (2) selecting an appropriate force field; (3) choosing the thermodynamic ensemble; (4) establishing cutoff radii for interactions; and (5) implementing boundary conditions [16].

For thermal stability assessment, an optimized MD protocol based on a neural network potential (NNP) has demonstrated high accuracy in predicting decomposition temperatures of energetic materials. Key improvements include nanoparticle models and reduced heating rates, achieving a coefficient of determination (R²) of 0.969 against experimental values. This approach is particularly valuable for predicting kinetic stability under various environmental conditions, supporting the development of pharmaceuticals with controlled environmental persistence [17].

AI-Enhanced Kinetic Prediction

Generative Pre-trained Transformer Applications

Generative AI models, particularly decoder-only transformer architectures, have demonstrated significant capabilities in predicting kinetic sequences of physicochemical states. By treating discretized states from molecular dynamics trajectories as vocabulary elements, these models learn complex syntactic and semantic relationships within the trajectory data. The training process involves: (1) discretization of MD trajectories into meaningful state spaces using K-means clustering along collective variables; (2) training a GPT model with MD-derived states as input; and (3) generating kinetic sequences of states from the pre-trained transformer [18].

This approach has proven effective across diverse biological systems, including folded proteins and intrinsically disordered proteins, achieving kinetic accuracy while reducing computational resource requirements by orders of magnitude compared to traditional MD simulations. The self-attention mechanism inherent in transformer architectures plays a crucial role in capturing long-range correlations necessary for accurate state-to-state transition predictions [18].
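The discretization step that precedes transformer training can be sketched with a small one-dimensional K-means that turns a collective-variable trajectory into integer state tokens. This is only an illustration of step (1): it does not implement the GPT model, the transition counts are a simple first-order summary added for inspection, and the trajectory values and function names are hypothetical.

```python
def kmeans_1d(values, k, iterations=50):
    """Plain Lloyd's algorithm in one dimension; returns sorted centroids."""
    lo, hi = min(values), max(values)
    # Deterministic start: centroids spread evenly across the data range.
    centroids = [lo + (hi - lo) * (i + 0.5) / k for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Keep the previous centroid when a cluster receives no points.
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return sorted(centroids)

def discretize(values, centroids):
    """Map each frame to the index of its nearest centroid (the state token)."""
    return [min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            for v in values]

def transition_counts(tokens, k):
    """Count observed state-to-state transitions between consecutive frames."""
    counts = [[0] * k for _ in range(k)]
    for a, b in zip(tokens, tokens[1:]):
        counts[a][b] += 1
    return counts

# Hypothetical collective-variable trajectory hopping between two basins.
trajectory = [0.1, 0.2, 0.15, 0.9, 1.0, 0.95, 0.12, 0.18, 1.05, 0.98]
centroids = kmeans_1d(trajectory, k=2)
tokens = discretize(trajectory, centroids)
print(tokens)
print(transition_counts(tokens, 2))
```

The token list is the kind of sequence a decoder-only model would be trained on; real applications use far longer trajectories and usually several collective variables.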

Machine Learning for Small Molecule Development

Artificial intelligence is revolutionizing kinetic profiling in small-molecule development, particularly for cancer immunomodulation therapy. Supervised learning algorithms, including support vector machines and random forests, enable quantitative structure-activity relationship (QSAR) modeling and ADMET property prediction. Unsupervised learning techniques facilitate chemical clustering and diversity analysis, while reinforcement learning approaches optimize de novo molecule generation toward desired kinetic profiles [19].

Deep learning architectures, including convolutional neural networks and recurrent neural networks, have demonstrated exceptional capability in modeling complex, non-linear relationships within high-dimensional kinetic data. Generative models such as variational autoencoders and generative adversarial networks further enhance kinetic prediction by generating novel molecular structures with optimized binding profiles and ADMET characteristics [19].

Data Presentation and Analysis

Quantitative Kinetic Data Tables

Table 1: Key MIDD Tools for Kinetic Analysis in Drug Development

| Tool/Methodology | Description | Primary Application in Kinetic Analysis |
|---|---|---|
| QSAR | Computational modeling predicting biological activity from chemical structure | Relating molecular structure to kinetic parameters and metabolic rates |
| PBPK | Mechanistic modeling of physiology-drug interactions | Predicting concentration-time profiles in different tissues and populations |
| Population PK/PD | Modeling variability in drug exposure and response | Identifying covariates affecting kinetic profiles across populations |
| Exposure-Response | Analysis of relationship between drug exposure and effect | Establishing kinetic drivers of efficacy and safety |
| QSP | Integrative modeling combining systems biology and pharmacology | Predicting kinetic behavior in complex biological systems |
| AI/ML Approaches | Data-driven pattern recognition and prediction | Accelerating kinetic parameter estimation and optimization |

Table 2: Experimental vs. Computational Methods for Kinetic Profiling

| Method | Key Parameters | Resource Requirements | Sustainability Advantages |
|---|---|---|---|
| UV-Vis Spectroscopy | Absorption cross-sections, photolysis rates | Standard laboratory equipment, minimal solvents | Reduced solvent consumption, minimal waste generation |
| Molecular Dynamics | Free energy landscapes, transition rates | High-performance computing resources | Elimination of chemical reagents, virtual screening |
| AI/Transformer Models | State transition probabilities, kinetic sequences | Specialized computing infrastructure | Rapid prediction without experimental resource use |
| PBPK Modeling | Tissue concentration-time profiles | Software platforms, physiological data | Reduction in animal studies through in silico prediction |

Research Reagent Solutions

Table 3: Essential Materials for Kinetic Profiling Experiments

| Research Reagent | Function | Sustainable Alternatives |
|---|---|---|
| Cyclohexane | Non-hydrogen-bonding solvent for "quasi-gas-phase" measurements | Preferred over fluorinated solvents for reduced environmental impact |
| Carbonyl compounds | Model analytes for photochemical kinetic studies | Biodegradable compounds with minimal environmental persistence |
| Neural network potentials (NNP) | Force field for accurate MD simulations | Reduces need for experimental validation through improved accuracy |
| Quantum yield databases | Reference data for photolysis rate calculations | Enables in silico prediction without experimental repetition |
| Actinic flux models | Solar radiation spectra for environmental fate prediction | Open-source data reduces resource consumption |

Visualization of Kinetic Pathways and Workflows

MIDD Kinetic Analysis Framework

Kinetic Sequence Prediction Workflow

Sustainable Kinetic Profiling Protocol

Linking Kinetic Profiles to Therapeutic Outcomes

Efficacy and Safety Optimization

The connection between kinetic profiles and therapeutic outcomes represents the culmination of targeted drug development efforts. Model-Informed Drug Development (MIDD) approaches provide a structured framework for quantitatively linking kinetic parameters to clinical efficacy and safety endpoints. Through population pharmacokinetic/pharmacodynamic (PPK/PD) modeling and exposure-response analysis, researchers can establish therapeutic windows that maximize efficacy while minimizing adverse effects [15].

Sustainable chemistry principles further enhance this approach by emphasizing the development of compounds with kinetic profiles that correlate not only with improved therapeutic outcomes but also with reduced environmental impact. Pharmaceuticals designed with optimal kinetic characteristics often demonstrate complete metabolism, minimized persistence in biological systems, and reduced ecological burden—aligning therapeutic excellence with environmental responsibility [14].

Applications in Precision Medicine

Kinetic profiling enables precision medicine approaches by accounting for individual variations in drug metabolism and response. Population kinetic models identify covariates such as age, renal function, and genetic polymorphisms that influence drug exposure, allowing for personalized dosing regimens. Artificial intelligence further enhances this capability through patient stratification based on multi-omics data and digital twin simulations, creating individualized kinetic profiles that predict therapeutic response [19].

The integration of sustainable chemistry principles in precision medicine emphasizes the development of targeted therapies with optimized kinetic properties, reducing overall medication use and associated waste through precisely calibrated dosing. This approach represents the convergence of therapeutic optimization, environmental responsibility, and economic efficiency in pharmaceutical development [15] [19].

The systematic integration of kinetic profiling into drug development workflows provides a powerful approach for optimizing therapeutic efficacy and safety while advancing sustainable chemistry objectives. The methodologies, data frameworks, and visualization tools presented in this technical guide offer researchers comprehensive resources for implementing kinetic analysis within their development programs. As artificial intelligence and computational modeling continue to evolve, the precision and predictive power of kinetic profiling will further enhance our ability to develop pharmaceuticals that deliver maximum therapeutic benefit with minimal environmental impact, representing the future of sustainable drug development.

The adoption of quantifiable metrics is a cornerstone of modern green chemistry, providing researchers with essential tools to measure and improve the environmental performance of their chemical processes. These metrics enable a reductionist analysis that is critical for diagnosing inefficiencies and guiding research and development toward more sustainable outcomes. Key among these are measures of process mass intensity (PMI), reaction mass efficiency (RME), and carbon efficiency (CE), which together offer a comprehensive view of resource utilization and waste generation in chemical synthesis [20].

The strength of this quantitative approach lies in its ability to provide unambiguous, data-driven insights into process efficiency. However, it is equally critical to understand the limitations of these metrics and the specific questions they do not address. A holistic green chemistry strategy requires complementing these efficiency metrics with assessments of energy requirements, hazard reduction, and overall environmental impact to ensure comprehensive sustainable design [20].

Core Quantitative Metrics for Sustainable Chemistry

Fundamental Efficiency Calculations

Quantitative green chemistry metrics provide standardized methods to evaluate the sustainability of chemical processes. The most widely adopted metrics focus on mass efficiency, carbon economy, and environmental impact. These calculations enable direct comparison between different synthetic routes and help identify opportunities for improvement [20].

Table 1: Core Quantitative Green Chemistry Metrics

| Metric Name | Abbreviation | Calculation Formula | Application Context |
|---|---|---|---|
| Process Mass Intensity | PMI | Total mass in process (kg) / Mass of product (kg) | Overall process efficiency including reagents, solvents, water |
| Reaction Mass Efficiency | RME | Mass of product (kg) / Total mass of reactants (kg) | Reaction step efficiency only |
| Carbon Efficiency | CE | (Carbon in product / Carbon in reactants) × 100% | Atom economy specifically for carbon |
| Innovative Green Aspiration Level | iGAL | Comparison to ideal process mass intensity | Benchmarking against theoretical optimum |
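The formulas in the table translate directly into a few lines of code. The sketch below follows the table's definitions of PMI, RME, and CE; the function names and the batch quantities are hypothetical and serve only to show the arithmetic.

```python
def process_mass_intensity(total_input_mass_kg, product_mass_kg):
    """PMI: total mass entering the process divided by the mass of product."""
    return total_input_mass_kg / product_mass_kg

def reaction_mass_efficiency(product_mass_kg, reactant_mass_kg):
    """RME: mass of product divided by the total mass of reactants."""
    return product_mass_kg / reactant_mass_kg

def carbon_efficiency_percent(carbon_in_product_mol, carbon_in_reactants_mol):
    """CE: carbon retained in the product as a percentage of carbon fed."""
    return 100.0 * carbon_in_product_mol / carbon_in_reactants_mol

# Hypothetical batch: all masses and carbon amounts are illustrative.
reactants_kg, solvents_kg, water_kg, product_kg = 12.0, 80.0, 28.0, 8.0

pmi = process_mass_intensity(reactants_kg + solvents_kg + water_kg, product_kg)
rme = reaction_mass_efficiency(product_kg, reactants_kg)
ce = carbon_efficiency_percent(carbon_in_product_mol=40.0, carbon_in_reactants_mol=50.0)

print(f"PMI = {pmi:.1f} kg/kg, RME = {rme:.2f}, CE = {ce:.0f}%")
```

In this made-up batch the solvent and water dominate the PMI, which is the kind of inefficiency these metrics are meant to expose.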

Advanced Assessment Tools

Beyond fundamental calculations, comprehensive tools like DOZN 3.0 provide systematic frameworks for evaluating chemical processes against the Twelve Principles of Green Chemistry. This quantitative evaluator facilitates assessment of resource utilization, energy efficiency, and reduction of hazards to human health and the environment, serving as a comprehensive guide for sustainable practice implementation in industrial settings [21].

Kinetic Parameter Analysis in Biocatalysis

Fundamental Kinetic Parameters

Enzyme kinetics provides essential parameters for evaluating and designing sustainable biocatalytic processes. The turnover number (kcat) and Michaelis-Menten constant (Km) are fundamental for understanding enzyme catalytic efficiency and specificity [22]. These parameters are crucial for assessing the viability of enzymes as sustainable catalysts in pharmaceutical and chemical manufacturing, as they directly impact process intensity and waste generation.

The kcat value represents the maximum number of substrate molecules converted to product per enzyme molecule per unit time, reflecting the intrinsic turnover rate of the saturated enzyme. The Km value is the substrate concentration at which the reaction rate is half of Vmax; it is often used as an approximate inverse measure of the enzyme's apparent affinity for its substrate (a lower Km indicates tighter apparent binding). Together, these parameters determine the catalytic efficiency (kcat/Km), which is a critical indicator for evaluating enzymes in green chemistry applications [22].
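To make the relationship between these parameters concrete, the following sketch evaluates the Michaelis-Menten rate law, v = kcat·[E]·[S] / (Km + [S]), and the catalytic efficiency kcat/Km. The enzyme parameters and function names are hypothetical values chosen for easy arithmetic, not measurements from [22].

```python
def michaelis_menten_rate(substrate_mM, enzyme_uM, kcat_per_s, km_mM):
    """Initial rate v = kcat * [E] * [S] / (Km + [S]), returned in uM/s."""
    return kcat_per_s * enzyme_uM * substrate_mM / (km_mM + substrate_mM)

def catalytic_efficiency(kcat_per_s, km_mM):
    """kcat/Km in M^-1 s^-1 (Km is converted from mM to M)."""
    return kcat_per_s / (km_mM * 1e-3)

# Hypothetical enzyme: kcat = 50 s^-1, Km = 2 mM, used at 0.1 uM.
kcat, km, enzyme = 50.0, 2.0, 0.1

v_max = kcat * enzyme  # rate at saturating substrate, uM/s
v_at_km = michaelis_menten_rate(km, enzyme, kcat, km)

print(f"Vmax = {v_max:.2f} uM/s, rate at [S] = Km: {v_at_km:.2f} uM/s")
print(f"kcat/Km = {catalytic_efficiency(kcat, km):.0f} M^-1 s^-1")
```

Setting the substrate concentration equal to Km returns exactly half of Vmax, which is the definition of Km given above.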

Structure-Oriented Kinetics Dataset (SKiD)

The Structure-oriented Kinetics Dataset (SKiD) represents a significant advancement in biocatalysis for green chemistry by integrating enzyme kinetic parameters with three-dimensional structural data. This comprehensive, structured dataset includes 13,653 unique enzyme-substrate complexes spanning six enzyme classes, incorporating both wild-type and mutant enzymes with natural and non-natural substrates [22].

The value of SKiD lies in its ability to correlate kinetic parameters with structural features, enabling researchers to understand how enzyme structure influences catalytic efficiency. This understanding is fundamental to designing improved enzymes with higher efficiency and selectivity for industrial applications, supporting the green chemistry principles of catalysis and reduced energy requirements [22].

Table 2: Enzyme Kinetic Parameters and Their Significance in Green Chemistry

| Parameter | Symbol | Units | Interpretation | Green Chemistry Relevance |
|---|---|---|---|---|
| Turnover Number | kcat | s⁻¹ | Maximum catalytic turns per unit time | Determines catalyst loading and process mass intensity |
| Michaelis Constant | Km | mM | Substrate concentration at half Vmax | Impacts substrate concentration and reaction volume |
| Catalytic Efficiency | kcat/Km | M⁻¹s⁻¹ | Second-order rate constant for substrate conversion | Overall measure of biocatalyst performance |
| Specific Activity | — | μmol/min/mg | Reaction rate per mass enzyme | Determines required enzyme quantity and cost |

Experimental Design for Sustainable Chemistry Optimization

Principles of Experimental Design

Well-structured design of experiments (DoE) is essential for efficiently optimizing sustainable chemical processes while minimizing experimental waste. The field of chemometrics offers systematic approaches to experimentation that enable researchers to establish cause-and-effect relationships between process variables and outcomes while limiting bias and error [23] [24].

A fundamental concept in experimental design is the proper identification and control of variables. Independent variables (factors manipulated by the researcher), dependent variables (measured responses), and extraneous variables (potential confounding factors) must be clearly defined. Techniques such as randomization, blocking, and matching are employed to control extraneous variables and ensure experimental validity [24].

Practical Experimental Design Strategies

Factorial designs represent a powerful approach for investigating the effects of multiple factors simultaneously, making them particularly valuable for reaction optimization in sustainable chemistry. Unlike one-factor-at-a-time approaches, factorial designs enable researchers to identify interaction effects between factors, providing a more comprehensive understanding of the reaction system while reducing the total number of experiments required [25].

The response surface methodology (RSM) extends these principles to model and optimize reaction conditions, enabling researchers to identify optimal conditions for critical green chemistry parameters such as yield, selectivity, and energy consumption. This approach is particularly valuable for developing processes that align with green chemistry principles while maintaining economic viability [23].
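A two-level full factorial design is straightforward to enumerate in code. The sketch below builds every combination of factor levels and estimates one main effect as the difference between mean responses at the high and low level; the factor names, levels, and yields are hypothetical, and a real study would also randomize the run order.

```python
from itertools import product

def full_factorial(factors):
    """Return one dict per run, covering every combination of factor levels."""
    names = list(factors)
    level_lists = [factors[name] for name in names]
    return [dict(zip(names, combo)) for combo in product(*level_lists)]

def main_effect(runs, responses, factor, low, high):
    """Mean response at the high level minus mean response at the low level."""
    high_vals = [y for run, y in zip(runs, responses) if run[factor] == high]
    low_vals = [y for run, y in zip(runs, responses) if run[factor] == low]
    return sum(high_vals) / len(high_vals) - sum(low_vals) / len(low_vals)

# Hypothetical 2^3 design for a reaction optimization.
factors = {
    "temperature_C": [40, 60],
    "catalyst_mol_pct": [1, 5],
    "solvent": ["ethanol", "water"],
}
runs = full_factorial(factors)

# Hypothetical yields (%) listed in the same order as `runs`.
yields = [52, 48, 61, 58, 60, 55, 78, 74]

effect = main_effect(runs, yields, "temperature_C", low=40, high=60)
print(f"{len(runs)} runs; main effect of temperature = {effect:.2f} yield points")
```

Because every factor combination is present, the same eight runs also support estimating interaction effects, which a one-factor-at-a-time series cannot provide.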

Experimental Design Workflow

Integrated Methodologies for Green Chemistry R&D

Enzyme Kinetics Experimental Protocol

Objective: Determine kinetic parameters (kcat and Km) of an enzyme-substrate interaction for green chemistry applications.

Methodology:

- Data Curation: Collect experimentally measured Km and kcat values from databases such as BRENDA, using in-house scripts to process raw data into uniform formats. Resolve redundancy through extensive comparison of annotations including Enzyme Commission (EC) number, UniProtKB ID, substrate SMILES, and experimental conditions [22].

- Outlier Analysis: Perform statistical analysis to identify and prune datapoints whose log-transformed values lie more than three standard deviations from the mean of the parameter distribution. Compute geometric means for datapoints that report differing values under identical conditions [22].

- Enzyme and Substrate Annotation: Extract enzyme annotations from database comments and named entries using custom Python scripts. Regular expressions standardize experimental conditions and mutation data. Annotate substrates with isomeric SMILES using OPSIN and PubChemPy libraries [22].

- Structural Mapping: Classify available structural information into four categories: substrate + cofactor structures, substrate-only structures, cofactor-only structures, and apo structures. Differentiate substrates from cofactors using EMBL CoFactor database mappings with manual verification [22].

- Structural Modeling: Model mutant enzymes from wild-type structures. Correct protonation states based on experimental pH from kinetic data. Dock pre-processed enzyme and substrate structures to obtain final enzyme-substrate complex structures [22].
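The outlier-analysis step in the protocol above can be sketched as follows. This is a minimal illustration rather than the curation scripts used for [22]: the function names and Km values are hypothetical, and the use of the population standard deviation is an assumption, since the source does not say which estimator was applied.

```python
import math
import statistics

def prune_log_outliers(values, n_sd=3.0):
    """Drop values whose log10 lies more than n_sd standard deviations
    from the mean of the log-transformed distribution."""
    logs = [math.log10(v) for v in values]
    mean = statistics.mean(logs)
    sd = statistics.pstdev(logs)  # assumption: population standard deviation
    return [v for v, lg in zip(values, logs) if abs(lg - mean) <= n_sd * sd]

def geometric_mean(values):
    """Geometric mean, appropriate for log-distributed kinetic parameters."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

# Hypothetical Km values (mM) for one enzyme-substrate pair; the last entry
# mimics a unit or transcription error.
km_values = [0.8, 1.0, 1.2, 0.9, 1.1, 1.0, 0.95, 1.05,
             1.15, 0.85, 1.0, 0.9, 1.1, 1.0, 5000.0]

kept = prune_log_outliers(km_values)
print(f"kept {len(kept)} of {len(km_values)} values")
print(f"consensus Km = {geometric_mean(kept):.2f} mM")
```

Note that with very small samples a three-standard-deviation rule cannot flag anything, so the filter is only meaningful for parameters with many reported values.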

Chemical Space Network Analysis for Green Chemistry

Objective: Visualize and interpret relationships within small molecule datasets to guide the design of safer, more effective compounds.

Methodology:

- Data Collection and Curation: Collect compound data from relevant databases (e.g., ChEMBL), apply filters for relevant parameters (e.g., molecular weight under 600), and remove compounds without associated activity values. Check for salts as disconnected SMILES and validate single-fragment compounds using RDKit GetMolFrags function [26].

- Pairwise Relationship Calculation: Compute pairwise relationships between compounds using RDKit 2D fingerprint Tanimoto similarity values or maximum common substructure similarity values. Apply minimum threshold values to adjust edge numbers in the network visualization [26].

- Network Construction and Visualization: Create chemical space networks using NetworkX, representing compounds as nodes and relationships as edges. Implement visualization features including node coloring based on property values, edge line styles based on similarity values, and replacement of circle nodes with 2D structure depictions [26].

- Network Analysis: Apply established network science algorithms and statistical calculations including clustering coefficient, degree assortativity, and modularity to extract meaningful patterns from the chemical space network [26].
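The pairwise-similarity and network-construction steps can be illustrated without any cheminformatics dependencies. In the sketch below, plain Python sets of "on" bit indices stand in for RDKit fingerprints and a dictionary of edges stands in for a NetworkX graph; the compound names, bit sets, and threshold are hypothetical.

```python
from itertools import combinations

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity: shared on-bits divided by total distinct on-bits."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0

def build_network(fingerprints, threshold):
    """Return {(compound_a, compound_b): similarity} for pairs at or above threshold."""
    edges = {}
    for a, b in combinations(sorted(fingerprints), 2):
        similarity = tanimoto(fingerprints[a], fingerprints[b])
        if similarity >= threshold:
            edges[(a, b)] = similarity
    return edges

def node_degrees(fingerprints, edges):
    """Number of edges touching each compound (isolated nodes get 0)."""
    degrees = {name: 0 for name in fingerprints}
    for a, b in edges:
        degrees[a] += 1
        degrees[b] += 1
    return degrees

# Hypothetical fingerprints given as sets of on-bit indices.
fingerprints = {
    "cpd_A": {1, 2, 3, 4},
    "cpd_B": {2, 3, 4, 5},
    "cpd_C": {1, 2, 3, 4, 6},
    "cpd_D": {10, 11, 12},
}

edges = build_network(fingerprints, threshold=0.5)
print(edges)
print(node_degrees(fingerprints, edges))
```

Raising the threshold removes weaker edges and thins the network, which is how the edge count is tuned for visualization.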

Green Chemistry R&D Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Sustainable Chemistry Development

| Tool/Resource | Function | Application in Green Chemistry |
|---|---|---|
| BRENDA Database | Comprehensive enzyme information resource | Provides kinetic parameters (kcat, Km) for biocatalyst selection |
| SABIO-RK | Manual curation of enzyme kinetics data | High-quality data for metabolic engineering and pathway design |
| SKiD Dataset | Integrated structural and kinetic data | Correlates enzyme structure with function for rational design |
| RDKit | Cheminformatics and machine learning | Computes molecular descriptors and similarity metrics |
| NetworkX | Network analysis and visualization | Constructs chemical space networks for compound optimization |
| DOZN 3.0 | Quantitative green chemistry evaluation | Assesses processes against Twelve Principles of Green Chemistry |
| STRENDA DB | Standardized enzymology data reporting | Ensures reproducibility and reliability of kinetic measurements |

In the pursuit of industrial sustainability, the precision offered by kinetic analysis is a cornerstone for innovation. This technical guide elucidates how the rigorous quantification of kinetic parameters—such as activation energy (Eₐ), pre-exponential factor (A), and reaction order—directly enables the optimization of chemical processes central to a circular economy. By providing a fundamental understanding of reaction rates and mechanisms under varying conditions, kinetic analysis serves as a powerful tool for reducing energy consumption, improving product yields from waste streams, and minimizing undesirable byproducts. This document, framed within broader research on sustainable chemistry and kinetic parameter analysis, provides researchers and scientists with the methodologies and insights to harness kinetic principles for waste and resource reduction.

The application of kinetic analysis spans diverse fields, from the thermochemical conversion of waste plastics and biomass to the synthesis of advanced materials. For instance, studies on the pyrolysis of waste mixed plastics (WMPs) have demonstrated that a positive synergetic effect can lower degradation temperatures and reduce the required activation energy, thereby directly conserving energy [27]. Similarly, in ammonia co-firing processes, kinetic analysis helps identify reaction pathways that suppress NOx formation, turning a potential waste product into a manageable emission [28]. These examples underscore the critical role of kinetics in designing processes that are not only efficient but also environmentally benign.

Theoretical Foundations of Kinetic Analysis

Kinetic analysis involves extracting quantitative parameters that describe the rate of a chemical reaction. The most common model for describing the temperature dependence of a reaction rate is the Arrhenius equation:

$$k = A \exp\left(-\frac{E_a}{RT}\right)$$

where k is the rate constant, A is the pre-exponential factor, Eₐ is the activation energy, R is the universal gas constant, and T is the absolute temperature. Determining Eₐ is particularly crucial for sustainability, as it represents the energy barrier that must be overcome for a reaction to proceed; a lower Eₐ often translates directly to lower operational energy requirements.
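A short numerical sketch shows how sensitive the Arrhenius rate constant is to the activation energy. It assumes the pre-exponential factor stays unchanged when the barrier is lowered, which is a simplification; the 700 K temperature, the values of A and Eₐ, and the function names are hypothetical, while the 13.41 kJ/mol reduction is the figure reported for sFCC-catalysed WMP pyrolysis [27].

```python
import math

R = 8.314  # universal gas constant, J mol^-1 K^-1

def arrhenius_rate_constant(pre_exponential, activation_energy_j_mol, temperature_k):
    """k = A * exp(-Ea / (R * T)); k carries the units of A."""
    return pre_exponential * math.exp(-activation_energy_j_mol / (R * temperature_k))

def rate_gain_from_lower_barrier(delta_ea_j_mol, temperature_k):
    """Factor by which k increases when Ea drops by delta_Ea at fixed A and T."""
    return math.exp(delta_ea_j_mol / (R * temperature_k))

# Hypothetical parameters; only the 13.41 kJ/mol reduction comes from the text.
A = 1.0e13          # s^-1
Ea = 200.0e3        # J/mol
T = 700.0           # K

k_uncatalysed = arrhenius_rate_constant(A, Ea, T)
k_catalysed = arrhenius_rate_constant(A, Ea - 13.41e3, T)

print(f"k (uncatalysed) = {k_uncatalysed:.3e} s^-1")
print(f"k (catalysed)   = {k_catalysed:.3e} s^-1")
print(f"rate gain       = {rate_gain_from_lower_barrier(13.41e3, T):.1f}x")
```

Under these assumptions a reduction of roughly 13 kJ/mol corresponds to about a tenfold faster rate at 700 K, or equivalently the same rate at a lower temperature.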

Two primary methodological approaches are employed for kinetic analysis:

- Model-Free (Isoconversional) Methods: These methods, including Flynn-Wall-Ozawa (FWO) and Kissinger-Akahira-Sunose (KAS), calculate the activation energy without assuming a specific reaction model. They are highly valuable for complex reactions common in waste conversion, such as the solid-state degradation of plastics or biomass, as they can reveal how Eₐ changes with the extent of conversion (α) [27] [29]. This can identify multi-step mechanisms and is the approach recommended by the International Confederation of Thermal Analysis and Calorimetry (ICTAC) for solid-state kinetics [29].

- Model-Fitting Methods: Techniques like the Coats-Redfern (CR) method are used to fit experimental data to a library of reaction models (e.g., diffusion, nucleation, and order-based models). The Criado method (master plots) is a powerful hybrid approach that compares experimental curves with theoretical models to identify the most probable reaction mechanism [27].
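As an illustration of the isoconversional idea, the KAS method fits ln(β/T²) against 1/T at a fixed conversion α, and the slope of that line equals −Eₐ/R. The sketch below implements the fit with a plain least-squares line and checks it on synthetic data generated from a known activation energy; the function names, temperatures, and the 180 kJ/mol value are hypothetical.

```python
import math

R = 8.314  # universal gas constant, J mol^-1 K^-1

def linear_fit(xs, ys):
    """Ordinary least-squares line; returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def kas_activation_energy(heating_rates, temperatures_k):
    """KAS: ln(beta / T^2) = const - Ea / (R * T) at fixed conversion.
    temperatures_k[i] is the temperature at which the chosen conversion is
    reached under heating_rates[i]. Returns Ea in J/mol."""
    xs = [1.0 / t for t in temperatures_k]
    ys = [math.log(b / t ** 2) for b, t in zip(heating_rates, temperatures_k)]
    slope, _ = linear_fit(xs, ys)
    return -slope * R

# Synthetic check: build heating rates that satisfy the KAS relation exactly
# for a known (hypothetical) activation energy, then recover it from the fit.
true_ea = 180.0e3                       # J/mol
temps = [700.0, 710.0, 720.0, 730.0]    # K, temperatures at the same conversion
const = 20.0                            # arbitrary intercept
betas = [t ** 2 * math.exp(const - true_ea / (R * t)) for t in temps]

recovered = kas_activation_energy(betas, temps)
print(f"recovered Ea = {recovered / 1000:.1f} kJ/mol")
```

Repeating the fit at several conversion levels (for example α = 0.1 to 0.9) gives the Eₐ-versus-α profile that reveals multi-step behaviour.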

Beyond Eₐ, kinetic analysis also determines thermodynamic parameters such as the change in enthalpy (ΔH‡), free energy (ΔG‡), and entropy (ΔS‡). For example, a pyrolysis process described as endothermic (ΔH‡ > 0) and non-spontaneous (ΔG‡ > 0) with a decrease in randomness (ΔS‡ < 0) provides a complete thermodynamic profile essential for reactor design and process scale-up [27].

Kinetic Analysis in Action: Key Applications for Sustainability

The following case studies demonstrate how kinetic analysis directly contributes to waste reduction and resource recovery.

Catalytic Pyrolysis of Waste Plastics

The conversion of waste plastics into valuable fuels and chemicals is a prime example of "waste to wealth." Kinetic analysis is instrumental in optimizing this process. A comparative study on low-density polyethylene (LDPE), polypropylene (PP), polystyrene (PS), and waste mixed plastics (WMPs) revealed a positive synergetic effect in WMPs, leading to a lower degradation temperature and a reduced activation energy compared to the individual polymers [27]. This synergy simplifies recycling and reduces energy costs.

The introduction of a spent fluid catalytic cracking (sFCC) catalyst further enhanced this process. The catalyst significantly lowered the initial pyrolysis temperature by approximately 47 °C and reduced the average activation energy of WMPs by about 13.41 kJ/mol [27]. This reduction in Eₐ represents a direct decrease in the energy input required for the decomposition reaction, making the process more efficient and less carbon-intensive. The thermodynamic parameters confirmed the process was endothermic and non-spontaneous, guiding engineers to design systems that optimally supply the necessary energy [27].

Table 1: Kinetic and Thermodynamic Parameters for Pyrolysis of Waste Mixed Plastics (WMPs)

| Parameter | Non-Catalytic WMPs | Catalytic WMPs (with sFCC) | Impact on Sustainability |
|---|---|---|---|
| Avg. Activation Energy (Eₐ) | Higher | Reduced by ~13.41 kJ/mol | Lower energy consumption |
| Initial Decomposition Temp. | Higher | Lowered by ~47 °C | Milder operating conditions |
| Process Thermodynamics | Endothermic & Non-Spontaneous | Endothermic & Non-Spontaneous | Informs reactor energy design |

Thermal Conversion of Biomass

Sugarcane bagasse (SCB), a significant agricultural residue, can be converted into bio-oil through pyrolysis. Kinetic analysis of this process is the "first phase" for designing efficient gasification and pyrolysis reactors [29]. Research has shown that adding catalysts like manganese copper vanadate (MCV) can slightly reduce the biomass decomposition temperature and alter the kinetic parameters, thereby improving the efficiency of the conversion process and the quality of the resulting bio-oil [29]. A well-understood kinetic model allows for the optimization of process parameters like heating rate and temperature profile, maximizing the yield of desired products and minimizing waste.

Low-Carbon Energy and Material Synthesis

Kinetic analysis also facilitates the transition to low-carbon energy systems. In ammonia/coal co-firing, a strategy to reduce CO₂ emissions from coal power plants, the high nitrogen content of ammonia poses a risk of increased NOx emissions. Kinetic analysis and sensitivity modeling of the co-firing process identify key reactions that influence NO formation and destruction. This understanding allows engineers to optimize parameters like the ammonia injection method. For example, injecting ammonia into the main combustion zone, where oxygen shortage and high NO concentration are present, can promote NO reduction reactions, thereby controlling emissions [28].

In material science, the synthesis of Ni-Fe alloys from nano-sized oxide precursors via hydrogen reduction relies on kinetic analysis. Non-isothermal reduction experiments at multiple heating rates help determine the mechanism (e.g., gaseous diffusion vs. interfacial chemical reactions) controlling the reduction-sintering process [30]. This knowledge is key to optimizing temperature profiles and gas flows, reducing energy waste, and producing high-performance alloys from waste streams with minimal environmental impact.

Experimental Protocols for Kinetic Analysis

This section provides a detailed methodology for a representative experiment: determining the kinetic parameters of waste plastic pyrolysis via thermogravimetric analysis (TGA).

Protocol: Kinetic Analysis of Plastic Pyrolysis via TGA

1. Objective: To determine the apparent activation energy (Eₐ) and reaction mechanism of waste plastic pyrolysis using a model-free isoconversional method.

2. Materials and Equipment:

- Thermogravimetric Analyzer (TGA): Capable of operating under controlled atmosphere and variable heating rates (e.g., STA-504) [27] [30].

- Sample Materials: Waste plastic samples (e.g., LDPE, PP, PS, WMPs), ground to a consistent particle size.

- Catalyst (Optional): sFCC catalyst or other catalytic materials [27].

- Reaction Gases: High-purity nitrogen (N₂) and hydrogen (H₂) for inert and reducing atmospheres, respectively [27] [30].

- Sample Containers: Alumina crucibles.

3. Experimental Procedure:

- Step 1: Sample Preparation. Weigh approximately 5-10 mg of the plastic sample (or plastic-catalyst mixture) into an alumina crucible. Using a small, uniform mass minimizes heat and mass transfer limitations [29].

- Step 2: Instrument Setup. Purge the TGA furnace with N₂ gas (flow rate ~30-50 mL/min) for at least 15 minutes to establish an inert atmosphere and prevent oxidative degradation.

- Step 3: Non-Isothermal Experiment. Program the TGA to heat the sample from ambient temperature to a final temperature (e.g., 800 °C) using at least four different heating rates (β), typically 5, 10, 15, and 20 °C/min [27]. This multi-heating rate approach is critical for reliable isoconversional analysis.

- Step 4: Data Recording. Record the sample mass (m), temperature (T), and time (t) continuously throughout the experiment. The data are processed as mass loss (TG) and derivative mass loss (DTG) curves.

4. Data Analysis using the Flynn-Wall-Ozawa (FWO) Method:

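- Step 1: Calculate Conversion (α). For a series of temperatures across the different heating rates, calculate the degree of conversion using α = (m₀ - mₜ) / (m₀ - m_f), where m₀ is the initial mass, mₜ is the mass at time t, and m_f is the final mass [27] [30].

- Step 2: Plot FWO Equation. For fixed values of α (e.g., from 0.1 to 0.9 in steps of 0.1), plot ln(β) against 1000/T (T in kelvin) for the different heating rates.

- Step 3: Determine Eₐ. The FWO equation is ln(β) = ln(A Eₐ / (R g(α))) - 5.331 - 1.052 Eₐ / (R T), where A is the pre-exponential factor, R is the gas constant, and g(α) is the integral form of the reaction model. The activation energy Eₐ for each conversion α is calculated from the slope of the fitted line, which equals -1.052 Eₐ / R when ln(β) is plotted against 1/T (if the x-axis is 1000/T, multiply the fitted slope by 1000 first). A plot of Eₐ versus α reveals whether the activation energy varies with conversion: a roughly constant Eₐ suggests a single-step process, whereas a strong dependence on α indicates a complex, multi-step process [27].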
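
The FWO data analysis steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure used in the cited studies: the function names (`conversion`, `temperature_at_alpha`, `fwo_activation_energy`) are hypothetical, temperatures are in kelvin, and the demonstration data are synthetic values generated to obey the FWO relation for a made-up Eₐ of 200 kJ/mol.

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)

def conversion(m0, mt, mf):
    """Degree of conversion: alpha = (m0 - mt) / (m0 - mf)."""
    return (m0 - mt) / (m0 - mf)

def temperature_at_alpha(temps_k, alphas, target):
    """Linearly interpolate the temperature at which alpha reaches target.

    Assumes alphas increase monotonically along the run.
    """
    for i in range(1, len(alphas)):
        a0, a1 = alphas[i - 1], alphas[i]
        if a0 <= target <= a1 and a1 > a0:
            frac = (target - a0) / (a1 - a0)
            return temps_k[i - 1] + frac * (temps_k[i] - temps_k[i - 1])
    raise ValueError("target conversion not bracketed by the data")

def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def fwo_activation_energy(betas, temps_k):
    """Ea in J/mol at one conversion level from ln(beta) versus 1/T.

    betas: heating rates; temps_k: temperature (K) at which each run
    reaches the chosen alpha. FWO slope = -1.052 * Ea / R.
    """
    xs = [1.0 / t for t in temps_k]
    ys = [math.log(b) for b in betas]
    slope, _ = linear_fit(xs, ys)
    return -slope * R / 1.052

# Synthetic check (made-up numbers): build temperatures that satisfy the
# FWO relation for Ea = 200 kJ/mol, then recover Ea from the fit.
ea_true = 200_000.0
intercept = 40.0  # arbitrary constant lumping ln(A*Ea/(R*g(alpha))) - 5.331
betas = [5.0, 10.0, 15.0, 20.0]  # heating rates, K/min
temps = [1.052 * ea_true / (R * (intercept - math.log(b))) for b in betas]
ea_fit = fwo_activation_energy(betas, temps)
print(f"Recovered Ea = {ea_fit / 1000:.1f} kJ/mol")
```

In practice, `temperature_at_alpha` would be applied to each measured TG curve for every α from 0.1 to 0.9, and `fwo_activation_energy` would then be called once per α to build the Eₐ versus α plot. The fit is done against 1/T rather than 1000/T so that no rescaling of the slope is needed.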

Diagram 1: Workflow for kinetic analysis of waste pyrolysis.

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials and their functions in experiments related to kinetic analysis for sustainable processes.

Table 2: Key Research Reagent Solutions for Kinetic Studies

| Reagent/Material | Function in Experiment | Example Application |

|---|---|---|

| Spent FCC Catalyst (sFCC) | Lowers activation energy and reaction temperature of pyrolysis; cracks large hydrocarbons into valuable products. | Catalytic pyrolysis of waste mixed plastics [27]. |

| Metal Vanadate Catalysts (e.g., MCV) | Catalyzes the deoxygenation of biomass pyrolysis vapors, improving the quality and yield of bio-oil. | Pyrolysis of sugarcane bagasse [29]. |

| Nano-sized Oxides (NiO, Fe₂O₃) | High-surface-area precursors for solid-state reduction reactions, enabling lower-temperature synthesis of metal alloys. | Hydrogen-based production of Ni-Fe alloys [30]. |

| High-Purity Gases (N₂, H₂, Ar) | N₂: Creates an inert atmosphere for pyrolysis. H₂: Serves as a clean reducing agent. Ar: Provides an inert sintering atmosphere. | Used in TGA pyrolysis and reduction-sintering experiments [27] [30]. |

| Thermogravimetric Analyzer (TGA) | Core instrument for measuring mass change as a function of temperature/time, providing raw data for kinetic analysis. | Foundational equipment for all solid-state reaction kinetics [27] [29]. |

Kinetic analysis transcends its role as a mere analytical technique, establishing itself as a fundamental enabler of sustainable chemistry. By providing a quantitative framework to understand and control chemical reactions, it directly addresses the core challenges of waste and resource reduction. The precise determination of activation energies and reaction mechanisms allows researchers to design processes that require less energy, convert waste streams into valuable products with higher efficiency, and minimize the formation of harmful pollutants. As the field advances, the integration of kinetic analysis with computational modeling and in-situ characterization will further accelerate the development of next-generation recycling, energy, and manufacturing technologies, paving the way for a more resource-efficient circular economy.

Tools and Techniques: Implementing High-Throughput Kinetics and Green Workflows

Advances in High-Throughput Kinetic Screening Technologies

High-throughput kinetic screening represents a paradigm shift in chemical and drug discovery research, moving beyond single time-point yield measurements to capture comprehensive time-dependent reaction data. This whitepaper examines transformative methodologies like Simulated Progress Kinetic Analysis (SPKA) that decouple experimental throughput from reaction timescales, enabling unprecedented mechanistic insight. Framed within sustainable chemistry principles, these technologies significantly reduce material consumption and experimental duration while providing crucial kinetic parameters for process optimization. The integration of flow chemistry, automation, and advanced detection systems offers researchers powerful tools to accelerate the development of efficient, sustainable chemical processes with reduced environmental impact.

Traditional kinetic analysis has long presented a bottleneck in chemical research due to the time-intensive nature of collecting comprehensive reaction data. Conventional methods typically require monitoring individual reactions from start to completion, creating a fundamental limitation where experimental throughput is directly constrained by reaction timescales. The emergence of high-throughput kinetic screening technologies addresses this limitation through innovative approaches that transform kinetic data acquisition. These methodologies are particularly valuable within sustainable chemistry frameworks, where understanding reaction kinetics enables the optimization of atom economy, energy efficiency, and waste reduction in chemical processes [31].

Where conventional kinetic profiling might require days or weeks to characterize a single slow reaction, modern high-throughput approaches can generate a complete kinetic profile every 25 minutes, an almost 40-fold increase in experimental throughput with corresponding reductions in material consumption. This capability comes from changing how kinetic data are collected, processed, and interpreted. The resulting kinetic parameters support rational process improvement, leading to greener, safer, and more sustainable chemical transformations that advance circular economy principles in the chemical industry [31] [32].

Core Technological Principles and Methodologies

Simulated Progress Kinetic Analysis (SPKA)

Simulated Progress Kinetic Analysis represents a fundamental departure from traditional kinetic data collection methods. Rather than monitoring a single reaction to completion, SPKA constructs complete kinetic profiles from multiple independent reactions initiated at starting points along a parent reaction trajectory. This approach collects differential kinetic data (direct measurement of rate) rather than integral data (concentration over time), enabling the construction of rate versus concentration profiles without monitoring reactions from start to finish. The methodology effectively decouples the time required to generate a full kinetic profile from the actual reaction timescale, meaning researchers can obtain complete kinetic characterization faster than the reaction itself reaches completion [31].

The mathematical foundation of SPKA involves determining instantaneous rates for individual reactions and combining these measurements to construct a single differential kinetic profile. When multiple reactions are initiated at different concentrations along the expected reaction trajectory, their instantaneous rates can be plotted against concentration to reveal the complete kinetic behavior. This approach remains agnostic to the underlying kinetic model, making it particularly valuable for studying complex reaction systems where the rate law is unknown a priori. A significant advantage of SPKA is its ability to probe catalyst robustness by comparing profiles collected over different instantaneous reaction times—deviations between profiles can indicate catalyst activation, deactivation, product acceleration, or inhibition phenomena that are crucial for understanding sustainable catalytic processes [31].
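
The idea of combining instantaneous rates from independent reactions into one differential profile can be sketched as follows. This is a simplified illustration, not the implementation described in [31]: the function names are hypothetical, the rate is estimated by a simple finite difference over a short observation window, and the data are synthetic (a made-up first-order reaction). The power-law fit in `apparent_order` is an optional downstream step that assumes rate = k·cⁿ; SPKA itself does not require any rate law.

```python
import math

def instantaneous_rate(c_start, c_end, dt):
    """Finite-difference estimate of the consumption rate, -d[c]/dt."""
    return (c_start - c_end) / dt

def differential_profile(measurements):
    """Combine independent reactions into one rate-vs-concentration profile.

    measurements: list of (c_start, c_end, dt) tuples, one per reaction
    started at a different point along the parent trajectory.
    Returns (mean concentration, rate) pairs sorted by concentration.
    """
    points = []
    for c_start, c_end, dt in measurements:
        c_mid = 0.5 * (c_start + c_end)
        points.append((c_mid, instantaneous_rate(c_start, c_end, dt)))
    return sorted(points)

def apparent_order(profile):
    """Least-squares slope of ln(rate) versus ln(concentration).

    Only meaningful if a power law is a fair description of the data.
    """
    xs = [math.log(c) for c, _ in profile]
    ys = [math.log(r) for _, r in profile]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx

# Synthetic data (made up): first-order decay with k = 0.05 per minute,
# each reaction observed over a short 1-minute window.
k, dt = 0.05, 1.0
starts = [1.0, 0.8, 0.6, 0.4, 0.2]
runs = [(c0, c0 * math.exp(-k * dt), dt) for c0 in starts]
profile = differential_profile(runs)
for c, r in profile:
    print(f"c = {c:.3f}, rate = {r:.4f}")
print(f"apparent order ~ {apparent_order(profile):.2f}")
```

Repeating the same measurement with a different observation window and overlaying the two profiles is one way to look for the deviations mentioned above: if the catalyst is stable and no product effects are present, the profiles should coincide.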

Enabling Platform Technologies

The practical implementation of high-throughput kinetic screening relies on specialized platform technologies that enable precise control and monitoring of reaction conditions:

Segmented Flow Platforms: These systems utilize a biphasic flow regime where reaction mixtures are divided into discrete segments by an immiscible carrier phase, typically a fluorous solvent or inert gas. This compartmentalization ensures each reaction segment is well-mixed and completely isolated while maintaining identical reaction environments across all segments. The segmented flow approach provides the foundation for SPKA implementation by enabling the simultaneous operation of multiple independent reactions under carefully controlled conditions [31].

Automated Liquid Handling and Analysis: Robotic fluidic systems enable the precise manipulation of nanoliter to milliliter volumes required for high-throughput kinetic experimentation. These systems integrate seamlessly with various detection methodologies including UV-Vis spectroscopy, mass spectrometry, and fluorescence detection. The automation extends to data collection and processing, with specialized software transforming raw sensor data into kinetic parameters. Advanced platforms can theoretically generate over 600 complete kinetic profiles in a single day, dramatically accelerating the kinetic characterization process [33] [31].

Advanced Detection Technologies: Modern high-throughput kinetic platforms employ multiple detection strategies to monitor reaction progress. Fluorescence-based assays offer high sensitivity and real-time monitoring capabilities, while luminescence-based systems provide broad dynamic ranges with minimal background noise. Mass spectrometry-based detection delivers unparalleled specificity by directly measuring substrate and product masses, and label-free biosensor technologies like surface plasmon resonance (SPR) enable real-time kinetic analysis without molecular labels [34].

Comparative Analysis of High-Throughput Screening Platforms

The landscape of high-throughput screening technologies encompasses various approaches with distinct capabilities and applications. The following table summarizes key platform types and their characteristics:

Table 1: Comparison of High-Throughput Screening Technology Platforms

| Platform Type | Daily Throughput | Key Applications | Detection Methods | Material Consumption |

|---|---|---|---|---|

| SPKA Flow Systems | >600 kinetic profiles | Reaction mechanism elucidation, catalyst stability studies | Fluorescence, MS, UV-Vis | Very low (nL-μL volumes) |

| Traditional HTS | 10,000-100,000 compounds | Primary compound screening, hit identification | Fluorescence, luminescence, colorimetric | Low (μL volumes) |

| uHTS | >300,000 compounds | Large library screening, initial hit discovery | Fluorescence, enzymatic assays | Very low (nL volumes) |

| Mass Spectrometry HTS | Moderate | Complex reaction monitoring, pathway analysis | Direct mass measurement | Low to moderate |

Beyond the specialized SPKA platforms, broader high-throughput screening technologies include Ultra-High-Throughput Screening (uHTS) capable of testing >300,000 small molecule compounds daily using 1536-well plate formats and nanoliter dispensing technologies. Traditional HTS typically handles 10,000-100,000 compounds per day using 96-, 384-, and 1536-well plate formats with automated liquid handling systems. Each platform offers distinct advantages for specific applications within drug discovery and sustainable chemistry development pipelines [33].
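
To put these throughput figures in practical terms, the short calculation below estimates how many plates per day each format implies. It is a back-of-the-envelope sketch: the function name `plates_per_day` is hypothetical, and it assumes one compound per well with no wells reserved for controls or replicates, so real plate counts would be higher.

```python
import math

def plates_per_day(compounds_per_day, wells_per_plate):
    """Minimum number of plates, assuming one compound per well and
    no control or replicate wells (a simplifying assumption)."""
    return math.ceil(compounds_per_day / wells_per_plate)

# Throughput figures are the ones quoted in the text above.
scenarios = [
    ("Traditional HTS, 96-well", 10_000, 96),
    ("Traditional HTS, 384-well", 100_000, 384),
    ("uHTS, 1536-well", 300_000, 1536),
]
for label, compounds, wells in scenarios:
    print(f"{label}: {plates_per_day(compounds, wells)} plates/day")
```

Even at the uHTS scale, the 1536-well format keeps the plate count in the low hundreds per day, which is part of why higher-density formats and nanoliter dispensing reduce reagent and plastic consumption.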