Predicting Ionic Liquid Melting Points: A Comprehensive Guide to QSPR and Machine Learning Models

Accurate prediction of ionic liquid melting points is critical for tailoring their properties in applications ranging from energy storage to pharmaceutical development.

Abstract

Accurate prediction of ionic liquid melting points is critical for tailoring their properties in applications ranging from energy storage to pharmaceutical development. This article provides a comprehensive overview of Quantitative Structure-Property Relationship (QSPR) models and machine learning approaches for melting point prediction. It covers fundamental principles, state-of-the-art methodologies, optimization strategies to overcome data sparsity and model reliability challenges, and rigorous validation techniques. Designed for researchers, scientists, and drug development professionals, this resource synthesizes current research trends and practical guidance to facilitate the efficient design and selection of ionic liquids with desired phase behavior, reducing reliance on costly experimental screening.

The Fundamentals of Ionic Liquids and Why Melting Point Prediction Matters

FAQs: Ionic Liquids and Melting Point Characterization

Q1: What defines an ionic liquid and why is its melting point a critical property? An Ionic Liquid (IL) is broadly defined as a salt in which the ions are poorly coordinated, leading to a melting point generally below 100 °C. Many are liquid at room temperature. Their low melting point is a direct consequence of the bulky and asymmetric structure of the constituent organic cations, which prevents the ions from packing efficiently into a crystal lattice [1] [2] [3]. The melting point is a critical property because it defines the lower limit of the liquidus range for applications such as electrolytes in batteries, solvents for chemical reactions, and as functional materials in thermal energy storage [4] [5]. Accurately predicting it is essential for the computer-aided design of new ILs with desired phase behavior [6].

Q2: What are the common experimental challenges when determining the melting point of ionic liquids? Researchers often face several challenges:

- Supercooling: Many ILs tend to supercool, meaning they remain in a liquid state well below their thermodynamic melting point, which can lead to inaccurate measurements [4].

- Polymorphism and Complex Solid-Solid Transitions: Some ILs, especially a subclass known as Organic Ionic Plastic Crystals (OIPCs), can exhibit multiple solid phases with transitions between them before melting. These solid-solid transitions also involve enthalpy changes and must be carefully characterized to avoid confusing them with the final melting point [1] [4].

- Purity and Hygroscopicity: The presence of impurities or absorbed water can significantly alter the measured melting point. Many ILs are hygroscopic and must be handled under strict anhydrous conditions to obtain reliable data [2].

Q3: How can QSPR models assist in the design of ionic liquids with specific melting points? Quantitative Structure-Property Relationship (QSPR) models are computational tools that relate the molecular structure of ILs to their melting points. By using machine learning on datasets of known ILs, these models identify which molecular descriptors (e.g., ion size, symmetry, types of chemical bonds) most significantly influence the melting point [6] [5]. This allows researchers to predict the melting points of vast numbers of theoretical cation-anion combinations (e.g., over 35,000) before undertaking costly synthesis, thereby speeding up the discovery of ILs with tailored properties [6].

Q4: What are some key molecular features that generally lead to a lower melting point in ILs? Several structural features are known to depress the melting point of ILs:

- Large Ion Size and Asymmetry: Bulky, asymmetrical cations (like imidazolium with long alkyl chains) prevent efficient crystal packing [1] [3].

- Charge Delocalization: Anions where the negative charge is spread over a larger area (e.g., bis(trifluoromethylsulfonyl)imide, [NTf₂]⁻) weaken the Coulombic forces between ions [4].

- Flexible Alkyl Chains: Chains on the cation can introduce conformational disorder, inhibiting crystallization [4].

- Weak Hydrogen Bonding Ability: Reducing the strength of hydrogen-bonding networks between the cation and anion can lower the melting point [4].

Troubleshooting Guide: Melting Point Experiments

| Common Problem | Potential Cause | Recommended Solution |

|---|---|---|

| High Supercooling | High viscosity, slow nucleation kinetics, impurity effects. | Seed the sample with a tiny crystal of the solid phase. Use a slower cooling rate prior to measurement [4]. |

| Broad or Ill-Defined Melting Endotherm | Sample impurity, decomposition upon heating, polymorphism, or non-equilibrium conditions. | Purify the IL (e.g., recrystallization, washing). Ensure anhydrous handling. Use multiple heating/cooling cycles to establish reproducibility [2] [4]. |

| Discrepancy between Calculated and Experimental Tm | Inaccuracies in the QSPR model, insufficient training data for the specific IL family, or unaccounted for ion-pairing in the liquid state. | Verify the IL's chemical structure and purity. Use a QSPR model that has been validated for the specific cation/anion classes of your IL. Consult the model's applicability domain to ensure your IL is within its reliable prediction space [6] [5]. |

| Corrosion of Metal Sample Containers | Chemical reactivity of the IL anions (e.g., halides) with metal surfaces at elevated temperatures. | Use containers made of inert materials such as Teflon, quartz, or certain types of stainless steel with proven compatibility [4]. |

Essential Data for Ionic Liquid Melting Points

Table 1: Melting Points and Enthalpies of Common Imidazolium-Based Ionic Liquids [4]

| Ionic Liquid | Cation Abbr. | Anion Abbr. | Melting Point Range (°C) | Enthalpy of Fusion (kJ/kg) |

|---|---|---|---|---|

| 1-Hexadecyl-3-methylimidazolium Bromide | [C₁₆MIM] | Br | ~64 | 159.00 |

| 1-Hexadecyl-3-methylimidazolium Chloride | [C₁₆MIM] | Cl | ~64 | 159.00 |

| Various Imidazolium ILs | [CₓMIM] | [NTf₂], [TfO], etc. | -87 to 208 | 59.00 - 159.00 |

Table 2: Performance Metrics of a Deep-Learning Model for Melting Point Prediction [5]

| Model Type | Dataset Size | R² Score | Root Mean Square Error (RMSE) |

|---|---|---|---|

| Deep Learning (RNN) | 1253 ILs | 0.90 | ~32 K (equivalently ~32 °C, since RMSE is a temperature difference) |

Experimental Protocol: Determining Melting Point via DSC

Objective: To accurately determine the melting point (Tm) and enthalpy of fusion (ΔHf) of an ionic liquid using Differential Scanning Calorimetry (DSC).

Materials and Equipment:

- Differential Scanning Calorimeter

- Hermetically sealed, inert sample pans (e.g., aluminum Tzero)

- Micro-syringe or spatula for sample handling

- Glove box (for hygroscopic or air-sensitive ILs)

- Pure, dry ionic liquid sample

Procedure:

- Sample Preparation: Inside a glove box if necessary, accurately weigh 5-10 mg of the pure, dry IL into a pre-tared DSC sample pan. Seal the pan hermetically to prevent moisture ingress or solvent evaporation during the experiment.

- Instrument Calibration: Calibrate the DSC cell for temperature and enthalpy using high-purity standards such as indium or zinc.

- Experimental Method:

  - Loading: Place the sealed sample pan and an empty reference pan in the DSC cell.

  - Thermal Program:

    - Equilibrate at 25 °C.

    - Cool to -100 °C at a rate of 10 °C/min.

    - Hold isothermally for 5 minutes to ensure thermal equilibrium.

    - Heat to 150 °C at a scan rate of 5 °C/min. This slower heating rate is recommended to minimize thermal lag and better resolve complex thermal events like solid-solid transitions.

  - Repeat: Perform at least two full heating/cooling cycles to establish the reproducibility of the thermal events.

- Data Analysis:

  - On the final heating scan, identify the onset temperature of the endothermic peak corresponding to melting; this is reported as the melting point (Tm).

  - Integrate the area under the melting peak to determine the enthalpy of fusion (ΔHf).
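The two data-analysis steps above can be sketched numerically. This is a minimal illustration, assuming a baseline-corrected heating scan with endothermic heat flow plotted as positive. The function names, the synthetic triangular peak, and the 8 mg sample mass are hypothetical and not part of the protocol. The onset is estimated as the intersection of the steepest leading-edge tangent with the zero baseline, which is one common convention; instrument software may construct it differently.

```python
def onset_temperature(temps_c, heat_flow_mw):
    """Extrapolated onset: steepest leading-edge tangent meets the zero baseline."""
    peak = max(range(len(heat_flow_mw)), key=lambda i: heat_flow_mw[i])
    best_slope, best_i = 0.0, None
    for i in range(1, peak + 1):
        slope = (heat_flow_mw[i] - heat_flow_mw[i - 1]) / (temps_c[i] - temps_c[i - 1])
        if slope > best_slope:
            best_slope, best_i = slope, i
    if best_i is None:
        raise ValueError("no rising edge found before the peak maximum")
    # Tangent through the midpoint of the steepest segment, extended to zero heat flow.
    t_mid = 0.5 * (temps_c[best_i] + temps_c[best_i - 1])
    q_mid = 0.5 * (heat_flow_mw[best_i] + heat_flow_mw[best_i - 1])
    return t_mid - q_mid / best_slope


def enthalpy_of_fusion(temps_c, heat_flow_mw, mass_mg, rate_c_per_min):
    """Trapezoidal peak area converted to J/g (numerically equal to kJ/kg)."""
    area = 0.0  # units: mW * degC
    for i in range(1, len(temps_c)):
        dt = temps_c[i] - temps_c[i - 1]
        area += 0.5 * (heat_flow_mw[i] + heat_flow_mw[i - 1]) * dt
    rate_c_per_s = rate_c_per_min / 60.0
    # mW*degC / (degC/s) = mJ, and mJ / mg = J/g
    return area / rate_c_per_s / mass_mg


def synthetic_peak(t):
    """Hypothetical triangular endotherm between 60 and 70 degC, 10 mW high."""
    if 60.0 <= t <= 65.0:
        return 2.0 * (t - 60.0)
    if 65.0 < t <= 70.0:
        return 2.0 * (70.0 - t)
    return 0.0


temps = [50.0 + 0.5 * i for i in range(61)]  # 50 to 80 degC
heat_flow = [synthetic_peak(t) for t in temps]

tm_onset = onset_temperature(temps, heat_flow)
delta_h = enthalpy_of_fusion(temps, heat_flow, mass_mg=8.0, rate_c_per_min=5.0)
print(f"Tm (onset) = {tm_onset:.1f} degC, dHf = {delta_h:.1f} J/g")
```

Real scans need a baseline subtraction step before either function is applied, and the integration limits should bracket only the melting peak so that solid-solid transitions are not included.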

QSPR Workflow for Melting Point Prediction

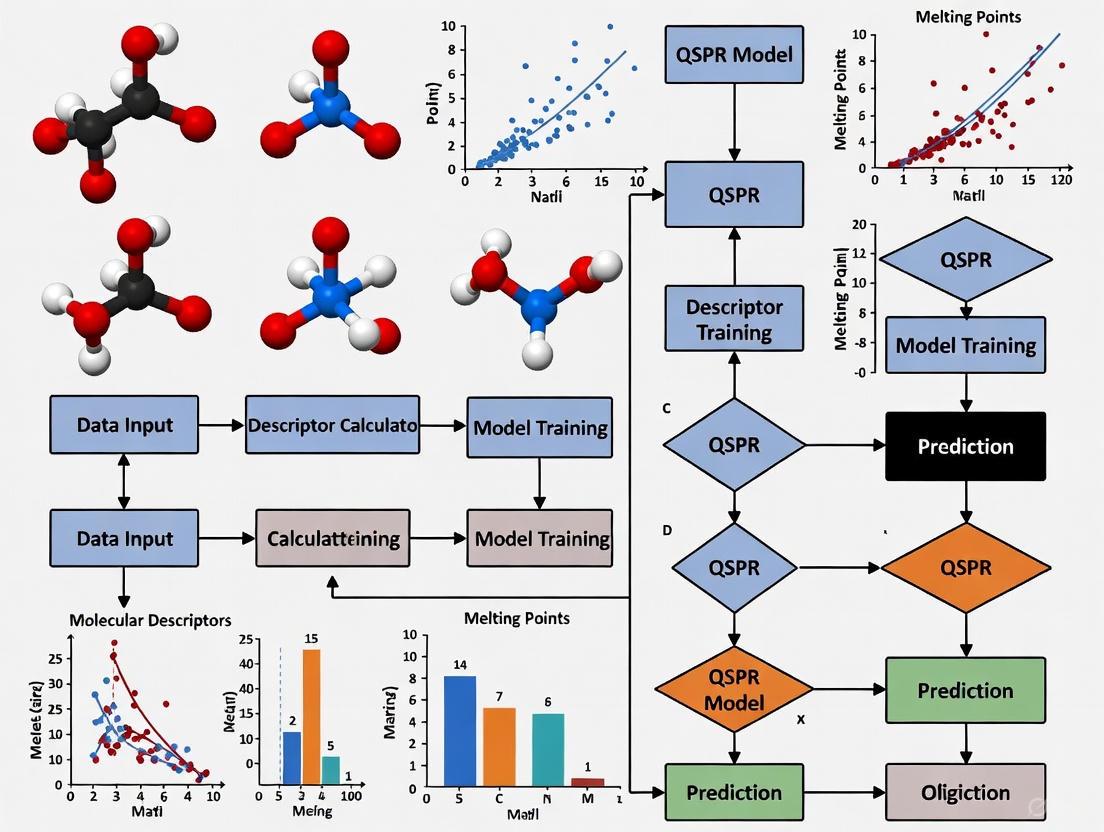

The following diagram illustrates the workflow for developing a QSPR model to predict the melting points of Ionic Liquids.

Table 3: Essential Materials for IL Synthesis and Melting Point Analysis

| Item Name | Function/Description | Example in Context |

|---|---|---|

| Imidazolium Cations | A common class of organic cations providing a versatile, tunable platform for IL synthesis. | 1-Butyl-3-methylimidazolium ([C₄MIM]⁺) is a widely studied cation whose salts often have low melting points [4]. |

| Complex Anions | Inorganic or organic anions that contribute to charge delocalization and low lattice energy. | Bis(trifluoromethylsulfonyl)imide ([NTf₂]⁻) and tetrafluoroborate ([BF₄]⁻) are common anions that help achieve low melting points [4] [5]. |

| Differential Scanning Calorimeter (DSC) | The primary instrument for the experimental determination of melting points and enthalpies of fusion. | Used to measure the precise melting temperature and latent heat of an unknown IL sample for model validation [4]. |

| ILThermo Database | A comprehensive NIST database for collecting experimental thermophysical property data on ILs. | Serves as a critical source of high-quality experimental data for training and validating QSPR models [5]. |

| COSMO-RS Computational Method | A thermodynamic method for predicting chemical potentials and activity coefficients, applicable to ILs. | Can be used to calculate activity coefficients at infinite dilution, which are related to solubility and can inform property models [7]. |

The Critical Role of Melting Points in Application Performance

FAQs and Troubleshooting Guides

This guide addresses common challenges researchers face when determining the melting points of Ionic Liquids (ILs) and how these impact application performance within the context of Quantitative Structure-Property Relationship (QSPR) modeling.

Q: What is the most common error in melting point determination, and how does it affect the result?

A: The most prevalent error is heating the sample too quickly [8]. This causes a thermal lag between the sample's actual temperature and the temperature registered by the thermometer. The consequence is an artificially high and broad melting point range [8]. This inaccurate data can mislead the assessment of an IL's purity and identity, and if used in QSPR model training, can reduce the model's predictive accuracy.

Q: My melting point measurement is broad and inconsistent. What could be the cause?

A: A broad melting range often stems from technique rather than the sample itself. Key factors to check are [8]:

- Heating Rate: Ensure you slow the heating rate to 1-2 °C per minute as you approach the anticipated melting point [8].

- Sample Preparation: The sample must be finely powdered and densely packed into the capillary tube to a height of no more than 2-3 mm [8]. A large or loosely packed sample creates temperature gradients, leading to a broad range.

- Instrument Calibration: An uncalibrated thermometer can introduce a systematic error. Regularly calibrate your apparatus using known melting point standards [9].

Q: How can I determine if a depressed melting point is due to impurity or just poor technique?

A: First, repeat the measurement with careful attention to slow heating and proper sample packing. If the melting range remains broad and depressed, it is a strong indicator of sample impurity. Technique-related errors typically resolve upon careful repetition, while impurity is an intrinsic property of the sample [8] [9].

Q: Why is accurate melting point data critical for QSPR models of Ionic Liquids?

A: QSPR models learn the relationship between molecular descriptors of ions and their measured properties. The presence of erroneous data (e.g., artificially high melting points from rapid heating) introduces "noise" that confuses the model. High-quality, accurate experimental data is the foundation for building robust and reliable QSPR models that can genuinely predict the melting points of new, unsynthesized ILs [6] [5].

Melting Point Errors and Their Impact

The following table summarizes common experimental errors and their consequences for your data and subsequent QSPR modeling.

| Error Type | Consequence on Melting Point | Impact on QSPR Model Development |

|---|---|---|

| Rapid Heating [8] | Artificially high & broad melting range. | Introduces noise, reducing prediction accuracy and model reliability. |

| Improper Sample Packing (too much, not dense) [8] | Broad, uneven melting range. | Obscures the true sharp melting point of pure compounds, affecting data quality. |

| Uncalibrated Thermometer [9] | Systematic high or low readings. | Creates a consistent bias in the dataset, leading to systematically incorrect predictions. |

| Wet or Impure Sample [8] [9] | Depressed (lowered) & broad melting range. | Provides incorrect target values for the model to learn from, confusing structure-property relationships. |

Experimental Protocol: Accurate Melting Point Determination

This protocol ensures the generation of high-quality data suitable for QSPR studies [8] [9].

1. Sample Preparation

- Grinding: Use a clean mortar and pestle to grind the sample into a fine powder.

- Packing: Fill a melting point capillary tube by tapping the open end into the powder. Tap the closed end down on a hard surface or slide the tube down a long glass tube to densify the sample. Repeat until the sample is a compact column of 2-3 mm in height [8].

2. Apparatus Setup

- Place the capillary tube in the melting point apparatus.

- If the approximate melting point is known, heat rapidly to about 15-20 °C below the expected range [8].

3. Measurement and Data Recording

- Once near the melting point, sharply reduce the heating rate to 1-2 °C per minute [8].

- Carefully observe the sample. Record two temperatures:

  - Initial Melting Point (T₁): The temperature at which the first drop of liquid is observed.

  - Final Melting Point (T₂): The temperature at which the last solid crystal disappears into the liquid [9].

- The melting point is reported as a range from T₁ to T₂.

4. Thermometer Calibration

- Perform regularly using at least two certified melting point standards that bracket your range of interest.

- Measure the melting point of the standards using the protocol above.

- Plot the observed values versus the literature values to create a calibration curve for your specific thermometer [9].
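The calibration curve described above can also be fitted numerically rather than read off a plot. The sketch below assumes a simple linear relationship between observed and literature values. The three standards and their temperatures are hypothetical placeholders, not real reference data, and `fit_line` and `correct_reading` are illustrative names.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration data (degC): what the thermometer showed versus
# the certified value for three standards that bracket the range of interest.
observed = [82.5, 115.0, 158.5]
literature = [81.5, 114.0, 157.5]

slope, intercept = fit_line(observed, literature)


def correct_reading(reading_c):
    """Map a raw thermometer reading onto the calibrated scale."""
    return slope * reading_c + intercept


print(f"corrected 100.0 degC reading: {correct_reading(100.0):.2f} degC")
```

With only two or three standards a straight line is about all the data can support. Readings outside the bracketed range are extrapolations and should be treated with more caution.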

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function in Melting Point Analysis |

|---|---|

| Melting Point Apparatus | A specialized instrument with a heated block, viewer, and temperature control for precise measurement. |

| Capillary Tubes | Thin-walled glass tubes for holding a small amount of the solid sample. |

| Melting Point Standards | Ultra-pure compounds with known, sharp melting points (e.g., vanillin, acetanilide) used for thermometer calibration [9]. |

| Molecular Descriptor Software | Software (e.g., Dragon) used to calculate numerical representations (descriptors) of the cation and anion structure from their SMILES strings for QSPR modeling [5]. |

Workflow for Integrating Experimental Data with QSPR Modeling

The diagram below illustrates the workflow from experimental measurement to QSPR model development and application.

Frequently Asked Questions (FAQs)

1. What is QSPR and how is it used for ionic liquids? Quantitative Structure-Property Relationship (QSPR) is a computational modeling approach that uses mathematical equations to predict the properties of chemical compounds based on their molecular structures. For ionic liquids (ILs), QSPR models establish relationships between molecular descriptors (numerical representations of structural features) and specific IL properties, such as melting point. This allows researchers to predict properties for new, unsynthesized IL combinations, significantly accelerating the design of ILs with tailored characteristics for specific applications like energy storage or catalysis [6] [10].

2. Why is predicting the melting point of ionic liquids important? The melting point (Tm) of an ionic liquid is a critical property that determines its operational temperature range in applications such as batteries, supercapacitors, and industrial extraction processes. A low melting point is often desirable for practical use. However, the Tm can vary dramatically based on the molecular structures of the cation and anion and their combination. QSPR models provide a way to systematically understand and predict Tm, enabling the computer-aided design of ILs with favorable melting points without relying solely on costly and time-consuming experimental synthesis and testing [5].

3. What are the common computational methods used in QSPR for IL melting points? Researchers employ a variety of machine learning and statistical methods to build predictive QSPR models for IL melting points. Commonly used algorithms include:

- Partial Least Squares Regression and Stepwise Multiple Linear Regression for linear modeling [6].

- Monte Carlo optimization using software like CORAL to generate models based on hybrid optimal descriptors derived from molecular structures [11].

- Deep Learning models, which can process a vast number of molecular features and have demonstrated high predictive accuracy for Tm [5].

- Projection Pursuit Regression (PPR), which can capture nonlinear relationships between descriptors and the melting point [12].

4. What molecular features influence the melting point of ionic liquids? QSPR studies have identified several key structural attributes that affect the melting point of ionic liquids. These include:

- Symmetry: Higher molecular symmetry often leads to higher melting points due to better crystal packing.

- Flexibility: The presence of rotatable bonds can lower the melting point.

- Branched chains: Branching in alkyl chains generally decreases the melting point.

- Aromatic systems: The presence of benzene ring structures in cations influences intermolecular interactions and Tm.

- Intramolecular electronic effects: The electronic distribution within the ions affects the strength of Coulombic interactions [12].

Troubleshooting Guides for QSPR Modeling

Issue 1: Poor Model Performance and Low Predictive Accuracy

Potential Causes and Solutions:

Cause: Inadequate or Non-Representative Molecular Descriptors. The set of molecular descriptors used may not sufficiently capture the structural features governing the melting point.

Cause: Overfitting of the Model. The model may perform well on training data but poorly on new, unseen data.

- Solution: Implement a rigorous validation protocol. This should include internal cross-validation and external validation with a separate test set. Always define the model's applicability domain to understand its limitations. Reducing the descriptor dimensionality by removing low-variance or highly correlated features can also prevent overfitting [6] [5].
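As a rough illustration of internal cross-validation, the sketch below computes a leave-one-out Q² for a one-descriptor linear model and compares it with the fitted R². The descriptor values and melting points are hypothetical, the function names are illustrative, and the Q² definition used here (PRESS divided by the total sum of squares around the overall mean) is one common convention among several.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    return slope, mean_y - slope * mean_x


def r2_fit(x, y):
    """R² of the model fitted on all points (goodness of fit)."""
    slope, intercept = fit_line(x, y)
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def q2_loo(x, y):
    """Leave-one-out Q²: each point is predicted by a model that never saw it."""
    mean_y = sum(y) / len(y)
    press = 0.0
    for i in range(len(x)):
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - press / ss_tot


# Hypothetical data: one descriptor value and one melting point (K) per IL.
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
tm_kelvin = [290.0, 305.0, 298.0, 330.0, 325.0, 350.0]

r2 = r2_fit(descriptor, tm_kelvin)
q2 = q2_loo(descriptor, tm_kelvin)
print(f"R2 = {r2:.3f}, Q2(LOO) = {q2:.3f}")
```

A large gap between R² and Q² is a warning sign of overfitting. An external test set is still needed, because leave-one-out statistics are computed on the same pool of compounds used to build the model.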

Cause: Suboptimal Data Splitting. The way the dataset is divided into training, calibration, and validation sets can impact the perceived performance.

Issue 2: Model is Not Robust or Chemically Interpretable

Potential Causes and Solutions:

Cause: Lack of Mechanistic Interpretation. The model predicts the endpoint but offers no insight into which structural features are responsible.

- Solution: Use modeling approaches that allow for mechanistic interpretation. For instance, in Monte Carlo-based models, analyze the correlation weights (CW) of specific structural attributes. Positive CW indicates a fragment increases the property (e.g., melting point), while negative CW indicates a fragment decreases it [10] [11].
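The idea of reading structural effects from correlation weights can be shown with a deliberately simplified sketch. It assumes a descriptor that is just the sum of the weights of SMILES tokens and a linear model on that sum. The tokenizer, the weights, and the coefficients `c0` and `c1` are all hypothetical, and the actual CORAL software uses richer attributes and obtains its weights through Monte Carlo optimization.

```python
import re

# Simplified tokenizer (assumption): bracketed atoms, Br, Cl, else single characters.
TOKEN_PATTERN = re.compile(r"\[[^\]]+\]|Br|Cl|.")


def tokenize(smiles):
    return TOKEN_PATTERN.findall(smiles)


def dcw(smiles, weights):
    """Descriptor of correlation weights: sum of per-token weights (0 if unknown)."""
    return sum(weights.get(token, 0.0) for token in tokenize(smiles))


def predict(smiles, weights, c0, c1):
    """Linear model on the descriptor: endpoint = c0 + c1 * DCW."""
    return c0 + c1 * dcw(smiles, weights)


def split_by_sign(weights):
    """Tokens with positive weights raise the endpoint; negative ones lower it."""
    increasing = sorted(t for t, w in weights.items() if w > 0)
    decreasing = sorted(t for t, w in weights.items() if w < 0)
    return increasing, decreasing


# Hypothetical correlation weights, for illustration only.
weights = {"C": -0.5, "c": 0.3, "n": 0.2, "[n+]": 1.0}
cation = "CCCCn1cc[n+](C)c1"  # 1-butyl-3-methylimidazolium

increasing, decreasing = split_by_sign(weights)
print("DCW:", round(dcw(cation, weights), 3))
print("raise Tm:", increasing, "lower Tm:", decreasing)
```

In this toy weighting the aliphatic carbon token lowers the predicted value, which is the kind of statement a mechanistic interpretation aims to extract. Weights should be judged over several independent optimization runs before a fragment is labeled as increasing or decreasing.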

Cause: Ignoring the Effect of Chemical Family. The model may not account for variations in performance across different classes of cations and anions.

- Solution: Analyze the model's performance stratified by the chemical family of the ions. Highlighting these effects can provide deeper insights and guide the design of future ILs [6].

Issue 3: Challenges in Handling Ionic Liquid Structures

Potential Causes and Solutions:

Cause: Difficulty in Representing the Ionic Pair. Ionic liquids consist of two distinct ions, and creating a single molecular representation for QSPR can be challenging.

- Solution: Employ and compare different combining rules. These are averaging functions used to obtain the descriptors of the entire IL from the descriptors of the individual ions. Testing different rules is crucial for building a robust model [6].

Cause: Limited Experimental Data for Training. The availability of high-quality, experimental melting point data for a diverse set of ILs may be limited.

- Solution: Leverage large, publicly available databases like ILThermo to compile datasets. For deep-learning models, which require large datasets, this is particularly important [5].

Experimental Protocols: Key Workflows in QSPR for IL Melting Points

Protocol 1: Building a QSPR Model Using Machine Learning

This protocol outlines the general workflow for developing a QSPR model to predict the melting points of Ionic Liquids [6] [5].

- Data Collection: Extract experimental melting point data for ionic liquids from databases (e.g., ILThermo) or published literature.

- Structure Representation and Descriptor Calculation:

  - Represent the molecular structures of cations and anions using Simplified Molecular Input Line Entry System (SMILES) notations or draw them with molecular editing software.

  - Use software (e.g., Dragon) to calculate a wide range of molecular descriptors for each ion.

- Data Preprocessing and Splitting:

  - Preprocess the data by removing descriptors with low variance or missing values.

  - Split the entire dataset randomly into a training set (≈80%) and a validation set (≈20%).

- Feature Selection:

  - Apply feature selection techniques (e.g., using Pearson correlation matrix) to identify a subset of significant molecular descriptors that are highly correlated with the melting point. Remove descriptors with low correlation (<0.20) or high inter-correlation (>0.90) to prevent overfitting.

- Model Training and Validation:

  - Train various machine learning algorithms (e.g., Partial Least Squares, Deep Learning) on the training set.

  - Validate the trained model's predictive performance on the separate validation set using statistical metrics like R² and RMSE.
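The preprocessing, splitting, and feature-selection steps above can be sketched with the standard library alone. The 0.20 and 0.90 thresholds and the 80/20 split come from the protocol. The toy descriptor table, the function names, and the greedy order in which correlated descriptors are dropped are assumptions made for illustration.

```python
import random
from statistics import mean, pvariance


def pearson(a, b):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    mean_a, mean_b = mean(a), mean(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5 if var_a > 0 and var_b > 0 else 0.0


def select_descriptors(columns, target, min_target_r=0.20, max_inter_r=0.90):
    """Drop constant descriptors, weakly correlated ones, and redundant ones."""
    ranked = sorted(
        ((abs(pearson(values, target)), name)
         for name, values in columns.items() if pvariance(values) > 0),
        reverse=True,
    )
    kept = []
    for r_target, name in ranked:
        if r_target < min_target_r:
            continue  # too weakly related to the melting point
        if all(abs(pearson(columns[name], columns[k])) <= max_inter_r for k in kept):
            kept.append(name)  # not redundant with anything already kept
    return kept


def train_validation_split(n_samples, train_fraction=0.8, seed=0):
    """Random split of row indices into training and validation sets."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    cut = round(train_fraction * n_samples)
    return indices[:cut], indices[cut:]


# Toy data (hypothetical): melting points in K and four candidate descriptors.
tm = [300.0, 320.0, 340.0, 360.0, 380.0, 400.0, 420.0, 440.0, 460.0, 480.0]
columns = {
    "size": [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0],
    "size_copy": [2.0, 4.0, 6.0, 8.0, 10.0, 12.0, 14.0, 16.0, 18.0, 20.0],
    "constant": [5.0] * 10,
    "noise": [1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0],
}

train_idx, val_idx = train_validation_split(len(tm))
# Select features on the training rows only, so the validation set stays unseen.
train_columns = {k: [v[i] for i in train_idx] for k, v in columns.items()}
train_tm = [tm[i] for i in train_idx]
print("selected:", select_descriptors(train_columns, train_tm))
```

Running the selection on the training rows only is a design choice that keeps information from the validation set out of model building. A production workflow would typically use a numerical library for the correlation matrix, since this pairwise loop scales poorly to thousands of descriptors.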

The workflow for this protocol is summarized in the diagram below:

Protocol 2: Building a Model with Monte Carlo Optimization (CORAL Software)

This protocol details the methodology for creating a QSPR model using the Monte Carlo algorithm as implemented in the CORAL software [10] [11].

- Data Compilation: Compile a dataset of compounds with known experimental values of the target property (e.g., melting point, Tm, or impact sensitivity expressed as log H50).

- Structure Representation: Draw molecular structures and convert them into SMILES notations.

- Data Splitting: Randomly divide the dataset into four subsets for a robust modeling process:

  - Active Training Set (≈33%): Used for the model's initial training.

  - Passive Training Set (≈31%): Used for additional training.

  - Calibration Set (≈16%): Used to optimize the model's parameters.

  - Validation Set (≈20%): Used for the final, unbiased evaluation of the model.

- Descriptor Calculation and Optimization:

  - The CORAL software calculates hybrid optimal descriptors (DCW) from the SMILES notations and/or graph representations via Monte Carlo optimization.

  - The optimization process aims to find the best correlation weights for molecular attributes to predict the target property.

- Model Building and Validation:

  - Build the QSPR model using the target function and optimized descriptors.

  - Validate the model using both internal and external validation parameters (e.g., R²Validation, Q²Validation) from the calibration and validation sets.

- Mechanistic Interpretation: Examine the correlation weights of structural attributes to identify fragments that increase or decrease the target property.
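The four-way random split in this protocol is easy to reproduce outside the modeling software. The sketch below uses the approximate fractions listed above. The function name, the fixed seed, and the placeholder compound identifiers are illustrative assumptions.

```python
import random

SUBSET_NAMES = ("active_training", "passive_training", "calibration", "validation")


def four_way_split(items, fractions=(0.33, 0.31, 0.16, 0.20), seed=0):
    """Shuffle once, then cut into four consecutive, non-overlapping subsets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    subsets, start, cumulative = {}, 0, 0.0
    for name, fraction in zip(SUBSET_NAMES, fractions):
        cumulative += fraction
        # The last subset takes whatever remains, so no item is lost to rounding.
        end = n if name == SUBSET_NAMES[-1] else round(cumulative * n)
        subsets[name] = shuffled[start:end]
        start = end
    return subsets


compounds = [f"IL-{i:03d}" for i in range(100)]  # placeholder identifiers
subsets = four_way_split(compounds)
for name in SUBSET_NAMES:
    print(name, len(subsets[name]))
```

Repeating the split with several seeds and rebuilding the model each time gives a sense of how sensitive the validation statistics are to the particular partition.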

The workflow for this protocol is summarized in the diagram below:

Data Presentation: Model Performance Comparison

The following table summarizes the performance of different QSPR modeling approaches as reported in the literature, providing a benchmark for expected outcomes.

Table 1: Performance Metrics of Various QSPR Models for Ionic Liquid Properties

| Modeling Approach | Property Predicted | Data Points | Key Statistical Metrics | Reference / Software |

|---|---|---|---|---|

| Deep Learning Model | Melting Point (Tm) | 1253 ILs | R² ≈ 0.90, RMSE ≈ 32 K | [5] |

| Monte Carlo Optimization | Melting Point (Tm) of Imidazolium ILs | 353 ILs | R²Validation: 0.78-0.85, Q²Validation: 0.77-0.84 | CORAL Software [11] |

| Projection Pursuit Regression (PPR) | Melting Point (Tm) | 288 ILs | R² = 0.81, AARD* = 17.75% | CODESSA Program [12] |

| Monte Carlo Optimization | Impact Sensitivity (log H50) | 404 Nitro Compounds | R²Validation = 0.78, Q²Validation = 0.77 | CORAL Software [10] |

| Multiple Machine Learning Classifiers | State of Matter (at 300 K) | 953 IL Salts | N/A (Classification) | [6] |

*AARD: Average of Absolute Relative Deviation

The Scientist's Toolkit: Essential Research Reagents and Software

Table 2: Key Resources for QSPR Modeling of Ionic Liquids

| Tool Name | Type | Primary Function in QSPR |

|---|---|---|

| CORAL Software | Software | Implements the Monte Carlo algorithm to build QSPR models using SMILES-based optimal descriptors. Used for robust model development and validation [10] [11]. |

| Dragon | Software | Calculates a very large number (5000+) of molecular descriptors from molecular structures, which are used as inputs for machine learning models [5]. |

| ILThermo Database | Database | A comprehensive NIST database for experimentally measured thermodynamic properties of ionic liquids, serving as a key source for training data [5]. |

| SMILES Notation | Representation | A string-based representation of molecular structure that serves as input for descriptor calculation in software like CORAL, and as the output format when IUPAC names are converted to structures [10] [5]. |

| OPSIN Library | Software Library | Used to convert IUPAC names of chemical compounds into SMILES representations, facilitating the processing of large datasets [5]. |

Key Molecular Descriptors for Characterizing Cations and Anions

Frequently Asked Questions (FAQs)

FAQ 1: What is the most effective way to represent the structure of an ionic liquid for calculating molecular descriptors? The structure of an ionic liquid can be represented in two primary ways for descriptor calculation: using descriptors derived for separate ions or for the whole ionic pair [13] [14]. A benchmark study concluded that a description based on 2D descriptors calculated for ionic pairs is often sufficient to develop a reliable QSPR model. This approach yields high accuracy in both calibration and validation, and it streamlines the descriptor selection process by reducing the number of potential variables at the start of model development [13] [14]. While many models use 3D descriptors from separately optimized ion geometries, 2D descriptors derived from the structural formula are less time-consuming and can be just as effective without significant loss of model quality [14].

FAQ 2: How does the choice of geometry optimization method affect my QSPR model? The level of theory used for geometry optimization can significantly influence the values of 3D molecular descriptors and, consequently, the quality of the final QSPR model [13] [14]. Research has shown that descriptor values are dependent on the applied theory level [14]. Models utilizing descriptors from molecular geometries optimized with semi-empirical PM7 and ab initio Hartree-Fock (HF/6-311+G) methods often show similarly high quality and validation parameters [14]. In contrast, models based on geometries from more computationally intensive methods like Density Functional Theory (DFT with B3LYP/6-311+G) can sometimes result in lower model quality [14]. Therefore, the semi-empirical PM7 method is frequently recommended for the routine optimization of anion and cation geometries [14].

FAQ 3: What are "combining rules" and why are they important in QSPR for ionic liquids? Combining rules are defined as averaging functions used to obtain the molecular descriptors of an entire ionic liquid from the descriptors calculated for its individual ions [6]. The choice of rule is a key novelty in recent QSPR models and is critical for checking model robustness [6]. Since ionic liquids are composed of disconnected cations and anions, a rule is required to aggregate their independent descriptor values into a single set that represents the salt. Investigating different combining rules is part of a good practice protocol in QSPR model selection [6].
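A combining rule can be as simple as a function applied descriptor by descriptor. The sketch below shows three candidate rules so that they can be compared side by side. The rule names, the descriptor names, and the numeric values are hypothetical, and the specific rules used in the cited work may differ.

```python
# Candidate combining rules (assumptions for illustration).
COMBINING_RULES = {
    "sum": lambda c, a: c + a,
    "mean": lambda c, a: (c + a) / 2.0,
    "abs_diff": lambda c, a: abs(c - a),
}


def combine(cation, anion, rule="mean"):
    """Build IL-level descriptors from per-ion descriptors shared by both ions."""
    if rule not in COMBINING_RULES:
        raise ValueError(f"unknown combining rule: {rule}")
    func = COMBINING_RULES[rule]
    shared = sorted(set(cation) & set(anion))
    return {name: func(cation[name], anion[name]) for name in shared}


# Hypothetical descriptor values for one cation and one anion.
cation = {"molar_mass": 139.2, "rotatable_bonds": 3.0, "ring_count": 1.0}
anion = {"molar_mass": 280.1, "rotatable_bonds": 2.0, "ring_count": 0.0}

for rule in COMBINING_RULES:
    print(rule, combine(cation, anion, rule))
```

Training the same model once per rule and comparing validation metrics is one way to check that conclusions do not hinge on a single aggregation choice.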

FAQ 4: Which machine learning algorithms are commonly used in QSPR models for properties like melting point? A variety of machine learning algorithms are successfully applied in QSPR studies for ionic liquids. These include both regression and classification methods [6]. Common algorithms mentioned in recent research include:

- Regression Techniques: Partial Least Squares (PLS) regression, Stepwise Multiple Linear Regression (Stepwise-MLR) [6].

- Classification Techniques: k-Nearest Neighbors (k-NN), Naive Bayes, Linear Discriminant Analysis, and Support Vector Machines (SVM) [6].

Other powerful algorithms used in related property prediction (e.g., viscosity) include Random Forest (RF), Categorical Boosting (CatBoost), and Artificial Neural Networks (ANN) [15] [16].

Troubleshooting Guides

Problem: My QSPR model shows excellent performance on the training set but fails to predict new ionic liquids accurately. This is a classic sign of overfitting or an improperly assessed applicability domain.

- Solution 1: Implement Rigorous Validation. Always go beyond internal validation. Use external validation where a portion of the data is held out from the model building process entirely [15] [13]. Perform leave-one-out (LOO) or leave-multi-out (LMO) cross-validation and Y-randomization testing to avoid spurious correlations [15] [17].

- Solution 2: Define the Applicability Domain (AD). The model's reliability depends on the chemical space of the ILs it was built on. Use the applicability domain analysis to determine whether a new IL you are predicting falls within this space or is an outlier [6] [13].

- Solution 3: Split Data by IL Type, Not Randomly. When creating your training and test sets, split the data based on ionic liquid categories. A random split of data points can lead to over-optimistic statistics because the test set may contain data for the same ILs that were in the training set, just under different conditions. A category-wise split better assesses the model's true generalization ability for entirely new cation-anion combinations [15].
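The category-wise split in Solution 3 can be sketched as follows. The example assumes each record carries an identifier for its cation-anion combination under the hypothetical key `il`. The records themselves are invented, and repeated entries stand in for multiple measurements of the same IL.

```python
import random


def split_by_il(records, test_fraction=0.2, seed=0):
    """Hold out whole ILs so that no IL appears in both training and test sets."""
    il_ids = sorted({record["il"] for record in records})
    random.Random(seed).shuffle(il_ids)
    n_test = max(1, round(test_fraction * len(il_ids)))
    test_ids = set(il_ids[:n_test])
    train = [r for r in records if r["il"] not in test_ids]
    test = [r for r in records if r["il"] in test_ids]
    return train, test


# Hypothetical records: two measurements for each of five ILs (Tm in K).
records = [
    {"il": f"IL-{i}", "tm_k": 280.0 + 10.0 * i + offset}
    for i in range(5)
    for offset in (0.0, 1.5)
]

train, test = split_by_il(records)
print(len(train), "training records,", len(test), "test records")
```

The same idea extends to holding out entire cation families or anion families, which gives a stricter test of how well the model extrapolates to new chemistry.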

Problem: The process of calculating and selecting molecular descriptors is too slow and computationally expensive. This is a common challenge, especially with 3D descriptors that require geometry optimization.

- Solution 1: Prioritize 2D Descriptors. Consider using 2D molecular descriptors derived directly from the structural formula. They are less computationally demanding and a benchmark study has shown they can be sufficient for developing reliable models [13] [14]. These include constitutional descriptors, topological indices, and walk and path counts [13].

- Solution 2: Use Efficient Combining Rules. When working with separate ions, employ simple and effective combining rules to obtain IL-level descriptors, rather than optimizing the entire ionic pair, which is more computationally intensive [6].

- Solution 3: Apply Feature Selection. Use stepwise selection algorithms or other feature selection methods to efficiently reduce the pool of descriptors to the most relevant variables, speeding up model development and improving interpretability [13] [14].

Data Presentation

Table 1: Comparison of Molecular Descriptor Representation Approaches

| Aspect | Separate Ions (A\|B) | Ionic Pair [A+B] |
|---|---|---|
| Core Concept | Descriptors calculated independently for cation and anion, then combined [13] [14]. | Descriptors calculated from the optimized structure of the cation-anion pair [13] [14]. |
| Computational Cost | Moderate (requires two optimizations). Can be high if 3D descriptors are used [14]. | High (requires optimization of the paired structure). |
| Advantages | Allows analysis of individual ion contributions. | May better capture inter-ion interactions like hydrogen bonding [18]. |
| Recommended Use | When using simple combining rules or analyzing ion-specific effects. | When inter-ion interactions are critical and computational resources are adequate. |

Table 2: Key Statistical Metrics for QSPR Model Validation

This table defines essential metrics used to assess the reliability and predictive power of a QSPR model.

| Metric | Formula / Description | Interpretation & Threshold |
|---|---|---|
| R² (Coefficient of Determination) | - | Measures goodness-of-fit. Closer to 1 is better [16]. |
| Q² (Cross-Validation Coefficient) | - | Measures internal predictive ability. Q² > 0.5 is generally acceptable [13]. |
| RMSE (Root Mean Square Error) | - | Measures average prediction error. Lower values are better [15]. |
| AARD (Absolute Average Relative Deviation) | \( \text{AARD} = \frac{100}{N} \sum \left\lvert \frac{\eta_{\text{exp}} - \eta_{\text{pred}}}{\eta_{\text{exp}}} \right\rvert \), where \( \eta \) is the property value | Measures average percentage error. Lower values indicate higher accuracy [15]. |
| CCC (Concordance Correlation Coefficient) | - | Evaluates the agreement between observed and predicted data. Closer to 1 indicates better agreement [13]. |
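
The metrics in Table 2 can be computed directly from experimental and predicted values. The sketch below uses standard textbook definitions (Lin's formula for CCC, population variances); the function name `regression_metrics` and the three-point example are illustrative assumptions. Q² is commonly obtained by applying the same R² formula to cross-validated predictions instead of fitted ones.

```python
import numpy as np

def regression_metrics(y_exp, y_pred):
    """Return R2, RMSE, AARD (%) and CCC for paired experimental/predicted values."""
    y_exp = np.asarray(y_exp, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_exp - y_pred

    ss_res = np.sum(resid ** 2)
    ss_tot = np.sum((y_exp - y_exp.mean()) ** 2)
    r2 = 1.0 - ss_res / ss_tot

    rmse = np.sqrt(np.mean(resid ** 2))

    # AARD is a relative error, so use absolute temperatures (K), never values near 0.
    aard = 100.0 * np.mean(np.abs(resid / y_exp))

    # Lin's concordance correlation coefficient (population variances, ddof=0).
    cov = np.mean((y_exp - y_exp.mean()) * (y_pred - y_pred.mean()))
    ccc = 2.0 * cov / (y_exp.var() + y_pred.var()
                       + (y_exp.mean() - y_pred.mean()) ** 2)

    return {"R2": r2, "RMSE": rmse, "AARD_percent": aard, "CCC": ccc}

# Hypothetical melting points in K.
metrics = regression_metrics([300.0, 350.0, 400.0], [310.0, 345.0, 395.0])
print(metrics)
```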

Experimental Protocols

Detailed Methodology: Building a QSPR Model for Ionic Liquid Melting Point

This protocol outlines the key steps for developing a validated QSPR model, based on established good practices [6] [13].

1. Data Collection and Curation

- Extract experimental data (e.g., melting point, Tm) from reliable literature sources for a wide range of ionic liquid salts. For example, one study used data for 953 salts [6].

- Carefully curate the data, removing outliers and ensuring consistency. For classification tasks (e.g., solid/liquid at 300 K), assign class labels accordingly [6].

2. Structure Representation and Descriptor Calculation

- Representation: Choose a structural representation method (separate ions vs. ionic pair) [13] [14].

- Geometry Optimization: If using 3D descriptors, optimize the geometry of the ions or ionic pairs. The semi-empirical PM7 method is often recommended for its balance of accuracy and speed [14].

- Descriptor Calculation: Use software like DRAGON to calculate molecular descriptors. Focus on relevant groups like constitutional descriptors, topological indices, and information indices [13].

- Combining Rule: If ions are handled separately, apply a combining rule (e.g., a weighted average) to obtain a single set of descriptors for the ionic liquid [6].
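
As a minimal sketch of the weighted-average combining rule mentioned above, the function below mixes cation and anion descriptor vectors into one IL-level vector. The function name `combine_descriptors`, the descriptor values, and the idea of weighting by heavy-atom count are assumptions made for illustration; the cited studies may use different weights.

```python
import numpy as np

def combine_descriptors(cation_desc, anion_desc, w_cation=0.5):
    """Weighted average of two descriptor vectors of equal length."""
    cation_desc = np.asarray(cation_desc, dtype=float)
    anion_desc = np.asarray(anion_desc, dtype=float)
    if cation_desc.shape != anion_desc.shape:
        raise ValueError("cation and anion descriptor vectors must match in shape")
    if not 0.0 <= w_cation <= 1.0:
        raise ValueError("w_cation must lie in [0, 1]")
    return w_cation * cation_desc + (1.0 - w_cation) * anion_desc

# Hypothetical descriptor values for one cation and one anion.
cation = [10.0, 2.0, 0.5]
anion = [4.0, 0.0, 1.5]

# Equal weights.
il_equal = combine_descriptors(cation, anion)

# Assumed alternative: weight by heavy-atom count (10 atoms vs 5 atoms).
il_weighted = combine_descriptors(cation, anion, w_cation=10 / (10 + 5))

print(il_equal, il_weighted)
```

The rule needs only per-ion descriptors, so each ion is processed once and reused across every cation-anion combination in which it appears.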

3. Model Development and Training

- Split the dataset into a training set (for model building) and an external test set (for final validation). It is better to split by IL type rather than randomly [15].

- Apply feature selection algorithms (e.g., stepwise selection) to identify the most relevant descriptors and avoid overfitting [13] [14].

- Train the model using selected machine learning algorithms (e.g., Multiple Linear Regression, Support Vector Machines, Random Forest) on the training set [6] [16].

4. Model Validation and Applicability Domain

- Internal Validation: Perform cross-validation (e.g., Leave-One-Out) on the training set to assess stability [13].

- External Validation: Use the held-out test set to evaluate the model's true predictive power on unseen data [6] [15].

- Applicability Domain: Define the chemical space of the model to identify for which new ILs predictions can be considered reliable [6] [13].

- Statistical Checks: Use Y-randomization to confirm the model is not based on chance correlation [15].
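
The Y-randomization check in step 4 can be sketched as follows: refit the same model on shuffled target values and confirm that the cross-validated score collapses. The synthetic data, the linear model, and the use of fold-averaged R² as a stand-in for Q² are assumptions for illustration only.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(42)
n_samples, n_desc = 120, 5

# Synthetic descriptors and a target with a real linear dependence on them.
X = rng.normal(size=(n_samples, n_desc))
true_coef = np.array([30.0, -20.0, 10.0, 0.0, 5.0])
y = 350.0 + X @ true_coef + rng.normal(scale=10.0, size=n_samples)

def cv_score(X, y):
    """Mean 5-fold cross-validated R2 (used here as a proxy for Q2)."""
    return cross_val_score(LinearRegression(), X, y, cv=5, scoring="r2").mean()

q2_real = cv_score(X, y)

# Shuffle y to destroy any structure-property relationship, then re-score.
q2_shuffled = np.array([cv_score(X, rng.permutation(y)) for _ in range(20)])

print(f"real: {q2_real:.2f}, shuffled max: {q2_shuffled.max():.2f}")
```

If the shuffled scores come close to the real one, the original model is likely fitting chance correlations.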

Visualization

Descriptor Selection Workflow

QSPR Model Development & Validation

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

This table lists key software, methods, and resources used in the development of QSPR models for ionic liquids.

| Item Name | Function / Purpose | Key Details / Examples |

|---|---|---|

| DRAGON Software | Calculates a wide range of molecular descriptors from molecular structures. | Used to generate 2D and 3D descriptors (constitutional, topological, geometrical, etc.) for QSPR modeling [13]. |

| Gaussian 09/16 | Performs quantum chemical calculations for geometry optimization and electronic structure analysis. | Used for optimizing ion or ionic pair geometries at various theory levels (e.g., DFT, HF) before descriptor calculation [13]. |

| COSMO-RS Descriptors | A set of quantum-chemically derived descriptors based on the sigma-profile of a molecule. | Used as molecular descriptors in QSPR models to predict properties like activity coefficient and viscosity [15] [17]. |

| R / Python with ML libraries (olsrr, caret, scikit-learn) | Provides the programming environment for data splitting, feature selection, model building, and validation. | The olsrr package in R was used for stepwise descriptor selection [13]. Various algorithms (SVM, RF, ANN) are implemented in these environments [6] [16]. |

| Semi-empirical PM7 Method | A fast quantum-mechanical method for geometry optimization of ions. | Recommended for routine optimization of anion and cation geometries to calculate 3D descriptors for QSPR models [14]. |

Frequently Asked Questions (FAQs)

Q1: What are the primary public data sources for Ionic Liquid melting point data? The most comprehensive public data source for Ionic Liquid properties is the NIST ILThermo database [5]. This database is continuously updated and, as of recent research, contains data for thousands of ILs, including melting points for 1,253 unique ILs, compiled from nearly 3,500 scientific references [5]. Another extensive review compiled a database of 3,129 ILs with melting points ranging from 177.15 K to 645.9 K [19]. For viscosity and other properties, NIST also maintains a dynamic database with hundreds of thousands of data points [15].

Q2: What is a key data splitting strategy to ensure my QSPR model generalizes well? A critical best practice is to split your dataset by ionic liquid type, not randomly. Random splitting can lead to over-optimistic performance statistics because the test set may contain data points from ILs that are structurally very similar to those in the training set. A more robust method is to ensure that the ILs in the test set are entirely distinct from those used during training, which provides a more realistic assessment of the model's ability to predict properties for novel, untested ILs [15].

Q3: Which molecular descriptors are most significant for predicting melting points? While the exact descriptors can vary by model, a key step in descriptor selection is dimensionality reduction. One effective approach involves using a Pearson correlation matrix to filter descriptors, retaining those with a statistically significant correlation to the melting point (e.g., >0.20) and removing those with very high inter-correlation (e.g., >0.90) to reduce redundancy. This process can narrow thousands of initial descriptors down to a more manageable and meaningful set, for instance, around 137 key molecular descriptors for a deep learning model [5].

Q4: How can I validate the robustness of my QSPR model? A robust validation protocol should include multiple techniques [6]:

- Cross-validation: Used to assess model stability during training.

- External validation: Testing the model on a completely held-out dataset.

- Applicability domain analysis: Determines the range of structures for which the model's predictions are reliable.

- Y-randomization testing: Helps confirm that the model has learned real structure-property relationships and not just spurious correlations [15].

Troubleshooting Guides

Issue 1: Model Performance is Poor on Novel Ionic Liquids

Problem: Your QSPR model performs well on the test set but fails to accurately predict the melting points of ionic liquids with chemistries not represented in your original dataset.

Solution:

- Analyze the Applicability Domain (AD): The model's predictions are only reliable for compounds structurally similar to those it was trained on. Use AD analysis to identify when you are asking the model to make predictions too far outside its training space [6].

- Check Data Splitting Methodology: Verify that your original training and test sets were split by IL chemical identity, not just randomly. Poor generalization often stems from data leakage during the split [15].

- Source Additional Data: Seek out data for new cation-anion families to expand the chemical space covered by your training set. The NIST ILThermo database is the best starting point for this [5] [19].
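
One common way to run the applicability domain check is the leverage approach, in which a query compound is flagged when its leverage exceeds the warning value h* = 3(p + 1)/n for p descriptors and n training compounds. This is an assumed choice for illustration, not necessarily the method used in [6]; the data below are synthetic.

```python
import numpy as np

def leverage(X_train, X_query):
    """Leverage h = x^T (X^T X)^-1 x of each query row, with an intercept column."""
    Xt = np.column_stack([np.ones(len(X_train)), X_train])
    Xq = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(Xt.T @ Xt)
    # Row-wise quadratic form, i.e. the diagonal of Xq @ xtx_inv @ Xq.T
    return np.einsum("ij,jk,ik->i", Xq, xtx_inv, Xq)

rng = np.random.default_rng(1)
X_train = rng.normal(size=(200, 3))      # synthetic, already-scaled descriptors
n, p = X_train.shape
h_star = 3.0 * (p + 1) / n               # warning leverage

X_new = np.array([
    [0.1, -0.2, 0.3],    # close to the centre of the training data
    [8.0, 8.0, -8.0],    # far outside the training space
])
h_new = leverage(X_train, X_new)
inside_ad = h_new <= h_star

print(h_star, h_new, inside_ad)
```

Predictions for compounds with `inside_ad == False` are extrapolations and should be treated with caution.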

Issue 2: Inconsistent or Noisy Experimental Data

Problem: The experimental melting point data from different sources for the same ionic liquid shows high variability, introducing noise and reducing model accuracy.

Solution:

- Data Curation and Outlier Detection: Implement statistical methods to identify and handle outliers. The Leverage method is one recognized technique for identifying statistically valid and invalid data points within a dataset [16].

- Standardize Data Selection Criteria: Apply consistent filters during data collection. For example, you might decide to only use data measured at atmospheric pressure or to average values from multiple reputable sources after removing clear outliers.

- Consult Compiled Reviews: Refer to recent evaluation reviews that have already collected and assessed melting point data, as they can provide a pre-curated dataset [19].
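
The "average after removing clear outliers" strategy could look like the pandas sketch below. The column names, the records, and the 10 K tolerance around the per-IL median are all assumptions chosen for illustration; a real curation step would set the tolerance from the known measurement uncertainty.

```python
import pandas as pd

# Hypothetical records: several literature values per ionic liquid, in K.
records = pd.DataFrame({
    "il":   ["A", "A", "A", "B", "B", "C"],
    "tm_K": [340.0, 342.0, 390.0, 285.0, 287.0, 410.0],
})

MAX_DEV_K = 10.0  # assumed tolerance around the per-IL median

# Drop values that deviate strongly from the median reported for the same IL.
median = records.groupby("il")["tm_K"].transform("median")
kept = records[(records["tm_K"] - median).abs() <= MAX_DEV_K]

# Average what remains and record how many sources support each value.
curated = (
    kept.groupby("il")["tm_K"]
        .agg(tm_K="mean", n_sources="count")
        .reset_index()
)
print(curated)
```

Keeping `n_sources` alongside the averaged value makes it easy to down-weight or inspect single-source entries later.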

Experimental Protocols & Data Curation

Protocol: Building a High-Quality Dataset for Melting Point Prediction

Objective: To construct a curated, machine-learning-ready dataset of ionic liquid melting points from public sources.

Materials and Software:

- Primary Data Source: NIST ILThermo (v2.0) database [5].

- Data Extraction Tool: Python libraries such as `pyilt2` for automated data retrieval from ILThermo [5].

- Structure Conversion: OPSIN library or similar tool to convert IUPAC names into SMILES representations [5].

- Descriptor Calculation: Commercial software like Dragon7, which can calculate over 5,000 molecular descriptors for QSPR models [5].

Methodology:

- Data Extraction: Use the `pyilt2` library to programmatically extract melting point records for pure ionic liquids from the ILThermo database.

- Data Cleaning:

  - Remove entries with missing critical information (e.g., no melting point value, unclear cation/anion assignment).

  - Identify and handle duplicates from multiple sources. Decide on a strategy (e.g., averaging, keeping the most recent measurement).

- Structure Standardization: Convert the IUPAC names of all cations and anions into standardized SMILES strings using the OPSIN library.

- Descriptor Generation: Input the standardized molecular structures (SMILES) into Dragon7 to compute a comprehensive set of molecular descriptors.

- Descriptor Preprocessing:

  - Remove columns with low variance or missing values.

  - Apply a correlation-based feature selection to reduce dimensionality and multicollinearity (e.g., keep descriptors with correlation >0.20 with the target and inter-correlation <0.90 with each other) [5].

- Data Splitting: Split the final curated dataset into training and test sets, ensuring that all data points for any given ionic liquid are contained entirely within one set to enable true external validation.
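
The correlation-based descriptor filter from the preprocessing step can be sketched with pandas. The thresholds (0.20 and 0.90) come from the text; the greedy ordering (strongest target correlation first), the function name `correlation_filter`, and the synthetic descriptors are assumptions, since the source does not specify how ties between inter-correlated descriptors are resolved.

```python
import numpy as np
import pandas as pd

def correlation_filter(X, y, min_target_corr=0.20, max_inter_corr=0.90):
    """Return descriptor names passing both Pearson-correlation thresholds."""
    # Absolute correlation of each descriptor with the target; constant columns give NaN.
    target_corr = X.corrwith(y).abs().dropna()
    ranked = target_corr[target_corr > min_target_corr].sort_values(ascending=False)

    inter_corr = X[ranked.index].corr().abs()
    selected = []
    for name in ranked.index:
        # Keep a descriptor only if it is not nearly collinear with one already kept.
        if all(inter_corr.loc[name, kept] < max_inter_corr for kept in selected):
            selected.append(name)
    return selected

rng = np.random.default_rng(0)
n = 500
d1 = rng.normal(size=n)
X = pd.DataFrame({
    "d1": d1,
    "d1_copy": d1 + 0.01 * rng.normal(size=n),   # redundant with d1
    "d3": rng.normal(size=n),
    "noise": rng.normal(size=n),                 # unrelated to the target
})
y = pd.Series(350.0 + 20.0 * X["d1"] + 10.0 * X["d3"] + 5.0 * rng.normal(size=n))

selected = correlation_filter(X, y)
print(selected)
```

To avoid leaking test information into the feature set, the filter should be fitted on the training portion only.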

Workflow Diagram: Data Curation for QSPR Models

Key Research Reagents & Computational Tools

The following table details essential resources for conducting QSPR research on ionic liquid melting points.

| Resource Name | Type | Function in Research |

|---|---|---|

| NIST ILThermo (v2.0) [5] | Database | Primary repository for experimentally measured thermodynamic properties of ionic liquids, including melting points. |

| Dragon7 [5] | Software | Calculates thousands of molecular descriptors (geometric, topological, quantum-chemical) from molecular structure inputs for QSPR models. |

| OPSIN Library [5] | Software Tool | Converts systematic IUPAC chemical names into machine-readable SMILES representations, enabling automated structure processing. |

| Monte Carlo Tree Search (MCTS) & RNN [20] | Generative Algorithm | Used for de novo generation of novel cation and anion structures, creating vast virtual libraries for screening. |

| COSMO-RS [19] [20] | Solvation Model | Used as a validation tool and for calculating σ-profile descriptors; shows promise for further improvement in melting point prediction. |

Model Development and Validation Workflow

Building Predictive Models: From Traditional QSPR to Advanced Machine Learning

Frequently Asked Questions (FAQs)

Q1: Which machine learning algorithm is most recommended for predicting the melting points of Ionic Liquids (ILs) and Deep Eutectic Solvents (DESs) in a QSPR framework?

For predicting properties like melting points in QSPR studies, Random Forest Regression (RFR) and Support Vector Regression (SVR) often demonstrate robust performance, though the optimal choice depends on your dataset size and feature complexity [21] [22] [23].

RFR is particularly powerful because it can model non-linear relationships, is less prone to overfitting, and provides feature importance rankings, which are invaluable for interpreting the QSPR model. SVR, especially with non-linear kernels like the Radial Basis Function (RBF), is excellent for capturing complex, non-linear patterns in high-dimensional descriptor spaces [23]. One study on DES melting point prediction developed an integrated model that leveraged the strengths of multiple algorithms, including SVR and RFR, to achieve outstanding performance (R² = 0.99) [21]. For smaller datasets, SVR's principle of structural risk minimization can be advantageous [23].

Q2: My model performance is poor. What are the first aspects I should check related to my data?

Data quality and representation are the most common sources of poor model performance.

- Feature Selection and Engineering: The descriptors you use to represent the ionic liquids are paramount. Ensure you are using chemically relevant molecular descriptors. Consider employing feature selection techniques (like those available in `scikit-learn`) to eliminate redundant or irrelevant descriptors, which can degrade model performance [22] [23].

- Data Scaling: Algorithms like SVR and MLP are sensitive to the scale of the input data. If your features are on different scales, the model can become biased. Always standardize (zero mean, unit variance) or normalize (scale to a [0, 1] range) your data before training these models [24].

- Dataset Size and Quality: Machine learning models, especially non-linear ones like MLP, require a sufficient amount of reliable data to learn effectively. A dataset that is too small or contains significant noise will lead to poor generalization [21] [23].

Q3: How do I decide on the hyperparameters to use for tuning the SVR model?

Hyperparameter tuning is critical for SVR performance. The key parameters to optimize are [24]:

- Kernel: The function that maps data to a higher dimension. The RBF kernel is a good starting point for non-linear problems.

- C (Regularization Parameter): Controls the trade-off between achieving a low error on the training data and minimizing the model complexity. A low `C` creates a smoother function, while a high `C` aims to fit more training points correctly.

- Epsilon (ε): Defines the margin of error within which no penalty is associated. A larger epsilon results in a sparser model (fewer support vectors).

- Gamma (γ for RBF kernel): Defines how far the influence of a single training example reaches. Low values mean 'far', high values mean 'close'.

Use automated techniques like Grid Search or Randomized Search (e.g., GridSearchCV in scikit-learn) to systematically find the best combination of these parameters for your dataset.
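
A minimal sketch of such a search is shown below. Scaling is placed inside a pipeline so that it is refitted on each training fold; the synthetic data and the grid values are illustrative assumptions, not recommended settings.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

rng = np.random.default_rng(0)

# Synthetic descriptors on deliberately different scales, with a non-linear target.
X = rng.uniform(-2.0, 2.0, size=(150, 3)) * np.array([1.0, 100.0, 0.01])
y = 350.0 + 40.0 * np.sin(X[:, 0]) + 0.2 * X[:, 1] + rng.normal(scale=3.0, size=150)

# make_pipeline names the steps "standardscaler" and "svr".
pipe = make_pipeline(StandardScaler(), SVR(kernel="rbf"))

param_grid = {
    "svr__C": [1, 10, 100],
    "svr__epsilon": [0.1, 1.0],
    "svr__gamma": ["scale", 0.1, 1.0],
}

search = GridSearchCV(pipe, param_grid, cv=5,
                      scoring="neg_root_mean_squared_error")
search.fit(X, y)

best_params = search.best_params_
best_rmse = -search.best_score_   # scores are negated RMSE values
print(best_params, round(best_rmse, 2))
```

For larger grids, `RandomizedSearchCV` accepts the same pipeline and samples a fixed number of parameter combinations instead of trying all of them.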

Q4: What are the primary advantages and disadvantages of MLP models for QSPR studies?

| Advantage | Disadvantage |

|---|---|

| High Non-linearity: Excellent at learning complex, non-linear relationships between a large number of molecular descriptors and the target property [23]. | Data Hungry: Requires large datasets to train effectively and avoid overfitting [23]. |

| Flexibility: Can be adapted to various problem types (regression, classification) and complex architectures [25]. | Black Box: Model interpretability is very low, making it difficult to extract clear chemical insights [23]. |

| Automatic Feature Interaction: Can learn interactions between features without explicit instruction. | Sensitive to Hyperparameters: Performance is highly dependent on the choice of layers, neurons, and learning rate [23]. |

Troubleshooting Guides

Issue 1: Long Training Times or High Computational Cost

This is a common issue, particularly with large datasets or complex models.

- Potential Cause #1: The model algorithm is inherently computationally expensive for the dataset size.

  - Solution:

    - For RFR, reduce the `n_estimators` (number of trees). While more trees are generally better, there is a point of diminishing returns.

    - For SVR, consider using a linear kernel instead of RBF for very large datasets, as it is faster. You can also try the `LinearSVR` class in `scikit-learn`, which is optimized for linear SVR.

    - For KNN, the model is lazy and has a fast training time but slow prediction time for large datasets. For prediction speed, consider using data structures like KD-Tree or Ball Tree (available in `sklearn.neighbors`).

- Potential Cause #2: The dataset has a very high number of features (descriptors).

  - Solution: Perform feature selection (e.g., using RFR's built-in feature importance or mutual information) to reduce dimensionality. This will speed up all models significantly [22].
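
Importance-based feature selection with a random forest can be sketched using scikit-learn's `SelectFromModel`. The synthetic data (20 descriptors, only two of them informative) and the `"mean"` importance threshold are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SelectFromModel

rng = np.random.default_rng(0)

# 20 synthetic descriptors; only columns 0 and 1 influence the target.
X = rng.normal(size=(300, 20))
y = 350.0 + 30.0 * X[:, 0] - 20.0 * X[:, 1] + rng.normal(scale=5.0, size=300)

forest = RandomForestRegressor(n_estimators=100, random_state=0)

# Keep descriptors whose importance exceeds the mean importance.
selector = SelectFromModel(forest, threshold="mean")
X_reduced = selector.fit_transform(X, y)

kept = np.flatnonzero(selector.get_support())
print("kept columns:", kept, "reduced shape:", X_reduced.shape)
```

The reduced matrix can then be passed to slower models such as SVR or an MLP, which benefit most from the smaller descriptor set.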

Issue 2: The Model is Overfitting the Training Data

An overfit model performs well on training data but poorly on unseen test data.

- Potential Cause #1: The model is too complex for the amount of training data.

  - Solution:

    - For RFR: Increase the `min_samples_leaf` and `min_samples_split` parameters. This constrains the trees from growing too deep. Also, ensure you are using a sufficiently high number of trees to average over (`n_estimators`).

    - For SVR: Decrease the parameter `C` (a smaller `C` means stronger regularization) to enforce a smoother decision function [24].

    - For MLP: Apply regularization techniques like L1/L2 penalty, use dropout layers, or reduce the number of neurons and hidden layers (model capacity) [23].

    - For all models: Gather more training data if possible [21].

- Potential Cause #2: The model is memorizing noise in the training data.

  - Solution: For KNN, increase the number of neighbors `k`. A model with `k=1` is highly susceptible to noise. A larger `k` averages over more neighbors, smoothing out predictions.

Issue 3: The Model is Underfitting the Training Data

An underfit model performs poorly on both training and test data because it fails to capture the underlying trend.

- Potential Cause #1: The model is too simple.

  - Solution:

    - For SVR: Use a non-linear kernel (like RBF) if you are using a linear one. Increase the `C` parameter (weaker regularization) to allow the model to fit the data more closely [24].

    - For MLP: Increase the number of hidden layers and neurons to increase model capacity [23].

    - For RFR: Increase the `max_depth` of the trees and decrease `min_samples_leaf`.

    - For KNN: Decrease the number of neighbors `k` to make the model more sensitive to local patterns.

- Potential Cause #2: Informative features are missing or not properly scaled.

  - Solution: Revisit your feature engineering process. Ensure that all algorithms (especially SVR and MLP) are working with scaled data [24].
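
Overfitting and underfitting are easiest to tell apart by comparing training and test scores side by side. The sketch below does this for KNN with three values of `k` on synthetic data; the data, the specific `k` values, and the 70/30 split are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsRegressor

rng = np.random.default_rng(0)

# Synthetic one-descriptor problem with a smooth trend plus noise.
X = rng.uniform(-3.0, 3.0, size=(600, 1))
y = 350.0 + 40.0 * np.sin(X[:, 0]) + rng.normal(scale=15.0, size=600)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

scores = {}
for k in (1, 15, 400):
    model = KNeighborsRegressor(n_neighbors=k).fit(X_tr, y_tr)
    # score() returns R2; store (train, test) for each k.
    scores[k] = (model.score(X_tr, y_tr), model.score(X_te, y_te))

for k, (r2_train, r2_test) in scores.items():
    print(f"k={k:3d}  train R2={r2_train:.2f}  test R2={r2_test:.2f}")
```

A perfect training score with a clearly lower test score (`k=1`) points to overfitting, whereas low scores on both sets (`k=400`) point to underfitting.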

Experimental Protocols & Data Presentation

Comparative Performance of Algorithms in Property Prediction

The following table summarizes the quantitative performance of SVR, RFR, and other algorithms as reported in recent research. Besides melting point prediction, it includes a streamflow study and a QSAR study as examples of comparable regression tasks.

Table 1: Performance metrics of ML algorithms from various scientific studies.

| Study Context | Algorithm | Performance Metrics | Key Findings / Notes |
|---|---|---|---|
| DES Melting Point Prediction [21] | Integrated Model (MLP, MLR, SVR, KNN, RFR) | R² = 0.99, AARD = 1.24% | The integration of multiple optimized models into a unified framework yielded exceptional predictive accuracy. |
| Streamflow Prediction [26] | SVR | NSE = 0.59, RMSE = 1.18 m³/s | SVR outperformed both RFR and Multiple Linear Regression (MLR) in this hydrological study. |
| Streamflow Prediction [26] | RFR | NSE = 0.53, RMSE = 1.18 m³/s | |
| Streamflow Prediction [26] | MLR | NSE = 0.54, RMSE = 1.01 m³/s | |
| Antioxidant Tripeptide QSAR [22] | XGBoost (gradient-boosted trees) | R² (test) = 0.847, RMSE (test) = 0.627 | Non-linear regression methods tended to perform better than linear ones in this QSAR study. |

Essential Research Reagent Solutions

This table lists the key computational "reagents" required for building QSPR models for melting point prediction.

Table 2: Key computational tools and packages for ML-based QSPR modeling.

| Item / Package Name | Function / Application |

|---|---|

| scikit-learn (sklearn) | Primary library for implementing SVR (SVR), RFR (RandomForestRegressor), KNN (KNeighborsRegressor), and MLP (MLPRegressor). Also provides data preprocessing and model evaluation tools [24]. |

| COSMO-RS / RDKit | Used to generate quantum-chemical and molecular descriptors (e.g., σ-profiles, topological indices) that serve as input features (X) for the QSPR model [21] [23]. |

| Pandas & NumPy | Essential for data manipulation, handling, and cleaning of the dataset containing molecular structures and their corresponding melting points (y). |

| Hyperparameter Optimization | Tools like GridSearchCV or RandomizedSearchCV in scikit-learn are used to systematically find the best model parameters [23]. |

| SHAP (SHapley Additive exPlanations) | A game-theoretic approach to explain the output of any ML model, crucial for interpreting "black-box" models and understanding which molecular descriptors drive the predictions [23]. |

Workflow for QSPR-based Melting Point Prediction

The following diagram illustrates a standardized experimental workflow for developing a QSPR model for melting point prediction, from data collection to model deployment.

QSPR Modeling Workflow

Algorithm Selection Guide

This decision diagram provides a logical pathway for selecting the most appropriate algorithm based on your dataset characteristics and research goals.

Algorithm Selection Logic

Deep Learning Approaches for High-Accuracy Melting Point Prediction

Frequently Asked Questions (FAQs)

Q1: What is the main advantage of using deep learning over traditional QSPR methods for melting point prediction? Deep learning models, particularly graph neural networks (GNNs), can automatically learn relevant features from molecular structures without relying on pre-defined human-engineered descriptors. This allows them to capture complex structure-property relationships more effectively, often leading to higher predictive accuracy. Traditional descriptor-based methods may lose important structural information and are limited by human design choices [27].

Q2: My model achieves excellent performance on the training data but performs poorly on new ionic liquids. What might be the cause? This is a classic sign of overfitting. Solutions include:

- Increase your dataset size: The diversity and size of your training set are crucial. One high-performing model was trained on a dataset of 1,253 ionic liquids [5].

- Apply regularization techniques: Use methods like dropout, which was implemented with a rate of 0.8 in a PyTorch DNN tutorial to prevent the network from becoming overly reliant on any single neuron [28].

- Use a validation set: Always evaluate your model on a separate test set that is not used during training to get a true measure of its predictive performance [29].

Q3: How can I represent an ionic liquid for a deep learning model? There are two primary approaches:

- Molecular Descriptors: Use software like RDKit or Dragon to calculate numerical descriptors from the SMILES string of the molecule [5] [29]. This was used in a study achieving an R² score of 0.90 [5].

- Molecular Graphs: This is the more modern approach. The ionic liquid is represented as a graph where atoms are nodes and bonds are edges. GNNs then operate directly on this structure, which preserves topological information and has shown superior performance [27] [30].

Q4: What does the "domain of applicability" mean for my melting point prediction model? The domain of applicability defines the chemical space for which your model's predictions are reliable. If you try to predict the melting point of an ionic liquid with a structure very different from those in your training set, the prediction will have high uncertainty. Some advanced platforms, like DeepAutoQSAR, provide confidence estimates alongside predictions to help identify such cases [31].

Q5: How can I understand which parts of an ionic liquid's structure most influence the melting point prediction? Use interpretability methods built into GNNs. These techniques can compute the contribution of individual atoms to the final predicted melting point. For example, one study found that amino groups, S+, N+, and P+ increased melting points, while negatively charged halogen atoms, S-, and N- decreased them [27].

Troubleshooting Guides

Issue 1: Poor Predictive Performance (Low R², High Error)

Symptoms:

- Low R² value and high Root-Mean-Squared Error (RMSE) on the test set.

- Predictions are inaccurate for both training and test data.

Diagnosis and Solutions:

Check Data Quality and Quantity

Try a Different Model Architecture

- Cause: The chosen model is not suited for the complexity of the task.

- Solution: Experiment with different deep learning architectures. Research indicates that GNNs, specifically Graph Convolutional Networks (GCNs), can achieve state-of-the-art performance (RMSE ~37 K) for this task, outperforming descriptor-based models [27].

Tune Hyperparameters

- Cause: The model's hyperparameters (e.g., learning rate, hidden layer size) are not optimized.

- Solution: Systematically search for optimal hyperparameters. The following table lists key hyperparameters from a successful Deep Neural Network (DNN) implementation [28]:

Table: Key Hyperparameters for a DNN Model from a PyTorch Tutorial

| Hyperparameter | Description | Value Used |

|---|---|---|

| Hidden Size | Number of neurons in the hidden layer. | 1024 |

| Learning Rate | Step size for weight updates during training. | 0.001 |

| Dropout Rate | Fraction of neurons randomly turned off to prevent overfitting. | 0.8 |

| Batch Size | Number of samples processed before the model is updated. | 256 |

| Training Epochs | Number of complete passes through the training dataset. | 200 |

Issue 2: Model Training is Unstable or Slow

Symptoms:

- The training loss does not decrease or shows large fluctuations.

- The model takes a very long time to train.

Diagnosis and Solutions:

Normalize Input Data

- Cause: Molecular descriptors have different scales, which can destabilize training.

- Solution: Always standardize your input features. A common method is to scale them to have a mean of 0 and a standard deviation of 1, as implemented in a PyTorch DNN using `StandardScaler` [28].

Optimize the Optimizer

Utilize GPU Acceleration

- Cause: Training deep learning models on a CPU is computationally intensive and slow.

- Solution: Implement code that can leverage GPUs. Frameworks like PyTorch and TensorFlow make this relatively straightforward, as shown in a tutorial that checks for CUDA availability [28].

Issue 3: Inability to Interpret Model Predictions

Symptoms:

- The model is a "black box," and you cannot explain why it made a specific prediction.

Diagnosis and Solutions:

- Adopt an Interpretable Architecture

- Cause: Standard descriptor-based deep learning models are inherently difficult to interpret.

- Solution: Use Graph Neural Networks (GNNs) with built-in interpretability. These models can highlight which atoms in a molecule contributed most to the prediction, moving beyond the black box [27].

Experimental Protocols & Workflows

Detailed Methodology: Building a GNN Model for Melting Point Prediction

This protocol is adapted from recent research that demonstrated high accuracy using GNNs [27].

Data Collection:

- Source melting point data for ionic liquids from a reliable database such as ILThermo [5].

- Collect the SMILES strings or other structural identifiers for each ionic liquid cation and anion.

Data Preprocessing:

- Clean the Data: Remove duplicates and handle missing values.

- Split the Data: Randomly divide the dataset into training (80%), validation (10%), and test (10%) sets. The validation set is used for tuning hyperparameters, and the test set is for the final evaluation [29].
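
The 80/10/10 random split can be obtained by calling scikit-learn's `train_test_split` twice, as sketched below on a hypothetical dataset of 1,000 entries represented by their row indices. If several records share the same ionic liquid, the split by IL identity recommended earlier in this guide is the safer choice.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical dataset of 1,000 ionic liquids, referenced by row index.
indices = np.arange(1000)

# First cut: 80% training, 20% held out.
train_idx, holdout_idx = train_test_split(indices, test_size=0.2, random_state=0)

# Second cut: split the held-out part evenly into validation and test.
val_idx, test_idx = train_test_split(holdout_idx, test_size=0.5, random_state=0)

print(len(train_idx), len(val_idx), len(test_idx))
```

The index arrays can then be used to slice the graph list and the target vector consistently.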

Molecular Representation:

- Convert the SMILES strings of the ionic liquids into molecular graphs. In this representation:

- Nodes represent atoms.

- Edges represent chemical bonds.

- Use a library like RDKit to automate this conversion. Node features can include atom type, formal charge, hybridization, and aromaticity [27].


Model Training:

- Select a GNN architecture such as a Graph Convolutional Network (GCN) or Graph Attention Network (GAT).

- Feed the training molecular graphs into the GNN.

- The GNN learns to aggregate information from neighboring atoms to create a numerical representation (embedding) of the entire molecule.

- This embedding is passed through a set of fully connected layers to predict the melting point.

- Use a loss function like Mean Squared Error (MSE) to measure the difference between predictions and true values.
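
To make the aggregation step concrete, the sketch below implements one widely used graph-convolution update, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), in plain NumPy, followed by sum pooling and a single linear readout. It is a toy forward pass under stated assumptions: the 4-atom graph, the three node features, the random untrained weights, and the single readout layer are all illustrative, and a real model would be built and trained in a framework such as PyTorch or DeepChem.

```python
import numpy as np

def gcn_layer(adj, h, weight):
    """One graph-convolution step with self-loops and symmetric normalization."""
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    d_inv_sqrt = np.diag(a_hat.sum(axis=1) ** -0.5)
    a_norm = d_inv_sqrt @ a_hat @ d_inv_sqrt      # D^-1/2 (A + I) D^-1/2
    return np.maximum(a_norm @ h @ weight, 0.0)   # ReLU activation

# Toy graph: four atoms bonded in a chain 0-1-2-3 (adjacency matrix).
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)

# Hypothetical node features: [is_carbon, is_nitrogen, formal_charge].
h0 = np.array([[1, 0, 0],
               [0, 1, 1],
               [1, 0, 0],
               [1, 0, 0]], dtype=float)

rng = np.random.default_rng(0)
w1 = rng.normal(scale=0.5, size=(3, 8))   # untrained weights, illustration only
w2 = rng.normal(scale=0.5, size=(8, 8))
w_out = rng.normal(scale=0.5, size=8)

h1 = gcn_layer(adj, h0, w1)        # each atom sees its direct neighbours
h2 = gcn_layer(adj, h1, w2)        # each atom now sees two bonds away
embedding = h2.sum(axis=0)         # sum pooling gives a graph-level embedding
tm_pred = float(embedding @ w_out) # linear readout (stand-in for dense layers)

mse = (tm_pred - 350.0) ** 2       # squared error against a hypothetical Tm of 350 K
print(embedding.shape, tm_pred, mse)
```

Because pooling sums over atoms, the embedding does not depend on the order in which atoms are listed, which is one reason graph models suit molecular inputs.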

Model Interpretation:

- Use the GNN's interpretability method to compute atomic contributions.

- Visualize these contributions on the molecular structure, often with a color scale, to see which functional groups raise or lower the predicted melting point [27].

GNN-based Melting Point Prediction Workflow

Table: Comparison of Machine Learning Model Performance for Melting Point Prediction

| Model Type | Specific Model | Dataset Size | Performance (RMSE) | R² / Correlation Coefficient | Key Features |

|---|---|---|---|---|---|

| Deep Learning | Deep Learning (RNN/Recursive) | 1,253 ILs | ~32 K | R² = 0.90 [5] | Uses 137 key molecular descriptors from Dragon software. |

| Graph Neural Network | Graph Convolutional (GC) | 3,080 ILs | 37.06 K | R = 0.76 [27] | Operates directly on molecular graphs; offers atom-level interpretation. |

| Descriptor-Based ML | Random Forest | Not Specified | Not Specified | Not Specified | Used Extended-Connectivity Fingerprints (ECFPs); outperformed by GNNs in study [27]. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Software and Tools for Deep Learning-based QSPR

| Tool Name | Type | Primary Function | Relevance to Melting Point Prediction |
|---|---|---|---|
| ILThermo | Database | A comprehensive database of thermodynamic properties of ionic liquids. [5] | The primary source for curated, experimental melting point data for model training and validation. |
| RDKit | Cheminformatics | An open-source toolkit for Cheminformatics. [27] | Used to convert SMILES strings into molecular graphs or to calculate molecular descriptors (e.g., ECFPs). |
| Dragon | Descriptor Software | Commercial software for calculating a vast number of molecular descriptors. [5] | Can generate over 5,000 molecular descriptors for traditional QSPR models. |
| DeepChem | ML Library | An open-source library for deep learning in drug discovery and materials science. [27] | Provides implementations of various GNN architectures (GCN, GAT, MPNN) and other ML models. |
| TensorFlow/Keras & PyTorch | Deep Learning Frameworks | Open-source libraries for building and training deep learning models. [5] [28] | The foundational frameworks used to construct, train, and deploy custom deep neural networks and GNNs. |
| DeepAutoQSAR | Commercial Platform | An automated platform for building QSAR/QSPR models. [31] | Streamlines the entire workflow, from descriptor calculation and model training to providing confidence estimates and visualizations. |

Descriptor Selection and Feature Engineering Strategies

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary strategies for representing ionic liquid structures in QSPR models? The structure of an ionic liquid can be represented in several ways, each with implications for descriptor calculation and model interpretability. The main approaches are:

- Separate Ion Description (A|B): Molecular descriptors are calculated independently for the optimized geometry of the cation and the anion. This method can be computationally intensive as it requires geometry optimization for each ion, often using quantum-chemical methods like PM7, HF, or DFT [13].

- Ionic Pair Description ([A+B]): Descriptors are calculated for the entire ionic pair after its geometry has been optimized as a single molecule [13].

- Additive Scheme: Descriptors for the whole IL are calculated as a molar-fraction-weighted sum of the descriptors for the separately optimized ions [13].

- 2D Descriptors: Instead of relying on 3D structures that require geometry optimization, simpler 2D descriptors can be derived directly from the chemical structural formula. Benchmark studies have shown that models using 2D descriptors for ionic pairs can achieve reliability comparable to more complex 3D approaches, while being less time-consuming and easier to interpret [13].
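
As a small worked example of the additive scheme listed above, the sketch below combines per-ion descriptors as a molar-fraction-weighted sum. For a 1:1 salt both ion fractions are 0.5. The function name, the descriptor names, and the numeric values are invented for illustration and are not taken from [13].

```python
def additive_descriptors(cation, anion, n_cations=1, n_anions=1):
    """Combine per-ion descriptors as a molar-fraction-weighted sum."""
    if cation.keys() != anion.keys():
        raise ValueError("cation and anion must share the same descriptor names")
    total = n_cations + n_anions
    x_cation, x_anion = n_cations / total, n_anions / total
    return {name: x_cation * cation[name] + x_anion * anion[name] for name in cation}

# Made-up descriptor values, for illustration only.
cation = {"molecular_weight": 139.0, "rotatable_bonds": 4.0}
anion = {"molecular_weight": 87.0, "rotatable_bonds": 0.0}
il_descriptors = additive_descriptors(cation, anion)
```

Here the combined molecular weight is 0.5 × 139.0 + 0.5 × 87.0 = 113.0 and the combined rotatable-bond count is 2.0. For salts with other stoichiometries, the `n_cations` and `n_anions` arguments change the weights accordingly.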

FAQ 2: Which molecular descriptors are most critical for predicting the melting points of ionic liquids? Research indicates that melting points are influenced by specific molecular features captured by key descriptors. A QSPR study on 288 diverse ILs revealed that descriptors related to the following factors are particularly important [12]:

- Presence of a benzene ring structure

- Number of rotatable bonds

- Degree of branching

- Molecular symmetry

- Intramolecular electronic effects

Furthermore, a deep-learning model that started with 5272 molecular descriptors successfully predicted melting points using a refined set of 137 significant descriptors, achieving an R² of 0.90 [5]. Descriptor selection of this kind often relies on statistical filtering: a Pearson correlation matrix is used to remove descriptors that correlate only weakly with the target property (<0.20) and descriptors that are highly inter-correlated with one another (>0.90) [5].
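
The two-stage correlation filter can be sketched in NumPy as follows. The thresholds come from the text; everything else is an assumption of this example rather than the exact procedure of [5]: the use of absolute correlation values, the greedy rule that keeps the more target-relevant descriptor of an inter-correlated pair, and the toy data with hypothetical descriptor names.

```python
import numpy as np

def pearson_filter(X, y, names, min_target_corr=0.20, max_inter_corr=0.90):
    """Return the names of descriptors that pass both correlation filters."""
    n_desc = X.shape[1]
    target_corr = np.array([abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(n_desc)])
    # Filter 1: drop descriptors weakly correlated with the melting point.
    ranked = [j for j in np.argsort(-target_corr) if target_corr[j] >= min_target_corr]
    # Filter 2: walk from most to least relevant, skipping near-duplicates.
    kept = []
    for j in ranked:
        inter = [abs(np.corrcoef(X[:, j], X[:, k])[0, 1]) for k in kept]
        if all(r <= max_inter_corr for r in inter):
            kept.append(j)
    return [names[j] for j in kept]

# Deterministic toy data; descriptor names are hypothetical.
base = np.arange(1.0, 9.0)
ripple = np.array([1, -1, 1, -1, 1, -1, 1, -1], dtype=float)
X = np.column_stack([
    base,                                     # informative descriptor
    base + 0.01 * ripple,                     # near-duplicate of the first
    np.array([1, -1, -1, 1, 1, -1, -1, 1]),   # uncorrelated with the target
])
y = 2.0 * base + 0.1 * ripple                 # synthetic "melting points"
selected = pearson_filter(X, y, ["desc_a", "desc_a_copy", "desc_noise"])
```

On this toy matrix the uncorrelated column is removed by the first filter and only one of the two near-identical columns survives the second.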

FAQ 3: What are the common data sources for building QSPR models of ionic liquids? Researchers typically rely on curated experimental databases and specialized software for descriptor calculation, as summarized in the table below.

Table 1: Key Research Reagents and Resources for QSPR Modeling of Ionic Liquids

| Resource Name | Type/Function | Key Features / Application |
|---|---|---|
| ILThermo (v2.0) [5] | Experimental Database | NIST database containing ~120,000 data points for nearly 1800 pure IL systems, including melting points, thermodynamic, and transport properties. |
| Dragon Software [5] [13] | Descriptor Calculation | Commercial software used to calculate thousands of molecular descriptors (e.g., 5272 in one study) for QSPR modeling. |
| CODESSA Program [12] | Descriptor Calculation & Modeling | Software capable of calculating descriptors and performing heuristic method (HM) and projection pursuit regression (PPR) for model development. |
| SelinfDB [32] | Experimental Database | Database containing selectivity at infinite dilution values, useful for auxiliary modeling and validation. |
| OPSIN Library [5] | Structure Conversion | A library used to convert IUPAC names of ILs into SMILES representations for simplified processing. |

FAQ 4: What machine learning algorithms are most effective for melting point prediction? Both traditional and advanced machine learning algorithms have been applied successfully. Projection Pursuit Regression (PPR), a nonlinear technique, has been shown to outperform the linear Heuristic Method (HM), yielding a higher R² (0.810 vs. 0.712) for melting point prediction [12]. More recently, deep learning models have demonstrated high accuracy, with one model based on recursive neural networks (RNNs) achieving an R² of 0.90 and a root mean square error (RMSE) of approximately 32 K [5]. Random Forest (RF) and Categorical Boosting (CatBoost) have also performed well, although those results were reported for other IL properties such as viscosity rather than for melting point [16].

Troubleshooting Guides

Issue 1: Poor Model Performance and Low Predictive Accuracy

- Problem: Your QSPR model for melting point prediction shows a low R² value and high error on the validation set.

- Solution: Follow this systematic troubleshooting workflow to identify and address the root cause.

Check Data Quality and Preprocessing:

- Outlier Detection: Use statistical methods, such as the Leverage method, to identify and remove outliers from your dataset [16]. One study on viscosity prediction found that over 94% of their data was statistically valid after this step [16].

- Data Size: Ensure your training dataset is sufficiently large. For complex deep learning models, datasets with over 1200 IL data points have been used successfully [5].

- Data Normalization: Normalize all molecular descriptors before model training to ensure features are on a comparable scale [5].
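
For the outlier-detection step, a leverage calculation might look like the sketch below. It computes the diagonal of the hat matrix for a mean-centered descriptor matrix and flags samples above a warning value. The cutoff h* = 3(p + 1)/n is a commonly used convention that is assumed here, not a value stated in [16]; the toy data are invented.

```python
import numpy as np

def leverages(X):
    """Diagonal of the hat matrix H = X (X^T X)^-1 X^T for a descriptor matrix X."""
    xtx_inv = np.linalg.pinv(X.T @ X)
    return np.einsum("ij,jk,ik->i", X, xtx_inv, X)

def high_leverage_mask(X):
    """Flag samples whose leverage exceeds the warning value h* = 3(p + 1)/n."""
    n, p = X.shape
    centered = X - X.mean(axis=0)
    return leverages(centered) > 3.0 * (p + 1) / n

# Toy descriptor matrix: 19 points on a small grid plus one extreme sample.
X = np.array([[i % 5, i // 5] for i in range(19)] + [[50, 50]], dtype=float)
mask = high_leverage_mask(X)
X_clean = X[~mask]   # descriptor rows retained for model training
```

Only the extreme sample at (50, 50) is flagged in this example. Leverage looks at descriptor space only, so it is usually combined with a check on standardized residuals before a data point is discarded.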

Review Feature Engineering Strategy:

- Feature Selection: Apply correlation analysis. A robust approach is to exclude molecular descriptors with low correlation with the melting point (<0.20) and those with high inter-correlation (>0.90) to reduce dimensionality and prevent overfitting [5].

- Structure Representation: Experiment with different ionic liquid representations. Benchmark studies suggest that 2D descriptors calculated for the ionic pair can be sufficient and more efficient than 3D descriptors calculated for separate ions [13].

Validate Model Complexity:

- If using a linear model, try a nonlinear alternative. Projection Pursuit Regression (PPR) has been shown to provide better performance than linear Heuristic Methods for melting point prediction [12].

- For larger datasets, consider deep learning models with multiple hidden layers, which can capture complex, non-linear relationships between descriptors and melting points [5] [33].

- Always use cross-validation to tune model hyperparameters and avoid overfitting.

Assess Applicability Domain (AD): Ensure that the ILs you are trying to predict fall within the chemical space of the ILs used to train your model. Predictions for structures outside the model's AD are unreliable [32] [13].

Issue 2: Model Overfitting and Inability to Generalize

- Problem: The model performs excellently on the training data but poorly on unseen test data.

- Solution:

- Reduce Feature Dimensionality: A common cause of overfitting is having too many descriptors relative to the number of data points. Use feature selection techniques aggressively. For example, one study reduced an initial set of 5272 descriptors to a more robust set of 137 before model training [5].

- Implement Rigorous Validation: Use a hold-out test set (e.g., 20% of the data) that is not used during model training or feature selection [5]. Perform internal validation via leave-one-out or k-fold cross-validation to assess model stability [13] [12].

- Simplify the Model: If using a complex model like a deep neural network, try simplifying the architecture (e.g., fewer layers or neurons) or increasing the amount of training data.

- Apply Regularization Techniques: Use regularization methods (e.g., L1 or L2) available in many machine learning algorithms to penalize model complexity.
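
Two of the remedies above, L2 regularization and k-fold cross-validation, can be combined in a few lines. The sketch uses closed-form ridge regression as a stand-in model and picks the penalty strength by 5-fold validation RMSE. The synthetic data, the candidate `lambdas`, and the function names are assumptions for illustration.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form L2-regularized least squares (no intercept term)."""
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def kfold_rmse(X, y, lam, k=5, seed=0):
    """Average validation RMSE of ridge regression over k shuffled folds."""
    folds = np.array_split(np.random.default_rng(seed).permutation(len(y)), k)
    scores = []
    for i, val_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != i])
        w = ridge_fit(X[train_idx], y[train_idx], lam)
        scores.append(np.sqrt(np.mean((X[val_idx] @ w - y[val_idx]) ** 2)))
    return float(np.mean(scores))

# Synthetic data: 40 samples, 30 descriptors, only 3 of which matter.
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 30))
y = X[:, :3] @ np.array([3.0, -2.0, 1.0]) + 0.5 * rng.normal(size=40)

lambdas = [0.01, 1.0, 10.0, 100.0]
cv_scores = {lam: kfold_rmse(X, y, lam) for lam in lambdas}
best_lam = min(cv_scores, key=cv_scores.get)   # penalty with the lowest CV error
```

The same loop works for any model with a tunable complexity parameter. The key design point is that the validation fold never contributes to fitting the weights it is scored against.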

Issue 3: Inconsistent or Chemically Irrational Predictions

- Problem: The model generates predictions that contradict established chemical knowledge or show high variance for similar ILs.

- Solution:

- Descriptor Interpretation: Analyze the most important descriptors in your model. They should have a physically interpretable relationship with melting point. For example, descriptors related to symmetry, rotatable bonds, and aromaticity are known to be influential [12]. If the key descriptors lack chemical meaning, reconsider your feature set.

- Define Applicability Domain: Explicitly define the model's Applicability Domain (AD). A model trained only on imidazolium-based ILs may perform poorly when predicting phosphonium-based ILs. Techniques like leverage and distance-to-model can be used to define the AD [32] [13].

- Check for Data Bias: Ensure your training data covers a diverse range of cation and anion classes. A model built on a non-representative dataset will have inherent biases.
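
A distance-to-model check for the applicability domain can be sketched as below. A query IL counts as inside the domain when its nearest training neighbor in descriptor space is closer than a cutoff. Setting the cutoff to the mean plus `z` times the standard deviation of nearest-neighbor distances within the training set, with `z = 0.5`, is one convention among several and is an assumption here, as are the Euclidean metric and the toy grid data.

```python
import numpy as np

def pairwise_distances(A, B):
    """Euclidean distances between every row of A and every row of B."""
    return np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=2))

def ad_threshold(X_train, z=0.5):
    """Cutoff = mean + z * std of nearest-neighbor distances in the training set."""
    d = pairwise_distances(X_train, X_train)
    np.fill_diagonal(d, np.inf)          # ignore each sample's distance to itself
    nearest = d.min(axis=1)
    return float(nearest.mean() + z * nearest.std())

def inside_domain(X_train, X_query, z=0.5):
    """True where a query's nearest training neighbor lies within the cutoff."""
    return pairwise_distances(X_query, X_train).min(axis=1) <= ad_threshold(X_train, z)

# Toy training set on a 5 x 5 grid in a two-descriptor space (already scaled).
X_train = np.array([[i, j] for i in range(5) for j in range(5)], dtype=float)
X_query = np.array([[2.2, 2.1], [10.0, 10.0]])
in_domain = inside_domain(X_train, X_query)
```

The first query sits between grid points and is accepted; the second lies far outside the grid and is rejected. Descriptors should be scaled before distances are computed, otherwise the descriptor with the largest numeric range dominates.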

Experimental Protocol: Building a Melting Point Prediction Model

This protocol outlines the key steps for developing a QSPR model to predict the melting points of Ionic Liquids, based on established methodologies [5] [12].

Step 1: Data Collection and Curation

- Source experimental melting point data from a reliable database such as ILThermo [5].

- Collect the IUPAC names or structures of the ionic liquids.

- Convert the IUPAC names to SMILES representations using a tool like the OPSIN library [5].

- Clean the data by removing duplicates and obvious outliers.

Step 2: Molecular Descriptor Calculation

- Choose a structure representation strategy (e.g., separate ions vs. ionic pair).

- Use software like Dragon or CODESSA to calculate a comprehensive set of molecular descriptors for each IL [5] [12].

- If using 3D descriptors, perform geometry optimization of the ions or ionic pairs using an appropriate quantum-chemical method (e.g., PM7, DFT) [13].

Step 3: Feature Selection and Preprocessing

- Remove descriptors with missing values or low variance.

- Perform Pearson correlation analysis:

- Exclude descriptors with a low correlation (e.g., <0.20) with the melting point.

- From the remaining descriptors, exclude those with high inter-correlation (e.g., >0.90) to reduce multicollinearity [5].

- Normalize the selected descriptor set (e.g., mean centering and scaling to unit variance).
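
The normalization step can be sketched as a pair of small functions. The example assumes the scaling parameters are estimated on the training portion only and then reused for any later data, so that held-out samples do not influence preprocessing. The function names and numbers are illustrative.

```python
import numpy as np

def fit_scaler(X_train):
    """Estimate per-descriptor mean and standard deviation from training data."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0          # constant descriptors are left unscaled
    return mean, std

def apply_scaler(X, mean, std):
    """Mean-center and scale to unit variance using previously fitted parameters."""
    return (X - mean) / std

# Two made-up descriptors on very different numeric scales.
X_train = np.array([[1.0, 100.0], [2.0, 200.0], [3.0, 300.0]])
X_test = np.array([[4.0, 400.0]])

mean, std = fit_scaler(X_train)
train_scaled = apply_scaler(X_train, mean, std)
test_scaled = apply_scaler(X_test, mean, std)   # reuses the training statistics
```

After scaling, both training columns have zero mean and unit variance, and the two descriptors of the test sample map to the same value even though their raw magnitudes differ by a factor of 100.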

Step 4: Model Development and Training

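- Split the dataset randomly into a training set (e.g., 80%) and a test set (e.g., 20%) [5]. To keep the test set truly unseen, derive the correlation filters and normalization parameters from Step 3 using the training portion only.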

- Select a machine learning algorithm. For a baseline, start with a linear model, then progress to nonlinear methods like Projection Pursuit Regression (PPR) or a Deep Learning model [5] [12].

- For deep learning, a typical architecture may include an input layer, several hidden layers (e.g., 512, 512, 256 neurons), and an output layer with one neuron [5].

- Train the model on the training set. Use a stochastic gradient-based optimizer such as Adam [5], and monitor the loss on held-out data to decide when enough epochs have been run.
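
To give a feel for the size of the architecture mentioned above, the following NumPy sketch builds a fully connected network with hidden layers of 512, 512, and 256 neurons and runs one forward pass. The input width of 137 (one per selected descriptor), the ReLU activation, and the He initialization are assumptions of this example. Training with Adam is not shown and would normally be done in a framework such as Keras or PyTorch.

```python
import numpy as np

def init_mlp(layer_sizes, seed=0):
    """Create (weights, bias) pairs for consecutive layer sizes (He initialization)."""
    rng = np.random.default_rng(seed)
    return [
        (rng.normal(scale=np.sqrt(2.0 / n_in), size=(n_in, n_out)), np.zeros(n_out))
        for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
    ]

def mlp_forward(params, X):
    """ReLU on hidden layers, linear output neuron for regression."""
    h = X
    for W, b in params[:-1]:
        h = np.maximum(h @ W + b, 0.0)
    W_out, b_out = params[-1]
    return h @ W_out + b_out

# 137 inputs is an assumption; the hidden sizes follow the text.
params = init_mlp([137, 512, 512, 256, 1])
n_params = sum(W.size + b.size for W, b in params)
batch = np.random.default_rng(1).normal(size=(4, 137))  # four fake, pre-scaled samples
predictions = mlp_forward(params, batch)                # shape (4, 1), untrained
```

Under these assumptions the network has 464,897 trainable parameters. With datasets on the order of a thousand ILs, that ratio is one reason feature selection, regularization, and a held-out test set matter.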

Step 5: Model Validation and Interpretation

- Use the held-out test set to evaluate the model's predictive performance. Report key metrics such as R² and RMSE [5] [12].

- Perform internal validation via cross-validation on the training set [13].

- Interpret the model by analyzing the importance of the selected descriptors in the context of ionic liquid chemistry [12].
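
The two metrics named in Step 5 are straightforward to compute by hand. The sketch below uses the usual definitions, RMSE as the square root of the mean squared residual and R² as one minus the ratio of residual to total sum of squares. The melting points in the example are invented.

```python
import math

def rmse(y_true, y_pred):
    """Root mean square error, in the same units as the target (here K)."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Made-up test-set melting points (K) and model predictions.
y_true = [300.0, 320.0, 340.0, 360.0]
y_pred = [310.0, 310.0, 350.0, 350.0]

test_rmse = rmse(y_true, y_pred)     # every residual is 10 K, so RMSE = 10.0
test_r2 = r_squared(y_true, y_pred)  # 1 - 400 / 2000 = 0.8
```

Reporting both values is useful because RMSE states the typical error in kelvin, while R² indicates how much of the variance in the test set the model explains.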

Frequently Asked Questions (FAQs)

FAQ 1: What are the key advantages of using machine learning (ML) over traditional group contribution methods for predicting ionic liquid melting points?