Optimizing Drug Development: A Project Manager's Guide to Design of Experiments (DoE)

Abstract

This article provides a comprehensive framework for applying Design of Experiments (DoE) in pharmaceutical development and manufacturing. Tailored for researchers, scientists, and project managers, it bridges statistical methodology with project management principles. The content covers foundational DoE concepts, advanced application methodologies, troubleshooting for complex systems, and validation techniques, with a special focus on optimizing drug delivery systems and manufacturing processes. Readers will learn to implement DoE for improved product quality, regulatory compliance, and accelerated development timelines.

What is Design of Experiments? Core Principles for Pharmaceutical Professionals

Design of Experiments (DoE) is a systematic, statistically based method for simultaneously investigating the effects of multiple factors and their interactions on a process or product outcome. This approach represents a fundamental shift from the traditional One-Factor-at-a-Time (OFAT) methodology, which varies only one parameter while holding all others constant. OFAT has significant limitations: it cannot detect interactions between factors, it typically needs more experimental runs to deliver the same information, and it often leads to suboptimal process understanding and performance.

Within pharmaceutical development and Process Mass Intensity (PMI) optimization research, DoE provides a structured framework for efficiently mapping the relationship between critical process parameters (CPPs) and critical quality attributes (CQAs). This enables researchers to identify robust operating conditions that minimize environmental impact while maintaining product quality, directly supporting the development of 'greener-by-design' synthetic routes for Active Pharmaceutical Ingredients (APIs) [1].

Comparative Analysis: DoE Versus OFAT

The fundamental difference between DoE and OFAT lies in their experimental efficiency and ability to detect interactions. The table below summarizes the key distinctions:

Table 1: Systematic Comparison of DoE and OFAT Methodologies

| Characteristic | One-Factor-at-a-Time (OFAT) | Design of Experiments (DoE) |

|---|---|---|

| Experimental Approach | Sequential variation of single factors | Simultaneous variation of multiple factors |

| Detection of Interactions | Fails to detect factor interactions | Systematically identifies and quantifies interactions |

| Number of Experiments | Often excessive and inefficient | Highly efficient; minimizes experimental runs |

| Statistical Robustness | Low; limited predictive power | High; enables predictive modeling and optimization |

| Resource Utilization | High resource consumption | Optimized resource allocation |

| Process Understanding | Superficial understanding of main effects | Deep, mechanistic understanding of factor relationships |

A compelling case study from pharmaceutical development demonstrates these differences quantitatively. For a specific chemical transformation, traditional OFAT optimization required approximately 500 experiments to achieve 70% yield and 91% enantiomeric excess (ee). In contrast, a Bayesian optimization approach (a model-based DoE technique) achieved a superior outcome of 80% yield and 91% ee in only 24 experiments [1]. This represents a 95% reduction in experimental workload while simultaneously improving the key performance metric.

The ability of DoE to detect interactions is its most significant advantage. In a project coordination example, analysis revealed that adding both an engineer and a technician together reduced project completion time more than the sum of their individual effects, a synergistic interaction completely undetectable by OFAT [2].

DoE Experimental Protocols and Workflows

Protocol 1: Pre-Experimental Planning and Factor Selection

Objective: To systematically define the experimental scope, select factors and responses, and choose an appropriate experimental design.

Step 1: Define the Problem and Objectives

- Clearly articulate the primary goal (e.g., "Optimize reaction yield and enantioselectivity while minimizing PMI").

- Determine the type of study: screening (identifying vital few factors) or optimization (finding the best factor levels).

Step 2: Identify and Classify Factors

- Brainstorm all potential controllable factors (e.g., temperature, catalyst loading, solvent volume, reagent stoichiometry).

- Classify factors as continuous (e.g., temperature) or categorical (e.g., solvent type).

- Select a manageable number of factors (typically 3-5) for initial studies based on prior knowledge and risk assessment.

Step 3: Select Measurable Responses

- Define primary and secondary responses that align with objectives.

- Ensure responses are quantifiable, reproducible, and relevant to process performance (e.g., yield, purity, PMI, cost).

Step 4: Choose the Experimental Design

- For screening: Use Fractional Factorial or Plackett-Burman designs to efficiently identify significant factors.

- For optimization: Use Response Surface Methodology (RSM) designs like Central Composite Design (CCD) or Box-Behnken.

- For formulation: Use Mixture Designs.

Step 5: Determine the Experimental Range for Factors

- Define the low (-) and high (+) levels for each factor based on scientific judgment and preliminary data.

- Ensure the range is wide enough to elicit a measurable response but not so wide as to cause process failure.

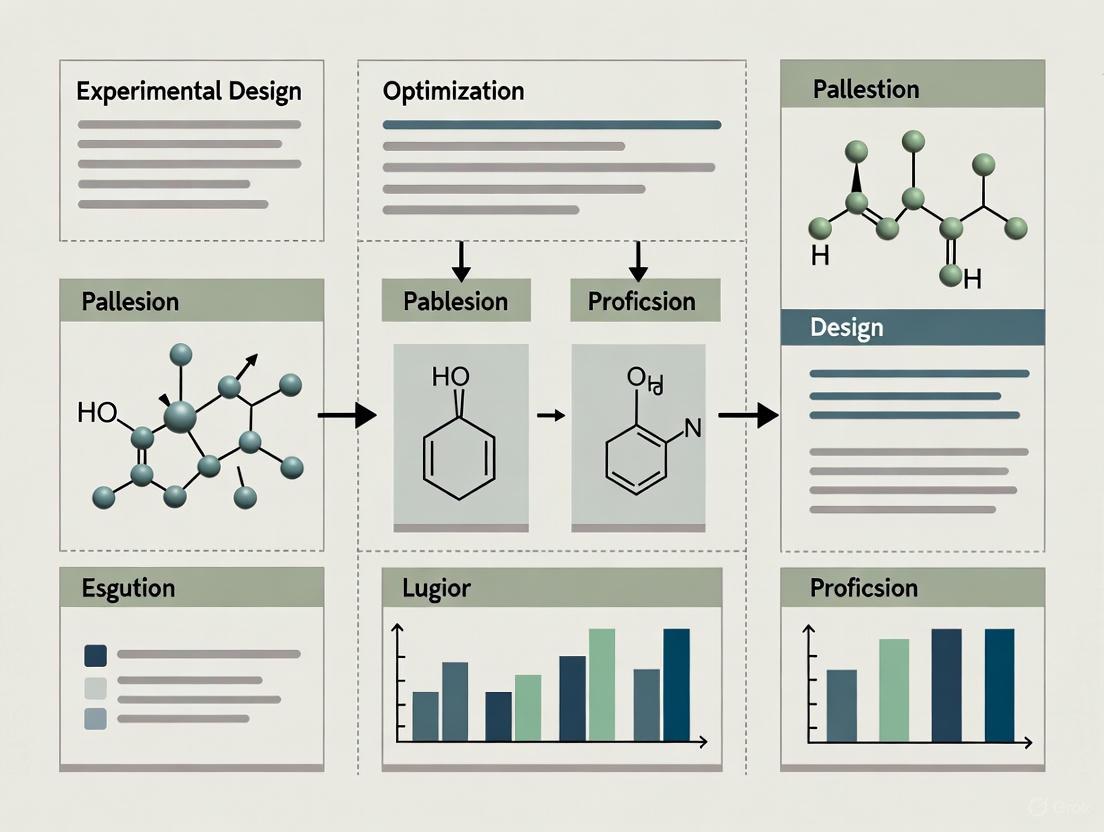

The following workflow diagram illustrates the logical sequence for planning and executing a DoE study:

Protocol 2: Standard Workflow for a Screening DoE

Objective: To efficiently identify the most influential factors affecting a process using a Fractional Factorial design.

Materials:

- Reaction substrates and reagents

- Appropriate laboratory equipment (reactors, analyzers, etc.)

- Statistical software (e.g., JMP, Design-Expert, R, Python with EDBO+)

Procedure:

- Design Generation: Using statistical software, generate a 2-level (high/low) Fractional Factorial design for the selected factors. A design for 4 factors in 8 runs is common.

- Randomization: Randomize the order of all experimental runs. This is critical to avoid confounding the effects of factors with unknown time-dependent variables.

- Experimental Execution: Conduct experiments precisely according to the randomized run order and specified factor levels.

- Data Collection: For each run, accurately measure and record all predefined responses (e.g., yield, conversion, impurity profile).

- Data Analysis:

  - Input the response data into the software.

  - Perform ANOVA (Analysis of Variance) to identify statistically significant factors (typically with a p-value < 0.05).

  - Examine Pareto charts and half-normal plots to visualize effect magnitudes.

  - Interpret main effects and interaction plots to understand factor influence.

- Model Validation: Confirm the model's predictive capability by running 1-2 confirmation experiments at conditions not in the original design but within the experimental space.
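The design-generation and randomization steps of this procedure can be sketched in a few lines of standard-library Python. This is only an illustration, not the output of any of the packages listed above: the helper name `half_fraction_2_4`, the factor labels A to D, the generator D = A·B·C, and the seed value are all assumptions made for the example.

```python
import itertools
import random

def half_fraction_2_4():
    """Build the 8 runs of a 2^(4-1) design using the assumed generator D = A*B*C."""
    runs = []
    for a, b, c in itertools.product((-1, 1), repeat=3):
        # A, B, C form a full 2^3 factorial; D is derived from their product.
        runs.append({"A": a, "B": b, "C": c, "D": a * b * c})
    return runs

design = half_fraction_2_4()

# Randomize the execution order; a fixed (arbitrary) seed keeps the plan reproducible.
rng = random.Random(2024)
run_order = list(range(len(design)))
rng.shuffle(run_order)

for position, idx in enumerate(run_order, start=1):
    print(f"execute #{position}: design row {idx} -> {design[idx]}")
```

With this generator the design has resolution IV: main effects are not aliased with two-factor interactions, but two-factor interactions are aliased with each other in pairs (for example AB with CD), which is worth keeping in mind when interpreting the interaction plots.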

Protocol 3: Advanced Optimization Using Bayesian DoE

Objective: To rapidly converge on an optimal set of process conditions with a minimal number of experiments, particularly useful for complex, non-linear systems.

Materials:

- Standard laboratory synthesis equipment.

- Access to a Bayesian optimization platform (e.g., EDBO/EDBO+, an open-source experimental design package).

Procedure:

- Define the Search Space: Specify the factors to be optimized and their feasible ranges (e.g., temperature: 20-100°C, catalyst mol%: 1-5%).

- Define the Objective Function: Formulate a single objective to maximize or minimize, which can be a combination of multiple responses (e.g., "Maximize: 0.7 × yield + 0.3 × ee").

- Initial Design: Start with a small space-filling initial design (e.g., 5-10 points) to build a preliminary model.

- Iterative Optimization Loop:

  - Model Training: The algorithm uses the accumulated data to train a Gaussian Process (GP) model that predicts the objective function across the entire search space.

  - Acquisition Function Maximization: The algorithm calculates an "acquisition function" (e.g., Expected Improvement) to identify the single most informative next experiment by balancing exploration (probing uncertain regions) and exploitation (probing regions predicted to be high-performing).

  - Experiment Execution: Perform the recommended experiment and record the result.

  - Data Update: Add the new data point to the existing dataset.

- Convergence: Repeat the loop until performance plateaus or a predefined performance target is met, typically requiring far fewer runs than traditional DoE [1].

- Final Validation: Conduct 2-3 replicate runs at the predicted optimum to confirm performance and estimate robustness.
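To make the loop above concrete, here is a minimal, dependency-free sketch of Bayesian optimization over a single factor (temperature, 20-100°C). It does not use or imitate the EDBO/EDBO+ API. The function `run_experiment` is a hypothetical stand-in for a laboratory run with made-up response curves, and the kernel length scale, jitter, grid spacing, and iteration count are arbitrary choices for illustration.

```python
import math

def run_experiment(temp_c):
    """Hypothetical lab run: returns the scalarized objective 0.7*yield + 0.3*ee."""
    yield_pct = 80.0 - 0.02 * (temp_c - 70.0) ** 2   # invented response curve
    ee_pct = 91.0 - 0.01 * (temp_c - 60.0) ** 2      # invented response curve
    return 0.7 * yield_pct + 0.3 * ee_pct

def rbf(a, b, length=0.2):
    """Squared-exponential kernel with unit variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(m):
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(max(s, 1e-12)) if i == j else s / low[j][j]
    return low

def forward_sub(low, b):
    """Solve L z = b for lower-triangular L."""
    z = []
    for i, row in enumerate(low):
        z.append((b[i] - sum(row[k] * z[k] for k in range(i))) / row[i])
    return z

def backward_sub(low, z):
    """Solve L^T x = z for lower-triangular L."""
    n = len(z)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (z[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x

def gp_predict(xs, ys, x_new, jitter=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    gram = [[rbf(a, b) + (jitter if i == j else 0.0)
             for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    low = cholesky(gram)
    alpha = backward_sub(low, forward_sub(low, ys))
    k_star = [rbf(x_new, a) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = forward_sub(low, k_star)
    var = max(1.0 - sum(vi * vi for vi in v), 1e-12)
    return mean, math.sqrt(var)

def expected_improvement(mean, std, best):
    """Expected Improvement acquisition function for maximization."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

candidates = list(range(20, 101, 5))                      # search space on a 5 °C grid
history = [(t, run_experiment(t)) for t in (20, 40, 60, 80, 100)]  # initial design

for _ in range(5):                                        # iterative optimization loop
    temps = [t for t, _ in history]
    values = [v for _, v in history]
    mu = sum(values) / len(values)
    sd = math.sqrt(sum((v - mu) ** 2 for v in values) / len(values)) or 1.0
    xs = [(t - 20) / 80 for t in temps]                   # scale inputs to [0, 1]
    ys = [(v - mu) / sd for v in values]                  # standardize the objective
    best = max(ys)
    untested = [t for t in candidates if t not in temps]
    next_t = max(untested, key=lambda t: expected_improvement(
        *gp_predict(xs, ys, (t - 20) / 80), best))
    history.append((next_t, run_experiment(next_t)))      # execute and update data

best_temp, best_value = max(history, key=lambda pair: pair[1])
print(best_temp, round(best_value, 2))
```

A real study would optimize several continuous and categorical factors at once and would normally rely on a maintained package rather than a hand-written Gaussian Process; the sketch only shows how model training, acquisition, execution, and data update fit together.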

The conceptual flow of this closed-loop optimization is shown below:

Data Presentation and Analysis

The quantitative outcomes from a DoE study are best analyzed and presented using statistical tools and summary tables. The following table compiles data from a published case study and a project management example to illustrate the typical outputs of a DoE analysis.

Table 2: Quantitative Results from DoE Case Studies in API Synthesis and Project Management

| Case Study Description | Optimization Method | Number of Experiments | Key Results | Quantified Improvement |

|---|---|---|---|---|

| Chemical Transformation for API [1] | One-Factor-at-a-Time (OFAT) | ~500 | 70% Yield, 91% ee | Baseline |

| | Bayesian Optimization (DoE) | 24 | 80% Yield, 91% ee | +10 percentage points yield, >95% fewer experiments |

| Project Coordination [2] | Baseline (2 Engineers, 6 Techs) | 1 (Simulation) | 128 Days, $158K Cost | Baseline |

| | DoE Optimized (3 Engineers, 7 Techs) | 1 (Simulation) | 74 Days, $110K Cost | -54 Days, -$48K Cost |

Analysis of variance (ANOVA) is the cornerstone of interpreting a classical DoE. It decomposes the total variability in the response data into attributable components for each factor and their interactions. A significant F-value and a low p-value (typically < 0.05) indicate that the factor has a statistically significant effect on the response. The resulting regression model allows for the prediction of responses and the creation of contour plots or response surface plots to visualize the relationship between factors and identify optimal regions.
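The variance decomposition described here can be illustrated for the simplest case, a replicated two-factor, two-level design. The sketch below is a hand calculation under stated assumptions, not the output of any statistics package: the duplicate yields are hypothetical numbers invented for the example, the factor names A and B are placeholders, and the critical value 7.71 is the tabulated F(0.05; 1, 4) used in place of an exact p-value.

```python
# Hypothetical duplicate yields (%) for a 2x2 design; keys are coded (A, B) levels.
data = {
    (-1, -1): [64.0, 66.0],
    (+1, -1): [74.0, 76.0],
    (-1, +1): [84.0, 86.0],
    (+1, +1): [91.0, 93.0],
}

n_total = sum(len(ys) for ys in data.values())   # 8 observations in total

def effect(sign):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [y for lv, ys in data.items() for y in ys if sign(lv) > 0]
    low = [y for lv, ys in data.items() for y in ys if sign(lv) < 0]
    return sum(high) / len(high) - sum(low) / len(low)

contrasts = {
    "A": lambda lv: lv[0],
    "B": lambda lv: lv[1],
    "A:B": lambda lv: lv[0] * lv[1],
}

# Pure-error sum of squares: scatter of the replicates around their cell means.
ss_error = sum((y - sum(ys) / len(ys)) ** 2 for ys in data.values() for y in ys)
df_error = n_total - len(data)                   # 8 observations - 4 cells = 4
ms_error = ss_error / df_error

F_CRIT = 7.71  # tabulated F(0.05; 1, 4); each effect has 1 degree of freedom

anova = {}
for name, sign in contrasts.items():
    ss = n_total * effect(sign) ** 2 / 4         # valid for balanced two-level designs
    f_value = ss / ms_error
    anova[name] = (ss, f_value, f_value > F_CRIT)
    print(f"{name:>3}: SS={ss:.1f}  F={f_value:.2f}  significant={f_value > F_CRIT}")
```

With these invented numbers both main effects exceed the critical value while the interaction does not, which is the kind of conclusion an ANOVA table from statistical software would report together with exact p-values.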

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of DoE, particularly in pharmaceutical research, relies on both physical reagents and computational tools. The following table details key components of the modern researcher's toolkit for PMI optimization.

Table 3: Essential Research Reagent Solutions for DoE and PMI Optimization

| Tool / Reagent Category | Specific Examples | Function & Application Note |

|---|---|---|

| Statistical Software | JMP, Design-Expert, R, Python (with scikit-learn, EDBO+) | Generates experimental designs, performs ANOVA, builds predictive models, and visualizes response surfaces. Critical for data analysis. |

| Bayesian Optimization Platforms | EDBO / EDBO+ [1] | Open-source platforms that automate the design-selection loop for highly efficient experiment selection, minimizing total experimental burden. |

| Process Mass Intensity (PMI) Prediction Tools | PMI Prediction App [1] | Utilizes predictive analytics and historical data to forecast the PMI of proposed synthetic routes prior to laboratory work, enabling greener-by-design route selection. |

| Catalysts & Ligands | Organocatalysts, Metal complexes (e.g., Pd, Ru), Chiral ligands | Key factors for optimizing yield and stereoselectivity in API syntheses. Their type and loading are common variables in DoE studies. |

| Solvent Systems | Green solvents (e.g., 2-MeTHF, Cyrene), solvent mixtures | A primary lever for reducing PMI and improving environmental footprint. Solvent choice and volume are critical factors for "greener" processes. |

Visualization and Accessibility in Data Representation

Effective communication of DoE results requires clear, accessible visualizations. Adherence to established design principles ensures that charts and diagrams are interpretable by all audience members, including those with color vision deficiencies.

- Color Scale Selection: Use sequential scales (single hue, varying lightness) for ordered data progressing from low to high values. Use diverging scales (two contrasting hues) to highlight deviation from a central midpoint. Use categorical/qualitative scales (distinct hues) for nominal data, limiting the palette to 10 or fewer colors [3] [4].

- Accessibility and Contrast: Ensure a high contrast ratio between text/foreground elements and their background. The Web Content Accessibility Guidelines (WCAG) recommend a contrast ratio of at least 4.5:1 for standard text and 3:1 for large text [5]. Avoid problematic color pairs like red-green, which are difficult to distinguish for people with the most common forms of color vision deficiency. Use online tools to simulate how visualizations appear to color-blind users [3] [4].

- Strategic Color Use: Employ color purposefully to highlight key data patterns or categories, avoiding decoration. Using neutral colors for most data and a bright, contrasting color to emphasize critical points enhances comprehension and focuses attention [4].
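The contrast thresholds mentioned above can be checked programmatically before a chart is published. The sketch below follows the relative-luminance and contrast-ratio definitions given in WCAG 2.x; the function names and the example colors are illustrative choices, not part of any cited tool.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """Contrast ratio between two colors, always >= 1."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def passes_wcag_aa(foreground, background, large_text=False):
    """Check the 4.5:1 (standard text) or 3:1 (large text) threshold."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold

# Black text on a white background is the maximum possible contrast (21:1).
print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 1))
# Pure red on pure green fails even the large-text threshold.
print(passes_wcag_aa((255, 0, 0), (0, 255, 0), large_text=True))
```

A check like this covers luminance contrast only; it does not replace a color-blindness simulation, because two colors can differ in luminance and still be confusable in hue.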

Design of Experiments (DoE) is a branch of applied statistics that deals with the planning, conducting, analyzing, and interpreting of controlled tests to evaluate the factors that control the value of a parameter or group of parameters [6]. This structured approach allows researchers to efficiently investigate the relationship between input factors and output responses, moving beyond the limitations of traditional one-factor-at-a-time (OFAT) experimentation [7]. Within the context of pharmaceutical development and process optimization, understanding the core concepts of factors, responses, and their interactions is fundamental to developing robust, efficient, and predictable processes.

The alternative to DoE, often called the "COST" (Change One Separate factor at a Time) approach, involves varying just one factor while holding others constant [7]. While intuitive, this method is inefficient and carries a significant risk of misidentifying optimal conditions because it fails to explore the entire experimental space and cannot detect interactions between factors [7]. In contrast, a strategically planned and executed DoE allows for multiple input factors to be manipulated simultaneously, determining their individual and interactive effects on a desired output [6]. This provides a more accurate map of the process, leading to more reliable conclusions and better decision-making.

Defining the Core Components of an Experiment

Key Terminology and Definitions

The language of DoE provides a precise framework for designing and discussing experiments. The table below summarizes the fundamental terms and their definitions.

Table 1: Core Terminology in Design of Experiments

| Term | Definition | Example from Pharmaceutical Context |

|---|---|---|

| Response | The variable that measures the outcome of interest [8]. | Final drug product purity, percentage yield, or dissolution rate. |

| Factor | An independent variable that is a possible source of variation in the response variable [8]. | Reaction temperature, catalyst concentration, or mixing speed. |

| Factor Level | A specific value or setting of a factor used in the experiment [8]. | Temperature: 50°C and 70°C; Catalyst: 0.1 mol% and 0.5 mol%. |

| Treatment | A unique combination of factor levels [8]. | Running the reaction at 50°C with 0.5 mol% catalyst. |

| Interaction | When the effect of one factor on the response depends on the level of another factor [8]. | A higher temperature increases yield only when the catalyst concentration is also high. |

| Effect | The change in the mean response due to a change in the factor level [8]. | The average increase in purity when pressure is increased from 1 to 2 bar. |

| Experimental Run | A single instance where a treatment is applied and the response is measured [8]. | One execution of the reaction at a specific temperature and catalyst level. |

| Replication | Repetition of an entire experimental run, including the setup [6]. | Executing the same treatment combination (e.g., 50°C, 0.5 mol% catalyst) multiple times to estimate variability. |

The Critical Role of Interactions

An interaction occurs when the effect of one factor on the response is not independent of another factor [8]. In other words, the impact that changing Factor A has on the output depends on the current level of Factor B. This is a critical concept because studying one factor in isolation while ignoring others can lead to incorrect or incomplete conclusions [8].

For example, consider an experiment optimizing a chemical reaction. The effect of reaction temperature (Factor A) on product yield (Response) might be different depending on the catalyst type (Factor B). It is possible that increasing temperature boosts yield when using Catalyst 1, but has little to no effect—or even a negative effect—when using Catalyst 2. If the experimenter only studied temperature with Catalyst 1, they would draw a conclusion that does not hold true for the entire process. DoE is uniquely powerful in its ability to identify and quantify these interactions, which are often missed by the OFAT approach [6].

Experimental Protocols and Data Analysis

A Detailed Protocol for a Two-Factor Full Factorial DoE

This protocol outlines the steps for a basic yet powerful experimental design to investigate two factors and their potential interaction.

Objective: To determine the individual and interactive effects of Temperature and Catalyst Concentration on the Yield of an active pharmaceutical ingredient (API).

Step 1: Define Factors and Levels

- Factor A: Reaction Temperature

  - Low Level (-1): 50°C

  - High Level (+1): 70°C

- Factor B: Catalyst Concentration

  - Low Level (-1): 0.1 mol%

  - High Level (+1): 0.5 mol%

Step 2: Construct the Design Matrix

A full factorial design requires running all possible combinations of the factor levels. For 2 factors at 2 levels each, this results in 2² = 4 experimental runs [6]. The design matrix is coded for easier calculation of effects.

Table 2: Design Matrix for a 2² Full Factorial Experiment

| Run Order | Temperature (Coded) | Catalyst Conc. (Coded) | Temperature (Actual) | Catalyst Conc. (Actual) |

|---|---|---|---|---|

| 1 | -1 | -1 | 50°C | 0.1 mol% |

| 2 | +1 | -1 | 70°C | 0.1 mol% |

| 3 | -1 | +1 | 50°C | 0.5 mol% |

| 4 | +1 | +1 | 70°C | 0.5 mol% |
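The design matrix above can also be generated rather than typed by hand. The following sketch, using only the Python standard library, enumerates the coded runs in the same order as the table and converts coded levels to actual settings with actual = center + coded × half-range. The dictionary layout and the helper name `to_actual` are assumptions made for the illustration.

```python
import itertools

# Low and high settings taken from Step 1.
factors = {
    "Temperature (°C)": (50.0, 70.0),
    "Catalyst (mol%)": (0.1, 0.5),
}

def to_actual(coded, low, high):
    """Map a coded level (-1 or +1) to its actual setting."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

names = list(factors)
design = []
for levels in itertools.product((-1, 1), repeat=len(names)):
    # Reverse so the first factor alternates fastest, matching the table's run order.
    coded = dict(zip(names, reversed(levels)))
    actual = {name: to_actual(coded[name], *factors[name]) for name in names}
    design.append((coded, actual))

for run, (coded, actual) in enumerate(design, start=1):
    print(run, coded, actual)
```

The same loop extends to more factors by adding entries to `factors`, at the cost of 2^k runs for k factors.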

Step 3: Implement Replication and Randomization

- Replication: Perform each of the 4 runs in the design matrix twice (for a total of 8 experimental runs) to obtain an estimate of experimental error [8].

- Randomization: Randomize the order in which all 8 runs are executed. This helps average out the effects of uncontrolled, lurking variables (e.g., ambient humidity, reagent age) that could otherwise confound the results [8] [6].

Step 4: Execute Experiment and Record Data

Carry out the reaction according to the randomized run order, carefully controlling the factor levels as defined. Precisely measure and record the Yield (%) for each run.

Step 5: Analyze the Results and Calculate Effects

The main effect of a factor is the average change in response when that factor is moved from its low to high level, averaged across the levels of the other factors [6]. Using the example data below, the effects can be calculated.

Table 3: Example Experimental Data and Effect Calculations

| Run | Temp. | Catalyst | Yield (%) |

|---|---|---|---|

| 1 | -1 | -1 | 65 |

| 2 | +1 | -1 | 75 |

| 3 | -1 | +1 | 85 |

| 4 | +1 | +1 | 92 |

Effect calculations (in percentage points of yield):

- Main Effect of Temp: [ (75 + 92)/2 - (65 + 85)/2 ] = 8.5

- Main Effect of Catalyst: [ (85 + 92)/2 - (65 + 75)/2 ] = 18.5

- Interaction Effect (Temp*Catalyst): [ (65 + 92)/2 - (75 + 85)/2 ] = -1.5
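The effect calculations for Table 3 can be reproduced with a signed-sum (contrast) formula, which scales more easily to larger designs than writing each average by hand. The snippet below is a small sketch; the function name `effect` and the lambda-based contrasts are illustrative choices.

```python
# Coded levels and yields copied from Table 3: (temperature, catalyst, yield %).
runs = [(-1, -1, 65.0), (+1, -1, 75.0), (-1, +1, 85.0), (+1, +1, 92.0)]

def effect(sign):
    """Signed sum of the responses divided by half the number of runs."""
    return sum(sign(t, c) * y for t, c, y in runs) / (len(runs) / 2)

temp_effect = effect(lambda t, c: t)          # 8.5
catalyst_effect = effect(lambda t, c: c)      # 18.5
interaction = effect(lambda t, c: t * c)      # -1.5

# Cross-check: the interaction equals half the difference between the
# temperature effect at high catalyst (92 - 85) and at low catalyst (75 - 65).
temp_at_high_catalyst = 92.0 - 85.0
temp_at_low_catalyst = 75.0 - 65.0
half_difference = (temp_at_high_catalyst - temp_at_low_catalyst) / 2

print(temp_effect, catalyst_effect, interaction, half_difference)
```

The negative interaction means that raising the temperature helps slightly less at the high catalyst level (7 points) than at the low level (10 points).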

Visualizing the Experimental Workflow and Outcomes

The following diagram illustrates the logical workflow of a designed experiment, from planning to analysis.

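The relationship between factors and their interaction can be effectively visualized using an interaction plot, in which the response is plotted against one factor with a separate line for each level of the other factor; non-parallel lines indicate an interaction.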

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful execution of a DoE relies on precise control of factors and accurate measurement of responses. The following table details key materials and their functions in the context of a pharmaceutical development experiment.

Table 4: Key Research Reagent Solutions for Experimental Execution

| Item | Function / Rationale |

|---|---|

| High-Purity Chemical Reference Standards | Serves as the benchmark for quantifying API yield and purity via HPLC or GC analysis, ensuring accurate response measurement. |

| Characterized Catalyst Lots | A critical controllable factor whose concentration and type can significantly influence reaction rate, yield, and impurity profile. |

| Buffers and pH Adjustment Solutions | Allows for the precise control and maintenance of reaction pH, a continuous factor that often interacts with other variables like temperature. |

| Stable Isotope-Labeled Analytes | Used as internal standards in mass spectrometry to correct for sample preparation and instrument variability, improving response data quality. |

| Specification-Compliant Solvents | The reaction medium; different solvent lots or grades can be a source of uncontrolled noise if not properly standardized. |

Application in Project Management and Optimization

The principles of DoE extend beyond the laboratory and are powerfully applied in project management for optimization and decision-making. A case study involving project coordination demonstrates this utility [2]. The project was behind schedule, and management needed to decide whether to add an engineer, add a technician, or purchase a patented component to reduce completion time.

A designed experiment was set up with these three factors, each at two levels [2]:

- Engineer Staff Level: 2 (Low) vs. 3 (High)

- Technician Staff Level: 6 (Low) vs. 7 (High)

- Latching Device Source: Develop in-house (Low) vs. Purchase (High)

The analysis of the eight possible scenarios revealed significant main and interaction effects. While adding an engineer reduced project time by an average of 18.5 days and adding a technician reduced it by 42 days, the interaction between them was crucial [2]. The time-saving benefit of adding both was greater than the sum of their individual effects. Furthermore, purchasing the component unexpectedly increased completion time, a counter-intuitive finding that would have been missed with a COST approach. This structured analysis allowed management to identify the most effective and cost-efficient solution to the scheduling problem [2].

For researchers, scientists, and drug development professionals, Design of Experiments (DoE) is a statistical methodology for testing multiple input factors simultaneously to determine their individual effects and interactions on desired outputs [9]. When integrated with Project Management Institute principles (from this point on, PMI denotes the Institute rather than Process Mass Intensity), DoE becomes more than a technical tool: it is a strategic project asset that enables data-driven decision-making in quality planning and process optimization [10]. In the highly regulated pharmaceutical and biomedical research sectors, this integration provides a structured framework for managing complexity, reducing development time, and ensuring consistent, reproducible results while controlling costs [11].

Project managers serve as the critical link between statistical rigor and project execution, ensuring that experiments generate statistically valid data to guide development pathways. According to PMI's quality management framework, the ultimate responsibility for quality rests with the line organization, with the individual employee performing a given task bearing responsibility for conformance to specifications [12]. The project manager coordinates this shared responsibility through systematic experiment design, cross-functional coordination, and rigorous application of PMI principles to the experimental process.

Fundamental DoE Concepts for Project Management Professionals

Core Principles and Terminology

At its core, DoE involves the planning of an experiment to minimize the cost of data obtained and maximize the validity range of the results [12]. This requires clear treatment comparisons, controlled variables, and maximum freedom from systematic error. Key concepts include:

- Factors: Input variables that can be controlled and manipulated (e.g., temperature, pressure, staff levels, material sources) [2]

- Levels: Specific values or settings chosen for each factor (e.g., 100°C/200°C, 2 engineers/3 engineers) [9]

- Responses: Measured output variables that reflect experimental outcomes (e.g., product yield, completion time, cost) [11]

- Interactions: Situations where the effect of one factor depends on the level of another factor [10]

Unlike the inefficient "one-factor-at-a-time" (OFAT) approach, DoE allows for simultaneous testing of multiple factors, enabling project teams to detect interactions that OFAT methodologies would miss [9] [11]. This provides a more comprehensive understanding of complex systems with the same or fewer experimental runs.

DoE in the PMI Quality Management Framework

Within the PMI quality management structure, DoE serves as a vital tool during quality planning to determine the factors of a process and their impact on the overall deliverable [10]. The experimental statement, design, and analysis form the three essential components that align with PMI's progressive elaboration principle [12]. As a statistical decision-making technique, DoE supports the PMI's emphasis on data-driven approaches to quality management, enabling project teams to make informed choices between alternatives based on formal statistical concepts rather than intuition alone [12].

Table: DoE Alignment with PMI Quality Management Components

| PMI Quality Component | DoE Contribution | Project Management Benefit |

|---|---|---|

| Overall Quality Philosophy | Provides structured approach to quality planning | Engages all participants in ensuring project goals and requirements are met |

| Quality Assurance | Establishes managerial processes for experimental design | Determines organization, design, objectives and resources for quality activities |

| Quality Control | Offers technical processes for examining and analyzing results | Provides mechanisms to examine, analyze and report conformance with requirements |

DoE Application Protocols for Pharmaceutical and Biomedical Research

Structured Implementation Workflow

A successful DoE implementation in research settings follows a systematic workflow that aligns with project management phases:

Phase 1: Problem Definition and Objective Setting

- Protocol: Conduct stakeholder analysis to define experiment goals and measurable success metrics [11]

- PM Integration: Align experiment objectives with project scope statement and requirements [12]

- Output: Clearly defined problem statement with quantifiable targets

Phase 2: Factor Identification and Selection

- Protocol: Brainstorm potential factors with subject matter experts; review historical data [11]

- PM Integration: Document factors in project repository; distinguish between controllable and uncontrollable variables [10]

- Output: Comprehensive factor list with ranges and measurement methods

Phase 3: Experimental Design Selection

- Protocol: Choose appropriate design (full factorial, fractional factorial, RSM) based on factors and resources [11]

- PM Integration: Evaluate design choice against project constraints (time, budget) [2]

- Output: Experimental design matrix with defined runs and sequences

Phase 4: Experiment Execution and Data Collection

- Protocol: Implement randomization, blocking, and replication principles [9]

- PM Integration: Monitor experimental runs as project tasks; track adherence to protocol [13]

- Output: Raw data with documented experimental conditions

Phase 5: Data Analysis and Interpretation

- Protocol: Apply statistical methods (ANOVA, regression) to identify significant factors [11]

- PM Integration: Translate statistical findings into project decisions; update risk register [2]

- Output: Analysis of factor effects and interactions; model equations

Phase 6: Validation and Implementation

- Protocol: Conduct confirmation runs; verify model predictions [11]

- PM Integration: Implement changes; update project plans and quality standards [10]

- Output: Validated optimal settings; revised project parameters

Experimental Workflow Visualization

DoE Implementation Workflow: This diagram illustrates the systematic progression through DoE phases, highlighting the iterative nature of experimental design and validation.

Cross-Functional Team Responsibilities

Implementing DoE successfully requires a cross-functional team approach with clearly defined roles [11]:

Table: DoE Project Team Structure and Responsibilities

| Role | DoE Responsibilities | Project Management Activities |

|---|---|---|

| Project Manager | Coordinates experiment timeline and resources; facilitates communication | Integrates DoE results into project plan; manages stakeholder expectations |

| Research Scientist | Provides subject matter expertise; identifies factors and responses | Ensures technical alignment with project objectives; maintains research integrity |

| Statistician | Selects appropriate experimental design; performs data analysis | Validates statistical significance of results; ensures methodological rigor |

| Quality Specialist | Ensures compliance with regulatory requirements | Maintains quality documentation; verifies adherence to standards |

| Laboratory Technician | Executes experimental runs; collects data | Maintains experimental protocols; ensures data integrity |

Quantitative DoE Analysis for Project Decision-Making

Project Coordination Case Study

A practical application of DoE in project management can be illustrated through a project coordination case in which a computer analysis of the schedule indicates that the project will not be completed by the required date [2]. The project manager identifies three factors that could potentially reduce completion time: adding a product engineer, adding a technician, or purchasing rights to a patented device instead of developing a similar component independently.

Table: Experimental Factors and Levels for Project Coordination Example

| Factor | Low Level (−) | High Level (+) |

|---|---|---|

| Engineer Staff Level | 2 | 3 |

| Technician Staff Level | 6 | 7 |

| Obtain Latching Device | Develop | Purchase |

The experimental design involves creating eight different scenarios covering all possible combinations of the three factors at two levels each. The project completion times and costs are calculated for each combination:

Table: Experimental Design Matrix and Results for Project Coordination

| Condition | Eng. Staff | Tech. Staff | Source | Time (days) | Costs ($K) |

|---|---|---|---|---|---|

| 1 | 2 | 6 | develop | 128 | 158.2 |

| 2 | 3 | 6 | develop | 124 | 173.6 |

| 3 | 2 | 7 | develop | 98 | 129.2 |

| 4 | 3 | 7 | develop | 74 | 109.7 |

| 5 | 2 | 6 | purchase | 142 | 203.2 |

| 6 | 3 | 6 | purchase | 129 | 205.8 |

| 7 | 2 | 7 | purchase | 108 | 163.4 |

| 8 | 3 | 7 | purchase | 75 | 125.9 |

Statistical Analysis and Interpretation

The analysis proceeds by calculating the average response for each factor level:

- Engineer Staffing: Average with 2 engineers = 119 days; with 3 engineers = 100.5 days (18.5-day reduction)

- Technician Staffing: Average with 6 technicians = 130.8 days; with 7 technicians = 88.8 days (42-day reduction)

- Device Source: Average with development = 106 days; with purchase = 113.5 days (7.5-day increase)

These calculations reveal that while adding staff reduces project time, purchasing the device increases completion time regardless of staffing levels. Further analysis shows a clear interaction between engineer and technician staffing: with three engineers, adding a technician shortens the project by an average of 52 days, compared with 32 days with two engineers [2].
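The averages and the interaction described above can be reproduced directly from the design matrix. The following is a minimal sketch, not the analysis used in [2]; the helper name `mean_time` and the variable names are illustrative assumptions.

```python
# Runs from the design matrix above: (engineers, technicians, source, time in days)
runs = [
    (2, 6, "develop", 128),
    (3, 6, "develop", 124),
    (2, 7, "develop", 98),
    (3, 7, "develop", 74),
    (2, 6, "purchase", 142),
    (3, 6, "purchase", 129),
    (2, 7, "purchase", 108),
    (3, 7, "purchase", 75),
]

FACTOR_NAMES = ("eng", "tech", "source")


def mean_time(**fixed):
    """Average completion time over all runs matching the given factor levels."""
    picked = [
        run[3]
        for run in runs
        if all(run[FACTOR_NAMES.index(name)] == level for name, level in fixed.items())
    ]
    return sum(picked) / len(picked)


# Main effect = mean response at the high level minus mean at the low level
eng_effect = mean_time(eng=3) - mean_time(eng=2)
tech_effect = mean_time(tech=7) - mean_time(tech=6)
source_effect = mean_time(source="purchase") - mean_time(source="develop")

# Interaction: effect of the extra technician, evaluated at each engineer level
tech_effect_2_eng = mean_time(eng=2, tech=7) - mean_time(eng=2, tech=6)
tech_effect_3_eng = mean_time(eng=3, tech=7) - mean_time(eng=3, tech=6)

print("Main effects (days):", eng_effect, tech_effect, source_effect)
print("Technician effect with 2 vs 3 engineers:", tech_effect_2_eng, tech_effect_3_eng)
```

A negative effect means the high level shortens the project. Because the design is unreplicated, this sketch only estimates effect sizes; it does not test their statistical significance.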

Cost-Benefit Analysis Integration

The project manager can extend the analysis to include cost implications by expressing both costs and benefits in the same units. If each day removed from the project schedule is worth $1,000 to the company, the time reductions can be translated into increased revenue, enabling a comprehensive benefit-cost comparison [2]. In the example, the total project cost was originally $158.2K with two engineers and six technicians. Adding one engineer and one technician reduces this cost to $109.7K (condition 4 in the design matrix above), demonstrating that the cost of additional staff can be more than offset by the shorter project duration.
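One way to put cost and schedule on a common scale is to subtract the value of the days saved from each scenario's cost. The sketch below assumes the $1,000-per-day figure from the text and uses condition 1 as the baseline; the names `DAY_VALUE_K` and `net_cost` are hypothetical, and the calculation is an illustration rather than the method used in [2].

```python
# Value of one day saved, in $K (the $1,000/day assumption stated in the text)
DAY_VALUE_K = 1.0

# (engineers, technicians, source) -> (time in days, cost in $K), from the design matrix
results = {
    (2, 6, "develop"): (128, 158.2),
    (3, 6, "develop"): (124, 173.6),
    (2, 7, "develop"): (98, 129.2),
    (3, 7, "develop"): (74, 109.7),
    (2, 6, "purchase"): (142, 203.2),
    (3, 6, "purchase"): (129, 205.8),
    (2, 7, "purchase"): (108, 163.4),
    (3, 7, "purchase"): (75, 125.9),
}

baseline = (2, 6, "develop")
base_time, base_cost = results[baseline]


def net_cost(condition):
    """Project cost minus the monetary value of days saved relative to the baseline."""
    time, cost = results[condition]
    days_saved = base_time - time  # negative if the scenario is slower than baseline
    return cost - days_saved * DAY_VALUE_K


best = min(results, key=net_cost)
for condition in sorted(results, key=net_cost):
    print(condition, round(net_cost(condition), 1))
```

Sorting by net cost makes it easy to see which scenarios are dominated on both time and cost and which involve a genuine trade-off.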

DoE Implementation in Pharmaceutical and Biomedical Contexts

Clinical Trial Management Applications

In pharmaceutical development, clinical trials represent temporary endeavors with definite beginnings and ends, creating unique deliverables in the form of results that form the basis of evidence-based medicine [13]. The application of DoE in this context enables structured management of complex trial processes. For bioequivalence studies (BES), which have clearly defined objectives and typically complete within one year, DoE provides a framework for optimizing trial conduct, harmonizing activities, and lowering expenditures [13].

A seven-year effort implementing project management principles to manage 30 clinical studies demonstrated that BES include distinct phases with specific deliverables [13]:

Table: Clinical Trial Phases and Deliverables for DoE Application

| Phase | Key Activities | Deliverables |

|---|---|---|

| Preparation | Offer preparation, contract negotiation, study documentation | Approved study protocol, finalized contract |

| Regulatory Approval | Ethical committee application, regulatory application | Regulatory approvals, ethical committee endorsement |

| Clinical Execution | Subject recruitment, clinical procedures | Completed clinical data, monitored results |

| Analysis | Blood sample analysis, statistical analysis | Analytical results, statistical report |

| Reporting | Final report preparation, post-study activities | Final study report, completed documentation |

Discovery Project Management

In the early "discovery" phase of drug development, pharmaceutical companies face unique challenges in managing projects where outcomes are relatively unpredictable and the potential rewards distant [14]. Survey results from pharmaceutical industry professionals indicate that 86% have 5+ years of project management experience in the pharmaceutical industry, and 90% work in organizations with discovery groups of over 50 people [14]. This establishes a mature foundation for implementing structured DoE approaches.

The survey further revealed that 62% of companies have discovery projects planned by "discovery" people only, while 33% use an interdisciplinary team approach [14]. This suggests significant opportunity for greater integration of formal DoE methodologies through project management leadership. Interestingly, 100% of respondents believed that formal procedures are or could be useful for projects arising from discovery organizations [14].

Research Reagent Solutions and Essential Materials

The implementation of DoE in pharmaceutical and biomedical research requires specific tools and materials to ensure statistical validity and practical applicability.

Table: Essential Research Reagent Solutions for DoE Implementation

| Item Category | Specific Examples | Function in DoE Process |

|---|---|---|

| Statistical Software | Minitab, JMP, Design-Expert, MODDE | Streamlines experimental design, analysis, and visualization of results |

| DoE Templates | ASQ Design of Experiments Template (Excel) | Provides structured format for planning and recording experimental runs |

| Project Management Tools | Catalyst software, PERT/CPM systems | Enables computational analysis of factor effects on project timelines |

| Data Collection Systems | Electronic Lab Notebooks (ELNs), Laboratory Information Management Systems (LIMS) | Ensures robust data management and protocol adherence |

| Quality Documentation | Standard Operating Procedures (SOPs), Good Laboratory Practice (GLP) guidelines | Maintains regulatory compliance and documentation standards |

Factor Relationships and Interaction Effects

Understanding the relationships between factors and their interaction effects is crucial for effective DoE implementation in project management.

Figure: Factor Interaction Relationships. Controllable and uncontrollable factors interact within an experimental design to influence project outcomes, moderated by quality standards and project objectives.

Best Practices and Protocol Implementation

Success Factors for DoE Implementation

Successful implementation of DoE in project management contexts requires adherence to several best practices:

- Foster Cross-Functional Collaboration: Involve diverse team members from R&D, engineering, quality control, and production to ensure various perspectives are considered [11]

- Establish Clear Objectives: Define precise, measurable goals before starting experiments to guide design and factor selection [11]

- Gain Deep Process Understanding: Thoroughly comprehend the underlying process, including all potential input variables and their ranges [11]

- Implement Careful Planning and Control: Ensure factors not being tested are kept constant to minimize confounding variables [11]

- Validate and Verify Results: Conduct confirmation runs to ensure predicted improvements are reproducible in real-world environments [11]

Challenges and Mitigation Strategies

Implementing DoE in industrial and research settings presents several challenges that project managers must anticipate and address:

Table: Common DoE Implementation Challenges and Solutions

| Challenge | Impact on Projects | Mitigation Strategy |

|---|---|---|

| Complexity and High Number of Variables | Difficult to identify critical factors from dozens of possibilities | Use screening designs (e.g., Fractional Factorial) to identify critical factors before optimization [11] |

| Resource Constraints (Time, Cost, Materials) | Experimental approaches appear resource-intensive | Leverage statistical efficiency of DoE compared to OFAT; use specialized software to streamline process [11] |

| Lack of Statistical Expertise | Team members may not have extensive statistical backgrounds | Invest in training; engage statistical departments; use user-friendly DoE software [11] |

| Resistance to Change | Organization clings to traditional OFAT approaches | Demonstrate efficiency gains, cost savings, and ability to detect interactions [11] |

| Regulatory Compliance | Need to meet FDA, EMA, and other regulatory requirements | Implement DoE within Quality by Design (QbD) framework; maintain comprehensive documentation [15] |

For researchers, scientists, and drug development professionals, the integration of Design of Experiments with project management principles represents a methodological advancement that bridges statistical rigor with project execution excellence. By applying structured experimental frameworks to project challenges, teams can move beyond trial-and-error approaches to develop evidence-based strategies for process optimization and quality management.

The project manager's role in this integration is multifaceted: serving as communication bridge between statistical experts and domain specialists, ensuring rigorous experimental design and execution, translating results into project decisions, and maintaining alignment with overall project objectives and constraints. As the pharmaceutical and biomedical industries face increasing pressure to accelerate development timelines while maintaining quality standards, the strategic application of DoE within a project management framework offers a pathway to data-driven decision-making and continuous improvement.

When implemented systematically through the protocols and application notes outlined in this document, DoE becomes more than a quality tool—it transforms into a strategic asset for project optimization, risk reduction, and value delivery across the research and development lifecycle.

Design of Experiments (DoE) is a systematic, statistical approach that is revolutionizing drug development by optimizing products and processes through a deep understanding of the relationship between input variables and output responses. In the pharmaceutical industry, where trends are shifting toward more customized, high-potency formulations, DoE enables researchers to identify the most influential factors, determine their optimal levels, and establish robust, efficient processes while minimizing experimental runs [16]. This application note details the critical role of DoE in enhancing efficiency, ensuring quality, and providing significant cost benefits within modern drug development, particularly for complex products like Highly Potent Active Pharmaceutical Ingredients (HPAPIs).

DoE is a structured method for planning, conducting, analyzing, and interpreting controlled tests to evaluate the factors that control the value of a parameter or group of parameters. Unlike traditional one-factor-at-a-time (OFAT) approaches, which are time-consuming and often miss critical factor interactions, DoE allows for the simultaneous assessment of multiple factors and their interactions [2] [16]. This is crucial in drug development, where factors such as excipient selection, API concentration, and processing conditions interact in complex ways to determine the final product's Critical Quality Attributes (CQAs).

A well-designed experiment provides several key benefits:

- Clarity on Controllable Variables: The effects of factors under the researcher's control become unequivocally clear [2].

- Management of Uncontrolled Variables: The impact of nuisance variables can be minimized, leading to more robust conclusions [2].

- Selective and Efficient Data Collection: Conclusions are valid across a range of studied conditions, reducing the need for extensive testing [2].

- Objective-Oriented Analysis: The choice of experimental design directly controls the mathematical power and limitations of the results, ensuring they are tailored to specific development objectives [2].

Key Applications and Benefits in Drug Development

Enhancing Development Efficiency

The traditional trial-and-error method in formulation development is notoriously resource-intensive. DoE provides a smarter pathway by significantly reducing the number of experimental runs required to obtain actionable data [16]. For instance, in a project with three factors (e.g., Engineer Staff Level, Technician Staff Level, and Component Sourcing), a full factorial design with two levels per factor requires only 8 experimental runs to comprehensively understand the main effects and all possible interactions [2]. This structured approach is instrumental in accelerating the journey from early-phase clinical development to commercial manufacturing, ensuring a greater speed to market for sponsor organizations [16].

Ensuring Product Quality and Robustness

DoE is a cornerstone of the Quality by Design (QbD) framework encouraged by regulatory agencies like the FDA [16]. It enables a proactive approach to quality by:

- Identifying Critical Process Parameters (CPPs): Systematically determining which manufacturing parameters most significantly affect CQAs.

- Establishing a Design Space: Defining the multidimensional combination of input variables and process parameters that have been demonstrated to provide assurance of quality [16].

- Mitigating Risks: Pinpointing potential sources of variability in the formulation and process, allowing for the development of proactive mitigation strategies [16].

A real-world case study highlights the consequences of inadequate knowledge transfer. A sponsor developing a film-coated tablet with an HPAPI encountered issues with powder static and poor flowability. This critical information was not shared with their Contract Development and Manufacturing Organization (CDMO). The problem resurfaced during scale-up, causing tablet splitting issues and significant delays. Had the CDMO possessed the original DoE data and powder characterization reports, they could have addressed the flowability issue during developmental transfer, avoiding costly rework [16].

Reducing Development Costs

The efficiency gains from DoE directly translate into substantial cost savings. By minimizing failed experiments and reducing the volume of required materials, a critical consideration for expensive or scarce HPAPIs, DoE curtails direct experimental costs [16]. Furthermore, the establishment of a robust design space prevents costly failures during late-stage development and scale-up. DoE analysis can also reveal synergistic effects of added resources that lower overall project cost: in the project coordination example, adding both an engineer and a technician shortened the timeline enough to reduce total project cost despite the extra staffing [2].

Quantitative Analysis of DoE Impact

The following table summarizes the quantitative outcomes from a project management case study, illustrating how DoE can be used to analyze the impact of resource changes on project time and cost [2].

Table 1: Analysis of Project Factors for Schedule and Cost Reduction

| Factor | Level (-) | Level (+) | Effect on Completion Time (Days) | Effect on Project Cost |

|---|---|---|---|---|

| Engineer Staff Level | 2 | 3 | Reduction of 18.5 days (avg.) | Increase if added alone; decrease if added with technician |

| Technician Staff Level | 6 | 7 | Reduction of 42 days (avg.) | Substantial cost reduction |

| Obtain Latching Device | Develop | Purchase | Increase of 7.5 days (avg.) | Increase due to purchase price |

Table 2: Experimental Results from Full Factorial Design (2^3) [2]

| Condition | Eng. Staff | Tech. Staff | Source | Time (days) | Costs ($K) |

|---|---|---|---|---|---|

| 1 | 2 | 6 | Develop | 128 | 158.2 |

| 2 | 3 | 6 | Develop | 124 | 173.6 |

| 3 | 2 | 7 | Develop | 98 | 129.2 |

| 4 | 3 | 7 | Develop | 74 | 109.7 |

| 5 | 2 | 6 | Purchase | 142 | 203.2 |

| 6 | 3 | 6 | Purchase | 129 | 205.8 |

| 7 | 2 | 7 | Purchase | 108 | 163.4 |

| 8 | 3 | 7 | Purchase | 75 | 125.9 |

Experimental Protocols for DoE in Formulation Development

Protocol: Pre-formulation Excipient Compatibility Study

Objective: To identify compatible excipients and potential stability issues for a new solid oral dosage form containing an HPAPI early in the development process [16].

Materials:

- HPAPI (Limited supply, handle with containment)

- Candidate excipients (e.g., diluents, binders, disintegrants, lubricants)

- Glass vials with sealed closures

- Stability chambers controlling temperature and humidity (e.g., 40°C/75% RH)

Methodology:

- Preparation of Binary Mixtures: Precisely weigh and mix the HPAPI with each candidate excipient at a relevant ratio (e.g., 1:1 or 1:5 API:excipient w/w).

- Stress Conditions: Place the mixtures in glass vials and store them in stability chambers under accelerated conditions (e.g., 40°C/75% RH). Include controls of pure API and pure excipients.

- Sampling Time Points: Remove samples at predetermined intervals (e.g., 0, 1, 2, 4 weeks).

- Analysis: Analyze samples using validated HPLC/UPLC methods for assay and related substances (degradation products).

- DoE Analysis: Analyze the data (e.g., % potency loss, growth of key degradants) using statistical software to identify which excipients have a significant adverse effect on API stability.

Protocol: Powder Blending and Flowability Optimization

Objective: To systematically understand the impact of formulation composition and process parameters on the flowability and homogeneity of a powder blend for direct compression, preventing issues like the tablet splitting case study [16].

Materials:

- HPAPI

- Selected excipients (based on compatibility study)

- V-blender or bin blender

- Powder characterization equipment (e.g., FT4 Powder Rheometer, laser diffraction for particle size, tapped density tester).

Methodology:

- Factor Selection: Identify critical factors such as:

- A: Diluent ratio (e.g., Microcrystalline Cellulose : Lactose)

- B: Lubricant concentration (e.g., Magnesium Stearate %)

- C: Blending time (minutes)

- Experimental Design: Select an appropriate design (e.g., 2^3 full factorial or a Response Surface Design like Central Composite Design (CCD) if curvature is suspected) [17].

- Experiment Execution: For each experimental run, prepare the blend according to the defined factor levels.

- Response Measurement: After blending, measure key responses for each batch:

- Content Uniformity: Assess blend homogeneity by sampling from different locations and analyzing API concentration.

- Flowability: Measure using parameters like Angle of Repose, Compressibility Index, or Shear Cell testing [16].

- Statistical Analysis: Fit the data to a model and generate response surfaces to identify the optimal factor settings that ensure both excellent content uniformity and acceptable powder flow.
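If a Central Composite Design is chosen for factors A, B, and C, the run list in coded units can be generated as sketched below. This is an illustrative construction, not output from any specific DoE package; the function name `central_composite`, the default of three center points, and the rotatable choice of the axial distance are assumptions.

```python
from itertools import product


def central_composite(k, alpha=None, n_center=3):
    """Return CCD runs in coded units: factorial corners, axial points, center points."""
    if alpha is None:
        # Rotatable design: alpha is the fourth root of the number of factorial points
        alpha = (2 ** k) ** 0.25
    factorial = [list(point) for point in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # move along one factor axis only
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center


# Three factors: A (diluent ratio), B (lubricant concentration), C (blending time)
design = central_composite(3)
for run_number, (a, b, c) in enumerate(design, start=1):
    print(f"{run_number:2d}  A={a:+.3f}  B={b:+.3f}  C={c:+.3f}")
```

Axial points lie outside the ±1 range, so they should be checked against practical limits (for example, a lubricant concentration cannot be negative). Passing `alpha=1.0` gives a face-centered variant that stays within the original factor ranges.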

Visualization of Workflows and Relationships

Figure: DoE Implementation Workflow (diagram not reproduced)

Figure: Factor-Response Relationship (diagram not reproduced)

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials and Equipment for DoE in Solid Dosage Form Development

| Item | Function/Description | Application in DoE |

|---|---|---|

| HPAPI (High Potency API) | The active pharmaceutical ingredient with high biological activity. Requires specialized handling and containment. | The central material under investigation; its properties drive many formulation and process decisions. |

| Excipients (Diluents, Binders, Disintegrants) | Inactive components that form the bulk of the dosage form and govern its physical properties. | Factors in a DoE to optimize blend properties, compression behavior, and drug release profile. |

| Powder Rheometer (e.g., FT4) | Instrument for comprehensive powder characterization, measuring flowability, cohesivity, and shear properties. | A key tool for measuring responses related to powder blend processability in a DoE [16]. |

| Stability Chambers | Environmental chambers that control temperature and humidity for accelerated stability studies. | Used to stress test formulations from a DoE to assess chemical stability as a critical response. |

| Statistical Software (e.g., JMP, Design-Expert) | Software for designing experiments, analyzing complex data, and generating predictive models. | Essential for creating DoE designs, analyzing variance (ANOVA), and visualizing factor interactions. |

Within pharmaceutical manufacturing and innovation, the pressure to accelerate development timelines while ensuring quality and controlling costs is immense. Design of Experiments (DoE) is a powerful statistical approach for process understanding and optimization, recognized as a key tool in successful Quality by Design (QbD) implementation [18] [19]. Traditionally, initial DoE factors and levels are set using expert knowledge or preliminary one-factor-at-a-time (OFAT) experiments, an approach that can be inefficient and miss critical interactions [20].

This application note advocates for a paradigm shift: using historical data and meta-analysis as an evidence-based foundation for DoE. This methodology systematically leverages existing knowledge to create more efficient, informative, and powerful experiments from the outset, ensuring that new research contributes to the collective advancement of knowledge in a structured, data-driven manner [19].

The Scientific and Regulatory Rationale

An evidence-based starting point for DoE directly addresses the call for more deliberate and efficient methods to optimize the impact of health interventions [19]. By integrating prior knowledge, researchers can avoid unnecessary duplication and investigate the most critical research questions from a position of strength.

This approach aligns with fundamental DoE principles established by Fisher, including comparison, randomization, and replication [18]. It enhances these principles by providing a statistically rigorous basis for selecting factors and defining level ranges, thereby increasing the reliability and validity of the experiment. Furthermore, it is particularly suited for optimization, defined as "a deliberate, iterative and data-driven process to improve a health intervention and/or its implementation to meet stakeholder-defined public health impacts within resource constraints" [19].

Quantitative Data Synthesis for DoE Planning

Meta-analysis of prior studies provides quantitative data critical for informing the planning stages of a new DoE. The following table summarizes key parameters that can be extracted.

Table 1: Key Quantitative Parameters from Meta-Analysis for DoE Design

| Parameter | Description | Role in DoE Planning |

|---|---|---|

| Key Factors | Process or formulation variables previously studied. | Identifies critical factors for inclusion in the screening design; prevents omission of vital interactions [19]. |

| Effect Sizes | The magnitude of a factor's impact on Critical Quality Attributes (CQAs). | Informs the realistic setting of factor levels (high/low) to ensure the experiment is challenging yet feasible [20]. |

| Baseline Performance | The average performance of the control or standard process. | Provides a benchmark for comparing the outcomes of the new DoE and estimating expected improvement. |

| Variance Estimates | Pooled estimate of process or measurement noise. | Enables an a priori calculation of statistical power and helps determine the necessary number of experimental replicates [18]. |

| Optimal Ranges | Ranges of factors where optimal performance was previously observed. | Focuses the experimental domain (e.g., for a Response Surface Methodology) on the most promising region of the design space [20]. |

The data synthesized in Table 1 directly feeds into the creation of a design matrix. For instance, a 2-factor experiment investigating Temperature and Pressure would require 4 experimental runs (2^2), with levels coded as +1 (high) and -1 (low) [20]. The quantitative ranges for these levels should be derived from the "Optimal Ranges" and "Effect Sizes" identified in the meta-analysis.
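The coded 2^2 design for Temperature and Pressure can be translated into actual factor settings as sketched below. The numeric ranges are hypothetical placeholders; in practice they would come from the meta-analysis. The helper name `decode` is also an assumption made for illustration.

```python
from itertools import product

# Hypothetical (low, high) ranges; real values would be taken from the meta-analysis
levels = {
    "temperature_C": (40.0, 60.0),
    "pressure_bar": (1.0, 3.0),
}


def decode(coded, low, high):
    """Map a coded level (-1 = low, +1 = high) onto the actual factor scale."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


design = []
for coded_run in product((-1, 1), repeat=len(levels)):
    actual = {
        name: decode(coded, *levels[name])
        for name, coded in zip(levels, coded_run)
    }
    design.append((coded_run, actual))

for coded_run, actual in design:
    print(coded_run, actual)
```

Working in coded units keeps effect estimates comparable across factors with different physical units, while the decoded values are what the laboratory actually sets.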

Experimental Protocols

Protocol 1: Conducting a Meta-Analysis to Inform DoE

This protocol details the steps for performing a systematic meta-analysis to gather historical evidence.

1. Define the Research Question & Eligibility Criteria (PICO):

- Population (P): The specific process or product type (e.g., "lyophilized monoclonal antibodies").

- Intervention (I) & Comparison (C): The process factors and their ranges to be investigated (e.g., "primary drying temperature between -20°C and 0°C").

- Outcome (O): The Critical Quality Attributes (CQAs) (e.g., "aggregation rate," "residual moisture").

2. Search Strategy:

- Systematically search electronic databases (e.g., Medline, EMBASE, Cochrane Library) [19] [21].

- Use a combination of MeSH terms and text words related to the process, product, and CQAs [21].

- Document the search strategy comprehensively for reproducibility.

3. Study Selection & Data Extraction:

- Use software (e.g., EndNote, Covidence) to manage citations and screen titles/abstracts, followed by full-text review [19].

- Extract data into a standardized form. Key items include: first author, year, sample size, factor levels, mean outcome values, measures of variance (standard deviation, confidence intervals), and study quality indicators.

4. Quality Assessment & Data Synthesis:

- Assess the risk of bias of included studies using appropriate tools (e.g., Cochrane Risk of Bias tool) [21].

- Perform a quantitative synthesis (meta-analysis) using statistical software (e.g., Review Manager, Stata). Calculate pooled effect sizes, confidence intervals, and assess heterogeneity using the I² statistic [21].

- Output: A summary of findings table and, if possible, a predictive equation for the relationship between factors and CQAs.
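The quantitative synthesis in step 4 can be illustrated with a fixed-effect, inverse-variance pooling of effect sizes together with the I² heterogeneity statistic. This is a minimal sketch under the assumption of a fixed-effect model and a normal-approximation 95% confidence interval; dedicated tools such as those named above offer random-effects models and further diagnostics. The study values shown are hypothetical.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance pooled effect, 95% CI (normal approximation), and I^2 in percent."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)

    # Cochran's Q measures the weighted spread of study effects around the pooled value
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return pooled, ci, i_squared


# Hypothetical effect sizes and standard errors extracted from three prior studies
effects = [0.8, 1.2, 1.0]
std_errors = [0.2, 0.3, 0.25]

pooled, ci, i_squared = pool_fixed_effect(effects, std_errors)
print(f"Pooled effect: {pooled:.3f}")
print(f"95% CI: ({ci[0]:.3f}, {ci[1]:.3f})")
print(f"I^2: {i_squared:.1f}%")
```

A high I² suggests the prior studies disagree more than sampling error would explain, in which case the pooled value is a weaker basis for setting factor ranges in the new DoE.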

Protocol 2: Designing the DoE from Meta-Analytic Data

This protocol outlines how to translate the results of a meta-analysis into a formal DoE.

1. Acquire Process Understanding:

- Create a process flowchart mapping all potential inputs and outputs.

- Consult with Subject Matter Experts (SMEs) to contextualize the meta-analysis findings [20].

2. Define DoE Objective and Select Factors:

- Objective: Clearly state the goal (e.g., "screening critical factors," "optimizing a formulation").

- Factor Selection: Based on the meta-analysis, select the most influential factors for the DoE. Avoid including factors with historically negligible effects.

3. Set Factor Levels and Determine Measurement System:

- Use the "Optimal Ranges" and "Effect Sizes" from the meta-analysis to set realistic high/low levels for each factor [20].

- Ensure the measurement system for the output (CQA) is stable, repeatable, and preferably a continuous variable [20].

4. Create Design Matrix and Execute:

- Screening: Use a fractional factorial or Plackett-Burman design to efficiently screen many factors.

- Optimization: Use a full factorial or Response Surface Methodology (e.g., Central Composite Design) for a detailed study of critical factors and their interactions [20].

- Replication: Incorporate replication based on the variance estimates from the meta-analysis to ensure adequate statistical power [18].

- Randomization: Randomize the run order to eliminate the effects of unknown confounding variables [18] [20].
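The replication and randomization steps can be sketched as follows. The run-count formula is a normal-approximation rule of thumb for a balanced two-level design, where the variance of an estimated effect is 4σ²/N; it is an assumption of this sketch rather than a method taken from the cited sources, and dedicated software will give more exact answers. The function names and the example values of `sigma` and `delta` are hypothetical.

```python
import math
import random
from statistics import NormalDist


def runs_needed(sigma, delta, alpha=0.05, power=0.8):
    """Approximate total runs N so that an effect of size delta is detectable.

    Assumes a balanced two-level design, where Var(effect) = 4 * sigma^2 / N,
    and a two-sided test using the normal approximation.
    """
    z = NormalDist()
    z_total = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    return math.ceil(4 * sigma ** 2 * z_total ** 2 / delta ** 2)


def randomized_order(n_runs, seed):
    """Return run numbers 1..n_runs in a reproducible random order."""
    order = list(range(1, n_runs + 1))
    random.Random(seed).shuffle(order)
    return order


# Hypothetical inputs: sigma from the pooled variance estimate, delta = smallest effect of interest
sigma = 1.5
delta = 2.0
base_design_runs = 8  # e.g. a 2^3 full factorial

total_runs = runs_needed(sigma, delta)
replicates = math.ceil(total_runs / base_design_runs)
print("Total runs needed:", total_runs, "-> replicates of base design:", replicates)
print("Run order:", randomized_order(base_design_runs * replicates, seed=2024))
```

Fixing the seed keeps the randomized order reproducible for the study record while still protecting against time-related confounding.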

The workflow for this integrated evidence-based approach is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential methodological components, or "research reagents," for implementing this evidence-based approach.

Table 2: Essential "Research Reagents" for Evidence-Based DoE

| Item | Function / Explanation |

|---|---|

| Systematic Review Protocol | A pre-defined plan detailing the meta-analysis objectives and methods. It minimizes bias and ensures the review is comprehensive and reproducible [19]. |

| Statistical Software (e.g., R, Stata, RevMan) | Used to calculate pooled effect estimates, confidence intervals, and assess heterogeneity in the meta-analysis. It is also essential for analyzing data from the subsequent DoE [21]. |

| DoE Software & Templates | Tools and templates (e.g., ASQ's DoE template) that aid in the generation of design matrices, randomization, and initial analysis of factorial experiments [20]. |

| Risk of Bias Assessment Tool | A standardized framework (e.g., Cochrane RoB tool) to critically appraise the quality of individual studies included in the meta-analysis, informing the confidence in the synthesized results [21]. |

| Factorial Design Matrix | The structured set of experimental runs that simultaneously varies all selected factors. It is the core "reagent" for efficiently estimating main effects and interactions [20]. |

Visualizing the Analytical Workflow

The process of analyzing the data from an evidence-based DoE involves moving from raw data to a validated process model, as shown in the following workflow.

Implementing DoE in Pharmaceutical Development: From Formulation to Manufacturing

This application note provides a detailed, step-by-step protocol for implementing a structured Design of Experiments (DoE) workflow within the context of Process Mass Intensity (PMI) optimization for pharmaceutical development. Designed for researchers, scientists, and drug development professionals, this guide bridges the gap between statistical theory and practical laboratory execution. By following this structured approach, teams can efficiently identify critical process parameters, build predictive models, and establish optimized, sustainable reaction conditions with reduced experimental burden, accelerating the development of greener synthetic routes for Active Pharmaceutical Ingredients (APIs).

Design of Experiments (DoE) is a systematic, statistical approach used to study the effects of multiple input variables, or factors, on one or more output responses [22] [23]. In pharmaceutical development, this methodology is invaluable for understanding complex processes, identifying cause-and-effect relationships, and finding optimal conditions that maximize yield, purity, or sustainability metrics like Process Mass Intensity (PMI) [1]. A structured workflow is crucial because it ensures experiments are planned and analyzed correctly, yielding valid, reliable, and actionable conclusions. Adopting a multi-step DoE process is vastly superior to the inefficient "One Factor at a Time" (OFAT) approach, which can miss critical factor interactions and lead to suboptimal process understanding [24] [23]. The following workflow diagram outlines the six critical stages of a structured DoE, from initial problem definition to final model validation.

Detailed Step-by-Step Protocol

Step 1: Define the Experimental Purpose and Variables

The foundation of a successful DoE is a clear and precise definition of the experimental objectives and system variables.

Protocol 2.1.1: Defining the Experimental Purpose

- State the Primary Objective: Formulate a single, clear sentence stating what you want to achieve. In PMI optimization, this is often: "To identify the factor settings that minimize PMI while maintaining or improving yield and purity for [Reaction Name]."

- Categorize the Experiment Type: Determine if the goal is:

- Screening: To rapidly identify the most influential factors from a large set.

- Optimization: To characterize the relationship between key factors and responses to find an optimum (e.g., using a Response Surface Methodology).

- Robustness Testing: To ensure the process remains unaffected by small variations in factor settings [22] [24].

Protocol 2.1.2: Identifying and Classifying Factors and Responses

- Define Responses (Outputs): Identify the key measurable outcomes. For each response, specify a goal (e.g., maximize, minimize, or target).

- Primary Response: PMI (Goal: Minimize).

- Secondary Responses: Reaction Yield (Goal: Maximize), Purity/Selectivity (Goal: Maximize) [1].

- Identify Factors (Inputs): Using process knowledge and prior screening, list all potential input variables. Classify them as follows:

- Controllable Factors: Variables you can set and maintain (e.g., temperature, catalyst loading, stoichiometry, solvent ratio).

- Noise Factors: Variables that are hard or expensive to control but may influence the result (e.g., raw material lot, ambient humidity). These can be included in the design to test robustness.

- Set Factor Ranges and Levels: For each controllable factor, define realistic high and low levels that are sufficiently spaced to produce a measurable effect but remain within a safe and practical operating range [22] [23].

Step 2: Propose an Initial Statistical Model

The statistical model is a mathematical representation of how the factors are believed to influence the responses.

Protocol 2.2: Model Specification

- Select Model Type Based on Objective:

- Document the Initial Model: Write out the full model with all potential terms. This guides the selection of the experimental design in the next step.
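
As an illustration of what "writing out the full model" can look like, the forms below are the standard polynomial models commonly paired with each objective. The notation is an assumption introduced here, not taken from the source: \(y\) is a response such as PMI, \(x_i\) are the \(k\) factors in coded units, \(\beta\) are the coefficients to be estimated, and \(\varepsilon\) is random error.

```latex
\begin{align}
\text{Screening (main effects):}\quad
  y &= \beta_0 + \sum_{i=1}^{k} \beta_i x_i + \varepsilon \\
\text{Characterization (with two-factor interactions):}\quad
  y &= \beta_0 + \sum_{i=1}^{k} \beta_i x_i
       + \sum_{i<j} \beta_{ij} x_i x_j + \varepsilon \\
\text{Optimization (full quadratic, RSM):}\quad
  y &= \beta_0 + \sum_{i=1}^{k} \beta_i x_i
       + \sum_{i<j} \beta_{ij} x_i x_j
       + \sum_{i=1}^{k} \beta_{ii} x_i^2 + \varepsilon
\end{align}
```

Each added group of terms raises the number of coefficients to estimate, and therefore the minimum number of runs the design must provide.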

Step 3: Generate and Evaluate an Experimental Design

The design is the blueprint for your experiment, specifying the exact combination of factor levels to be tested in each experimental run.

Protocol 2.3.1: Design Generation and Selection

- Choose a Design Structure: Select a design that can efficiently estimate the model from Step 2.

- Determine Number of Runs: Design software (e.g., JMP, Minitab) can calculate the minimum number of runs required to estimate the model. It is good practice to add replicates (e.g., 3-5 center points) so that pure experimental error can be estimated [24].

- Implement Randomization and Blocking:

  - Randomize the run order to mitigate the effects of lurking variables [24].

  - If the experiment must be performed in separate batches (e.g., different days, equipment), apply blocking to account for this known source of variation.
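
Randomization and blocking can be sketched in a few lines. The example below is illustrative only and does not reproduce what any particular DoE package does: it builds a two-level full factorial, splits it into two blocks by the sign of the highest-order interaction (so only that interaction is confounded with the block effect), adds center points to each block, and shuffles the run order within each block. The function name, factor names, and seed are hypothetical.

```python
import itertools
import math
import random


def blocked_run_sheet(factor_names, centers_per_block=2, seed=2024):
    """Two-level full factorial in two blocks, randomized within each block.

    Corner runs are split by the sign of the highest-order interaction, so
    only that interaction is confounded with the block effect.
    """
    rng = random.Random(seed)  # fixed seed keeps the run sheet reproducible
    blocks = {1: [], 2: []}
    for levels in itertools.product((-1, 1), repeat=len(factor_names)):
        block = 1 if math.prod(levels) < 0 else 2
        blocks[block].append(dict(zip(factor_names, levels)))
    for runs in blocks.values():
        # Center points (all factors at coded 0) go into every block.
        runs.extend(dict.fromkeys(factor_names, 0) for _ in range(centers_per_block))
        rng.shuffle(runs)  # randomize run order inside the block
    sheet = []
    for block, runs in blocks.items():
        for settings in runs:
            sheet.append({"run": len(sheet) + 1, "block": block, **settings})
    return sheet


# Hypothetical factor names, for illustration only.
for row in blocked_run_sheet(["temperature", "catalyst_loading", "solvent_ratio"]):
    print(row)
```

Each block here could correspond to one day or one reactor; the runs inside a block are executed in the shuffled order shown.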

Protocol 2.3.2: Pre-Experimental Design Evaluation

Before executing the experiment, use software diagnostics to evaluate the design's properties [22]:

- Power: The probability of detecting an effect of a specified size if it truly exists. Aim for a power > 0.8 for critical factors.

- Prediction Variance: Assess how the prediction precision of your model changes across the design space. A more uniform variance is better.

The table below summarizes common designs used in pharmaceutical development.

Table 1: Common Experimental Designs for API Process Development

| Design Type | Primary Objective | Typical Factors | Key Advantage | Consideration for PMI |

|---|---|---|---|---|

| Full Factorial | Characterize all interactions | 2 - 5 | Estimates all main effects and interactions | Number of runs becomes prohibitive with many factors. |

| Fractional Factorial | Screening | 4 - 8 | Highly efficient for identifying vital few factors | Effects are aliased (confounded); requires careful planning. |

| Plackett-Burman | Screening | 5 - 11 | Very efficient for main effects screening | Cannot estimate interactions. |

| Central Composite (CCD) | Optimization | 2 - 4 | Precisely estimates curvature and quadratic effects | Provides excellent model fidelity for optimization. |

| Box-Behnken | Optimization | 3 - 5 | Efficient for second-order models; avoids extreme corners | Has no embedded factorial or axial points, so it cannot be built up from an earlier factorial design. |
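
To make the run-count trade-off in Table 1 concrete, the sketch below computes the number of runs for three of the designs using their standard formulas: \(2^k\) for a two-level full factorial, \(2^{k-p}\) for a two-level fractional factorial, and \(2^k + 2k + n_c\) for a central composite design built on a full factorial core. The function names and the default of four center points are assumptions made for illustration.

```python
def full_factorial_runs(k, levels=2):
    """All combinations of `levels` settings for each of k factors."""
    return levels ** k


def fractional_factorial_runs(k, p):
    """Two-level fractional factorial: a 1/2**p fraction of the full design."""
    return 2 ** (k - p)


def central_composite_runs(k, center_points=4):
    """Full factorial corners + 2 axial points per factor + center points."""
    return 2 ** k + 2 * k + center_points


print("k  full  half  CCD")
for k in range(3, 7):
    print(k, full_factorial_runs(k), fractional_factorial_runs(k, 1),
          central_composite_runs(k))
```

The doubling of the full factorial column with each added factor is the reason screening designs are usually run first.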

Step 4: Execute the Experiment and Enter Data

Protocol 2.4: Experimental Execution and Data Integrity

- Follow the Randomized Run Order: Adhere strictly to the randomized sequence provided by the design software. Do not run experiments in a "convenient" order.

- Record Data Meticulously: For each run, record the measured responses in the designated data table. It is critical to record data exactly as it is measured, without filtering.

- Document Any Deviations: Note any unexpected events or deviations from the experimental protocol, as these can help explain anomalies during analysis.

Step 5: Analyze the Data and Fit the Statistical Model

This step involves fitting the initial model to the data and refining it to identify the significant effects.

Protocol 2.5.1: Initial Model Fitting and Analysis of Variance (ANOVA)

- Fit the Full Model: Use statistical software (e.g., JMP, Minitab, R) to fit the initial model specified in Step 2 [22] [26].

- Perform ANOVA: Examine the ANOVA table to assess the overall significance of the model. A low p-value (typically < 0.05) for the model indicates that the terms in the model explain a significant portion of the variation in the response.

- Check Model Assumptions: Validate the underlying assumptions of the statistical model by analyzing the residuals (the differences between observed and predicted values). This includes checks for normality, constant variance, and independence.

Protocol 2.5.2: Model Reduction and Interpretation

- Identify Significant Terms: Examine the p-values for individual model terms (e.g., main effects, interactions, quadratic terms). Terms with p-values greater than the significance level (alpha, often 0.05) are candidates for removal.

- Use Stepwise Regression or Manual Selection: Employ statistical methods to iteratively remove non-significant terms, creating a reduced model that contains only the active effects. This typically yields a simpler model that is less prone to overfitting [22].

- Interpret the Final Model:

  - Use Pareto Charts to visualize the relative magnitude of the effects.

  - Examine Interaction Plots to understand how the effect of one factor depends on the level of another.
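
For a balanced two-level design, the effects that a Pareto chart ranks can be computed by hand: each effect is the mean response at the +1 level of a term minus the mean response at its -1 level. The sketch below assumes coded factor levels and uses made-up PMI values for a hypothetical 2x2 experiment; statistical software would additionally report standard errors and p-values.

```python
import itertools


def estimate_effects(design, responses, factor_names):
    """Main effects and two-factor interactions, largest magnitude first.

    `design` is a list of dicts of coded levels (-1 or +1), one per run.
    """
    columns = {name: [run[name] for run in design] for name in factor_names}
    for a, b in itertools.combinations(factor_names, 2):
        # An interaction column is the product of its parent columns.
        columns[f"{a}*{b}"] = [run[a] * run[b] for run in design]

    effects = {}
    for term, signs in columns.items():
        high = [y for s, y in zip(signs, responses) if s > 0]
        low = [y for s, y in zip(signs, responses) if s < 0]
        effects[term] = sum(high) / len(high) - sum(low) / len(low)
    return dict(sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True))


# Hypothetical 2x2 factorial with made-up PMI responses.
design = [
    {"temperature": -1, "catalyst": -1},
    {"temperature": +1, "catalyst": -1},
    {"temperature": -1, "catalyst": +1},
    {"temperature": +1, "catalyst": +1},
]
pmi = [60.0, 50.0, 52.0, 30.0]
for term, effect in estimate_effects(design, pmi, ["temperature", "catalyst"]).items():
    print(f"{term}: {effect:+.1f}")
```

In this made-up data set, raising either factor lowers PMI, and the negative interaction means the reduction is larger when both factors are at their high levels.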

Table 2: Key Outputs from Data Analysis and Their Interpretation

| Analysis Output | Description | Interpretation Guideline |

|---|---|---|

| Model P-value | Probability of observing a fit at least this strong if none of the model terms had a real effect. | p < 0.05: The model is statistically significant. |

| Lack of Fit P-value | Tests whether the model form is adequate. | p > 0.05: No significant lack of fit; the model is adequate. |

| R-Squared (R²) | Proportion of variance in the response explained by the model. | Closer to 1.00 is better (e.g., >0.80 indicates a good fit). |

| Adjusted R-Squared | R² adjusted for the number of terms in the model. | Prefers simpler models; more reliable for model comparison. |

| Coefficient Estimate | The estimated size and direction of a factor's effect. | A positive coefficient means the response increases as the factor moves from low to high. |

| Coefficient P-value | Probability of observing an effect at least this large if the true effect were zero. | p < 0.05: The factor (or interaction) has a significant effect. |
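
The R² and adjusted R² entries in Table 2 follow directly from the observed and predicted responses. The sketch below uses the standard definitions; the function names and the four data points are made up for illustration, and `n_terms` is assumed to count model terms excluding the intercept.

```python
def r_squared(observed, predicted):
    """1 - (residual sum of squares / total sum of squares)."""
    mean_y = sum(observed) / len(observed)
    ss_total = sum((y - mean_y) ** 2 for y in observed)
    ss_resid = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    return 1 - ss_resid / ss_total


def adjusted_r_squared(r2, n_runs, n_terms):
    """Penalize R-squared for the number of model terms (intercept excluded)."""
    return 1 - (1 - r2) * (n_runs - 1) / (n_runs - n_terms - 1)


# Made-up observed and predicted responses.
observed = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 32.0, 38.0]
r2 = r_squared(observed, predicted)
print(round(r2, 3))                                            # 0.968
print(round(adjusted_r_squared(r2, n_runs=4, n_terms=1), 3))   # 0.952
```

Because adding any term can only raise R², the adjusted value is the safer figure when comparing a full model with its reduced version.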

Step 6: Generate Predictions and Validate the Model

The final step is to use the confirmed model to make predictions and verify them experimentally.

Protocol 2.6.1: Prediction and Optimization

- Use the Prediction Profiler: Leverage the profiler tool in your software to visually explore how the responses change with different factor settings [22].

- Find Optimal Factor Settings: Use the software's numerical optimization function (e.g., Desirability Function) to find factor settings that simultaneously optimize all responses (e.g., minimize PMI while maximizing yield) [22].

- Establish the Design Space: The model can define a "design space," a multidimensional combination of factor inputs within which consistent product quality is assured. This is a key concept in Quality by Design (QbD).
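
The desirability approach mentioned above can be sketched as follows. This is a simplified version of the widely used one-sided desirability functions, not the exact routine of any software package: each response is mapped to a 0-1 score, and the scores are combined with a geometric mean so that a single unacceptable response drives the overall score to zero. All limits and predicted values are hypothetical.

```python
import math


def desirability_minimize(y, target, upper, weight=1.0):
    """1 at or below `target`, 0 at or above `upper`, a power curve between."""
    if y <= target:
        return 1.0
    if y >= upper:
        return 0.0
    return ((upper - y) / (upper - target)) ** weight


def desirability_maximize(y, lower, target, weight=1.0):
    """0 at or below `lower`, 1 at or above `target`, a power curve between."""
    if y >= target:
        return 1.0
    if y <= lower:
        return 0.0
    return ((y - lower) / (target - lower)) ** weight


def overall_desirability(scores):
    """Geometric mean of the individual scores; any zero gives zero."""
    if any(d == 0.0 for d in scores):
        return 0.0
    return math.prod(scores) ** (1 / len(scores))


# Hypothetical model predictions at one candidate factor setting.
d_pmi = desirability_minimize(40.0, target=30.0, upper=80.0)     # 0.8
d_yield = desirability_maximize(85.0, lower=70.0, target=90.0)   # 0.75
print(round(overall_desirability([d_pmi, d_yield]), 3))          # 0.775
```

An optimizer would evaluate this overall score across the factor ranges and report the setting with the highest value.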

Protocol 2.6.2: Model Validation

- Run Confirmation Experiments: The most critical validation step. Perform new experimental runs (typically 3-5) at the predicted optimal settings. Do not use runs from the original design.

- Compare Results to Predictions: Compare the actual measured response values from the confirmation runs with the model's predictions.

- Assess Validation: If the actual results fall within the prediction intervals of the model, the model is considered validated. If not, return to Step 1 (Define) to investigate the discrepancy and potentially run a follow-up experiment to refine the model [23].
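
The validation check above reduces to a simple comparison once the software has reported a prediction interval at the optimal settings. The sketch below assumes that interval is for an individual new observation and is supplied by the user; the function name and all numbers are hypothetical.

```python
import statistics


def assess_confirmation(confirmation_runs, pi_lower, pi_upper):
    """Check each confirmation result against the model's prediction interval."""
    outside = [y for y in confirmation_runs if not pi_lower <= y <= pi_upper]
    return {
        "mean": statistics.mean(confirmation_runs),
        "outside_interval": outside,
        "validated": not outside,  # validated only if every run is inside
    }


# Hypothetical PMI results from three confirmation runs.
result = assess_confirmation([31.2, 29.8, 30.5], pi_lower=27.0, pi_upper=34.0)
print(result["validated"])  # True
```

Any run listed under `outside_interval` is a prompt to review the documented deviations before deciding whether a follow-up experiment is needed.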

Case Study: PMI Optimization in API Synthesis

A team at Bristol Myers Squibb demonstrated the power of combining DoE with advanced analytics for greener API synthesis [1]. They first used a PMI prediction app to select a more efficient synthetic route during the design phase. Subsequently, for a specific chemical transformation, they employed Bayesian Optimization (EDBO+), a machine-learning-driven DoE approach, to optimize the reaction conditions.

- Traditional OFAT Result: After ~500 experiments, the process achieved 70% yield and 91% enantiomeric excess (ee).

- Structured DoE (Bayesian Optimization) Result: In only 24 experiments, the process achieved 80% yield and 91% ee.

This case highlights a core benefit of the structured workflow: dramatically accelerated process understanding and optimization with significantly fewer experimental resources, directly contributing to lower PMI and a "greener-by-design" outcome [1].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are fundamental for executing and analyzing experiments in API process development and PMI studies.

Table 3: Key Research Reagent Solutions for API Process Development

| Reagent / Material | Function in Experimentation | Application Note |

|---|---|---|

| Catalysts (e.g., Pd/C, Enzymes) | Accelerate reaction rates and improve selectivity, directly impacting yield and PMI. | Screening different catalysts and loadings is a common factor in reaction optimization DoEs. |

| Solvents (e.g., 2-MeTHF, CPME) | Medium for reaction, purification, and crystallization. Choice greatly influences solubility, kinetics, and waste. | "Greener" solvent selection is a key lever for reducing PMI. Solvent ratio is a frequent DoE factor. |

| Reagents & Building Blocks | Participate directly in the synthetic transformation to construct the API molecule. | Stoichiometry and reagent purity are critical controlled factors in DoE to maximize efficiency. |

| Adsorbents (e.g., Silica, Celite) | Used in purification steps (e.g., chromatography, filtration) to remove impurities. | Amount and type can be optimized via DoE to reduce mass waste in purification. |

| Analytical Standards | Provide reference for quantifying reaction components (substrate, product, impurities) via HPLC, GC, etc. | Essential for generating accurate, reliable response data (e.g., yield, purity) for DoE analysis. |

| DoE Software (e.g., JMP, Modde) | Platforms for generating optimal designs, analyzing experimental data, and building predictive models. | Enables the statistical rigor of the entire workflow, from design generation to optimization [27]. |

In Design of Experiments (DoE) for Process Mass Intensity (PMI) optimization, selecting the appropriate experimental design is crucial for efficiently extracting meaningful insights from complex systems. The choice of design directly influences the quality of the resulting model, the number of required experimental runs, and the validity of the conclusions drawn. This guide focuses on three fundamental design families, namely Full Factorial, Fractional Factorial, and Response Surface Methodology (RSM), and provides a structured framework for their selection and application within a sequential DoE campaign [28].

DoE is not a single-experiment endeavor but a sequential process where the learning from one phase informs the next. Different designs are optimally suited for different stages of this campaign, from initial scoping to final optimization and robustness testing [28]. By aligning your design choice with your current experimental goal, you ensure efficient resource use and a coherent analytical pathway, as the selected design inherently dictates the type of statistical analysis you will perform [28].

Understanding the DoE Progression and Design Philosophy

A typical DoE campaign progresses through several logical stages, each with a distinct objective. The table below outlines these stages and the designs most commonly associated with them.

Table 1: DoE Campaign Stages and Corresponding Design Objectives

| Campaign Stage | Primary Objective | Recommended Design Families |

|---|---|---|

| Scoping | Broadly investigate a system with little prior knowledge [28]. | Space-Filling Designs |

| Screening | Identify the few critical factors from a large set of potential factors [28] [29]. | Fractional Factorial, Plackett-Burman |

| Refinement & Iteration | Characterize main effects and interaction effects of the important factors [28]. | Full Factorial, Fractional Factorial |

| Optimization | Model curvature and locate optimal process conditions [28] [30]. | RSM (e.g., CCD, Box-Behnken) |

| Robustness | Determine the sensitivity of the system to small changes in factor settings [28]. | RSM |

This sequential approach allows researchers to move rationally from a state of high uncertainty to a detailed, optimized, and robust process understanding. It is often inefficient to begin a study with a complex, resource-intensive design like RSM; starting with a screening design ensures that subsequent efforts are focused only on the factors that matter most [29].

The following workflow diagram illustrates the strategic decision-making process for selecting an appropriate experimental design within a sequential DoE campaign.

Design Types: Characteristics and Applications

Full Factorial Designs

Full Factorial Designs (FFD) are the most comprehensive type of factorial design, involving the study of all possible combinations of the levels of all factors [31] [29]. This completeness allows for the estimation of all main effects and all interaction effects between factors, providing a holistic view of the system's behavior [31].

Key Characteristics:

- Experimental Runs: The number of runs is \(n^k\), where \(k\) is the number of factors and \(n\) is the number of levels. This leads to an exponential increase in runs with added factors [28] [31].