In-Line Monitoring for Enhanced Process Control and Quality in Biopharmaceutical Manufacturing


Abstract

This article explores the critical role of in-line monitoring and Process Analytical Technology (PAT) in advancing biopharmaceutical manufacturing. It provides a comprehensive overview for researchers and drug development professionals, covering foundational principles, key technologies like Raman spectroscopy and capacitive sensors, and their application in real-time control of Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs). The content further addresses practical strategies for implementation, troubleshooting, and optimization, supported by validation frameworks and comparative analyses of different monitoring techniques. By synthesizing current methodologies and future directions, this article serves as a guide for leveraging in-line monitoring to reduce variability, ensure regulatory compliance, and achieve robust, high-quality drug production.

Understanding In-Line Monitoring and PAT in Biopharmaceuticals

Defining In-Line, On-Line, At-Line, and Off-Line Monitoring in Pharma

The evolution of pharmaceutical manufacturing toward continuous processes has necessitated the integration of advanced Process Analytical Technology (PAT) tools for real-time quality control and robust process monitoring [1]. Public funding bodies, notably the European Union, have supported PAT research in nanosystems through projects such as NanoPAT and PAT4Nano [1]. The primary goal of PAT implementation is to build quality into the product through comprehensive process understanding and control, moving beyond traditional end-product testing [2]. This paradigm shift enables manufacturers to maintain consistent product quality while optimizing process efficiency, reducing production costs, and minimizing waste [1] [3]. For research focused on reducing Process Mass Intensity (PMI), a thorough understanding of the different PAT sampling approaches (off-line, at-line, on-line, and in-line) is fundamental to designing robust monitoring strategies. Such strategies can predict and prevent process deviations, thereby reducing off-specification material, rework, and associated downtime.

Definitions and Key Distinctions

The classification of monitoring approaches is based on the proximity of the analytical measurement to the process stream and the degree of automation [2] [4]. The following table summarizes the core characteristics of each category.

Table 1: Classification of Monitoring Approaches in Pharmaceutical Manufacturing

| Monitoring Type | Sample Handling & Location | Level of Automation | Feedback Time | Typical Applications |
|---|---|---|---|---|
| In-Line | Analyzer is integrated directly into the process stream; no sample removal [4] [3]. | Continuous and automatic [3]. | Real-time, immediate [5]. | Direct monitoring of product flow via a probe (e.g., Raman spectroscopy) [3]. |
| On-Line | A sample is automatically diverted from the process stream via a bypass loop and returned after measurement [4]. | Automatic, with integrated sampling [4]. | Near real-time, with a slight delay [1]. | Analysis of a representative sample extracted via a sampling system [2]. |
| At-Line | Sample is manually or automatically extracted and analyzed in close proximity to the process line [1] [4]. | Manual or semi-automatic [4]. | Rapid, but with a short delay [1]. | Rapid final product control near the production line [1]. |
| Off-Line | Sample is removed and transported to a remote laboratory for analysis [4]. | Manual [5]. | Significant delay (hours to days) [6]. | Sophisticated analysis requiring extensive sample preparation (e.g., HPLC, MS) [3]. |

These monitoring strategies can be visualized as a spectrum, moving from the most integrated to the most detached from the manufacturing process.
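The decision rules implied by Table 1 can be written as a small classifier. This is a minimal Python sketch. The three boolean inputs are a hypothetical simplification of the table's "Sample Handling & Location" column. The integer ordering encodes the spectrum from the most integrated (in-line) to the most detached (off-line) approach.

```python
from enum import IntEnum


class MonitoringMode(IntEnum):
    """Table 1 categories, ordered from most to least integrated with the process."""
    IN_LINE = 1
    ON_LINE = 2
    AT_LINE = 3
    OFF_LINE = 4


def classify_monitoring(sample_removed: bool,
                        diverted_via_bypass: bool,
                        analyzed_near_line: bool) -> MonitoringMode:
    """Hypothetical simplification of Table 1's sample-handling criteria."""
    if not sample_removed:
        # Probe sits directly in the process stream; nothing is withdrawn
        return MonitoringMode.IN_LINE
    if diverted_via_bypass:
        # Sample is routed automatically through a bypass loop and returned
        return MonitoringMode.ON_LINE
    if analyzed_near_line:
        # Sample is extracted and measured next to the production line
        return MonitoringMode.AT_LINE
    # Sample is transported to a remote laboratory
    return MonitoringMode.OFF_LINE
```

For example, a DLS measurement on a sample drawn next to the line without a bypass loop, `classify_monitoring(True, False, True)`, falls into the at-line category.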

Detailed Experimental Protocols for PAT Implementation

Protocol for In-Line Monitoring Using Raman Spectroscopy

Raman spectroscopy has emerged as a key technology for in-line product quality monitoring during biopharmaceutical manufacturing [7]. The following protocol details its application for monitoring product aggregation and fragmentation during affinity chromatography.

- Objective: To achieve real-time, in-line monitoring of critical quality attributes (CQAs), specifically high molecular weight aggregates and fragments, during the elution phase of an affinity chromatography unit operation.

- Materials and Reagents:

- Investigational IgG1 mAb drug substance.

- Harvested Cell Culture Fluid (HCCF).

- Affinity chromatography system and consumables.

- In-line Raman spectrometer (e.g., with virtual slit technology for signal collection on the order of seconds) [7].

- Raman immersion probe.

- Methodology:

- System Setup: Integrate the Raman immersion probe directly into the process stream, post-affinity column and prior to the elution fraction collector. Ensure the probe is installed to monitor the flowing stream continuously [3].

- Calibration Dataset Generation:

- Perform affinity chromatography and collect elution fractions.

- Employ an automated liquid handling robot to create a mixing series by combining adjacent fractions in different proportions to generate a large calibration set (e.g., 169 data points from 25 original fractions) [7].

- Analyze all calibration samples using off-line reference methods (e.g., SE-HPLC for aggregate and fragment quantification) to assign known values to each sample.

- Spectral Acquisition and Preprocessing:

- Collect Raman spectra from the calibration samples and during subsequent process runs.

- Apply an optimized preprocessing pipeline to the raw spectra to reduce noise before model training.

- Model Development and Real-Time Prediction:

- Use the calibration dataset (Raman spectra + reference analytical data) to train machine learning models. Convolutional Neural Networks (CNN) and k-Nearest Neighbor (KNN) regressors have demonstrated high accuracy for this task [7].

- Validate the model with an independent test dataset.

- Deploy the validated model to predict CQAs from spectra collected in real-time every 38 seconds during processing [7].
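The preprocessing, calibration, and prediction steps above can be sketched as follows. This is a minimal NumPy illustration, not the cited authors' pipeline. The source does not list its preprocessing steps, so the moving-average smoothing and standard normal variate (SNV) scaling used here are assumptions. The model is a plain k-nearest-neighbor regressor (one of the two model types named in the text) rather than a CNN, and the synthetic spectra in the demo are hypothetical.

```python
import numpy as np


def preprocess(spectra, window=5):
    """Assumed pipeline: moving-average smoothing followed by SNV, row by row."""
    kernel = np.ones(window) / window
    # mode="valid" drops edge points, so a constant baseline offset stays constant
    smoothed = np.array([np.convolve(row, kernel, mode="valid") for row in spectra])
    # Standard normal variate: centre and scale each spectrum individually,
    # which removes additive offsets and multiplicative intensity changes
    mean = smoothed.mean(axis=1, keepdims=True)
    std = smoothed.std(axis=1, keepdims=True)
    return (smoothed - mean) / std


def knn_predict(train_x, train_y, query_x, k=3):
    """Predict a CQA as the mean reference value of the k nearest training spectra."""
    # Pairwise Euclidean distances, shape (n_query, n_train)
    dists = np.linalg.norm(query_x[:, None, :] - train_x[None, :, :], axis=2)
    nearest = np.argsort(dists, axis=1)[:, :k]
    return train_y[nearest].mean(axis=1)


if __name__ == "__main__":
    # Hypothetical two-band spectra; y stands in for an SE-HPLC reference value
    axis = np.linspace(400, 1800, 300)
    band_a = np.exp(-((axis - 1000) / 15) ** 2)
    band_b = np.exp(-((axis - 1250) / 25) ** 2)
    y_train = np.linspace(0.0, 1.0, 11)
    x_train = preprocess(np.outer(y_train, band_a) + np.outer(1 - y_train, band_b))
    # Same 50:50 mixture, but with doubled intensity and a baseline offset
    query = preprocess(2.0 * (0.5 * band_a + 0.5 * band_b)[None, :] + 10.0)
    print(knn_predict(x_train, y_train, query, k=1))
```

Because SNV removes the offset and intensity change, the query maps onto the 50:50 training spectrum, and the 1-nearest-neighbor prediction recovers its reference value.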

Protocol for On-Line and At-Line Monitoring Using Dynamic Light Scattering (DLS)

DLS is a cornerstone technique for monitoring the critical quality attribute of particle size in nanopharmaceutical production [1].

- Objective: To monitor the Z-average particle size and polydispersity index (PdI) of lipid-based nanosystems (solid lipid nanoparticles, SLN; nanostructured lipid carriers, NLC; and nanoemulsions, NE) during and after a continuous top-down manufacturing process.

- Materials and Reagents:

- Lipid-based nanoparticle formulations.

- Appropriate dilution medium (e.g., Milli-Q water).

- On-line/At-line DLS instrument (e.g., Litesizer 500 with a flow-through cell and dilution unit) or Spatially Resolved DLS (SR-DLS) instrument (e.g., NanoFlowSizer) [1].

- Methodology for On-Line DLS (using SR-DLS):

- Integration: Install the SR-DLS instrument inline with the process stream. The SR-DLS technology combines DLS with low-coherence interferometry, which compensates for flow effects, making it feasible for direct inline measurements [1].

- Measurement: The sample flows continuously through the instrument's measurement cell. The system automatically collects and analyzes data at defined intervals without manual intervention.

- Data Analysis: The software provides real-time updates of the Z-average and PdI, allowing for immediate process adjustments if size deviations exceed predefined thresholds.
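The Z-average and PdI reported in the step above are conventionally obtained from a cumulants analysis of the field autocorrelation function g1(τ), combined with the Stokes-Einstein equation. The following NumPy sketch makes several simplifying assumptions:

- g1 has already been derived from the measured intensity autocorrelation.
- Flow effects are ignored, even though the SR-DLS instrument compensates for them.
- All function names, parameter values, and alarm limits are hypothetical.

```python
import math

import numpy as np

K_B = 1.380649e-23  # Boltzmann constant, J/K


def scattering_vector(wavelength_m, refractive_index, angle_deg):
    """Scattering vector magnitude q = 4*pi*n*sin(theta/2)/lambda, in 1/m."""
    theta = math.radians(angle_deg)
    return 4 * math.pi * refractive_index * math.sin(theta / 2) / wavelength_m


def cumulants(tau_s, g1, temperature_k, viscosity_pa_s, q):
    """Second-order cumulant fit, ln g1 = c - Gamma*tau + (mu2/2)*tau**2.

    Returns (Z-average hydrodynamic diameter in m, PdI).
    """
    a2, a1, _ = np.polyfit(tau_s, np.log(g1), 2)
    gamma = -a1                     # mean decay rate, 1/s
    mu2 = 2 * a2                    # second cumulant, 1/s^2
    diffusion = gamma / q**2        # translational diffusion coefficient, m^2/s
    # Stokes-Einstein: d = kT / (3 * pi * eta * D)
    z_average = K_B * temperature_k / (3 * math.pi * viscosity_pa_s * diffusion)
    return z_average, mu2 / gamma**2


def size_alarms(z_average_nm, pdi, z_limits_nm, pdi_max):
    """Hypothetical limit check: list violations that call for a process adjustment."""
    low, high = z_limits_nm
    alarms = []
    if not low <= z_average_nm <= high:
        alarms.append(f"Z-average {z_average_nm:.1f} nm outside {low}-{high} nm")
    if pdi > pdi_max:
        alarms.append(f"PdI {pdi:.3f} above limit {pdi_max}")
    return alarms
```

As a check, a synthetic single-exponential g1 generated for 100 nm particles in water at 25 °C is fitted back to a Z-average of 100 nm and a PdI of essentially zero. An empty alarm list means no adjustment is triggered.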

- Methodology for At-Line DLS (using conventional DLS):

- Sample Extraction: Automatically or manually extract a small sample from the process line into a closed loop.

- Automated Analysis: The sample is automatically diluted (if necessary) and transferred to a flow-through cuvette within the DLS instrument located near the production line [1].

- Rapid Feedback: The measurement is conducted automatically after a short equilibration time (e.g., 60 s). Results are generated within minutes, providing efficient final product control with minimal manual intervention [1].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of PAT strategies requires specific instrumentation and consumables. The table below lists key items for setting up the experiments described in the protocols.

Table 2: Key Research Reagents and Solutions for PAT Implementation

| Item Name | Function/Application | Experimental Protocol |
|---|---|---|
| Raman Spectrometer with Immersion Probe | Enables direct, in-line monitoring of chemical and structural attributes in the process stream [7] [3]. | In-line monitoring of product aggregation during chromatography. |
| Spatially Resolved DLS (SR-DLS) Instrument | Allows for inline particle size analysis in flowing samples by compensating for flow effects [1]. | Real-time size monitoring of nano-formulations during continuous production. |
| Conventional DLS with Flow-Through Cell | Facilitates at-line size measurements by enabling automatic sample presentation from a process loop [1]. | Rapid particle size analysis of the final product near the manufacturing line. |
| Liquid Handling Robotics | Automates the creation of large calibration sample sets by preparing precise mixing or dilution series [7]. | Generation of high-quality training data for multivariate model calibration. |
| Lipid Phases (e.g., Precirol, Gelucire) | Key components for forming solid lipid nanoparticles (SLNs) and nanostructured lipid carriers (NLCs) [1]. | Production of model lipid-based nanosystems for process monitoring studies. |
| Affinity Chromatography Resins | Used for the primary capture and purification of monoclonal antibodies, a key unit operation for PAT deployment [7]. | Purification process step for in-line Raman monitoring of product quality. |

The strategic selection and implementation of in-line, on-line, at-line, and off-line monitoring techniques form the backbone of modern, quality-driven pharmaceutical manufacturing. For PMI reduction research, leveraging real-time and near real-time data from in-line and on-line tools is paramount. These technologies enable a shift from reactive to predictive process control by providing immediate insight into process health and product quality. Operators can then make proactive adjustments before deviations produce off-specification material or require corrective intervention. While off-line analysis remains vital for definitive characterization, the integration of in-line PAT tools, such as Raman spectroscopy and SR-DLS, paves the way for more robust, efficient, and reliable manufacturing processes with minimized operational interruptions.

The modern pharmaceutical landscape is defined by a regulatory-driven shift from traditional quality-by-testing to a more systematic, proactive approach to quality assurance. This paradigm, centered on Quality by Design (QbD), Process Analytical Technology (PAT), and supporting ICH Guidelines (Q8, Q12, Q13), aims to build quality into products from the outset through enhanced scientific understanding and risk management [8] [9] [10]. For researchers focused on in-line monitoring for Process Mass Intensity (PMI) reduction, these frameworks provide the essential regulatory and technical foundation for developing more efficient, sustainable, and robust manufacturing processes. The integration of these elements facilitates real-time quality assurance, enables continuous process verification, and provides a structured pathway for implementing innovative monitoring and control strategies that directly contribute to waste minimization and process efficiency [8] [11].

The foundation of modern pharmaceutical development was reshaped in the early 2000s with the US Food and Drug Administration's (FDA) initiative "Pharmaceutical CGMPs for the 21st Century: A Risk-Based Approach" and its accompanying PAT guidance [10]. This marked a significant departure from a historically reactive quality model, reliant on end-product testing, toward a proactive, science- and risk-based paradigm where "quality cannot be tested into products; it should be built-in or should be by design" [10]. This philosophy of building quality in is the core of QbD.

The International Council for Harmonisation (ICH) has been instrumental in harmonizing these concepts globally through a series of pivotal guidelines. ICH Q8 (Pharmaceutical Development) outlines the QbD framework, introducing key concepts like the Quality Target Product Profile (QTPP), Critical Quality Attributes (CQAs), and design space [12]. ICH Q9 (Quality Risk Management) provides the tools for systematic risk assessment, while ICH Q10 (Pharmaceutical Quality System) describes a comprehensive model for an effective quality system [10]. More recently, ICH Q12 (Technical and Regulatory Considerations for Pharmaceutical Product Lifecycle Management) and ICH Q13 (Continuous Manufacturing) provide further guidance on post-approval change management and the regulatory framework for continuous manufacturing, respectively [11]. PAT serves as a critical enabler within this framework, providing the tools for real-time measurement and control to assure quality [8] [9].

Core Concepts and Their Interrelationships

Quality by Design (QbD) and ICH Q8

QbD is a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management [8] [12]. The implementation of QbD, as detailed in ICH Q8(R2), involves a structured sequence of activities:

- Define the Quality Target Product Profile (QTPP): The QTPP is a prospective summary of the quality characteristics of a drug product that ideally will be achieved to ensure the desired quality, taking into account safety and efficacy [12]. It is the foundational element that guides all subsequent development.

- Identify Critical Quality Attributes (CQAs): CQAs are physical, chemical, biological, or microbiological properties or characteristics that should be within an appropriate limit, range, or distribution to ensure the desired product quality [12]. These are derived from the QTPP.

- Link Material Attributes and Process Parameters to CQAs: Using risk assessment and experimental designs (e.g., Design of Experiments, DoE), the relationship between Critical Material Attributes (CMAs), Critical Process Parameters (CPPs), and the identified CQAs is established [8] [12].

- Establish a Design Space: The design space is the multidimensional combination and interaction of input variables (e.g., material attributes) and process parameters that have been demonstrated to provide assurance of quality [12]. Operating within the design space is not considered a regulatory change, offering flexibility.

- Implement a Control Strategy: A control strategy is a planned set of controls, derived from current product and process understanding, that assures process performance and product quality [12]. This often includes PAT, real-time release testing (RTRT), and continuous process verification (CPV) [8].
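The DoE step in the list above can be illustrated with a two-level full factorial design and main-effect estimates. This is a minimal pure-Python sketch. The factor names and the linear response used in the demo are hypothetical. Real studies would add replicates, center points, and interaction terms.

```python
from itertools import product


def full_factorial(factors):
    """All 2**k runs of a two-level design, coded -1 (low) and +1 (high)."""
    return [dict(zip(factors, levels)) for levels in product((-1, 1), repeat=len(factors))]


def main_effects(design, responses):
    """Main effect = mean response at +1 minus mean response at -1, per factor."""
    effects = {}
    for factor in design[0]:
        high = [y for run, y in zip(design, responses) if run[factor] == 1]
        low = [y for run, y in zip(design, responses) if run[factor] == -1]
        effects[factor] = sum(high) / len(high) - sum(low) / len(low)
    return effects


if __name__ == "__main__":
    # Hypothetical granulation factors (coded levels) and a synthetic CQA response
    design = full_factorial(["impeller_speed", "binder_amount", "chopper_speed"])
    responses = [5 + 2 * run["impeller_speed"] - run["binder_amount"] for run in design]
    print(main_effects(design, responses))
    # → {'impeller_speed': 4.0, 'binder_amount': -2.0, 'chopper_speed': 0.0}
```

A near-zero effect, as for `chopper_speed` here, suggests that the parameter is not critical for that CQA within the tested range.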

The following workflow illustrates the logical sequence and relationships between these core QbD elements and their supporting frameworks:

The Role of Process Analytical Technology (PAT) in QbD

PAT is a system for designing, analyzing, and controlling manufacturing through timely measurements of critical quality and performance attributes of raw and in-process materials and processes, with the goal of ensuring final product quality [9] [10]. It is a fundamental enabler of the QbD approach. PAT tools facilitate:

- Real-time process monitoring and control: Moving away from offline testing to inline/online measurements allows for immediate adjustment of CPPs to maintain CQAs within the design space [8] [13].

- Enhanced process understanding: The data-rich environment created by PAT provides deep insights into process dynamics and the impact of variability [8].

- Support for Real-Time Release Testing (RTRT): The ability to ensure product quality based on process data enables RTRT, reducing the need for end-product testing [8] [13].

- Foundation for Continuous Manufacturing: PAT is essential for the control strategies required in continuous manufacturing, as outlined in ICH Q13, allowing for real-time detection of disturbances and trend shifts [11].

Application in PMI Reduction Research

For research targeting PMI reduction, the QbD/PAT framework is indispensable. Process Mass Intensity (PMI) is a key green metric calculated as the total mass of inputs (raw materials, solvents) divided by the mass of the API produced [14]. A lower PMI signifies a greener, more efficient process. The regulatory drive toward well-understood, controlled processes directly aligns with PMI reduction goals. By employing PAT to monitor and control processes in real-time within a defined design space, researchers can:

- Optimize reagent and solvent use, minimizing waste.

- Identify and control sources of variability that lead to off-spec material and rework.

- Develop more robust, intensified processes with fewer unit operations and higher overall yield.

Quantitative Data and PAT Tools in Unit Operations

The application of PAT, QbD, and CPV is most evident in specific pharmaceutical unit operations. The following table summarizes, for each operation, the critical parameters, monitored attributes, and PAT tools reported in recent literature.

Table 3: PAT Applications and Critical Parameters in Pharmaceutical Unit Operations

| Unit Operation | Critical Process Parameter (CPP) | Intermediate Quality Attribute (IQA) / CQA | PAT Tools Employed | Reference |
|---|---|---|---|---|
| Blending | Blending time, Blending speed, Order of input, Filling level | Drug content, Blending uniformity, Moisture content | NIR Spectroscopy | [8] |
| Granulation | Binder solvent amount, Binder solvent concentration, Impeller speed, Chopper speed | Granule size distribution, Granule strength, Flowability, Bulk density | Spatial Filter Velocimetry, NIR Spectroscopy | [8] |
| Tableting | Compression force, Pre-compression force, Turret speed, Feed frame speed | Hardness, Thickness, Friability, Disintegration time | NIR Spectroscopy, Thermal Effusivity Sensor, Terahertz Pulsed Imaging | [8] |
| Coating | Spray rate, Pan speed, Inlet air temperature, Gun-to-bed distance | Weight gain, Coating uniformity, Moisture content | NIR Spectroscopy, Raman Spectroscopy, Terahertz Pulsed Imaging | [8] |
| Dry Granulation (Roller Compaction) | Press force, Roller speed, Feed screw speed | Ribbon density, Granule particle size distribution | Inline Density Measurement (novel PAT tool) | [13] |

A notable advancement in PAT is the development of inline, real-time tools for previously challenging operations like dry granulation. For example, a novel inline density measurement system for roller compactors has been commercialized, which measures ribbon density at the point of maximum compression (gap density) without stopping the line [13]. This system uses a setup with upper and lower valves, where the lower valve is positioned on weighing cells, allowing for continuous mass flow calculation and automatic adjustment of press force to maintain the desired density range. This exemplifies a PAT tool directly enabling closed-loop control and supporting QbD and RTRT objectives in continuous manufacturing [13].

Experimental Protocols for PAT Implementation and PMI Assessment

This section provides detailed methodologies for implementing PAT in a key unit operation and for assessing the greenness of a process through PMI, which is critical for research in PMI reduction.

Protocol: Inline Monitoring of Ribbon Density in Dry Granulation

Objective: To implement and validate an inline PAT tool for real-time monitoring and control of ribbon density during a dry granulation process using a roller compactor, with the goal of ensuring consistent granule quality and reducing waste.

Materials:

- Roller compactor (e.g., Gerteis Pactor or Mini-Pactor series)

- Inline Density Control PAT tool (e.g., system with weighing cells and control valves)

- Powder blend (API and excipients)

- Data acquisition and control system software

Methodology:

- System Configuration: Install the inline density measurement system as an add-on to the roller compactor outlet. The system typically consists of an upper valve to control product flow and a lower valve positioned on precision weighing cells for measurement [13].

- Calibration: According to the manufacturer, the system requires no product-specific calibration [13]; confirm this claim during method validation. Zero the weighing cells with the equipment empty.

- Parameter Setting: Define the target ribbon density range based on prior DoE studies linking ribbon density to critical granule CQAs (e.g., particle size distribution, flowability). Set the measurement time intervals, correction limits, and the magnitude of press force adjustments in the control software.

- Process Operation: Initiate the roller compactor with the chosen feed screw speed and roller speed. Activate the inline density control system.

- Real-time Control Loop:

- The system periodically closes the upper valve, allowing the lower valve (on the weighing cells) to collect a sample of the ribbon for a predefined time.

- The mass of the sample is measured, and the density is calculated based on the known volume flow (from roller dimensions and speed) and measured mass flow [13].

- The measured density is compared to the target set point. If the density deviates beyond the predefined limits, the system automatically adjusts the hydraulic press force of the roller compactor to bring the density back within the target range.

- This measurement-control-adjustment cycle continues throughout the entire production run.

- Data Collection and Analysis: Continuously log ribbon density, press force, and other relevant process parameters. Use statistical process control (SPC) charts to monitor process stability and capability over time.

- Validation: Correlate the inline density measurements with offline reference methods (e.g., mercury porosimetry or calibrated offline density tests) on collected ribbon samples to validate the accuracy of the PAT tool.
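The density calculation and correction cycle in the methodology above can be sketched as follows. The source does not describe the vendor's control algorithm, so this sketch rests on explicit assumptions:

- The ribbon volume flow is approximated as roll width × gap × roll surface speed.
- The press-force correction is a simple proportional step, clamped to a maximum.
- The gain, limits, units, and all names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class RollerSettings:
    roll_diameter_m: float
    roll_width_m: float
    gap_m: float
    roll_speed_rpm: float


def ribbon_volume_flow(s: RollerSettings) -> float:
    """Assumed ribbon volume flow (m^3/s) = width * gap * roll surface speed."""
    surface_speed = math.pi * s.roll_diameter_m * s.roll_speed_rpm / 60.0  # m/s
    return s.roll_width_m * s.gap_m * surface_speed


def ribbon_density(sample_mass_kg: float, collection_time_s: float,
                   s: RollerSettings) -> float:
    """Density (kg/m^3) = measured mass flow / calculated volume flow."""
    mass_flow = sample_mass_kg / collection_time_s
    return mass_flow / ribbon_volume_flow(s)


def adjust_press_force(force_kn_cm: float, density: float, low: float, high: float,
                       gain: float, max_step_kn_cm: float) -> float:
    """Hypothetical proportional correction, applied only outside the target range."""
    if low <= density <= high:
        return force_kn_cm  # within limits: no correction
    target = (low + high) / 2
    step = gain * (target - density)  # too dense -> lower force, too light -> raise it
    step = max(-max_step_kn_cm, min(max_step_kn_cm, step))  # limit each adjustment
    return force_kn_cm + step
```

Clamping each step mirrors the "magnitude of press force adjustments" setting mentioned in the parameter-setting step and avoids overcorrecting on a single noisy measurement.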

Protocol: Determination of Process Mass Intensity (PMI)

Objective: To calculate the Process Mass Intensity (PMI) for an API synthesis or a drug product manufacturing process to benchmark and track progress in green chemistry and sustainability.

Materials:

- Detailed process description with all input materials

- Mass data for all input materials (kg)

- Mass data for the final isolated API or drug product (kg)

Methodology:

- Define Process Boundaries: Clearly define the start and end points of the process for which PMI is being calculated (e.g., from raw materials to isolated final API).

- Sum Total Input Mass: For the defined process, sum the masses of all input materials. This includes all reactants, reagents, catalysts, and solvents used in the process. Water can be included or excluded, but this must be stated explicitly in the reporting [14].

- Total Mass of Inputs (kg) = Mass of Reactant A + Mass of Reactant B + ... + Mass of Solvent 1 + Mass of Solvent 2 + ...

- Record Output Mass: Record the mass of the final product produced from the process, typically the isolated and dried API or the final drug product.

- Mass of API Produced (kg) = Mass of final, dried product

- Calculate PMI: Use the standard PMI formula.

- PMI = Total Mass of Inputs (kg) / Mass of API Produced (kg) [14]

- Interpretation: A lower PMI value indicates a more efficient and environmentally friendly process. The PMI can be broken down by individual stages to identify hotspots of inefficiency and waste generation, guiding research efforts toward process intensification and solvent/reagent reduction.
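The calculation steps above translate directly into code. This is a minimal Python sketch, and several of its conventions are assumptions for illustration:

- Inputs are stored in a dictionary keyed by material name.
- Water is identified by the key "water".
- Each stage's contribution is its inputs divided by the final product mass, so the stage values add up to the overall PMI.

```python
def process_mass_intensity(inputs_kg, product_kg, include_water=True):
    """PMI = total mass of inputs (kg) / mass of product (kg)."""
    if product_kg <= 0:
        raise ValueError("product mass must be positive")
    # Assumption: water is identified by the dictionary key "water"
    total = sum(m for name, m in inputs_kg.items()
                if include_water or name.lower() != "water")
    return total / product_kg


def stage_contributions(stage_inputs_kg, final_product_kg):
    """Per-stage share of the overall PMI, used to locate waste hotspots."""
    return {stage: sum(materials.values()) / final_product_kg
            for stage, materials in stage_inputs_kg.items()}


if __name__ == "__main__":
    # Hypothetical single-stage batch
    inputs = {"reactant A": 10.0, "reactant B": 5.0, "solvent 1": 80.0, "water": 20.0}
    print(process_mass_intensity(inputs, 2.5))                       # → 46.0
    print(process_mass_intensity(inputs, 2.5, include_water=False))  # → 38.0
```

Reporting both values, with and without water, satisfies the requirement above to state explicitly how water was treated.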

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for PAT and QbD Implementation

| Item / Tool Category | Specific Examples | Function / Application in Research |
|---|---|---|
| PAT Analytical Probes | NIR (Near-Infrared) Spectroscopy Probe, Raman Spectroscopy Probe, Terahertz Pulsed Imaging Sensor | Enables non-destructive, real-time measurement of critical attributes like blend uniformity, API concentration, moisture content, and coating thickness directly in the process stream. |
| Process Modeling Software | Software for Multivariate Data Analysis (MVDA), Design of Experiments (DoE), and Partial Least Squares (PLS) Regression | Critical for analyzing complex data from PAT tools, building statistical models, defining the design space, and developing predictive models for real-time release. |
| Inline Density Measurement System | Custom PAT tool for roller compactors (e.g., Gerteis Inline Density Control) | Specifically measures ribbon density in real-time during dry granulation, enabling closed-loop control of this critical parameter for consistent granule quality. |
| Reference Analytical Standards | USP/EP reference standards for APIs and key impurities, Certified solvent standards | Used for calibration and validation of PAT methods. Essential for demonstrating that inline measurements are equivalent or superior to traditional offline tests. |
| Small-Scale Processing Equipment | Mini-Pactor with Ultra Small Amount Funnel | Allows for process development and PAT method scouting with very small amounts of material (as little as 10 grams), enabling high-yield, low-waste experimentation during early-stage development. |

Application Note: The Role of In-line Monitoring in Pharmaceutical Manufacturing

Within pharmaceutical development, reducing Process Mass Intensity (PMI) is a key sustainability objective that must be met without compromising final product quality, safety, and efficacy. Traditional quality assurance methods, which often rely on off-line laboratory testing, introduce significant delays between production and the availability of quality data. This paradigm is ill-suited to modern quality-by-design (QbD) principles, where understanding and controlling the manufacturing process in real time is paramount. This application note details the implementation of in-line monitoring technologies as the core enabler of a comprehensive PMI reduction strategy. By integrating analytical probes directly into the manufacturing process, this approach delivers three core benefits: real-time quality assurance, substantial cycle time reduction, and direct yield optimization. The content herein is framed within a broader thesis on in-line monitoring for PMI reduction, providing researchers and drug development professionals with detailed protocols and data-driven insights.

Quantitative Impact of In-line Monitoring

Adopting in-line monitoring can substantially change key performance indicators across the pharmaceutical manufacturing workflow. The following table compares traditional and in-line approaches for several of these indicators.

Table 5: Comparative Analysis of Traditional vs. In-line Monitoring Approaches

| Performance Indicator | Traditional Off-line Testing | In-line Monitoring | Improvement |
|---|---|---|---|
| Quality Data Lag Time | 4 - 24 hours | < 2 minutes | Reduction of > 99% [15] |
| Batch Release Cycle Time | 5 - 10 days | 1 - 3 days | Reduction of 50 - 80% |
| Process Deviation Detection | Post-hoc (hours/days later) | Real-time (within seconds) | Enables immediate corrective action |
| Overall Yield Impact | Baseline | +5% to +15% | Attributed to continuous control |
| Representative Analytical Method | HPLC (Off-line) | NIR / Raman (In-line) | Elimination of sample preparation |

Experimental Protocol: Establishing an In-line PMI Monitoring System

This protocol outlines the steps for integrating an in-line monitoring system for PMI reduction in a small-molecule active pharmaceutical ingredient (API) crystallization process.

1. Objective: To install, qualify, and utilize an in-line Raman spectroscopy system for real-time monitoring and control of API crystal form and solvent composition, thereby reducing impurity-driven rework and its contribution to PMI.

2. Materials and Equipment:

- Process Reactor (e.g., 50 L glass-lined jacketed reactor)

- In-line Raman Spectrometer with 785 nm laser source

- Immersion Optic Raman Probe (Hastelloy body, sapphire window)

- Temperature and pH probes

- Process Control System (PCS) or Data Historian

- Calibration standards for target API polymorphs and solvent ratios

3. Methodology:

3.1. System Design & Risk Assessment (1-2 weeks):

- Identify Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs) linked to PMI, such as solvent water content, impurity concentration, and polymorphic form.

- Perform a risk assessment (e.g., using an FMEA framework) to select the optimal probe placement within the reactor vessel, ensuring representative sampling and avoiding dead zones.

3.2. Hardware Installation & Qualification (1 week):

- Install the immersion probe through a designated reactor port, ensuring proper sealing and alignment.

- Conduct installation and operational qualification (IQ/OQ) following vendor protocols to verify laser power, wavelength accuracy, and spectral resolution.

3.3. Chemometric Model Development (2-4 weeks):

- Collect Raman spectra from calibration batches with known variations in CPPs and CQAs.

- Use multivariate analysis (e.g., Partial Least Squares Regression, PLSR) to build predictive models correlating spectral features with key parameters such as API concentration and impurity levels.

- Validate the model's accuracy and robustness using an independent set of test samples.

3.4. Process Integration & Control Strategy (ongoing):

- Integrate the real-time analytical data stream from the Raman system into the PCS.

- Establish control algorithms and alerts. For example: "If impurity spectral signature exceeds threshold X, initiate corrective action Y (e.g., adjust cooling rate)."

- Run validation batches to demonstrate the system's ability to maintain process control within the designated design space, directly contributing to PMI reduction.
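The PLSR modeling step in the methodology above can be illustrated with a compact single-response PLS (PLS1) implementation using the NIPALS algorithm. This NumPy sketch is illustrative only; in practice a validated chemometric software suite would be used. The synthetic API and impurity bands in the demo are hypothetical and noise-free.

```python
import numpy as np


def pls1_fit(X, y, n_components):
    """Fit a single-response PLS model with NIPALS.

    Returns (x_mean, y_mean, coef) so that y_hat = (X - x_mean) @ coef + y_mean.
    """
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean         # mean-centred working copies
    weights, loadings, y_loadings = [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk
        w = w / np.linalg.norm(w)           # unit weight vector
        t = Xk @ w                          # latent-variable scores
        tt = t @ t
        p = Xk.T @ t / tt                   # X loadings
        q = (yk @ t) / tt                   # y loading (scalar)
        Xk = Xk - np.outer(t, p)            # deflate X
        yk = yk - q * t                     # deflate y
        weights.append(w)
        loadings.append(p)
        y_loadings.append(q)
    W = np.array(weights).T                 # shape (n_vars, n_components)
    P = np.array(loadings).T
    Q = np.array(y_loadings)
    coef = W @ np.linalg.solve(P.T @ W, Q)  # regression vector in original X space
    return x_mean, y_mean, coef


def pls1_predict(model, X):
    """Apply a fitted PLS1 model to new spectra (rows of X)."""
    x_mean, y_mean, coef = model
    return (X - x_mean) @ coef + y_mean


if __name__ == "__main__":
    # Hypothetical overlapping API and impurity bands, noise-free mixtures
    rng = np.random.default_rng(0)
    axis = np.linspace(200, 1800, 400)
    pure = np.vstack([np.exp(-((axis - 1000) / 20) ** 2),
                      np.exp(-((axis - 1050) / 30) ** 2)])
    conc = rng.uniform(0.1, 1.0, size=(20, 2))
    model = pls1_fit(conc @ pure, conc[:, 1], n_components=2)
    new_conc = rng.uniform(0.1, 1.0, size=(5, 2))
    print(pls1_predict(model, new_conc @ pure) - new_conc[:, 1])
```

With real spectra, the number of latent variables would be chosen by cross-validation, and the independent test set described above would quantify prediction error.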

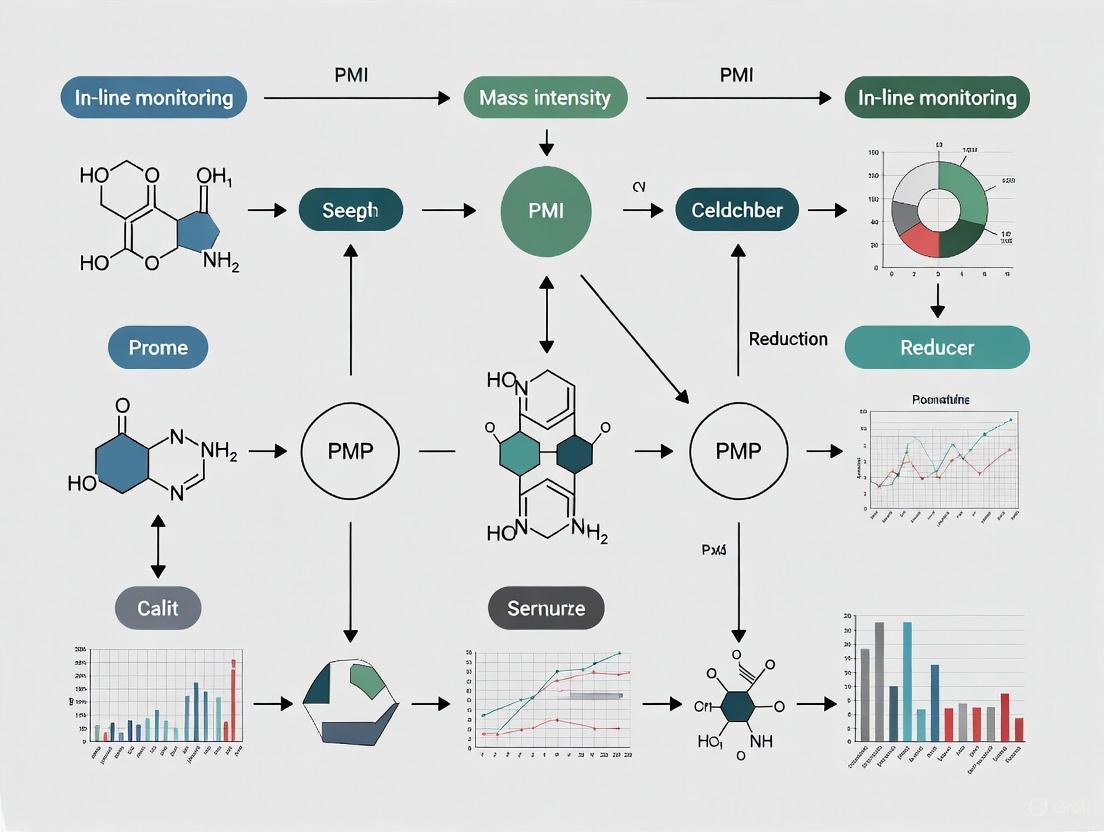

Visualization of the In-line Monitoring Control Logic

The following diagram illustrates the logical workflow and feedback loop established by an in-line monitoring system for real-time quality assurance and control.

In-line Monitoring Control Logic for PMI Reduction
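The feedback loop captioned above can be sketched together with the example rule from the crystallization methodology ("If impurity spectral signature exceeds threshold X, initiate corrective action Y"). In this minimal Python sketch, slowing the cooling ramp stands in for the unspecified action Y. The threshold, step size, and minimum rate are hypothetical placeholders for X and its tuning.

```python
from dataclasses import dataclass


@dataclass
class ControlDecision:
    cooling_rate_k_per_min: float
    alarm: bool


def impurity_control_step(predicted_impurity_pct, cooling_rate_k_per_min,
                          threshold_pct, rate_step, min_rate):
    """One pass of the monitor -> predict -> compare -> act loop.

    Hypothetical rule: if the model-predicted impurity exceeds the threshold,
    slow the cooling ramp by `rate_step`, never going below `min_rate`.
    """
    if predicted_impurity_pct > threshold_pct:
        new_rate = max(min_rate, cooling_rate_k_per_min - rate_step)
        return ControlDecision(new_rate, alarm=True)
    return ControlDecision(cooling_rate_k_per_min, alarm=False)


def run_loop(predictions, start_rate, threshold_pct, rate_step, min_rate):
    """Apply the rule to a stream of in-line predictions; return the rate history."""
    rate, history = start_rate, []
    for impurity in predictions:
        decision = impurity_control_step(impurity, rate, threshold_pct, rate_step, min_rate)
        rate = decision.cooling_rate_k_per_min
        history.append(rate)
    return history
```

The lower bound on the cooling rate keeps the corrective action within the validated design space rather than letting repeated alarms drive the process outside it.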

The Scientist's Toolkit: Research Reagent Solutions for In-line Monitoring

Successful implementation of in-line monitoring requires specific materials and reagents for system calibration, validation, and operation. The following table details key items essential for researchers in this field.

Table 6: Essential Research Reagents and Materials for In-line Monitoring

| Item | Function & Application Notes |
|---|---|
| Chemometric Software Suite | Enables the development of multivariate calibration models (e.g., PLSR, PCA) that translate complex spectral data into actionable process parameters. Critical for method development. |
| Process Analytical Technology (PAT) Probe | The physical interface with the process stream. Selection criteria include material of construction (e.g., Hastelloy for corrosion resistance), optical window (sapphire for durability), and form factor (immersion, flow-through). |
| Validation Standards Kit | A set of certified reference materials with precisely known concentrations of API, impurities, and solvents. Used for initial calibration and periodic verification of the in-line method's accuracy. |
| Static Mixer & Flow Cell Assembly | For side-stream analysis, this setup ensures a homogeneous and representative sample is presented to the analytical probe, vital for accurate and reproducible measurements. |
| Data Integration Middleware | Software that facilitates the secure and reliable communication between the analyzer's data system and the plant's Process Control System (PCS) or data historian. |

Experimental Protocol: Yield Optimization via Real-Time Reaction Monitoring

This protocol provides a detailed methodology for using in-line Fourier Transform Infrared (FTIR) spectroscopy to monitor a catalytic reaction endpoint, directly minimizing byproduct formation and maximizing yield.

1. Objective: To utilize in-line FTIR for precise, real-time endpoint detection in a stoichiometrically sensitive coupling reaction, thereby optimizing yield and reducing PMI from unreacted starting materials or side-products.

2. Materials and Equipment:

- Jacketed Lab Reactor (e.g., 2 L)

- In-line FTIR Spectrometer with ATR (Attenuated Total Reflectance) flow cell

- Circulating Chiller/Heater for temperature control

- Reagents: API Intermediate, Catalyst, Solvents

3. Methodology:
   1. Baseline Establishment: Charge the reactor with starting materials and solvent. Begin agitation and temperature control. Start recording FTIR spectra immediately to establish a spectral baseline.
   2. Reaction Initiation & Monitoring: Introduce the catalyst to initiate the reaction. Continuously collect FTIR spectra (e.g., every 30 seconds), monitoring key peaks for the starting material (e.g., C=O stretch at 1710 cm⁻¹) and the desired product (e.g., C-N stretch at 1240 cm⁻¹).
   3. Endpoint Determination: The reaction endpoint is identified by the plateau of the product peak area together with the disappearance (or stabilization at a minimal level) of the starting-material peak, as calculated by the integrated chemometric model.
   4. Quenching and Work-up: Quench the reaction as soon as the FTIR data indicate the endpoint. Compare the yield and impurity profile (via off-line HPLC) against a control batch stopped according to a fixed-time recipe.

4. Expected Outcome: Batches controlled by the in-line FTIR endpoint detection protocol are expected to show a significant reduction in cycle time (by eliminating unnecessary "safety margins") and a measurable increase in yield due to the minimization of decomposition or side reactions that occur when the reaction is allowed to proceed beyond its optimal endpoint.
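The endpoint criterion in step 3 combines a product-peak plateau with a depleted starting-material peak. It can be sketched as a function over the peak-area time series. This is an illustrative sketch under stated assumptions. `detect_endpoint`, the plateau test (relative spread over a moving window), and all thresholds are hypothetical, not values from the protocol. The peak areas are assumed to come from the chemometric model.

```python
import numpy as np


def detect_endpoint(times_s, product_area, sm_area, window=6, plateau_tol=0.01, sm_fraction=0.02):
    """Return the first time (s) at which both hypothetical endpoint criteria hold, else None.

    Criteria (illustrative thresholds, not from the protocol):
      * product peak area has plateaued: over the last `window` spectra its
        (max - min) / mean is below `plateau_tol`;
      * starting-material peak area has fallen to <= `sm_fraction` of its initial value.
    """
    t = np.asarray(times_s, dtype=float)
    prod = np.asarray(product_area, dtype=float)
    sm = np.asarray(sm_area, dtype=float)
    sm_initial = sm[0]
    for i in range(window - 1, len(t)):
        recent = prod[i - window + 1 : i + 1]
        mean = recent.mean()
        if mean <= 0:
            continue                      # no product signal yet
        plateaued = (recent.max() - recent.min()) / mean < plateau_tol
        sm_consumed = sm[i] <= sm_fraction * sm_initial
        if plateaued and sm_consumed:
            return float(t[i])
    return None


# Synthetic first-order reaction sampled every 30 s (k = 1/600 s^-1, arbitrary units):
times = np.arange(0, 3600, 30)
sm_peak = np.exp(-times / 600)
product_peak = 1 - sm_peak
print(detect_endpoint(times, product_peak, sm_peak))   # 2370.0
```

Requiring both criteria guards against calling the endpoint during a temporary stall in product formation while starting material is still present.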

The establishment of a robust control strategy in biopharmaceutical manufacturing hinges on the precise identification of Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs). A CPP is a process parameter whose variability impacts a CQA and therefore should be monitored or controlled to ensure the process produces the desired quality [16]. A CQA is a physical, chemical, biological, or microbiological property or characteristic that should be within an appropriate limit, range, or distribution to ensure the desired product quality [16]. The International Council for Harmonisation (ICH) Q8(R2) guidance states that a control strategy should include, at a minimum, control of the critical process parameters and material attributes [17].

The transition from traditional quality-by-testing (QbT) to a modern quality-by-design (QbD) framework is facilitated by Process Analytical Technology (PAT), defined by the FDA as "a system for designing, analyzing, and controlling manufacturing through timely measurements (i.e., during processing) of critical quality and performance attributes of raw and in-process materials and processes, with the goal of ensuring final product quality" [18]. PAT enables real-time monitoring and control, which is fundamental for implementing continuous manufacturing and real-time release (RTR) of products [18]. This approach is crucial for Process Monitoring and Intervention (PMI) reduction as it shifts the focus from end-product testing to building quality directly into the manufacturing process, thereby reducing the need for extensive offline testing and intervention.

Systematic Identification of CPPs and CQAs

A Framework for Selection

A systematic approach to selecting CPPs, aligned with ICH Q8, Q9, Q10, and Q11 guidelines, involves several key steps [17]:

- Identify CQAs for the drug product and substance, which form the foundation for determining what needs to be controlled.

- Define all unit operations and their associated equipment sets across upstream and downstream processes.

- Complete a quality risk management (QRM) assessment for all materials and unit operations to identify factors with potential influence on CQAs.

- Explore the design space for key factors using Design of Experiments (DoE) or other multivariate methods.

- Determine the factor effect size and select CPPs based on their impact on CQAs.

- Evaluate CPPs for ease of control and practical application to process control.

Assessing Practical Significance

A critical challenge is distinguishing statistically significant effects from those that are practically significant. A methodology combining process risk (Z score) and parameter effect size (20% rule) provides a consistent framework for this assessment [16].

- Z Score: Evaluates process risk by expressing the distance between the data average and a specification limit in units of standard deviation. It is calculated as Z = (U - x̄)/s, where U is the upper specification limit, x̄ is the data average, and s is the standard deviation [16].
  - Z > 6: Low-risk process; no practically significant parameters.
  - Z < 2: High-risk process; statistically significant parameters are generally practically significant.
  - 2 ≤ Z ≤ 6: The effect size of individual parameters must be quantified.

- 20% Rule: For processes with a Z score between 2 and 6, a parameter is considered practically significant if its effect size is greater than 20% of the specification range [16].

This statistical approach prevents the "dilution" of the control strategy by including unimportant process parameters as CPPs, ensuring focus remains on parameters truly critical to product quality [16].
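The Z-score bands and the 20% rule can be combined into a short decision function. The sketch below assumes a parameter that has already been shown to be statistically significant. It uses the one-sided (upper-limit) Z defined above. `z_score`, `practically_significant`, and the example data are hypothetical.

```python
import statistics


def z_score(data, usl):
    """Z = (U - x_bar) / s with U the upper specification limit (one-sided, as above)."""
    x_bar = statistics.mean(data)
    s = statistics.stdev(data)                      # sample standard deviation
    return (usl - x_bar) / s


def practically_significant(z, effect_size, spec_range):
    """Apply the Z-score bands and the 20% rule to a statistically significant parameter."""
    if z > 6:
        return False                                # low-risk process
    if z < 2:
        return True                                 # high-risk process
    return abs(effect_size) > 0.20 * spec_range     # 2 <= Z <= 6: 20% rule


# Hypothetical CQA results with specification 3.0-5.0 (upper limit U = 5.0):
results = [4.1, 4.3, 3.9, 4.0, 4.2]
z = z_score(results, usl=5.0)                       # about 5.69, so the 20% rule applies
print(practically_significant(z, effect_size=0.35, spec_range=2.0))  # False
print(practically_significant(z, effect_size=0.50, spec_range=2.0))  # True
```

For a two-sided specification, one common variant uses the smaller of the upper and lower Z values. That extension is an assumption and is not part of the framework cited above.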

PAT Tools and In-line Monitoring for Downstream Processing

While PAT is more common upstream, its application in downstream processing (DSP) is critical as DSP accounts for approximately 80% of biopharmaceutical production costs and directly impacts final product quality [18]. The following table summarizes key CPPs, monitored CQAs, and corresponding PAT tools for major downstream unit operations.

Table 1: Key CPPs, CQAs, and PAT Tools in Downstream Processing

| Unit Operation | Critical Process Parameters (CPPs) | Critical Quality Attributes (CQAs) | PAT Tools & Monitoring Solutions |
|---|---|---|---|
| Buffer Preparation | pH, conductivity [19] | Buffer composition, ionic strength | In-line pH and conductivity sensors (e.g., Hamilton SU OneFerm Arc 120, Conducell 4USF Arc 120) for real-time monitoring and control of in-line dilution [19]. |
| Chromatography | pH, conductivity, flow rate, protein titer, temperature [19] | Purity (HCP, DNA, RNA levels), aggregate levels, product-related variants [19] | In-line sensors for pH, conductivity, and UV for protein concentration; Raman spectroscopy for real-time monitoring of aggregation and fragmentation [7] [19]. |
| Tangential Flow Filtration (TFF) | pH, conductivity, transmembrane pressure, feed/permeate flow rates, protein concentration, temperature [19] | Protein concentration, purity, aggregate levels, buffer exchange efficiency | In-line UV sensors for protein concentration; pressure and flow sensors; in-line pH and conductivity sensors for monitoring buffer exchange [19]. |
| Viral Inactivation | pH, hold time, temperature | Viral clearance, product stability | In-line pH sensors to ensure maintenance of the narrow pH window required for effective inactivation. |

Advanced PAT in Practice: Raman Spectroscopy

Raman spectroscopy is emerging as a powerful PAT tool for in-line monitoring of multiple CQAs simultaneously. Its advantages include minimal interference from water and multivariate spectra from which several attributes can be modeled at once [7]. A recent study demonstrated its capability to monitor product aggregation and fragmentation every 38 seconds during the elution phase of affinity chromatography, a critical unit operation for impurity removal [7].

Key Implementation Steps:

- System Calibration: A high-throughput calibration was achieved using an integrated robotic system (Tecan) to create a mixing series from chromatography fractions, generating 169 calibration data points matched with off-line analytical results (e.g., SE-HPLC for aggregates) [7].

- Data Preprocessing: An optimized preprocessing pipeline (e.g., high-pass digital Butterworth filtering and sapphire peak normalization) is applied to reduce noise and correct for spectral distortions from factors like flow rate variations [7].

- Model Training: A large calibration dataset enables the training of various regression models, such as Convolutional Neural Networks (CNN) and Partial Least Squares (PLS), to deconvolute Raman spectra into accurate, real-time CQA measurements [7].
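The preprocessing step (high-pass Butterworth filtering followed by sapphire-peak normalization) can be sketched in plain NumPy. The filter order, cutoff, and reference window used in [7] are not stated here, so every value below is an assumption. The sketch uses:

- a first-order Butterworth high-pass whose coefficients are derived by the bilinear transform, using the same normalized-cutoff convention as `scipy.signal.butter`;
- forward-backward application so that peak positions are not shifted;
- normalization to a sapphire reference band assumed to lie near 418 cm⁻¹.

In production code, `scipy.signal.butter` together with `scipy.signal.filtfilt` would be the usual route.

```python
import numpy as np


def butterworth1_highpass(x, wn):
    """Causal first-order Butterworth high-pass filter (bilinear transform).

    `wn` is the cutoff as a fraction of the Nyquist frequency (0 < wn < 1),
    following the convention of scipy.signal.butter.
    """
    if not 0.0 < wn < 1.0:
        raise ValueError("wn must be between 0 and 1")
    k = np.tan(np.pi * wn / 2.0)                  # pre-warped analogue cutoff
    b0 = 1.0 / (1.0 + k)
    b1 = -b0
    a1 = (k - 1.0) / (k + 1.0)
    x = np.asarray(x, dtype=float)
    y = np.empty_like(x)
    x_prev, y_prev = x[0], 0.0                    # start at rest on the first sample
    for n, xn in enumerate(x):
        y[n] = b0 * xn + b1 * x_prev - a1 * y_prev
        x_prev, y_prev = xn, y[n]
    return y


def zero_phase_highpass(x, wn):
    """Forward-backward filtering so peaks are not shifted (like filtfilt, without padding)."""
    forward = butterworth1_highpass(x, wn)
    return butterworth1_highpass(forward[::-1], wn)[::-1]


def preprocess_raman(spectrum, shifts_cm1, wn=0.02, ref_window=(410.0, 426.0)):
    """High-pass filter, then scale so the sapphire reference band has unit height.

    `ref_window` assumes the sapphire band near 418 cm-1; adjust for the actual probe window.
    """
    filtered = zero_phase_highpass(spectrum, wn)
    shifts = np.asarray(shifts_cm1, dtype=float)
    in_window = (shifts >= ref_window[0]) & (shifts <= ref_window[1])
    if not in_window.any():
        raise ValueError("reference window lies outside the spectral range")
    ref_height = filtered[in_window].max()
    if ref_height <= 0:
        raise ValueError("no positive reference peak found")
    return filtered / ref_height
```

Because the filter blocks DC, a constant background offset (for example, a shift in the fluorescence level) leaves the normalized spectrum unchanged. High-frequency noise, by contrast, is not removed by a high-pass filter.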

Experimental Protocols for In-line Monitoring

Protocol: Real-Time Monitoring of Product Quality Attributes via Raman Spectroscopy During Affinity Chromatography

This protocol details the procedure for in-line monitoring of protein aggregation and fragmentation during the elution step of an affinity capture unit operation [7].

1. Research Reagent Solutions

Table 2: Essential Materials for Raman Spectroscopy PAT

| Item | Function/Description |
|---|---|
| Raman Spectrometer | Equipped with virtual slit technology for fast signal collection on the order of seconds [7]. |
| Flow Cell | In-line flow cell integrated into the elution line of the chromatography system. |
| Harvested Cell Culture Fluid (HCCF) | The feedstock containing the target protein (e.g., an IgG1 mAb) and impurities. |
| Chromatography System & Resin | For performing the affinity capture step (e.g., Protein A chromatography). |
| Calibration Samples | Series of elution fractions from a representative chromatography run, used to build the quantitative model. |
| Liquid Handling Robot | Automated system (e.g., Tecan) for precise mixing of fractions to generate a large calibration dataset [7]. |
| Off-line Analytics | SE-HPLC or similar methods for validating aggregate and fragment levels in calibration samples [7]. |

2. Methodology

Step 1: System Setup and Calibration

- Integrate the Raman spectrometer with an in-line flow cell on the elution line of the chromatography system.

- Perform an initial affinity chromatography run and collect elution fractions.

- Use a liquid handling robot to create a mixing series from the collected fractions, generating a wide range of aggregate and fragment levels. Analyze these mixtures using off-line SE-HPLC to obtain reference CQA values [7].

- Collect Raman spectra for each calibration sample and pair them with the off-line analytical data to create a robust calibration dataset.

Step 2: Model Development and Training

- Preprocess the spectral data (e.g., Butterworth filtering, normalization) to reduce noise.

- Train a suite of computational models (e.g., CNN, PLS, SVR) on the calibration dataset to establish a relationship between the Raman spectra and the CQAs (aggregates, fragments). Select the best-performing model based on metrics like R² and Mean Absolute Error (MAE) [7].
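Choosing among the trained models by R² and MAE can be sketched as below. The function names and example numbers are hypothetical. The ranking rule (highest R² first, lowest MAE as tie-break) is an assumption, because the text does not say how the two metrics are weighed. Predictions are assumed to come from a held-out validation set.

```python
import numpy as np


def r2_score(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def mean_absolute_error(y_true, y_pred):
    return float(np.mean(np.abs(np.asarray(y_true, float) - np.asarray(y_pred, float))))


def select_best_model(y_true, predictions):
    """predictions: model name -> predictions on the same validation samples.

    Ranks by highest R2, then lowest MAE (the tie-break rule is an assumption).
    """
    scores = {name: (r2_score(y_true, p), mean_absolute_error(y_true, p))
              for name, p in predictions.items()}
    best = min(scores, key=lambda name: (-scores[name][0], scores[name][1]))
    return best, scores


# Made-up validation data: aggregate level (%) from SE-HPLC vs. model predictions.
reference = [1.0, 2.0, 3.0, 4.0]
preds = {"PLS": [1.1, 1.9, 3.2, 3.8], "CNN": [1.0, 2.0, 3.0, 4.1], "SVR": [2.0, 2.0, 2.0, 2.0]}
best, scores = select_best_model(reference, preds)
print(best, scores[best])
```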

Step 3: Real-Time Monitoring and Control

- For subsequent manufacturing runs, collect Raman spectra in real-time during the elution phase.

- Feed the preprocessed spectra into the trained model to obtain predictions for aggregate and fragment levels every 38 seconds.

- Use this real-time data for process tracking and to inform or automate process control decisions, ensuring CQAs remain within the desired range.

The workflow for this protocol, from calibration to real-time control, is outlined below.

Protocol: In-line Density Monitoring for Dry Granulation

In pharmaceutical solid dosage manufacturing, in-line monitoring is vital for continuous processing. This protocol describes an in-line density measurement for a roller compactor in dry granulation, a key PAT tool for real-time control [13].

1. Methodology

- Step 1: System Integration
  - Install an inline density measurement system as an add-on to the roller compactor. The system typically consists of two valves in the outlet product stream, with the lower valve positioned on weighing cells [13].

- Step 2: Measurement Sequence
  - Close the lower valve and open the upper valve to allow the granules to fill the space between them.
  - Close the upper valve and open the lower valve, allowing the collected sample to fall onto the weighing cells.
  - The mass of the sample is measured, and the system calculates the ribbon density (mass flow / volume flow) [13].

- Step 3: Closed-Loop Control
  - The measured density is compared to the target setpoint.
  - The system's control software automatically adjusts the roller compactor's press force to maintain the ribbon density within the defined range, enabling fully automated, closed-loop control without stopping the continuous line [13].
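The closed loop in Step 3 can be illustrated with an incremental proportional-integral (PI) controller. The source does not specify the vendor's control algorithm, so the PI form, gains, force limits, and linear process model below are all hypothetical. The sketch also assumes the chamber between the valves has a known, fixed fill volume, so that density = sample mass / volume.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PressForceController:
    """Hypothetical incremental PI controller for roller-compactor press force.

    All gains, limits, and units are illustrative placeholders.
    """
    setpoint: float                 # target density, g/cm3
    kp: float                       # proportional gain, kN per (g/cm3)
    ki: float                       # integral gain, kN per (g/cm3) per sample
    force_min: float                # press-force limits, kN
    force_max: float
    force: float                    # current press force, kN
    _prev_error: Optional[float] = field(default=None, init=False, repr=False)

    def update(self, sample_mass_g: float, chamber_volume_cm3: float) -> float:
        """Process one weighed sample and return the new press-force setpoint."""
        density = sample_mass_g / chamber_volume_cm3        # mass over known fill volume
        error = self.setpoint - density
        prev = error if self._prev_error is None else self._prev_error
        # Velocity form: the integral action is implicit, and clamping the output
        # also prevents integral wind-up.
        self.force += self.kp * (error - prev) + self.ki * error
        self.force = min(max(self.force, self.force_min), self.force_max)
        self._prev_error = error
        return self.force


# Illustrative run against a made-up linear process model (density = 0.5 + 0.01 * force);
# the real force-density relation is formulation-specific.
ctrl = PressForceController(setpoint=1.0, kp=20.0, ki=50.0, force_min=20.0, force_max=100.0, force=40.0)
chamber_volume = 50.0                                   # cm3, assumed known fill volume
for _ in range(40):
    true_density = 0.5 + 0.01 * ctrl.force
    ctrl.update(true_density * chamber_volume, chamber_volume)
print(round(ctrl.force, 2))   # converges toward 50 kN for this model
```

The velocity (incremental) form was chosen because saturating the output at the force limits automatically stops the integral action from accumulating.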

Data Integration and Control Strategies

The ultimate goal of in-line monitoring is to establish a fully integrated and automated control strategy. This involves using real-time CPP and CQA data not just for monitoring, but for proactive process control.

- From Periodic to Adaptive Monitoring: A study comparing monitoring strategies demonstrated that adaptive monitoring and intervention (AMI), which uses continuous "dose-free" imaging and acts only when necessary, was significantly more effective than periodic monitoring and intervention (PMI) at managing process variability, with a mean over-threshold time of 0.6% compared with ~4% for PMI [20]. By analogy, the same principle suggests that continuous PAT feedback should outperform infrequent off-line sampling in bioprocessing.

- Closed-Loop Control: Advanced PAT tools enable closed-loop control systems. For example, the in-line density control for roller compactors automatically adjusts the press force based on real-time density measurements to maintain the defined parameter range during production [13].

- Digital Integration: Modern sensors with digital outputs (e.g., Hamilton intelligent ARC sensors) can be seamlessly integrated into process control systems. This provides robust communication, real-time sensor health diagnostics, and simplified calibration, reducing the risk of batch failure due to probe fouling or signal issues [19].

The following diagram illustrates the information flow in a modern, PAT-driven bioprocess with closed-loop control.

The systematic monitoring of CPPs and CQAs from upstream to downstream processing is a cornerstone of modern biopharmaceutical quality assurance. By implementing a QbD framework and leveraging advanced PAT tools such as in-line Raman spectroscopy and automated density control, manufacturers can gain substantially deeper process understanding and tighter control. This shift from offline, periodic testing to continuous, in-line monitoring and adaptive control is fundamental to reducing PMI, enhancing efficiency, minimizing product loss, and ultimately ensuring the consistent production of high-quality, safe, and effective therapeutics. The integration of real-time data with predictive models and closed-loop control strategies paves the way for intelligent, autonomous biomanufacturing systems.

Implementing In-Line Monitoring Technologies: From Probes to Spectrometers

Application Notes

Probe-based sensors are critical tools for in-line monitoring in pharmaceutical manufacturing, directly contributing to Process Mass Intensity (PMI) reduction by enabling real-time process control, minimizing waste, and ensuring consistent product quality.

pH Sensors monitor the acidity or alkalinity of solutions in bioreactors and purification steps. Precise pH control is essential for optimizing reaction yields, maintaining cell viability in bioprocesses, and ensuring the stability of active pharmaceutical ingredients (APIs). In-line monitoring reduces the need for off-line sampling, lowering sample waste and the risk of contamination [21].

Dissolved Oxygen (DO) Sensors are vital in aerobic fermentation and bioreactor processes. Maintaining optimal DO levels is crucial for maximizing biomass yield and ensuring the efficient production of biologics. Real-time monitoring prevents over-aeration, thereby reducing energy consumption, and under-aeration, which can lead to batch failures, directly impacting PMI by improving process efficiency and reducing waste [21].

Capacitance Sensors are primarily used for real-time monitoring of biomass in cell culture processes. They measure the permittivity of the culture, which correlates with viable cell density. This allows for precise control of feeding strategies and the determination of optimal harvest times, leading to increased product titers and more efficient raw material use, which lowers PMI [22].

The integration of these sensors aligns with the Industry 4.0 and digital transformation trends in pharmaceutical manufacturing, which leverage Manufacturing Execution Systems (MES) and Process Analytical Technology (PAT) for real-time quality control and data-driven decision-making [22].

Table 1: Key Performance Parameters of Probe-Based Sensors

| Sensor Type | Measured Parameter | Typical In-line Applications | Key Impact on PMI Reduction |
|---|---|---|---|
| pH | Hydrogen ion activity (pH) | Bioreactors, chemical synthesis, purification | Optimizes reaction yields and cell growth, minimizing reprocessing and raw material waste. |
| Dissolved Oxygen (DO) | O₂ concentration in liquid (mg/L) | Aerobic fermentation, cell culture | Improves biomass yield and process efficiency, reduces energy consumption from over-aeration. |
| Capacitance | Permittivity (pF/cm) | Bioreactors (viable cell density) | Enables precise feeding and harvest, maximizing output and efficient use of media and substrates. |

Experimental Protocols

Protocol for In-line pH Monitoring in a Bioreactor

This protocol details the methodology for real-time pH monitoring and control in a mammalian cell culture bioreactor to maintain optimal growth conditions and improve product yield.

2.1.1 Research Reagent Solutions

Table 2: Essential Materials for Bioreactor pH Monitoring

| Item | Function |
|---|---|
| Sterilizable pH Probe (e.g., combined glass electrode) | The core sensor for in-line measurement of hydrogen ion activity. |
| Bioreactor System | Provides a controlled environment (temperature, agitation, aeration) for the cell culture. |
| Cell Culture Media | The nutrient-rich solution that supports cell growth and production. |
| Acid (e.g., CO₂ gas or HCl solution) | Used by the control system to lower the pH when it rises above the setpoint. |
| Base Solution (e.g., NaHCO₃ or NaOH) | Used by the control system to raise the pH when it falls below the setpoint. |
| Buffer Solutions (pH 4.01, 7.00, 10.01) | Used for the calibration of the pH probe to ensure measurement accuracy. |
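Calibration with the three buffers and the acid/base decision can be sketched as follows. The sketch fits a straight line of probe voltage (mV) against buffer pH and reports the slope as a percentage of the theoretical Nernst slope (about -59.16 mV/pH at 25 °C). It then applies a simple deadband dosing rule. Function names, the deadband width, and the example readings are hypothetical. Real controllers also compensate for temperature and typically use PID rather than on/off logic.

```python
import math

import numpy as np

FARADAY = 96485.33212          # C/mol
GAS_CONSTANT = 8.314462618     # J/(mol*K)


def nernst_slope_mv(temp_c=25.0):
    """Theoretical glass-electrode slope in mV per pH unit (about -59.16 at 25 °C)."""
    return -1000.0 * math.log(10) * GAS_CONSTANT * (temp_c + 273.15) / FARADAY


def calibrate_ph_probe(buffer_ph, measured_mv, temp_c=25.0):
    """Least-squares line mV = intercept + slope * pH through the buffer readings."""
    slope, intercept = np.polyfit(buffer_ph, measured_mv, 1)
    efficiency_pct = 100.0 * slope / nernst_slope_mv(temp_c)
    return float(slope), float(intercept), float(efficiency_pct)


def mv_to_ph(mv, slope, intercept):
    """Convert a probe reading (mV) to pH with the calibration line."""
    return (mv - intercept) / slope


def dosing_action(ph, setpoint, deadband=0.05):
    """Acid above the band, base below it, nothing inside it (deadband is an assumption)."""
    if ph > setpoint + deadband:
        return "acid"      # e.g., CO2 sparging
    if ph < setpoint - deadband:
        return "base"      # e.g., NaHCO3 or NaOH addition
    return "none"


buffers = [4.01, 7.00, 10.01]
readings_mv = [174.9, 0.2, -176.0]                # made-up probe readings
slope, intercept, eff = calibrate_ph_probe(buffers, readings_mv)
ph = mv_to_ph(-12.0, slope, intercept)
print(round(eff, 1), round(ph, 2), dosing_action(ph, setpoint=7.0))
```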

2.1.2 Workflow Diagram

Protocol for In-line Dissolved Oxygen (DO) Monitoring

This protocol describes the setup and use of dissolved oxygen sensors for monitoring and controlling oxygen levels in a bioreactor.

2.2.1 Sensor Operating Principles

DO sensors operate primarily on two principles [21]:

- Electrochemical Sensors: Use a membrane-covered electrode that generates an electrical current proportional to the oxygen concentration diffusing through the membrane.

- Optical Sensors: Employ a luminescent dye excited by a light source. Because oxygen quenches the luminescence, the luminescence decay time decreases as the oxygen concentration increases.
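For optical sensors, the link between decay time and oxygen is commonly described by the Stern-Volmer relation τ₀/τ = 1 + K_SV·[O₂]. The sketch below assumes the ideal single-site form, calibrated at two points: zero oxygen and a known reference level. Commercial sensors generally use modified multi-site models with temperature compensation. The function names and lifetimes are hypothetical.

```python
def stern_volmer_constant(tau0_us, tau_ref_us, do_ref):
    """K_SV from two calibration points: zero oxygen (tau0) and a known DO level."""
    return (tau0_us / tau_ref_us - 1.0) / do_ref


def do_from_lifetime(tau_us, tau0_us, k_sv):
    """Ideal Stern-Volmer relation tau0 / tau = 1 + K_SV * DO, solved for DO."""
    return (tau0_us / tau_us - 1.0) / k_sv


# Made-up lifetimes: 60 us with no oxygen, 20 us at 100 % air saturation.
k_sv = stern_volmer_constant(tau0_us=60.0, tau_ref_us=20.0, do_ref=100.0)
print(do_from_lifetime(30.0, tau0_us=60.0, k_sv=k_sv))   # about 50 (% air saturation)
```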

2.2.2 Workflow Diagram

Protocol for Biomass Monitoring via Capacitance

This protocol outlines the use of capacitance probes for monitoring viable cell density in a bioreactor, informing critical process decisions.

2.3.1 Research Reagent Solutions

Table 3: Essential Materials for Capacitance-Based Biomass Monitoring

| Item | Function |
|---|---|
| Sterilizable Capacitance Probe | Measures the permittivity of the culture, which is proportional to the concentration of viable cells with intact membranes. |
| Single-Use Bioreactor or Stainless-Steel Vessel | The vessel housing the cell culture and sensor. |
| Cell Line and Culture Media | The biological system being monitored. |
| Calibration Standards | Solutions of known permittivity for sensor verification. |
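Capacitance readings are usually translated into viable cell density (VCD) through a linear calibration against off-line cell counts. The sketch fits that line with `numpy.polyfit`. The linear form is an assumption that may break down when cell size changes, for example late in a culture. The function names and data are hypothetical.

```python
import numpy as np


def fit_capacitance_calibration(permittivity_pf_cm, offline_vcd):
    """Least-squares line VCD = slope * permittivity + intercept."""
    slope, intercept = np.polyfit(permittivity_pf_cm, offline_vcd, 1)
    return float(slope), float(intercept)


def predict_vcd(permittivity_pf_cm, slope, intercept):
    """Convert in-line permittivity readings (pF/cm) into estimated VCD."""
    return slope * np.asarray(permittivity_pf_cm, dtype=float) + intercept


# Made-up paired data: probe permittivity vs. off-line VCD (1e6 cells/mL).
perm = [0.5, 1.0, 2.0, 4.0, 6.0]
vcd = [0.7, 1.3, 2.5, 4.9, 7.3]
slope, intercept = fit_capacitance_calibration(perm, vcd)
print(predict_vcd([3.0, 5.0], slope, intercept))
```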

2.3.2 Workflow Diagram

The implementation of Process Analytical Technology (PAT) is revolutionizing biopharmaceutical manufacturing by enabling real-time monitoring and control of Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs). This advancement is crucial for reducing Process Mass Intensity (PMI), a key metric for assessing the environmental impact and efficiency of pharmaceutical processes. Among the most powerful tools for in-line monitoring are vibrational spectroscopic techniques, including Raman, Near-Infrared (NIR), and Mid-Infrared (MIR) spectroscopy. These non-destructive, reagent-free techniques provide molecular-level insights into process streams, allowing for rapid decision-making and intervention. When integrated with advanced chemometric models and machine learning algorithms, they form the cornerstone of modern Quality by Design (QbD) paradigms, facilitating the development of more sustainable and efficient manufacturing processes with significantly reduced PMI [7] [23].

Raman, NIR, and MIR spectroscopy offer complementary strengths for in-line monitoring. The following table summarizes their key characteristics, advantages, and limitations, providing a guide for selecting the appropriate technique for specific PMI reduction applications.

Table 1: Comparison of Advanced Spectroscopic Techniques for In-line Monitoring

| Feature | Raman Spectroscopy | Near-Infrared (NIR) Spectroscopy | Mid-Infrared (MIR) Spectroscopy |
|---|---|---|---|
| Physical Principle | Inelastic scattering of light [24] | Absorption of light (overtone & combination vibrations) [25] | Absorption of light (fundamental vibrations) [26] |
| Spectral Range | Varies with laser excitation (e.g., 785 nm) [27] | 700–2500 nm [25] | 4000–200 cm⁻¹ [26] |
| Key Advantages | Low interference from water; good spatial resolution; specific molecular "fingerprints" [24] [27] | Fast measurements suitable for in-line use; deep penetration depth; robust fiber-optic probes [24] [25] | High sensitivity to functional groups; strong absorption bands; excellent for organics [23] [26] |
| Typical Limitations | Sensitivity to fluorescence; interference from ambient light [24] | Broad, overlapping spectral bands; lower spatial resolution [24] | Strong water absorption can interfere; requires short pathlengths (e.g., ATR) [23] |
| Example Performance (RMSEP) | Glucose: 0.92 g/L; Ethanol: 0.39 g/L; Biomass: 0.29 g/L [26] | Effective for content uniformity & tensile strength of flakes [28] | Glucose: 0.68 g/L; Ethanol: 0.48 g/L; Biomass: 0.37 g/L [26] |
| Ideal for PMI Reduction in | Cell culture monitoring (glucose, lactate); monitoring product aggregation & fragmentation [7] [27] | Roller compaction (content uniformity, tensile strength); polymer curing monitoring [28] [25] | Ultrafiltration/diafiltration (UF/DF) protein concentration; fermentation monitoring (ethanol, glucose) [23] [26] |

Detailed Experimental Protocols

Protocol 1: In-line Monitoring of Cell Culture Metabolites Using Raman Spectroscopy

This protocol details the use of Raman spectroscopy for real-time monitoring of key metabolites like glucose and lactate in a bioreactor, enabling fed-batch optimization to reduce raw material waste and PMI [27].

1. Research Reagent Solutions

- Cell Culture: Chinese Hamster Ovary (CHO) cell line expressing a therapeutic monoclonal antibody (mAb).

- Culture Media: Standard commercial cell culture media, composition known.

- Calibration Standards: Solutions with known concentrations of glucose and lactate in culture media, validated by reference methods (e.g., HPLC, ion chromatography) [27].

2. Equipment and Setup

- Raman Analyzer: Metrohm 2060 Raman Analyzer or equivalent with a 785 nm laser [27].

- Probe: Autoclavable immersion optic probe designed for in-line use in bioreactors.

- Software: Spectral acquisition and analysis software (e.g., Metrohm IMPACT and Vision).

- Bioreactor: Standard benchtop or production bioreactor with appropriate probe ports.

3. Procedure:
   1. System Calibration:
      - Collect Raman spectra from the calibration standards covering the expected concentration ranges (e.g., glucose: 0.1-40 g/L; lactate: 0.0-5.0 g/L).
      - Use reference analytical methods to determine the actual concentration of each standard.
      - Develop a multivariate calibration model (e.g., Partial Least Squares regression, PLS) linking the spectral features to the known concentrations [27].
   2. In-line Process Monitoring:
      - Aseptically install the Raman immersion probe into the bioreactor.
      - Initiate the cell culture process. The Raman analyzer collects spectra directly from the culture broth at set intervals (e.g., every minute).
      - In real time, the acquired spectra are pre-processed and fed into the calibration model.
      - The model outputs the predicted concentrations of glucose and lactate, providing a continuous trend of metabolite levels.
   3. Process Control:
      - Use the real-time glucose concentration to control feed pumps, preventing overfeeding (reducing waste) and underfeeding (maintaining cell health and productivity).
      - Monitor lactate accumulation to understand the cells' metabolic state and overall culture health [27].

4. Expected Outcomes: The Raman system is reported to achieve a standard error of prediction (SEP) of 0.20 g/L for glucose and 0.12 g/L for lactate, allowing tight control of the bioprocess and a significant reduction in media component waste, thereby lowering PMI [27].
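Using the real-time glucose value to drive a feed pump ultimately comes down to a mass balance. The volume v of feed at concentration C_f that brings a culture of volume V from concentration C to target C_t satisfies (C·V + C_f·v)/(V + v) = C_t, which gives v = V·(C_t − C)/(C_f − C_t). The sketch below makes simplifying assumptions: instant mixing and no glucose consumption during the addition. `glucose_feed_volume` and the numbers are hypothetical.

```python
def glucose_feed_volume(volume_l, glucose_g_per_l, target_g_per_l, feed_g_per_l):
    """Feed volume (L) that brings the culture to the target glucose concentration.

    Mass balance: (C*V + Cf*v) / (V + v) = Ct  ->  v = V*(Ct - C)/(Cf - Ct).
    Assumes instant mixing and no glucose consumption during the addition.
    """
    if feed_g_per_l <= target_g_per_l:
        raise ValueError("feed must be more concentrated than the target")
    if glucose_g_per_l >= target_g_per_l:
        return 0.0          # at or above target: do not feed (avoids overfeeding)
    return volume_l * (target_g_per_l - glucose_g_per_l) / (feed_g_per_l - target_g_per_l)


# Made-up example: 2.0 L culture, Raman reads 2.0 g/L, target 4.0 g/L, 400 g/L feed.
v = glucose_feed_volume(2.0, 2.0, 4.0, 400.0)
print(f"{v * 1000:.1f} mL")   # 10.1 mL
```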

Protocol 2: Real-time Monitoring of Protein Concentration during UF/DF using MIR Spectroscopy

This protocol describes the use of MIR-ATR spectroscopy for in-line monitoring of protein concentration during the ultrafiltration/diafiltration (UF/DF) step, a critical unit operation in downstream processing. Accurate monitoring prevents over-processing and buffer waste [23].

1. Research Reagent Solutions

- Protein Solution: Monoclonal antibody (mAb) from a CHO cell culture, post-purification.

- Buffers: Equilibration buffer (e.g., 40 mM Excipient I, 135 mM Excipient II, pH 6.0) and diafiltration buffer (e.g., 5 mM Excipient III, 240 mM Excipient IV, pH 6.0) [23].

2. Equipment and Setup

- MIR Spectrometer: e.g., Monipa MIR spectrometer (IRUBIS GmbH).

- Flow Cell: Single-use flow cell with a single-bounce silicon Attenuated Total Reflectance (ATR) crystal.

- UF/DF System: Tangential Flow Filtration (TFF) system with a suitable membrane (e.g., 30 kDa MWCO).

- Peristaltic Pump: To circulate the protein solution through the flow cell.

3. Procedure:
   1. Hardware Integration:
      - Integrate the MIR flow cell in-line on the feed line of the UF/DF system, ensuring a secure connection with minimal dead volume (~0.6 mL) [23].
   2. Calibration:
      - A one-point calibration based on the absorbance of the amide I and amide II peaks in the MIR spectrum can be sufficient for highly accurate protein concentration predictions when compared with validated off-line methods (e.g., OD280) [23].
      - Alternatively, a PLS regression model can be built using spectra from samples with a wide range of known protein concentrations (e.g., 17-200 mg/mL).
   3. In-line UF/DF Monitoring:
      - Start the UF/DF process according to the established protocol (ultrafiltration, diafiltration, then a second ultrafiltration step).
      - The MIR spectrometer continuously collects spectra as the protein solution passes over the ATR crystal.
      - The calibration model converts the spectral data, particularly the absorbance in the amide I and II regions, into a real-time protein concentration value.
   4. Process Endpoint Determination:
      - Use the real-time concentration data to determine the endpoint of the ultrafiltration steps precisely, ensuring the target protein concentration is reached without being exceeded, thus optimizing process time and buffer consumption.

4. Expected Outcomes: MIR spectroscopy with a simple one-point calibration algorithm can predict protein concentration with high accuracy, comparable to validated off-line analytical methods. This enables precise process control, reducing buffer volume and processing time, which directly contributes to PMI reduction in downstream purification [23].
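A one-point calibration works when the buffer-subtracted amide absorbance is proportional to protein concentration (Beer-Lambert behavior, calibration line through the origin). In that case a single reference sample fixes the slope. The exact algorithm in [23] is not given here. As assumptions, the sketch sums the amide I and amide II absorbances into one signal and declares the ultrafiltration endpoint once the target is reached. All names and values are hypothetical.

```python
def one_point_factor(ref_conc_mg_ml, ref_amide_i, ref_amide_ii):
    """Calibration factor (mg/mL per absorbance unit) from a single reference sample.

    Assumes buffer-subtracted amide absorbance is proportional to concentration
    (line through the origin) and uses the summed amide I + II absorbance as the
    signal; both choices are assumptions for illustration.
    """
    return ref_conc_mg_ml / (ref_amide_i + ref_amide_ii)


def protein_concentration(amide_i, amide_ii, factor):
    """Predicted protein concentration in mg/mL."""
    return factor * (amide_i + amide_ii)


def uf_endpoint_reached(conc_mg_ml, target_mg_ml):
    """True once the ultrafiltration step has reached the target concentration."""
    return conc_mg_ml >= target_mg_ml


factor = one_point_factor(50.0, ref_amide_i=0.15, ref_amide_ii=0.10)   # about 200 mg/mL per AU
conc = protein_concentration(0.36, 0.24, factor)                       # about 120 mg/mL
print(conc, uf_endpoint_reached(conc, target_mg_ml=100.0))
```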

Diagram 1: MIR-ATR In-line Monitoring Workflow for UF/DF.

Protocol 3: Monitoring of Roller Compaction using Near-Infrared (NIR) Spectroscopy

This protocol outlines the use of NIR spectroscopy for the real-time monitoring of critical quality attributes (CQAs) like drug content and tensile strength during roller compaction, a dry granulation process. This ensures right-first-time production, minimizing material rejection and rework [28].

1. Research Reagent Solutions

- Powder Blends: Formulations containing micronized Active Pharmaceutical Ingredient (API, e.g., Chlorpheniramine Maleate), microcrystalline cellulose (MCC), lactose, and magnesium stearate (MgSt) [28].

- Experimental Design: A full factorial design is recommended to generate calibration samples with varying API concentrations and roller compaction forces.

2. Equipment and Setup

- NIR Spectrometer: Equipped with a reflectance probe. Compact micro-spectrometers (1650-2150 nm range) can be cost-effective [28] [25].

- Roller Compactor: Fitted with ribbed rolls.

- Probe Positioning: Optimize the position of the NIR reflection probe and acquire spectra dynamically (while the flakes are moving) to minimize spectral variability from the undulating surface of the ribbed flakes. A beam size of ~1.2 mm is recommended [28].

3. Procedure:
   1. Calibration Model Development:
      - Prepare powder blends with different API concentrations (e.g., 0-8% w/w).
      - Compact each blend at different roll forces (e.g., 40-80 kN) to produce flakes with varying properties.
      - For each flake type, collect NIR spectra dynamically (while the flake is moving) using the optimized probe position.
      - Measure the reference values for the CQAs (e.g., drug content via HPLC, tensile strength via mechanical testing).
      - Pre-process the spectral data (e.g., Standard Normal Variate followed by first derivative) to remove physical light-scatter effects.
      - Develop PLS1 regression models (one per CQA) correlating the pre-processed spectral data (X-matrix) with the measured CQA values [28].
   2. In-line Monitoring:
      - Install the NIR probe at the outlet of the roller compactor to analyze the flakes immediately after formation.
      - During production, collect NIR spectra in real time and feed them into the validated PLS models.
      - The models output real-time predictions for drug content, tensile strength, and relative density.
   3. Process Control:
      - Use the real-time predictions for active feedback control of process parameters (e.g., roll force, feed screw speed) to maintain CQAs within the desired specification, ensuring consistent quality and minimizing waste.

4. Expected Outcomes: Robust NIR-PLS models can accurately predict ribbed flake attributes in real time. This allows immediate corrective actions, dramatically reducing out-of-specification batches and the associated material and energy waste, leading to a lower PMI [28].
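The "Standard Normal Variate followed by first derivative" pretreatment can be sketched in NumPy. SNV centers and scales each spectrum individually, which cancels multiplicative and additive scatter effects. The derivative then emphasizes band shape over baseline. This sketch uses simple finite differences (`numpy.gradient`). A Savitzky-Golay derivative (`scipy.signal.savgol_filter` with `deriv=1`) is a common smoother alternative. Function names and the example band are hypothetical.

```python
import numpy as np


def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    x = np.atleast_2d(np.asarray(spectra, dtype=float))
    mean = x.mean(axis=1, keepdims=True)
    std = x.std(axis=1, ddof=1, keepdims=True)
    return (x - mean) / std


def first_derivative(spectra, wavelengths_nm):
    """First derivative along the wavelength axis using finite differences."""
    x = np.atleast_2d(np.asarray(spectra, dtype=float))
    return np.gradient(x, np.asarray(wavelengths_nm, dtype=float), axis=1)


def preprocess_nir(spectra, wavelengths_nm):
    """SNV followed by first derivative, in the order given in the protocol."""
    return first_derivative(snv(spectra), wavelengths_nm)


wl = np.linspace(1650, 2150, 251)                 # micro-spectrometer range cited above
band = np.exp(-0.5 * ((wl - 1900) / 30) ** 2)     # made-up absorption band
# The same flake seen with different scatter (gain and offset):
a = preprocess_nir(band, wl)
b = preprocess_nir(1.8 * band + 0.25, wl)
print(np.allclose(a, b))   # True: SNV removed the multiplicative and additive effects
```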

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Spectroscopic PAT Development

| Item Name | Function/Application | Technical Notes |
|---|---|---|
| Chinese Hamster Ovary (CHO) Cell Culture | Model system for production of complex therapeutic proteins (mAbs) [7] [23]. | Industry-standard host cell line; metabolic monitoring (glucose/lactate) is critical for productivity and PMI reduction. |
| Hydroxypropyl Methylcellulose (HPMC) | Polymer used to create a sustained-release matrix in tablets [24]. | Its concentration and particle size, measurable via chemical imaging, determine the drug release rate, a key CQA. |
| Epoxidized Linseed Oil (ELSO) & Citric Acid | Bio-based epoxy resin system for composite materials [25]. | Model system for monitoring degree of curing in polymer processes using NIR spectroscopy. |
| Silicon ATR Crystal (Single-bounce) | Internal Reflection Element (IRE) for MIR spectroscopy in single-use flow cells [23]. | Enables in-line MIR measurements in bioprocesses; cost-effective alternative to diamond for single-use applications. |
| Partial Least Squares (PLS) Regression | Multivariate chemometric algorithm for building quantitative calibration models [28] [26]. | Correlates spectral data (X) with reference analytical data (Y) to predict concentrations of multiple analytes simultaneously. |
| Butterworth Filter | Digital signal processing filter for spectral preprocessing [7]. | A high-pass configuration suppresses slowly varying baseline components, while a low-pass configuration suppresses high-frequency noise. Used in [7] to correct spectral distortions such as those induced by flow-rate variations in liquid streams. |

Data Analysis and Chemometric Modeling

The transformation of spectral data into actionable process information is achieved through chemometrics. Partial Least Squares (PLS) regression is the most widely used technique for building quantitative models that relate spectral variations (X-matrix) to the property of interest, such as concentration or a CQA (Y-matrix) [28] [26]. The performance of these models is typically evaluated using metrics like the Root Mean Square Error of Prediction (RMSEP) and the coefficient of determination (R²).
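To make the PLS step concrete, the sketch below implements PLS1 (one response variable) with the classical NIPALS algorithm in NumPy and computes RMSEP and R² on a held-out set. It is an illustrative implementation, not the software used in [28] or [26]; the synthetic "spectra" (a single Gaussian band scaled by concentration, plus noise), the component count, and the helper names are hypothetical. In practice a validated library implementation (e.g., scikit-learn's `PLSRegression`) and cross-validation to choose the number of latent variables would normally be used.

```python
import numpy as np


def fit_pls1(X: np.ndarray, y: np.ndarray, n_components: int) -> dict:
    """Fit a PLS1 (single-response) model with the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean  # mean-centred copies, deflated in the loop
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w = w / np.linalg.norm(w)   # X weights: direction of maximal covariance with y
        t = Xr @ w                  # scores
        tt = t @ t
        p = Xr.T @ t / tt           # X loadings
        q_a = (yr @ t) / tt         # y loading
        Xr = Xr - np.outer(t, p)    # deflate X
        yr = yr - q_a * t           # deflate y
        W.append(w)
        P.append(p)
        q.append(q_a)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    # Regression vector expressed in the original (centred) X space
    coef = W @ np.linalg.solve(P.T @ W, q)
    return {"x_mean": x_mean, "y_mean": y_mean, "coef": coef}


def predict_pls1(model: dict, X: np.ndarray) -> np.ndarray:
    return (X - model["x_mean"]) @ model["coef"] + model["y_mean"]


def rmsep(y_true, y_pred) -> float:
    """Root Mean Square Error of Prediction."""
    diff = np.asarray(y_true) - np.asarray(y_pred)
    return float(np.sqrt(np.mean(diff ** 2)))


def r_squared(y_true, y_pred) -> float:
    """Coefficient of determination."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    axis = np.linspace(0.0, 1.0, 200)
    band = np.exp(-((axis - 0.4) / 0.05) ** 2)        # hypothetical analyte band
    conc = rng.uniform(0.0, 8.0, size=60)               # e.g. 0-8 % w/w
    spectra = np.outer(conc, band) + rng.normal(0.0, 0.01, size=(60, 200))
    model = fit_pls1(spectra[:40], conc[:40], n_components=2)   # calibration set
    pred = predict_pls1(model, spectra[40:])                      # test set
    print(f"RMSEP = {rmsep(conc[40:], pred):.4f}, R2 = {r_squared(conc[40:], pred):.5f}")
```

With as many latent variables as independent X columns, PLS1 reduces to ordinary least squares; keeping the component count small is what makes PLS robust to the strongly collinear channels of real spectra.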

Data preprocessing is a critical step to enhance the robustness of these models. Techniques include:

- Standard Normal Variate (SNV): Corrects for light scattering effects due to particle size differences, commonly used in NIR spectroscopy of solid samples [28].

- Smoothing and Derivatives: Reduce noise and resolve overlapping peaks.

- Digital Filtering (e.g., Butterworth filter): Effectively removes high-frequency noise and spectral distortions induced by process conditions, such as variations in flow rate. One study demonstrated a 19-fold reduction in noise after implementing an optimized preprocessing pipeline incorporating a Butterworth filter [7].
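The three preprocessing families listed above can be sketched as follows. SNV and the finite-difference derivative use NumPy only; the Butterworth step uses SciPy's `butter` and `filtfilt` for zero-phase filtering. The filter order, the cutoff, and the choice to filter along the spectral axis are assumptions for illustration, not the optimized pipeline of [7]. A Savitzky–Golay derivative (`scipy.signal.savgol_filter` with `deriv=1`) is often preferred to a plain finite difference because it smooths while differentiating.

```python
import numpy as np
from scipy.signal import butter, filtfilt


def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std


def first_derivative(spectra: np.ndarray) -> np.ndarray:
    """Simple finite-difference first derivative along the spectral axis."""
    return np.gradient(spectra, axis=1)


def butterworth_lowpass(spectra: np.ndarray, cutoff: float, order: int = 4) -> np.ndarray:
    """Zero-phase low-pass Butterworth filter along the last axis.

    `cutoff` is in cycles per sample and must be below 0.5 (the Nyquist frequency).
    """
    b, a = butter(order, cutoff, btype="low", fs=1.0)
    return filtfilt(b, a, spectra, axis=-1)


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    x = np.arange(500)
    clean = np.exp(-((x - 250) / 25.0) ** 2)            # hypothetical band
    noisy = clean + rng.normal(0.0, 0.05, size=(3, 500))
    smoothed = butterworth_lowpass(noisy, cutoff=0.05)
    rms = lambda e: float(np.sqrt(np.mean(e ** 2)))
    print(f"noise RMS before {rms(noisy - clean):.4f}, after {rms(smoothed - clean):.4f}")
    preprocessed = first_derivative(snv(smoothed))      # e.g. filter -> SNV -> derivative
```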

More advanced machine learning techniques, such as Convolutional Neural Networks (CNNs), are being applied to extract complex information, like particle size distribution, directly from chemical images, further enriching the dataset used for process control and PMI optimization [24].

Diagram 2: Chemometric Modeling Workflow for PAT.

The integration of Raman, NIR, and MIR spectroscopy as in-line PAT tools represents a paradigm shift in biopharmaceutical manufacturing and process development. Their ability to provide real-time, molecular-level data on CPPs and CQAs is indispensable for implementing a QbD framework aimed at reducing Process Mass Intensity (PMI). By transitioning from off-line, batch-end testing to continuous, in-line monitoring, manufacturers can achieve tighter process control and deeper process understanding. This leads to significant reductions in failed batches, raw material waste, solvent consumption, and energy usage through optimized process durations. As spectroscopic hardware becomes more robust and compact, and machine-learning-based data analysis becomes more sophisticated, the widespread adoption of these techniques will be a key driver in the creation of more efficient, sustainable, and cost-effective pharmaceutical manufacturing processes.

In the field of biopharmaceutical development and fundamental cell biology research, the ability to monitor cellular processes in real-time is paramount. This application note focuses on the pivotal role of real-time monitoring of metabolites and cell density as a cornerstone for advanced Process Analytical Technology (PAT). Within the broader thesis of implementing in-line monitoring for Post-Mortem Interval (PMI) reduction research, these technologies transition bioprocessing from a traditional, retrospective model to a proactive, data-driven paradigm. The accurate, continuous tracking of critical process parameters (CPPs) and critical quality attributes (CQAs) enables researchers to minimize process variability, enhance product quality, and significantly reduce the time between cell culture initiation and the acquisition of reliable, actionable data. By providing a dynamic window into the live cell environment, these methods allow for immediate intervention and control, ultimately leading to more robust and predictable outcomes in drug development and cellular research [29] [30].

Technology Platforms for Real-Time Monitoring

Several advanced technological platforms have emerged to facilitate non-invasive, real-time monitoring of cell cultures and bioprocesses. The table below summarizes the core characteristics of three prominent approaches.

Table 1: Comparison of Real-Time Monitoring Platforms for Metabolites and Cell Density

| Technology Platform | Key Measured Parameters | Spatial Resolution | Temporal Resolution | Primary Cell Culture Model |
|---|---|---|---|---|
| NMR Bioreactor [31] | Protein-ligand interactions, protein folding, chemical modifications | Bulk / Population Average | Minutes to Hours | High-density human cells (e.g., HEK293T) encapsulated in agarose |
| Microfluidic Organ-on-a-Chip with Integrated Sensors [32] | Dissolved oxygen, glucose, lactate, pH | 2D / 3D Micro-environment | Continuous (Seconds to Minutes) | 3D cell cultures, organoids (e.g., breast cancer stem cells) |
| Single-Cell Dynamic Metabolomics [33] | Metabolic flux, concentration of ~40 metabolites, metabolic heterogeneity | Single Cell | Medium-Term (Hours) | Single tumor cells and macrophages in co-culture |

The operational principles and data flow for these integrated monitoring systems can be visualized as a cohesive workflow, from sample preparation to data-driven feedback.

Figure 1: Generalized Workflow for Integrated Real-Time Cell Culture Monitoring and Control. MCR-ALS: Multivariate Curve Resolution–Alternating Least Squares.

Quantitative Data from Monitoring Studies

The implementation of these platforms generates robust quantitative data essential for modeling and control. The following tables consolidate key performance metrics and findings from recent studies.

Table 2: Sensor Performance Metrics in a Microfluidic Organ-on-a-Chip Platform [32]

| Sensor Target | Detection Principle | Linear Range | Limit of Detection (LOD) | Stability |
|---|---|---|---|---|
| Dissolved Oxygen | Chronoamperometry | N/S | < 1.0 µM | No drift over 1 week in serum-containing media |
| Lactate | Enzymatic (LOx)/Amperometry | N/S | 6.1 µM | Stable via pHEMA hydrogel encapsulation |
| Glucose | Enzymatic (GOx)/Amperometry | N/S | 7.6 µM | Stable via pHEMA hydrogel encapsulation |

N/S: not specified.
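The source does not state how the LODs above were derived. A common convention (ICH Q2) estimates LOD = 3.3·σ/S, where σ is the standard deviation of blank responses (or of the calibration residuals) and S is the calibration slope; a factor of 3 is also widely used. The minimal sketch below applies that convention to hypothetical blank readings and a hypothetical slope, not to data from [32].

```python
import statistics


def limit_of_detection(blank_signals: list[float], slope: float, k: float = 3.3) -> float:
    """LOD = k * sd(blank) / slope, in the concentration units of the slope."""
    sd_blank = statistics.stdev(blank_signals)  # sample standard deviation
    return k * sd_blank / slope


if __name__ == "__main__":
    # HYPOTHETICAL blank currents (nA) and calibration slope (nA per µM)
    blank = [0.50, 0.52, 0.49, 0.51, 0.48]
    print(f"LOD ≈ {limit_of_detection(blank, slope=0.01):.1f} µM")
```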

Table 3: Key Findings from Single-Cell and 3D Culture Metabolic Monitoring Studies [33] [32]

| Observed Phenomenon | Experimental Model | Quantitative Result | Biological Implication |
|---|---|---|---|
| Metabolic Heterogeneity | Single MDA-MB-231 cells [33] | 40 labeled metabolites tracked over 3 hours at single-cell level | Reveals sub-populations with distinct metabolic phenotypes |
| Cell Doubling Time | Breast cancer stem cells (BCSC1) in 3D chip [32] | Doubling time of 21.7 hours | Confirms viability and proliferative capacity in the microsystem |
| Drug Response Dynamics | BCSC1 treated with Antimycin A [32] | Oxygen consumption rate (OCR) dropped sharply within 1 hour | Enables real-time quantification of drug efficacy on metabolic pathways |
| Body Size Correlation | Multiple animal carcasses [34] | Strong positive correlation (R² = 0.962) between body weight and cooling rate | Informs PMI estimation models by linking biophysical properties to metabolic decay. |
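Doubling times such as the 21.7 hours in Table 3 are commonly derived by assuming exponential growth, N(t) = N₁·2^((t − t₁)/t_d), which gives t_d = (t₂ − t₁)·ln 2 / ln(N₂/N₁). The source does not state how [32] computed its value; the sketch below simply applies this formula to hypothetical cell counts.

```python
import math


def doubling_time(n1: float, n2: float, t1: float, t2: float) -> float:
    """Doubling time under exponential growth: (t2 - t1) * ln 2 / ln(n2 / n1)."""
    if n1 <= 0 or n2 <= n1:
        raise ValueError("cell counts must be positive and increasing")
    return (t2 - t1) * math.log(2) / math.log(n2 / n1)


if __name__ == "__main__":
    # HYPOTHETICAL counts: 1.0e5 cells at 0 h and 2.6e5 cells at 30 h
    print(f"t_d = {doubling_time(1.0e5, 2.6e5, 0.0, 30.0):.1f} h")
```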

Detailed Experimental Protocols

Protocol A: Real-Time Intracellular NMR for Protein-Ligand Interactions in Human Cells

This protocol enables the observation of intracellular processes at atomic resolution over extended periods [31].

Key Reagents and Solutions:

- Complete DMEM: DMEM high glucose, supplemented with 10% (v/v) FBS, 1x L-glutamine (from a 200 mM stock), and 1x penicillin-streptomycin.

- Agarose Solution (1.5% w/v): Dissolve 150 mg of low-gelling temperature agarose in 10 mL of PBS. Sterilize by passing through a 0.22 µm filter. Prepare 1 mL aliquots and store at 4°C.

- Bioreactor Medium: Dissolve DMEM powder in ultra-pure H₂O (e.g., 13.4 g/L). Add 2% FBS, 10 mM NaHCO₃, 1x penicillin-streptomycin, and 2% D₂O (for NMR lock signal). Adjust pH to 7.4. Sterilize by vacuum filtration.
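The amounts above follow from simple concentration arithmetic. The helper below is a hypothetical convenience sketch that reproduces the stated agarose recipe (1.5% w/v in 10 mL gives 150 mg) and scales the bioreactor medium to an arbitrary volume. It assumes that the FBS and D₂O percentages are v/v and that penicillin-streptomycin is supplied as a 100x stock; the protocol states neither explicitly.

```python
NAHCO3_MOLAR_MASS = 84.01  # g/mol, sodium bicarbonate


def agarose_mass_mg(percent_w_v: float, volume_ml: float) -> float:
    """Agarose mass for a % (w/v) solution: 1% w/v = 1 g per 100 mL = 10 mg/mL."""
    return percent_w_v * 10.0 * volume_ml


def bioreactor_medium(volume_ml: float,
                      dmem_g_per_l: float = 13.4,
                      fbs_percent: float = 2.0,
                      nahco3_mm: float = 10.0,
                      d2o_percent: float = 2.0,
                      pen_strep_stock_x: float = 100.0) -> dict:
    """Component amounts for `volume_ml` of bioreactor medium.

    ASSUMPTIONS: FBS and D2O percentages are v/v; penicillin-streptomycin is a
    100x stock (hypothetical default, adjust to the reagent actually used).
    """
    volume_l = volume_ml / 1000.0
    return {
        "DMEM powder (g)": dmem_g_per_l * volume_l,
        "FBS (mL)": fbs_percent / 100.0 * volume_ml,
        "NaHCO3 (g)": nahco3_mm / 1000.0 * volume_l * NAHCO3_MOLAR_MASS,
        "D2O (mL)": d2o_percent / 100.0 * volume_ml,
        "Pen-strep stock (mL)": volume_ml / pen_strep_stock_x,
    }


if __name__ == "__main__":
    print(f"Agarose for 10 mL of 1.5% solution: {agarose_mass_mg(1.5, 10.0):.0f} mg")
    for name, amount in bioreactor_medium(500.0).items():
        print(f"{name}: {amount:.3g}")
```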

Procedure:

- Bioreactor Setup: Assemble the NMR flow cell according to the manufacturer's instructions. Connect the medium reservoir and set the temperature control to 37°C. Pre-fill the system with bioreactor medium at a flow rate of 0.1 mL/min.

- Cell Preparation and Encapsulation:

  - Culture and transfect HEK293T cells as required.

  - Wash the cells with PBS, dissociate them using trypsin/EDTA, and inactivate the trypsin with complete DMEM.

  - Centrifuge at 800 × g for 5 minutes, wash with PBS, and re-pellet the cells.

  - Melt a 1 mL aliquot of 1.5% agarose at 85°C, then hold it at 37°C.

  - Resuspend the cell pellet in 450 µL of the molten agarose, avoiding bubble formation.

- Loading the NMR Tube:

  - Using a Pasteur pipette, create a ~5 mm high bottom plug in the NMR tube by adding 60-70 µL of agarose and letting it set on ice.

  - Carefully load the cell-agarose suspension on top of the plug and allow it to gel, forming the "cell thread" within the active volume of the NMR coil.

- Real-Time Data Acquisition:

  - Insert the prepared NMR tube into the spectrometer.

  - Start and maintain a constant flow of pre-warmed (37°C) bioreactor medium.

  - Acquire sequential ¹H-NMR spectra over the desired time course (up to 72 hours).

  - For ligand interaction studies, add the drug (e.g., acetazolamide) to the perfusion medium at the desired concentration and continue time-resolved data acquisition.

- Data Analysis:

  - Process the time-series NMR spectra.

  - Use multivariate curve resolution–alternating least squares (MCR-ALS) to resolve pure spectral components and extract concentration profiles of the observed metabolites or protein states over time [31].

  - Fit the concentration profiles to kinetic models to determine the relevant rate constants.
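The Data Analysis steps can be sketched in NumPy as a bare-bones MCR-ALS loop followed by a first-order kinetic fit. This is an illustrative simplification, not the implementation used in [31]. Non-negativity is enforced by clipping rather than by non-negative least squares; initial spectral estimates are assumed to be available (in practice they often come from purest-variable methods or reference spectra); and a single exponential is only one of many kinetic models that might apply. Because MCR-ALS profiles carry scale and, depending on the data, rotational ambiguity, rate constants from resolved profiles should be checked against independent constraints. A first-order rate constant is at least insensitive to the arbitrary scaling of a profile.

```python
import numpy as np


def mcr_als(D: np.ndarray, S_init: np.ndarray, n_iter: int = 500):
    """Minimal MCR-ALS: factor D (time points x channels) as C @ S.T.

    Non-negativity is imposed by clipping negative values to zero after each
    unconstrained least-squares step. Returns concentration profiles C,
    pure spectra S and the lack of fit in percent.
    """
    if n_iter < 1:
        raise ValueError("n_iter must be at least 1")
    S = S_init.copy()
    for _ in range(n_iter):
        C = np.clip(D @ np.linalg.pinv(S.T), 0.0, None)    # update concentration profiles
        S = np.clip(D.T @ np.linalg.pinv(C.T), 0.0, None)  # update pure spectra
    lack_of_fit = 100.0 * np.linalg.norm(D - C @ S.T) / np.linalg.norm(D)
    return C, S, float(lack_of_fit)


def first_order_rate_constant(t: np.ndarray, c: np.ndarray) -> float:
    """Fit c(t) = c0 * exp(-k t) by linear regression of ln(c) on t; returns k."""
    slope, _intercept = np.polyfit(t, np.log(c), 1)
    return float(-slope)


if __name__ == "__main__":
    # HYPOTHETICAL two-state data: a free state decaying with k = 0.05 h^-1
    # into a bound state, each with a Gaussian "spectrum".
    t = np.linspace(0.0, 72.0, 37)
    channels = np.linspace(0.0, 1.0, 300)
    S_true = np.column_stack([np.exp(-((channels - c) / 0.05) ** 2) for c in (0.3, 0.7)])
    c_free = np.exp(-0.05 * t)
    D = np.column_stack([c_free, 1.0 - c_free]) @ S_true.T
    # Deliberately impure initial estimates: each spectrum contains 10% of the other.
    C, S, lof = mcr_als(D, S_true + 0.1 * S_true[:, ::-1])
    keep = C[:, 0] > 0.05 * C[:, 0].max()  # skip near-zero points before taking ln
    k = first_order_rate_constant(t[keep], C[keep, 0])
    print(f"lack of fit {lof:.4f} %, k = {k:.4f} h^-1")
```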

Protocol B: Metabolic Monitoring in a 3D Microfluidic Organ-Chip

This protocol details the use of integrated electrochemical sensors for real-time monitoring of 3D cell cultures [32].

Key Reagents and Solutions:

- Matrigel: Basement membrane matrix, thawed on ice.

- Cell Culture Medium: Appropriate for the cell type (e.g., MSC medium for breast cancer stem cells).

- PBS (Phosphate Buffered Saline): For washing and dilution.

Procedure:

- Chip Preparation: Sterilize the microfluidic chip (e.g., with UV light). The chip should have pre-integrated sensors for O₂, glucose, and lactate.

- Sensor Calibration:

  - Calibrate the oxygen sensor in air-saturated PBS and nitrogen-flushed PBS.

  - Calibrate the lactate and glucose sensors using standard solutions in the relevant concentration ranges.

- 3D Cell Culture Preparation:

  - Harvest and count the cells of interest (e.g., BCSC1).

  - Suspend the cells in a mixture of Matrigel and culture medium (e.g., 75% Matrigel). Keep the suspension on ice to prevent premature gelling.

- Chip Loading:

  - Using a standard pipette, carefully inject the cell-Matrigel suspension into the dedicated cell culture chamber of the chip; the chip's SU-8 barrier structures prevent leakage.

  - Allow the Matrigel to polymerize in an incubator (37°C, 5-10 minutes).

- Initiate Perfusion and Monitoring:

  - Connect the chip to a perfusion system and begin flowing pre-warmed culture medium at a defined rate (e.g., 10 µL/min).

  - Place the entire assembly in a standard cell culture incubator.

  - Start continuous or frequent intermittent measurements with the electrochemical sensors.

- Drug Testing (Example):

  - After a stable baseline is established, switch the perfusion medium to one containing the drug of interest (e.g., 300 ng/mL doxorubicin or antimycin A).

  - Continue real-time monitoring of O₂, glucose, and lactate concentrations.

  - Observe and quantify the metabolic shifts in response to the drug.

- Data Analysis:

  - For oxygen, calculate the oxygen consumption rate (OCR) from the slope of the dissolved oxygen decrease during stopped-flow intervals.

  - For glucose and lactate, determine consumption and production rates from the concentration differences between inlet and outlet, multiplied by the flow rate.
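The calibration and data-analysis steps of this protocol reduce to a few lines of arithmetic. In the sketch below, sensor calibration is a least-squares line through the standards (two points for the O₂ sensor: nitrogen-flushed PBS as zero and air-saturated PBS as the upper point). OCR is the negative slope of dissolved O₂ versus time during a stopped-flow interval, and glucose or lactate rates follow from the steady-state mass balance Q·(C_in − C_out), where 1 µL/min × 1 µM = 1 pmol/min. All signal values, the 200 µM air-saturation placeholder, and the function names are hypothetical; the real air-saturated O₂ concentration depends on temperature and medium.

```python
import numpy as np


def fit_linear_calibration(standards_um, signals):
    """Least-squares line: signal = slope * concentration + intercept."""
    slope, intercept = np.polyfit(standards_um, signals, 1)
    return float(slope), float(intercept)


def signal_to_concentration(signal, slope: float, intercept: float):
    """Invert the calibration line to convert a raw sensor signal to µM."""
    return (np.asarray(signal) - intercept) / slope


def oxygen_consumption_rate(t_min, do_um) -> float:
    """OCR in µM/min: negative slope of dissolved O2 during a stopped-flow interval."""
    slope, _intercept = np.polyfit(t_min, do_um, 1)
    return float(-slope)


def consumption_rate_pmol_per_min(flow_ul_per_min: float, c_inlet_um: float,
                                  c_outlet_um: float) -> float:
    """Net uptake Q * (C_in - C_out); a negative value means net production."""
    return flow_ul_per_min * (c_inlet_um - c_outlet_um)


if __name__ == "__main__":
    # HYPOTHETICAL two-point O2 calibration (0 µM and a 200 µM placeholder)
    slope, intercept = fit_linear_calibration([0.0, 200.0], [0.2, 10.2])
    print(f"5.2 nA -> {signal_to_concentration(5.2, slope, intercept):.1f} µM O2")
    print(f"OCR = {oxygen_consumption_rate([0, 1, 2, 3, 4], [180, 176, 172, 168, 164]):.1f} µM/min")
    print(f"glucose uptake = {consumption_rate_pmol_per_min(10.0, 5000.0, 4800.0):.0f} pmol/min")
    print(f"lactate production = {-consumption_rate_pmol_per_min(10.0, 0.0, 150.0):.0f} pmol/min")
```

Expressing OCR per cell or as an amount per unit time would additionally require the chamber volume and the cell number, which the protocol does not specify.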

The logical sequence of the microfluidic chip protocol, from preparation to analysis, is outlined below.

Figure 2: Experimental Workflow for Metabolic Monitoring in a 3D Microfluidic Chip.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of real-time monitoring protocols requires specific reagents and instrumentation. The following table catalogues key solutions and their functions.

Table 4: Essential Research Reagent Solutions and Materials for Real-Time Monitoring

| Item Name | Function / Application | Example / Specification |
|---|---|---|