In Silico Prediction of Reaction Conversion: A Data-Driven Pathway to Greener Chemistry


Abstract

This article explores the transformative role of in silico prediction in advancing green chemistry principles for researchers, scientists, and drug development professionals. It covers the foundational shift from traditional, resource-intensive experimental methods to computational strategies that predict reaction conversion and optimize for sustainability. The scope includes a detailed examination of key methodologies such as Variable Time Normalization Analysis (VTNA) and Linear Solvation Energy Relationships (LSER), their application in troubleshooting and optimizing reactions, and their validation through real-world case studies and green metrics. Drawing these threads together, the article provides a comprehensive framework for leveraging computational tools to design more efficient, safer, and environmentally friendly chemical processes.

The Foundation of Green Chemistry: How In Silico Prediction is Reducing the Environmental Footprint of Research

The traditional process of chemical reaction development, particularly in the pharmaceutical industry, faces a dual crisis of sustainability and economics. The research and development (R&D) cost for a new drug is estimated at approximately $2.8 billion, with the journey from synthesis to first human testing taking about 2.6 years and costing $430 million [1]. Furthermore, chemical production has historically generated substantial waste; in many cases, over 100 kilos of waste are coproduced per kilo of active pharmaceutical ingredient (API) [2]. This environmental burden is compounded by the use of hazardous solvents, reagents, and energy-intensive processes.

Green chemistry presents a fundamental solution to these challenges by focusing on pollution prevention at the molecular level [3]. Rather than treating waste after it is created, green chemistry aims to design chemical products and processes that reduce or eliminate the use or generation of hazardous substances [3]. This paradigm shift, supported by emerging computational technologies, directly addresses the core challenges of cost and environmental impact by making processes inherently cleaner, more efficient, and less resource-intensive.

Quantitative Perspectives: Measuring Environmental and Economic Impact

To objectively assess the environmental performance of chemical processes, researchers rely on specific metrics that enable direct comparison between traditional and greener alternatives. The most prominent of these metrics are Process Mass Intensity (PMI) and the E-factor [2].

Table 1: Key Metrics for Assessing Environmental Impact in Chemistry

| Metric Name | Calculation Formula | Interpretation | Industry Context |
|---|---|---|---|
| E-Factor | Total mass of waste produced / Mass of product | Lower values indicate less waste generation; ideal is 0 | Historically >100 for many pharmaceuticals [2] |
| Process Mass Intensity (PMI) | Total mass of materials used / Mass of product | Lower values indicate higher material efficiency | Favored by ACS Green Chemistry Institute Pharmaceutical Roundtable [2] |

These metrics reveal startling inefficiencies in traditional approaches. When companies systematically apply green chemistry principles to API process design, dramatic reductions in waste—sometimes as much as ten-fold—are often achievable [2]. This translates directly to reduced raw material costs, lower waste disposal expenses, and diminished environmental liability.

Another critical green chemistry principle is atom economy, which evaluates the efficiency of a synthesis by calculating what percentage of reactant atoms are incorporated into the final desired product [2]. A reaction with 100% yield can have only 50% atom economy if half the mass of reactants ends up in unwanted by-products [2]. This reveals fundamental inefficiencies that traditional yield calculations alone cannot capture.
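The three metrics above reduce to simple ratios, and a short sketch makes the relationships concrete. The function names and the batch figures below are illustrative assumptions, not values from the cited sources. The sketch also assumes that waste is everything fed to the process that does not end up as product, in which case PMI equals the E-factor plus one; some conventions exclude water or recycled solvent, which would change the numbers.

```python
def e_factor(total_input_mass: float, product_mass: float) -> float:
    """Mass of waste per mass of product, assuming waste = inputs - product."""
    return (total_input_mass - product_mass) / product_mass


def process_mass_intensity(total_input_mass: float, product_mass: float) -> float:
    """Total mass of materials used per mass of product."""
    return total_input_mass / product_mass


def atom_economy(product_mw: float, reactant_mws: list[float]) -> float:
    """Percentage of reactant mass (by molecular weight) found in the product."""
    return 100.0 * product_mw / sum(reactant_mws)


# Hypothetical batch: 101 kg of materials in, 1 kg of API out.
inputs_kg, product_kg = 101.0, 1.0
print(e_factor(inputs_kg, product_kg))                # 100.0
print(process_mass_intensity(inputs_kg, product_kg))  # 101.0

# Hypothetical reaction: reactants of MW 120 and 80 give a product of MW 100.
# Even at 100% yield, only half of the reactant mass ends up in the product.
print(atom_economy(100.0, [120.0, 80.0]))             # 50.0
```

Under this waste convention, an E-factor above 100 and a PMI above 101 describe the same process, which is why the two metrics are often quoted interchangeably.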

In Silico Solutions: Computational Approaches for Greener Chemistry

Computer-aided drug design (CADD) and artificial intelligence (AI) are transforming pharmaceutical R&D by enabling more predictive and efficient discovery processes. These in silico approaches enable researchers to evaluate potential compounds and reactions virtually before conducting wet lab experiments, significantly reducing material consumption, waste generation, and development time [1].

AI-Optimized Reaction Design

Machine learning algorithms are now being trained to evaluate reactions based on sustainability metrics such as atom economy, energy efficiency, toxicity, and waste generation [4]. These AI systems can suggest safer synthetic pathways and optimal reaction conditions—including temperature, pressure, and solvent choice—thereby reducing reliance on trial-and-error experimentation [4]. Specific applications include:

- Predicting catalyst behavior without physical testing, reducing waste, energy use, and the handling of potentially hazardous chemicals [4]

- Designing catalysts that support greener ammonia production for sustainable agriculture and optimize fuel cells [4]

- Autonomous optimization loops that integrate high-throughput experimentation with machine learning [4]

A notable implementation is Algorithmic Process Optimization (APO), a proprietary machine learning platform developed by Sunthetics in collaboration with Merck. This technology, which received the 2025 ACS Data Science and Modeling for Green Chemistry Award, replaces traditional Design of Experiments with Bayesian Optimization and active learning [5]. APO handles complex optimization challenges with 11+ input parameters, enabling teams to reduce hazardous reagents and material waste while accelerating development timelines [5].

Predictive Metabolic Modeling

Understanding how drug candidates will be metabolized in the human body is crucial for avoiding toxicity issues and efficacy failures late in development. Researchers have developed in silico models that predict which human enzymes can catalyze a given chemical compound based on chemical and physical similarity between known enzyme substrates and query compounds [6]. Using multiple linear regression, these models achieve high predictive performance (AUC = 0.896) despite the large number of enzymes involved [6] [7].

Table 2: Research Reagent Solutions for In Silico Prediction

| Reagent/Tool Name | Type/Classification | Function in Research | Key Features |
|---|---|---|---|
| PaDEL-Descriptor | Software Tool | Calculates chemical & physical properties of molecules from SMILES strings | Generates 1,444 1-D and 2-D molecular descriptors [6] [7] |
| admetSAR | Predictive Model | Predicts ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) features | Evaluates drug-likeness and metabolic fate of query molecules [6] [7] |
| deepDTI | Deep Learning Tool | Predicts drug-target interactions using deep-belief networks | Identifies potential binding targets for chemical compounds [6] [7] |
| SMILES | Data Format | Simplified Molecular-Input Line-Entry System representation of molecules | Standardized string representation enabling computational chemical analysis [6] [7] |

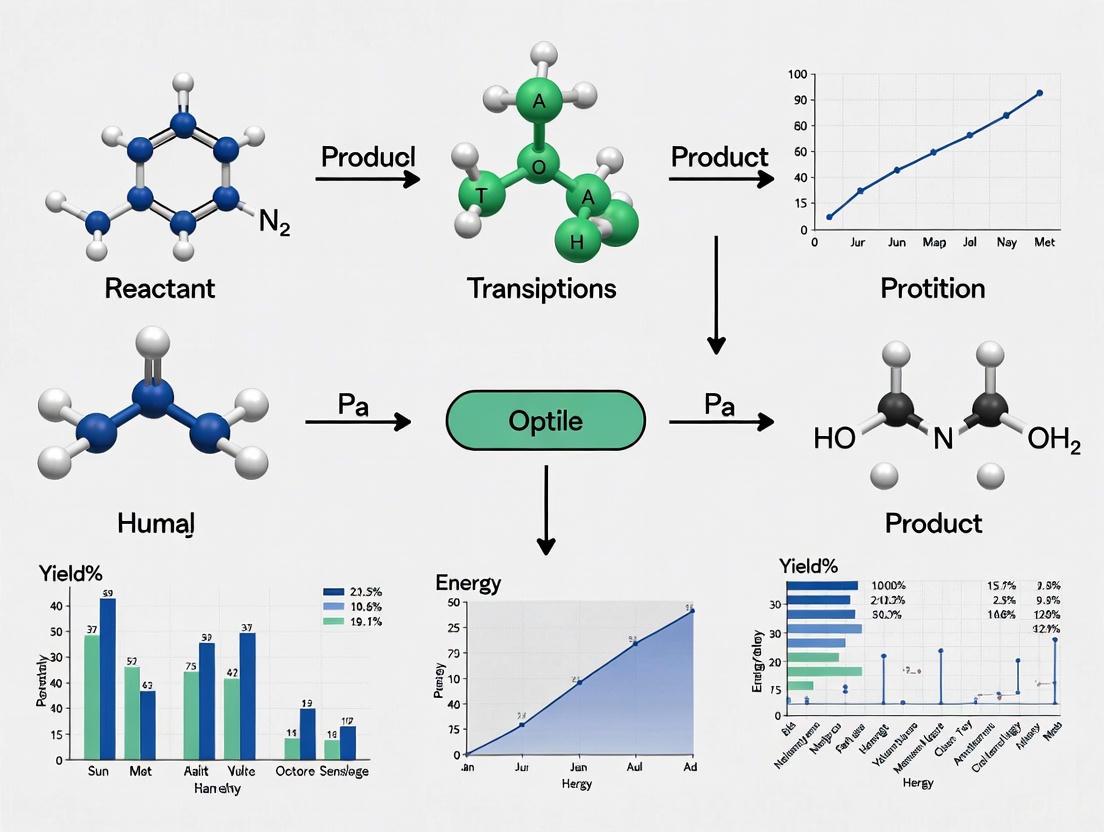

The following diagram illustrates the complete workflow for predicting enzyme-mediated reactions, from data preparation through model training and validation:

Experimental Protocols for Greener Synthesis

Protocol: Mechanochemical Solvent-Free Synthesis

Mechanochemistry utilizes mechanical energy—typically through grinding or ball milling—to drive chemical reactions without solvents [4]. This protocol outlines the general procedure for solvent-free synthesis of organic compounds, particularly relevant for pharmaceutical applications.

Principle: Mechanical force induces chemical transformations by facilitating molecular collisions and energy transfer without solvation [4].

Materials:

- High-energy ball mill (e.g., planetary ball mill)

- Grinding jars and balls (typically zirconia or stainless steel)

- Anhydrous reactants

- Liquid-assisted grinding (LAG) additives if required (minimal solvent)

Procedure:

- Preparation: Weigh reactants according to stoichiometric ratios. For imidazole-dicarboxylic acid salt synthesis, use 1:1 molar ratio of starting materials [4].

- Loading: Transfer reactants to grinding jar with grinding balls. Ball-to-powder mass ratio typically ranges from 10:1 to 20:1.

- Milling: Securely fasten jar in mill. Process at 300-500 rpm for 30-120 minutes, depending on reaction requirements.

- Monitoring: Periodically stop milling to collect small samples for analysis (e.g., TLC, FTIR).

- Work-up: After completion, dissolve reaction mixture in minimal eco-friendly solvent (e.g., ethyl acetate) to separate from grinding media.

- Purification: Filter and concentrate under reduced pressure. Recrystallize if necessary.

Key Advantages:

- Eliminates bulk solvent waste [4]

- Often provides high yields with less energy input [4]

- Enables reactions with low-solubility reactants [4]

Green Chemistry Alignment: This method directly addresses Principles #5 (safer solvents) and #12 (accident prevention) by eliminating or drastically reducing solvent use [3].

Protocol: In-Water and On-Water Organic Reactions

Water represents an ideal green solvent—non-toxic, non-flammable, and abundantly available [4]. This protocol describes the implementation of organic reactions using water as reaction medium.

Principle: Water's unique properties, including hydrogen bonding, polarity, and surface tension, can facilitate or accelerate chemical transformations even for water-insoluble reactants [4].

Materials:

- Round-bottom flask with reflux condenser

- Magnetic stirrer with heating capability

- Surfactant (if needed for emulsion formation)

- Distilled water

- Reactants

Procedure:

- Reaction Setup: In a round-bottom flask, add water (typically 5-10 mL per mmol of limiting reactant).

- Reactant Addition: Add organic reactants to water. Note that many reactions proceed well even when reactants are not fully soluble [4].

- Emulsion Formation (if needed): For hydrophobic reactants, add eco-friendly surfactant (e.g., rhamnolipids, sophorolipids) at 1-5 mol% to form stable emulsion [4].

- Reaction Execution: Stir reaction mixture vigorously at specified temperature (often 25-80°C). Monitor reaction by TLC or GC.

- Product Isolation: After completion, cool reaction mixture. Extract product with eco-friendly solvent (e.g., ethyl acetate).

- Purification: Dry organic layer and concentrate. Purify by column chromatography or recrystallization.

Application Example: Diels-Alder Reaction in Water

The Diels-Alder reaction, used across numerous organic chemistry applications, has been successfully accelerated in water without toxic solvents [4].

Green Chemistry Alignment: This approach directly supports Principle #5 (safer solvents) by replacing toxic organic solvents with water [3].

Emerging Technologies and Future Directions

Several promising green chemistry technologies are approaching commercial scalability, offering additional pathways to address cost and environmental challenges:

Earth-Abundant Permanent Magnets: Researchers are developing high-performance magnetic materials using abundant elements like iron and nickel to replace rare earth elements in permanent magnets [4]. Alternatives include iron nitride (FeN) and tetrataenite (FeNi), which offer competitive magnetic properties without the environmental and geopolitical costs of rare earth sourcing [4]. These magnets are crucial components for electric vehicle motors, wind turbines, and consumer electronics.

PFAS-Free Manufacturing: Many industries are replacing PFAS-based solvents, surfactants, and etchants with alternatives such as plasma treatments, supercritical CO₂ cleaning, and bio-based surfactants like rhamnolipids and sophorolipids [4]. These innovations reduce potential liability and cleanup costs associated with PFAS contamination while enabling safer, more compliant production [4].

Deep Eutectic Solvents (DES) for Circular Chemistry: DES are customizable, biodegradable solvents created from mixtures of hydrogen bond donors and acceptors [4]. They are being used to extract both critical metals (e.g., gold, lithium) and bioactive compounds from waste streams, ores, and agricultural residues, supporting the goals of the circular economy [4].

The integration of these technologies with computational optimization approaches represents the future of sustainable chemical development—where processes are designed from the outset to be efficient, economical, and environmentally benign.

In silico prediction of reaction conversion is a computational approach that uses software tools and theoretical models to simulate and predict the outcome of chemical reactions before any laboratory experiments are conducted. This methodology is foundational to green chemistry, as it enables researchers to virtually screen and optimize reaction conditions for maximum efficiency, minimum waste, and reduced environmental impact at the earliest stages of research and development [8] [9]. By accurately forecasting key parameters like product yield and conversion, these computational techniques help in selecting the greenest and most effective reagents, solvents, and reaction parameters.

Core Principles and Workflow

The in silico prediction process integrates fundamental chemical principles with computational power. The core workflow involves using kinetic data and solvent parameters to build models that can accurately simulate reaction progress.

Table 1: Core Inputs and Outputs of In Silico Reaction Conversion Prediction

| Input Data & Parameters | Model Processing | Key Predictive Outputs |
|---|---|---|
| Reaction component concentrations over time [9] | Variable Time Normalization Analysis (VTNA) for reaction orders [9] | Predicted product conversion at a specified time [9] |
| Initial reactant concentrations [9] | Linear Solvation Energy Relationships (LSER) for solvent effects [9] | Calculated reaction rate constants (k) [9] |
| Temperature variations [9] | Calculation of activation parameters (ΔH‡ and ΔS‡) [9] | Projected green chemistry metrics (e.g., Reaction Mass Efficiency) [9] |
| Kamlet-Abboud-Taft solvent parameters (α, β, π*) [9] | Multi-linear regression analysis [9] | Identification of optimal solvents and conditions [9] |

The logical relationship between these components forms a cyclic process of computational analysis and refinement, which can be visualized in the following workflow.

Figure 1: In Silico Reaction Optimization Workflow. This diagram outlines the key steps for using kinetic data and solvent modeling to predict reaction conversion and greenness.
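Once reaction orders and a rate constant are in hand, predicting conversion at a chosen time amounts to integrating the rate law. The sketch below is one minimal way to do that, not the method used by the cited spreadsheet tool. It assumes a 1:1 reaction A + B -> P with rate = k[A]^a[B]^b, non-negative orders, and a hand-written fourth-order Runge-Kutta step; the function name `predict_conversion` and all numerical inputs are hypothetical.

```python
import math


def predict_conversion(k, a0, b0, order_a, order_b, t_end, n_steps=10_000):
    """Integrate d(xi)/dt = k*[A]^a*[B]^b for A + B -> P and return conversion of A.

    xi is the extent of reaction in concentration units, so [A] = a0 - xi
    and [B] = b0 - xi. Orders are assumed to be non-negative.
    """
    def rate(xi):
        conc_a = max(a0 - xi, 0.0)
        conc_b = max(b0 - xi, 0.0)
        return k * conc_a**order_a * conc_b**order_b

    dt = t_end / n_steps
    xi = 0.0
    for _ in range(n_steps):
        # Classical fourth-order Runge-Kutta step.
        k1 = rate(xi)
        k2 = rate(xi + 0.5 * dt * k1)
        k3 = rate(xi + 0.5 * dt * k2)
        k4 = rate(xi + dt * k3)
        xi += dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return xi / a0


# Sanity check against the analytical first-order result, 1 - exp(-k*t).
first_order = predict_conversion(0.1, 1.0, 1.0, order_a=1, order_b=0, t_end=10.0)
print(first_order, 1.0 - math.exp(-1.0))

# Hypothetical trimolecular case: first order in A, second order in B.
trimolecular = predict_conversion(0.5, 0.5, 1.0, order_a=1, order_b=2, t_end=5.0)
print(f"Predicted conversion: {trimolecular:.1%}")
```

Comparing the numerical result with a case that has a closed-form answer, as done here for first-order kinetics, is a cheap way to confirm the integrator before trusting it on rate laws that lack one.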

Application Notes: Protocols for Greener Chemistry

The following protocols demonstrate how in silico tools are applied to meet green chemistry objectives, specifically in reducing hazardous solvent use and improving efficiency.

Protocol 1: Replacement of a Hazardous Solvent using a Comprehensive Spreadsheet Tool

This protocol details the use of a published spreadsheet tool to identify and replace an undesirable solvent while maintaining or improving reaction performance, as applied to an aza-Michael addition reaction [9].

Experimental Workflow:

- Data Collection: Perform the aza-Michael addition between dimethyl itaconate and piperidine in a set of 5-10 different solvents with varied polarity. Monitor the reaction using a technique like 1H NMR spectroscopy to obtain precise concentration data for reactants and products at timed intervals [9].

- Kinetic Analysis (VTNA): Input the concentration-time data into the "Kinetics" worksheet of the spreadsheet tool. The tool will guide the user to test different potential reaction orders. The correct orders are identified when data from reactions with different initial concentrations overlap on a single curve. For the specified aza-Michael reaction, the order was found to be 1 with respect to dimethyl itaconate and 2 with respect to piperidine (trimolecular mechanism) in aprotic solvents [9].

- Model Solvent Effects (LSER): Using the calculated rate constants (k) for each solvent, proceed to the "Solvent effects" worksheet. Perform a multi-linear regression analysis against Kamlet-Abboud-Taft solvent parameters (hydrogen bond donating ability α, accepting ability β, and dipolarity/polarizability π*). For the model reaction, this yielded the LSER: ln(k) = -12.1 + 3.1β + 4.2π*, indicating the reaction is accelerated by polar, hydrogen bond-accepting solvents [9].

- Solvent Selection & Greenness Evaluation: In the "Solvent selection" worksheet, plot a chart of ln(k) (performance) against solvent greenness, for example, using the CHEM21 solvent guide which scores Safety, Health, and Environment (S/H/E) from 1 (best) to 10 (worst). This visualizes the trade-off between performance and greenness. While DMF is a high performer, it is reprotoxic. DMSO, with a high predicted rate and a better greenness profile, was identified as a superior alternative [9].

- In Silico Prediction & Validation: The spreadsheet's "Metrics" worksheet can then predict product conversion for the newly selected solvent (DMSO) based on the model. The final step is to validate this prediction experimentally by running the reaction in DMSO and confirming the high conversion.
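The solvent-ranking step of this protocol can be sketched in a few lines using the LSER reported for the model reaction, ln(k) = -12.1 + 3.1β + 4.2π*. The β and π* values below are approximate literature figures entered by hand as an assumption and should be checked against a curated Kamlet-Abboud-Taft table before use. Greenness scores are deliberately left out; in practice they would be joined from the CHEM21 guide.

```python
import math


def predicted_ln_k(beta: float, pi_star: float) -> float:
    """LSER reported for the aza-Michael model reaction in the text."""
    return -12.1 + 3.1 * beta + 4.2 * pi_star


# Approximate Kamlet-Abboud-Taft parameters (assumed values; verify before use).
solvents = {
    "DMSO": {"beta": 0.76, "pi_star": 1.00},
    "DMF": {"beta": 0.69, "pi_star": 0.88},
    "acetonitrile": {"beta": 0.40, "pi_star": 0.75},
}

predictions = {
    name: predicted_ln_k(p["beta"], p["pi_star"]) for name, p in solvents.items()
}

# Rank from fastest to slowest predicted rate.
ranked = sorted(predictions, key=predictions.get, reverse=True)

# Express each prediction relative to DMF, the solvent to be replaced.
for name in ranked:
    relative = math.exp(predictions[name] - predictions["DMF"])
    print(f"{name:>12}: ln(k) = {predictions[name]:.2f}, rate vs DMF = {relative:.2f}x")
```

With these assumed parameters DMSO comes out ahead of DMF, in line with the protocol's conclusion, although the exact ratio depends on the parameter set chosen.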

Protocol 2: Enhancing Preparative Chromatography via Conversion Prediction

This protocol outlines a computational method to optimize preparative chromatography for active pharmaceutical ingredient (API) purification, significantly reducing solvent waste and number of runs required [8].

Experimental Workflow:

- Define the System: Input the chemical structures of the API and key impurities into the computer-assisted method development software.

- Map the Separation Landscape: The in silico tool will simulate chromatographic separations across a wide range of mobile phase compositions, temperatures, and gradients. It simultaneously calculates an Analytical Method Greenness Score (AMGS) for each simulated condition [8].

- Identify Optimal Conditions: Analyze the generated separation landscape to find conditions that achieve the required resolution (e.g., Rs ≥ 1.5) with the lowest possible AMGS. For instance, the model can identify opportunities to replace toxic acetonitrile with greener methanol, or replace fluorinated additives with chlorinated ones, reducing the AMGS from 7.79 to 5.09 while preserving resolution [8].

- Maximize Loading with Peak Crossover Analysis: For preparative purification, use the software's resolution map to strategically exploit peak crossover. This allows for a higher injection load without sacrificing purity. In one case, this approach enabled a 2.5× increase in API loading, directly resulting in 2.5 times fewer required purification runs and substantial solvent reduction [8].

- Experimental Verification: Perform a single verification run using the predicted optimal conditions to confirm the simulated resolution and loading capacity.
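The selection logic in this workflow (keep only conditions that meet the resolution target, pick the lowest AMGS among them, then translate a loading gain into fewer runs) can be sketched as follows. This is an illustration, not the modeling software's interface: the dictionary layout and function names are assumptions, and the resolution values and batch size are hypothetical. Only the two AMGS figures come from the text. Resolution uses the standard baseline-width definition.

```python
import math


def resolution(t_r1: float, t_r2: float, w1: float, w2: float) -> float:
    """Baseline-width resolution: Rs = 2 * (tR2 - tR1) / (w1 + w2)."""
    return 2.0 * (t_r2 - t_r1) / (w1 + w2)


def greenest_passing(conditions, min_rs=1.5):
    """Return the condition with the lowest AMGS among those meeting min_rs."""
    passing = [c for c in conditions if c["rs"] >= min_rs]
    return min(passing, key=lambda c: c["amgs"]) if passing else None


def runs_needed(total_mass_mg: float, load_per_run_mg: float) -> int:
    """Number of preparative injections required to process a batch."""
    return math.ceil(total_mass_mg / load_per_run_mg)


# Simulated conditions: AMGS values from the text, Rs values hypothetical.
conditions = [
    {"name": "original mobile phase", "rs": 1.8, "amgs": 7.79},
    {"name": "greener mobile phase", "rs": 1.6, "amgs": 5.09},
    {"name": "hypothetical under-resolved option", "rs": 1.2, "amgs": 4.50},
]
best = greenest_passing(conditions)
print(best["name"])

# A 2.5x loading increase on a hypothetical 1000 mg batch at 10 mg per run.
baseline_runs = runs_needed(1000.0, 10.0)
improved_runs = runs_needed(1000.0, 10.0 * 2.5)
print(baseline_runs, improved_runs)
```

The under-resolved option has the best greenness score but is rejected by the resolution filter, which is the trade-off the separation landscape is meant to expose.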

Table 2: Key Research Reagent Solutions for In Silico Prediction

| Item | Function / Purpose | Application Example |
|---|---|---|
| Comprehensive Reaction Optimization Spreadsheet [9] | Integrated tool for VTNA, LSER, and green metric calculation. | Predicting reaction conversion and identifying green solvents for aza-Michael additions [9]. |
| Kamlet-Abboud-Taft Solvent Parameters [9] | Quantitative descriptors of solvent polarity (α, β, π*). | Building Linear Solvation Energy Relationships to understand and predict solvent effects on reaction rates [9]. |
| CHEM21 Solvent Selection Guide [9] | A standardized metric ranking solvents based on Safety, Health, and Environmental (S/H/E) profiles. | Evaluating and comparing the greenness of potential solvents identified by the LSER model [9]. |
| Chromatography Modeling Software [8] | In silico platform for simulating analytical and preparative separations. | Mapping separation resolution and greenness scores (AMGS) to replace hazardous mobile phases and maximize sample loading [8]. |
| Flow Matching Models (e.g., MolGEN) [10] | A deterministic generative framework for predicting reaction pathways and transition states. | Generating valid transition states and reaction products with high accuracy, reducing reliance on costly quantum-chemistry calculations [10]. |

The Twelve Principles of Green Chemistry as a Framework for In Silico Optimization

The integration of the Twelve Principles of Green Chemistry with advanced in silico technologies is revolutionizing sustainable chemical research and development. This paradigm shift enables researchers to predict reaction outcomes, optimize for efficiency, and minimize environmental impact before conducting laboratory experiments. Within pharmaceutical development and other chemistry-intensive industries, this approach is critical for reducing waste, improving atom economy, and designing safer chemicals while accelerating the discovery process [11] [12]. The framework presented in this document provides detailed protocols and application notes for implementing green chemistry principles through computational strategies, specifically focusing on the prediction of reaction conversion and optimization of chemical processes.

The following core in silico methodologies, each aligning with specific green chemistry principles, form the foundation of this approach:

- Reaction Kinetics and Mechanism Analysis aligns with Principle 5 (Safer Solvents and Auxiliaries) and Principle 9 (Catalysis) by enabling the selection of efficient solvents and catalysts through computational models [9] [13].

- Synthetic Feasibility and Pathway Prediction directly supports Principle 1 (Waste Prevention) and Principle 2 (Atom Economy) by identifying optimal synthetic routes that minimize byproducts [14].

- Molecular and Materials Design facilitates Principle 3 (Less Hazardous Chemical Synthesis) and Principle 4 (Designing Safer Chemicals) through property prediction and hazard assessment prior to synthesis [15] [16].

- Process Optimization and Metrics Calculation embodies Principle 6 (Design for Energy Efficiency) and Principle 12 (Inherently Safer Chemistry) by enabling energy-efficient processes with reduced accident potential [9] [17].

The diagram below illustrates the integrative framework connecting Green Chemistry Principles with in silico methodologies and their resulting applications.

Application Notes

Kinetics-Driven Reaction Optimization with Variable Time Normalization Analysis (VTNA)

Overview: Variable Time Normalization Analysis (VTNA) represents a powerful computational approach for determining reaction orders without extensive mathematical derivations, enabling rapid optimization of reaction conditions toward improved efficiency and reduced waste generation [9]. This methodology directly supports Principle 1 (Prevention) by facilitating higher-yielding reactions and Principle 6 (Energy Efficiency) through identification of faster reaction pathways.

Key Implementation Findings:

- VTNA successfully determined non-integer reaction orders (e.g., 1.6 for piperidine in aza-Michael additions) that would be difficult to identify through traditional kinetic analysis [9].

- In pharmaceutical applications, VTNA-informed process optimization led to a 19% reduction in waste and a 56% improvement in productivity compared to conventional drug production standards [12].

- The integration of VTNA with linear solvation energy relationships (LSER) allows reaction kinetics and solvent greenness to be optimized together, so that researchers can balance reaction rate against environmental and safety considerations [9].

Limitations and Considerations: VTNA requires high-quality concentration-time data for accurate order determination. Implementation is most effective when combined with experimental validation, particularly for complex reaction networks where competing pathways may exist.

Machine Learning for Predictive Green Chemistry

Overview: Artificial intelligence and machine learning (ML) models are transforming green chemistry by enabling accurate prediction of reaction outcomes, optimization of conditions, and identification of sustainable synthetic pathways [11] [15] [14]. These approaches directly support Principle 2 (Atom Economy) through optimized route selection and Principle 12 (Inherently Safer Chemistry) by minimizing hazardous experimentation.

Key Implementation Findings:

- Machine learning models for predicting sites of borylation reactions have outperformed previous methods, streamlining drug development while reducing resource consumption [11].

- AI-driven optimization of green carbon dot (GCD) synthesis has demonstrated potential to reduce experimental iterations by over 80%, significantly decreasing solvent waste, energy demand, and experimental effort [15].

- The TRACER framework, which combines conditional transformers with reinforcement learning, successfully generated synthetically feasible compounds with high predicted activity against drug targets (DRD2, AKT1, CXCR4) while considering real-world reactivity constraints [14].

Limitations and Considerations: ML model efficacy depends heavily on access to large, high-quality datasets, which remain limited in some chemistry domains. Model interpretability can be challenging, particularly for complex deep learning architectures, though SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) are emerging as potential solutions [15].

Computational Solvent Selection and LSER Modeling

Overview: Linear Solvation Energy Relationships (LSER) modeling enables quantitative prediction of solvent effects on reaction rates, facilitating the selection of environmentally preferable solvents that maintain high reaction performance [9]. This methodology directly supports Principle 5 (Safer Solvents) and Principle 3 (Less Hazardous Chemical Synthesis).

Key Implementation Findings:

- For the trimolecular aza-Michael reaction between dimethyl itaconate and piperidine, LSER analysis revealed the rate acceleration correlation: ln(k) = -12.1 + 3.1β + 4.2π*, indicating the importance of hydrogen bond acceptance (β) and dipolarity/polarizability (π*) [9].

- The integration of LSER with solvent greenness metrics (from guides such as the CHEM21 solvent selection guide) enables direct comparison of reaction efficiency against environmental, health, and safety parameters [9].

- Predictive models can identify alternative solvents with superior environmental profiles while maintaining reaction performance, such as identifying potential substitutes for problematic but high-performing solvents like DMSO [9].

Limitations and Considerations: LSER correlations are typically valid only for solvents supporting the same reaction mechanism. Database limitations may restrict the range of solvents that can be evaluated, particularly for newer, more sustainable solvent options.
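Fitting an LSER is an ordinary multiple linear regression of ln(k) on the solvent parameters. The sketch below solves the normal equations with a small Gaussian elimination so that it runs without external libraries; a statistics package would normally be used instead and would also report standard errors. The α term is omitted as an assumption, because the relation reported above contains only β and π*. The solvent parameter pairs are hypothetical, and the ln(k) values are generated from the published equation purely to show that the fit recovers its coefficients.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[row][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def fit_lser(betas, pi_stars, ln_ks):
    """Least-squares fit of ln(k) = c0 + b*beta + p*pi_star; returns [c0, b, p]."""
    rows = [[1.0, beta, pi] for beta, pi in zip(betas, pi_stars)]
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, ln_ks)) for i in range(3)]
    return solve_linear_system(xtx, xty)


# Hypothetical solvent set (beta, pi*); ln(k) synthesized from the reported LSER.
betas = [0.76, 0.69, 0.40, 0.45, 0.10]
pi_stars = [1.00, 0.88, 0.75, 0.55, 0.60]
ln_ks = [-12.1 + 3.1 * b + 4.2 * p for b, p in zip(betas, pi_stars)]

intercept, coef_beta, coef_pi = fit_lser(betas, pi_stars, ln_ks)
print(f"ln(k) = {intercept:.2f} + {coef_beta:.2f}*beta + {coef_pi:.2f}*pi_star")
```

With real data the residuals will not vanish, and the spread of β and π* across the chosen solvents determines how well the two coefficients can be separated.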

In Silico Catalyst Design and Reaction Prediction

Overview: Computational approaches for catalyst design and reaction prediction enable the replacement of precious metals with more abundant alternatives and provide insights into reaction mechanisms and selectivity [11] [13] [12]. These methods directly support Principle 9 (Catalysis) and Principle 1 (Waste Prevention).

Key Implementation Findings:

- Replacing palladium with nickel-based catalysts in borylation and Suzuki reactions has led to reductions of more than 75% in CO₂ emissions, freshwater use, and waste generation [11].

- Automated computational approaches for predicting intermediates and mechanisms in palladium-catalyzed C-H activation reactions have successfully rationalized regioselectivity and predicted new reactions [13].

- Photoredox catalysis and electrocatalysis, enabled by computational design, provide alternative activation pathways that reduce reliance on hazardous reagents and improve energy efficiency [11].

Limitations and Considerations: Accurate prediction of reaction outcomes for novel catalyst systems remains challenging. High-performance computing resources are often required for detailed mechanistic studies, potentially limiting accessibility for some research groups.

Experimental Protocols

Protocol: Variable Time Normalization Analysis for Kinetic Parameter Determination

Objective: Determine reaction orders and rate constants from concentration-time data using VTNA methodology.

Materials and Software:

- Kinetic data (concentration vs. time for all reactants and products)

- Spreadsheet software (e.g., Microsoft Excel, Google Sheets) with VTNA template [9]

- Statistical analysis software (optional, for advanced fitting)

Procedure:

Data Preparation

- Compile concentration-time data for all reaction components from at least three experiments with varying initial reactant concentrations.

- Ensure data covers sufficient conversion range (ideally 20-80% conversion) for accurate order determination.

- Input data into VTNA spreadsheet template, with time in consistent units and concentrations in mol/L.

Reaction Order Determination

- Test potential reaction orders by plotting the concentration of the limiting reactant against transformed time (t × [B]^θᴮ, evaluated in practice as the running sum Σ [B]^θᴮ Δt), where θᴮ is the hypothesized order with respect to reactant B.

- Iterate through different order values (typically -2 to 2 in 0.1-0.2 increments) to identify the value that produces the best overlap of datasets from different initial conditions.

- Validate selected orders by inspecting data collapse – correct orders will produce overlapping curves when plotted against transformed time.
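The order scan described in these steps can be sketched in plain Python. This is a simplified illustration, not the spreadsheet's implementation: the synthetic data come from a hypothetical A + B -> P reaction that is first order in A and second order in B, the overlap score (an RMS gap between linearly interpolated curves) is an assumed stand-in for visual inspection, and all function names are invented for the example.

```python
def simulate(a0, b0, k, order_a, order_b, t_end, n_points, substeps=200):
    """Generate synthetic A + B -> P data with small explicit Euler steps."""
    dt = t_end / (n_points * substeps)
    t, a, b = 0.0, a0, b0
    times, conc_a, conc_b = [t], [a], [b]
    for _ in range(n_points):
        for _ in range(substeps):
            step = k * a**order_a * b**order_b * dt
            a, b, t = a - step, b - step, t + dt
        times.append(t)
        conc_a.append(a)
        conc_b.append(b)
    return times, conc_a, conc_b


def normalized_time(times, conc_b, order):
    """VTNA time axis: running sum of (interval-mean [B])**order * delta_t."""
    tau = [0.0]
    for i in range(1, len(times)):
        mean_b = 0.5 * (conc_b[i] + conc_b[i - 1])
        tau.append(tau[-1] + mean_b**order * (times[i] - times[i - 1]))
    return tau


def interpolate(x, xs, ys):
    """Linear interpolation; returns None when x lies outside xs."""
    for i in range(1, len(xs)):
        if xs[i - 1] <= x <= xs[i]:
            w = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
            return ys[i - 1] + w * (ys[i] - ys[i - 1])
    return None


def overlap_error(run_1, run_2, order):
    """RMS gap between the two [A]-vs-normalized-time curves, both directions."""
    curves = [(normalized_time(t, b, order), a) for t, a, b in (run_1, run_2)]
    squared = []
    for (tau_x, a_x), (tau_y, a_y) in (curves, curves[::-1]):
        for x, y in zip(tau_x, a_x):
            y_other = interpolate(x, tau_y, a_y)
            if y_other is not None:
                squared.append((y - y_other) ** 2)
    return (sum(squared) / len(squared)) ** 0.5


# Hypothetical runs: same [A]0, different [B]0, true orders 1 (A) and 2 (B).
run_low = simulate(0.5, 1.0, 1.0, 1, 2, t_end=4.0, n_points=200)
run_high = simulate(0.5, 2.0, 1.0, 1, 2, t_end=4.0, n_points=200)

candidates = [round(-2.0 + 0.1 * i, 1) for i in range(41)]  # -2.0 to 2.0
best_order = min(candidates, key=lambda n: overlap_error(run_low, run_high, n))
print(f"Best-overlap order in B: {best_order}")
```

Because both runs share the same initial [A], their [A] curves collapse onto one another only when the time axis is scaled by [B] raised to the true order, which is what the scan detects. With noisy experimental data the minimum is broader, so the numerical score is best read alongside the overlay plot.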

Rate Constant Calculation

- Once appropriate orders are identified, calculate rate constants (k) for each experiment using the integrated rate law corresponding to the determined orders.

- Perform statistical analysis on calculated k values to determine mean and standard deviation across replicates.

- Assess quality of fit through residual analysis and R² values for linearized plots.
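One way to obtain k once all orders are fixed is to normalize the time axis by every reactant at once: product concentration then grows linearly with the transformed time, and the slope is the rate constant. The sketch below uses that VTNA-style linear fit rather than an explicit integrated rate law, which is a substitution on my part. It assumes 1:1 stoichiometry so that [P] = [A]0 - [A], and uses synthetic data from a hypothetical reaction with k = 0.8; all names are illustrative.

```python
import statistics


def simulate(a0, b0, k, order_a, order_b, t_end, n_points, substeps=200):
    """Generate synthetic A + B -> P data with small explicit Euler steps."""
    dt = t_end / (n_points * substeps)
    t, a, b = 0.0, a0, b0
    times, conc_a, conc_b = [t], [a], [b]
    for _ in range(n_points):
        for _ in range(substeps):
            step = k * a**order_a * b**order_b * dt
            a, b, t = a - step, b - step, t + dt
        times.append(t)
        conc_a.append(a)
        conc_b.append(b)
    return times, conc_a, conc_b


def fit_rate_constant(times, conc_a, conc_b, order_a, order_b):
    """Slope through the origin of [P] versus fully normalized time."""
    a0 = conc_a[0]
    tau = sum_tau_p = sum_tau_sq = 0.0
    for i in range(1, len(times)):
        mean_a = 0.5 * (conc_a[i] + conc_a[i - 1])
        mean_b = 0.5 * (conc_b[i] + conc_b[i - 1])
        tau += mean_a**order_a * mean_b**order_b * (times[i] - times[i - 1])
        product = a0 - conc_a[i]  # assumes 1:1 stoichiometry
        sum_tau_p += tau * product
        sum_tau_sq += tau * tau
    return sum_tau_p / sum_tau_sq


# Three hypothetical experiments with different [B]0 and a true k of 0.8.
true_k = 0.8
k_values = []
for b0 in (1.0, 1.5, 2.0):
    run = simulate(0.5, b0, true_k, 1, 2, t_end=4.0, n_points=200)
    k_values.append(fit_rate_constant(*run, order_a=1, order_b=2))

mean_k = statistics.mean(k_values)
sd_k = statistics.stdev(k_values)
print(f"k = {mean_k:.4f} +/- {sd_k:.4f}")
```

A rate constant that drifts systematically with initial concentration, rather than scattering around a mean, usually signals that one of the assumed orders is wrong.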

Experimental Validation

- Design new experiments based on VTNA results to validate predicted kinetics.

- Compare predicted versus experimental concentration profiles to confirm accuracy of determined parameters.

Troubleshooting:

- Poor data overlap may indicate complex mechanism or competing pathways; consider segmental analysis for different reaction phases.

- Non-integer orders may suggest mixed mechanisms; test hypotheses with additional designed experiments.

- Ensure temperature control throughout experiments, as small variations can significantly impact rate constants.

Protocol: Machine Learning-Enhanced Reaction Optimization with TRACER Framework

Objective: Implement the TRACER (conditional transformer with MCTS) framework for molecular optimization with synthetic feasibility constraints.

Materials and Software:

- Chemical reaction dataset (e.g., USPTO with 1,000 reaction types) [14]

- Python environment with PyTorch/TensorFlow

- TRACER implementation (available from original publication)

- RDKit or similar cheminformatics toolkit

- High-performance computing resources (recommended for training)

Procedure:

Data Preparation and Preprocessing

- Curate reaction dataset with reactant-product pairs and associated reaction types.

- Standardize molecular representations (SMILES) and remove duplicates or erroneous entries.

- Split data into training (80%), validation (10%), and test (10%) sets.
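The de-duplication and 80/10/10 split can be sketched with the standard library alone. This is a minimal illustration under stated assumptions: records are treated as plain reaction strings, duplicates are detected by exact string match (a real pipeline would canonicalize SMILES with a cheminformatics toolkit first), and the record contents and function name are hypothetical.

```python
import random


def split_dataset(records, fractions=(0.8, 0.1, 0.1), seed=0):
    """Remove exact duplicates, shuffle reproducibly, and split into three sets."""
    unique = list(dict.fromkeys(records))  # keeps first occurrence, preserves order
    random.Random(seed).shuffle(unique)
    n_train = int(fractions[0] * len(unique))
    n_val = int(fractions[1] * len(unique))
    train = unique[:n_train]
    val = unique[n_train:n_train + n_val]
    test = unique[n_train + n_val:]
    return train, val, test


# Hypothetical reaction records in "reactants>>product" form, with duplicates.
records = [f"R{i}>>P{i}" for i in range(100)] + ["R0>>P0", "R1>>P1"]
train, val, test = split_dataset(records)
print(len(train), len(val), len(test))
```

Fixing the seed keeps the split reproducible between runs, which matters when validation accuracy is used to compare hyperparameter settings.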

Model Training

- Implement conditional transformer architecture with encoder-decoder structure.

- Train model to predict products from reactants and reaction type conditioning.

- Monitor training with loss function and accuracy metrics (partial accuracy and perfect accuracy).

- Optimize hyperparameters (learning rate, batch size, number of layers) using validation set performance.

Molecular Optimization with MCTS

- Select starting molecule(s) for optimization based on project goals.

- Implement Monte Carlo Tree Search with expansion guided by reaction template predictions.

- Use property prediction model (e.g., QSAR for target protein) as reward function.

- Run MCTS for predetermined number of steps (e.g., 200 steps as in original publication).
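The exploration-exploitation trade-off inside the tree search can be illustrated with the UCB1 rule that is commonly used for node selection in MCTS. This is a generic sketch, not the selection rule of the TRACER implementation; the child records, reward values, and the exploration constant `c` are hypothetical.

```python
import math

def ucb1(total_reward, visits, parent_visits, c=1.4):
    """Upper confidence bound: mean reward plus an exploration bonus."""
    if visits == 0:
        return math.inf                    # always try unvisited children first
    return total_reward / visits + c * math.sqrt(math.log(parent_visits) / visits)

def select_child(children, parent_visits, c=1.4):
    """Pick the child (dict with 'reward' and 'visits') with the highest UCB1."""
    return max(children, key=lambda ch: ucb1(ch["reward"], ch["visits"], parent_visits, c))

# Hypothetical children of one node, each reached through a reaction type
children = [
    {"name": "amide coupling", "reward": 6.0, "visits": 10},
    {"name": "Suzuki coupling", "reward": 2.5, "visits": 3},
    {"name": "reductive amination", "reward": 0.0, "visits": 0},
]
first = select_child(children, parent_visits=13)
```

Raising `c` favors rarely visited branches and lowering it favors branches that already score well, which is the knob to adjust when generated molecules lack diversity.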

Synthetic Pathway Evaluation

- Evaluate generated compounds for synthetic accessibility using forward prediction accuracy.

- Prioritize compounds with high predicted activity and feasible synthesis pathways.

- Validate top candidates through experimental testing or additional computational studies.

Troubleshooting:

- Low perfect accuracy may indicate need for more training data or architecture adjustment.

- Limited chemical diversity in generated molecules may require adjustment of exploration-exploitation balance in MCTS.

- Reaction template mismatches can be addressed through expanded template set or relaxed matching criteria.

Protocol: Solvent Greenness Assessment with LSER Modeling

Objective: Develop Linear Solvation Energy Relationships to guide green solvent selection.

Materials and Software:

- Kinetic data for target reaction in multiple solvents

- Solvatochromic parameters (α, β, π*) for candidate solvents

- Greenness metrics (e.g., CHEM21 guide scores)

- Statistical software for multiple linear regression

Procedure:

Experimental Data Collection

- Perform kinetic experiments for target reaction in at least 8-10 solvents with diverse polarity characteristics.

- Determine rate constants (k) in each solvent at constant temperature.

- Compile solvatochromic parameters (α - hydrogen bond donation, β - hydrogen bond acceptance, π* - dipolarity/polarizability) for each solvent.

LSER Model Development

- Perform multiple linear regression of ln(k) against solvent parameters: ln(k) = c + aα + bβ + pπ*

- Evaluate statistical significance of each parameter using p-values (< 0.05 threshold).

- Validate model using leave-one-out cross-validation or similar technique.

- Apply model to predict performance in untested solvents.
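The regression step can be sketched in pure Python by solving the normal equations for ln(k) = c + aα + bβ + pπ*. The function name `fit_lser` and the solvent parameters below are hypothetical, and the ln(k) values are generated from known coefficients so that the fit can be checked. In practice a statistics package would be used, since it also reports the p-values needed for the significance test.

```python
def fit_lser(alpha, beta, pi_star, ln_k):
    """Ordinary least squares for ln(k) = c + a*alpha + b*beta + p*pi_star."""
    rows = [[1.0, a, b, p] for a, b, p in zip(alpha, beta, pi_star)]
    n = 4
    # Normal equations (X^T X) w = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    xty = [sum(r[i] * y for r, y in zip(rows, ln_k)) for i in range(n)]
    # Gaussian elimination with partial pivoting on the augmented matrix
    aug = [xtx[i] + [xty[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for j in range(col, n + 1):
                aug[r][j] -= factor * aug[col][j]
    # Back substitution
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        s = sum(aug[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (aug[i][n] - s) / aug[i][i]
    return coeffs  # [c, a, b, p]

# Hypothetical solvatochromic parameters for eight solvents
alpha = [0.00, 0.00, 0.08, 0.19, 0.76, 0.98, 0.00, 0.20]
beta = [0.76, 0.69, 0.43, 0.40, 0.84, 0.66, 0.55, 0.10]
pi_star = [1.00, 0.88, 0.71, 0.75, 0.48, 0.60, 0.58, 0.82]
# Synthetic ln(k) built from c = -12.1, a = 0, b = 3.1, p = 4.2
ln_k = [-12.1 + 3.1 * b + 4.2 * p for b, p in zip(beta, pi_star)]
c, a, b, p = fit_lser(alpha, beta, pi_star, ln_k)
```

With only 8 to 10 solvents and four fitted coefficients, the model has few degrees of freedom, which is why dropping non-significant terms and cross-validating matter.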

Greenness Assessment

- Compile greenness metrics for solvents (safety, health, environment scores from CHEM21 or similar guide).

- Create combined greenness score (sum of S+H+E or worst score approach).

- Plot ln(k) predicted from LSER against solvent greenness to identify optimal solvents balancing performance and sustainability.
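The two ways of combining scores can be compared with a short sketch. It assumes that lower safety, health, and environment scores are greener, as in CHEM21-style scoring; the solvent names, scores, and predicted ln(k) values are hypothetical.

```python
def combined_greenness(scores, method="sum"):
    """Combine safety, health and environment scores (lower = greener)."""
    s, h, e = scores
    return s + h + e if method == "sum" else max(s, h, e)

# Hypothetical (S, H, E) scores and LSER-predicted ln(k) for three candidate solvents
candidates = {
    "solvent A": {"she": (3, 2, 3), "ln_k": -6.5},
    "solvent B": {"she": (1, 1, 5), "ln_k": -5.8},
    "solvent C": {"she": (4, 6, 5), "ln_k": -5.2},
}

def shortlist(candidates, max_score, method="sum"):
    """Keep solvents at or below a greenness cutoff, fastest predicted rate first."""
    kept = [(name, d["ln_k"]) for name, d in candidates.items()
            if combined_greenness(d["she"], method) <= max_score]
    return [name for name, _ in sorted(kept, key=lambda x: x[1], reverse=True)]

by_sum = shortlist(candidates, max_score=8, method="sum")
by_worst = shortlist(candidates, max_score=4, method="worst")
```

The summed score lets a low value in one category offset a high value in another, whereas the worst-score approach rejects any solvent with a single serious hazard, so the two shortlists can differ.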

Experimental Validation

- Select top candidate solvents identified through LSER and greenness analysis.

- Validate predicted reaction rates experimentally.

- Assess practical considerations (cost, availability, purification) for final solvent selection.

Troubleshooting:

- Poor regression fit may indicate mechanism change across solvents; analyze kinetics for consistency.

- Limited solvent diversity in parameter space can reduce model predictive power; include solvents spanning wide range of α, β, π* values.

- Discrepancies between predicted and experimental rates may indicate specific solvent-solute interactions not captured by standard solvatochromic parameters.

Data Presentation

Quantitative Comparison of Green Chemistry Metrics

Table 1: Comparative analysis of computational approaches for green chemistry optimization

| Methodology | Primary Green Principles Addressed | Quantitative Improvement Reported | Computational Resource Requirements | Experimental Validation Required |

|---|---|---|---|---|

| VTNA with LSER [9] | Principles 1, 5, 6, 9 | 19% waste reduction, 56% productivity improvement [12] | Low (spreadsheet-based) | Moderate (kinetic validation) |

| ML-Based Molecular Optimization [14] | Principles 1, 2, 3, 12 | Up to 80% reduction in experimental iterations [15] | High (GPU-intensive training) | High (synthesis validation) |

| Computational Catalyst Design [11] [13] | Principles 1, 9 | >75% reduction in CO₂, water, waste [11] | Medium-High (DFT calculations) | High (catalyst testing) |

| Solvent Greenness Assessment [9] | Principles 3, 5, 12 | Identification of alternatives to problematic solvents (e.g., DMSO) | Low-Medium (regression analysis) | Moderate (solvent performance testing) |

| Reaction Prediction Algorithms [13] [14] | Principles 1, 2, 9 | Perfect accuracy up to 0.6 with conditional transformers [14] | Medium-High (HPC implementation) | High (reaction validation) |

Performance Metrics for AI in Green Chemistry

Table 2: Performance comparison of AI models for reaction prediction and optimization

| Model Architecture | Application | Key Performance Metrics | Green Chemistry Impact | Limitations |

|---|---|---|---|---|

| Conditional Transformer [14] | Reaction product prediction | Perfect accuracy: 0.6 (vs. 0.2 unconditional) | Reduces failed experiments and waste | Requires large, curated reaction datasets |

| Graph Convolutional Networks (GCN) [14] | Reaction template prediction | Top-10 accuracy for diverse reaction types | Enables synthesis-aware molecular design | Limited to known reaction templates |

| Monte Carlo Tree Search (MCTS) [14] | Molecular optimization | Successful generation of high-activity compounds | Optimizes for multiple properties simultaneously | Computationally intensive for large spaces |

| Density Functional Theory (DFT) [13] | Reaction mechanism elucidation | Accurate prediction of regioselectivity | Guides development of more selective catalysts | High computational cost limits system size |

| Machine Learning (Random Forest, etc.) [11] [15] | Property prediction | Outperforms traditional methods in borylation site prediction | Reduces resource consumption through accurate prediction | Dependent on quality and size of training data |

The Scientist's Toolkit

Table 3: Essential computational tools and resources for in silico green chemistry

| Tool/Resource | Function | Access Method | Application in Green Chemistry |

|---|---|---|---|

| VTNA Spreadsheet [9] | Determination of reaction orders from kinetic data | Supplementary materials from publications | Optimizes reaction conditions to prevent waste (Principle 1) |

| Rosetta Software Suite [18] | Biomacromolecular modeling and design | Academic license (RosettaCommons) | Enables enzyme design for biocatalysis (Principle 9) |

| PyRosetta [18] | Python-based interface for Rosetta | Open source with C++ license | Facilitates protein design for sustainable catalysis |

| DFT Packages (NWChem) [13] | Quantum chemical calculations | Open source | Predicts reaction mechanisms and selectivity (Principles 1, 3) |

| Reaction Datasets (USPTO) [14] | Training data for ML models | Publicly available | Enables synthesis-aware molecular design (Principle 2) |

| CHEM21 Solvent Selection Guide [9] | Solvent greenness assessment | Published guide | Guides safer solvent selection (Principle 5) |

| TRACER Framework [14] | Molecular optimization with synthetic awareness | Code from publication | Generates synthesizable compounds with desired properties |

| Green Metrics Calculators [9] [17] | Process Mass Intensity, E-factor, etc. | Custom spreadsheets or tools | Quantifies environmental impact of processes |

Workflow Visualization

The following diagram illustrates the integrated workflow for implementing green chemistry principles through in silico optimization, from initial computational design to experimental validation and final process selection.

The field of organic chemistry is undergoing a profound digital transformation, moving beyond traditional laboratory confines into a data-driven discipline where chemoinformatics and machine learning (ML) are accelerating the path toward sustainable innovation [19]. This paradigm shift is particularly pivotal for green chemistry, where the core objectives of minimizing waste, reducing hazardous reagent use, and lowering energy consumption align perfectly with the predictive power of in silico methodologies [19]. By leveraging vast datasets from digitized patents, academic literature, and reaction databases, researchers can now predict reaction outcomes, optimize synthetic pathways, and design novel compounds with desirable properties before setting foot in the laboratory [19]. This approach, often termed "predictive synthesis," empowers chemists to maximize efficiency and adhere to green chemistry principles by drastically cutting down on trial-and-error experimentation [19] [20]. The integration of these computational tools is not merely an enhancement of traditional methods but a fundamental reimagining of the research and development workflow, enabling a more rational and sustainable design of chemical reactions and processes.

Application Note: A Multi-Objective Workflow for Optimizing Nitroso Reaction Selectivity

Background and Objectives

A central challenge in sustainable synthesis is controlling selectivity in reactions where multiple pathways compete, as this directly impacts atom economy and waste generation. This application note describes an in silico guidance system that maps and optimizes the competition between the hetero-Diels-Alder and Mukaiyama aldol reactions of C-nitroso compounds with 3-trialkylsilyl dienes [20]. The primary objective was to identify reaction conditions that simultaneously maximize several desired outcomes (conversion, selectivity, and output), irrespective of the process mode (batch or flow), thereby providing a general framework for rational reaction design in green chemistry [20].

Key Findings and Quantitative Outcomes

The integrated workflow successfully predicted distinct reactivity trends across different electrophiles and dienes. Experimental validation confirmed the in silico predictions, highlighting the reliability of the approach. The key to its success was the ability to screen reagent candidates efficiently and predict critical transition state features without the need for full localization, thus conserving computational resources [20]. The table below summarizes the core computational modules and their specific roles in achieving the study's objectives.

Table 1: Core Computational Modules and Functions for Reaction Optimization

| Module Name | Primary Function | Key Output | Impact on Green Chemistry |

|---|---|---|---|

| Semi-Empirical QM Calculations | Rapid screening of reagent candidates | Energetic feasibility of reaction pathways | Reduces computational resource burden |

| Supervised Machine Learning | Prediction of key transition state features | Insights into kinetics and selectivity | Avoids resource-intensive calculations |

| Bayesian Optimizer | Multi-objective identification of optimal conditions | Conditions for max conversion & selectivity | Minimizes experimental waste & energy use |

Experimental Protocol

Protocol 1: Multi-Objective In Silico Guidance for Reaction Optimization

This protocol describes the steps for implementing the computational intelligence framework to optimize competing reaction pathways [20].

Step 1: Data Curation and Initial Screening

- Action: Compile a dataset of known reactions and corresponding conditions from literature and internal databases. Convert molecular structures into a machine-readable format (e.g., SMILES strings).

- Reagents & Tools: Use a tool like Open Babel or RDKit for format conversion and structure standardization [21].

- Rationale: This provides the foundational data for the machine learning model. Standardized structures ensure descriptor calculation consistency.

Step 2: Descriptor Calculation and Molecular Representation

- Action: Calculate molecular descriptors for all reagents and potential products. PaDEL-Descriptor is a suitable tool for calculating a wide array of 1D and 2D descriptors [6]. Alternatively, use RDKit for its comprehensive descriptor calculation capabilities [19] [21].

- Rationale: Descriptors quantitatively represent molecular structures, enabling the ML model to learn structure-property relationships.

Step 3: Machine Learning Model for Transition State Prediction

- Action: Train a supervised ML model (e.g., Multiple Linear Regression, Random Forest as explored in similar studies [6]) to predict key transition state features or activation energies based on the calculated descriptors and results from preliminary semi-empirical quantum mechanics (QM) calculations.

- Rationale: This bypasses the need for computationally expensive full transition state localization for every candidate, enabling rapid screening.

Step 4: Bayesian Optimization for Condition Selection

- Action: Feed the ML model's predictions into a Bayesian optimizer. Define the objectives (e.g., maximize conversion, maximize selectivity for the desired product).

- Rationale: The Bayesian optimizer intelligently explores the multi-dimensional condition space (e.g., temperature, concentration, catalyst loading) to find the Pareto-optimal set of conditions that best satisfy all objectives [20].
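The notion of a Pareto-optimal set can be made concrete with a small filter. This sketch covers only the final selection of non-dominated conditions, not the Bayesian optimizer itself; the predicted (conversion, selectivity) pairs are hypothetical.

```python
def pareto_front(points):
    """Return points not dominated by any other (all objectives maximized)."""
    def dominates(p, q):
        # p dominates q if it is at least as good everywhere and better somewhere
        return all(a >= b for a, b in zip(p, q)) and any(a > b for a, b in zip(p, q))
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical predicted (conversion, selectivity) for five condition sets
predictions = [(0.95, 0.60), (0.80, 0.85), (0.70, 0.90), (0.75, 0.80), (0.60, 0.55)]
front = pareto_front(predictions)
```

Every point on the front is a defensible choice; picking among them depends on how conversion and selectivity are weighted for the process at hand.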

Step 5: Experimental Validation and Model Refinement

- Action: Execute the top-ranked reactions in the laboratory under the predicted optimal conditions.

- Rationale: This provides ground-truth data to validate the in silico predictions. The new experimental data can be fed back into the dataset to iteratively refine and improve the model's accuracy.

Application Note: Predicting Enzymatic Fate for Safer Chemical Design

Background and Objectives

The metabolic fate of a chemical in a biological or environmental system is a critical sustainability and safety parameter. Unintended enzymatic conversion can lead to the formation of toxic metabolites or render a compound inactive, contributing to waste and potential harm [6]. While traditional in silico prediction focused on a limited set of enzymes like CYP450, a broader view is necessary for a comprehensive assessment [6]. This application note summarizes the development and application of a robust ML model designed to predict which of thousands of human enzymes can accept a given chemical compound as a substrate, based on its chemical and physical similarity to known enzyme substrates [6].

Key Findings and Quantitative Outcomes

The model demonstrated high predictive performance, achieving an Area Under the Curve (AUC) of 0.896 during development and 0.746 on an independent test dataset from DrugBank [6]. This high accuracy, despite the large number of enzymes considered, fosters the discovery of new metabolic routes and accelerates the computational development of safer drug candidates and chemicals by predicting potential conversions into active or inactive forms [6]. The model's performance benchmarked against other tools is shown below.

Table 2: Performance Benchmarking of Enzyme Reaction Prediction Models

| Model/Method | Basis of Prediction | Number of Enzymes Covered | Reported Performance (AUC) |

|---|---|---|---|

| Described ML Model [6] | Physico-chemical similarity of substrates | 2,118 human enzymes | 0.896 (Training), 0.746 (Test) |

| admetSAR [6] | ADMET-focused feature analysis | Specific profiles (e.g., CYP2C9, CYP2D6) | Comparable performance for specific CYPs |

| deepDTI [6] | Deep-belief network for drug-target interaction | Customizable based on training data | Performance requires training with specific dataset |

Experimental Protocol

Protocol 2: In Silico Prediction of Enzyme-Chemical Interactions

This protocol outlines the workflow for building a model to predict the interaction between a query molecule and a broad spectrum of enzymes [6].

Step 1: Data Extraction and Curation

- Action: Extract human enzymes and their known substrates from curated databases such as the Human Metabolome Database (HMDB) and BRaunschweig ENzyme DAtabase (BRENDA). Resolve compound names to standard SMILES representations.

- Rationale: Building a reliable model requires a comprehensive and accurately represented dataset of known enzyme-substrate pairs.

Step 2: Descriptor Calculation and Pairwise Feature Generation

- Action: Calculate 1D and 2D molecular descriptors for all substrates using a tool like PaDEL-Descriptor [6]. For every possible pair of substrates, generate a new set of features by calculating the absolute difference between each of their descriptors.

- Rationale: The subtracted descriptors quantitatively represent the physico-chemical similarity between two molecules, which is the core hypothesis for shared enzyme specificity.

Step 3: Dataset Labeling and Dimensionality Reduction

- Action: Label each pair of substrates with '1' if both are substrates of the same enzyme, and '0' otherwise. To manage the high dimensionality, reduce the feature set by selecting the top 'n' descriptors with the highest point-biserial correlation coefficient with the labels [6].

- Rationale: This creates a supervised learning dataset and improves model efficiency by eliminating non-informative features.
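Steps 2 and 3 can be sketched together: build absolute-difference features for every substrate pair, label each pair, and rank descriptors by their point-biserial correlation with the labels (which equals the Pearson correlation when one variable is binary). The substrate names, descriptor values, and enzyme assignments are hypothetical.

```python
import math
from itertools import combinations

def pair_features(desc_a, desc_b):
    """Absolute difference of each descriptor for a pair of substrates."""
    return [abs(a - b) for a, b in zip(desc_a, desc_b)]

def point_biserial(values, labels):
    """Pearson correlation between a continuous feature and 0/1 labels."""
    n = len(values)
    mv, ml = sum(values) / n, sum(labels) / n
    cov = sum((v - mv) * (lab - ml) for v, lab in zip(values, labels))
    sv = math.sqrt(sum((v - mv) ** 2 for v in values))
    sl = math.sqrt(sum((lab - ml) ** 2 for lab in labels))
    return cov / (sv * sl)

# Hypothetical substrates: descriptor vector and the set of enzymes acting on each
substrates = {
    "s1": ([180.2, 1.2, 3.0], {"E1"}),
    "s2": ([182.1, 1.4, 5.0], {"E1"}),
    "s3": ([310.5, 4.8, 4.0], {"E2"}),
    "s4": ([305.9, 4.5, 1.0], {"E2"}),
}
features, labels = [], []
for (_, (da, ea)), (_, (db, eb)) in combinations(substrates.items(), 2):
    features.append(pair_features(da, db))
    labels.append(1 if ea & eb else 0)     # 1 = the pair shares at least one enzyme

correlations = [point_biserial([f[j] for f in features], labels) for j in range(3)]
# Rank descriptor indices by |r|; the top n would be kept
ranked = sorted(range(3), key=lambda j: abs(correlations[j]), reverse=True)
```

A strongly negative correlation is the informative case here: pairs that share an enzyme have small descriptor differences.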

Step 4: Model Training and Validation

- Action: Train multiple machine learning algorithms (e.g., Multiple Linear Regression, Random Forest, Neural Networks) using the labeled and reduced-feature dataset. Employ a k-fold cross-validation strategy in which the data are partitioned by enzyme, not by substrate, to guard against overfitting to the enzymes seen during training [6].

- Rationale: Partitioning by enzymes ensures that the model is evaluated on its ability to generalize to new enzymes, not just new substrates for seen enzymes.
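Partitioning by enzyme can be sketched as a group-wise fold assignment. The function name `enzyme_folds` and the records are hypothetical; scikit-learn's `GroupKFold` provides the same kind of split for real datasets.

```python
def enzyme_folds(pairs, n_folds=3):
    """Assign every record to a fold by its enzyme, so no enzyme spans two folds."""
    enzymes = sorted({enzyme for enzyme, _ in pairs})
    fold_of = {enzyme: i % n_folds for i, enzyme in enumerate(enzymes)}
    folds = [[] for _ in range(n_folds)]
    for enzyme, substrate in pairs:
        folds[fold_of[enzyme]].append((enzyme, substrate))
    return folds

# Hypothetical (enzyme, substrate) records
pairs = [("E1", "s1"), ("E1", "s2"), ("E2", "s3"), ("E2", "s4"),
         ("E3", "s5"), ("E4", "s6"), ("E4", "s7")]
folds = enzyme_folds(pairs)
for i, held_out in enumerate(folds):
    train = [rec for j, f in enumerate(folds) if j != i for rec in f]
    # Enzymes in the held-out fold never appear in the training folds
    assert not {e for e, _ in held_out} & {e for e, _ in train}
```

A plain random split would place substrates of the same enzyme on both sides of the split, so the validation score would overstate performance on enzymes the model has never seen.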

Step 5: Score Integration for Query Molecules

- Action: For a new query molecule, generate pairwise similarity scores with all known substrates of a given enzyme. Integrate these multiple scores into a single, robust prediction score using the custom integration function described in the original study, which emphasizes scores above the average [6].

- Rationale: This provides a single, interpretable probability score indicating the likelihood that the query molecule is a substrate for the enzyme.

The Scientist's Toolkit: Essential Cheminformatics Reagents & Software

The practical application of the protocols above relies on a suite of software "reagents" and computational tools. The following table details key open-source and commercial solutions that form the backbone of modern, sustainable in silico research [19] [21].

Table 3: Essential Software Tools for Sustainable Cheminformatics Research

| Tool Name | Type/Category | Primary Function in Sustainable Chemistry | Key Green Chemistry Application |

|---|---|---|---|

| RDKit [19] [21] | Open-Source Cheminformatics Toolkit | Molecule manipulation, descriptor calculation, & QSAR modeling | Accelerates molecular design & property prediction, reducing lab waste. |

| PaDEL-Descriptor [6] | Descriptor Calculation Software | Calculates 1D & 2D molecular descriptors from structures | Provides essential features for ML models predicting activity/toxicity. |

| Open Babel [21] | Chemical File Format Tool | Converts between numerous chemical file formats | Ensures interoperability and data sharing between different software tools. |

| IBM RXN / AiZynthFinder [19] | AI-Powered Synthesis Tools | Predicts retrosynthetic pathways & reaction outcomes | Identifies shortest, safest synthetic routes, minimizing waste & energy. |

| AutoDock / Gnina [19] [22] | Molecular Docking Software | Performs virtual screening of molecules against protein targets | Identifies potential drug candidates early, reducing costly synthetic dead-ends. |

| JChem Microservices [23] | Commercial Cheminformatics Suite | Provides scalable chemical intelligence (property calculation, search) via API | Enables robust database management and high-throughput in silico screening. |

| ChemProp [19] [22] | Machine Learning Package | Message-passing neural networks for molecular property prediction | Highly accurate prediction of physico-chemical and ADMET properties. |

Workflow Visualization for Sustainable Cheminformatics

The following diagram illustrates the integrated, iterative workflow that combines the elements discussed into a powerful engine for sustainable chemistry discovery.

In Silico Guided Sustainable Chemistry Workflow

The integration of cheminformatics and machine learning is ushering in a new era for sustainable chemistry. The application notes and protocols detailed herein demonstrate a tangible path toward replacing resource-intensive trial-and-error with rational, data-driven design. By leveraging powerful software tools and robust computational workflows, researchers can now accurately predict reaction outcomes, optimize for multiple green objectives simultaneously, and anticipate the biological and environmental interactions of chemicals before they are synthesized. This in silico revolution is not just about increasing speed and efficiency; it is a fundamental enabler for designing chemical processes and products that are inherently safer, less wasteful, and more aligned with the principles of green chemistry. As these computational methodologies continue to evolve and become more accessible, they will undoubtedly become the standard practice for advancing both scientific discovery and global sustainability goals.

Core Methodologies and Tools: A Practical Guide to Predicting and Optimizing Reaction Conversion

Kinetic Analysis with Variable Time Normalization Analysis (VTNA) for Determining Reaction Orders

Variable Time Normalization Analysis (VTNA) is a visual kinetic analysis method that simplifies the determination of global rate laws for chemical reactions under synthetically relevant conditions. By enabling the efficient optimization of reactions, VTNA plays a crucial role in advancing the goals of green chemistry by helping to reduce waste, improve energy efficiency, and minimize the environmental impact of chemical processes. The method allows researchers to determine reaction orders without requiring bespoke software or complex mathematical calculations, making kinetic analysis more accessible to the synthetic chemistry community [24]. When integrated with in silico prediction tools, VTNA provides a powerful framework for screening reaction conditions computationally before conducting laboratory experiments, thereby supporting the principles of green chemistry through reduced experimental waste and enhanced process efficiency [9].

Theoretical Foundation of VTNA

The Global Rate Law

The global rate law is a mathematical expression that correlates the rate of a reaction with the concentrations of each reaction species, taking the general form:

$\text{Rate} = k_{\text{obs}}[A]^{m}[B]^{n}[C]^{p}$

where [A], [B], and [C] represent the molar concentrations of the reacting components; $k_{\text{obs}}$ is the observed rate constant; and m, n, and p are the orders of the reaction with respect to each reaction component [24]. VTNA enables the empirical construction of this rate law from experimental data without explicit consideration of the reaction mechanism.

Fundamental Principles of VTNA

Traditional VTNA involves normalizing the time axis of concentration-time data with respect to a particular reaction species whose initial concentration varies across different experiments. The core principle is that concentration profiles linearize when the time axis is normalized with respect to every reaction component raised to its correct order [24]. Researchers typically test several reaction orders through trial-and-error until they identify the order that gives the best visual overlay of the concentration profiles [24]. The transformation of the time axis for a reaction species depends on its concentration and the hypothesized order.
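The time-axis transformation can be sketched as a cumulative sum. In VTNA the normalized time for a species X and trial order n is the integral of [X]^n over time, which is approximated here with the trapezoid rule between sampling points; the function name `normalized_time` and the data are hypothetical.

```python
def normalized_time(times, conc, order):
    """Cumulative trapezoid approximation of the integral of [X]^order dt."""
    t_norm = [0.0]
    for i in range(1, len(times)):
        # Average of [X]^order over the interval, multiplied by its length
        mean_power = (conc[i] ** order + conc[i - 1] ** order) / 2.0
        t_norm.append(t_norm[-1] + mean_power * (times[i] - times[i - 1]))
    return t_norm

# Hypothetical profile: time in min, concentration of species X in M
times = [0.0, 1.0, 2.0, 4.0]
conc_x = [1.0, 0.8, 0.6, 0.4]
t_first = normalized_time(times, conc_x, order=1)   # trial order 1
t_zero = normalized_time(times, conc_x, order=0)    # order 0 leaves time unchanged
```

Plotting product or substrate concentration against `t_first` for several experiments, and repeating for other trial orders, reproduces the manual overlay test.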

VTNA Methodologies and Protocols

Manual VTNA Using Spreadsheets

The traditional approach to VTNA utilizes spreadsheet software to manipulate kinetic data and perform time normalization.

Table 1: Key Steps in Manual VTNA Implementation

| Step | Procedure | Purpose | Green Chemistry Connection |

|---|---|---|---|

| 1. Data Collection | Record reaction component concentrations at timed intervals using analytical methods (e.g., NMR spectroscopy) | Generate kinetic profiles under synthetically relevant conditions | Enables reaction optimization to minimize waste |

| 2. Data Entry | Input concentration-time data into spreadsheet templates | Organize data for systematic analysis | Facilitates in silico screening before experimental work |

| 3. Time Transformation | Normalize time axis using $t_{\text{norm}} = \sum [\text{species}]^{n}\,\Delta t$ for trial order values (n); this reduces to t × [species]^n when the concentration is constant | Linearize concentration profiles when correct orders are used | Identifies optimal conditions to reduce energy consumption |

| 4. Order Determination | Identify order values that produce best overlay of normalized profiles | Establish empirical reaction orders without mechanistic assumptions | Supports atom economy through understanding reaction efficiency |

| 5. Rate Constant Calculation | Determine $k_{\text{obs}}$ from normalized profiles | Quantify reaction performance under different conditions | Enables selection of greener reaction conditions |

A specialized spreadsheet for reaction optimization can perform multiple functions including VTNA, linear solvation energy relationships (LSER), and solvent greenness calculations [9]. This integrated approach allows researchers to understand the variables controlling reaction chemistry so they can be optimized for greener outcomes.

Automated VTNA Platforms

Recent advances have led to the development of automated VTNA tools that significantly reduce analysis time and remove human bias from order determination.

Auto-VTNA is a Python package that automatically determines reaction orders for multiple species concurrently by computationally assessing the overlay across a wide range of order value combinations [24]. The program uses a mesh of order values within a specified range (e.g., -1.5 to 2.5) and evaluates each combination of orders by normalizing the time axis and calculating an "overlay score" based on how well the transformed concentration profiles fit a common flexible function [24].

Auto-VTNA Workflow:

- Define a mesh of potential order values within a specified range

- Create a list of every combination of reaction order values

- For each combination, normalize the time axis and fit transformed concentration profiles

- Calculate an overlay score (e.g., RMSE) to quantify the degree of overlay

- Refine order values around the optimal combination to increase precision [24]
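The mesh-and-score idea can be illustrated for a single species with a simplified stand-in for the overlay score. Instead of fitting a flexible function as Auto-VTNA does, this sketch pools the normalized profiles, sorts them by normalized time, and sums the absolute jumps in concentration, which is smallest when the curves collapse onto one monotonic trace. All names and the synthetic data are hypothetical.

```python
import math

def normalized_time(times, conc, order):
    """Trapezoid approximation of the integral of [X]^order dt."""
    out = [0.0]
    for i in range(1, len(times)):
        mean_power = (conc[i] ** order + conc[i - 1] ** order) / 2.0
        out.append(out[-1] + mean_power * (times[i] - times[i - 1]))
    return out

def overlay_score(experiments, order):
    """Total variation of the pooled profile after normalization (lower = better)."""
    pooled = []
    for times, normalizer, observed in experiments:
        pooled += zip(normalized_time(times, normalizer, order), observed)
    pooled.sort()
    return sum(abs(b[1] - a[1]) for a, b in zip(pooled, pooled[1:]))

def best_order(experiments, low=-1.5, high=2.5, step=0.5):
    """Scan an evenly spaced mesh of order values and return the best one."""
    n_steps = int(round((high - low) / step))
    mesh = [low + i * step for i in range(n_steps + 1)]
    return min(mesh, key=lambda n: overlay_score(experiments, n))

# Synthetic data: substrate decay exp(-k*[cat]*t) with k = 1, true catalyst order = 1
times = [0.5 * i for i in range(11)]
experiments = []
for cat in (0.5, 1.0, 2.0):
    substrate = [math.exp(-1.0 * cat * t) for t in times]
    experiments.append((times, [cat] * len(times), substrate))
order = best_order(experiments)
```

Extending the scan to several species means iterating over every combination of mesh values, and a second pass with a finer step around the best combination corresponds to the refinement step in the workflow.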

Table 2: Comparison of VTNA Implementation Methods

| Feature | Manual VTNA (Spreadsheet) | Auto-VTNA (Python) |

|---|---|---|

| Accuracy | Dependent on user's visual assessment | Quantitative, reproducible metrics |

| Efficiency | Time-consuming trial and error | Rapid automated processing |

| Multi-component Systems | Sequential analysis of species | Concurrent determination of all orders |

| Error Quantification | Qualitative visual assessment | Quantitative error analysis |

| Accessibility | Requires only basic spreadsheet skills | Requires programming knowledge or GUI use |

| Visualization | Manual plot inspection | Automated generation of overlay score plots |

Auto-VTNA provides quantitative metrics for assessing the quality of the overlay, classifying optimal overlay scores (when set to RMSE) as excellent (<0.03), good (0.03-0.08), reasonable (0.08-0.15), or poor (>0.15) [24].
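These bands translate directly into a small helper for reporting results. The function name is hypothetical, and assigning each boundary value (0.03, 0.08, 0.15) to a particular band is an assumption, since the quoted ranges do not say which side is inclusive.

```python
def classify_overlay(rmse):
    """Map an RMSE overlay score to the qualitative bands quoted above."""
    if rmse < 0.03:
        return "excellent"
    if rmse <= 0.08:        # boundary handling is an assumption
        return "good"
    if rmse <= 0.15:        # boundary handling is an assumption
        return "reasonable"
    return "poor"
```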

Experimental Design for VTNA

Proper experimental design is crucial for obtaining high-quality kinetic data for VTNA:

- "Different Excess" Experiments: Conduct multiple reactions where initial concentrations of reactants are systematically varied while maintaining a constant concentration of other components [24].

- Data Density: Collect sufficient data points throughout the reaction progress to accurately define concentration profiles.

- Temperature Control: Maintain constant temperature during kinetic experiments to isolate concentration effects.

- Analytical Methods: Use appropriate analytical techniques (e.g., NMR spectroscopy, HPLC) for accurate concentration measurements [25].

Advanced VTNA Applications

Handling Catalyst Activation and Deactivation

VTNA provides powerful methods for analyzing reactions complicated by catalyst activation or deactivation processes, which are common challenges in sustainable catalysis development.

The first treatment allows removal of induction periods or rate perturbations associated with catalyst deactivation when the quantity of active catalyst can be measured throughout the reaction [25]. By normalizing the time scale using the instantaneous catalyst concentration, the intrinsic reaction profile can be revealed without complications from changing catalyst concentration.

The second treatment estimates the catalyst activation or deactivation profile when the reaction orders are known but the catalyst concentration cannot be directly measured [25]. This approach uses VTNA to deconvolve the catalyst's effect on the reaction profile by maximizing the linearity of the resulting VTNA plot, providing insight into activation/deactivation pathways and their kinetics.

VTNA for Catalyst Processes

Solvent Effects and Green Metrics Integration

VTNA can be combined with linear solvation energy relationships (LSER) to understand solvent effects on reaction rates and select greener alternatives. For example, in the aza-Michael addition between dimethyl itaconate and piperidine, VTNA revealed different reaction orders depending on the solvent, while LSER correlated rate constants with solvent polarity parameters (Kamlet-Abboud-Taft parameters) [9]. This combined approach identified that the reaction is accelerated by polar, hydrogen bond accepting solvents following the relationship: ln(k) = -12.1 + 3.1β + 4.2π* [9].
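As a worked illustration of how the fitted relationship is used, the sketch below evaluates ln(k) = -12.1 + 3.1β + 4.2π* for two solvents and converts the difference into a rate ratio. The β and π* values are hypothetical and chosen only to show the arithmetic.

```python
import math

def predict_ln_k(beta, pi_star):
    """LSER quoted for the aza-Michael example: ln(k) = -12.1 + 3.1*beta + 4.2*pi_star."""
    return -12.1 + 3.1 * beta + 4.2 * pi_star

# Hypothetical solvent parameters, not measured values
ln_k_polar = predict_ln_k(beta=0.75, pi_star=1.00)    # strong H-bond acceptor, dipolar
ln_k_apolar = predict_ln_k(beta=0.10, pi_star=0.50)   # weak acceptor, less dipolar
rate_ratio = math.exp(ln_k_polar - ln_k_apolar)       # k_polar / k_apolar
```

Because both coefficients are positive, any increase in β or π* raises the predicted rate, and the larger π* coefficient means dipolarity has the stronger effect per unit change.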

The reaction optimization spreadsheet facilitates solvent selection by plotting ln(k) against solvent greenness scores (e.g., from the CHEM21 solvent selection guide), enabling simultaneous consideration of reaction efficiency and environmental, health, and safety (EHS) profiles [9].

Research Reagent Solutions

Table 3: Essential Materials and Tools for VTNA Implementation

| Category | Specific Items | Function in VTNA | Green Chemistry Considerations |

|---|---|---|---|

| Analytical Instruments | NMR spectrometer, HPLC, ReactIR | Monitoring reaction component concentrations at timed intervals | Enables real-time monitoring to minimize sampling waste |

| Software Tools | Microsoft Excel, Python with Auto-VTNA package, Kinalite | Data processing, visualization, and automated order determination | Facilitates in silico optimization before laboratory experiments |

| Solvent Selection Guides | CHEM21 Solvent Selection Guide | Assessing environmental, health, and safety profiles of solvents | Promotes use of greener solvents with lower EHS scores |

| Reaction Components | Dimethyl itaconate, piperidine, dibutylamine (for aza-Michael model reaction) | Model substrates for method validation and optimization | Exemplifies renewable feedstocks and atom economy principles |

| Catalyst Systems | Supramolecular rhodium complexes, aminocatalysts | Studying catalyst activation and deactivation processes | Enables development of efficient catalytic systems for waste reduction |

Case Study: Aza-Michael Addition Optimization

The aza-Michael addition between dimethyl itaconate and amines serves as an illustrative case study for VTNA application in green chemistry. VTNA revealed that the reaction orders depend on the solvent: the reaction is trimolecular in aprotic solvents (second order in amine) but bimolecular in protic solvents [9]. In isopropanol, a non-integer order (1.6 with respect to piperidine) was observed, indicating competing mechanisms [9].

This kinetic understanding enabled the identification of dimethyl sulfoxide (DMSO) as an optimal solvent, balancing high reaction rate with relatively favorable greenness profile compared to more hazardous alternatives like reprotoxic N,N-dimethylformamide (DMF) [9]. The integrated approach combining VTNA with solvent greenness assessment demonstrates how kinetic analysis directly supports greener reaction design.

[Figure: VTNA Green Optimization Workflow]

Implementation Protocol

Step-by-Step VTNA Protocol for Reaction Optimization

Experimental Design Phase

- Select a model reaction system with relevance to green chemistry goals

- Design "different excess" experiments varying initial concentrations systematically

- Identify appropriate analytical methods for concentration monitoring

Data Collection Phase

- Conduct kinetic experiments under isothermal conditions

- Collect concentration-time data for all relevant reaction components

- Ensure sufficient data density throughout reaction progress

VTNA Analysis Phase

- Input concentration-time data into spreadsheet or Auto-VTNA platform

- Perform time normalization with trial order values: $t_{\mathrm{norm}} = t \times [A]^{n} \times [B]^{m}$

- Identify optimal orders that produce best overlay of normalized profiles

- Calculate rate constants ($k_{\mathrm{obs}}$) from normalized profiles
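The analysis steps above can be sketched in a few lines of Python. This is a minimal illustration and not the Auto-VTNA implementation: the data are synthetic (generated from an assumed rate law, rate = k[A][B]^2), and the function names `normalized_time`, `simulate`, and `overlay_error` are hypothetical. Because concentrations change during the reaction, the normalized time axis is accumulated interval by interval with the trapezoid rule rather than computed from a single product.

```python
import numpy as np

def normalized_time(t, concs, orders):
    """Cumulative trapezoidal sum of prod([X]^order) * dt (the VTNA time axis)."""
    weight = np.ones_like(t, dtype=float)
    for conc, order in zip(concs, orders):
        weight = weight * np.asarray(conc, dtype=float) ** order
    steps = 0.5 * (weight[1:] + weight[:-1]) * np.diff(t)
    return np.concatenate(([0.0], np.cumsum(steps)))

def simulate(a0, b0, k=0.5, dt=1e-3, n_steps=2000, keep_every=10):
    """SYNTHETIC data: Euler integration of A + B -> P with rate = k[A][B]^2."""
    a, b, rows = a0, b0, [(0.0, a0, b0)]
    for i in range(1, n_steps + 1):
        consumed = k * a * b**2 * dt
        a, b = a - consumed, b - consumed
        if i % keep_every == 0:
            rows.append((i * dt, a, b))
    return np.array(rows).T  # three arrays: t, [A], [B]

def overlay_error(run_1, run_2, order_b):
    """RMS gap between the two [A] profiles on the shared normalized-time range."""
    (t1, a1, b1), (t2, a2, b2) = run_1, run_2
    tau1 = normalized_time(t1, [b1], [order_b])
    tau2 = normalized_time(t2, [b2], [order_b])
    shared = tau1 <= tau2[-1]
    a2_on_tau1 = np.interp(tau1[shared], tau2, a2)
    return float(np.sqrt(np.mean((a1[shared] - a2_on_tau1) ** 2)))

# "Different excess" pair: same [A]0, different [B]0.
run_low = simulate(a0=1.0, b0=2.0)
run_high = simulate(a0=1.0, b0=3.0)

trial_orders = np.arange(0.0, 3.5, 0.5)
best_order = min(trial_orders, key=lambda m: overlay_error(run_low, run_high, m))
print(f"best order in B: {best_order:.1f}")
```

With real data, the same scan would be run over each species in turn, and the slope of the overlaid profile against the fully normalized time axis gives the observed rate constant.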

Green Chemistry Integration Phase

- Correlate rate constants with solvent polarity parameters (LSER)

- Assess solvent greenness using established guides (e.g., CHEM21)

- Identify optimal conditions balancing reaction efficiency and sustainability
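One way to make the "balancing" step explicit is a Pareto filter: keep every solvent for which no other candidate is both faster and greener. The sketch below assumes that a higher rate constant is better and that a lower EHS score is greener. The candidate names and numbers are placeholders for illustration, not measured values or CHEM21 scores.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Candidate:
    name: str
    k_obs: float      # observed rate constant, higher is better
    ehs_score: float  # combined EHS score, lower is greener (assumed convention)

def dominates(x: Candidate, y: Candidate) -> bool:
    """True if x is at least as good as y on both criteria and better on one."""
    no_worse = x.k_obs >= y.k_obs and x.ehs_score <= y.ehs_score
    better = x.k_obs > y.k_obs or x.ehs_score < y.ehs_score
    return no_worse and better

def pareto_front(candidates):
    """Candidates that no other candidate dominates, in input order."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]

# Placeholder values only.
candidates = [
    Candidate("solvent_A", k_obs=5.0, ehs_score=7.0),
    Candidate("solvent_B", k_obs=4.0, ehs_score=4.0),
    Candidate("solvent_C", k_obs=1.0, ehs_score=2.0),
    Candidate("solvent_D", k_obs=0.8, ehs_score=5.0),
    Candidate("solvent_E", k_obs=3.0, ehs_score=6.0),
]
front_names = [c.name for c in pareto_front(candidates)]
print(front_names)
```

The filter only removes clearly inferior options. Choosing among the remaining candidates is still a judgment about how much rate to trade for greenness.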

Validation Phase

- Predict reaction performance under new conditions using established rate law

- Verify predictions experimentally

- Calculate green metrics (atom economy, reaction mass efficiency, optimum efficiency)
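The three metrics named above follow from simple mass ratios. The sketch below uses their usual definitions (atom economy from molecular weights, reaction mass efficiency from isolated and input masses, optimum efficiency as their ratio) for a 1:1 addition of dimethyl itaconate and piperidine. The molecular weights are computed from the molecular formulas. The masses are hypothetical and chosen only to illustrate the arithmetic.

```python
def atom_economy(product_mw, reactant_mws):
    """Atom economy (%) = MW of product / sum of reactant MWs."""
    return 100.0 * product_mw / sum(reactant_mws)

def reaction_mass_efficiency(product_mass, reactant_masses):
    """RME (%) = isolated product mass / total reactant mass."""
    return 100.0 * product_mass / sum(reactant_masses)

def optimum_efficiency(rme, ae):
    """Optimum efficiency (%) = RME / AE."""
    return 100.0 * rme / ae

# Molecular weights in g/mol from molecular formulas.
MW_ITACONATE = 158.15    # dimethyl itaconate, C7H10O4
MW_PIPERIDINE = 85.15    # piperidine, C5H11N
MW_ADDUCT = MW_ITACONATE + MW_PIPERIDINE  # addition reaction, no by-product

# Hypothetical masses in grams (illustration only).
mass_itaconate, mass_piperidine, mass_product = 1.58, 1.02, 2.19

ae = atom_economy(MW_ADDUCT, [MW_ITACONATE, MW_PIPERIDINE])
rme = reaction_mass_efficiency(mass_product, [mass_itaconate, mass_piperidine])
oe = optimum_efficiency(rme, ae)
print(f"AE = {ae:.1f}%, RME = {rme:.1f}%, OE = {oe:.1f}%")
```

Because an addition reaction has 100% atom economy, the optimum efficiency here equals the RME. For reactions with by-products the two diverge.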

Variable Time Normalization Analysis provides a powerful, accessible method for determining reaction orders under synthetically relevant conditions, making it particularly valuable for green chemistry research. When integrated with in silico prediction tools and solvent greenness assessment, VTNA enables comprehensive reaction optimization that simultaneously addresses efficiency and sustainability goals. The development of automated platforms like Auto-VTNA further enhances the utility of this methodology by reducing analysis time and providing quantitative assessment of kinetic parameters. As the chemical industry continues its transition toward safer and more sustainable practices, VTNA represents a key analytical tool for developing efficient, waste-minimized chemical processes aligned with the principles of green chemistry.

Understanding Solvent Effects with Linear Solvation Energy Relationships (LSER)

Linear Solvation Energy Relationships (LSERs) represent a powerful quantitative approach for predicting the physicochemical behavior of molecules across different solvent environments. Within green chemistry and pharmaceutical research, the ability to accurately forecast partition coefficients, solubility, and reactivity in silico is paramount for designing sustainable processes and reducing experimental waste. The LSER methodology, particularly the Abraham solvation parameter model, provides a robust framework for this purpose by correlating free-energy-related properties of a solute with its fundamental molecular descriptors [26]. This approach allows researchers to model complex solvation phenomena, enabling the rational selection of environmentally benign solvents and the prediction of key environmental fate parameters, all of which align with the principles of green chemistry.

Theoretical Foundation of LSER

The LSER Formalism

The LSER model operationalizes solvation thermodynamics through linear free-energy relationships. For solute transfer between two condensed phases, the fundamental equation is expressed as:

$$\log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x$$ [26]

Where $P$ represents the partition coefficient between two phases (e.g., water-to-organic solvent), and the lowercase coefficients ($c_p$, $e_p$, $s_p$, $a_p$, $b_p$, $v_p$) are system-specific parameters characterizing the two phases. These coefficients are determined through regression against experimental data and remain constant for all solutes partitioning within the same system.

For gas-to-solvent partitioning, a slightly different equation is employed:

$$\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$$ [26]

Where $K_S$ is the gas-to-organic solvent partition coefficient, and $L$ is the solute descriptor defined as the base-10 logarithm of the gas-hexadecane partition coefficient.

Molecular Descriptors and Their Chemical Significance

The capital letters in the LSER equations represent solute-specific molecular descriptors that quantify different aspects of intermolecular interactions:

Table: LSER Molecular Descriptors and Their Physicochemical Interpretation

| Descriptor | Name | Molecular Property Quantified |
|---|---|---|
| E | Excess molar refraction | Polarizability from n- and π-electrons |
| S | Dipolarity/Polarizability | Molecular dipole moment and polarizability |
| A | Hydrogen Bond Acidity | Solute's ability to donate a hydrogen bond |
| B | Hydrogen Bond Basicity | Solute's ability to accept a hydrogen bond |
| Vx | McGowan's Characteristic Volume | Molecular size and cavity formation energy |
| L | Gas-Hexadecane Partition Coefficient | General dispersion interactions |

These descriptors collectively capture the dominant intermolecular forces governing solvation, including cavity formation, dispersion interactions, dipole-dipole interactions, and hydrogen bonding [26].

Quantitative LSER Models and Data

Representative LSER Model for Polymer-Water Partitioning

Recent research has established accurate LSER models for environmentally relevant partitioning systems. The following model for low density polyethylene (LDPE)-water partitioning demonstrates the application of LSER in predicting environmental fate of organic compounds:

$$\log K_{i,\mathrm{LDPE/W}} = -0.529 + 1.098E - 1.557S - 2.991A - 4.617B + 3.886V_x$$ [27]

This specific model was validated using 156 chemically diverse compounds (R² = 0.991, RMSE = 0.264) and independently confirmed with an additional 52 compounds (R² = 0.985, RMSE = 0.352) [27]. The magnitude and sign of the coefficients provide insights into the nature of LDPE-water partitioning: the strong positive Vx coefficient indicates size-driven hydrophobic partitioning, while the strongly negative A and B coefficients reveal that hydrogen bonding interactions favor the aqueous phase.
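To see how the individual terms add up, the sketch below evaluates the LDPE/water model term by term. The coefficients are the ones quoted above from [27]. The solute descriptors are hypothetical placeholder values and do not belong to any specific compound, and the function names are illustrative.

```python
# System coefficients of the LDPE/water model quoted in the text [27].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557,
              "a": -2.991, "b": -4.617, "v": 3.886}

def lser_terms(system, E, S, A, B, V):
    """Individual contributions to log K for one solute in one system."""
    return {
        "constant": system["c"],
        "eE": system["e"] * E,
        "sS": system["s"] * S,
        "aA": system["a"] * A,
        "bB": system["b"] * B,
        "vV": system["v"] * V,
    }

def lser_log_k(system, **descriptors):
    """Predicted log K as the sum of all LSER terms."""
    return sum(lser_terms(system, **descriptors).values())

# Hypothetical solute descriptors (placeholders, not a real compound).
hypothetical_solute = dict(E=0.6, S=0.5, A=0.0, B=0.15, V=0.7)

for term, value in lser_terms(LDPE_WATER, **hypothetical_solute).items():
    print(f"{term:>8}: {value:+.3f}")
predicted_log_k = lser_log_k(LDPE_WATER, **hypothetical_solute)
print(f"   log K: {predicted_log_k:+.3f}")
```

Printing the breakdown shows which interaction dominates for a given solute. Here the volume term pushes the solute into the polymer, while the hydrogen-bond basicity term pulls it back toward water.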

System Parameters for Common Partitioning Systems

Table: LSER System Parameters for Select Partitioning Systems

| Partitioning System | c | e | s | a | b | v | Application Context |
|---|---|---|---|---|---|---|---|
| LDPE/Water [27] | -0.529 | 1.098 | -1.557 | -2.991 | -4.617 | 3.886 | Leachable assessment, environmental fate |
| n-Hexadecane/Water* | - | - | - | - | - | - | Reference system for lipophilicity |
| PDMS/Water* | - | - | - | - | - | - | Passive sampling, medical devices |

*Note: Exact values for these systems should be sourced from curated LSER databases for specific applications.

Experimental Protocols

Protocol 1: Determining Solute Descriptors Experimentally

Objective: To experimentally determine the six LSER molecular descriptors for a novel chemical compound.

Materials:

- Pure compound of interest (high purity >99%)

- Reference solvents: n-hexadecane, water, and other well-characterized partitioning systems

- Gas chromatography system equipped with appropriate detector

- UV-Vis spectrophotometer

- Partitioning experiment apparatus (separatory funnels or HPLC for retention time measurements)

Procedure:

Determine McGowan's Characteristic Volume (Vx):

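- Calculate Vx from molecular structure using McGowan's atom-contribution method [26].

- Vx is computed as the sum of atomic volume increments minus a fixed correction of 6.56 cm³/mol for each bond (counted regardless of bond order), conventionally divided by 100, providing a measure of molecular size relevant to cavity formation.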
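As an illustration of the Vx calculation, the sketch below sums atomic increments and subtracts a per-bond correction. The increments and the 6.56 cm³/mol bond term are the commonly tabulated McGowan values as recalled here and should be checked against [26] or the primary literature before use. The bond count uses the relation bonds = atoms - 1 + rings, and the function name is hypothetical.

```python
# Atomic increments in cm^3/mol (assumed from commonly tabulated McGowan values).
ATOM_VOLUME = {"C": 16.35, "H": 8.71, "N": 14.39, "O": 12.43,
               "F": 10.48, "Cl": 20.95, "Br": 26.21, "S": 22.91}
BOND_CORRECTION = 6.56  # cm^3/mol subtracted per bond, regardless of bond order

def mcgowan_volume(atom_counts, n_rings=0):
    """Characteristic volume Vx in units of (cm^3/mol)/100."""
    n_atoms = sum(atom_counts.values())
    n_bonds = n_atoms - 1 + n_rings  # valid for a single connected molecule
    raw = sum(ATOM_VOLUME[element] * count
              for element, count in atom_counts.items())
    return (raw - BOND_CORRECTION * n_bonds) / 100.0

vx_benzene = mcgowan_volume({"C": 6, "H": 6}, n_rings=1)
vx_ethanol = mcgowan_volume({"C": 2, "H": 6, "O": 1})
print(f"benzene Vx = {vx_benzene:.4f}")  # 0.7164
print(f"ethanol Vx = {vx_ethanol:.4f}")  # 0.4491
```

Because Vx depends only on the molecular formula and ring count, it is the one descriptor that needs no measurement.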

Determine Excess Molar Refraction (E):

- Measure refractive index of the pure compound at 20°C using a refractometer.

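- Calculate E as the molar refraction of the compound in excess of that of a hypothetical alkane with the same characteristic volume: $E = MR_x - MR_x^{\mathrm{alkane}}$, where $MR_x = 10\,\dfrac{n^2 - 1}{n^2 + 2}\,V_x$ and $n$ is the measured refractive index.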
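The refractive-index route to E can be sketched as follows. The numerical constants for the reference alkane line (2.83195 and 0.52553) are taken from the commonly quoted Abraham definition as recalled here. Treat them as an assumption to verify against [26]. The benzene inputs (refractive index of about 1.5011 at 20°C, Vx = 0.7164) serve only as a plausibility check.

```python
def excess_molar_refraction(refractive_index, vx):
    """E = MRx(compound) - MRx(alkane of equal Vx), in (cm^3/mol)/10."""
    n_squared = refractive_index ** 2
    # Molar refraction of the compound from the Lorentz-Lorenz function.
    mr_compound = 10.0 * (n_squared - 1.0) / (n_squared + 2.0) * vx
    # Reference alkane line; constants are an assumption, see lead-in.
    mr_alkane = 2.83195 * vx - 0.52553
    return mr_compound - mr_alkane

e_benzene = excess_molar_refraction(refractive_index=1.5011, vx=0.7164)
print(f"benzene E = {e_benzene:.3f}")
```

The result lands close to 0.61, which is the E value usually tabulated for benzene. That agreement supports the constants, though it does not replace checking them at the source.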

Determine Gas-Hexadecane Partition Coefficient (L):

- Measure retention time of the compound on a gas chromatograph with n-hexadecane stationary phase.

- Convert the retention data to the gas-hexadecane partition coefficient K and take its base-10 logarithm; the descriptor is L = log K.

Determine Hydrogen Bond Acidity (A) and Basicity (B):

- Measure partition coefficients between multiple solvent systems with characterized LSER parameters.

- Use multivariate regression to solve for A and B values that best fit the experimental partitioning data across systems.

- Alternatively, use spectroscopic methods for direct measurement of hydrogen bonding strength.

Determine Dipolarity/Polarizability (S):

- Derive S from the same multivariate regression used for A and B determination.

- Validate S value by predicting partition coefficients in additional solvent systems.
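The regression step for S, A, and B can be sketched with ordinary least squares. Once E and Vx are known, their contributions and the system constant are moved to the left-hand side, leaving a linear problem in the three unknown descriptors. All system coefficients and the "measured" log P values below are synthetic placeholders, generated from assumed descriptor values so that the fit can be checked.

```python
import numpy as np

# Placeholder system coefficients (c, e, s, a, b, v), one row per partition system.
SYSTEMS = np.array([
    [0.10, 0.50, -1.00,  0.00, -3.50, 3.80],
    [0.30, 0.60, -1.60, -3.50, -4.80, 4.30],
    [0.20, 0.10, -0.40, -3.10, -4.60, 4.40],
    [0.00, 0.40, -0.70, -1.20, -2.90, 3.60],
    [0.15, 0.70, -1.30, -2.00, -0.50, 4.00],
])

def fit_s_a_b(log_p, systems, E, V):
    """Least-squares estimate of (S, A, B) from log P in several systems."""
    c, e, s, a, b, v = systems.T
    # Remove the parts of log P already explained by c, E, and V.
    residual = np.asarray(log_p, dtype=float) - c - e * E - v * V
    design = np.column_stack([s, a, b])
    solution, *_ = np.linalg.lstsq(design, residual, rcond=None)
    return solution

# Synthetic "measurements" from assumed true descriptors (illustration only).
E_known, V_known = 0.8, 1.0
true_s_a_b = np.array([0.90, 0.30, 0.45])
c, e, s, a, b, v = SYSTEMS.T
log_p_synthetic = (c + e * E_known + v * V_known
                   + np.column_stack([s, a, b]) @ true_s_a_b)

fitted = fit_s_a_b(log_p_synthetic, SYSTEMS, E_known, V_known)
print(dict(zip("SAB", np.round(fitted, 3))))
```

With real measurements, using more systems than unknowns (five for three here) lets experimental scatter average out. The chosen systems should differ enough in their s, a, and b coefficients to keep the fit well conditioned.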

Validation:

- Confirm descriptor validity by predicting logP for known solvent-water systems and comparing with experimental values.

- Descriptors should yield prediction errors within 0.1 log units for well-behaved compounds.

Protocol 2: Validating LSER Models for Specific Applications

Objective: To validate an LSER model for predicting polymer-water partition coefficients in pharmaceutical container systems.

Materials:

- Low density polyethylene (LDPE) membranes of standardized thickness

- Pharmaceutical compounds with known LSER descriptors

- HPLC system with UV/Vis or MS detection

- Controlled temperature incubation system

Procedure:

Experimental Design:

- Select 20-30 chemically diverse compounds spanning a range of E, S, A, B, and Vx values.

- Include compounds with known experimental polymer-water partition coefficients for method validation.

Partitioning Experiments:

- Cut LDPE membranes into standardized discs and precondition in ultrapure water.

- Prepare compound solutions in ultrapure water at concentrations below solubility limits.

- Expose LDPE discs to compound solutions in sealed vessels with minimal headspace.

- Incubate with agitation at constant temperature (typically 25°C or 37°C) until equilibrium (typically 7-14 days based on preliminary kinetics studies).

Sample Analysis:

- At equilibrium, measure compound concentration in aqueous phase using HPLC.

- Extract compounds from LDPE discs using appropriate solvent and measure concentration.

- Calculate experimental $\log K_{\mathrm{LDPE/W}} = \log(C_{\mathrm{LDPE}}/C_{\mathrm{Water}})$.

Model Validation:

- Calculate predicted $\log K_{\mathrm{LDPE/W}}$ using the established LSER model [27].

- Perform linear regression between predicted and experimental values.

- Calculate validation statistics: R², RMSE, and mean absolute error.
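The validation statistics listed above can be computed as in the sketch below. It assumes that R² refers to the squared Pearson correlation from the regression of experimental on predicted values, and that RMSE and MAE are taken on the raw prediction errors. The four data points are invented for illustration.

```python
import numpy as np

def validation_stats(experimental, predicted):
    """Regression line plus R^2, RMSE, and MAE for predicted vs experimental."""
    exp = np.asarray(experimental, dtype=float)
    pred = np.asarray(predicted, dtype=float)
    slope, intercept = np.polyfit(pred, exp, 1)  # experimental regressed on predicted
    r = np.corrcoef(pred, exp)[0, 1]
    errors = pred - exp
    return {
        "slope": float(slope),
        "intercept": float(intercept),
        "r2": float(r ** 2),
        "rmse": float(np.sqrt(np.mean(errors ** 2))),
        "mae": float(np.mean(np.abs(errors))),
    }

# Invented log K values (illustration only).
stats = validation_stats(experimental=[1.0, 2.0, 3.0, 4.0],
                         predicted=[1.1, 1.9, 3.2, 3.8])
for name, value in stats.items():
    print(f"{name:>9}: {value:.3f}")
```

A slope near 1 and an intercept near 0 indicate the absence of systematic bias. A high R² alone does not, because a consistently offset model can still correlate perfectly.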

Quality Control:

- Include reference compounds with known partition coefficients in each experiment.

- Ensure mass balance of 85-115% for all compounds.

- Perform experiments in triplicate to assess reproducibility.
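The partition coefficient calculation and the mass-balance criterion can be combined in a small check, sketched below under simplifying assumptions: both concentrations are expressed in the same mass-per-volume units, the polymer volume is known, and losses to vessel walls are ignored. All numbers are hypothetical triplicate values.

```python
import math
from statistics import mean, stdev

def log_k(c_polymer, c_water):
    """Experimental log K from equilibrium concentrations (same units)."""
    return math.log10(c_polymer / c_water)

def mass_balance_pct(c_initial, v_water, c_water, c_polymer, v_polymer):
    """Mass recovered in both phases as a percentage of the mass added."""
    recovered = c_water * v_water + c_polymer * v_polymer
    return 100.0 * recovered / (c_initial * v_water)

# Hypothetical triplicate: (c_water, c_polymer) in mg/L at equilibrium.
C_INITIAL, V_WATER, V_POLYMER = 10.0, 0.100, 1.0e-4  # mg/L, L, L
replicates = [(5.0, 4800.0), (5.2, 4600.0), (4.0, 3500.0)]

balances = [mass_balance_pct(C_INITIAL, V_WATER, cw, cp, V_POLYMER)
            for cw, cp in replicates]
accepted = [85.0 <= mb <= 115.0 for mb in balances]
log_ks = [log_k(cp, cw) for (cw, cp), ok in zip(replicates, accepted) if ok]

print("mass balance (%):", [round(mb, 1) for mb in balances])
print("accepted:", accepted)
print(f"log K = {mean(log_ks):.2f} +/- {stdev(log_ks):.2f} (n = {len(log_ks)})")
```

In this example the third replicate falls outside the 85-115% window and is excluded before averaging, which is one possible way to apply the criterion.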

Computational Implementation

In Silico Prediction of LSER Descriptors

For high-throughput applications, LSER molecular descriptors can be predicted computationally:

Method 1: QSPR-Based Prediction

- Use quantitative structure-property relationship (QSPR) models to predict descriptors from molecular structure alone [27].

- Implement available software tools or web-based platforms that calculate Abraham descriptors from SMILES strings or molecular structure files.

- Validate predictions against experimental values for structurally similar compounds.

Method 2: DFT Calculations

- Apply density functional theory (DFT) to calculate electronic properties relevant to descriptor values.

- Use calculated molecular volume, electrostatic potential maps, and hydrogen bonding propensity to estimate descriptors.

Performance Considerations: When using predicted rather than experimental descriptors, expect slightly increased prediction error (e.g., RMSE increase from 0.352 to 0.511 observed in LDPE-water partitioning) [27].

LSER Workflow for Green Solvent Selection

[Figure: Computational-experimental framework for applying LSER in green solvent selection]

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Tools for LSER Applications

| Tool/Reagent | Function | Application Notes |
|---|---|---|
| n-Hexadecane | Reference solvent for determining L descriptor | High purity grade, use in GC stationary phases or partitioning experiments |
| Well-characterized solvent systems (e.g., octanol-water, alkane-alcohol) | For experimental determination of solute descriptors | Systems with established LSER parameters enable descriptor determination |
| Abraham Descriptor Database | Source of curated solute descriptors | Freely accessible web-based database containing descriptors for thousands of compounds [27] [26] |
| QSPR Prediction Tools | In silico prediction of LSER descriptors | Enables descriptor estimation for novel compounds without experimental data [27] |
| Polymer-specific LSER parameters | Predict partitioning into polymeric materials | Essential for pharmaceutical packaging, medical device, and environmental applications [27] |