Improving Process Mass Intensity: Case Studies and Strategies for Sustainable Biomanufacturing

This article provides a comprehensive analysis of Process Mass Intensity (PMI) improvement, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive analysis of Process Mass Intensity (PMI) improvement, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of PMI and green chemistry metrics, examines methodological advances through real-world case studies in bioprocessing, addresses key troubleshooting and optimization challenges in implementation, and validates strategies with comparative lifecycle and cost-benefit analyses. By synthesizing the latest industry practices and academic research, this resource offers an actionable framework for enhancing productivity and sustainability in biopharmaceutical manufacturing.

Understanding Process Mass Intensity: The Foundation of Green Biomanufacturing

Defining Process Mass Intensity and Key Green Chemistry Metrics

FAQs on Process Mass Intensity (PMI) and Green Metrics

This technical guide addresses common questions and troubleshooting issues for researchers and scientists implementing green chemistry metrics, specifically Process Mass Intensity (PMI), in pharmaceutical development and fine chemical synthesis.

What is Process Mass Intensity (PMI) and how is it calculated?

Answer: Process Mass Intensity (PMI) is a key green chemistry metric used to benchmark the efficiency and environmental impact of a chemical process. It measures the total mass of materials used to produce a unit mass of the desired product [1].

The standard calculation is [2]:

PMI = Total Mass of All Inputs (kg) / Mass of Product (kg)

Troubleshooting Tip: Ensure you include all materials used in the process: reactants, reagents, solvents (reaction and purification), catalysts, and work-up materials. Omitting solvents is a common error that significantly skews results [1] [3].
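
The calculation and the solvent pitfall described above can be sketched in a few lines of Python. This is a minimal illustration, not a standard tool: the function name `process_mass_intensity`, the category keys, and all masses are hypothetical values chosen for the example.

```python
def process_mass_intensity(inputs_kg, product_kg):
    """Return PMI = total mass of all inputs / mass of isolated product."""
    if product_kg <= 0:
        raise ValueError("product mass must be positive")
    return sum(inputs_kg.values()) / product_kg


# Hypothetical batch; masses in kg, chosen only for illustration.
inputs_kg = {
    "reactants": 1.5,
    "reagents": 0.5,
    "catalyst": 0.25,
    "reaction_solvent": 12.0,
    "purification_solvent": 20.0,
    "workup_materials": 5.75,
}
product_kg = 1.0

pmi_all = process_mass_intensity(inputs_kg, product_kg)

# Leaving out solvents (the common error) understates the PMI.
no_solvent = {k: v for k, v in inputs_kg.items() if "solvent" not in k}
pmi_no_solvent = process_mass_intensity(no_solvent, product_kg)

print(f"PMI with all inputs:       {pmi_all:.1f} kg/kg")
print(f"PMI with solvents omitted: {pmi_no_solvent:.1f} kg/kg")
```

In this made-up batch the solvent-free figure is one fifth of the true value, which shows how strongly an incomplete input list can distort the metric.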

How does PMI differ from E-Factor and Atom Economy?

Answer: While all are mass-based green metrics, they measure different aspects of efficiency. The table below summarizes key differences:

| Metric | Calculation | What It Measures | Primary Application |

|---|---|---|---|

| Process Mass Intensity (PMI) | Total mass of inputs / Mass of product | Total resource consumption for a process [1] | Overall process efficiency; pharmaceutical industry standard [4] |

| E-Factor | Mass of total waste / Mass of product | Total waste generated by a process [5] | Environmental impact assessment; fine chemicals and API synthesis [5] |

| Atom Economy | (MW of desired product / Σ MW of reactants) × 100% | Theoretical incorporation of reactant atoms into the final product [5] [6] | Reaction pathway design and selection [5] |

Note: PMI and E-Factor are directly related: E-Factor = PMI - 1 [2]. Atom economy is a theoretical maximum, while PMI and E-Factor are based on actual experimental data [5].
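
To make the contrast between a theoretical and an experimental metric concrete, here is a small sketch. The function names are illustrative; the esterification example (acetic acid + ethanol giving ethyl acetate and water) uses approximate molecular weights, and the PMI value of 40 is a hypothetical measured figure.

```python
def atom_economy(product_mw, reactant_mws):
    """Atom economy (%) = MW of desired product / sum of reactant MWs x 100."""
    return product_mw / sum(reactant_mws) * 100.0


def e_factor_from_pmi(pmi):
    """E-Factor = PMI - 1: every kg of input that is not product is waste."""
    return pmi - 1.0


# Acetic acid (60.05 g/mol) + ethanol (46.07 g/mol) -> ethyl acetate (88.11 g/mol) + water
ae = atom_economy(88.11, [60.05, 46.07])

# Hypothetical measured PMI for the same reaction once solvents,
# excess reagents, and work-up materials are counted.
measured_pmi = 40.0
e_factor = e_factor_from_pmi(measured_pmi)

print(f"Atom economy: {ae:.1f} %")
print(f"E-Factor from measured PMI: {e_factor:.1f} kg waste / kg product")
```

Atom economy depends only on the balanced equation, so it stays the same however the reaction is run; PMI and E-Factor change with every adjustment to solvent volume, excess, or yield.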

Why is my PMI value misleadingly high, and how can I improve it?

Answer: A high PMI indicates low resource efficiency. Common causes and solutions are listed below.

| Issue | Root Cause | Corrective Action |

|---|---|---|

| Excessive Solvent Use | Dilute reaction conditions; multiple solvent-intensive purification steps [3] | Increase reaction concentration; switch to solvent-free conditions; recover/recycle solvents [7] |

| Low Yield | Incomplete reactions, side reactions, or product loss during work-up [3] | Optimize reaction parameters (temp, time, catalyst); improve work-up and isolation protocols [6] |

| High Stoichiometry of Reagents | Use of reagents in large excess [5] | Employ catalytic instead of stoichiometric reagents; optimize stoichiometry [6] |

Experimental Consideration: When reporting PMI, always document the reaction concentration and yield. A high-yielding reaction run at a very low concentration can have a worse PMI than a moderate-yielding reaction run at high concentration [3].
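
The interplay of yield and concentration can be checked with simple arithmetic. The sketch below compares two hypothetical runs of the same reaction; every mass and yield is an assumption made for illustration.

```python
# Hypothetical shared basis for both runs (kg).
SUBSTRATE_KG = 1.0
REAGENTS_KG = 0.5
THEORETICAL_PRODUCT_KG = 1.2  # assumed product mass at 100 % yield


def run_pmi(yield_fraction, solvent_kg):
    """PMI of one run given its isolated yield and total solvent mass."""
    product_kg = THEORETICAL_PRODUCT_KG * yield_fraction
    total_inputs_kg = SUBSTRATE_KG + REAGENTS_KG + solvent_kg
    return total_inputs_kg / product_kg


# High yield, but run very dilute.
pmi_dilute = run_pmi(yield_fraction=0.95, solvent_kg=50.0)

# Moderate yield, run concentrated.
pmi_concentrated = run_pmi(yield_fraction=0.70, solvent_kg=5.0)

print(f"95 % yield, dilute:       PMI = {pmi_dilute:.1f} kg/kg")
print(f"70 % yield, concentrated: PMI = {pmi_concentrated:.1f} kg/kg")
```

Under these assumptions the dilute, high-yielding run needs roughly six times more material per kilogram of product than the concentrated, moderate-yielding one.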

When should I use a convergent PMI calculator?

Answer: Use a convergent PMI calculator when evaluating multi-step synthetic routes where two or more intermediates are synthesized separately and then combined [4].

Protocol:

- Calculate the PMI for each synthetic branch independently.

- Use the convergent calculator to combine these inputs and outputs accurately.

- The tool automatically accounts for the mass contributions of converging branches toward the final API mass.

Benefit: This provides a fairer assessment of convergent syntheses compared to simply summing the PMIs of all steps, which would over-penalize the route. It allows for direct comparison between linear and convergent strategies [4] [1].
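
The idea behind a convergent calculation can be sketched as follows. This is not the algorithm of the ACS GCI tool, only a simplified model under one assumption: intermediates carried into a later step are not counted again, so the overall PMI is the sum of fresh inputs across all branches divided by the final API mass. The `Step` class and all masses are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    fresh_inputs_kg: float      # materials newly introduced in this step
    intermediates_in_kg: float  # products of earlier steps fed into this one
    product_kg: float

    @property
    def step_pmi(self):
        """PMI of the step in isolation (all inputs / step product)."""
        return (self.fresh_inputs_kg + self.intermediates_in_kg) / self.product_kg


def overall_pmi(steps, final_product_kg):
    """Route PMI: fresh inputs of every step / mass of final product."""
    return sum(s.fresh_inputs_kg for s in steps) / final_product_kg


# Hypothetical convergent route: branches A and B feed one coupling step.
route = [
    Step("Branch A", fresh_inputs_kg=30.0, intermediates_in_kg=0.0, product_kg=2.0),
    Step("Branch B", fresh_inputs_kg=20.0, intermediates_in_kg=0.0, product_kg=1.0),
    Step("Coupling", fresh_inputs_kg=27.0, intermediates_in_kg=3.0, product_kg=2.0),
]

route_pmi = overall_pmi(route, final_product_kg=2.0)
naive_sum = sum(s.step_pmi for s in route)

print(f"Overall route PMI:     {route_pmi:.1f} kg/kg")
print(f"Naive sum of step PMIs: {naive_sum:.1f} kg/kg")
```

In this example the naive sum of step PMIs overstates the route PMI, because each step PMI is normalized to a different product mass and the intermediates are counted twice.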

Is PMI sufficient on its own for assessing the environmental impact of a process?

Answer: PMI is an excellent metric for resource efficiency, but it has limitations for full environmental assessment.

- Strength: It is a simple, mass-based metric that is easy to track and highly effective for driving reductions in material use and waste within a process [4] [1].

- Weakness: PMI does not differentiate between materials of different toxicities, hazardousness, or environmental impact. A process with a low PMI that uses highly toxic solvents may be less "green" than a process with a slightly higher PMI that uses only water and ethanol [8] [9].

Best Practice: Use PMI in conjunction with other metrics. For a more comprehensive environmental profile, consider:

- Life Cycle Assessment (LCA): The gold standard for evaluating full environmental impact, including energy use and carbon footprint [8].

- Simple Toxicity Screening: Classify solvents and reagents using a guide like CHEM21 to avoid high-hazard materials.

The Scientist's Toolkit: Key Research Reagent Solutions

| Tool / Reagent Category | Function | Green Chemistry Application |

|---|---|---|

| Heterogeneous Catalysts | Facilitate reactions without being consumed; easily separated and reused [6] | Replaces stoichiometric, high-mass reagents; improves E-factor and PMI [6]. |

| Benign Solvents | Reaction medium with low toxicity and environmental impact (e.g., water, ethanol, 2-MeTHF) [5] | Reduces process hazard and waste treatment burden, directly improving process sustainability. |

| ACS GCI PMI Calculator | Standardized tool for calculating Process Mass Intensity [4] | Enables benchmarking against industry data and tracks efficiency improvements over time. |

Workflow for Green Process Development

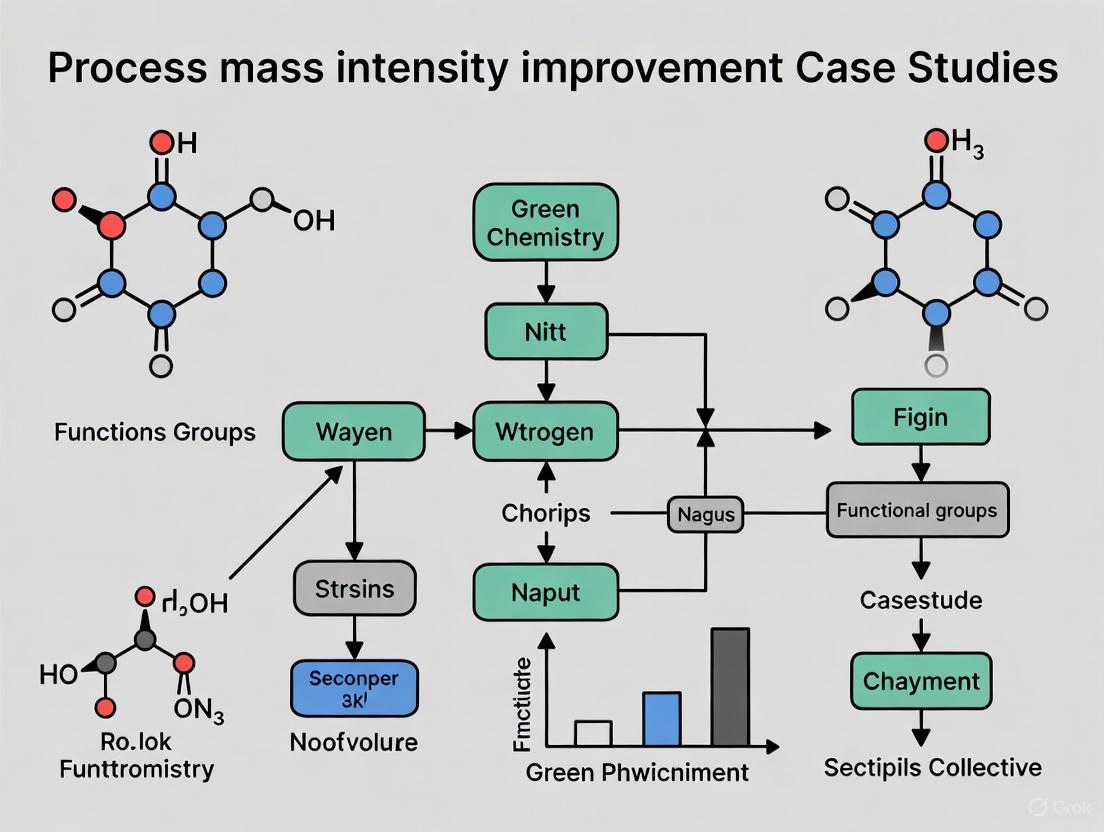

The following diagram outlines a logical workflow for integrating PMI and other metrics into chemical process development.

Figure 1. A iterative workflow for developing efficient and sustainable chemical processes by integrating PMI and other green metrics from early development.

Key Takeaways for Researchers

- PMI is Your Baseline: Track PMI relentlessly as it directly correlates to material cost and waste.

- Context is Critical: A low PMI is good, but it must be evaluated alongside safety, toxicity, and life-cycle data.

- Tools are Available: Leverage the free ACS GCI PMI Calculator and Convergent PMI Calculator for standardized measurement [4] [1].

- Design for Convergence: Where possible, design synthetic routes that are convergent, as they often have a superior overall PMI compared to linear sequences.

The Critical Role of System Boundaries in Accurate PMI Assessment

FAQs on System Boundaries and PMI

Q1: What is Process Mass Intensity (PMI) and why is its system boundary important?

A: Process Mass Intensity (PMI) is a key green chemistry metric that represents the total mass of materials (raw materials, reactants, solvents, etc.) required to produce a specified mass of a chemical product, typically expressed as kg of input per kg of output [10]. The system boundary defines which materials and processes are included in this calculation. An inaccurate or inconsistently applied system boundary is a major source of error, as it can lead to underestimating the true resource consumption and environmental impact. A gate-to-gate PMI only considers materials used within the factory, while a cradle-to-gate boundary expands to include the upstream value chain, offering a more complete environmental picture [8].

Q2: My PMI is low, but my process still has high environmental impact. Why?

A: This common issue often stems from an overly narrow, gate-to-gate system boundary [8]. A low PMI calculated within the factory gates can be misleading if it ignores resource-intensive upstream processes. For example, a reagent might contribute little mass but have an extremely energy-intensive production path. Mass-based metrics like PMI do not directly account for the environmental impact of material production, energy usage, or waste properties [8] [10]. Therefore, a comprehensive assessment requires expanding the system boundary to cradle-to-gate and, for a full picture, should be supplemented with Life Cycle Assessment (LCA) [8].

Q3: How do I define an appropriate system boundary for my PMI calculation?

A: Defining a system boundary is a critical step. The following workflow provides a systematic methodology, moving from the simplest to the most comprehensive assessment. A cradle-to-gate "Value-Chain Mass Intensity" (VCMI) is recommended for a more reliable approximation of environmental impacts [8].

Q4: What is the difference between PMI and a full Life Cycle Assessment (LCA)?

A: PMI is a single-metric, mass-based efficiency ratio. It is simple to calculate but does not differentiate between materials or quantify specific environmental impacts like carbon emissions or toxicity [10]. In contrast, LCA is a multi-criteria, holistic method that evaluates numerous environmental impacts (e.g., climate change, water use, resource depletion) across the product's entire life cycle, from raw material extraction (cradle) to end-of-life disposal [8]. While LCA is the recommended method for comprehensive evaluation, PMI serves as a useful, simplified proxy when LCA data or expertise is unavailable, provided its limitations are understood [8].

Troubleshooting Common PMI Assessment Problems

Problem: Inconsistent PMI Values for the Same Process

Solution:

- Cause: Different teams may be using different system boundaries (e.g., one team excludes water, another includes it).

- Action: Standardize the system boundary using a clear, written protocol. Table 2 in the quantitative data section below describes the gate-to-gate and cradle-to-gate boundary definitions. Adopt a cradle-to-gate VCMI where possible, as recent research shows it correlates more strongly with LCA environmental impacts than a gate-to-gate PMI [8].

Problem: PMI Suggests Improvement, but LCA Shows Worse Impact

Solution:

- Cause: The PMI improvement likely came from replacing a high-mass, low-impact input with a low-mass, high-impact one (e.g., switching to a reagent that is used in smaller quantities but has a far more resource-intensive production pathway).

- Action: Do not rely on PMI alone for environmental claims. Use PMI for initial, internal screening of process efficiency, but always validate major "green" improvements with at least a simplified LCA that considers the upstream impacts of key materials [8].

Problem: Difficulty Sourcing Upstream Data for Cradle-to-Gate PMI

Solution:

- Cause: Lack of primary data from suppliers due to confidentiality or unavailability.

- Action: Use secondary data from reputable life cycle inventory databases (e.g., ecoinvent) to fill data gaps [8]. For commercially available raw materials, a practical approach is to define the boundary using "commonly available materials," for example, those listed on major chemical supplier sites like Sigma-Aldrich below a certain cost threshold [8].

Quantitative Data on PMI and System Boundaries

Table 1: Typical PMI Ranges Across Pharmaceutical Modalities

This table benchmarks average PMI values for different drug types, highlighting the significant resource intensity of peptide synthesis. These figures are typically calculated using a gate-to-gate system boundary.

| Pharmaceutical Modality | Typical/Reported PMI (kg input / kg API) | Key Contributing Factors |

|---|---|---|

| Small Molecule APIs | 168 - 308 (median) [10] | Solvent use in reactions and purifications. |

| Peptide APIs (SPPS) | ~13,000 (average) [10] | Large excesses of solvents and reagents in solid-phase synthesis. |

| Oligonucleotides | 3,035 - 7,023 (average ~4,300) [10] | Solid-phase processes, challenging purifications. |

| Biologics (mAbs) | ~8,300 (average) [10] | Water-intensive cell culture and purification. |

Table 2: Correlation of Mass Intensities with LCA Environmental Impacts

A 2025 study systematically analyzed how expanding the system boundary improves the correlation between mass intensity and environmental impacts. This demonstrates the superiority of a cradle-to-gate approach. VCMI = Value-Chain Mass Intensity [8].

| System Boundary Type | Spearman Correlation with LCA Impacts (Typical Finding) | Description & Scope |

|---|---|---|

| Gate-to-Gate (PMI) | Weak / Not Robust [8] | Includes only materials used within the immediate chemical process (factory gate). |

| Cradle-to-Gate (VCMI) | Stronger for 15 of 16 environmental impacts [8] | Expands boundary to include upstream value chain, back to extraction of natural resources. |

Experimental Protocol: Calculating a Cradle-to-Gate Value-Chain Mass Intensity (VCMI)

Objective: To standardize the calculation of a cradle-to-gate VCMI for a chemical process, enabling a more reliable approximation of its environmental footprint than a gate-to-gate PMI.

Principles: The VCMI is calculated as the total mass of all inputs within the defined cradle-to-gate system boundary per unit mass of the final product. Expanding the boundary strengthens the correlation with Life Cycle Assessment results [8].

Procedure:

- Define the Final Product: Clearly identify the chemical product (e.g., 1.0 kg of active pharmaceutical ingredient).

- Map the Chemical Value Chain: List all input materials for the final production step. For each input, trace its production pathway back to extracted natural resources (e.g., crude oil, metal ores, biomass).

- Classify Input Materials: Categorize all value-chain products into standardized classes (e.g., based on the Central Product Classification). This allows for a systematic expansion of the system boundary [8].

- Collect Mass Data: For your immediate process, use measured mass data. For upstream materials, use data from life cycle inventory databases (e.g., ecoinvent) or reliable literature sources [8].

- Calculate VCMI: Use the formula below, ensuring all masses are in consistent units (e.g., kg).

Formula:

VCMI = (Total Mass of all Cradle-to-Gate Inputs) / (Mass of Final Product)
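
One way to implement this formula is to attach an upstream mass factor to each gate-to-gate input, meaning the kilograms of natural resources extracted per kilogram of material delivered to the process. The sketch below assumes such factors are available (in practice they would come from a life cycle inventory database); the factor values, material names, and function names are all hypothetical.

```python
# Each entry: material -> (mass used in the process in kg,
#                          assumed cradle-to-gate mass factor in kg/kg).
# A factor of 1.0 means no upstream mass is added beyond the material itself.
inputs = {
    "solvent": (30.0, 2.0),
    "reagent": (5.0, 8.0),
    "process_water": (10.0, 1.0),
}
product_kg = 1.0


def gate_to_gate_pmi(inputs, product_kg):
    """PMI counting only the masses that enter the process itself."""
    return sum(mass for mass, _ in inputs.values()) / product_kg


def value_chain_mass_intensity(inputs, product_kg):
    """VCMI counting the upstream mass behind every input as well."""
    return sum(mass * factor for mass, factor in inputs.values()) / product_kg


pmi = gate_to_gate_pmi(inputs, product_kg)
vcmi = value_chain_mass_intensity(inputs, product_kg)

print(f"Gate-to-gate PMI:   {pmi:.1f} kg/kg")
print(f"Cradle-to-gate VCMI: {vcmi:.1f} kg/kg")
```

In this made-up case the reagent contributes only 5 kg to the PMI but 40 kg to the VCMI, which is the kind of hidden upstream burden a gate-to-gate boundary cannot show.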

Diagram: Cradle-to-Gate VCMI System Boundary. This diagram illustrates the flow of materials from natural resources (cradle) to the final product (gate), showing which elements are included in a VCMI calculation versus a traditional gate-to-gate PMI.

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential Materials in Peptide Synthesis and Their Environmental Considerations

This table details key reagents used in Solid-Phase Peptide Synthesis (SPPS), a process with a very high PMI, and highlights associated environmental and regulatory concerns [10].

| Research Reagent / Material | Primary Function in Synthesis | Key Considerations & Green Chemistry Context |

|---|---|---|

| N,N-Dimethylformamide (DMF) | Primary solvent for SPPS. | Reprotoxic; facing potential regulatory restrictions. A major driver of PMI and environmental impact [10]. |

| Fmoc-Protected Amino Acids | Building blocks for peptide chain assembly. | Inherent poor atom economy due to the mass of the protecting group that is later cleaved off as waste [10]. |

| Coupling Agents (e.g., HATU, DIC) | Activate amino acids for bond formation. | Often used in excess; some can be explosive or sensitizing, posing safety and waste hazards [10]. |

| Trifluoroacetic Acid (TFA) | Cleaves the peptide from the resin and removes protecting groups. | Highly corrosive; generates hazardous waste streams, requiring careful handling and disposal [10]. |

| Dichloromethane (DCM) | Swelling resin and as a solvent for cleavage and purification. | Toxic solvent; its use is discouraged by green chemistry principles due to health and environmental risks [10]. |

Frequently Asked Questions (FAQs)

Core Concepts of Process Mass Intensity

Q1: What is Process Mass Intensity (PMI) and why is it a key green chemistry metric?

Process Mass Intensity (PMI) is a comprehensive mass-based metric used to evaluate the efficiency and environmental impact of a chemical process. It is defined as the total mass of all materials used in a process to produce a unit mass of the desired product [11]. This includes reagents, reactants, catalysts, solvents (for both reaction and purification), and work-up chemicals [11].

PMI is considered a key green chemistry metric because it provides a complete picture of resource consumption and waste generation potential. Unlike simple yield, PMI accounts for all material inputs, driving innovation and efficiency from the outset of process development [11]. A lower PMI indicates a more efficient and environmentally friendly process, as it signifies less raw material usage, lower cost, less waste generated, and a reduced environmental footprint [12].

Q2: How is PMI calculated, and what are its component parts?

PMI is calculated using the following formula [11]:

PMI = Total Mass of All Materials Used (kg) / Mass of Product (kg)

The total mass input can be broken down into its key components, allowing for a more detailed analysis. The formula can be expanded as [11]:

PMI = PMI_RRC + PMI_Solv

Where:

- PMI_RRC: Process Mass Intensity of reactants, reagents, and catalyst.

- PMI_Solv: Process Mass Intensity of solvents.

This breakdown helps identify the major contributors to mass inefficiency in a process, whether it's the stoichiometry of the reaction itself or the large volumes of solvents often used [11].
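
A minimal sketch of the breakdown, using hypothetical masses for a single reaction (the function name and figures are assumptions for illustration):

```python
def pmi_breakdown(rrc_kg, solvent_kg, product_kg):
    """Split PMI into its RRC and solvent components.

    rrc_kg     -- combined mass of reactants, reagents, and catalyst
    solvent_kg -- combined mass of reaction and purification solvents
    """
    pmi_rrc = rrc_kg / product_kg
    pmi_solv = solvent_kg / product_kg
    return {
        "PMI_RRC": pmi_rrc,
        "PMI_Solv": pmi_solv,
        "PMI": pmi_rrc + pmi_solv,
        "solvent_share_pct": pmi_solv / (pmi_rrc + pmi_solv) * 100.0,
    }


# Hypothetical reaction: 3 kg of reactants/reagents/catalyst and
# 27 kg of solvent give 0.5 kg of isolated product.
result = pmi_breakdown(rrc_kg=3.0, solvent_kg=27.0, product_kg=0.5)

for key, value in result.items():
    print(f"{key}: {value:.1f}")
```

Here solvents account for 90 % of the total, so raising the reaction concentration or recycling solvent would be the first place to look.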

Q3: How does PMI relate to other common green chemistry metrics like E-factor?

PMI and E-factor are closely related mass-based metrics. The E-factor is defined as the total mass of waste produced per unit mass of product [11]. The relationship between them can be described as:

PMI = E-factor + 1

This is because the total mass of inputs (PMI) equals the mass of the product (1) plus the mass of all waste generated (E-factor). PMI is often preferred as it focuses on process inputs from the beginning, driving resource efficiency, whereas E-factor focuses on waste output [11].
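
Written out as a mass balance, and assuming that every input not ending up in the isolated product is counted as waste, the relationship follows in one line. The symbols m_inputs, m_product, and m_waste are introduced here only for this derivation.

```latex
\begin{aligned}
m_{\text{inputs}} &= m_{\text{product}} + m_{\text{waste}} \\[4pt]
\text{PMI} &= \frac{m_{\text{inputs}}}{m_{\text{product}}}
            = \frac{m_{\text{product}} + m_{\text{waste}}}{m_{\text{product}}}
            = 1 + \frac{m_{\text{waste}}}{m_{\text{product}}}
            = 1 + \text{E-factor}
\end{aligned}
```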

PMI in Pharmaceutical Research and Development

Q4: What are typical PMI values in the pharmaceutical industry, and how do they compare to other sectors?

The pharmaceutical industry has significantly higher PMI values compared to bulk chemical industries due to complex multi-step syntheses and stringent purity requirements. The table below summarizes typical PMI ranges.

| Industry / Process Type | Typical PMI Range (kg/kg) | Key Reasons for High PMI |

|---|---|---|

| Oil Refining [12] | ~1.1 | Large-scale, optimized continuous processes |

| Pharmaceuticals (Commercial) [12] | 26 - 100+ | Complex molecules, multi-step synthesis, safety & purity focus |

| Pharmaceuticals (Early-Phase) [12] | Often >100, can exceed 500 | Unoptimized routes, focus on speed to clinic |

| Example: Classical Synthesis [11] | 817.1 | High solvent use (PMI_Solv = 742.3) in reaction & work-up |

| Example: Multi-Component Reaction [11] | 324.5 | More efficient design reduces solvent mass intensity |

Q5: Why can a direct comparison of PMI values between two different processes be misleading?

A direct PMI comparison can be misleading without due consideration of key reaction parameters. A process with a lower PMI is not automatically "greener" if other factors are neglected [11]. Key considerations include:

- Yield and Molecular Weight: PMI is highly sensitive to reaction yield and the molecular weight of reactants versus the product [11].

- Concentration: Reactions run at low concentrations will have an inherently high PMI due to large solvent volumes (high PMI_Solv) [11].

- Hazard and Toxicity: PMI is a measure of mass, not hazard. A process with a slightly higher PMI that uses benign solvents and reagents may be preferable to a low-PMI process that uses highly hazardous materials [11].

- Upstream Considerations: PMI does not account for the environmental cost of producing the reactants, reagents, and catalysts in the first place [11].

A fair appraisal requires a holistic analysis that includes both quantitative metrics like PMI and qualitative assessments of safety, health, and environmental impact [11].

Troubleshooting Common PMI Issues

Problem: High Overall PMI

Symptoms:

- PMI value significantly above 100 for an early-phase project.

- High raw material costs and large waste streams.

Investigation and Resolution Steps:

| Step | Action | Goal / Expected Outcome |

|---|---|---|

| 1. Data Collection | Collect PMI data from all development and production batches. [12] | Establish a reliable baseline for analysis and identify worst-performing steps. |

| 2. PMI Breakdown | Calculate PMI_RRC and PMI_Solv for each synthetic step. [11] | Pinpoint whether the issue stems from stoichiometry (RRC) or solvent use (Solv). |

| 3. Identify Root Cause | Analyze the steps with the highest PMI. Common causes are low yields, high dilution, or inefficient work-ups. [11] | Target improvement efforts on the most impactful areas. |

| 4. Implement Solutions | Apply strategies like solvent substitution, concentration optimization, or route scouting. | Achieve a measurable reduction in PMI for the targeted step. |

| 5. Cultural Change | Recognize teams that achieve low or improved PMI and make PMI reduction a key performance indicator (KPI). [12] | Embed sustainability thinking into the R&D culture for long-term improvement. |
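
Steps 2 and 3 of the table can be sketched as a simple ranking. The example assumes that all masses have already been normalized to kilograms per kilogram of final API, so that the step contributions add up to the overall PMI; the step names and numbers are hypothetical.

```python
# Hypothetical per-step masses, in kg per kg of final API.
steps = [
    {"step": "Step 1", "rrc_kg": 12.0, "solvent_kg": 48.0},
    {"step": "Step 2", "rrc_kg": 8.0, "solvent_kg": 150.0},
    {"step": "Step 3", "rrc_kg": 5.0, "solvent_kg": 30.0},
]


def rank_steps(steps):
    """Return steps sorted by PMI contribution, largest first,
    each labeled with its dominant driver (RRC or solvent)."""
    ranked = []
    for s in steps:
        contribution = s["rrc_kg"] + s["solvent_kg"]
        driver = "solvent" if s["solvent_kg"] >= s["rrc_kg"] else "RRC"
        ranked.append(
            {"step": s["step"], "pmi_contribution": contribution, "driver": driver}
        )
    return sorted(ranked, key=lambda r: r["pmi_contribution"], reverse=True)


ranked = rank_steps(steps)
overall_pmi = sum(r["pmi_contribution"] for r in ranked)

for r in ranked:
    share = r["pmi_contribution"] / overall_pmi * 100.0
    print(f'{r["step"]}: {r["pmi_contribution"]:.0f} kg/kg '
          f'({share:.0f} % of total, driven by {r["driver"]})')
```

With these figures Step 2 dominates and is solvent-driven, so it would be the first target for concentration optimization or solvent recycling.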

Problem: PMI and Yield Sending Conflicting Signals

Symptom: A reaction has an excellent yield (>90%) but a very high, unsatisfactory PMI.

Explanation: This apparent contradiction is common and highlights the difference between PMI and yield. Yield measures the efficiency of converting the limiting reactant into product. PMI measures the efficiency of using all mass inputs.

A high-yield reaction can have a high PMI if it uses large excesses of other reagents, stoichiometric (rather than catalytic) amounts of reagents, or large volumes of solvent [11]. The diagram below illustrates the components that PMI captures beyond yield.

Resolution: Focus on reducing the mass of non-reactant inputs. Key strategies include:

- Increase Reaction Concentration: Reduce solvent volumes to lower PMI_Solv.

- Optimize Stoichiometry: Use catalysts or reduce excesses of reagents to lower PMI_RRC.

- Solvent Selection and Recycling: Choose safer solvents and implement recycling loops.

Problem: Discrepancy Between PMI and Qualitative "Greenness"

Symptom: A process has a favorable (low) PMI but uses hazardous or undesirable reagents, raising questions about its overall green credentials.

Case Study Example: Research compared amide bond formation using different coupling reagents. The lowest PMI was achieved using boric acid, but this method also received "red flags" in a qualitative assessment (possibly reflecting hazards or energy use). Conversely, an enzymatic process had a much higher PMI but received almost all "green flags" for its mild, biocatalytic conditions [11].

Interpretation: This demonstrates a critical limitation of relying solely on PMI. The metric measures mass efficiency, not safety, toxicity, or energy consumption.

Resolution: Always use PMI as part of a holistic assessment framework. Combine it with other tools, such as the CHEM21 Metrics Toolkit, which uses a flag system (green/amber/red) to qualitatively evaluate factors like solvent safety, renewability, and waste management [11]. A process should be optimized for both low PMI and a positive qualitative flag profile.

Essential Methodologies for PMI Analysis

Protocol: Calculating and Reporting PMI for a Single Reaction

Purpose: To standardize the calculation of PMI to ensure objective comparison between different processes.

Materials:

- Laboratory Notebook with detailed masses of all inputs.

- Isolated, Dried Product with accurate mass measurement.

Procedure:

- List All Inputs: For the reaction, work-up, and purification, record the masses (in grams or kg) of every substance introduced into the system. This includes:

  - Substrates and Reagents

  - Catalysts

  - Solvents (for reaction, extraction, washing, chromatography)

  - Work-up chemicals (e.g., acids, bases, drying agents)

- Calculate Total Mass Input: Sum the masses from step 1.

- Record Mass of Product: Accurately weigh the final, isolated product after it is completely dry.

- Calculate PMI: Apply the formula PMI = Total Mass Input / Mass of Product.

- Optional Breakdown: Calculate PMI_RRC and PMI_Solv for deeper insight.

Reporting: When reporting PMI, always state:

- The mass of product obtained.

- The reaction scale.

- The PMI value, and if possible, PMI_RRC and PMI_Solv.

Protocol: Benchmarking PMI Against Industry Standards

Purpose: To evaluate the relative greenness of a process using the Green Aspiration Level (GAL) concept.

Background:

The Green Aspiration Level (GAL) sets realistic, data-driven PMI targets for the pharmaceutical industry based on molecular complexity and market demand [11]. For early-stage development, the "simple" E-Factor (sEF) target is 42 kg/kg, and for commercial processes, the "complete" E-Factor (cEF) is 167 kg/kg [11]. Recall that PMI = E-factor + 1.

Procedure:

- Calculate your process PMI using the standard protocol.

- Determine the appropriate benchmark. For an early-phase API, compare your PMI to the sEF-based target: PMI_target = sEF + 1 = 43 kg/kg.

- Calculate the Relative Process Greenness (RPG) [11]. This indicates how close your process is to the industry benchmark.

RPG = (PMI_target / Your_PMI) * 100%

An RPG > 100% indicates your process is greener than the target, while <100% shows there is room for improvement.
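
The benchmarking procedure reduces to two small formulas. The sketch below uses the sEF and cEF targets quoted in the background section; the function names and the example process PMI of 86 kg/kg are hypothetical.

```python
SEF_TARGET = 42.0   # "simple" E-Factor target for early-stage development, kg/kg
CEF_TARGET = 167.0  # "complete" E-Factor target for commercial processes, kg/kg


def pmi_target(e_factor_target):
    """Convert an E-Factor target into a PMI target (PMI = E-factor + 1)."""
    return e_factor_target + 1.0


def relative_process_greenness(target_pmi, your_pmi):
    """RPG (%) = PMI_target / Your_PMI x 100."""
    return target_pmi / your_pmi * 100.0


early_phase_target = pmi_target(SEF_TARGET)

# Hypothetical early-phase process with a measured PMI of 86 kg/kg.
rpg = relative_process_greenness(early_phase_target, your_pmi=86.0)

print(f"Early-phase PMI target: {early_phase_target:.0f} kg/kg")
print(f"RPG: {rpg:.0f} %")
```

An RPG of 50 % for this example means the process uses twice the material allowed by the early-phase target.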

The Scientist's Toolkit: Key Reagents and Solutions for PMI Reduction

| Tool / Category | Function / Purpose in PMI Reduction | Specific Examples & Notes |

|---|---|---|

| Catalytic Reagents | Reduces or eliminates the stoichiometric waste generated by traditional reagents, lowering PMI_RRC. [11] | Catalytic hydrogenation, catalytic coupling reagents (e.g., for amide formation). |

| Green Solvent Selection Guides | Guides the choice of solvents with better environmental, health, and safety (EHS) profiles and potential for recycling. [11] | CHEM21 Solvent Selection Guide; preference for water, ethanol, 2-methyl-THF over DCM, DMF. |

| Flow Chemistry Systems | Enables safer handling of hazardous reagents, improved heat/mass transfer, and reduced solvent use, lowering PMI_Solv. [12] | Particularly useful for reactions involving gases, toxic intermediates, or high exotherms. |

| Multi-Component Reactions (MCRs) | Combines multiple reactants in a single pot to construct complex molecules, reducing the number of steps and associated PMI. [11] | Can significantly improve Atom Economy (AE) and reduce PMI compared to classical linear syntheses. |

| Process Mass Intensity (PMI) | The primary metric for measuring the mass efficiency of a process and identifying areas for improvement. [11] [12] | Serves as a Key Performance Indicator (KPI) for sustainability in R&D. |

Technical Troubleshooting Guides

Troubleshooting Common Process Intensification Challenges

Table 1: Common Experimental Challenges and Solutions in Process Intensification

| Observed Challenge | Potential Root Cause | Recommended Solution | Key Performance Indicator to Monitor |

|---|---|---|---|

| Low Product Yield in Intensified Bioreactor | Nutrient depletion; Inadequate mass transfer; Suboptimal cell density | Implement continuous perfusion or fed-batch modes; Optimize mixing and aeration strategies; Apply cell retention technologies (e.g., ATF, TFF) | Volumetric Productivity (g/L/day); Viable Cell Density (cells/mL); Metabolite levels |

| Poor Product Quality or Consistency | Shifts in process parameters (pH, temp); Inadequate control of reaction pathways | Integrate Process Analytical Technology (PAT) for real-time monitoring; Implement advanced process control strategies | Product Critical Quality Attributes (CQAs); Process Capability (Cpk) |

| Difficulty Scaling Up from Bench to Production | Non-linear scaling parameters; Equipment design disparities | Employ scale-down models for process characterization; Adopt modular equipment design principles | Productivity at different scales; Shear stress profile consistency |

| Fouling in Intensified Separation Systems | High cell density or product concentration; Membrane incompatibility | Optimize filtration parameters (flux, TMP); Implement periodic back-flushing or cleaning-in-place (CIP) | Transmembrane Pressure (TMP); Permeate Flux Rate |

| Process Instability in Continuous Operation | Microbial contamination; Cell line genetic instability; Drifting control parameters | Enhance aseptic design and procedures; Establish robust cell bank systems and seed train intensification; Utilize automated feedback control loops | Duration of continuous run; Batch success rate; Genetic stability data |

Frequently Asked Questions (FAQs)

Core Concepts and Methodology

Q1: What is the precise definition of "Process Intensification" (PI) in a bioprocessing context?

A: Bioprocess intensification is defined as a significant step increase in output relative to cell concentration, time, reactor volume, or cost, resulting in improvements in productivity, environmental, and economic metrics. This usually involves a drastic change in equipment and/or process design, such as moving from batch to continuous processing or integrating new process steps [13].

Q2: What are the primary categories of benefits offered by Process Intensification?

A: The benefits can be categorized into three main areas [13]:

- Business: Miniaturized plant size, reduced capital and operational expenditures (CAPEX/OPEX), potential for distributed manufacturing, and a faster timeline from research to market.

- Process: Achievement of higher cell densities, increased productivity, improved product Critical Quality Attributes (CQAs), operation across wider process conditions, and enabling continuous processing.

- Environment: Reduced energy consumption, lower waste generation, decreased reagent usage, and a smaller physical footprint.

Q3: How does a "reference terminology" differ from an "interface terminology," and why is this distinction critical for PI?

A: This distinction is fundamental for standardizing nomenclature [14]:

- A Reference Terminology is a set of well-defined concepts and relationships that provide a common reference point for comparison and aggregation of data. In PI, it is used for data analysis, research, and ensuring semantic interoperability across different systems and publications (e.g., a standard code for "perfusion rate").

- An Interface Terminology (or application terminology) is a systematic collection of phrases that support scientists in entering data into computer systems, like an Electronic Lab Notebook (ELN). These user-friendly terms are then mapped to the reference terminology for consistent data management.

Q4: What are the key characteristics of a robust, standardized terminology for a scientific field like PI?

A: A robust standardized terminology should have the following core characteristics [14]:

- Concepts & Unique Identifiers: Unambiguous ideas, each with a unique code.

- Definitions & Terms: A precise definition and a human-readable text description for each concept.

- Synonyms: Inclusion of variant terms to account for different linguistic preferences.

- Relationships: Well-defined hierarchical and associative relationships between concepts (e.g., "is-a," "part-of," "enabled-by").
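
The characteristics above, together with the reference/interface split from Q3, can be sketched as a small data structure. This is only an illustrative sketch: the class names, the `PI:000x` codes, the definitions, and the synonyms are all hypothetical and are not taken from any published PI terminology.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    """One reference-terminology concept (all codes here are made up)."""
    code: str                      # unique identifier
    term: str                      # preferred human-readable term
    definition: str                # precise definition
    synonyms: list = field(default_factory=list)
    # relationships stored as (relation, target_code) pairs
    relationships: list = field(default_factory=list)


class Terminology:
    def __init__(self, concepts):
        self.by_code = {c.code: c for c in concepts}
        # Interface layer: every preferred term and synonym maps to one code.
        self.lookup = {}
        for c in concepts:
            for label in [c.term, *c.synonyms]:
                key = label.strip().lower()
                if self.lookup.get(key, c.code) != c.code:
                    raise ValueError(f"ambiguous label: {label!r}")
                self.lookup[key] = c.code

    def resolve(self, user_text):
        """Map free text (e.g. typed into an ELN) to a reference code, or None."""
        return self.lookup.get(user_text.strip().lower())

    def related(self, code, relation):
        """Return the codes linked to `code` by the given relation."""
        return [t for r, t in self.by_code[code].relationships if r == relation]


terms = Terminology([
    Concept("PI:0002", "perfusion rate",
            "Volume of medium exchanged per reactor volume per day.",
            synonyms=["medium exchange rate", "VVD"],
            relationships=[("enabled-by", "PI:0003")]),
    Concept("PI:0003", "cell retention device",
            "Device that retains cells while spent medium is removed.",
            synonyms=["cell retention system"]),
])

print(terms.resolve(" VVD "))                    # interface term -> reference code
print(terms.related("PI:0002", "enabled-by"))
```

Rejecting ambiguous labels at load time is one possible design choice; it keeps each interface term mapped to exactly one reference concept.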

Implementation and Analysis

Q5: What fundamental shift in process design is central to many PI strategies? A: A central shift is the move from traditional batch processing to continuous processing, which often involves the integration of unit operations (e.g., reaction and separation) into single, multifunctional steps [13].

Q6: What analytical frameworks are used to quantify the mass intensity improvements from PI? A: The primary metric is Process Mass Intensity (PMI), calculated as the total mass of materials used in the process divided by the mass of the final product. Case studies should report PMI before and after intensification. Other key metrics include volumetric productivity, equipment utilization rate, and the environmental factor (E-factor) [13].
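
As a minimal sketch of the before/after comparison described above, the following computes PMI and a matching E-factor. The input masses are hypothetical and chosen only for illustration. The relation E-factor = PMI - 1 holds only when both metrics count the same inputs; E-factor conventions that exclude water will give different values.

```python
def process_mass_intensity(inputs_kg, product_kg):
    """PMI = total mass of all inputs / mass of product (kg per kg)."""
    if product_kg <= 0:
        raise ValueError("product mass must be positive")
    return sum(inputs_kg.values()) / product_kg


def e_factor(inputs_kg, product_kg):
    """E-factor = waste / product, taking waste as inputs minus product."""
    return process_mass_intensity(inputs_kg, product_kg) - 1.0


def percent_reduction(before, after):
    """Relative improvement of `after` over `before`, in percent."""
    return 100.0 * (before - after) / before


# Hypothetical masses (kg) per 1 kg of purified product, for illustration only.
fed_batch = {"water": 7000, "media": 500, "buffers": 400, "consumables": 100}
intensified = {"water": 4200, "media": 450, "buffers": 250, "consumables": 100}

pmi_before = process_mass_intensity(fed_batch, 1.0)
pmi_after = process_mass_intensity(intensified, 1.0)
print(pmi_before, pmi_after, percent_reduction(pmi_before, pmi_after))
```

Keeping the inputs itemized, rather than passing a single total, makes it easier to see which material class drives the change.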

Q7: How can researchers effectively manage the complexity of data generated from intensified processes? A: Effective management requires [15]:

- Digitalization and Data Standards: Using standardized nomenclature ensures data from PAT, sensors, and control systems is consistent and interoperable.

- Process Analytical Technology (PAT): Implementing tools for real-time monitoring of critical process parameters (CPPs) to maintain critical quality attributes (CQAs).

- Advanced Data Analysis: Leveraging digital tools for modeling, simulation, and analysis of the complex, high-frequency data generated by continuous processes.

Experimental Protocols for Process Mass Intensity Improvement

Protocol: Intensified Perfusion Bioreactor Operation for High Cell Density Culture

Objective: To establish a continuous perfusion process achieving high cell density (>50 x 10^6 cells/mL) to increase volumetric productivity and reduce process mass intensity.

Materials:

- Bioreactor system equipped with cell retention device (e.g., Alternating Tangential Flow (ATF) or Tangential Flow Depth Filtration (TFDF) system)

- Proprietary cell culture medium and feed

- Production cell line

- Process Analytical Technology (PAT) probes (for pH, DO, CO2, etc.)

- Off-line analyzer for metabolites (e.g., Nova, Cedex)

Methodology:

Inoculum Train Intensification (N-1):

- Inoculate the N-1 bioreactor at a higher seeding density than standard practice.

- Use an intensified feeding strategy or perfusion in the N-1 stage to achieve a high viable cell density (VCD) at the time of inoculation for the production bioreactor.

- This step reduces the number of seed train vessels, media volume, and time [13].

Production Bioreactor Operation:

- Inoculate the production bioreactor at a high seeding density from the intensified N-1 stage.

- Initiate perfusion immediately or shortly after inoculation. The perfusion rate should be controlled based on VCD or metabolite levels.

- The cell retention device (e.g., XCell ATF) is operated to retain cells within the bioreactor while removing spent media.
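
One common way to tie the perfusion rate to VCD is a fixed cell-specific perfusion rate (CSPR). The sketch below assumes that approach; the protocol itself does not prescribe it, and the CSPR value and the lower and upper rate limits are hypothetical placeholders.

```python
def perfusion_rate_vvd(vcd_cells_per_ml, cspr_pl_per_cell_day,
                       min_vvd=0.5, max_vvd=3.0):
    """Perfusion rate in vessel volumes per day (vvd) from VCD and CSPR.

    cells/mL * pL/cell/day = pL/mL/day; 1 mL = 1e9 pL, so dividing by 1e9
    gives mL of medium per mL of culture per day, i.e. vvd.
    The min/max limits are assumed operating bounds, not source values.
    """
    raw = vcd_cells_per_ml * cspr_pl_per_cell_day / 1e9
    return min(max(raw, min_vvd), max_vvd)


def pump_flow_l_per_day(vvd, working_volume_l):
    """Convert vvd into a medium feed flow for a given working volume."""
    return vvd * working_volume_l


rate = perfusion_rate_vvd(50e6, 20)          # 50 x 10^6 cells/mL at 20 pL/cell/day
print(rate, pump_flow_l_per_day(rate, 1000))
```

Clamping the rate keeps the setpoint within pump and filter limits when the VCD reading is very low or very high.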

Process Monitoring and Control (PAT):

- Utilize PAT for real-time monitoring and control of key parameters.

- Correlate online data with frequent off-line measurements (VCD, viability, metabolites, product titer) to guide process adjustments.

- This allows for dynamic control of the process, ensuring consistency and quality [13].

Harvest: Continuously harvest the product from the cell-free permeate stream from the retention device.

Data Analysis:

- Calculate and compare the Volumetric Productivity (g/L/day) and Process Mass Intensity (PMI) against a reference batch or fed-batch process.

- Monitor and report Critical Quality Attributes (CQAs) to ensure product comparability.
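
The volumetric productivity comparison can be sketched as follows. The product masses, volumes, and durations are hypothetical and serve only to show the arithmetic.

```python
def volumetric_productivity(product_g, working_volume_l, duration_days):
    """Volumetric productivity in g/L/day."""
    if working_volume_l <= 0 or duration_days <= 0:
        raise ValueError("volume and duration must be positive")
    return product_g / (working_volume_l * duration_days)


# Hypothetical runs, for illustration only.
reference = volumetric_productivity(7000, 1000, 14)    # fed-batch reference
perfusion = volumetric_productivity(60000, 1000, 24)   # intensified perfusion
fold_change = perfusion / reference
print(reference, perfusion, fold_change)
```

Reporting the fold change alongside the absolute values makes it clear whether a gain comes from higher output, a smaller vessel, or a shorter run.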

Diagram 1: Intensified Perfusion Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Process Intensification Experiments

| Item | Function in Process Intensification | Example Application |

|---|---|---|

| Cell Retention Devices (ATF/TFF Systems) | Enables high cell density culture by physically retaining cells within the bioreactor while removing spent media. | Continuous perfusion bioreactor operations for monoclonal antibody or viral vector production [13]. |

| Specialized Media & Feeds | Formulated to support high cell densities and prolonged culture durations in intensified processes. | Concentrated feeds for N-1 intensification; balanced nutrient media for continuous perfusion [13]. |

| Process Analytical Technology (PAT) Probes | Provides real-time, in-line monitoring of Critical Process Parameters (CPPs) for advanced process control. | Monitoring glucose/lactate levels to dynamically control perfusion rates; pH/DO probes for feedback control [15]. |

| Structured Catalysts or Packings | Increases surface area and efficiency of catalytic reactions, a key PI method in chemical synthesis. | Structured reactors for intensified continuous-flow chemical synthesis. |

| Alternative Energy Source Equipment | Utilizes non-conventional energy for physicochemical activation to enhance reaction rates and efficiency. | Equipment for sonochemistry (ultrasound), microwave-assisted synthesis, or electrochemical reactors [15]. |

Diagram 2: PI Framework & Tool Relationships

Process Intensification in Action: Methodologies and Case Studies for PMI Reduction

Technical Support Center

Troubleshooting Guides

This section addresses common technical challenges encountered when implementing integrated continuous bioprocessing for monoclonal antibody (mAb) production.

Problem 1: Inconsistent Product Quality During Extended Continuous Runs

- Symptoms: Fluctuations in protein purity, concentration, or aggregation levels detected in the output stream.

- Investigation Procedure:

- Install Bio-Fluorescent Particle Counters (BFPCs) in air and water systems to discriminate between inert and biological particles in real-time [16].

- Use Process Analytical Technology (PAT) to provide real-time monitoring and feedback on critical parameters including protein purity, concentration, pH, and conductivity in the Harvested Clarified Cell Culture Fluid (HCCF) [17].

- Perform Residence Time Distribution (RTD) analysis to understand the flow of product through the interconnected system and identify mixing or dead zones that could cause product quality heterogeneity [18] [17].

- Solution: Integrate PAT with an automated closed-loop control system to intelligently divert out-of-spec samples based on RTD models, significantly enhancing manufacturing procedure control [17].
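
The RTD analysis mentioned above can be illustrated with a pulse-tracer calculation. This sketch assumes a pulse-injection experiment with outlet tracer readings; the data are hypothetical, and a production RTD model for diversion decisions would be considerably more detailed.

```python
def trapezoid(xs, ys):
    """Trapezoidal integral of ys over xs (xs need not be evenly spaced)."""
    return sum((xs[i + 1] - xs[i]) * (ys[i] + ys[i + 1]) / 2.0
               for i in range(len(xs) - 1))


def rtd_from_pulse(times, tracer_conc):
    """Return (E(t), mean residence time, variance) from a pulse-tracer test."""
    area = trapezoid(times, tracer_conc)
    if area <= 0:
        raise ValueError("tracer signal must have positive area")
    # Normalise so that the integral of E(t) equals 1.
    e_curve = [c / area for c in tracer_conc]
    mean = trapezoid(times, [t * e for t, e in zip(times, e_curve)])
    var = trapezoid(times, [(t - mean) ** 2 * e for t, e in zip(times, e_curve)])
    return e_curve, mean, var


# Hypothetical outlet tracer readings (arbitrary units) at times in hours.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
conc = [0.0, 1.0, 2.0, 1.0, 0.0]
e_curve, mean_rt, var_rt = rtd_from_pulse(times, conc)
print(mean_rt, var_rt)
```

A broad variance or a long tail in E(t) relative to the mean is the kind of signature that points to mixing or dead zones.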

Problem 2: Failure to Achieve Projected Cell Density and Productivity in Perfusion Bioreactor

- Symptoms: Cell densities and product titers fall below expectations, failing to achieve the 5–20× higher productivity potential of continuous processes [17].

- Investigation Procedure:

- Check the performance of the cell retention device (e.g., an Alternating Tangential Flow (ATF) filter), looking in particular for filter fouling [19].

- Correlate peak cell density with the frequency of technical failures; higher cell densities can increase the likelihood of equipment failures [19].

- Analyze the Process Mass Intensity (PMI) of the culture media and feeding strategies to ensure sustainability and efficiency [4] [20].

- Solution: Implement an Alternating Tangential Flow Filtration (ATF) perfusion system to reduce filter fouling instances and achieve higher cell densities and productivities compared to earlier perfusion systems [19].

Problem 3: High Particulate Counts in Purified Water Loop

- Symptoms: Elevated particle counts in the Purified Water (PW) system, potentially affecting downstream purification.

- Investigation Procedure:

- Utilize a water-based BFPC to establish baseline particle and Auto-Fluorescent Unit (AFU) counts [16].

- If AFU counts are low but inert particle counts are high, investigate sources of non-biological contamination, such as shedding from system components [16].

- Install a 50-nanometer inline water filter between the water loop and the BFPC as a diagnostic step to confirm the particle source [16].

- Solution: Identify and eliminate the source of inert particulates, which may involve component replacement or process adjustment, guided by real-time BFPC data [16].

Problem 4: Control and Connectivity Challenges in an Integrated System

- Symptoms: Lack of synchronization between unit operations (e.g., bioreactor, capture chromatography, polishing), leading to process disruptions.

- Investigation Procedure:

- Map the entire process using the three basic control building blocks defined in industry best practices to understand the flow of product [18].

- Define a control strategy based on the specific requirements of your process, using a conserved approach to link the outputs and inputs between unit operations [18].

- Implement a Manufacturing Execution System (MES) to integrate real-time monitoring and optimize workflows, providing the data backbone for control [21].

- Solution: Adopt a standardized industry control strategy for continuous bioprocessing to mitigate potential process risks and reduce implementation barriers [18].

Frequently Asked Questions (FAQs)

Q1: What is the tangible evidence supporting the claim of "10-fold productivity gains"? Multiple independent studies and industrial implementations confirm these gains. For example:

- WuXi Biologics' WuXiUP platform demonstrates upstream yields exceeding 110 g/L total output over 24 days, with a peak daily yield of 7.6 g/L [17]. Their platform enables productivity 5–20× higher than traditional processes [17].

- A holistic assessment of continuous bioprocessing published in Biotechnology Progress confirms that integrated continuous strategies can boost productivity up to 10-fold, reducing the cost of goods and enhancing product quality [19].

- Fully connected continuous manufacturing platforms shorten batch timelines and boost productivity, placing them at the forefront of change in biologics manufacturing [22].

Q2: How does continuous processing improve sustainability, specifically Process Mass Intensity (PMI)? Continuous processing dramatically improves material efficiency, a core component of PMI.

- Case Study - Merck: In manufacturing a complex ADC drug-linker, a redesigned continuous process reduced PMI by approximately 75% by cutting a 20-step synthesis down to just three steps and decreasing energy-intensive chromatography time by >99% [23].

- Fundamental Principle: Semicontinuous chromatography increases resin utilization, reducing required resin volumes by 40–50% and step buffer consumption by 40–50%, directly lowering the PMI of the purification steps [19].

Q3: What are the key differences between a fed-batch process and an integrated continuous process? The table below summarizes the core differences.

| Feature | Fed-Batch Process | Integrated Continuous Bioprocessing |

|---|---|---|

| Operation Mode | Discrete batches with start/stop steps | Uninterrupted, seamless flow from bioreactor to downstream |

| Facility Footprint | Larger equipment (e.g., 10,000-20,000 L bioreactors) | Smaller footprint; 1,000-2,000 L single-use bioreactors can match output of large stainless-steel ones [17] |

| Process Duration | Discrete batch cycles (e.g., 12-20 days) | Extended, highly intensified runs with continuous harvest; WuXiUP runs 24-day cultures [17] |

| Product Quality | Potential for degradation in longer runs | Enhanced quality by preventing degradation [22] |

| Resin Utilization | Lower utilization in batch chromatography | Higher resin utilization with continuous chromatography, reducing required resin volume by 40-50% [19] |

Q4: What is the business case for adopting continuous bioprocessing? The business case varies by clinical phase and company size, driven by cost, operational, and environmental factors [19].

- Early Phase & Small/Medium Companies: The optimal strategy is often a fully integrated continuous process (ATF perfusion, continuous capture, continuous polishing), as it reduces upfront resin and buffer costs [19].

- Commercial & Large Portfolio Companies: A hybrid strategy (fed-batch culture, continuous capture, batch polishing) may be preferred from a Cost of Goods per gram (COG/g) perspective. However, if operational feasibility is prioritized over pure economics, the hybrid strategy is favored for all scales [19].

Q5: What enabling technologies are critical for successful implementation? Successful implementation relies on a suite of advanced technologies:

- Alternating Tangential Flow (ATF) Perfusion Systems: For high-density cell culture [19].

- Semicontinuous Chromatography (e.g., BioSMB, PCC): For high-resin-utilization capture and polishing [19].

- Process Analytical Technology (PAT) & Bio-Fluorescent Particle Counters (BFPCs): For real-time monitoring and control [17] [16].

- Manufacturing Execution Systems (MES): For integrating data, optimizing workflows, and enabling control and connectivity [21].

Experimental Protocols & Data

Detailed Methodology: Establishing an Integrated Continuous mAb Production Process

This protocol is adapted from successful industrial case studies and proof-of-concept demonstrations [19] [17].

1. Upstream Process Intensification via Perfusion

- Objective: Achieve and maintain high cell density for sustained product secretion.

- Materials:

- Bioreactor System: Single-use bioreactor (SUB), 1,000-2,000 L scale.

- Cell Retention Device: Alternating Tangential Flow (ATF) system with a hollow-fiber filter.

- Cell Line: Recombinant CHO cell line expressing the target mAb.

- Analytical Tools: Bio-Fluorescent Particle Counter (BFPC) for air monitoring, automated cell counters, and metabolite analyzers.

- Procedure:

- Inoculate the bioreactor and allow the cells to grow to the target viable cell density.

- Initiate the ATF system to begin continuous media exchange. Set the perfusion rate based on cell density and nutrient consumption rates.

- Maintain the culture for a target of 24+ days, continuously harvesting the cell culture fluid.

- Monitor the environment in real-time with an air-based BFPC to ensure air quality and troubleshoot any excursions related to personnel flow or system shutdowns [16].

- Key Performance Indicators (KPIs):

- Peak Viable Cell Density: Target > 50 x 10^6 cells/mL.

- Productivity: Target a cumulative output > 100 g/L and a peak daily yield > 7 g/L [17].
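
A simple check of run data against these KPI targets might look like the sketch below. The cumulative output and peak daily yield in the example are the figures cited from [17]; the peak VCD value and the dictionary key names are hypothetical.

```python
# Targets taken from the KPI list above; key names are illustrative.
TARGETS = {
    "peak_vcd_cells_per_ml": 50e6,
    "cumulative_output_g_per_l": 100.0,
    "peak_daily_yield_g_per_l": 7.0,
}


def check_kpis(run, targets=TARGETS):
    """Return {kpi_name: True/False} for whether each target is exceeded."""
    return {name: run[name] > limit for name, limit in targets.items()}


example_run = {
    "peak_vcd_cells_per_ml": 60e6,         # hypothetical value
    "cumulative_output_g_per_l": 110.0,    # reported figure [17]
    "peak_daily_yield_g_per_l": 7.6,       # reported figure [17]
}

results = check_kpis(example_run)
print(results, all(results.values()))
```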

2. Downstream Purification with Continuous Chromatography

- Objective: Purify the mAb from the harvested stream with high efficiency and minimal buffer consumption.

- Materials:

- Clarification: Depth filters and centrifuges.

- Capture Step: Periodic Counter-Current (PCC) chromatography system (e.g., 3-4 columns) packed with Protein A resin.

- Polishing Step: Two-step, high-efficiency membrane chromatography system [17].

- Process Analytical Technology (PAT): Online monitors for UV, pH, and conductivity.

- Procedure:

- Clarify the harvested stream from the bioreactor using depth filtration.

- Load the clarified stream onto the continuous Protein A system. The system is configured so that the first column is loaded past breakthrough, toward its full binding capacity, with the flow-through directed to the next column so that product breaking through is captured rather than lost.

- Elute the bound protein and pass it directly to the continuous polishing steps.

- Utilize PAT probes for real-time monitoring of protein purity and concentration. Integrate this data with a control system that uses RTD models to make decisions on product diversion [17].

3. System Integration and Control

- Objective: Create a seamless, automated, and controlled end-to-end process.

- Materials: Manufacturing Execution System (MES), integrated control software, PAT tools, and RTD models.

- Procedure:

- Design the control strategy using established building blocks to manage the flow of product between unit operations [18].

- Implement an MES to provide real-time data integrity, batch record automation, and workflow optimization across the entire process [21].

- Use RTD analysis to model the movement of a product "slug" through the integrated system, which is critical for defining product quality attributes and enabling real-time release [18] [17].

The table below consolidates key performance metrics from cited case studies, demonstrating the impact of continuous processing.

| Metric | Traditional Fed-Batch | Intensified/Continuous Process | Source |

|---|---|---|---|

| Volumetric Productivity | Baseline | 5 to 20-fold higher | [17] |

| Bioreactor Scale for Equivalent Output | 10,000-20,000 L | 1,000-2,000 L | [17] |

| Protein A Resin Savings | Baseline | 40-50% reduction | [19] |

| Buffer Consumption (Capture Step) | Baseline | 40-50% reduction | [19] |

| Process Mass Intensity (PMI) | Baseline | ~75% reduction (case study for an ADC linker) | [23] |

| Cost of Goods per Gram (COG/g) | Baseline | Up to 50% reduction (case study) | [21] |

Visualization of an Integrated Continuous Bioprocess

Integrated Continuous Bioprocessing Workflow

This diagram illustrates the seamless flow of an integrated continuous bioprocess for mAb production, highlighting the key unit operations and the overarching role of real-time monitoring and control systems (PAT, BFPC, and MES) that ensure product quality and process efficiency [18] [17] [16].

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below details key materials and technologies critical for developing and troubleshooting continuous bioprocesses.

| Item | Function & Application |

|---|---|

| Alternating Tangential Flow (ATF) System | A perfusion cell retention device that minimizes filter fouling, enabling high cell density cultures and continuous harvest [19]. |

| Bio-Fluorescent Particle Counter (BFPC) | An environmental monitor that provides real-time discrimination between inert and biological particles in air/water, crucial for rapid troubleshooting [16]. |

| Periodic Counter-Current (PCC) Chromatography | A semi-continuous multi-column chromatography system that increases resin utilization by loading columns to full breakthrough capacity [19]. |

| Membrane Chromatography | A high-efficiency purification technology enabling faster mass transfer and a 5-10 fold productivity increase versus traditional resins [17]. |

| Process Analytical Technology (PAT) | A suite of tools (e.g., online UV, pH, conductivity) for real-time monitoring of critical process parameters to ensure consistent product quality [17]. |

The following table summarizes key performance and economic data for emerging column-free antibody capture systems, illustrating their potential for process mass intensity improvement.

Table 1: Performance and Economic Comparison of Column-Free mAb Capture Technologies

| Technology | Reported Productivity Improvement | Potential COG Reduction | Key PMI/Sustainability Findings | Technology Readiness |

|---|---|---|---|---|

| Precipitation | Not explicitly quantified | ~20-40% (from continuous flowsheets) [24] | Similar COG/g to continuous ProA; lower environmental burden than batch [24] | Integrated in continuous economic models [24] |

| Aqueous Two-Phase Extraction (ATPE) | Not explicitly quantified | Higher than ProA/precipitation flowsheets [24] | Increased consumables usage in continuous mode [24] | Research phase for integrated continuous processing [24] |

| Continuous Countercurrent Tangential Chromatography (CCTC) | High (No specific multiplier) [25] | Significant resin cost elimination [25] | Enables high host cell protein removal [25] | Experimental stage (academic research) [25] |

| Chromatan BioRMB Kascade | 10x-20x vs. column chromatography [26] | Not explicitly quantified | Enables steady-state continuous processing [26] | Commercial system launched (2024) [26] |

Frequently Asked Questions (FAQs)

1. How do column-free capture systems directly contribute to Process Mass Intensity (PMI) improvement?

Column-free systems directly improve PMI—a key sustainability metric defined as the total mass of materials used per mass of product—by eliminating the single largest contributor to consumables mass in traditional downstream processing: the chromatography resin [27]. Protein A affinity resin is exceptionally costly and contributes significantly to the overall mass of consumables. By replacing this with alternatives like precipitation or extraction, these systems avoid this mass input entirely [24]. Furthermore, when integrated into an end-to-end continuous process, these systems enable smaller, more intensive facilities that reduce buffer and water consumption compared to batch processes, thereby lowering the overall environmental burden and total PMI [24].

2. What are the primary economic drivers for adopting column-free capture?

The primary economic driver is the elimination of the high upfront cost associated with Protein A resins, which are the most significant consumable cost in a standard mAb purification train [24]. Additionally, continuous column-free flowsheets can offer 20-40% cost of goods (COG) savings over batch processes at low and medium annual commercial demands (100-500 kg) [24]. The enhanced productivity, such as the 10x-20x improvements reported for some commercial systems, also reduces the cost per gram by enabling more product to be manufactured with the same equipment footprint over time [26].

3. Are continuous column-free systems compatible with current Good Manufacturing Practice (GMP) requirements?

As of late 2024, this is an area of active development. While commercial systems designed for GMP are now emerging, one research analysis concluded that "further research is needed to determine the potential of column-free technologies integrated in a fully end-to-end continuous process with good manufacturing practice (GMP) equipment..." [24]. The regulatory pathway is being paved by strong encouragement from agencies like the FDA for continuous manufacturing innovations, but a full precedent for end-to-end continuous bioprocessing with column-free capture is still being established [28].

4. My current process uses a batch chromatography step. What is the key operational change with column-free continuous capture?

The key shift is from a batch-wise, cyclic operation to a steady-state, continuous operation. In batch chromatography, you process a set volume of harvested cell culture fluid through a column in discrete cycles (load, wash, elute, clean). A column-free continuous system, such as one based on precipitation or membrane adsorption, operates with a constant feed stream and simultaneous product recovery [26]. This requires integrated pumps, sensors, and controllers to maintain steady-state conditions, and it calls for a different skillset in development and operation, focused on flow rates and residence times rather than cycle times [28].

Troubleshooting Guides

Issue 1: Low Product Yield in Continuous Precipitation

| Possible Cause | Recommended Action | Underlying Principle |

|---|---|---|

| Inconsistent precipitation | Verify precise control of precipitant feed rate and mixing energy. Ensure turbulent flow for rapid, uniform mixing. | Optimal precipitation requires a narrow, well-defined residence time and supersaturation profile for consistent particle formation and product entrapment. |

| Incomplete product recovery from precipitate | Re-optimize the dissolution buffer composition (e.g., pH, ionic strength) and solid-liquid separation conditions. | The solubility of the target mAb and impurities is differentially affected by solvent conditions. Incomplete dissolution leaves product in the waste stream. |

| Product degradation during hold | Implement a flow-through cooler to control the temperature of the precipitation reactor and minimize hold-up volume. | The product is in a precipitated state and may be more susceptible to degradation; minimizing time in this state is critical. |

Issue 2: Poor Product Quality (e.g., High Aggregate or Host Cell Protein Levels)

| Possible Cause | Recommended Action | Underlying Principle |

|---|---|---|

| Inefficient washing | In a countercurrent system, increase the number of washing stages or optimize the wash buffer-to-feed ratio. | Impurities are separated from the product-bearing solid phase by differential solubility. More efficient washing requires adequate stages and volume. |

| Over-precipitation or shear damage | Screen for milder precipitating agents and reduce shear forces in pumps and transfer lines. | Harsh conditions can induce irreversible aggregation or shear proteins, creating product-related impurities that are difficult to remove. |

| Carryover of solubilized impurities | Introduce a flow-through polishing step (e.g., membrane adsorber) immediately after the product dissolution step. | The precipitation step may not achieve the purity of Protein A; a subsequent, orthogonal polishing step is often necessary for critical impurity removal. |

Issue 3: System Fouling and Flow Instability

| Possible Cause | Recommended Action | Underlying Principle |

|---|---|---|

| Precipitate accumulation | Implement periodic, automated clean-in-place (CIP) cycles with appropriate cleaning agents at defined intervals. | Precipitates can adhere to surfaces (e.g., membranes, tubing), increasing pressure and reducing heat transfer and separation efficiency. |

| Clogging in filters or transfer lines | Incorporate a pre-filtration step to remove large debris and use larger diameter tubing where possible. | The particle size distribution of the precipitate may be too broad, leading to large agglomerates that physically block flow paths. |

| Inconsistent feed composition | Tighten control of upstream perfusion bioreactor to ensure consistent cell viability and harvest clarity. | A variable upstream process leads to a variable load, which can cause unpredictable precipitation behavior and fouling. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials and Reagents for Column-Free Capture Development

| Reagent/Material | Function in Process Development | Application Example |

|---|---|---|

| Precipitating Agents (e.g., CaCl₂, Caprylic Acid, PEG) | Selectively reduces the solubility of the target mAb, causing it to come out of solution and separate from soluble impurities. | Screening different agents and concentrations to maximize yield and purity while minimizing aggregation. |

| Phase-Forming Polymers/Salts (e.g., PEG-Dextran systems) | Creates an aqueous two-phase system (ATPS) for partitioning the mAb based on surface properties into one phase, separating it from impurities. | Optimizing system composition and pH to achieve high partition coefficients for the target antibody. |

| Solid-Liquid Separation Aids (e.g., Diatomaceous Earth) | Improves the efficiency of depth filtration by providing a high-surface-area matrix to trap precipitates and cell debris. | Used in depth filters during the primary recovery of precipitated antibody to achieve high clarity. |

| Low-Pressure Adsorptive Membranes | Provides a high-flow-rate, convective mass transfer platform for continuous bind-and-elute or flow-through polishing chromatography. | Used in systems like Continuous Countercurrent Tangential Chromatography (CCTC) for polishing after initial capture. |

Decision Framework for Technology Implementation

The following diagram visualizes the logical workflow for evaluating and implementing a column-free capture system, from initial assessment through to validation.

Troubleshooting Guides

Guide 1: Resolving Oscillatory or Unstable Control Loops

Problem: A control loop in your cGMP pilot system exhibits continuous oscillations or unstable behavior, making consistent operation difficult.

Investigation Steps:

- Verify Controller Mode and Tuning: First, confirm the controller is in automatic mode. While tuning is often the initial suspect, it is frequently not the root cause. [29] Ensure you understand the vendor-specific control equations, as the same tuning constants can behave differently between systems. [29]

- Check for Control Valve Stiction:

- Place the controller in manual mode and maintain a constant output. If the process variable stabilizes, valve stiction is a likely cause. [29]

- Look for a sawtooth pattern in the controller output and a corresponding square-wave pattern in the process variable, which is a classic signature of stiction. [29]

- Solution: Perform a valve stroke test with small, incremental output changes. Check if the valve packing is too tight or if the actuator is underpowered. [29]

- Confirm Control Action and Valve Failure Mode: An incorrectly set control action (direct vs. reverse) will cause immediate instability upon switching to automatic mode. [29] Also, verify that the configured control action is logically consistent with the valve's failure mode (air-to-open vs. air-to-close). [29]

- Review the Control Equation Structure: Some control systems allow selection of different algorithm structures. For a responsive controller, the proportional (P) and integral (I) terms should act on the error (setpoint - process variable), while the derivative (D) term should act only on the process variable. An incorrect setup can lead to a sluggish response to setpoint changes or an overreaction to setpoint changes. [29]
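
The control equation structure described in the last step can be sketched as a discrete PID in which the P and I terms act on the error and the D term acts only on the process variable. This is a generic parallel-form illustration with hypothetical tuning values; as noted above, vendor equations differ, so it should not be read as any particular system's algorithm.

```python
class PID:
    """Parallel-form PID: P and I on error, D on the process variable only."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_pv = None

    def update(self, setpoint, pv):
        error = setpoint - pv
        self.integral += error * self.dt
        # Derivative on measurement: a setpoint step does not enter this term,
        # which avoids the "derivative kick" of derivative-on-error forms.
        d_pv = 0.0 if self.prev_pv is None else (pv - self.prev_pv) / self.dt
        self.prev_pv = pv
        return self.kp * error + self.ki * self.integral - self.kd * d_pv


pid = PID(kp=2.0, ki=0.0, kd=5.0, dt=1.0)   # hypothetical tuning
out_initial = pid.update(setpoint=0.0, pv=0.0)
out_after_sp_step = pid.update(setpoint=10.0, pv=0.0)   # setpoint steps, PV unchanged
out_after_pv_move = pid.update(setpoint=10.0, pv=1.0)   # PV starts to respond
print(out_initial, out_after_sp_step, out_after_pv_move)
```

With derivative on error, the same setpoint step would add kd * 10 / dt to the output for one sample, which is the overreaction to setpoint changes that this structure avoids.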

Guide 2: Addressing Loops Constantly in Manual Mode

Problem: Operators consistently run a particular controller in manual mode, indicating a lack of confidence in its automatic performance.

Investigation Steps:

- Assess Service Factor: Analyze historian data to calculate the controller's service factor (percentage of time in automatic mode with non-conditional status). A service factor below 50% is poor and requires investigation. [29]

- Investigate Instrument Reliability:

- With the controller in manual, trend the measured process variable while the control valve is held constant.

- Look for a frozen value (possible instrument scaling or installation error), high-frequency noise with large amplitude, or large, sudden jumps in value. [29]

- Solution: Troubleshoot the instrument installation and calibration. For level measurements, ensure calibration accounts for the correct liquid density to prevent errors like blow-through or carryover. [29]

- Evaluate Setpoint Variance: High variance in the controller setpoint can indicate that operators are manually "helping" the controller respond to disturbances. This is a key indicator of a problematic loop that needs correction. [29]
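
The service factor and setpoint variance checks can be sketched from historian samples as below. The record layout (`mode`, `conditional`, `setpoint`) is a hypothetical export format, the samples are assumed to be evenly spaced in time, and the data are illustrative.

```python
from statistics import pvariance


def service_factor(samples):
    """Percent of samples in AUTO mode with non-conditional status."""
    if not samples:
        raise ValueError("no samples")
    good = sum(1 for s in samples
               if s["mode"] == "AUTO" and not s["conditional"])
    return 100.0 * good / len(samples)


def setpoint_variance(samples):
    """Population variance of the setpoint over the sampled period."""
    return pvariance([s["setpoint"] for s in samples])


# Hypothetical historian export, for illustration only.
modes = ["AUTO"] * 4 + ["MAN"] * 5 + ["AUTO"]
setpoints = [50.0, 50.0, 52.0, 48.0, 50.0, 50.0, 50.0, 50.0, 50.0, 50.0]
history = [{"mode": m, "conditional": False, "setpoint": sp}
           for m, sp in zip(modes, setpoints)]
history[-1]["conditional"] = True    # last AUTO sample has conditional status

sf = service_factor(history)
needs_investigation = sf < 50.0      # threshold from the guide above
print(sf, needs_investigation, setpoint_variance(history))
```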

Guide 3: Managing Media Fill Failures in Aseptic Processes

Problem: Media fills repeatedly fail, and conventional investigation methods cannot identify the contaminant.

Investigation Steps:

- Expand Microbiological Testing: If standard microbiological techniques (e.g., blood agar, TSA) fail to isolate the organism, consider the presence of cell-wall-deficient bacteria like Acholeplasma laidlawii. [30]

- Test the Media Source: This organism, associated with animal-derived materials, is known to be present in non-sterile tryptic soy broth (TSB) and can pass through 0.2-micron sterilizing filters. [30]

- Implement Corrective Actions:

Frequently Asked Questions (FAQs)

Does CGMP require three validation batches before releasing a new drug product?

Answer: No. The CGMP regulations and FDA policy do not specify a minimum number of batches for process validation. The "rule of three" is an outdated convention. FDA now emphasizes a product lifecycle approach, focusing on sound process design and development studies, and expects a manufacturer to have a scientific rationale for the number of batches used to demonstrate reproducibility. [30]

How can Advanced Process Control (APC) improve Process Mass Intensity (PMI)?

Answer: APC techniques, particularly Model Predictive Control (MPC), stabilize operations and drive processes toward their economic optimum. This leads to:

- Reduced Variability: Tighter control minimizes deviations, leading to more consistent yields and less off-spec material, directly lowering the mass of waste generated per mass of product. [31]

- Optimized Resource Use: APC can minimize the use of expensive raw materials and utilities like energy, which are included in a full cradle-to-gate PMI calculation. [8] [31]

- Higher Overall Efficiency: By reducing cycle times and increasing throughput, APC improves the efficiency of mass utilization across the entire process. [31] [32]

Is it acceptable to sample containers and closures in a warehouse environment?

Answer: Yes, for non-sterile materials. CGMP permits sampling in a warehouse if it is performed in a manner that prevents contamination. The act of sampling must not affect the integrity of the remaining containers. For containers/closures purporting to be sterile or depyrogenated, sampling must be performed in an environment equivalent to their purported quality level (i.e., not in a warehouse). [30]

What is the role of the Quality Unit in a cGMP environment?

Answer: The Quality Unit is responsible for ensuring that drug products have the required identity, strength, quality, and purity. Current industry practice typically divides these responsibilities between Quality Control (QC), which focuses on testing and monitoring, and Quality Assurance (QA), which oversees the overall quality system. Key duties include approving procedures, reviewing batch records, and managing deviations and changes. [33]

Experimental Protocols for Process Mass Intensity (PMI) Improvement

Quantitative Metrics for Environmental Performance

The following metrics are crucial for evaluating the greenness of chemical processes and are directly related to PMI improvement. [8] [6]

| Metric | Formula / Definition | Relevance to PMI and Environmental Impact |
|---|---|---|
| Process Mass Intensity (PMI) | PMI = Total Mass Input to Process (kg) / Mass of Product (kg) [8] | A direct, gate-to-gate measure of process efficiency. Lowering PMI is a primary goal, as it indicates less waste and higher resource efficiency. [8] |
| Atom Economy (AE) | AE = (MW of Product / Σ MW of Reactants) × 100% [6] | A theoretical metric from stoichiometry. A higher AE suggests a more efficient reaction design, potentially leading to a lower PMI. [6] |
| Reaction Mass Efficiency (RME) | RME = (Mass of Product / Σ Mass of Reactants) × 100% [6] | A more practical metric than AE, as it accounts for reaction yield. Improving RME directly lowers the PMI of the reaction step. [6] |
| E-Factor | E-Factor = Total Waste (kg) / Mass of Product (kg) | Complementary to PMI (PMI = E-Factor + 1). It focuses specifically on waste generation. [8] |
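The formulas in the table can be sketched in a few lines of Python. This is a minimal illustration, assuming all masses are in kilograms and that waste equals total input minus product; the function names and the batch figures are hypothetical and do not come from the cited studies.

```python
def pmi(total_input_kg, product_kg):
    """Process Mass Intensity: total mass in per mass of product out."""
    return total_input_kg / product_kg


def e_factor(total_input_kg, product_kg):
    """E-Factor, assuming waste = total input - product."""
    return (total_input_kg - product_kg) / product_kg


def atom_economy(product_mw, reactant_mws):
    """Atom economy in percent, from molecular weights."""
    return 100.0 * product_mw / sum(reactant_mws)


def reaction_mass_efficiency(product_kg, reactant_masses_kg):
    """Reaction mass efficiency in percent, from actual reactant masses."""
    return 100.0 * product_kg / sum(reactant_masses_kg)


# Hypothetical batch record (kg); every number here is illustrative only
inputs = {"reactant A": 12.0, "reactant B": 8.0, "solvent": 75.0,
          "catalyst": 0.5, "work-up materials": 24.5}
product = 10.0
total = sum(inputs.values())

print(pmi(total, product))       # 12.0
print(e_factor(total, product))  # 11.0, i.e. PMI - 1
print(reaction_mass_efficiency(product, [12.0, 8.0]))  # 50.0
```

Note that solvent and work-up materials dominate the total in this made-up batch, which is why leaving them out of the mass balance distorts PMI so strongly.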

Case Study Protocol: PMI Analysis for a Catalytic Process

This protocol outlines a methodology for evaluating and improving PMI in fine chemical synthesis, as demonstrated in case studies. [6]

1. Objective: To evaluate and compare the PMI and associated green metrics for the synthesis of a target molecule (e.g., dihydrocarvone) under different catalytic and material recovery scenarios.

2. Materials and Equipment:

- Reagents: List all reactants, solvents, and catalysts (e.g., R-(+)-limonene, catalyst such as dendritic zeolite d-ZSM-5/4d). [6]

- Equipment: Round-bottom flask, condenser, heating mantle, magnetic stirrer, separation funnel, distillation or recrystallization apparatus, analytical instruments (GC, HPLC, NMR).

3. Experimental Procedure:

- Reaction Step: Conduct the synthesis (e.g., epoxidation, cyclization) as per established literature, carefully controlling temperature and pressure. [6]

- Work-up and Isolation: Perform the separation of the product from the reaction mixture.

- Purification: Purify the crude product using an appropriate technique (e.g., distillation).

- Solvent and Catalyst Recovery: Implement a recovery protocol (e.g., solvent distillation, catalyst filtration, and reactivation) for the chosen scenario.

4. Data Collection and Analysis:

- Record the masses of all input materials (reactants, solvents, catalysts) and the final purified product.

- Perform the calculations for PMI, AE, RME, and other relevant metrics as defined in the table above. [6]

- Create Recovery Scenarios: Analyze the data for three scenarios:

- Scenario A: No material recovery.

- Scenario B: Partial solvent and/or catalyst recovery.

- Scenario C: Full recovery of all reusable materials.

5. Visualization with Radial Diagrams:

- Use a radial pentagon diagram to graphically compare the five key metrics across different processes or scenarios: atom economy (AE), reaction yield, inverse stoichiometric factor (1/SF), material recovery parameter (MRP), and reaction mass efficiency (RME). This provides an immediate visual assessment of the process's "greenness." [6]
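The three recovery scenarios in step 4 can be compared with a small helper such as the one below. It is a sketch under one stated assumption: recovered material is credited by subtracting the recovered fraction of each input from the mass balance. The cited case studies may account for recovery differently, and the material names, masses, and recovery fractions are hypothetical.

```python
def pmi_with_recovery(inputs_kg, product_kg, recovery=None):
    """PMI with a recovered fraction (0 to 1) of each input credited back.

    recovery maps a material name to the fraction recovered; materials
    that are not listed are treated as not recovered.
    """
    recovery = recovery or {}
    net_input = sum(mass * (1.0 - recovery.get(name, 0.0))
                    for name, mass in inputs_kg.items())
    return net_input / product_kg


# Hypothetical masses (kg) for one batch giving 1.0 kg of purified product
inputs = {"reactants": 2.0, "solvent": 16.0, "catalyst": 2.0}
product = 1.0

scenarios = {
    "A: no recovery": {},
    "B: partial solvent recovery": {"solvent": 0.5},
    "C: full solvent and catalyst recovery": {"solvent": 1.0, "catalyst": 1.0},
}

for label, recovery in scenarios.items():
    print(label, pmi_with_recovery(inputs, product, recovery))
# A: no recovery 20.0
# B: partial solvent recovery 12.0
# C: full solvent and catalyst recovery 2.0
```

Keeping the recovery fractions in a separate mapping means the same batch record can be reused for every scenario, which makes the comparison easier to audit.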

System Architecture and Troubleshooting Visualizations

[Figure: APC-Integrated cGMP Pilot System Architecture]

[Figure: Systematic Control Loop Troubleshooting Workflow]

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in cGMP/APC Context | Key Considerations |
|---|---|---|
| Tryptic Soy Broth (TSB) | Used in media fill simulations to validate aseptic manufacturing processes. [30] | Source sterile, irradiated TSB or filter through a 0.1-micron filter to prevent contamination by organisms like Acholeplasma laidlawii. [30] |
| Smart Instrumentation | Sensors and actuators with embedded digital communication (e.g., IO-Link, Ethernet/IP). [34] | Enables predictive maintenance, provides device health status, and simplifies integration in modular cleanrooms. Reduces long-term maintenance costs. [34] |
| RFID Tags | Embedded in autoclavable tubing bundles and single-use components. [34] | Tracks component usage, number of sterilizations, and proves transfer panel connections, ensuring process integrity and use-within limits. [34] |
| Intrinsically Safe (IS) Instrumentation | Electrical equipment designed for hazardous (Class I Div 1) areas, such as those with solvent vapors. [34] | Prevents ignition of flammable atmospheres. Has a smaller footprint and lower maintenance costs than explosion-proof equipment, though with a higher initial cost. [34] |
| Water for Injection (WFI) | Used in formulation of media and buffers in bioprocessing. [34] | Control systems should incorporate safety features (e.g., stroke limitation valves) to prevent high-pressure dispense that can rupture single-use bags. [34] |

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: What are the primary strategies for improving product titer in intensified upstream processes?

Improving titer involves a multi-faceted approach. Key strategies include transitioning from traditional fed-batch to perfusion processes, which can maintain high cell densities (e.g., over 100 million cells/mL) for extended periods, leading to a reported 10-fold increase in yield [35]. Genetically engineering host cells for higher specific productivity and enhanced stability is also critical. This includes engineering apoptosis-resistant cell lines and using gene editing tools like CRISPR/Cas9 to knock out metabolic bottlenecks, which has been shown to significantly improve culture growth and final antibody titer [36].

FAQ 2: How can genetic instability in microbial production systems be mitigated?

A major cause of genetic instability is the high selection pressure on growth-arrested populations, which favors mutations that allow cells to escape growth control [37]. To counter this, you can:

- Incorporate Genetic Redundancy: For example, building an inducible growth switch in E. coli with redundancy in the expression of RNA polymerase subunits (β, β', and α) drastically improved stability, reducing the escape frequency to below 10⁻⁹ [37].

- Use Advanced Selection Systems: Employ transposon-based systems (e.g., PiggyBac) to integrate transgenes into transcriptionally active genomic loci, which promotes more stable and consistent high-level expression [36].

FAQ 3: What are common sources of contamination in low-biomass or intensive cultures, and how can they be prevented?

Contamination and cross-contamination can disproportionately impact high-density and prolonged cultures. Common sources include human operators, sampling equipment, and reagents [38].

- Prevention Protocols: Use single-use, DNA-free equipment where possible. Decontaminate reusable tools with 80% ethanol followed by a nucleic acid-degrading solution (e.g., bleach). Personnel should wear appropriate personal protective equipment (PPE) like gloves, coveralls, and masks to limit sample exposure [38].

- Implement Rigorous Controls: Always include negative controls during sampling and processing, such as swabs of the sampling environment or aliquots of preservation solutions, to identify and account for contaminant backgrounds [38].

FAQ 4: My transformation efficiency is low. What factors should I investigate?

Low transformation efficiency can stem from several issues related to cell competency and DNA handling [39]:

- Cell Competency: Ensure competent cells are fresh and have not undergone repeated freeze-thaw cycles. Test cell batches with a known, high-quality control plasmid.

- DNA Quality and Quantity: Use purified, contaminant-free plasmid DNA. The optimal amount is typically between 10 and 100 ng; too much or too little can reduce efficiency.

- Protocol Execution: For heat shock, strictly adhere to the timing (30-45 seconds) and temperature (42°C). Use the correct concentration of calcium chloride and ensure an adequate recovery period in nutrient-rich medium before plating [39].
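When testing a cell batch with a control plasmid, it helps to put a number on "efficiency." The sketch below uses the common definition of colony-forming units (CFU) per microgram of DNA plated; the function name, parameters, and example values are illustrative assumptions and are not taken from the cited protocol.

```python
def transformation_efficiency(colonies, dna_ng, total_volume_ul,
                              plated_volume_ul, dilution_factor=1.0):
    """Transformation efficiency in CFU per microgram of plasmid DNA.

    dna_ng           -- DNA added to the transformation (ng)
    total_volume_ul  -- final volume after recovery (uL)
    plated_volume_ul -- volume spread on the plate (uL)
    dilution_factor  -- e.g. 10 for a 1:10 dilution made before plating
    """
    dna_ug_plated = ((dna_ng / 1000.0)
                     * (plated_volume_ul / total_volume_ul)
                     / dilution_factor)
    return colonies / dna_ug_plated


# Hypothetical control transformation: 10 ng plasmid, 1000 uL recovery
# volume, 100 uL plated undiluted, 200 colonies counted
te = transformation_efficiency(200, 10, 1000, 100)
print(f"{te:.1e} CFU/ug")  # 2.0e+05 CFU/ug
```

Comparing this figure between fresh and older cell batches, using the same control plasmid, separates a competency problem from a DNA or protocol problem.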

Key Experimental Protocols

Protocol for Developing a Genetically Stabilized Growth Switch

This protocol outlines the creation of a stable, inducible growth arrest system in E. coli to reorient metabolic fluxes toward production [37].

Methodology:

- Genetic Construct Design: Design a genetic circuit where the genes encoding the β- and β'-subunits of RNA polymerase (rpoB and rpoC) are placed under the control of an inducible promoter (e.g., PBAD or PLtetO-1).

- Introduction of Genetic Redundancy: To enhance stability, incorporate the gene for the α-subunit of RNA polymerase (rpoA) under the control of a copy of the same inducible promoter. This multi-target approach makes it harder for mutations to overcome growth control.

- Strain Transformation: Introduce the constructed plasmid into the production E. coli strain using a high-efficiency method like electroporation [39].

- Validation and Stability Testing:

- Induce the promoter and measure the frequency of escapees (cells that continue to grow) over a long period (e.g., 50-100 generations).

- Compare the escape frequency of the redundant system (ββ' + α) against the non-redundant system (ββ' only). The improved switch should show an escape frequency of <10⁻⁹ [37].

- Assess production yield of a target compound (e.g., glycerol) in the growth-arrested state to confirm the system's functionality.
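The escape-frequency comparison in the validation step can be sketched as follows. The sketch assumes escape frequency is estimated as escapee colonies divided by viable cells plated under inducing conditions; the source does not spell out its counting method, and the function names and counts are hypothetical.

```python
def escape_frequency(escapee_colonies, viable_cells_plated):
    """Fraction of plated viable cells that keep growing under growth arrest."""
    return escapee_colonies / viable_cells_plated


def detection_limit(viable_cells_plated):
    """Smallest frequency one plating can resolve (a single colony).

    This is a simple resolution limit, not a statistical confidence bound.
    """
    return 1.0 / viable_cells_plated


# Hypothetical counts for the non-redundant switch
print(escape_frequency(3, 2e8))  # about 1.5e-08

# To support a claim of < 1e-9, more than 1e9 viable cells must be plated
print(detection_limit(5e9) < 1e-9)  # True
print(detection_limit(2e8) < 1e-9)  # False
```

The second function makes the practical point explicit: a plate with no escapees only supports a frequency below roughly one over the number of cells plated.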

Protocol for Intensified Seed Train and N-1 Perfusion

This protocol describes intensifying the pre-culture (seed train) to generate high biomass for inoculating production bioreactors, significantly reducing process time and increasing volumetric productivity [40] [35].

Methodology:

- High Cell Density Cryopreservation (HCDC): Begin the seed train by thawing a vial of working cell bank that was cryopreserved at a high cell density (e.g., 50 x 10⁶ cells/mL). This eliminates several expansion steps [40].

- N-1 Perfusion: Instead of using a standard batch or fed-batch culture for the final seed (N-1) bioreactor, operate it in perfusion mode.

- Use a cell retention device (e.g., an Alternating Tangential Flow (ATF) system) to retain cells within the bioreactor.

- Continuously add fresh medium and remove spent medium, allowing the cell density to reach very high levels (e.g., 50-150 x 10⁶ cells/mL) over 5-7 days [35].

- Production Bioreactor Inoculation: Inoculate the main production bioreactor (fed-batch or perfusion) with the entire contents or a large portion of the N-1 perfusion bioreactor. This high inoculation density leads to a very short or non-existent growth phase in the production bioreactor, extending the productive synthesis phase.
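The effect of the N-1 transfer on the starting cell density of the production bioreactor is simple dilution arithmetic, sketched below. The function name and the vessel volumes are hypothetical; only the N-1 density range comes from the protocol above.

```python
def inoculation_density(n1_density_cells_per_ml, n1_transfer_volume_l,
                        production_working_volume_l):
    """Starting viable cell density (cells/mL) in the production bioreactor.

    production_working_volume_l is the total volume after inoculation,
    i.e. transferred culture plus fresh medium.
    """
    return (n1_density_cells_per_ml * n1_transfer_volume_l
            / production_working_volume_l)


# Hypothetical example: 200 L of N-1 culture at 100 x 10^6 cells/mL
# transferred into a production bioreactor with 2000 L working volume
start = inoculation_density(100e6, 200, 2000)
print(f"{start:.1e} cells/mL")  # 1.0e+07 cells/mL
```

The same function can be rearranged to find the N-1 density needed for a target seeding density at a given split ratio.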

The following workflow illustrates the comparison between traditional and intensified N-1 seed train processes:

Quantitative Data and Process Comparison

The following table summarizes key performance metrics reported for different upstream processing modes, demonstrating the impact of process intensification.

Table 1: Performance Comparison of Upstream Processing Modes [36] [35]

| Metric | Traditional Fed-Batch (TFB) | Intensified Fed-Batch (N-1 Perfusion) | Perfusion Process |
|---|---|---|---|
| Max Viable Cell Density | ~20-30 x 10⁶ cells/mL | ~50-150 x 10⁶ cells/mL | >100 x 10⁶ cells/mL (sustained) |
| Volumetric Productivity | Baseline | 3-5 fold higher than TFB | Up to 10 fold higher than TFB; ~1 g/L/day antibody harvest |
| Process Duration | 10-14 days | Similar or slightly less than TFB | Weeks to months (e.g., 50-day processes) |
| Space-Time Yield | Baseline | Increased | ~3 fold increase over TFB |
| Product Titer | 1.5 - 5 g/L (for mAbs) | Higher than TFB | Can exceed 5 g/L; consistent harvest |
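To see how titer, duration, and harvest rate relate, the sketch below computes an average space-time yield as product mass per litre of reactor volume per day. This definition, the function names, and the choice of which table values to plug in are assumptions made for illustration; other points within the reported ranges give different ratios.

```python
def space_time_yield(product_g_per_l, duration_days):
    """Average product mass per litre of reactor volume per day (g/L/day)."""
    return product_g_per_l / duration_days


def fold_change(intensified, baseline):
    """Ratio of an intensified process metric to its baseline."""
    return intensified / baseline


# Illustrative inputs drawn from the ranges in Table 1:
# fed-batch at 5 g/L after 14 days; perfusion harvesting about 1 g/L/day,
# i.e. 50 g/L cumulative over a 50-day run
fed_batch = space_time_yield(5.0, 14)
perfusion = space_time_yield(50.0, 50)

print(round(fed_batch, 3))                          # 0.357
print(perfusion)                                    # 1.0
print(round(fold_change(perfusion, fed_batch), 1))  # 2.8
```

Because the result depends on where in each range a given process sits, such a calculation is best run on a site's own batch data rather than on literature ranges.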