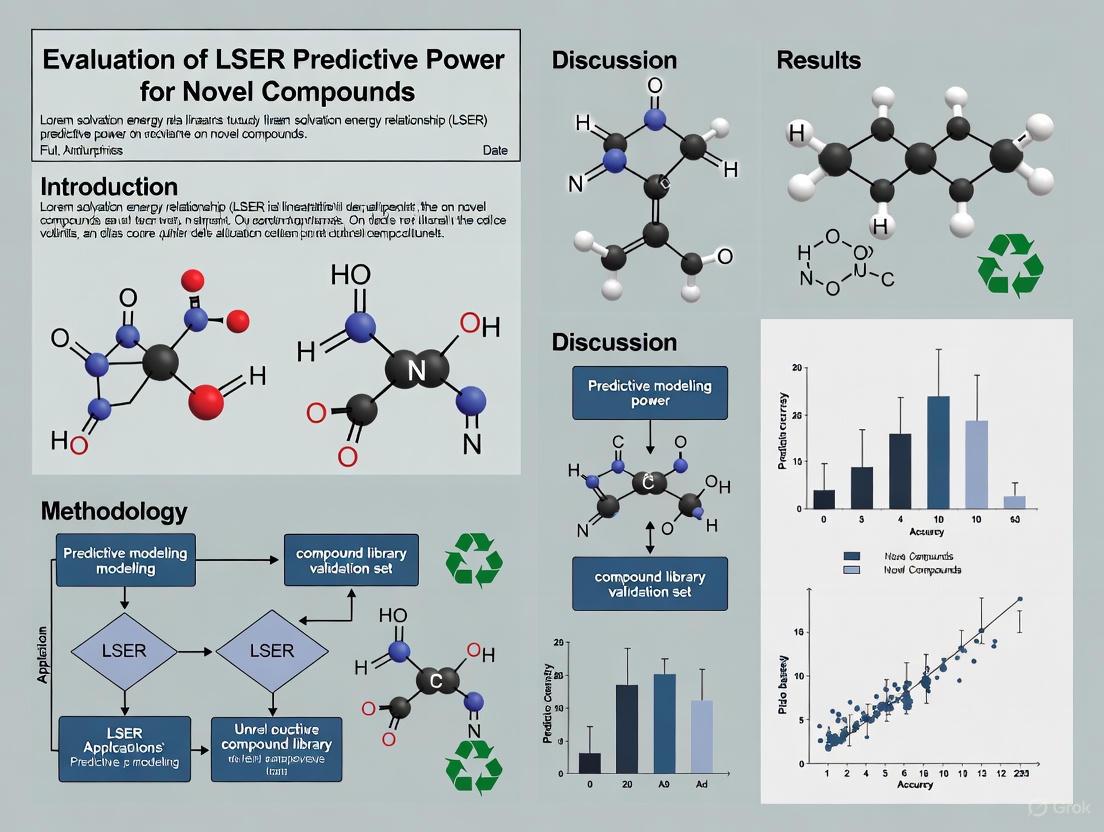

Evaluating LSER Predictive Power for Novel Compounds: AI-Driven Approaches in Modern Drug Discovery

Abstract

This article provides a comprehensive evaluation of the predictive power of Linear Solvation Energy Relationships (LSER) for novel compounds, addressing the critical needs of researchers and drug development professionals. It explores the foundational principles of LSER and their integration with modern artificial intelligence (AI) techniques. The scope covers methodological applications in virtual screening and multi-parameter optimization, strategies for troubleshooting model performance and data limitations, and rigorous validation through comparative analysis with established computational methods. By synthesizing insights from current literature, this article serves as a guide for leveraging LSER models to accelerate the discovery and development of new therapeutic agents, with a focus on immunomodulators and antimicrobial peptides.

LSER Fundamentals and the AI Revolution in Compound Property Prediction

Core Principles of Linear Solvation Energy Relationships (LSER)

Linear Solvation Energy Relationships (LSER) represent a cornerstone quantitative structure-activity relationship (QSAR) methodology for predicting solute partitioning and environmental distribution behavior. This guide objectively evaluates LSER's predictive power against alternative modeling approaches, examining its application across diverse chemical systems including polymer-water partitioning and aquatic toxicity assessment. Experimental data demonstrate that rigorously parameterized LSER models achieve exceptional predictive accuracy (R² = 0.991, RMSE = 0.264) for chemically diverse compounds. While LSER provides mechanistically interpretable parameters, its performance depends critically on the availability of experimental solute descriptors, creating practical limitations that emerging computational approaches aim to address.

Linear Solvation Energy Relationships, also known as the Abraham solvation parameter model, constitute a highly successful predictive framework for understanding solute partitioning behavior across diverse chemical, biomedical, and environmental contexts [1]. The model's robustness stems from its foundation in linear free energy relationships (LFER), which quantitatively correlate the free-energy-related properties of solutes with molecular descriptors that encode specific interaction capabilities [1] [2].

The LSER approach quantitatively describes solute transfer between phases using two primary equations. For partitioning between two condensed phases, the model takes the form:

$$\log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x$$ [1]

where P represents partition coefficients such as water-to-organic solvent or alkane-to-polar organic solvent partitioning. For gas-to-condensed phase partitioning, the relationship becomes:

$$\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$$ [1]

where KS is the gas-to-organic solvent partition coefficient. In both equations, the capital letters (E, S, A, B, Vx, L) represent solute-specific molecular descriptors, while the lowercase coefficients (e, s, a, b, v, l, c) are system-specific parameters that characterize the complementary properties of the phases between which partitioning occurs [1] [2].
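To make the structure of the condensed-phase equation concrete, the following minimal Python sketch evaluates it term by term. The class names, function names, and all numeric values are illustrative assumptions; they are not fitted coefficients or measured descriptors from the cited sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    """Solute-specific descriptors (capital letters in the equation)."""
    E: float   # excess molar refractivity
    S: float   # dipolarity/polarizability
    A: float   # hydrogen-bond acidity
    B: float   # hydrogen-bond basicity
    Vx: float  # McGowan characteristic volume


@dataclass(frozen=True)
class SystemCoefficients:
    """System-specific coefficients (lowercase letters in the equation)."""
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float


def lser_terms(solute: SoluteDescriptors, system: SystemCoefficients) -> dict:
    """Return each term of log P = c + eE + sS + aA + bB + vVx separately."""
    return {
        "c": system.c,
        "eE": system.e * solute.E,
        "sS": system.s * solute.S,
        "aA": system.a * solute.A,
        "bB": system.b * solute.B,
        "vVx": system.v * solute.Vx,
    }


def log_p(solute: SoluteDescriptors, system: SystemCoefficients) -> float:
    """Sum the individual terms to obtain the predicted log P."""
    return sum(lser_terms(solute, system).values())


# Hypothetical placeholder values, chosen only to exercise the arithmetic.
example_system = SystemCoefficients(c=0.1, e=0.5, s=-1.0, a=-2.0, b=-3.0, v=4.0)
example_solute = SoluteDescriptors(E=0.6, S=0.5, A=0.0, B=0.15, Vx=0.7)

for name, value in lser_terms(example_solute, example_system).items():
    print(f"{name:>3}: {value:+.3f}")
print(f"log P = {log_p(example_solute, example_system):.3f}")
```

Keeping the terms separate before summing makes it easy to see which interaction dominates a given prediction, which is the interpretability advantage the model is usually credited with.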

Experimental Protocols and Methodologies

LSER Model Development Workflow

The development of predictive LSER models follows a systematic experimental and computational protocol that ensures robustness and interpretability. The standard methodology encompasses several critical stages from data collection through model validation, each requiring specific technical approaches and quality control measures.

Data Collection and Curation: Experimental partition coefficient data for model calibration are obtained through standardized laboratory measurements. For polymer-water partitioning studies, techniques include equilibrium batch experiments followed by chemical analysis via chromatography or spectrometry [3]. The training set compounds must span diverse chemical functionalities and molecular structures to ensure broad model applicability. For reliable models, datasets of 150-300 compounds are typical, with approximately 70% allocated to training and 30% reserved for validation [3].

Descriptor Determination: Solute descriptors (E, S, A, B, Vx, L) are obtained through experimental measurements, literature compilation, or computational prediction. Experimental methods include gas chromatography for L, solvent-water partitioning for A and B, and spectroscopic techniques for S and E parameters [4] [2]; Vx is calculated from molecular structure rather than measured. For compounds lacking experimental descriptors, quantitative structure-property relationship (QSPR) models using quantum chemical and topological descriptors provide estimated values, though with potentially reduced accuracy [5].

Model Calibration: Multiple linear regression analysis correlates the measured partition coefficients with the solute descriptors. Statistical metrics including R², adjusted R², root mean square error (RMSE), and cross-validation Q² values determine model quality [3] [5]. The regression coefficients (e, s, a, b, v, l) provide physicochemical interpretation of the phase interactions.

Validation and Application: Final model performance is evaluated against the independent validation set not used in calibration. External validation assesses predictive capability for novel compounds, with successful models achieving R² > 0.98 and RMSE < 0.35 for logP predictions [3]. Validated models can then predict partitioning for compounds with known descriptors but no experimental partitioning data.
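The calibration and validation stages above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: the dataset is synthetic (random descriptors and hypothetical "true" coefficients with added noise), the 70/30 split follows the proportions quoted above, and Q² is computed as a leave-one-out statistic via the hat-matrix shortcut, which is one common choice and not necessarily the one used in the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for a curated dataset (NOT real measurements):
# 200 solutes with five descriptors in the order E, S, A, B, Vx.
n_solutes = 200
descriptors = rng.uniform(0.0, 2.0, size=(n_solutes, 5))
design = np.column_stack([np.ones(n_solutes), descriptors])  # column of 1s fits c

# Hypothetical "true" system coefficients c, e, s, a, b, v plus measurement noise.
true_coeffs = np.array([0.1, 0.5, -1.0, -2.0, -3.0, 4.0])
log_p = design @ true_coeffs + rng.normal(0.0, 0.2, size=n_solutes)

# Data curation step: random 70/30 split into training and validation sets.
order = rng.permutation(n_solutes)
n_train = (7 * n_solutes) // 10
train, valid = order[:n_train], order[n_train:]

# Model calibration: multiple linear regression by ordinary least squares.
fitted, *_ = np.linalg.lstsq(design[train], log_p[train], rcond=None)


def r2_and_rmse(observed, predicted):
    """Coefficient of determination and root mean square error."""
    residuals = observed - predicted
    ss_res = float(np.sum(residuals ** 2))
    ss_tot = float(np.sum((observed - observed.mean()) ** 2))
    return 1.0 - ss_res / ss_tot, float(np.sqrt(np.mean(residuals ** 2)))


r2_train, rmse_train = r2_and_rmse(log_p[train], design[train] @ fitted)
r2_valid, rmse_valid = r2_and_rmse(log_p[valid], design[valid] @ fitted)

# Leave-one-out Q2 on the training set, using residual_i / (1 - leverage_i).
x_train, y_train = design[train], log_p[train]
leverage = np.sum(x_train * np.linalg.pinv(x_train).T, axis=1)
loo_residuals = (y_train - x_train @ fitted) / (1.0 - leverage)
q2 = 1.0 - float(np.sum(loo_residuals ** 2)) / float(
    np.sum((y_train - y_train.mean()) ** 2)
)

print("fitted c, e, s, a, b, v:", np.round(fitted, 3))
print(f"train R2={r2_train:.3f} RMSE={rmse_train:.3f} Q2={q2:.3f}")
print(f"valid R2={r2_valid:.3f} RMSE={rmse_valid:.3f}")
```

The validation statistics are computed only on compounds the regression never saw, which is what separates an external validation from a goodness-of-fit figure.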

LSER Model Interpretation Framework

The LSER model coefficients provide direct physicochemical interpretation of the molecular interactions governing solute partitioning in specific systems. Understanding these coefficient patterns enables researchers to extract meaningful thermodynamic information about phase properties and interaction mechanisms.

System Coefficient Interpretation: The solvent/system coefficients (e, s, a, b, v, l) represent the complementary effect of the phase on solute-solvent interactions [1]. The v-coefficient reflects the phase's capacity to accommodate solute size through cavity formation, typically positive in condensed phases. The a-coefficient (complementary hydrogen bond basicity) and b-coefficient (complementary hydrogen bond acidity) quantify the phase's ability to participate in specific hydrogen-bonding interactions, with negative values in the LSER equation indicating that such interactions favor retention in the more interactive phase [1] [2].

Solute Descriptor Interpretation: Solute descriptors encode specific molecular properties: E represents excess molar refractivity related to polarizability; S reflects dipolarity/polarizability; A and B quantify hydrogen bond acidity and basicity, respectively; Vx represents McGowan's characteristic molecular volume; and L is the logarithm of the gas-to-n-hexadecane partition coefficient at 298 K [1] [2]. These descriptors are considered system-independent molecular properties that can be transferred across different LSER applications.

Thermodynamic Basis: The success of LSER models stems from their foundation in solvation thermodynamics. The linear free energy relationships effectively capture the balance between endoergic cavity formation/solvent reorganization processes and exoergic solute-solvent attractive interactions that collectively determine partitioning behavior [1] [2]. This thermodynamic basis explains the remarkable observation of linearity even for strong specific interactions like hydrogen bonding.
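Of the solute descriptors described above, the McGowan volume Vx is the one that can be obtained from the molecular formula alone, by summing atomic increments and subtracting a fixed amount per bond. The sketch below is a minimal illustration covering only C, H, N and O. The increments are the commonly tabulated McGowan values as recalled here and should be checked against a primary source before use; the function name and the input format are assumptions made for this example.

```python
# Commonly tabulated McGowan atomic increments in cm^3/mol (assumed values;
# verify against a primary source). Only four elements are included here.
ATOM_VOLUMES = {"C": 16.35, "H": 8.71, "N": 14.39, "O": 12.43}
BOND_CORRECTION = 6.56  # subtracted once per bond, regardless of bond order


def mcgowan_vx(atom_counts: dict, n_rings: int = 0) -> float:
    """Return Vx in units of (cm^3/mol)/100 for a single connected molecule.

    The bond count is taken as atoms - 1 + rings, which holds for one
    connected molecule when each bond is counted once.
    """
    n_atoms = sum(atom_counts.values())
    n_bonds = n_atoms - 1 + n_rings
    atom_sum = sum(ATOM_VOLUMES[element] * n for element, n in atom_counts.items())
    return (atom_sum - BOND_CORRECTION * n_bonds) / 100.0


benzene_vx = mcgowan_vx({"C": 6, "H": 6}, n_rings=1)
ethanol_vx = mcgowan_vx({"C": 2, "H": 6, "O": 1})
print(f"benzene Vx = {benzene_vx:.4f}")
print(f"ethanol Vx = {ethanol_vx:.4f}")
```

Because Vx needs no measurement, it is available even for compounds that have never been synthesized, unlike the descriptors that depend on chromatographic or partitioning experiments.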

Performance Comparison with Alternative Methods

Quantitative Performance Metrics

LSER model performance must be evaluated against alternative prediction methodologies across multiple application domains. The following comparative analysis examines statistical performance metrics for partition coefficient prediction in diverse chemical systems.

Table 1: Performance Comparison of LSER vs. Alternative Prediction Methods

| Method | Application Domain | R² | RMSE | Data Requirements | Mechanistic Interpretability |
|---|---|---|---|---|---|
| LSER (Experimental Descriptors) | LDPE/Water Partitioning | 0.991 [3] | 0.264 [3] | High | Excellent [1] [2] |
| LSER (Predicted Descriptors) | LDPE/Water Partitioning | 0.984 [3] | 0.511 [3] | Moderate | Good [5] |
| Linear Solvent Strength Theory (LSST) | Chromatographic Retention | Comparable to LSER | Similar to LSER | Moderate | Limited [6] |
| Typical-Conditions Model (TCM) | Chromatographic Retention | Superior to LSER | Better precision | Lower than LSER | Limited [6] |
| Theoretical LSER (TLSER) | Aquatic Toxicity | 0.888 (Q²) [5] | 0.153-0.179 [5] | Low | Moderate [5] |

The performance data demonstrate that LSER models parameterized with experimental solute descriptors achieve exceptional predictive accuracy for partition coefficients, with R² values exceeding 0.99 in optimized systems [3]. This performance advantage, however, comes with substantial data requirements, as experimental descriptor determination can be resource-intensive. When computational descriptor predictions replace experimental values, R² falls only slightly (0.991 to 0.984 for LDPE/water partitioning), but RMSE roughly doubles (0.264 to 0.511) [3] [5].
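The practical meaning of these RMSE values is easier to judge on the linear scale. Assuming the RMSE figures in Table 1 are expressed in log10 units of the partition coefficient (an assumption, since the units are not stated explicitly here), a typical error of r log units corresponds to a multiplicative error of about 10^r in the partition coefficient itself. The short sketch below does that conversion; the function name is hypothetical.

```python
def typical_fold_error(rmse_log_units: float) -> float:
    """Convert an RMSE in log10 units into a multiplicative (fold) error."""
    return 10.0 ** rmse_log_units


# RMSE values for LDPE/water partitioning quoted in Table 1.
rmse_experimental = 0.264
rmse_predicted = 0.511

fold_experimental = typical_fold_error(rmse_experimental)
fold_predicted = typical_fold_error(rmse_predicted)
rmse_ratio = rmse_predicted / rmse_experimental

print(f"experimental descriptors: about {fold_experimental:.1f}-fold error")
print(f"predicted descriptors:    about {fold_predicted:.1f}-fold error")
print(f"RMSE ratio: {rmse_ratio:.2f}")
```

On this reading, a small change in R² can still hide a noticeably wider error band on the predicted partition coefficient.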

Comparative studies in chromatography indicate that while LSER provides superior mechanistic interpretation through its physically meaningful parameters, alternative approaches like the Typical-Conditions Model (TCM) can achieve comparable or superior predictive precision with fewer experimental measurements [6]. This advantage is particularly evident when dealing with complex chemical systems where comprehensive descriptor determination proves challenging.

Application-Specific Performance Analysis

LSER model performance varies significantly across application domains, reflecting differences in molecular interaction dominance and descriptor sensitivity. The following analysis examines domain-specific performance patterns and limitations.

Table 2: Domain-Specific LSER Model Performance Characteristics

| Application Domain | Key Influencing Descriptors | Typical Model Statistics | Notable Limitations |
|---|---|---|---|
| Polymer-Water Partitioning (LDPE) | V, B, A (Vx most significant) [3] | R² = 0.991, RMSE = 0.264 [3] | Limited prediction for H-bond dominant solutes |
| Aquatic Toxicity (Fathead Minnow) | V (McGowan's volume most significant) [5] | Q² = 0.885, RMSE = 0.153 [5] | Difficulties modeling reactive compounds |
| Chromatographic Retention | V, S, B, A (system-dependent) [2] | Varies by stationary/mobile phase | Requires phase-specific calibration |
| Solvent-Solvent Partitioning | V, A, B (hydrogen bonding critical) [1] | Depends on solvent pair | Limited predictive power for ionic species |

The performance analysis reveals that McGowan's volume (Vx) frequently emerges as the most statistically significant descriptor in LSER models, particularly for hydrophobic phases like low-density polyethylene and biological membranes [3] [5]. Hydrogen-bonding parameters (A and B) demonstrate strong system-dependent behavior, with their relative influence varying dramatically between different partitioning systems.

A significant limitation emerges in modeling reactive compounds, where standard LSER approaches show reduced predictive capability. For reactive toxicity mechanisms, additional descriptors characterizing electron donor-acceptor properties or specific functional group presence may be necessary to achieve satisfactory model performance [5]. This limitation highlights the importance of considering molecular transformation potential during biological or environmental exposure, which standard LSER descriptors cannot fully capture.

Essential Research Reagents and Materials

Successful LSER implementation requires specific chemical standards and computational resources to ensure descriptor accuracy and model reliability. The following reagents and materials represent foundational components for LSER research programs.

Table 3: Essential Research Materials for LSER Studies

| Material/Resource | Specification | Research Function | Application Context |
|---|---|---|---|
| n-Hexadecane | Chromatographic grade | Determination of L descriptor [1] | Gas-liquid partitioning reference |
| Reference Solutes | 50-100 diverse compounds with established descriptors [2] | System coefficient calibration | Model development and validation |
| Quantum Chemistry Software | Gaussian 09 (or equivalent), DFT methods [5] | Computational descriptor prediction | TLSER model development |
| Molecular Descriptor Database | Curated LSER database with experimental values [1] [3] | Descriptor sourcing and validation | Model parameterization |
| Chromatographic Systems | GC/MS with varied stationary phases [2] | Experimental descriptor determination | L, S, A, B parameter measurement |

The selection of appropriate reference compounds proves critical for reliable LSER model development. The chemical diversity of the training set directly influences model applicability domain, with broader descriptor space coverage enabling more robust predictions for novel compounds [3]. For LSER studies targeting specific application domains, inclusion of chemical analogs representing expected compound classes significantly enhances predictive accuracy for those structures.
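One simple way to act on the applicability-domain point above is to check whether a novel compound's descriptors fall inside the ranges spanned by the training set. The sketch below is a deliberately crude range check with hypothetical function names and made-up descriptor values; the cited work does not prescribe this method, and leverage-based or distance-based checks are common alternatives.

```python
def descriptor_ranges(training_set: list) -> dict:
    """Return the (min, max) range of each descriptor over the training set."""
    names = training_set[0].keys()
    return {
        name: (
            min(row[name] for row in training_set),
            max(row[name] for row in training_set),
        )
        for name in names
    }


def descriptors_outside_domain(candidate: dict, ranges: dict) -> list:
    """List the descriptors of a candidate that fall outside the training range."""
    return [
        name
        for name, (low, high) in ranges.items()
        if not low <= candidate[name] <= high
    ]


# Made-up descriptor values, for illustration only.
training_set = [
    {"E": 0.2, "S": 0.3, "A": 0.0, "B": 0.1, "Vx": 0.5},
    {"E": 0.8, "S": 0.9, "A": 0.4, "B": 0.5, "Vx": 1.2},
    {"E": 0.5, "S": 0.6, "A": 0.2, "B": 0.3, "Vx": 0.9},
]
ranges = descriptor_ranges(training_set)

inside = {"E": 0.4, "S": 0.5, "A": 0.1, "B": 0.2, "Vx": 0.8}
outside = {"E": 0.4, "S": 0.5, "A": 0.9, "B": 0.2, "Vx": 2.0}

print(descriptors_outside_domain(inside, ranges))
print(descriptors_outside_domain(outside, ranges))
```

A prediction for a compound flagged by such a check is an extrapolation, so it deserves less trust than the validation statistics alone would suggest.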

Experimental work requires high-purity solvents and reference materials to minimize measurement artifacts in descriptor determination. For computational LSER approaches, quantum chemical calculations at the B3LYP/6-31+G(d,p) level or similar have demonstrated satisfactory performance for descriptor prediction, providing reasonable alternatives when experimental determination proves impractical [5].

The comprehensive performance evaluation demonstrates that LSER methodology provides exceptional predictive accuracy for partition coefficients when parameterized with experimental molecular descriptors. The approach offers unique advantages in mechanistic interpretability, with model coefficients directly quantifying specific molecular interaction contributions to partitioning behavior. These characteristics make LSER particularly valuable for pharmaceutical and environmental research applications where understanding molecular interaction mechanisms proves as important as prediction accuracy.

Ongoing methodology developments focus on addressing LSER's primary limitation: the requirement for comprehensive experimental descriptor data. Computational descriptor prediction approaches show promising results, with QSPR-based descriptor estimation achieving R² > 0.88 for key parameters like E [5]. Hybrid methodologies that combine experimental determination for critical descriptors with computational prediction for others offer a practical path forward for balancing accuracy and resource requirements.

For novel compound research, LSER represents a powerful tool for predicting partitioning behavior, particularly when complemented by emerging machine learning approaches for descriptor refinement. The robust thermodynamic foundation of the LSER framework ensures its continued relevance as computational chemistry advances enhance descriptor accessibility and model precision.

The Role of AI and Machine Learning in Modernizing Pharmacological Predictions

The pharmaceutical industry is undergoing a profound transformation driven by artificial intelligence (AI) and machine learning (ML). Traditional drug discovery remains a time-consuming and expensive process, typically taking 10-15 years with a success rate of less than 12% [7]. AI technologies are now reshaping this landscape by enabling more accurate pharmacological predictions, compressing development timelines from years to months, and reducing costs substantially. The global AI in drug discovery market, valued at USD 6.93 billion in 2025, is projected to reach USD 16.52 billion by 2034, reflecting a healthy CAGR of 10.10% [8]. This revolution extends across all stages of drug development, from initial target identification to clinical trial optimization, representing a fundamental shift from traditional reductionist approaches toward holistic, systems-level modeling of biological complexity [9].

Modern AI-driven drug discovery (AIDD) platforms distinguish themselves from legacy computational tools through their ability to integrate and analyze multimodal datasets—including chemical structures, omics data, clinical records, and scientific literature—to construct comprehensive biological representations [9]. Companies like Insilico Medicine, Recursion, and Verge Genomics have developed integrated platforms that leverage deep learning, generative models, and knowledge graphs to navigate the intricate relationships within biological systems, enabling more predictive and translatable pharmacological insights [9]. This article provides a comparative analysis of how AI and ML technologies are modernizing pharmacological predictions, with specific examination of experimental protocols, performance data, and implementation frameworks.

AI/ML Technologies Reshaping Pharmacological Predictions

Core Technologies and Their Applications

AI in drug discovery encompasses a diverse ecosystem of technologies, each contributing unique capabilities to pharmacological prediction. Machine learning, particularly supervised learning which held approximately 40% of the algorithm type market share in 2024, enables the identification of patterns in labeled datasets to predict drug activity and properties [10]. Deep learning represents the fastest-growing segment, excelling in structure-based predictions and protein modeling through architectures such as convolutional neural networks (CNNs) and transformer models [10] [9]. Generative AI has emerged as a transformative force for molecular design, creating novel compound architectures that respect chemical rules while exploring territories human chemists might not consider [7] [9].

These technologies are being applied across the drug discovery pipeline with demonstrated efficacy. In virtual screening, AI systems can analyze millions of molecular compounds to identify promising candidates much faster than conventional high-throughput screening [11]. For toxicity and safety prediction, deep learning models can evaluate proposed molecules for toxicity risks, enabling researchers to eliminate high-risk compounds before synthesis [8]. In clinical trial optimization, AI-driven digital twin technology creates personalized models of disease progression for individual patients, allowing for trials with fewer participants while maintaining statistical power [12]. The integration of these technologies into end-to-end platforms represents the most significant advancement, creating continuous feedback loops between computational prediction and experimental validation [7] [9].

Comparative Performance of AI vs Traditional Methods

Table 1: Performance Comparison of AI-Driven vs Traditional Drug Discovery Approaches

| Metric | Traditional Approach | AI-Enhanced Approach | Data Source |
|---|---|---|---|
| Early discovery timeline | 18-24 months | 3 months | [8] |
| Cost per candidate (early stage) | ~$100 million | ~$40-50 million | [8] |
| Target identification to preclinical | >3 years | 13 months | [8] |
| Idiopathic Pulmonary Fibrosis drug design | Industry standard: 3-5 years | 18 months | [11] [9] |
| Clinical trial recruitment | Standard pace | Significantly accelerated | [12] |
| Toxicity prediction accuracy | Conventional methods | Random forest: 98% accuracy | [13] |
| Ebola drug candidate identification | Months to years | <1 day | [11] |

The quantitative advantages of AI-driven approaches extend beyond speed and cost efficiency to include improved predictive accuracy. In a recent study of acute lithium poisoning, a random forest model predicted medical outcomes with 98% accuracy, with 100% accuracy and 96% sensitivity for serious outcomes, and 96% accuracy with 100% sensitivity for minor outcomes [13]. The model identified key clinical features (drowsiness/lethargy, age, ataxia, abdominal pain, and electrolyte abnormalities) as the most significant predictors of toxicity severity [13]. Similarly, AI platforms have demonstrated remarkable efficiency in candidate identification, with Atomwise identifying two drug candidates for Ebola in less than a day [11].
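Accuracy and sensitivity answer different questions, which matters when one outcome class is rare. The following sketch computes the usual metrics from confusion-matrix counts; the function name is hypothetical. The baseline uses the class counts reported for this study later in the article (139 severe and 2,621 minor cases), while the second set of counts is invented purely for illustration.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Accuracy, sensitivity (recall), precision and F1 from confusion counts."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn) if (tp + fn) else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "f1": 2 * tp / (2 * tp + fp + fn) if (2 * tp + fp + fn) else 0.0,
    }


# Baseline: a trivial classifier that labels every case "minor".
# "Positive" here means a severe outcome.
n_severe, n_minor = 139, 2621
always_minor = classification_metrics(tp=0, fp=0, fn=n_severe, tn=n_minor)
print(always_minor)  # high accuracy, zero sensitivity for severe outcomes

# Invented counts for a hypothetical classifier, for comparison.
hypothetical = classification_metrics(tp=8, fp=1, fn=2, tn=89)
print(hypothetical)
```

The trivial baseline is correct for roughly 95% of cases without detecting a single severe outcome, which is why sensitivity and F1 are reported alongside accuracy for imbalanced data.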

Experimental Protocols and Methodologies

Protocol 1: Predictive Toxicology Using Random Forest

Table 2: Key Research Reagent Solutions for Predictive Toxicology

| Reagent/Resource | Function in Experiment | Specifications |
|---|---|---|
| National Poison Data System (NPDS) | Source of structured poisoning exposure cases | 133 features including 131 binary symptom variables + age [13] |
| Random Forest Algorithm | Classification model for outcome prediction | Ensemble of decision trees with robustness to overfitting [13] |
| SMOTE (Synthetic Minority Oversampling Technique) | Addresses class imbalance in dataset | Generates synthetic samples for minority classes [13] |
| RFECV (Recursive Feature Elimination with Cross-Validation) | Identifies most predictive features | Systematically eliminates features based on model performance [13] |
| SHAP (SHapley Additive exPlanations) | Interprets model predictions and feature importance | Game theory approach to explain output [13] |

A recent study demonstrated the application of machine learning for predicting medical outcomes associated with acute lithium poisoning, providing a robust protocol for predictive toxicology [13]. The methodology began with data acquisition from the National Poison Data System (NPDS), containing cases recorded between 2014 and 2018. Of 11,525 reported lithium poisoning cases, 2,760 were categorized as acute overdose, with 139 individuals experiencing severe outcomes and 2,621 having minor outcomes [13].

The data pre-processing phase addressed missing values using multiple imputation techniques and Markov Chain Monte Carlo methodology. The sole continuous variable (age) was normalized using min-max scaling and standard scaling (z-score normalization) to align with the scale of binary features. The dataset was randomly partitioned into training (70%), validation (15%), and testing (15%) subsets [13].
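The scaling and partitioning steps described above can be sketched with NumPy as follows. This is an illustration under stated assumptions: the ages are randomly generated placeholders, the helper names are hypothetical, and the scaling parameters are fitted on the training subset only, which is a common practice to avoid information leaking from the validation and test subsets but is not stated in the source.

```python
import numpy as np


def split_indices(n_rows, rng, train_frac=0.70, valid_frac=0.15):
    """Randomly partition row indices into training, validation and test sets."""
    order = rng.permutation(n_rows)
    n_train = round(train_frac * n_rows)
    n_valid = round(valid_frac * n_rows)
    return (
        order[:n_train],
        order[n_train:n_train + n_valid],
        order[n_train + n_valid:],
    )


def apply_min_max(values, low, high):
    """Map values to the [0, 1] scale defined by low and high."""
    return (np.asarray(values, dtype=float) - low) / (high - low)


def apply_z_score(values, mean, std):
    """Standardize values using a given mean and standard deviation."""
    return (np.asarray(values, dtype=float) - mean) / std


rng = np.random.default_rng(0)
ages = rng.integers(1, 90, size=2760)  # synthetic ages, NOT the NPDS data

train_idx, valid_idx, test_idx = split_indices(len(ages), rng)

# Fit scaling parameters on the training subset only, then reuse them.
low, high = float(ages[train_idx].min()), float(ages[train_idx].max())
mean, std = float(ages[train_idx].mean()), float(ages[train_idx].std())

age_train_01 = apply_min_max(ages[train_idx], low, high)
age_test_01 = apply_min_max(ages[test_idx], low, high)
age_train_z = apply_z_score(ages[train_idx], mean, std)

print(len(train_idx), len(valid_idx), len(test_idx))
```

Min-max scaling places age on the same 0 to 1 range as the binary symptom features, whereas z-scoring centers it at zero with unit variance; which of the two suits a given model is a modeling choice.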

For model training and validation, researchers employed a Random Forest algorithm, comparing it against deep learning approaches and finding that Random Forest performed better. To address class imbalance, they applied SMOTE prior to model training, generating synthetic samples for the minority class. Feature selection was performed using RFECV to identify the most significant predictive features. The model's performance was assessed using accuracy, recall (sensitivity), and F1-score, with the Random Forest model achieving exceptional values of 99%, 98%, and 98% for training, validation, and test datasets respectively [13].
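The core idea behind SMOTE, generating synthetic minority samples by interpolating between a minority sample and one of its nearest minority neighbours, can be sketched directly in NumPy. This is a simplified illustration, not the implementation used in the study: the function name is hypothetical, the data are random placeholders, and a maintained library implementation would normally be preferable in practice.

```python
import numpy as np


def smote_like_oversample(minority, n_new, k=5, rng=None):
    """Create n_new synthetic rows by interpolating between minority neighbours.

    Requires at least two minority rows. Each synthetic row lies on the line
    segment between a randomly chosen minority row and one of its k nearest
    minority neighbours (Euclidean distance).
    """
    rng = np.random.default_rng() if rng is None else rng
    minority = np.asarray(minority, dtype=float)
    n_rows = len(minority)
    k = min(k, n_rows - 1)

    # Pairwise Euclidean distances within the minority class.
    diffs = minority[:, None, :] - minority[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=2))
    np.fill_diagonal(dists, np.inf)  # a row is never its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]

    base = rng.integers(0, n_rows, size=n_new)
    partner = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))  # interpolation fraction in [0, 1)
    return minority[base] + gap * (minority[partner] - minority[base])


rng = np.random.default_rng(1)
minority_rows = rng.normal(size=(20, 3))  # placeholder minority-class features
synthetic_rows = smote_like_oversample(minority_rows, n_new=50, k=5, rng=rng)
print(synthetic_rows.shape)
```

Two caveats apply to this kind of interpolation. It produces fractional values for binary features, so datasets dominated by binary symptom variables may need a variant designed for categorical data. Oversampling is also normally applied to the training subset only, so that validation and test metrics reflect the real class balance.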

Protocol 2: AI-Driven Drug Formulation Optimization

The development of MTS-004, China's first AI-driven drug to complete Phase III clinical trials, demonstrates an advanced protocol for formulation optimization [14]. This small molecule drug for pseudobulbar affect in ALS patients required specialized formulation due to patient swallowing difficulties. Researchers leveraged an AI nano-delivery platform called NanoForge to design an orally disintegrating tablet formulation [14].

The experimental workflow integrated quantum chemistry and molecular dynamics simulations to predict drug-excipient interactions and generate nano-level formulation optimization plans. The AI platform performed modeling and predictive analysis tasks that reduced the preclinical formulation optimization cycle from the industry average of 1-2 years to just 3 months [14]. The entire development process—from project initiation to completion of Phase III trials—took only 38 months, dramatically faster than industry standards [14].

The clinical validation followed a rigorous double-blind, randomized, placebo-controlled multicenter study design across 48 clinical centers. The trial enrolled 264 subjects with pseudobulbar affect due to ALS or stroke, with efficacy and safety as primary endpoints. The AI-optimized formulation demonstrated significant clinical value by specifically improving swallowing difficulty and reducing complications in this challenging patient population [14].

Comparative Analysis of Leading AI Platforms

Platform Architectures and Capabilities

Table 3: Comparative Analysis of Leading AI Drug Discovery Platforms

| Platform | Core Technology | Key Applications | Reported Outcomes |
|---|---|---|---|
| Insilico Medicine Pharma.AI | Generative adversarial networks (GANs), reinforcement learning, knowledge graphs with 1.9T+ data points [9] | Target identification, generative chemistry, clinical trial prediction | Novel IPF drug candidate in 18 months; first AI-designed drug in clinical trials [11] [9] |
| Recursion OS | Phenom-2 (1.9B parameter ViT), MolPhenix, MolGPS, knowledge graphs, ~65PB data [9] | Phenotypic drug discovery, target deconvolution, biomarker identification | Scaled wet-lab data feeds computational tools for therapeutic insights [9] |
| Iambic Therapeutics | Magnet (generative), NeuralPLexer (structure), Enchant (PK/PD) integrated pipeline [9] | Small molecule design, protein-ligand complex prediction, clinical outcome forecasting | End-to-end in silico candidate prioritization before synthesis [9] |
| Verge Genomics CONVERGE | Human-derived multi-omics data (60TB+), closed-loop ML, human tissue validation [9] | Neurodegenerative disease target identification, translational biomarker discovery | Clinical candidate in under 4 years including target discovery [9] |
| Unlearn Digital Twins | AI-driven disease progression models, clinical trial simulation [12] | Clinical trial optimization, control arm reduction, patient stratification | Reduces trial sizes and costs while maintaining statistical power [12] |

The leading AI platforms share common architectural principles despite their technological diversity. Each integrates multi-modal data at unprecedented scale, employs specialized neural architectures for distinct prediction tasks, and establishes closed-loop learning systems where experimental results continuously refine computational models [9]. For instance, Insilico Medicine's Pharma.AI leverages a novel combination of policy-gradient-based reinforcement learning and generative models, enabling multi-objective optimization to balance parameters such as potency, toxicity, and novelty [9]. Similarly, Recursion's OS platform employs foundation models trained on massive proprietary datasets, including Phenom-2 with 1.9 billion parameters trained on 8 billion microscopy images [9].

Performance Benchmarking Across Therapeutic Areas

The predictive power of AI platforms demonstrates significant variation across therapeutic areas and applications. In oncology, which dominates the AI drug discovery market with approximately 45% share, ML algorithms have shown remarkable efficacy in analyzing patient data to optimize drug design and target identification [10]. For neurological disorders, the fastest-growing therapeutic segment, platforms like Verge Genomics leverage human-derived tissue data to identify clinically viable targets, avoiding animal models that poorly mimic human biology [9]. In infectious diseases, AI platforms have demonstrated accelerated response capabilities, such as identifying repurposed candidates for COVID-19 treatment [11].

The deployment mode also influences platform performance, with cloud-based solutions accounting for approximately 70% of the market due to their ability to manage large datasets and facilitate collaboration [10]. However, hybrid deployment represents the fastest-growing segment, balancing the computational power of the cloud with the security of on-premise systems for sensitive data [10]. Leading pharmaceutical companies are increasingly adopting these technologies, with the pharmaceutical segment holding 50% of the market share in 2024, while AI-focused startups represent the fastest-growing segment [10].

Implementation Challenges and Future Directions

Technical and Operational Barriers

Despite promising results, implementing AI for pharmacological predictions faces significant challenges. The AI skills gap represents a critical bottleneck, with 49% of industry professionals reporting that a shortage of specific skills and talent is the top hindrance to digital transformation [15]. This gap encompasses both technical deficits (machine learning, deep learning, NLP) and domain knowledge shortfalls, with approximately 70% of pharma hiring managers struggling to find candidates with both pharmaceutical expertise and AI skills [15].

Data quality and interoperability remain persistent challenges, as AI models require high-quality, well-structured data to generate reliable predictions [11]. Many organizations struggle with fragmented, siloed data and inconsistent metadata that prevent automation and AI from delivering full value [16]. Additionally, regulatory alignment for AI-driven models continues to evolve, requiring careful validation and documentation to meet regulatory standards [11].

Emerging Solutions and Strategic Approaches

Forward-thinking organizations are addressing these challenges through multiple strategies. Reskilling existing employees has proven cost-effective, with reskilled teams showing a 25% boost in retention and 15% efficiency gains at roughly half the cost of hiring new talent [15]. Companies like Johnson & Johnson have trained 56,000 employees in AI skills, while Bayer partnered with IMD Business School to upskill over 12,000 managers globally [15].

Risk-sharing business models are creating better alignment between AI companies and pharmaceutical partners. In these arrangements, compensation is tied to milestones rather than traditional fee-for-service relationships, making partners true collaborators invested in program success [7]. This approach encourages persistence through difficult challenges and exploration of unconventional approaches.

The emergence of AI translator roles—professionals who bridge biological and computational domains—is helping to facilitate communication between pharmaceutical and computational science communities [12] [15]. These specialists combine domain expertise with technical knowledge to ensure AI solutions address biologically relevant questions with appropriate methodological rigor.

AI and machine learning are fundamentally modernizing pharmacological predictions by enabling more accurate, efficient, and clinically translatable modeling of drug effects. The comparative analysis presented demonstrates consistent advantages of AI-driven approaches over traditional methods across multiple metrics, including development timeline compression (from years to months), cost reduction (approximately 50% savings in early-stage costs), and improved predictive accuracy (up to 98% in toxicity prediction) [8] [13].

The most successful implementations share common characteristics: integration of multi-modal data at scale, closed-loop learning systems that continuously refine models based on experimental feedback, and hybrid expertise combining computational and domain knowledge [9]. As the field evolves, addressing the AI skills gap through reskilling, collaborative partnerships, and new educational models will be essential to fully realize the potential of these technologies [15].

For researchers and drug development professionals, the evidence suggests that AI-driven pharmacological prediction has moved from theoretical promise to practical utility. Platforms from leading companies have demonstrated reproducible success in generating clinical candidates across multiple therapeutic areas, with performance advantages that are reshaping competitive dynamics in pharmaceutical R&D [7] [9]. While challenges remain in data quality, model interpretability, and regulatory alignment, the accelerating adoption of these technologies suggests they will become increasingly central to pharmacological research and development in the coming years.

Key Molecular Descriptors and Solvation Parameters in LSER Models

Linear Solvation Energy Relationship (LSER) models are powerful computational tools widely used in medicinal chemistry, environmental science, and drug development to predict the physicochemical behavior and biological activity of compounds. These models establish quantitative relationships between molecular descriptors and observed properties through linear free-energy relationships, providing a mechanistic understanding of solute-solvent interactions across different phases [17] [1]. The predictive power of LSER approaches stems from their ability to deconstruct complex molecular interactions into discrete, quantifiable parameters that collectively describe a compound's behavior in various environments. For researchers investigating novel compounds, LSER models offer a valuable framework for forecasting partitioning behavior, solubility, and binding affinities prior to resource-intensive synthesis and experimental testing, thereby accelerating the compound optimization pipeline [18] [19].

Within the broader thesis of evaluating LSER predictive power for novel compounds research, this guide objectively compares the performance of different descriptor sets and prediction methodologies, providing researchers with evidence-based insights for selecting appropriate tools for their specific applications. As the chemical space explored in drug discovery continues to expand toward more complex structures, understanding the capabilities and limitations of various LSER implementations becomes increasingly critical for efficient research planning and resource allocation [18].

Core Molecular Descriptors in LSER Models

Fundamental Descriptor Definitions and Solvation Parameters

LSER models characterize molecules using a set of six fundamental molecular descriptors that collectively represent the dominant interaction forces governing solvation and partitioning behavior. These descriptors are incorporated into two primary LSER equations for different phase transfer processes [1]:

For solute transfer between two condensed phases: $\log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x$

For solute transfer between gas and condensed phases: $\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$

The core molecular descriptors used in these equations are defined in the table below:

Table 1: Fundamental LSER Molecular Descriptors and Their Physicochemical Significance

| Descriptor | Symbol | Molecular Interaction Represented | Experimental Determination |
|---|---|---|---|
| Excess molar refraction | E | Polarizability from n- and π-electrons | Derived from refractive index measurements |
| Dipolarity/Polarizability | S | Dipole-dipole and dipole-induced dipole interactions | Solvatochromic shift measurements |
| Hydrogen bond acidity | A | Hydrogen bond donor strength | Measurement of complexation equilibria |
| Hydrogen bond basicity | B | Hydrogen bond acceptor strength | Measurement of complexation equilibria |
| McGowan characteristic volume | Vx | Molecular size and cavity formation energy | Calculated from molecular structure |
| Hexadecane-air partition coefficient | L | Dispersion interactions and cavity formation | Gas-liquid chromatography measurements |

These descriptors provide a comprehensive framework for quantifying the key interactions that govern a molecule's partitioning behavior between different phases, including hydrophobic effects, hydrogen bonding, and polar interactions [1] [20]. The coefficients in the LSER equations (e, s, a, b, v, l) are system-specific parameters that reflect the complementary properties of the phases between which solutes are transferring, while the descriptors (E, S, A, B, Vx, L) are intrinsic properties of the solute molecules [1].
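The split between system coefficients and solute descriptors can be made concrete with a minimal sketch that evaluates both LSER equations. The class name `SoluteDescriptors`, the function names, and all numeric values are hypothetical placeholders chosen for illustration; they are not fitted coefficients or measured descriptors from any cited study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    """Solute-specific descriptors (intrinsic to the molecule)."""
    E: float   # excess molar refraction
    S: float   # dipolarity/polarizability
    A: float   # hydrogen bond acidity
    B: float   # hydrogen bond basicity
    Vx: float  # McGowan characteristic volume
    L: float   # hexadecane-air partition coefficient term


def log_p_condensed(coef: dict, d: SoluteDescriptors) -> float:
    """log(P) = c + e*E + s*S + a*A + b*B + v*Vx (two condensed phases)."""
    return (coef["c"] + coef["e"] * d.E + coef["s"] * d.S
            + coef["a"] * d.A + coef["b"] * d.B + coef["v"] * d.Vx)


def log_k_gas(coef: dict, d: SoluteDescriptors) -> float:
    """log(KS) = c + e*E + s*S + a*A + b*B + l*L (gas to condensed phase)."""
    return (coef["c"] + coef["e"] * d.E + coef["s"] * d.S
            + coef["a"] * d.A + coef["b"] * d.B + coef["l"] * d.L)


# Hypothetical solute and system, for illustration only.
solute = SoluteDescriptors(E=0.8, S=0.9, A=0.3, B=0.5, Vx=1.0, L=4.0)
system = {"c": 0.1, "e": 0.5, "s": -1.0, "a": 0.0, "b": -3.5, "v": 3.8}

print(round(log_p_condensed(system, solute), 3))
```

The same solute object can be reused with a different coefficient dictionary to represent a different pair of phases, which mirrors how the descriptors stay fixed while the system parameters change.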

Alternative Parameterization Schemes: Partial Solvation Parameters

An alternative parameterization known as Partial Solvation Parameters (PSP) has been developed to bridge LSER descriptors with equation-of-state thermodynamics, potentially expanding their application domain [17] [1]. The PSP framework divides intermolecular interactions into four categories:

- Dispersion PSP (σd): Reflects weak dispersive interactions

- Polar PSP (σp): Collective Keesom-type and Debye-type polar interactions

- Acidic PSP (σa): Hydrogen-bond donating ability

- Basic PSP (σb): Hydrogen-bond accepting ability

This scheme maintains a direct relationship with the cohesive energy density through the equation $\mathrm{ced} = \sigma_d^2 + \sigma_p^2 + \sigma_a^2 + \sigma_b^2 = \delta_{total}^2$, where $\delta_{total}$ is the total solubility parameter, providing a thermodynamic foundation that facilitates information exchange between LSER databases and other molecular thermodynamics approaches [17] [1]. The hydrogen-bonding PSPs are particularly valuable for estimating the free energy change (ΔGhb), enthalpy change (ΔHhb), and entropy change (ΔShb) upon hydrogen bond formation, offering additional insights into specific molecular interactions [1].
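As a small worked illustration of the sum-of-squares relation, the sketch below computes the cohesive energy density and the total solubility parameter from four partial parameters. The function names and the input values are assumptions made for the example, and the units are taken to be consistent (for instance MPa^0.5 for each partial parameter).

```python
import math


def cohesive_energy_density(psp_d: float, psp_p: float,
                            psp_a: float, psp_b: float) -> float:
    """Sum of squared dispersion, polar, acidic and basic contributions."""
    return psp_d ** 2 + psp_p ** 2 + psp_a ** 2 + psp_b ** 2


def total_solubility_parameter(psp_d: float, psp_p: float,
                               psp_a: float, psp_b: float) -> float:
    """Square root of the cohesive energy density."""
    return math.sqrt(cohesive_energy_density(psp_d, psp_p, psp_a, psp_b))


# Hypothetical partial parameters for a weakly polar, non-hydrogen-bonding solute.
print(total_solubility_parameter(15.0, 8.0, 0.0, 0.0))  # 17.0
```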

Comparative Analysis of Descriptor Prediction Methodologies

Performance Evaluation of Computational Approaches

The prediction of LSER molecular descriptors for novel compounds represents a significant challenge in computational chemistry, particularly for complex molecules with multiple functional groups. Several computational approaches have been developed to address this challenge, each with distinct strengths and limitations. The following table summarizes the performance characteristics of major prediction methodologies:

Table 2: Performance Comparison of LSER Descriptor Prediction Methods

| Methodology | Principle | RMSE Ranges | Applicability Domain | Key Limitations |
|---|---|---|---|---|
| Deep Neural Networks (DNN) | Graph-based representation learning | 0.11-0.46 across different descriptors [18] | Broad, including complex multi-functional compounds | Requires substantial training data; computational intensity |
| Traditional QSPR/Fragmental Methods | Group contribution and linear regression | Varies by descriptor complexity [18] | Limited to simpler chemical structures | Problematic for complex structures with multiple functional groups [18] |
| Quantum Chemical Calculations | Density functional theory (DFT) computations | Dependent on theoretical level [21] | Theoretically universal | Computationally expensive; expertise required |
| k-Nearest Neighbors (kNN) | Similarity-based descriptor assignment | Comparable to ML for congeneric series [19] | Limited to chemical neighborhoods with known descriptors | Fails for structurally novel compounds |

Recent advances in deep learning have demonstrated particular promise for descriptor prediction. DNN models based on graph representations of chemicals achieve root mean square errors (RMSE) ranging between 0.11 and 0.46 across different solute descriptors, performing comparably to established commercial software like ACD/Absolv and the online platform LSERD [18]. However, it is important to note that all prediction tools show decreased performance for larger, more complex chemical structures, suggesting that current methodologies have not fully addressed the challenges posed by molecular complexity [18].
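The similarity-based (kNN) row of the comparison can be illustrated with a minimal sketch. It assumes that each compound is represented as a set of fragment labels and that similarity is the Tanimoto coefficient; both choices, the function names, and the reference values are hypothetical and are not taken from the cited tools.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto similarity between two fragment sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def knn_descriptor(query: set, reference: list, k: int = 2):
    """Estimate a descriptor as the mean over the k most similar references.

    reference is a list of (fragment_set, descriptor_value) pairs.
    Returns (estimate, best_similarity); a low best_similarity signals that
    the query lies outside the neighborhood of known compounds.
    """
    ranked = sorted(reference,
                    key=lambda item: tanimoto(query, item[0]),
                    reverse=True)
    top = ranked[:k]
    estimate = sum(value for _, value in top) / len(top)
    return estimate, tanimoto(query, ranked[0][0])


# Hypothetical reference compounds with a made-up hydrogen bond acidity value.
reference = [
    ({"aromatic_ring", "OH"}, 0.60),
    ({"aromatic_ring", "OH", "CH3"}, 0.57),
    ({"CH3", "C=O"}, 0.04),
]
query = {"aromatic_ring", "OH", "Cl"}
print(knn_descriptor(query, reference, k=2))
```

Returning the best similarity alongside the estimate makes the stated limitation visible: for a structurally novel query the estimate is still produced, but the similarity score shows that it rests on distant neighbors.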

Experimental Validation of Predictive Power

Rigorous validation of LSER prediction methods requires assessment against experimental data across diverse compound classes. Large-scale benchmarking studies involving 367 target-based compound activity classes from medicinal chemistry reveal important insights into the relative performance of different approaches [19]. These studies demonstrate that machine learning methods, particularly support vector regression (SVR), generally achieve the highest accuracy with mean absolute error (MAE) values typically below 1.0 log unit for logarithmic potency predictions [19].

However, simpler control methods including k-nearest neighbors (kNN) analysis often approach or match the performance of more complex machine learning methods, with differences in median MAE values typically around 0.1 or less [19]. This surprising resilience of simple prediction methods highlights the challenges in accurately assessing the relative performance of computational approaches and suggests that conventional benchmark settings may be insufficient for proper method comparison [19].

For partition coefficient predictions, which are crucial for understanding compound behavior in biological and environmental systems, both machine learning and traditional methods demonstrate similar performance, with RMSE values of approximately 1.0 log unit for octanol-water partition coefficients (Kow) across 12,010 chemicals and ~1.3 log units for water-air partition coefficients (Kwa) across 696 chemicals [18]. This consistent performance across diverse chemical classes and property types supports the robustness of LSER-based prediction frameworks.

Experimental Protocols for LSER Applications

Chromatographic System Characterization

Liquid chromatography provides a valuable experimental system for validating LSER descriptors and studying molecular interactions. A streamlined protocol for characterizing chromatographic systems using LSER principles involves the following steps [22]:

1. Column Conditioning: Equilibrate the HPLC column with the mobile phase (typically 50:50 v/v methanol/water or acetonitrile/water) at the desired flow rate (typically 1.0 mL/min) until a stable baseline is achieved.

2. Dead Time Determination: Inject a non-retained compound (such as sodium nitrate for reversed-phase systems) to determine the column hold-up time (t0).

3. Retention Factor Measurement: Separately inject a set of 40-50 reference compounds with known LSER descriptors, ensuring coverage of diverse molecular interactions. Measure the retention time (tR) of each compound and calculate its retention factor using k = (tR - t0)/t0.

4. LSER Model Construction: Perform multiple linear regression analysis using the Abraham equation $\log k = c + eE + sS + aA + bB + vV_x$, where the lower-case coefficients are system parameters that characterize the stationary phase properties [20].

5. System Comparison: Compare the obtained coefficients (e, s, a, b, v) across different stationary phases to understand their relative selectivity and interaction characteristics.

This approach has been successfully applied to characterize diverse stationary phases including octadecyl, alkylamide, cholesterol, alkyl-phosphate, and phenyl-functionalized materials, revealing that molecular volume and hydrogen bond acceptor basicity are typically the most important parameters influencing retention [20]. The LSER coefficients further demonstrate dependency on the type of organic modifier used in the mobile phase, providing insights into system optimization for specific separation needs [20].
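The retention-factor and regression steps of this protocol can be sketched in plain Python. This is a minimal illustration under stated assumptions: ordinary least squares is solved through the normal equations with a small Gaussian elimination routine, the function names are hypothetical, and the descriptor rows and coefficients are synthetic values invented to check that the fit recovers them. A real calibration would use the 40-50 measured reference compounds.

```python
import math


def retention_factor(t_r: float, t_0: float) -> float:
    """k = (tR - t0) / t0."""
    return (t_r - t_0) / t_0


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_lser(descriptors, log_k):
    """Least-squares fit of log k = c + eE + sS + aA + bB + vV.

    descriptors: rows of [E, S, A, B, V]; returns [c, e, s, a, b, v].
    """
    design = [[1.0] + list(row) for row in descriptors]
    p = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(p)]
           for i in range(p)]
    xty = [sum(r[i] * y for r, y in zip(design, log_k)) for i in range(p)]
    return solve_linear(xtx, xty)


# Synthetic check: generate log k from known coefficients, then refit.
true_coef = [0.2, 0.3, -0.5, -0.4, -1.8, 1.5]  # hypothetical c, e, s, a, b, v
rows = [
    [0.0, 0.0, 0.0, 0.0, 0.5],
    [1.0, 0.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 0.0, 0.0, 1.5],
    [0.0, 0.0, 1.0, 0.0, 2.0],
    [0.0, 0.0, 0.0, 1.0, 2.5],
    [1.0, 1.0, 1.0, 1.0, 3.0],
    [0.5, 0.2, 0.1, 0.7, 0.8],
]
log_k = [true_coef[0] + sum(c * x for c, x in zip(true_coef[1:], row))
         for row in rows]
fitted = fit_lser(rows, log_k)

print(retention_factor(6.0, 1.5))  # 3.0
print(round(math.log10(retention_factor(6.0, 1.5)), 3))
```

With real data the reference set has to span all five descriptors independently; if two descriptors are strongly collinear across the chosen compounds, the normal equations become ill-conditioned and the fitted coefficients lose their physical meaning.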

Validation Criteria for QSAR/LSER Models

Proper validation is essential for ensuring the reliability of LSER models, particularly when applied to novel compounds. Based on comprehensive assessments of QSAR model validation, the following criteria should be employed [23]:

External Validation: Split the dataset into training (typically 70-80%) and test (20-30%) sets before model development. Use only the training set for model construction and reserve the test set for independent validation.

Statistical Metrics: Calculate multiple validation metrics including:

- Coefficient of determination (r²) for both training and test sets

- Root mean square error (RMSE)

- Mean absolute error (MAE)

- Coefficient of determination for regression through the origin (r₀²) between observed and predicted values

Applicability Domain Assessment: Define the chemical space within which the model provides reliable predictions based on descriptor ranges of the training set.

Y-Randomization: Verify that the model performance significantly exceeds that obtained with randomly shuffled response values.

Studies have shown that relying solely on the coefficient of determination (r²) is insufficient to indicate model validity, as some models with acceptable r² values may fail other validation criteria [23]. The established validation criteria have specific advantages and disadvantages that should be considered in comprehensive QSAR/LSER studies, and no single method is sufficient to demonstrate model validity [23].
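The external-validation, metric, and Y-randomization criteria listed above can be sketched with the standard library alone. The helper names are hypothetical, and the one-descriptor straight-line model used for the Y-randomization demonstration is an assumption made to keep the example short; it stands in for whatever LSER or QSAR model is being validated.

```python
import math
import random


def r_squared(obs, pred):
    """Coefficient of determination of predictions against observations."""
    mean_obs = sum(obs) / len(obs)
    ss_res = sum((o - p) ** 2 for o, p in zip(obs, pred))
    ss_tot = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - ss_res / ss_tot


def rmse(obs, pred):
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(obs, pred)) / len(obs))


def mae(obs, pred):
    return sum(abs(o - p) for o, p in zip(obs, pred)) / len(obs)


def train_test_split(items, test_fraction=0.2, seed=0):
    """Shuffle once, then hold out a fraction before any model fitting."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_line_r2(x, y):
    """Fit y = b0 + b1*x by least squares and return the training r squared."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    return r_squared(y, [intercept + slope * xi for xi in x])


def y_randomization(fit_and_score, x, y, n_rounds=20, seed=0):
    """Scores of models refitted on shuffled responses."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        y_shuffled = list(y)
        rng.shuffle(y_shuffled)
        scores.append(fit_and_score(x, y_shuffled))
    return scores


x = [float(i) for i in range(10)]
y = [2.0 * xi + 1.0 for xi in x]
real_score = fit_line_r2(x, y)
random_scores = y_randomization(fit_line_r2, x, y)
print(real_score, max(random_scores))
```

A model passes the Y-randomization check only if its real score clearly exceeds the distribution of shuffled scores; how large that margin must be is a judgment left to the study design.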

Research Reagent Solutions for LSER Studies

Table 3: Essential Research Reagents and Materials for LSER Experimental Characterization

| Reagent/Material | Specifications | Research Function | Application Notes |
|---|---|---|---|
| Reference Compound Sets | 40-50 compounds with predefined descriptors [20] | LSER model calibration | Must cover diverse molecular interactions: alkanes, ketones, aromatic compounds, H-bond donors/acceptors |
| Stationary Phases | Octadecyl, alkylamide, cholesterol, phenyl, alkyl-phosphate [20] | Chromatographic characterization | Functionalized on same silica batch for valid comparison; different hydrophobicities and selectivities |
| Mobile Phase Modifiers | HPLC-grade methanol and acetonitrile [20] | Solvation property modulation | Different selectivity effects; acetonitrile offers different hydrogen bonding interactions vs. methanol |
| Abraham Descriptor Database | Experimental values for ~8,000 compounds [18] | Model training and validation | Available through LSERD online platform; essential for prediction method development |
| Column Characterization Standards | Alkyl ketone homologues (C₃-C₆) [22] | Determination of hold-up volume and cavity term | Enables calculation of system volume contribution in LSER models |

Decision Framework and Research Recommendations

The following diagram illustrates a systematic workflow for selecting appropriate LSER approaches based on research objectives and compound characteristics:

[Figure: LSER Approach Selection Workflow]

Based on the comprehensive comparison of LSER methodologies and their performance characteristics, the following research recommendations emerge:

For Novel Compound Research: Implement DNN-based descriptor prediction as a complementary approach alongside traditional methods, particularly for complex chemical structures with multiple functional groups where fragment-based methods struggle [18].

For Method Validation: Employ multiple validation criteria beyond simple correlation coefficients, as studies have demonstrated that r² values alone are insufficient to establish model validity [23]. Include external validation, applicability domain assessment, and statistical significance testing.

For High-Throughput Applications: Leverage in silico package models that combine density functional theory computations with QSPR approaches to derive LSER solute parameters without instrumental determinations, enabling large-scale screening of novel compound libraries [21].

For Chromatographic Applications: Utilize fast characterization methods based on carefully selected compound pairs that isolate specific molecular interactions, reducing the number of required measurements from extensive compound sets to a minimal number of diagnostic pairs [22].
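The applicability-domain assessment recommended for method validation can be sketched as a simple descriptor-range check. This is one of several possible definitions of the domain and is assumed here because it follows directly from "descriptor ranges of the training set"; the function names and values are hypothetical.

```python
def descriptor_ranges(training_rows):
    """Min and max of each descriptor over the training compounds.

    training_rows is a list of dicts that share the same descriptor keys.
    """
    keys = training_rows[0].keys()
    return {k: (min(r[k] for r in training_rows),
                max(r[k] for r in training_rows)) for k in keys}


def outside_domain(compound, ranges):
    """Names of descriptors for which the compound leaves the training range."""
    return [k for k, (low, high) in ranges.items()
            if not low <= compound[k] <= high]


# Hypothetical training set and query compound.
training = [
    {"E": 0.2, "S": 0.4, "A": 0.0, "B": 0.1, "Vx": 0.7},
    {"E": 1.0, "S": 1.1, "A": 0.5, "B": 0.6, "Vx": 1.6},
]
novel = {"E": 0.5, "S": 0.9, "A": 0.2, "B": 0.9, "Vx": 1.2}
print(outside_domain(novel, descriptor_ranges(training)))  # ['B']
```

An empty list means the compound sits inside the box spanned by the training descriptors; a non-empty list flags the prediction as an extrapolation along the named axes.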

The integration of LSER approaches with emerging machine learning technologies and the development of hybrid models that combine theoretical descriptors with experimental parameters represent promising avenues for enhancing predictive power in novel compound research [18] [1]. As chemical exploration continues to advance toward increasingly complex molecular structures, these integrated approaches will play a crucial role in accelerating the discovery and development of new therapeutic agents and functional materials.

From Traditional QSAR to AI-Enhanced Predictive Frameworks

The field of predictive modeling in chemistry and drug discovery has undergone a remarkable transformation, evolving from traditional Quantitative Structure-Activity Relationship (QSAR) approaches to sophisticated artificial intelligence (AI)-enhanced frameworks. This evolution represents a paradigm shift from linear statistical models to complex, multi-parameter optimization systems capable of navigating vast chemical spaces with unprecedented accuracy. The journey began with classical QSAR methodologies, which established fundamental relationships between molecular descriptors and biological activity or physicochemical properties using statistical techniques like multiple linear regression and partial least squares analysis [24]. These traditional models provided valuable insights but faced limitations in handling complex, non-linear relationships and high-dimensional data.

The integration of AI and machine learning (ML) has addressed these limitations, enabling researchers to develop predictive models with enhanced capability for virtual screening, toxicity prediction, and molecular design [25] [26]. Modern AI-enhanced QSAR frameworks leverage deep learning architectures, including graph neural networks and generative models, to extract complex patterns from chemical data that were previously inaccessible through conventional approaches. This evolution is particularly evident in specialized applications such as Linear Solvation Energy Relationship (LSER) modeling, where AI augmentation has significantly expanded predictive power for novel compounds by incorporating diverse molecular descriptors and interaction parameters [27] [28]. The continuous refinement of these computational tools has positioned AI-enhanced QSAR as a cornerstone in contemporary drug discovery and environmental chemistry, enabling more efficient and targeted research outcomes.

Traditional QSAR Foundations and LSER Approaches

Fundamental Principles and Methodologies

Traditional QSAR modeling operates on the fundamental principle that molecular structure quantitatively determines biological activity and physicochemical properties. These relationships are established using statistical methods that correlate molecular descriptors with measured endpoints, creating predictive models that can estimate activities for untested compounds [24]. The molecular descriptors encompass a wide range of characteristics, including lipophilicity (logP), hydrophobicity (logD), water solubility (logS), acid-base dissociation constant (pKa), dipole moment, molecular weight, molar volume, and various topological indices [29]. These parameters numerically encode essential chemical information that influences how molecules interact with biological systems or environmental substrates.

Linear Solvation Energy Relationships (LSERs) represent a specialized category of QSAR that employs solvation parameters to predict partitioning behavior and interaction potentials. Traditional LSER models have been extensively used to predict distribution coefficients (logKd) and understand molecular interactions in environmental systems [27] [28]. The strength of LSER approaches lies in their ability to provide mechanistic insights into interaction forces governing adsorption and partitioning processes, including hydrogen bonding, polar interactions, and hydrophobic effects [28]. These models have proven particularly valuable in environmental chemistry for predicting the behavior of contaminants, such as pharmaceuticals and personal care products (PPCPs), with environmental substrates like microplastics [27].

Experimental Protocols and Validation

The development of traditional QSAR and LSER models relies on robust experimental protocols to generate high-quality training data. For environmental applications, such as studying contaminant adsorption on microplastics, a typical experimental workflow involves several standardized steps. First, researchers characterize the adsorbent materials by measuring specific surface area, oxygen-containing functional groups (using carbonyl index and O/C ratio), and crystallinity through techniques like FTIR, XPS, and XRD [27]. Simultaneously, carefully selected organic contaminants with diverse physicochemical properties are prepared as stock solutions in appropriate solvents.

The core experimental phase involves batch sorption experiments, where constant amounts of microplastics are combined with contaminant solutions of varying concentrations in sealed containers. These systems are agitated at constant temperature until equilibrium is reached, typically from several hours to days depending on the compounds [27] [28]. After phase separation, the equilibrium concentration of contaminants in the aqueous phase is quantified using analytical techniques such as HPLC-UV or LC-MS, enabling calculation of the adsorption capacity. The experimental data is then fitted to isotherm models like Langmuir, Freundlich, or Dubinin-Astakhov (DA) to obtain key parameters including maximum adsorption capacity (Q0) and adsorption affinity (E) [27].
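The isotherm-fitting step can be illustrated with the Freundlich model, chosen here only because it linearizes cleanly; the Langmuir and Dubinin-Astakhov models need nonlinear fitting and are not covered by this sketch. It assumes the form q = K_F · C^N, and the function name and data are hypothetical.

```python
import math


def fit_freundlich(c_eq, q_eq):
    """Fit q = K_F * C**N by least squares on log10 q versus log10 C.

    c_eq: equilibrium aqueous concentrations; q_eq: sorbed amounts.
    Returns (K_F, N).
    """
    x = [math.log10(c) for c in c_eq]
    y = [math.log10(q) for q in q_eq]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    return 10.0 ** intercept, slope


# Synthetic data generated from K_F = 2.0 and N = 0.7, for illustration only.
concentrations = [1.0, 10.0, 100.0, 1000.0]
sorbed = [2.0 * c ** 0.7 for c in concentrations]
k_f, n_exp = fit_freundlich(concentrations, sorbed)
print(round(k_f, 3), round(n_exp, 3))
```

Fitting in log space weights relative rather than absolute errors, which changes the fitted parameters when the data are noisy; that is a design choice of this sketch, not a property of the cited studies.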

For LSER development, these experimentally determined distribution coefficients are correlated with solute descriptors (e.g., Abraham descriptors or Kamlet-Taft solvatochromic parameters) that quantify specific molecular interactions [28]. The resulting models are rigorously validated using statistical measures including R² (coefficient of determination), cross-validated R² (Q²), and root mean square error (RMSE) to ensure predictive reliability [28] [30].
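The cross-validated Q² mentioned here can be sketched with a leave-one-out loop. This example assumes the common definition Q² = 1 − PRESS/SS_tot with the total sum of squares taken about the mean of all observations; other conventions exist. A one-descriptor line fit stands in for the full LSER regression, and the data are made up.

```python
def fit_line(x, y):
    """Least-squares intercept and slope for y = b0 + b1*x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return my - slope * mx, slope


def q2_leave_one_out(x, y):
    """Q2 = 1 - PRESS / SS_tot, refitting with each point held out in turn."""
    mean_y = sum(y) / len(y)
    press = 0.0
    for i in range(len(x)):
        b0, b1 = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (b0 + b1 * x[i])) ** 2
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - press / ss_tot


# Hypothetical descriptor values and log Kd observations.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [1.1, 1.9, 3.2, 3.9, 5.1]
print(round(q2_leave_one_out(x, y), 3))
```

Because each prediction comes from a model that never saw the held-out point, Q² is always at or below the training R² for the same data and gives a more conservative picture of predictive reliability.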

Table 1: Key Physicochemical Parameters in Traditional QSAR

| Parameter | Symbol | Role in QSAR | Determination Methods |
|---|---|---|---|
| Lipophilicity | logP | Predicts membrane permeability and bioavailability | Octanol-water partitioning, computational estimation |
| Hydrophobicity | logD | Indicates pH-dependent partitioning | Partition coefficients measured at a defined pH |
| Water Solubility | logS | Influences absorption and distribution | Experimental measurement, QSPR models |
| Acid Dissociation Constant | pKa | Affects ionization state and solubility | Potentiometric titration, spectral methods |
| Molar Refractivity | MR | Correlates with steric and polarizability effects | Calculated from molecular structure |
| Topological Indices | Various | Encode structural complexity | Graph theory calculations |

AI-Enhanced QSAR Frameworks: Methodological Advances

Machine Learning and Deep Learning Integration

The integration of machine learning (ML) and deep learning (DL) algorithms has fundamentally transformed QSAR modeling capabilities, enabling accurate predictions for complex, non-linear relationships that challenged traditional approaches. ML methods such as Random Forests (RF), Support Vector Machines (SVM), and k-Nearest Neighbors (kNN) have demonstrated strong performance in handling high-dimensional descriptor spaces and identifying subtle patterns in bioactivity data [24]. These algorithms excel at virtual screening and toxicity prediction tasks where multiple molecular descriptors interact in non-additive ways. A further advantage of some ML approaches, notably tree ensembles such as RF, is built-in feature ranking, which prioritizes the most relevant molecular descriptors and mitigates the impact of noisy or redundant variables [24].

Beyond conventional ML, deep learning architectures including Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Graph Neural Networks (GNNs) have emerged as powerful tools for extracting complex features directly from molecular structures [31] [24]. These networks automatically learn hierarchical representations of molecules, eliminating the need for manual descriptor engineering while often achieving superior predictive accuracy. Particularly noteworthy are graph-based neural networks that operate directly on molecular graph representations, effectively capturing atomic connectivity and three-dimensional spatial relationships that are crucial for predicting biological activity and molecular properties [24]. The capacity of DL models to integrate diverse data types, including structural, physicochemical, and bioassay data, has significantly expanded the scope and accuracy of modern QSAR predictions.
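To make the idea of operating directly on a molecular graph concrete, the sketch below performs a single neighborhood-aggregation (message-passing) step followed by a sum readout. It is deliberately simplified: there are no learned weights, nonlinearities, bond features, or 3D coordinates, so it shows only the data flow of a graph neural network layer, not a trained model. The function names and the one-hot atom features are assumptions.

```python
def message_passing_step(features, neighbors):
    """Update each atom as its own features plus the sum over its neighbors."""
    updated = {}
    for atom, own in features.items():
        aggregated = list(own)
        for nb in neighbors[atom]:
            aggregated = [a + b for a, b in zip(aggregated, features[nb])]
        updated[atom] = aggregated
    return updated


def readout(features):
    """Sum atom vectors into one molecule-level vector."""
    size = len(next(iter(features.values())))
    return [sum(vec[i] for vec in features.values()) for i in range(size)]


# Heavy-atom graph of ethanol: C0 - C1 - O2, features are [is_carbon, is_oxygen].
features = {0: [1, 0], 1: [1, 0], 2: [0, 1]}
neighbors = {0: [1], 1: [0, 2], 2: [1]}

step1 = message_passing_step(features, neighbors)
print(step1)           # {0: [2, 0], 1: [2, 1], 2: [1, 1]}
print(readout(step1))  # [5, 2]
```

After one step the two carbons, which started with identical features, carry different vectors because only one of them is bonded to oxygen; stacking further steps lets information travel across longer paths in the molecule.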

Generative AI and Advanced Architectures

Generative AI models represent the cutting edge of AI-enhanced QSAR frameworks, enabling not just prediction but de novo molecular design with optimized properties. Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) have demonstrated remarkable capability to explore vast chemical spaces and propose novel compounds with desired characteristics [31] [24]. These models learn the underlying probability distribution of chemical space from existing compound libraries and can generate new molecular structures with specific target properties, such as high binding affinity or optimal ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles [31].

Advanced architectures like Reinforcement Learning (RL) frameworks further enhance generative capabilities by incorporating reward functions that guide molecular generation toward multi-parameter optimization goals [31]. For instance, RL agents can be trained to modify molecular structures iteratively while maximizing composite rewards based on predicted activity, synthesizability, and safety profiles. This approach has enabled the development of AI-designed drug candidates such as DSP-1181 (a serotonin receptor agonist for obsessive-compulsive disorder) and ISM001-055 (a TNIK inhibitor for idiopathic pulmonary fibrosis), both of which have entered clinical trials [26]. The integration of transformer architectures originally developed for natural language processing has also shown promise in molecular design, treating Simplified Molecular-Input Line-Entry System (SMILES) representations as chemical "sentences" to be generated and optimized [24].
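Treating SMILES strings as chemical "sentences" starts with splitting them into tokens. The sketch below shows one simple regular-expression tokenizer; the pattern is an illustrative assumption that covers bracket atoms, the two-letter halogens Br and Cl, ring-bond digits, and common bond and branch symbols. It does not validate chemistry and is not the tokenizer of any cited model.

```python
import re

# Order matters: bracket atoms and two-letter halogens are tried before
# single letters so that "Cl" is not split into "C" and "l".
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+()/\\.@:~*$]"
)


def tokenize_smiles(smiles: str) -> list:
    """Split a SMILES string into tokens; raise if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Cl"))
# ['C', 'C', '(', '=', 'O', ')', 'Cl']
print(tokenize_smiles("c1ccccc1[N+](=O)[O-]"))
```

The resulting token list can be mapped to integer indices and fed to a sequence model in the same way as words in a sentence.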

Table 2: Comparison of AI Approaches in QSAR Modeling

| AI Method | Key Features | QSAR Applications | Advantages | Limitations |
|---|---|---|---|---|
| Random Forests | Ensemble decision trees, feature importance | Virtual screening, toxicity prediction | Handles noisy data, interpretable | Limited extrapolation capability |
| Support Vector Machines | Maximum margin hyperplanes | Classification, activity prediction | Effective in high-dimensional spaces | Memory-intensive for large datasets |
| Neural Networks | Multi-layer perceptrons | Activity and property prediction | Universal approximators | Black box, requires large data |
| Graph Neural Networks | Graph-structured data processing | Molecular property prediction | Captures structural relationships | Computationally intensive |
| Generative Adversarial Networks | Generator-discriminator competition | De novo molecular design | Explores novel chemical space | Training instability challenges |

Comparative Analysis: Predictive Performance Evaluation

Quantitative Performance Metrics

Direct comparison of traditional and AI-enhanced QSAR frameworks reveals significant differences in predictive performance across various chemical domains. In environmental applications, traditional LSER models for predicting organic compound adsorption on microplastics typically achieve moderate to high accuracy, with reported R² values ranging from 0.83 to 0.96 for specific polymer types [28]. For instance, a recent LSER model developed for predicting pharmaceutical adsorption on various microplastics demonstrated good performance but required careful parameterization for each polymer type and aging condition [27]. The accuracy of these traditional models is often limited by their reliance on linear free-energy relationships and their inability to fully capture complex, multi-mechanism interactions, especially for structurally diverse compound libraries.

In contrast, AI-enhanced QSAR frameworks consistently demonstrate superior predictive capability, with R² values frequently exceeding 0.9 even for highly diverse chemical datasets [24]. Modern deep learning models have shown particular strength in predicting complex endpoints like drug-target interactions, toxicity, and multi-parameter optimization objectives where multiple nonlinear relationships interact [31] [26]. The performance advantage of AI approaches becomes increasingly pronounced as chemical space diversity expands, with studies reporting 20-30% improvements in prediction accuracy compared to traditional methods for heterogeneous compound libraries [24]. This enhanced performance comes with increased computational requirements but offers substantial returns in predictive reliability for novel compound evaluation.

Mechanistic Interpretability vs. Predictive Power

A fundamental trade-off emerges when comparing traditional and AI-enhanced approaches: mechanistic interpretability versus predictive power. Traditional LSER models provide transparent, chemically intuitive insights into molecular interactions by explicitly quantifying contributions from specific mechanisms like hydrogen bonding, polar interactions, and hydrophobic effects [28]. For example, LSER studies on microplastic adsorption have clearly demonstrated how UV aging increases the importance of hydrogen bonding interactions by introducing oxygen-containing functional groups to polymer surfaces [28]. This mechanistic clarity is invaluable for guiding molecular design and understanding environmental processes.

AI-enhanced models, particularly deep learning approaches, often function as "black boxes" with superior predictive capability but limited direct interpretability [24] [25]. To address this limitation, researchers have developed model interpretation techniques including SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) that help elucidate feature importance in complex AI models [24]. Hybrid approaches that combine AI prediction with mechanistic insights are emerging as particularly powerful solutions, such as the DA-LSER model that integrates the Dubinin-Astakhov isotherm with LSER parameters to predict pharmaceutical adsorption on microplastics while maintaining interpretability of interaction mechanisms [27]. These hybrid frameworks represent a promising direction for balancing the competing demands of accuracy and understanding in predictive modeling.

Experimental Data and Case Studies

Environmental Chemistry Applications

The application of traditional and AI-enhanced predictive frameworks in environmental chemistry provides compelling evidence of their respective capabilities and limitations. A recent study investigating the adsorption of organic contaminants on pristine and aged polyethylene (PE) microplastics demonstrated how traditional LSER approaches can successfully predict distribution coefficients while revealing important mechanistic insights [28]. The research established that while hydrophobic interactions dominated for pristine PE, UV-aging introduced oxygen-containing functional groups that significantly enhanced the role of hydrogen bonding and polar interactions in the adsorption process [28]. The resulting poly-parameter linear free-energy relationship (pp-LFER) model achieved good predictive accuracy (R² = 0.96 for UV-aged PE) while providing chemically meaningful system parameters that illuminated the molecular-level changes induced by environmental weathering.

Building on this foundation, researchers developed a hybrid DA-LSER model that combined the Dubinin-Astakhov model with LSER parameters to predict adsorption of pharmaceuticals on various microplastics [27]. This innovative approach incorporated key parameters of microplastics (specific surface area, oxygen-containing functional groups) alongside Kamlet-Taft solvation parameters of organic contaminants, creating a more comprehensive predictive framework [27]. The model successfully predicted adsorption capacity and affinity while identifying hydrophobic interaction and hydrogen bonding as primary adsorption mechanisms. This case study illustrates how integrating traditional LSER concepts with more sophisticated modeling frameworks can enhance predictive power while retaining mechanistic interpretability – a crucial advantage for environmental risk assessment and remediation strategies.

Drug Discovery Implementations

In pharmaceutical applications, the transition from traditional to AI-enhanced QSAR frameworks has demonstrated dramatic improvements in discovery efficiency and success rates. Traditional QSAR approaches have historically contributed to drug development projects, including HIV protease inhibitors and neuraminidase inhibitors for influenza, by establishing relationships between structural features and biological activity [29]. However, these traditional methods typically required extensive chemical optimization cycles and faced high attrition rates due to unanticipated toxicity or poor pharmacokinetic properties.

AI-enhanced QSAR frameworks have transformed this landscape by enabling multi-parameter optimization early in the discovery process. For instance, the development of DSP-1181, a serotonin receptor agonist for obsessive-compulsive disorder, was completed in under 12 months through an AI-driven approach – an unprecedented timeline in traditional medicinal chemistry [31] [26]. Similarly, ISM001-055, a novel small molecule targeting TNIK for idiopathic pulmonary fibrosis, was designed using Insilico Medicine's AI platform and rapidly advanced to clinical trials [26]. These case studies demonstrate how AI-enhanced QSAR frameworks can simultaneously optimize for potency, selectivity, and ADMET properties, significantly reducing late-stage attrition rates. Pharmaceutical companies increasingly rely on these AI-driven approaches to navigate complex structure-activity relationships and accelerate the identification of viable clinical candidates [31] [25].

Table 3: Experimental Data Comparison for Sorption Prediction Models

| Model Type | Application Scope | Reported R² | RMSE | Key Mechanisms Identified | Reference |
|---|---|---|---|---|---|
| Traditional LSER | Pristine PE MPs | 0.83-0.96 | 0.19-0.68 | Hydrophobic interactions dominate | [28] |
| pp-LFER for Aged PE | UV-aged PE MPs | 0.96 | 0.19 | H-bonding increases with aging | [28] |
| DA-LSER Combined Model | PPCPs on various MPs | High accuracy reported | N/S | Hydrophobic and H-bonding interactions | [27] |
| QSAR with ML | Drug discovery | >0.9 | N/S | Multiple complex interactions | [24] |
| Three-phase System | HOCs on LDPE | N/S | Reduced error | Improved measurement accuracy | [30] |

Computational Tools and Platforms

Modern QSAR research relies on a sophisticated ecosystem of computational tools and platforms that facilitate both traditional and AI-enhanced modeling approaches. For traditional QSAR development, software packages like QSARINS and Build QSAR remain valuable for implementing classical statistical methods with robust validation protocols [24]. These tools support multiple linear regression, partial least squares analysis, and other fundamental techniques while providing visualization capabilities that enhance model interpretation. For descriptor calculation, platforms like DRAGON, PaDEL, and RDKit offer comprehensive sets of molecular descriptors spanning one-dimensional to three-dimensional chemical representations [24]. These tools enable researchers to encode molecular structures into numerical descriptors that capture essential chemical information for structure-activity modeling.

The AI-enhanced QSAR landscape is supported by more advanced platforms that implement machine learning and deep learning algorithms. Open-source libraries like scikit-learn provide accessible implementations of random forests, support vector machines, and other ML algorithms that have become standard in modern QSAR workflows [24]. For deep learning applications, graph neural network frameworks such as PyTorch Geometric and Deep Graph Library have enabled the development of specialized architectures for molecular property prediction [24]. Commercial platforms like Exscientia's Centaur Chemist and Insilico Medicine's AI platform represent the cutting edge of AI-driven drug discovery, integrating generative AI with multi-parameter optimization to accelerate the design of novel therapeutic compounds [31] [26]. These platforms have demonstrated their practical utility by producing clinical candidates in record time, validating the real-world impact of AI-enhanced QSAR frameworks.

Experimental Research Reagents and Materials

The development and validation of both traditional and AI-enhanced QSAR models require carefully selected research materials and reagents that ensure data quality and reproducibility. For environmental QSAR studies focusing on contaminant adsorption, essential materials include well-characterized polymer substrates such as polyethylene (PE), polystyrene (PS), polyvinyl chloride (PVC), and polyethylene terephthalate (PET) microplastics in both pristine and aged forms [27] [28]. The aging process typically employs UV radiation chambers to simulate environmental weathering, with characterization techniques including FTIR spectroscopy and X-ray photoelectron spectroscopy (XPS) to quantify surface functional groups [28].

For pharmaceutical QSAR applications, research requires curated chemical libraries with reliable bioactivity data, such as the ChEMBL and PubChem databases that provide standardized compound structures and associated biological screening results [24]. High-quality ADMET datasets are particularly crucial for developing predictive models that can accurately forecast in vivo performance [24] [26]. Experimental validation typically employs target proteins and cell-based assay systems that provide reliable activity readouts for model training and verification. The increasing integration of multi-omics data in AI-enhanced QSAR frameworks further expands the reagent requirements to include genomic, proteomic, and metabolomic resources that enable more comprehensive compound profiling and personalized therapeutic prediction [31].

Visualizing Methodological Evolution and Workflows

The evolution from traditional QSAR to AI-enhanced predictive frameworks represents a fundamental shift in computational chemistry and drug discovery methodology. While traditional LSER and QSAR approaches provided foundational principles and mechanistic insights that remain valuable today, AI-enhanced frameworks have dramatically expanded the scope, accuracy, and applicability of predictive modeling. The comparative analysis reveals that AI-enhanced models generally offer superior predictive power for complex, high-dimensional problems, particularly in drug discovery applications where multiple parameters must be optimized simultaneously [31] [24] [26]. However, traditional LSER approaches maintain importance for applications requiring mechanistic interpretability and in contexts where data scarcity limits the effectiveness of data-intensive AI methods [27] [28].

The most promising direction for future research lies in the development of hybrid frameworks that integrate the mechanistic transparency of traditional LSER with the predictive power of AI [27]. Such integrated approaches can leverage the strengths of both paradigms while mitigating their respective limitations. As AI methodologies continue to mature, addressing challenges related to model interpretability, data quality, and regulatory acceptance will be crucial for maximizing their impact across chemical and pharmaceutical research domains [24] [25]. The rapid advancement of generative AI and multi-parameter optimization capabilities suggests that AI-enhanced QSAR frameworks will play an increasingly central role in accelerating chemical discovery and development while reducing costs and failure rates across diverse applications from environmental chemistry to personalized medicine.

Implementing AI-Enhanced LSER Models for De Novo Design and Screening

Workflow for Integrating LSER into AI-Driven Virtual Screening Pipelines

Linear Solvation Energy Relationships (LSERs) represent a robust quantitative approach for predicting physicochemical properties based on solute-solvent interactions. In pharmaceutical research, LSER models correlate compound-specific descriptors with partition coefficients, solubility, and other properties critical for drug disposition [3]. The foundational LSER model for partition coefficients between low-density polyethylene (LDPE) and water demonstrates exceptional predictive accuracy (n = 156, R² = 0.991, RMSE = 0.264) using molecular descriptors representing excess molar refractivity (E), polarity (S), hydrogen-bond acidity (A) and basicity (B), and McGowan's characteristic volume (V) [3]. As artificial intelligence (AI) transforms drug discovery through virtual screening and multi-parameter optimization [31], integrating LSERs offers a physicochemically grounded framework for prioritizing compounds with optimal developability profiles. This guide objectively evaluates LSER predictive power against alternative approaches within AI-driven pipelines for novel compound research.

Theoretical Foundations and Comparative Framework

LSER Formalism and Descriptors

LSER models mathematically describe solvation phenomena using the general equation:

Property = c + eE + sS + aA + bB + vV

Where the capital letters represent solute-specific descriptors, and lowercase coefficients are system-specific parameters that reflect the complementary properties of the phases between which partitioning occurs [3]. For LDPE/water partitioning, the specific model reads:

logKi,LDPE/W = −0.529 + 1.098E − 1.557S − 2.991A − 4.617B + 3.886V [3]

The physicochemical interpretation of these descriptors encompasses:

- E (Excess molar refractivity): Characterizes dispersion interactions mediated through π- and n-electrons

- S (Polarity/polarizability): Reflects dipole-dipole and dipole-induced dipole interactions

- A and B (Hydrogen-bond acidity and basicity): Quantify hydrogen-bond donating and accepting ability

- V (McGowan's characteristic volume): Represents endergonic cavity formation processes
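As a minimal sketch of how the LDPE/water equation is applied, the snippet below evaluates it term by term for a single solute. The coefficients are those quoted from [3]; the `Solute` container, the function names, and the example descriptor values are illustrative assumptions and do not describe a real compound.

```python
from dataclasses import dataclass

# System coefficients of the LDPE/water model quoted above [3].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557,
              "a": -2.991, "b": -4.617, "v": 3.886}


@dataclass(frozen=True)
class Solute:
    """Solute descriptors E, S, A, B, V (container name is illustrative)."""
    E: float
    S: float
    A: float
    B: float
    V: float


def lser_terms(solute, coef=LDPE_WATER):
    """Return the contribution of each term to the predicted property."""
    return {
        "c": coef["c"],
        "eE": coef["e"] * solute.E,
        "sS": coef["s"] * solute.S,
        "aA": coef["a"] * solute.A,
        "bB": coef["b"] * solute.B,
        "vV": coef["v"] * solute.V,
    }


def lser_predict(solute, coef=LDPE_WATER):
    """Property = c + eE + sS + aA + bB + vV."""
    return sum(lser_terms(solute, coef).values())


# Hypothetical descriptor values, chosen only to exercise the arithmetic.
example = Solute(E=0.8, S=0.9, A=0.0, B=0.2, V=1.0)
log_k = lser_predict(example)
for name, value in lser_terms(example).items():
    print(f"{name:>2}: {value:+.3f}")
print(f"predicted log K (LDPE/water) = {log_k:.3f}")
```

Printing the individual terms makes the mechanistic reading explicit: the positive vV and eE terms favor partitioning into the polymer, while the negative sS, aA, and bB terms reflect the penalty for polar and hydrogen-bonding solutes leaving water.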

Alternative Predictive Approaches

LSERs compete with several computational approaches for property prediction in virtual screening:

- QSPR/QSAR Models: Establish mathematical relationships between structural descriptors and biological activities or properties using various machine learning algorithms [32]

- Ligand Efficiency Metrics: Normalize biological affinity by molecular size or lipophilicity, though these have non-trivial dependencies on concentration units [33]

- Molecular Dynamics (MD) Simulations: Calculate interaction energies and solvation properties from physical principles [32]

- Group Contribution Methods: Estimate properties from molecular structures by summing functional group contributions [34]
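The unit dependence noted for ligand efficiency metrics can be made concrete with a short sketch. It assumes the commonly used definitions LE ≈ 1.37 × pIC50 / (heavy-atom count), in kcal/mol per heavy atom near 300 K, and LELP = cLogP / LE; whether these match the exact forms analyzed in [33] is an assumption. The two compounds are hypothetical.

```python
import math

# 2.303 * R * T in kcal/mol near 300 K (conventional rounded value).
KCAL_PER_LOG_UNIT = 1.37


def ligand_efficiency(ic50_molar, heavy_atoms, standard_conc_molar=1.0):
    """LE = 1.37 * (-log10(IC50 / C_ref)) / N_heavy.

    C_ref is the reference concentration; 1 M is the usual implicit choice.
    """
    p_activity = -math.log10(ic50_molar / standard_conc_molar)
    return KCAL_PER_LOG_UNIT * p_activity / heavy_atoms


def lelp(clogp, le):
    """LELP = cLogP / LE."""
    return clogp / le


# Hypothetical compounds: a small micromolar hit and a larger nanomolar lead.
hit = {"ic50": 1e-6, "heavy_atoms": 20}
lead = {"ic50": 1e-9, "heavy_atoms": 40}

for c_ref in (1.0, 1e-3, 1e-6):  # 1 M, 1 mM, 1 uM reference concentrations
    le_hit = ligand_efficiency(hit["ic50"], hit["heavy_atoms"], c_ref)
    le_lead = ligand_efficiency(lead["ic50"], lead["heavy_atoms"], c_ref)
    print(f"C_ref = {c_ref:g} M: LE(hit) = {le_hit:.3f}, LE(lead) = {le_lead:.3f}")
```

With a 1 M reference the smaller hit looks more efficient, with 1 mM the two are tied, and with 1 µM the ranking reverses. Because the logarithm of a concentration ratio is divided by a size term, the choice of reference concentration changes relative rankings rather than merely shifting all values by a constant.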

Experimental Evaluation of Predictive Performance

Benchmarking Protocols and Dataset Composition

To objectively evaluate LSER predictive power, we established a rigorous benchmarking protocol using literature data. The validation set comprised 52 chemically diverse compounds (approximately 33% of total observations) with experimentally determined LSER solute descriptors [3]. Predictive performance was assessed through:

- External Validation: Models trained on one dataset (n = 156) and tested on the independent validation set (n = 52)

- Descriptor Source Comparison: Evaluating performance using experimental versus predicted LSER descriptors

- Statistical Metrics: Calculating R², RMSE, and mean absolute error (MAE) for model comparison
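The three statistical metrics listed above can be computed as in the following sketch. It assumes R² is the coefficient of determination (1 − SS_res/SS_tot) evaluated on the validation data; the source does not state which R² definition was used. The function name and the sample values are illustrative.

```python
import math


def regression_metrics(observed, predicted):
    """Return R^2, RMSE and MAE for paired observed/predicted values."""
    if len(observed) != len(predicted) or len(observed) == 0:
        raise ValueError("observed and predicted must be non-empty and equal in length")
    n = len(observed)
    residuals = [o - p for o, p in zip(observed, predicted)]
    mean_obs = sum(observed) / n
    ss_res = sum(r * r for r in residuals)               # residual sum of squares
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)  # total sum of squares
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all observed values are identical")
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
    }


# Illustrative log K values for a small hold-out set (not data from [3]).
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
metrics = regression_metrics(observed, predicted)
print({name: round(value, 3) for name, value in metrics.items()})
```

Reporting RMSE and MAE alongside R² matters because R² depends on the spread of the observed values: a data set spanning many log units can yield a high R² even when absolute errors are appreciable.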

For QSPR model benchmarking, we implemented a comprehensive assessment across 29 datasets from the literature and ChEMBL, using four algorithms (Gradient Boosting Machines, Partial Least Squares, Random Forest, and Support Vector Machines) with two descriptor types (Morgan fingerprints and physicochemical descriptors) [35].

Performance Comparison of Predictive Methods

Table 1: Predictive Performance Across Modeling Approaches

| Method | R² | RMSE | Application Domain | Data Requirements |
|---|---|---|---|---|
| LSER (exp descriptors) | 0.985 | 0.352 | Partition coefficients, solubility | Experimental solute descriptors |
| LSER (pred descriptors) | 0.984 | 0.511 | Partition coefficients, solubility | Chemical structure only |
| Ligand Efficiency (LELP) | ~0.3 R² improvement over potency-based models | Normalized RMSE decrease >0.1 | Compound activity prediction | Molecular size and cLogP |
| MD-Gradient Boosting | 0.87 | 0.537 | Aqueous solubility | MD simulations |
| Standard QSPR | Variable (dataset-dependent) | Variable | Broad biological activities | Structural descriptors |

Table 2: Key MD-Derived Properties for Solubility Prediction [32]

| Property | Description | Influence on Solubility |
|---|---|---|
| logP | Octanol-water partition coefficient | Primary determinant of hydrophobicity |
| SASA | Solvent Accessible Surface Area | Measures contact area with water |
| Coulombic_t | Coulombic interaction energy | Polar interactions with solvent |
| LJ | Lennard-Jones interaction energy | Van der Waals interactions |
| DGSolv | Estimated Solvation Free Energy | Overall solvation thermodynamics |
| RMSD | Root Mean Square Deviation | Conformational flexibility |
| AvgShell | Average solvents in solvation shell | Local solvation structure |

Analysis of Comparative Results

The benchmarking reveals several key findings:

LSER Predictive Robustness: LSER models with experimental descriptors demonstrate exceptional predictive power (R² = 0.985, RMSE = 0.352) for partition coefficients, outperforming many structure-based approaches for this specific application [3].

Descriptor Source Impact: Using predicted rather than experimental LSER descriptors only marginally reduces R² (0.984 vs. 0.985) but increases RMSE by 45% (0.511 vs. 0.352), indicating maintained correlation with reduced precision [3].