Decoding LSER Solute Descriptors: A Comprehensive Guide to Vx, E, S, A, B, L for Pharmaceutical Research

Abstract

This article provides a comprehensive exploration of Linear Solvation Energy Relationship (LSER) solute descriptors—Vx, E, S, A, B, L—tailored for researchers and professionals in drug development. It covers the fundamental chemical significance of each parameter, methodological approaches for their determination, troubleshooting common challenges in descriptor application, and validation techniques against experimental data. By integrating theoretical foundations with practical applications, this guide serves as an essential resource for leveraging LSERs in predicting solute partitioning, solubility, and membrane permeability to optimize pharmaceutical compounds.

Understanding LSER Solute Descriptors: A Deep Dive into Vx, E, S, A, B, L Fundamentals

Linear Solvation Energy Relationships (LSERs) represent a cornerstone quantitative model in physical organic and pharmaceutical chemistry for predicting the partitioning behavior of solutes in different chemical environments. The model provides a powerful framework for understanding and predicting how molecules distribute themselves between phases, a process fundamental to drug absorption, distribution, and clearance. The Abraham solvation parameter model, as LSER is formally known, has demonstrated remarkable success as a predictive tool across chemical, biomedical, and environmental applications [1]. Its robustness stems from its ability to deconstruct complex solvation phenomena into contributions from fundamental, chemically intuitive molecular interactions.

The theoretical foundation of LSER rests on Linear Free Energy Relationships (LFER), which posit that free-energy-related properties of a solute can be correlated with molecular descriptors representing its capacity for specific interaction types [1]. In thermodynamic terms, the partitioning of a solute between two solvents is equivalent to the difference in two gas/liquid solution processes [2]. This process is modeled as the sum of an endoergic cavity formation/solvent reorganization process and exoergic solute-solvent attractive forces [2]. The remarkable linearity observed in these relationships, even for strong specific interactions like hydrogen bonding, has been verified through the combination of equation-of-state solvation thermodynamics with the statistical thermodynamics of hydrogen bonding [1].

The Abraham LSER Model and Solute Descriptors

The most widely accepted symbolic representation of the LSER model, as proposed by Abraham, is given by the equation:

SP = c + eE + sS + aA + bB + vV [2]

In this equation, SP represents any free-energy-related property. In pharmaceutical contexts, this is most often the logarithm of a partition coefficient (log P) or retention factor (log k') [2]. The lowercase letters (e, s, a, b, v) are system coefficients reflecting the complementary properties of the phases between which partitioning occurs, while the uppercase letters (E, S, A, B, V) are solute descriptors that quantify the solute's ability to participate in specific intermolecular interactions [2]. An alternative form of the equation uses L instead of V for gas-to-solvent partitioning: $\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$ [1].

The following table provides a detailed explanation of each solute descriptor and its molecular interpretation:

Table 1: Abraham LSER Solute Descriptors and Their Molecular Significance

| Descriptor | Name | Molecular Interpretation | Role in Solvation |
|---|---|---|---|
| E | Excess molar refraction | Electron pair interactions and polarizability [3] | Measures the solute's ability to interact through π- and n-electrons [2] |
| S | Solute dipolarity/polarizability | Dipolarity and polarizability [3] | Characterizes the solute's ability to engage in dipole-dipole and dipole-induced dipole interactions [2] |
| A | Hydrogen bond acidity | Overall hydrogen-bond donating ability [3] | Quantifies the solute's effectiveness as a hydrogen bond donor [2] |
| B | Hydrogen bond basicity | Overall hydrogen-bond accepting ability [3] | Quantifies the solute's effectiveness as a hydrogen bond acceptor [2] |
| V | McGowan characteristic volume | Molecular size [3] | Represents the endoergic cost of forming a cavity in the solvent [2] |
| L | Gas-liquid partition coefficient | Partition coefficient in n-hexadecane at 298 K [1] | Alternative to V for gas-to-solvent partitioning; related to dispersion interactions [1] |

These descriptors can be obtained experimentally through various physicochemical measurements, including gas-liquid chromatographic data and water-solvent partition coefficients [3]. Increasingly, computational approaches are also available for their estimation from molecular structure alone [4].

LSERs in Pharmaceutical Applications

Predicting Drug Partitioning and Absorption

In pharmaceutical development, LSERs provide invaluable predictive capabilities for key absorption, distribution, metabolism, and excretion (ADME) parameters. The model has been successfully applied to predict critical properties including aqueous solubility ($\log S_w$), various water-solvent partition coefficients ($\log P_s$), and air-solvent partition coefficients ($\log L_s$) [4]. These properties directly influence a drug's membrane permeability, bioavailability, and distribution patterns within the body. The ability to predict such properties from molecular structure alone during early development stages enables more efficient lead optimization and candidate selection.

Leachables and Extractables Assessment

LSERs have emerged as particularly valuable for addressing pharmaceutical packaging challenges, specifically in predicting the partitioning of potential leachables between plastic materials and pharmaceutical solutions. This application is critical for ensuring drug product safety and regulatory compliance. A recently developed LSER model for partitioning between low-density polyethylene (LDPE) and water demonstrates the precision achievable:

$\log K_{i,\mathrm{LDPE/W}} = -0.529 + 1.098E - 1.557S - 2.991A - 4.617B + 3.886V$ [5]

This model, developed from a data set of 159 chemically diverse compounds with 156 observations entering the reported regression, showed high accuracy (n = 156, R² = 0.991, RMSE = 0.264) and outperformed traditional log-linear models, particularly for polar compounds with significant hydrogen-bonding propensity [5]. The model enables identification of maximum (worst-case) levels of leaching in support of chemical safety risk assessments [5].
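As a minimal sketch of how such a model is applied, the snippet below evaluates the LDPE/water equation quoted above for a single solute. The coefficients come from the text; the benzene descriptor values are illustrative assumptions and should be checked against a curated descriptor database before any real use. The names `SoluteDescriptors` and `log_k` are hypothetical helpers introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    E: float  # excess molar refraction
    S: float  # dipolarity/polarizability
    A: float  # hydrogen-bond acidity
    B: float  # hydrogen-bond basicity
    V: float  # McGowan characteristic volume


# System coefficients (c, e, s, a, b, v) quoted in the text for LDPE/water [5].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557,
              "a": -2.991, "b": -4.617, "v": 3.886}


def log_k(coeffs: dict, solute: SoluteDescriptors) -> float:
    """Evaluate SP = c + eE + sS + aA + bB + vV for one solute."""
    return (coeffs["c"]
            + coeffs["e"] * solute.E
            + coeffs["s"] * solute.S
            + coeffs["a"] * solute.A
            + coeffs["b"] * solute.B
            + coeffs["v"] * solute.V)


# Illustrative descriptor values for benzene (assumed, not taken from the text).
benzene = SoluteDescriptors(E=0.610, S=0.52, A=0.00, B=0.14, V=0.7164)
log_k_benzene = log_k(LDPE_WATER, benzene)
print(f"log K(LDPE/water) = {log_k_benzene:.2f}")
```

Because the a and b coefficients are strongly negative, any solute with appreciable hydrogen-bond acidity or basicity would be predicted to favor the aqueous phase, which is consistent with the hydrophobic character of LDPE described in the text.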

Chromatographic Method Development

In pharmaceutical analysis, LSERs are used extensively to characterize retention mechanisms in high-performance liquid chromatography (HPLC). The coefficients derived from LSER analysis reveal how specific stationary phases interact with different solute functionalities, guiding the rational selection of chromatographic conditions for method development [3]. Studies have demonstrated that the most important parameters influencing retention are typically the solute volume (V) and hydrogen bond acceptor basicity (B) [3]. This understanding allows chromatographers to optimize separations based on the specific molecular properties of analytes rather than through purely empirical approaches.
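One way to see which interactions dominate retention is to split a predicted log k' into its individual product terms. The sketch below does this for a single solute; both the system coefficients and the solute descriptors are made-up values chosen only to illustrate the bookkeeping, not data for any real column or compound.

```python
# Hypothetical reversed-phase system coefficients, for illustration only.
coeffs = {"e": 0.10, "s": -0.40, "a": -0.30, "b": -1.80, "v": 2.00}
c = -0.50  # hypothetical regression constant

# Hypothetical solute descriptors.
solute = {"E": 0.80, "S": 0.90, "A": 0.30, "B": 0.50, "V": 1.00}

# Each term is (system coefficient) x (matching solute descriptor).
contributions = {name.upper(): coeffs[name] * solute[name.upper()] for name in coeffs}
log_k_prime = c + sum(contributions.values())

# Rank the terms by absolute size to see which interactions dominate.
ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)

for descriptor, value in ranked:
    print(f"{descriptor}: {value:+.2f}")
print(f"log k' = {log_k_prime:.2f}")
```

With these assumed numbers the vV and bB terms are the largest in magnitude, mirroring the pattern the text reports for typical reversed-phase systems.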

Experimental Protocols and Methodologies

Determining Solute Descriptors

Experimental determination of LSER descriptors requires careful measurement of partition coefficients in well-characterized systems. The following protocol outlines the standard approach for determining descriptors for new chemical entities:

Measure gas-chromatographic retention indices on at least 6-8 stationary phases of different polarities to obtain the L descriptor [4].

Determine water-solvent partition coefficients (log P) for a minimum of 6-8 solvent systems with known LSER coefficients [4]. The shake-flask method is commonly employed, though the microshake-flask method has been introduced for compounds with limited availability [4].

Validate descriptor consistency by comparing predicted and measured partition coefficients in additional solvent systems not used in the initial regression [2].

For compounds with limited solubility, consider alternative approaches including reversed-phase HPLC retention times and calculated descriptor values from molecular structure using validated software tools [4].
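The regression step implied by this protocol can be sketched as follows, assuming E and V are already known (for example from refraction data and molecular structure) so that only S, A, and B remain to be fitted. All system coefficients and the "measured" log P values are synthetic and exist only to show the mechanics; `numpy.linalg.lstsq` is used as a generic least-squares solver, not as the method of any cited study.

```python
import numpy as np

# Hypothetical (c, e, s, a, b, v) coefficients for six water-solvent systems.
systems = np.array([
    [0.09, 0.56, -1.05,  0.03, -3.46, 3.81],
    [0.33, 0.56, -1.69, -3.52, -4.92, 4.50],
    [0.14, 0.46, -0.59, -1.10, -4.84, 4.43],
    [0.25, 0.40, -0.20, -3.00, -1.50, 4.10],
    [0.35, 0.36, -0.82, -0.60, -5.10, 4.32],
    [0.20, 0.60, -1.20, -2.00, -3.00, 4.00],
])
c, e, s, a, b, v = systems.T

E_known, V_known = 0.80, 0.775           # assumed to be available beforehand
true_SAB = np.array([0.89, 0.60, 0.30])  # used only to simulate "measured" log P

# Simulated measurements: log P = c + eE + sS + aA + bB + vV in each system.
design = np.column_stack([s, a, b])
log_p = c + e * E_known + v * V_known + design @ true_SAB

# Move the known terms to the left-hand side, then solve for S, A, B.
rhs = log_p - c - e * E_known - v * V_known
est_SAB, _, rank, _ = np.linalg.lstsq(design, rhs, rcond=None)

print("Estimated S, A, B:", np.round(est_SAB, 3))
```

With real data the measurements carry noise, so using more systems than unknowns (as the 6-8 systems in the protocol suggest) lets the fit average out experimental error, and the residuals give a first check on descriptor consistency.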

Developing System-Specific LSER Models

When creating new LSER models for specific pharmaceutical applications, the following methodological considerations are essential:

Select a chemically diverse training set of solutes spanning a wide range of molecular weights, hydrophobicity, and hydrogen-bonding capabilities [5]. The training set should be representative of the "chemical space" of compounds relevant to the application domain [5].

Ensure experimental data quality through appropriate replication, calibration, and validation of analytical methods. For partition coefficient measurements, equilibrium must be verified and mass balance confirmed [5].

Perform multiple linear regression analysis using the Abraham equation to determine system coefficients. Statistical evaluation should include R², root mean square error (RMSE), and leave-one-out cross-validation [2].

Validate the model using an independent test set not included in the initial regression. For the LDPE/water partitioning model, validation with 52 compounds (approximately 33% of the total observations) yielded R² = 0.985 and RMSE = 0.352 [6].
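The fitting and validation steps above can be sketched in a few lines. The example below uses synthetic descriptors and made-up "true" coefficients, so its numbers carry no chemical meaning; it only shows one reasonable way to compute R², RMSE, a leave-one-out cross-validated Q², and held-out test statistics with NumPy.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic descriptors (E, S, A, B, V) for 60 hypothetical solutes.
X = rng.uniform([0.0, 0.0, 0.0, 0.0, 0.3], [2.0, 2.0, 1.0, 1.5, 3.0], size=(60, 5))
true_coeffs = np.array([-0.5, 1.1, -1.6, -3.0, -4.6, 3.9])  # c, e, s, a, b, v (made up)
design = np.column_stack([np.ones(len(X)), X])
y = design @ true_coeffs + rng.normal(0.0, 0.2, size=len(X))  # simulated log K with noise


def fit(D, target):
    """Ordinary least-squares estimate of (c, e, s, a, b, v)."""
    coeffs, *_ = np.linalg.lstsq(D, target, rcond=None)
    return coeffs


def r2_rmse(y_obs, y_pred):
    resid = y_obs - y_pred
    rmse = float(np.sqrt(np.mean(resid ** 2)))
    r2 = float(1.0 - np.sum(resid ** 2) / np.sum((y_obs - y_obs.mean()) ** 2))
    return r2, rmse


# Hold out one third of the solutes as an independent test set.
idx = rng.permutation(len(X))
test, train = idx[:20], idx[20:]
coeffs = fit(design[train], y[train])
r2_train, rmse_train = r2_rmse(y[train], design[train] @ coeffs)
r2_test, rmse_test = r2_rmse(y[test], design[test] @ coeffs)

# Leave-one-out cross-validation on the training set.
loo_pred = np.empty(len(train))
for k in range(len(train)):
    keep = np.delete(train, k)
    loo_pred[k] = design[train[k]] @ fit(design[keep], y[keep])
q2, rmse_loo = r2_rmse(y[train], loo_pred)

print(f"train R2={r2_train:.3f} RMSE={rmse_train:.3f}")
print(f"LOO   Q2={q2:.3f} RMSE={rmse_loo:.3f}")
print(f"test  R2={r2_test:.3f} RMSE={rmse_test:.3f}")
```

A large gap between the training statistics and the cross-validated or test statistics would suggest overfitting or a training set that does not cover the relevant chemical space.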

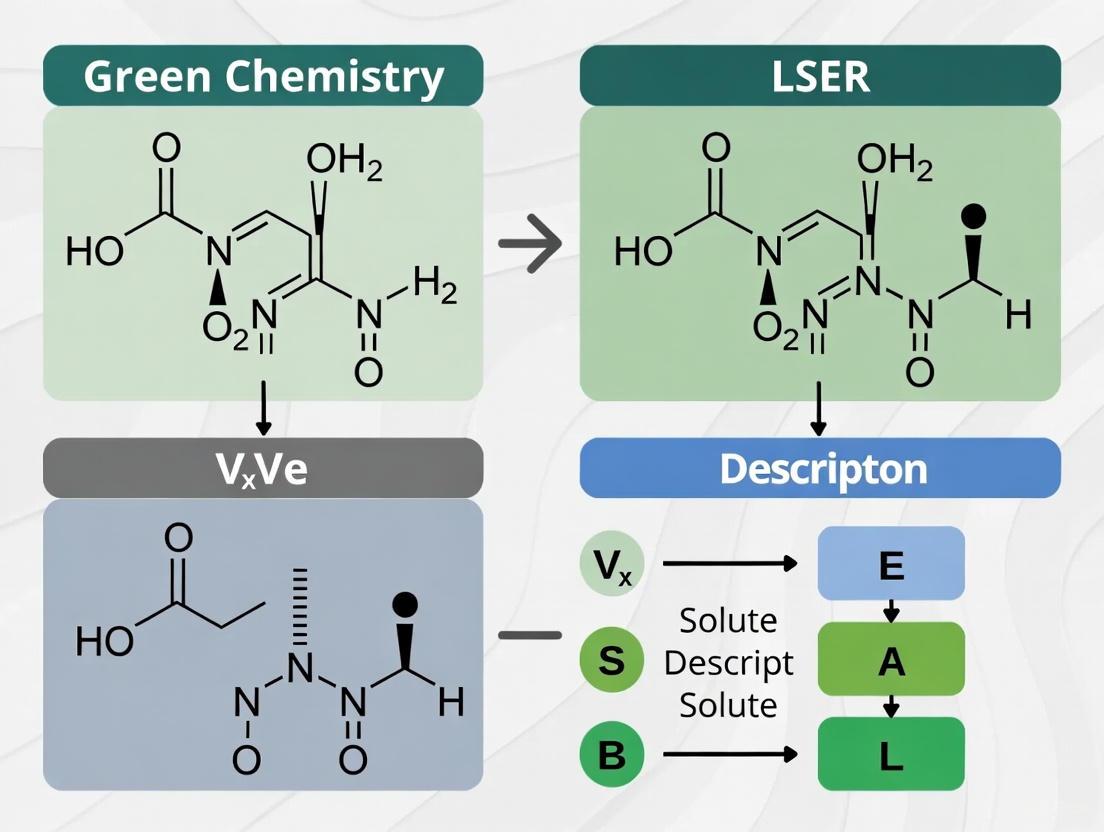

Figure 1: LSER Model Development Workflow for Pharmaceutical Applications

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful application of LSERs in pharmaceutical research requires access to well-characterized materials and computational resources. The following table outlines essential components of the LSER toolkit:

Table 2: Essential Research Tools for LSER Studies in Pharmaceutical Science

| Tool/Reagent | Function/Purpose | Pharmaceutical Relevance |
|---|---|---|
| Reference Solutes | Chemically diverse compounds with known descriptors for system calibration [2] | Enables characterization of new partitioning systems and stationary phases |
| Well-Characterized Solvents | Solvents with known LSER system coefficients for descriptor determination [4] | Provides standardized environments for measuring solute-specific interactions |
| Chromatographic Columns | Stationary phases with known LSER characteristics (e.g., C18, alkylamide, cholesterol) [3] | Facilitates determination of solute descriptors via HPLC retention measurements |
| Abraham Descriptor Database | Curated database of solute descriptors for known compounds [6] | Provides essential parameters for predictive modeling without experimental work |
| QSPR Prediction Software | Computational tools for predicting LSER descriptors from molecular structure [4] | Enables descriptor estimation for novel compounds when experimental data is lacking |
| Polymer Materials | Characterized polymeric phases (LDPE, PDMS, POM) with known LSER models [6] | Allows prediction of leachables partitioning from packaging materials into formulations |

Current Research and Future Perspectives

Recent advances in LSER applications continue to expand their utility in pharmaceutical sciences. The development of Partial Solvation Parameters (PSP) based on equation-of-state thermodynamics represents a promising approach for extracting thermodynamic information from LSER databases [1]. These parameters are designed to facilitate the exchange of information between QSPR-type databases and equation-of-state developments, potentially enabling estimation of solvation properties over broad ranges of temperature and pressure [1].

Comparative studies of polymer sorption behavior using LSER system parameters have revealed important differences between materials. While low-density polyethylene (LDPE) exhibits predominantly hydrophobic character, polymers like polyoxymethylene (POM) with heteroatomic building blocks demonstrate stronger sorption for polar, non-hydrophobic compounds [6]. Such insights guide the selection of appropriate packaging materials for specific drug formulations.

Ongoing research addresses the challenge of predicting LSER descriptors for novel chemical entities. The availability of "rule of thumb" estimation methods for variable values based on molecular functional groups has improved accessibility of the LSER approach [7]. Furthermore, the introduction of user-friendly software tools for descriptor calculation and the creation of freely accessible, curated databases promise to broaden LSER adoption in pharmaceutical development [4] [6].

As pharmaceutical research increasingly embraces in silico approaches, LSERs provide a thermodynamically grounded, chemically intuitive framework for predicting critical physicochemical properties. Their ability to decompose solvation phenomena into fundamental interactions makes them uniquely valuable for rational drug design and formulation optimization.

Figure 2: LSER Model Components and Their Relationships in Pharmaceutical Prediction

Linear Solvation Energy Relationships (LSERs) represent a cornerstone of quantitative structure-property relationship (QSPR) modeling, providing a powerful predictive framework for understanding solvation phenomena across chemical, biological, and environmental sciences. The widely accepted Abraham model formulation offers a sophisticated mathematical framework that quantifies how solute properties interact with their solvation environment, enabling researchers to predict partition coefficients, solubility, retention in chromatography, and other free-energy-related properties with remarkable accuracy [2]. This model's robustness stems from its foundation in linear free-energy relationships (LFER), which establish quantitative connections between molecular structure and thermodynamic behavior.

The LSER approach operates on the fundamental principle that solvation processes can be deconstructed into discrete, chemically meaningful interactions, each contributing additively to the overall free energy change [1]. This conceptual framework allows researchers to move beyond empirical correlations toward mechanistically interpretable models. The LSER model and its associated database are exceptionally rich in thermodynamic information about intermolecular interactions, which, when properly extracted, provides invaluable insights for various thermodynamic developments and applications [1]. This technical guide deconstructs the theoretical foundations, solute descriptors, and experimental methodologies underpinning LSERs, with particular emphasis on their significance for researchers in pharmaceutical development and analytical chemistry.

The LSER Equation: Fundamental Principles and Thermodynamic Basis

Mathematical Formulation of the Abraham Model

The most current and widely adopted symbolic representation of the LSER model, as proposed by Abraham, is expressed by the following equation:

SP = c + eE + sS + aA + bB + vV

In this foundational equation, SP represents any free-energy-related property, which in chromatographic applications most commonly corresponds to log k' (where k' is the retention factor) [2]. The lowercase coefficients (e, s, a, b, v) and constant (c) are system-specific parameters determined through multiparameter linear least squares regression analysis of datasets comprising solutes with known descriptor values [2]. These coefficients quantify the solvent's complementary responsiveness to each type of solute interaction.

The model's thermodynamic foundation lies in its interpretation of the solvation process. The partitioning of a solute between two solvents is thermodynamically equivalent to the difference in two gas/liquid solution processes [2]. For gas-liquid partitioning, the process is conceptually modeled as the sum of an endoergic cavity formation/solvent reorganization process and exoergic solute-solvent attractive forces [2]. This physical interpretation provides a chemically intuitive framework for understanding the contributions of the various terms in the LSER equation.
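The equivalence stated above can be written as a simple thermodynamic cycle. In the sketch below, $K_{S,1}$ and $K_{S,2}$ denote the gas-to-solvent partition coefficients of the same solute in solvents 1 and 2, and $P_{1\to 2}$ is the partition coefficient for transfer from solvent 1 to solvent 2; this notation is an assumption introduced here, and the relation presumes that all coefficients use the same concentration scale and that the two solvents do not significantly dissolve in each other.

```latex
\begin{aligned}
\Delta G^{\circ}_{1\to 2} &= \Delta G^{\circ}_{\mathrm{gas}\to 2} - \Delta G^{\circ}_{\mathrm{gas}\to 1},\\
\log P_{1\to 2} &= \log K_{S,2} - \log K_{S,1},
\qquad \Delta G^{\circ} = -2.303\,RT\,\log K .
\end{aligned}
```

Because each gas-to-solvent step is itself the sum of a cavity term and attractive solute-solvent terms, their difference inherits the same additive structure, which is why the condensed-phase equation keeps the same linear form.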

Thermodynamic Basis of Linearity

A fundamental question surrounding LSER models concerns the thermodynamic basis for the observed linearity, particularly for strong specific interactions like hydrogen bonding. Recent research combining equation-of-state solvation thermodynamics with the statistical thermodynamics of hydrogen bonding has verified that there is indeed a sound thermodynamic basis for LFER linearity [1]. This insight validates the model's application even for complex interaction types and provides deeper understanding of the thermodynamic character and content of the coefficients and terms in the LSER equations.

The LSER formalism has been successfully extended beyond free energy predictions to encompass other thermodynamic properties. Enthalpies of solvation, for instance, can be handled through a parallel linear relationship of the form [1]:

$\Delta H_S = c_H + e_H E + s_H S + a_H A + b_H B + l_H L$

This extension demonstrates the versatility of the LSER approach and enables researchers to extract valuable thermodynamic information about intermolecular interactions for solute/solvent systems where both LSER descriptors and LFER coefficients are available [1].
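When both a free-energy correlation and an enthalpy correlation are available for the same solute/solvent pair, an entropy term follows from ΔG = ΔH − TΔS. The sketch below shows that arithmetic under stated assumptions: the log K and ΔH inputs are hypothetical numbers, ΔG is taken as −RT ln(10) log K, and standard-state conventions (which differ between data sets) are ignored. The function name `solvation_thermo` is introduced only for this example.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol K)


def solvation_thermo(log_k: float, delta_h_kj: float, temperature_k: float = 298.15):
    """Return (delta_G, T*delta_S) in kJ/mol from log K and a solvation enthalpy."""
    delta_g = -R_KJ * temperature_k * math.log(10.0) * log_k
    t_delta_s = delta_h_kj - delta_g  # from delta_G = delta_H - T*delta_S
    return delta_g, t_delta_s


# Hypothetical inputs: log K_S = 3.0 and delta_H_S = -30 kJ/mol at 298.15 K.
delta_g, t_delta_s = solvation_thermo(3.0, -30.0)
print(f"delta_G = {delta_g:.2f} kJ/mol, T*delta_S = {t_delta_s:.2f} kJ/mol")
```

In this made-up case the enthalpy gain is partly offset by an unfavorable entropy term; real comparisons would need the free-energy and enthalpy data referred to a common standard state.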

Deconstructing the LSER Solute Descriptors

Comprehensive Descriptor Definitions and Chemical Significance

The capital letters in the LSER equation (E, S, A, B, V) represent solute-specific molecular descriptors that quantify distinct aspects of a molecule's interaction potential. A deep understanding of these parameters' physico-chemical basis is essential for proper application and interpretation of LSER models [2].

Table 1: LSER Solute Descriptors and Their Chemical Significance

| Descriptor | Full Name | Chemical Interpretation | Measurement Basis | Molecular Feature Quantified |
|---|---|---|---|---|
| V | McGowan's Characteristic Volume | Molecular size influencing cavity formation | Computational from molecular structure | Energy cost of displacing solvent to create cavity |
| E | Excess Molar Refraction | Polarizability from π- and n-electrons | Measured refraction compared to alkane analog | Dispersion interaction capability |
| S | Dipolarity/Polarizability | Dipolarity with polarizability contribution | Solvatochromic comparison method | Strength of dipole-dipole and dipole-induced dipole interactions |
| A | Hydrogen Bond Acidity | Hydrogen bond donating ability | Solvatochromic measurement or computational | Strength as hydrogen bond donor |
| B | Hydrogen Bond Basicity | Hydrogen bond accepting ability | Solvatochromic measurement or computational | Strength as hydrogen bond acceptor |
| L | Gas-Hexadecane Partition Coefficient | Overall lipophilicity at molecular level | Experimental partition coefficient in n-hexadecane at 298 K | General dispersion interactions |

The development and physico-chemical basis of these solute parameters establishes their fundamental meaning and proper application [2]. Each descriptor corresponds to a specific type of solute-solvent interaction that contributes to the overall solvation energy, allowing for a nuanced understanding of molecular recognition processes in solution.

System Coefficients and Their Interpretation

The lowercase coefficients in the LSER equation (e, s, a, b, v) are system-specific parameters that reflect the solvent's responsiveness to each type of solute interaction. These coefficients are considered to correspond to the complementary effect of the phase (solvent) on solute-solvent interactions and contain chemical information on the solvent/phase in question [1]. In practical applications, these coefficients are determined via multiple linear regression analysis of experimental data for a diverse set of solutes with known descriptor values [2].

For partition processes between two condensed phases, the LSER relationship takes the form [1]:

$\log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x$

Where P represents the partition coefficient between two solvents (e.g., water-to-organic solvent or alkane-to-polar organic solvent). For gas-to-solvent partitioning, the relationship becomes [1]:

$\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$

The remarkable feature of these equations is that the coefficients are solvent (phase or system) descriptors and are not influenced by the solute [1]. This characteristic enables the prediction of solute behavior in known systems and facilitates the rational selection of solvent systems for specific separation needs in pharmaceutical development.

Experimental Protocols for LSER Parameter Determination

Solvatochromic Method for Solute Parameter Measurement

The determination of solute descriptors relies on a combination of experimental measurements and computational approaches. For the S (dipolarity/polarizability), A (hydrogen bond acidity), and B (hydrogen bond basicity) parameters, solvatochromic methods provide a robust experimental approach [8].

Protocol: Solvatochromic Measurement of Kamlet-Taft Parameters

Sample Preparation: Prepare solutions of solvatochromic indicator probes (e.g., 4-nitroaniline) in the solvent systems of interest at controlled concentrations (approximately 10 µM). Use volatile solvents for stock solutions that can be evaporated and replaced with the test solvent [8].

Spectrophotometric Analysis: Record UV-Vis absorption spectra over an appropriate wavelength range (e.g., 300-700 nm) using a precision spectrophotometer. Maintain constant temperature (typically 298.15 K) using thermostated cell holders [8].

Data Processing: Calculate the molar electronic transition energy, ET, from the absorption maximum using the relationship ET (kcal·mol⁻¹) = 2.85915 × 10⁻³ × ν̃max (cm⁻¹), equivalently 28591.5/λmax (nm), where ν̃max is the wavenumber of maximum absorption [8].

Multiple Linear Regression: Correlate the ET values with solvent parameters using the LSER equation ET = A₀ + aα + bβ + pπ*, where α, β, and π* are the Kamlet-Taft solvatochromic parameters representing hydrogen-bond donor acidity, hydrogen-bond acceptor basicity, and dipolarity/polarizability, respectively, and A₀, a, b, and p are the fitted regression coefficients [8].

Statistical Validation: Evaluate the regression model using statistical parameters including squared correlation coefficient (r²), F-statistic values, and standard deviations of regression coefficients to identify the optimal model [8].

This protocol enables the determination of solvent parameters that can be correlated with Abraham parameters, establishing a bridge between different LSER formalisms.
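The data-processing and regression steps of this protocol can be sketched as follows. The conversion uses E_T (kcal·mol⁻¹) = 28591.5/λmax (nm), which is the same as 2.85915 × 10⁻³ times the wavenumber in cm⁻¹. The solvent parameter values and the coefficients used to simulate absorption maxima are illustrative assumptions, not measured data, and `transition_energy_kcal` is a hypothetical helper name.

```python
import numpy as np


def transition_energy_kcal(lambda_max_nm):
    """Molar transition energy in kcal/mol from an absorption maximum in nm."""
    return 28591.5 / np.asarray(lambda_max_nm, dtype=float)


# Illustrative Kamlet-Taft parameters (alpha, beta, pi*) for seven solvents.
alpha = np.array([0.00, 0.98, 0.86, 0.00, 0.08, 0.19, 1.17])
beta = np.array([0.00, 0.66, 0.75, 0.76, 0.43, 0.40, 0.47])
pi_star = np.array([-0.04, 0.60, 0.54, 1.00, 0.71, 0.75, 1.09])
design = np.column_stack([np.ones_like(alpha), alpha, beta, pi_star])

# Simulate absorption maxima from made-up coefficients (A0, a, b, p).
true_coeffs = np.array([70.0, 2.0, -3.0, -5.0])
lambda_max_nm = 28591.5 / (design @ true_coeffs)

# Protocol steps: wavelength -> E_T, then regress E_T on alpha, beta, pi*.
e_t = transition_energy_kcal(lambda_max_nm)
fitted, *_ = np.linalg.lstsq(design, e_t, rcond=None)

print("A0, a, b, p =", np.round(fitted, 3))
```

With real spectra the fitted coefficients would carry uncertainty, so the standard errors and F-statistic mentioned in the validation step should be inspected before interpreting the sign or size of a, b, and p.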

Chromatographic Method for System Characterization

Chromatographic techniques provide a powerful approach for characterizing system parameters (coefficients) in the LSER equation.

Protocol: Determination of LSER System Coefficients by Chromatography

Column Selection and Conditioning: Select the chromatographic stationary phase of interest and condition according to manufacturer specifications. For reversed-phase systems, ensure equilibration with mobile phase.

Test Solute Selection: Curate a diverse set of test solutes (30-40 compounds) spanning a wide range of descriptor values to ensure a well-conditioned regression. Include compounds with varied hydrogen bonding capabilities, polarizabilities, and molecular sizes [2].

Chromatographic Measurement: Determine retention factors (k') for each test solute under isocratic conditions. Ensure sufficient replication to establish measurement precision (typically RSD < 2%).

Descriptor Assignment: Obtain solute descriptors (E, S, A, B, V) from authoritative databases or through experimental determination for each test compound.

Multiple Linear Regression: Perform multiparameter linear regression of log k' against the solute descriptors to obtain the system-specific coefficients (e, s, a, b, v) and constant (c).

Model Validation: Validate the model using leave-one-out cross-validation or external test sets to ensure predictive capability. The model should explain at least 80% of the variance in both the training and test sets for reliable application [9].

This methodology allows for the characterization of chromatographic systems, enabling rational method development in pharmaceutical analysis and quality control.
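The measurement step of this protocol reduces to a small calculation per solute. The sketch below assumes the usual definition of the retention factor, k' = (tR − t0)/t0, where t0 is the column hold-up time; that definition and all retention times shown are assumptions for illustration, not values from the cited studies. It also applies the RSD < 2% precision check mentioned in the protocol.

```python
import math
import statistics


def retention_factor(t_r: float, t_0: float) -> float:
    """Retention factor k' from retention time t_r and hold-up time t_0."""
    return (t_r - t_0) / t_0


t_0 = 1.20  # hypothetical hold-up time (min)
replicates = {  # hypothetical replicate retention times (min)
    "solute_1": [4.81, 4.85, 4.79],
    "solute_2": [7.32, 7.40, 7.35],
}

results = {}
for name, times in replicates.items():
    k_values = [retention_factor(t, t_0) for t in times]
    mean_k = statistics.mean(k_values)
    rsd_percent = 100.0 * statistics.stdev(k_values) / mean_k
    results[name] = {
        "log_k": math.log10(mean_k),    # value that enters the regression
        "rsd_percent": rsd_percent,
        "precise": rsd_percent < 2.0,   # precision criterion from the protocol
    }

for name, r in results.items():
    print(f"{name}: log k' = {r['log_k']:.3f}, RSD = {r['rsd_percent']:.2f}%")
```

The resulting log k' values form the dependent variable in the regression against E, S, A, B, and V; solutes that fail the precision check are better re-measured than silently included.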

Figure 1: Experimental Workflow for LSER Parameter Determination. This diagram illustrates the parallel pathways for determining solute descriptors (solvatochromic method) and system coefficients (chromatographic method).

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of LSER research requires specific reagents, materials, and instrumentation. The following table details essential components of the LSER research toolkit.

Table 2: Essential Research Reagents and Materials for LSER Studies

| Category | Specific Items | Function/Application | Technical Specifications |
|---|---|---|---|
| Solvatochromic Indicators | 4-Nitroaniline, Reichardt's dye, Nitroanisoles | Probe for specific solvent interactions; determination of S, A, B parameters | High purity (>99%); wavelength calibration standards |
| Chromatographic Test Solutes | Alkylbenzenes, nitroalkanes, anilines, phenols, polycyclic aromatic hydrocarbons | Characterization of system coefficients; must span descriptor space | 30-40 diverse compounds; known descriptor values |
| Reference Solvents | n-Hexadecane, water, alkanols, ethers, dimethyl sulfoxide | Reference systems for partition coefficient measurements; calibration standards | HPLC grade; controlled humidity for aqueous systems |
| Chromatographic Equipment | HPLC system with UV-Vis detector, analytical columns | Determination of retention factors for system characterization | Precision: RSD < 2% for retention times |
| Spectroscopic Equipment | UV-Vis spectrophotometer, quartz cuvettes, temperature controller | Solvatochromic measurements; determination of transition energies | Wavelength accuracy: ±0.5 nm; temperature control: ±0.1°C |
| Computational Resources | LSER database software, statistical analysis package, molecular modeling suite | Regression analysis; descriptor calculation; model validation | Multiple linear regression with cross-validation capability |

This toolkit enables researchers to implement the experimental protocols described previously and generate high-quality LSER data for both fundamental studies and applied pharmaceutical research.

Advanced Applications and Recent Methodological Developments

Integration with Equation-of-State Thermodynamics

Recent advances in LSER methodology have focused on integrating the approach with equation-of-state thermodynamics to extract more detailed thermodynamic information. The development of Partial Solvation Parameters (PSP) represents a significant innovation designed to facilitate the extraction of thermodynamic information from LSER databases [1]. PSPs are characterized by their equation-of-state thermodynamic basis, which permits estimation over a broad range of external conditions [1].

This integration enables the estimation of key thermodynamic quantities beyond free energy, including the enthalpy change (ΔH) and entropy change (ΔS) upon formation of hydrogen bonds [1]. The hydrogen-bonding PSPs (σa and σb) reflect the acidity and basicity characteristics of molecules, respectively, while the dispersion PSP (σd) reflects weak dispersive interactions, and the polar PSP (σp) collectively reflects remaining Keesom-type and Debye-type polar interactions [1].

Artificial Intelligence and Machine Learning Enhancements

The field of LSER modeling is being transformed by artificial intelligence and machine learning approaches, which offer powerful alternatives to traditional regression methods. Recent developments include dataset-based machine learning and hybrid quantum mechanical/machine learning models that achieve superior accuracy in free energy predictions with reduced computational costs [10].

Machine learning models now enable rapid pKa predictions across a wide range of diverse solvents, and the integration of thermodynamic principles into machine learning frameworks allows for accurate and consistent macro-micro pKa prediction [10]. Graph-convolutional neural networks demonstrate high accuracy in reaction outcome prediction with interpretable mechanisms, representing a significant advancement over traditional LSER approaches for complex systems [10].

These AI/ML enhancements maintain the chemical interpretability of traditional LSER approaches while significantly expanding their predictive capability and application domain, particularly in pharmaceutical research where prediction of solvation-related properties is crucial for drug design and development.

The Linear Solvation Energy Relationship equation represents a sophisticated framework that deconstructs solvation phenomena into chemically meaningful interaction terms. The Abraham model, with its well-defined solute descriptors (V, E, S, A, B, L) and system-specific coefficients, provides both predictive power and mechanistic insight into molecular interactions in solution. The experimental protocols for parameter determination, particularly solvatochromic and chromatographic methods, provide robust approaches for characterizing both solute properties and system responses.

Recent advances integrating LSER with equation-of-state thermodynamics and machine learning approaches are expanding the methodology's capabilities, enabling more detailed thermodynamic analysis and enhanced predictive accuracy. For researchers in drug development, these developments offer increasingly powerful tools for predicting solubility, permeability, and other pharmaceutically relevant properties, ultimately supporting more efficient drug design and development processes. The continued evolution of LSER methodology promises to further bridge the gap between empirical correlation and fundamental molecular understanding in solvation science.

The Solvation Parameter Model, often expressed through Linear Solvation Energy Relationships (LSER), is a powerful quantitative structure-property relationship (QSPR) tool for predicting a wide range of chemical, biological, and environmental processes [11]. The model characterizes intermolecular interactions using a set of solute descriptors. The most current and widely accepted form of the Abraham model is represented by the equation [2] [12] [1]:

SP = c + eE + sS + aA + bB + vV

In this equation, SP is a free-energy related property, such as the logarithm of a partition coefficient or retention factor in chromatography [2]. The capital letters represent the solute descriptors:

- E: Excess molar refraction

- S: Dipolarity/Polarizability

- A: Overall hydrogen-bond acidity

- B: Overall hydrogen-bond basicity

- V: McGowan's Characteristic Volume

The lowercase letters (e, s, a, b, v) are the system coefficients that characterize the complementary effect of the solvent or phase on solute-solvent interactions [1]. Among these descriptors, McGowan's Characteristic Volume (V or Vx) serves as a fundamental parameter representing the molecular size and contributing to the cavity formation energy required to accommodate a solute molecule within a solvent matrix [1]. This guide explores the theoretical foundation, computational determination, experimental assessment, and practical applications of Vx within modern chemical research and drug development.

Theoretical Foundation and Mathematical Formulation

Definition and Physical Interpretation

McGowan's Characteristic Volume (Vx) is an intrinsic molecular volume, conventionally expressed in units of (cm³·mol⁻¹)/100 and entered as a plain number in the LSER equation [11]. It approximates the van der Waals volume of a molecule, corresponding to the space occupied by a molecule that is impenetrable to other molecules at ordinary temperatures [13]. In thermodynamic terms, the Vx descriptor primarily reflects the endoergic cavity formation process and dispersive interactions that occur when a solute is transferred between phases [1]. The product term vV in the LSER equation quantifies the energy required to create a suitably sized cavity in the solvent to accommodate the solute molecule.

Calculation from Molecular Structure

Unlike several other LSER descriptors that require experimental determination, Vx can be calculated directly from molecular structure using a simple algorithm based on atomic contributions and bond counts [11] [14]. The standard calculation method is as follows:

Vx = (Σ Atomic Volumes - 6.56 × B) / 100

Here the atomic volumes are expressed in cm³·mol⁻¹ and B is the total number of bonds in the molecule. Every bond is counted once, whatever its bond order, so that B = N - 1 + R for a molecule with N atoms and R rings.

Table: Atomic and Bond Contributions for Calculating McGowan's Characteristic Volume

| Component | Contribution Value | Notes |

|---|---|---|

| Carbon Atom | 16.35 cm³·mol⁻¹ | All carbon types |

| Hydrogen Atom | 8.71 cm³·mol⁻¹ | All hydrogen types |

| Other Atoms | Element-specific volumes (e.g., N 14.39, O 12.43, Cl 20.95 cm³·mol⁻¹) | One tabulated value per element |

| Bond (any order) | -6.56 cm³·mol⁻¹ per bond | Single, double, and triple bonds all count as one bond |

The calculation accounts for atomic overlaps in covalent bonding by subtracting bond contributions, resulting in a more accurate representation of the actual molecular volume than simple atomic volume summation [13]. This approach aligns with the concept that van der Waals molecular volume is smaller than the sum of individual atomic volumes due to covalent bond shortening [13].
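The atom-and-bond bookkeeping can be sketched in a few lines. This is a minimal illustration that assumes the commonly tabulated McGowan increments in cm³·mol⁻¹ (for example C 16.35, H 8.71, and 6.56 per bond regardless of bond order) and a single connected molecule, so that the bond count follows from the atom and ring counts. The function name and the dictionary layout are hypothetical.

```python
# Assumed McGowan atomic increments in cm^3/mol (commonly tabulated values).
ATOM_VOLUMES = {
    "C": 16.35, "H": 8.71, "N": 14.39, "O": 12.43, "F": 10.48,
    "Cl": 20.95, "Br": 26.21, "I": 34.53, "S": 22.91, "P": 24.87,
}
BOND_VOLUME = 6.56  # subtracted once per bond, whatever the bond order


def mcgowan_vx(atom_counts: dict, rings: int = 0) -> float:
    """Return Vx in (cm^3/mol)/100 for one connected molecule.

    atom_counts maps element symbols to counts, hydrogens included.
    The bond count uses B = N - 1 + R (N atoms, R rings).
    """
    n_atoms = sum(atom_counts.values())
    if n_atoms < 1:
        raise ValueError("molecule must contain at least one atom")
    n_bonds = n_atoms - 1 + rings
    atom_sum = sum(ATOM_VOLUMES[el] * n for el, n in atom_counts.items())
    return (atom_sum - BOND_VOLUME * n_bonds) / 100.0


benzene = mcgowan_vx({"C": 6, "H": 6}, rings=1)
ethanol = mcgowan_vx({"C": 2, "H": 6, "O": 1})
print(f"benzene Vx = {benzene:.4f}, ethanol Vx = {ethanol:.4f}")
```

For benzene the arithmetic is 6 × 16.35 + 6 × 8.71 - 12 × 6.56 = 71.64, giving Vx = 0.7164. Because only atom and ring counts are needed, the calculation requires no 3D structure, which is what makes Vx cheap to compute for large libraries.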

Diagram: Computational workflow for determining McGowan's Characteristic Volume (Vx) from molecular structure, showing the sequence of summing atomic contributions and adjusting for bond overlaps.

Relationship to Other Molecular Volume Descriptors

Comparison with van der Waals Volume

The van der Waals molecular volume (VvdW) is conceptually similar to Vx but is typically calculated using different methodologies. VvdW is defined as the volume occupied by a molecule that is impenetrable to other molecules, calculated using van der Waals radii of atoms [13]. The calculation involves representing atoms as interlocking spheres with radii corresponding to their van der Waals radii, with the covalent bond distance being shorter than the sum of these radii [13]. While Vx and VvdW both represent intrinsic molecular volumes, Vx employs a simplified calculation method based on atomic contributions and bond corrections, making it more accessible for rapid computation in large-scale chemical database applications [15].

Table: Comparison of Molecular Volume Descriptors

| Descriptor | Basis of Calculation | Units | Key Applications | Advantages |

|---|---|---|---|---|

| McGowan's Vx | Sum of atomic volumes minus bond contributions | (cm³·mol⁻¹)/100 | LSER models, partition coefficients | Rapid calculation from structure, consistent with LSER framework |

| van der Waals Volume | Sum of atomic spheres with van der Waals radii | Å³ or nm³ | Molecular modeling, packing calculations | More physically accurate representation of excluded volume |

| Molecular Volume (υ) | Molar volume from molecular weight and density | cm³·mol⁻¹ | Thermodynamic property prediction | Directly measurable, relates to bulk properties |

| Characteristic Volume | McGowan's calculated molecular volume [13] | (cm³·mol⁻¹)/100 | Chromatographic retention prediction | Specifically parameterized for LSER applications |

McGowan Volume in Context of Steric Descriptors

In modern computational chemistry, Vx represents one of several approaches to quantifying molecular size and steric properties. Contemporary research continues to develop complementary descriptors, such as buried volume and Sterimol parameters, which offer alternative perspectives on steric effects, particularly in catalysis and drug design [16]. The recently developed HeteroAryl Descriptors database (HArD), for instance, includes multiple steric descriptors to capture different aspects of molecular size and shape for heteroaromatic compounds [16]. Despite these advances, Vx remains particularly valuable in LSER applications due to its parameterization within the established solvation parameter model and its computational efficiency.

Experimental Determination and Validation

Chromatographic Methods for Descriptor Assignment

While Vx can be calculated directly from structure, experimental techniques are essential for determining the other LSER descriptors and for checking how well the complete descriptor set performs. The Solver Method provides a robust multi-technique approach for descriptor assignment [14]. A streamlined experimental design requires 26 total measurements across different techniques [14]:

Table: Experimental Measurements for LSER Descriptor Determination

| Technique | Experimental Conditions | Number of Measurements | Descriptors Validated |

|---|---|---|---|

| Gas Chromatography | 3 retention factor measurements in 60°C range on four columns | 12 measurements | L, S, A, B |

| Reversed-Phase Liquid Chromatography | 3 retention factor measurements in 30% (v/v) acetonitrile composition range on two columns | 6 measurements | S, A, B |

| Liquid-Liquid Partition | Eight partition constant measurements in totally organic and aqueous biphasic systems | 8 measurements | E, S, A, B |

This streamlined approach represents a significant improvement on earlier single-technique approaches, allowing simultaneous determination of E, S, A, B, and L descriptors with minimal bias compared to established database values [14]. For Vx specifically, calculation from structure remains the preferred method, with experimental retention data serving to validate its accuracy in the context of overall LSER model performance.

Substantial efforts have been dedicated to creating comprehensive, validated databases of LSER descriptors. The Wayne State University (WSU) descriptor database represents one of the most authoritative sources, recently updated to the WSU-2025 version containing descriptors for 387 varied compounds [11]. This expanded and updated database provides improved precision and predictive capability compared to its predecessors, with Vx values calculable for all entries [11]. Computational chemistry toolkits such as the Chemistry Development Kit (CDK) implement algorithms for calculating Vx and related volume descriptors, enabling high-throughput screening of compound libraries [15].

Applications in Pharmaceutical Research and Environmental Chemistry

Drug Discovery and Bioavailability Prediction

In pharmaceutical research, Vx serves as a critical parameter for predicting absorption, distribution, metabolism, and excretion (ADME) properties. The descriptor contributes to models of lipophilicity and membrane permeability, which directly influence drug bioavailability. In reversed-phase liquid chromatography, which is often used as a surrogate for biomembrane partitioning, the Vx term directly influences retention factor values through its relationship to cavity formation energy [13]. Research on per- and polyfluoroalkyl substances (PFAS) binding to human serum albumin (HSA) has demonstrated the significance of molecular volume descriptors in predicting bioaccumulation potential and protein interaction affinities [17].

Environmental Fate Modeling

Vx is particularly valuable in environmental chemistry for predicting the partitioning behavior of organic contaminants between different environmental compartments. The descriptor features prominently in models predicting air-water, soil-water, and sediment-water partition coefficients, which are essential for understanding the transport, persistence, and ecological impact of pollutants. The solvation parameter model, with Vx as a key component, has been successfully applied to predict the environmental distribution of diverse chemical classes, from hydrocarbons to complex industrial chemicals [1].

Chromatographic Retention Prediction

In analytical chemistry, Vx contributes significantly to accurate retention prediction in various chromatographic modes, including reversed-phase liquid chromatography and gas chromatography [12]. The characteristic volume descriptor helps characterize the hydrophobic contribution to retention, complementing polar and hydrogen-bonding interactions captured by other LSER parameters. Recent research has explored fast characterization methods based on the Abraham solvation parameter model for both reversed-phase and hydrophilic interaction liquid chromatography (HILIC), with Vx playing a consistent role in retention models [12].

Current Research and Emerging Applications

Integration with Equation-of-State Thermodynamics

Recent research has explored the interconnection between LSER descriptors and equation-of-state thermodynamics through the development of Partial Solvation Parameters (PSP) [1]. This approach aims to extract thermodynamic information from the LSER database and facilitate its transfer to other molecular thermodynamics applications. The Vx descriptor contributes to the estimation of dispersion interactions within this framework, helping to bridge QSPR-type databases with equation-of-state developments [1].

Expansion to Heteroaromatic Compound Space

The development of specialized databases for heteroaryl substituents represents an important advancement in steric descriptor applications. The recently introduced HeteroAryl Descriptors database (HArD) comprises DFT-computed steric and electronic descriptors for over 31,500 heteroaryl substituents [16]. While including alternative steric parameters like buried volume and Sterimol parameters, such databases complement the Vx descriptor by providing specialized characterization of important pharmaceutical scaffolds [16].

Machine Learning and Predictive Modeling

Vx continues to serve as a fundamental feature in quantitative structure-activity relationship (QSAR) and machine learning models for chemical property prediction. Its computational efficiency and physical interpretability make it particularly valuable for large-scale virtual screening and chemical priority setting. Recent studies on PFAS-protein interactions have demonstrated how volume-related descriptors like the packing density index (PDI), defined as the ratio between McGowan volume and total surface area, can provide insights into binding affinity and toxicity mechanisms [17].

Research Reagent Solutions

Table: Essential Computational and Experimental Resources for McGowan Volume Research

| Resource/Tool | Type | Function/Application | Key Features |

|---|---|---|---|

| WSU-2025 Descriptor Database [11] | Database | Reference data for LSER parameters | 387 compounds with validated descriptors; updated values |

| Solver Method [14] | Methodology | Experimental descriptor assignment | Multi-technique approach (GC, LC, partition) |

| Chemistry Development Kit (CDK) [15] | Software Library | Molecular descriptor calculation | Open-source, includes Vx implementation |

| MarvinSketch [13] | Software Application | van der Waals volume and area calculation | Commercial implementation with graphical interface |

| HArD Database [16] | Specialized Database | Steric descriptors for heteroaryl groups | >31,500 heteroaryl substituents with DFT-computed parameters |

| Abraham Solvation Parameter Model [1] | Theoretical Framework | Prediction of partition and solubility properties | Established LSER equations with system-specific coefficients |

Linear Solvation Energy Relationships (LSERs) represent a powerful quantitative approach for predicting a wide array of physicochemical and biological properties based on the molecular structure of a compound. The LSER model, as developed by Abraham, is applied as two companion equations that share the E, S, A, and B terms and differ only in the size-related descriptor:

Property = c + eE + sS + aA + bB + vVx (transfer between two condensed phases)

Property = c + eE + sS + aA + bB + lL (transfer from the gas phase to a condensed phase)

In this model, the capital letters (Vx, E, S, A, B, L) are the solute descriptors, each quantifying a specific aspect of a molecule's potential for intermolecular interactions. The lower-case letters (c, v, e, s, a, b, l) are the system coefficients, determined via regression analysis, which reflect the property's sensitivity to each interaction type in a given system. The E descriptor, officially termed the excess molar refractivity, is a central parameter in this framework. It serves as a combined measure of a molecule's polarizability and its capacity to participate in dispersion interactions. Unlike simple refractive index measurements, the E descriptor is specifically constructed to be largely independent of strong, specific interactions like hydrogen bonding, making it a unique and fundamental property in LSER studies for predicting solubility, partitioning behavior, and pharmacokinetic properties.

Theoretical Foundation of Excess Molar Refractivity

Defining Excess Molar Refractivity

The excess molar refractivity (E) is derived from the Lorenz-Lorentz equation, which relates the refractive index of a substance to its molar mass and density. The molar refractivity (R) of a compound is given by:

R = (n² - 1)/(n² + 2) × (M / ρ)

Where:

- n is the refractive index

- M is the molar mass

- ρ is the density

The excess molar refractivity is then defined as the difference between the compound's molar refractivity and that of a hypothetical alkane of the same characteristic volume. This "excess" quantifies the contribution from π- and n-electrons to the overall polarizability, which is why E is treated as a measure of the solute's polarizability beyond that of a saturated hydrocarbon of equal size. In the context of LSERs, E is conventionally scaled to units of (cm³·mol⁻¹)/10 so that its values are of similar magnitude to the other descriptors. For liquids it can be calculated from the refractive index; for solids it is usually estimated from structure or fitted from experimental chromatographic or partition data.
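A minimal sketch of this "excess" calculation follows. It assumes Abraham's commonly cited working form, in which the molar volume M/ρ is replaced by the characteristic volume Vx and the alkane baseline is a linear function of Vx. That form and its constants (2.83195 and 0.52553) are not spelled out in this article and are labeled as assumptions in the code. The benzene inputs are literature values used only for illustration.

```python
def excess_molar_refraction(refractive_index: float, vx: float) -> float:
    """Estimate E in (cm^3/mol)/10 from a liquid's refractive index and Vx.

    Assumed working form (not given in this article):
        MRx = 10 * (n^2 - 1) / (n^2 + 2) * Vx
        E   = MRx - (2.83195 * Vx - 0.52553)
    where the bracketed term is the molar refraction of an alkane
    with the same characteristic volume.
    """
    n2 = refractive_index ** 2
    mrx = 10.0 * (n2 - 1.0) / (n2 + 2.0) * vx
    alkane_baseline = 2.83195 * vx - 0.52553
    return mrx - alkane_baseline


# Benzene: n ~ 1.5011 (20 degrees C), Vx = 0.7164 (assumed illustrative inputs).
e_benzene = excess_molar_refraction(1.5011, 0.7164)
print(f"E(benzene) = {e_benzene:.2f}")
```

With these inputs the result is close to 0.61, in line with the E value usually listed for benzene. Because the alkane baseline is subtracted, a saturated hydrocarbon should come out near zero under the same formula.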

Physical Interpretation: Polarizability and Dispersion Forces

The E descriptor fundamentally captures a molecule's ability to undergo electronic polarization—the temporary distortion of its electron cloud in response to an electric field, such as that generated by a nearby molecule. This induced dipole moment is the origin of dispersion (London) forces, which are universal attractive forces present between all atoms and molecules.

- Polarizability: A higher value of the E descriptor indicates a larger, more easily deformable electron cloud. This is typically associated with molecules containing conjugated π-systems, aromatic rings, and heavy atoms (e.g., sulfur, bromine, iodine).

- Dispersion Interactions: In solution, these induced dipole-induced dipole interactions are a major component of the solvation energy. A solute with a high E value will generally experience stronger dispersion interactions with non-polar or polarizable solvents and phases. In biological systems, these interactions are critical for drug binding to hydrophobic pockets in proteins and for passive diffusion through lipid membranes.

Experimental Determination and Methodologies

Direct Measurement via Refractometry

The most straightforward method for determining molar refractivity, and by extension informing the E descriptor, involves measuring the refractive index (n) and density (ρ) of a liquid solute.

Table 1: Key Instrumentation for Direct Refractivity Measurements

| Instrument | Measured Property | Brief Principle of Operation | Key Considerations |

|---|---|---|---|

| Abbé Refractometer | Refractive Index (n) | Measures the critical angle of total internal reflection for a liquid sample. | Requires only a small sample volume; standard method for n. |

| Digital Density Meter | Density (ρ) | Measures the natural oscillation frequency of a U-shaped glass tube filled with the sample (e.g., Anton Paar densimeter). | Provides high-precision density data; often thermostatted. |

Experimental Protocol:

- Sample Preparation: Ensure the compound of interest is pure and in liquid form. If solid, it may need to be dissolved in a solvent, though this complicates the calculation.

- Density Measurement: Fill the densimeter cell with the sample and record the density (ρ) at a controlled temperature (e.g., 25.0 °C). The instrument is typically calibrated with air and water.

- Refractive Index Measurement: Place a drop of the sample on the prism of the refractometer and record the refractive index (n) at the same controlled temperature.

- Calculation: Use the measured n and ρ values, along with the known molar mass (M), to calculate the molar refractivity (R) using the Lorenz-Lorentz equation.
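The final calculation step of the protocol above can be sketched as follows. The function name is hypothetical, and the benzene inputs (refractive index, molar mass, density near 20 °C) are literature values assumed for illustration only.

```python
def molar_refractivity(refractive_index: float, molar_mass: float, density: float) -> float:
    """Return R = (n^2 - 1) / (n^2 + 2) * (M / rho).

    With M in g/mol and rho in g/cm^3, R is returned in cm^3/mol.
    n and rho must be measured at the same temperature.
    """
    if density <= 0 or molar_mass <= 0:
        raise ValueError("molar mass and density must be positive")
    n2 = refractive_index ** 2
    return (n2 - 1.0) / (n2 + 2.0) * (molar_mass / density)


# Assumed illustrative inputs for benzene near 20 degrees C.
r_benzene = molar_refractivity(refractive_index=1.5011, molar_mass=78.11, density=0.8765)
print(f"R(benzene) = {r_benzene:.2f} cm^3/mol")
```

For these inputs R is about 26.3 cm³·mol⁻¹. Since both n and ρ depend on temperature, thermostatting both instruments to the same set point, as the protocol specifies, matters more than the precision of either reading alone.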

Chromatographic Determination of the E Descriptor

For compounds that are not readily available as pure liquids, or for high-throughput determination, reversed-phase liquid chromatography (RPLC) is a powerful and common indirect method. The retention factor (log k) in a chromatographic system correlates with the LSER descriptors.

Experimental Protocol for RPLC-Derived E Values:

- Chromatographic System Setup:

  - Column: A non-polar stationary phase is used, such as an octadecylsilyl (ODS or C18) column (e.g., Purospher RP-18e) [18].

  - Mobile Phase: A binary mixture of water and a water-miscible organic modifier (e.g., methanol, acetonitrile) is used.

  - Detection: A UV-Vis or refractive index detector is employed.

- Calibration: A set of reference compounds with known descriptor values, including E, is run isocratically at different mobile phase compositions. The retention factor k is calculated for each compound as k = (tR - t0) / t0, where tR is the compound's retention time and t0 is the column void time; log k is then used as the dependent variable.

- Measurement: The compound of unknown E is run under the same chromatographic conditions, and its log k is measured.

- Correlation and Prediction: A multi-linear regression is performed on the calibration set data to establish the system coefficients for the LSER equation in that specific chromatographic system. The established model is then used to back-calculate the unknown solute's E descriptor from its measured log k.
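The last two steps can be sketched as below, assuming the system coefficients have already been obtained from the calibration regression and that the solute's other descriptors (S, A, B, V) are known. All coefficient values and retention times here are hypothetical placeholders, not measured data.

```python
import math


def retention_factor(t_r: float, t_0: float) -> float:
    """k = (tR - t0) / t0, with tR the retention time and t0 the void time."""
    if t_0 <= 0 or t_r < t_0:
        raise ValueError("require t0 > 0 and tR >= t0")
    return (t_r - t_0) / t_0


def predicted_log_k(coeffs: dict, descriptors: dict) -> float:
    """Forward model: log k = c + eE + sS + aA + bB + vV."""
    return coeffs["c"] + sum(coeffs[key] * descriptors[key.upper()] for key in "esabv")


def back_calculate_e(log_k: float, coeffs: dict, known: dict) -> float:
    """Solve the LSER equation for E given log k and known S, A, B, V."""
    if abs(coeffs["e"]) < 1e-12:
        raise ValueError("e coefficient is zero; E cannot be resolved in this system")
    rest = coeffs["c"] + sum(coeffs[key] * known[key.upper()] for key in "sabv")
    return (log_k - rest) / coeffs["e"]


# Hypothetical system coefficients from a calibration regression.
COEFFS = {"c": -0.30, "e": 0.25, "s": -0.60, "a": -0.45, "b": -1.80, "v": 1.90}
# Known descriptors of the test solute (E is the unknown).
KNOWN = {"S": 0.52, "A": 0.00, "B": 0.14, "V": 0.7164}

k = retention_factor(t_r=8.20, t_0=1.50)  # hypothetical retention times in minutes
e_estimate = back_calculate_e(math.log10(k), COEFFS, KNOWN)
print(f"k = {k:.3f}, estimated E = {e_estimate:.2f}")
```

Dividing by the e coefficient amplifies any error in log k when e is small, so a single-system estimate like this is fragile. Combining retention data from several systems, as in multi-technique descriptor assignment, is the more robust route.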

Quantitative Data and Property Correlations

The E descriptor's utility is demonstrated by its strong correlation with numerous physicochemical properties. The following table summarizes key relationships observed in research.

Table 2: Correlations of the E Descriptor with Physicochemical and Biological Properties

| Property / System | Correlation with E | Interpretation & Application | Representative Study |

|---|---|---|---|

| Octanol-Water Partition Coefficient (log P) | Positive | Higher polarizability favors partitioning into the organic (octanol) phase due to enhanced dispersion interactions. | Foundational LSER studies |

| Blood-Brain Barrier Permeability (log BB) | Positive | Compounds with greater polarizability diffuse more readily through lipid membranes of the BBB, a key consideration in CNS drug development [18]. | QSAR studies on drug candidates [18] |

| Chromatographic Retention (log k) on Non-polar Phases | Positive | Increased dispersion interactions with the alkyl chain (C18) stationary phase lead to longer retention times. | RPLC method development |

| Aqueous Solubility | Generally Negative (for non-ionic compounds) | Water is weakly polarizable and offers little dispersion stabilization to high-E solutes, which tend to be "squeezed out" into a non-aqueous phase. | Solubility prediction models |

Research on poly(ethylene glycol)s (PEGs) and their aqueous solutions further illustrates the role of intermolecular interactions, including those captured by the E descriptor. Studies measuring properties like excess molar volume (VmE) and deviation in viscosity (Δη) provide insights into the disruption of water's hydrogen-bonding network and the formation of new glycol-water H-bonds, which are influenced by the polarizability and size of the solute molecules [19].

The Scientist's Toolkit: Essential Reagents and Materials

Successful experimental determination of parameters related to excess molar refractivity relies on specific reagents and instrumentation.

Table 3: Key Research Reagent Solutions and Materials

| Item / Reagent | Function / Purpose | Example & Notes |

|---|---|---|

| ODS (C18) Chromatography Column | Stationary phase for reversed-phase HPLC; provides a non-polar environment for measuring partitioning behavior. | Purospher RP-18e [18]; C18 phases are the most widely used columns for chromatographic log P estimation and LSER descriptor determination. |

| Immobilized Artificial Membrane (IAM) Column | Biomimetic stationary phase that mimics cell membranes; used for predicting pharmacokinetic properties like BBB permeability [18]. | IAM.PC.DD2 columns are used to study passive drug transport. |

| HPLC-Grade Solvents | Mobile phase components for creating a consistent elution environment in chromatography. | Acetonitrile, Methanol, and High-purity Water (e.g., from a Milli-Q system). |

| Reference Compound Sets | Calibrants with known LSER descriptors for establishing system coefficients in chromatographic methods. | Sets often include simple aromatics, alkanes, and compounds with various functional groups. |

| Digital Refractometer | Instrument for direct, precise measurement of a solution's refractive index (n). | Abbé or automated digital refractometers. |

| Vibrating-Tube Density Meter | Instrument for high-precision density (ρ) measurements of liquids and solutions. | Anton Paar digital densimeter, used in studies of aqueous PEG solutions [19]. |

Applications in Pharmaceutical Research and Drug Development

The E descriptor is particularly valuable in Quantitative Structure-Activity Relationship (QSAR) and Quantitative Structure-Property Relationship (QSPR) modeling, which are cornerstones of modern drug discovery.

Predicting Blood-Brain Barrier (BBB) Permeability: The ability of a drug candidate to cross the BBB is crucial for central nervous system targets. Research has established that BBB penetration is promoted by high lipophilicity and a weak hydrogen bonding potential [18]. The E descriptor, representing favorable dispersion interactions with the lipid-rich membrane, is a key positive contributor in LSER models for log BB (where log BB = log(Cbrain/Cblood)) [18]. Models using chromatographic retention data from ODS and IAM columns to predict log BB rely heavily on an accurate determination of the E descriptor.

Solubility and Absorption Prediction: During the early stages of drug design, predicting a molecule's aqueous solubility and intestinal absorption is vital. The E descriptor contributes to these models by accounting for the polarizability-driven dispersion interactions that favor partitioning into biological membranes, while the energy cost of cavity formation in water is carried mainly by the volume term (Vx).

Within the comprehensive LSER framework, the excess molar refractivity (E) descriptor is an indispensable tool for quantifying the polarizability and dispersion interaction capacity of a molecule. Its determination, whether through direct physico-chemical measurements or indirect chromatographic methods, provides critical insights that drive predictive modeling in environmental chemistry and pharmaceutical sciences. As drug discovery efforts increasingly rely on in silico methods to prioritize synthetic targets, the accurate determination and application of the E descriptor and its fellow LSER parameters (Vx, S, A, B, L) will remain a fundamental aspect of rational molecular design, enabling researchers to optimize key properties like membrane permeability and bioavailability more efficiently.

Linear Solvation Energy Relationships (LSERs) utilize a set of solute descriptors, commonly known as the Abraham descriptors, to quantitatively predict physicochemical properties and biological activities. These descriptors are conventionally written as Vx, E, S, A, B, and L, where each symbol represents a specific molecular property: Vx is McGowan's characteristic volume, E is the excess molar refractivity, S represents dipolarity/polarizability, A denotes hydrogen-bond acidity, B signifies hydrogen-bond basicity, and L is the logarithm of the gas-hexadecane partition coefficient at 298 K. The S descriptor specifically quantifies a molecule's ability to engage in dipole-dipole and dipole-induced dipole interactions. It measures a compound's polarity and polarizability, representing the solute's effective tendency to stabilize itself through nonspecific interactions with polar solvents. In pharmacological contexts, the S descriptor helps predict solubility, permeability, and membrane transport properties, as these processes fundamentally depend on molecular interactions in various biological environments. Accurate determination of S is therefore crucial for rational drug design, particularly in optimizing absorption, distribution, and bioavailability characteristics of lead compounds.

Theoretical Foundations of Dipolarity and Polarizability

Electric Dipole Moments in Molecular Systems

An electric dipole moment arises from the separation of positive and negative charges within a molecular system. For the simplest case of two point charges +q and -q separated by distance vector d, the dipole moment μ is defined as μ = qd. In molecular systems, permanent dipole moments exist in neutral molecules due to unequal electron distribution between atoms of different electronegativities. The magnitude of this permanent dipole significantly influences how a molecule interacts with its environment, particularly in condensed phases and biological systems. When a molecule with a permanent dipole moment is placed in an electric field, the field exerts a torque that tends to align the dipole with the field direction, while thermal motion tends to randomize the orientation. This competition between ordering and disordering forces governs many dielectric properties of materials and contributes to the S descriptor in LSER formulations. The energy of interaction between a permanent dipole and an external electric field is given by U = -μ·E, where E here denotes the electric field vector rather than the excess molar refractivity descriptor. This expression forms the basis for understanding orientation polarization in dielectric materials. [20]
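The two relations in this paragraph can be made concrete with a small numerical sketch. It generalizes μ = qd to a sum over point charges, converts to Debye, and evaluates U = -μ·E for a given field. The function names, the toy charge arrangement, and the field strength are hypothetical; the field variable is called `field` to avoid confusion with the E descriptor.

```python
E_ANGSTROM_TO_DEBYE = 4.80320   # 1 elementary charge x 1 angstrom, in Debye
DEBYE_TO_C_M = 3.33564e-30      # 1 Debye in coulomb-metres
K_B = 1.380649e-23              # Boltzmann constant in J/K


def dipole_moment_debye(charges):
    """Dipole vector (Debye) of point charges.

    charges: iterable of (q, (x, y, z)) with q in elementary charges
    and positions in angstroms. The set should be net neutral so the
    result does not depend on the choice of origin.
    """
    charges = list(charges)
    return tuple(
        sum(q * pos[axis] for q, pos in charges) * E_ANGSTROM_TO_DEBYE
        for axis in range(3)
    )


def interaction_energy_joule(mu_debye, field):
    """U = -mu . E, with mu in Debye and the field in V/m."""
    return -sum(m * DEBYE_TO_C_M * f for m, f in zip(mu_debye, field))


# Toy example: +1 e and -1 e separated by 1 angstrom along x.
mu = dipole_moment_debye([(+1.0, (0.5, 0.0, 0.0)), (-1.0, (-0.5, 0.0, 0.0))])
u = interaction_energy_joule(mu, field=(1.0e8, 0.0, 0.0))
thermal = K_B * 298.15
print(f"|mu| = {mu[0]:.3f} D, U = {u:.3e} J, |U|/kT = {abs(u) / thermal:.2f}")
```

The ratio |U|/kT at the end is the quantity that decides the competition described above: when it is well below 1, thermal motion dominates and only a small net alignment survives.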

Polarization Mechanisms and Polarizability

Polarizability (α) describes how easily the electron cloud of a molecule can be distorted by an external electric field, leading to an induced dipole moment (μ_ind = αE). This induced moment exists only while the field is applied and contributes to the overall polarization of the substance. The relationship between molecular polarizability and the S descriptor is fundamental to LSER theory, as it captures the nonspecific, non-directional interactions between solute and solvent molecules. Several polarization mechanisms contribute to a molecule's overall response to electric fields: Electronic polarization involves displacement of the electron cloud relative to the nuclei, atomic polarization involves relative displacement of atomic nuclei within the molecule, and orientation polarization involves alignment of permanent dipoles with the field. For drug-like molecules, the electronic polarizability often correlates with π-electron systems and aromatic character, making it particularly relevant for pharmaceutical compounds containing aromatic rings or conjugated systems. The S descriptor effectively integrates these various polarization contributions into a single parameter that describes a molecule's overall tendency to engage in dipole-related interactions. [20]
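A minimal sketch of the induced-dipole relation μ_ind = αE in practical units follows. It assumes the polarizability is supplied as a polarizability volume in Å³, as is common in tabulations, and converts it to SI through α = 4πε₀α′. The function name and the example inputs are hypothetical.

```python
import math

EPS0 = 8.8541878128e-12      # vacuum permittivity in F/m
DEBYE_TO_C_M = 3.33564e-30   # 1 Debye in coulomb-metres


def induced_dipole_debye(alpha_volume_A3: float, field_v_per_m: float) -> float:
    """Induced dipole (Debye) from mu_ind = alpha * E.

    alpha_volume_A3: polarizability volume in cubic angstroms.
    field_v_per_m:   electric field strength in V/m.
    """
    alpha_si = 4.0 * math.pi * EPS0 * alpha_volume_A3 * 1e-30  # C m^2 / V
    return alpha_si * field_v_per_m / DEBYE_TO_C_M


# Hypothetical inputs: a 10 cubic-angstrom polarizability volume in a 1e9 V/m field.
mu_ind = induced_dipole_debye(10.0, 1.0e9)
print(f"Induced dipole = {mu_ind:.3f} D")
```

For these inputs the induced moment is roughly a third of a Debye. The response is strictly linear in the field in this model, so it vanishes when the field is removed, in contrast to a permanent dipole.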

Interfacial Effects on Dipole Behavior

When molecules are situated near interfaces, as commonly occurs in biological systems and chromatography, their dipole moments and polarization characteristics can be significantly modified. The presence of an interface creates a dielectric discontinuity that affects both the inducing field and the radiation emitted by the oscillating dipole. Research has shown that the electric dipole moment of a particle near an interface can be described by resolvent functions Υ∥(h) and Υ⊥(h) that depend on the dimensionless distance h between the particle and the interface. These functions exhibit resonance features due to the back-action mechanism where dipole radiation reflects at the interface and modifies its own source. This phenomenon is particularly relevant for understanding molecular behavior at biological membranes, protein surfaces, and stationary phases in chromatographic systems used to determine LSER descriptors. The power emitted by the particle depends on h due to interference between source radiation and reflected radiation, creating an additional distance dependence that shows resonance peaks under certain conditions. [20]

Experimental Methodologies for Dipole Moment Determination

Polarization Laser Spectroscopy

Polarization laser spectroscopy represents a sophisticated approach for measuring electric dipole moments between degenerate quantum states. This method exploits the strong polarization dependence of atomic photo-excitation behavior in a controlled vacuum environment. The experimental protocol involves a two-step resonance excitation process with two laser beams, where precise control of laser polarizations enables different excitation conditions within the same excitation scheme. [21]

Table 1: Key Parameters in Polarization Laser Spectroscopy for Dipole Moment Measurement

| Parameter | Specification | Function |

|---|---|---|

| Laser System | Tunable narrow-bandwidth lasers | Provides precise excitation energy for resonant transitions |

| Vacuum Chamber | Ultra-high vacuum (≤10⁻⁸ mbar) | Eliminates collisional broadening and quenching |

| Polarization Control | Linear, circular, or elliptical polarizers | Creates specific excitation conditions for quantum states |

| Detection System | Fluorescence or ionization detectors | Measures population transfer between quantum states |

| Quantum Mechanical Model | Includes μ as fitting parameter | Extracts dipole moment from experimental data |

The experimental workflow begins with atomization of the sample in the vacuum chamber, typically using high-temperature heating or laser ablation. Two independently tunable laser systems with precise polarization control are then employed for sequential excitation of the target quantum states. The first laser prepares atoms in an intermediate state, while the second laser promotes them to the final excited state. By systematically varying the polarizations of both lasers and measuring the resulting excitation curves, researchers can obtain sufficient data to extract the transition dipole moment through quantum mechanical fitting. This method has been successfully applied to uranium atom transitions, revealing dipole moments of 0.16 and 4.1 Debye for specific transitions that had not been previously measured directly. [21]

Electric Field-Induced Alignment Methods

Another approach for determining molecular dipole moments involves observing the alignment or orientation of molecules in external electric fields. Stark spectroscopy applies a controlled electric field to a molecular sample and measures the resulting shifts and splittings in rotational or vibrational spectra. The magnitude of these effects depends directly on the permanent dipole moment, allowing for precise quantification. For symmetric top molecules, the Stark effect produces characteristic splitting patterns that can be analyzed to determine both the magnitude and orientation of the dipole moment vector relative to the principal molecular axes. Modern implementations of this technique often combine supersonic jet expansion with high-resolution microwave or infrared spectroscopy, enabling the study of isolated molecules with minimal thermal broadening.

Computational Approaches for S Descriptor Determination

While experimental methods provide direct measurements of dipole moments, computational approaches offer efficient means for estimating the S descriptor for LSER applications. Quantum mechanical calculations, particularly density functional theory (DFT), can predict molecular dipole moments and polarizabilities with reasonable accuracy. These calculations typically involve geometry optimization followed by property evaluation at the equilibrium structure. For the S descriptor specifically, chromatographic methods using well-characterized stationary phases provide experimental determination through solvation parameter models. The S value is derived from the difference in retention behavior between polar and nonpolar stationary phases, capturing the molecule's overall dipolarity and polarizability characteristics that govern its interactions in biological and environmental systems.

Research Reagent Solutions for Dipole Moment Studies

Table 2: Essential Research Reagents for Dipole Moment and Polarization Experiments

| Reagent/Equipment | Function | Technical Specifications |
|---|---|---|
| Tunable Dye Laser System | Provides precise excitation wavelengths | Spectral resolution <0.1 cm⁻¹, wavelength range 250-1000 nm |
| Ultra-High Vacuum Chamber | Creates collision-free environment for spectroscopy | Base pressure ≤10⁻⁸ mbar, with sample introduction system |
| Electro-Optic Modulators | Controls polarization states of laser beams | Modulation frequency >100 kHz, extinction ratio >1000:1 |
| Quantum Chemistry Software | Computes molecular electronic properties | DFT functionals (B3LYP, ωB97X-D), basis sets (cc-pVDZ, aug-cc-pVTZ) |
| High-Voltage Stark Electrodes | Generates uniform electric fields for alignment | Field strength up to 100 kV/cm, parallel plate configuration |
| Supersonic Jet Expansion Source | Cools molecules for high-resolution spectroscopy | Backing pressure 1-10 bar, pulsed valve operation |

Visualization of Experimental Workflows

[Figure: Polarization Spectroscopy Methodology]

[Figure: LSER S Descriptor Determination Framework]

Data Presentation and Comparative Analysis

Experimental Dipole Moment Measurements

Table 3: Experimentally Determined Electric Dipole Moments for Selected Transitions

| Atomic/Molecular System | Transition Energy (cm⁻¹) | Dipole Moment (Debye) | Measurement Method |
|---|---|---|---|
| Uranium Atom | 16,900 → 33,939 | 0.16 | Polarization Laser Spectroscopy |
| Uranium Atom | 16,900 → 34,599 | 4.10 | Polarization Laser Spectroscopy |
| Representative Drug Molecule | N/A | 1.5-4.5 | Stark Spectroscopy |
| Polar Aromatic Compound | N/A | 3.0-5.0 | Computational DFT Methods |

The data presented in Table 3 illustrate the range of dipole moments measurable with current techniques. The large difference between the two uranium transitions (0.16 vs. 4.10 Debye) shows how widely the dipole moment can vary even within a single atomic system. For pharmaceutical compounds, dipole moments typically fall in the 1.5-5.0 Debye range, with higher values often correlating with improved aqueous solubility but potentially reduced membrane permeability. [21]

Correlation Between Dipole Properties and S Descriptor

Table 4: Relationship Between Molecular Properties and LSER S Descriptor

| Molecular Characteristic | Effect on S Descriptor | Impact on Solvation Properties |
|---|---|---|
| Large Permanent Dipole Moment | Increases S value | Enhanced solubility in polar solvents |
| High Electronic Polarizability | Increases S value | Stronger dispersion interactions |
| Conjugated π-Systems | Significantly increases S | Improved interaction with aromatic stationary phases |
| Polar Functional Groups | Increases S | Better hydration and polar solvation |
| Nonpolar Aliphatic Chains | Decreases S | Increased hydrophobicity |

The S descriptor integrates several dipole-related properties into a single parameter that helps predict solvation behavior across different media. Understanding these relationships enables researchers to rationally design compounds with optimized distribution characteristics for pharmaceutical applications. Molecules with balanced S values typically exhibit improved drug-like properties: polar enough for adequate aqueous solubility, yet not so polar that membrane permeation is impaired.

Hydrogen-bond (H-bond) acidity and basicity are fundamental molecular properties that quantify a substance's capacity to act as a hydrogen-bond donor (HBD) or acceptor (HBA), respectively. Within the framework of Linear Solvation Energy Relationships (LSERs), these are formally defined as the solute descriptors A (hydrogen-bond acidity) and B (hydrogen-bond basicity) [22]. They are integral components of the Abraham solvation parameter model, which expresses a solute's property (such as a partition coefficient) as a linear combination of its molecular descriptors: Vx, E, S, A, B, and L [23]. The A parameter represents the solute's ability to donate a hydrogen bond, while the B parameter represents its ability to accept one [22]. The accurate determination of these parameters is critical for researchers and drug development professionals, as they allow for the prediction of a molecule's behavior in different environments, influencing solubility, permeability, bioavailability, and binding affinity [24] [25] [23].
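The linear combination described above is commonly written as log SP = c + eE + sS + aA + bB + vVx for transfer between condensed phases, with the lL term replacing vVx for gas-to-condensed-phase transfer. The sketch below evaluates the condensed-phase form. The class and function names are assumptions made for illustration, and the numerical coefficients and descriptors are placeholders, not fitted values for any real system or compound.

```python
from dataclasses import dataclass


@dataclass
class SoluteDescriptors:
    E: float   # excess molar refraction
    S: float   # dipolarity/polarizability
    A: float   # hydrogen-bond acidity
    B: float   # hydrogen-bond basicity
    Vx: float  # McGowan characteristic volume


@dataclass
class SystemCoefficients:
    c: float   # regression intercept
    e: float
    s: float
    a: float
    b: float
    v: float


def abraham_log_sp(solute, system):
    """Evaluate log SP = c + e*E + s*S + a*A + b*B + v*Vx."""
    return (system.c
            + system.e * solute.E
            + system.s * solute.S
            + system.a * solute.A
            + system.b * solute.B
            + system.v * solute.Vx)


# Placeholder values chosen only to exercise the arithmetic (NOT literature data)
example_solute = SoluteDescriptors(E=0.8, S=0.9, A=0.3, B=0.5, Vx=1.0)
example_system = SystemCoefficients(c=0.1, e=0.5, s=-1.0, a=0.0, b=-3.5, v=3.8)
log_sp = abraham_log_sp(example_solute, example_system)
print(f"log SP = {log_sp:.2f}")
```

Keeping solute descriptors and system coefficients in separate structures mirrors the model itself: the descriptors belong to the molecule, while the coefficients characterize the pair of phases.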

Experimental Scales and Measurement Methodologies

Several experimental scales have been developed to quantify hydrogen-bond strength, primarily based on measuring equilibrium constants for complex formation in poorly coordinating solvents.

The pKBHX Scale for Hydrogen-Bond Basicity

The pKBHX scale is a widely used measure of hydrogen-bond acceptor strength. It is defined as the base-10 logarithm of the equilibrium constant (K) for the 1:1 complex formation between a hydrogen-bond acceptor and the reference donor 4-fluorophenol in carbon tetrachloride [24] [26]. Under these conditions, pKBHX values for common organic functional groups span approximately six orders of magnitude, typically ranging from -1 for weak acceptors like alkenes to 5 for strong acceptors like amides and N-oxides [24] [26].
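Since pKBHX is a base-10 logarithm of an association constant, it converts directly into K and, through ΔG° = −RT ln K, into a complexation free energy. The sketch below shows that conversion. The function names are assumptions, and the free energy presumes K is expressed relative to a 1 mol/L standard state at 298.15 K, which this text does not state explicitly.

```python
import math

GAS_CONSTANT = 8.314462618  # J/(mol*K)


def association_constant(pkbhx):
    """Equilibrium constant K for 1:1 complex formation, from pKBHX = log10(K)."""
    return 10.0 ** pkbhx


def complexation_free_energy_kj_mol(pkbhx, temperature_k=298.15):
    """Standard free energy of complexation, dG = -R*T*ln(K), in kJ/mol."""
    k_assoc = association_constant(pkbhx)
    return -GAS_CONSTANT * temperature_k * math.log(k_assoc) / 1000.0


# Scan the approximate range quoted above for organic functional groups
for pk in (-1.0, 0.0, 2.0, 5.0):
    k_val = association_constant(pk)
    dg = complexation_free_energy_kj_mol(pk)
    print(f"pKBHX = {pk:+.1f}  K = {k_val:10.1f}  dG = {dg:7.2f} kJ/mol")
```

Each pKBHX unit corresponds to a tenfold change in K, so modest-looking differences between acceptors can represent large differences in complex population.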

Table 1: Experimentally Measured pKBHX Values for Representative Functional Groups

| Functional Group | Typical pKBHX Range | Representative Example |
|---|---|---|
| Alkene | -1 to 0 | --- |
| Amine | ~1.4 (varies with sterics) | Triisopropylamine: 0.30 [24] |
| Amide | 2.0 to 2.5 | --- |
| Carbonyl | ~2.0 (varies with substitution) | --- |
| N-Oxide | >3.0 | --- |

The Abraham Scale and the ln Keq Measurement

Abraham's A and B parameters are determined from the log₁₀K values for hydrogen bond formation between acids and bases in inert solvents like CCl₄ [22]. An alternative, experimentally accessible approach for measuring donor strength uses a colorimetric pyrazinone sensor.

Experimental Protocol: UV-Vis Titration for Hydrogen-Bond Donor Strength

- Principle: A pyrazinone sensor undergoes a colorimetric shift upon complexation with a hydrogen-bond donor. The binding constant (Keq), determined by titration, is a direct measure of the analyte's hydrogen-bond donating ability, reported as ln Keq [25].

- Procedure:

  - Environment: Titrations are performed in dichloromethane to limit confounding non-covalent interactions and amplify hydrogen bonding effects [25].

  - Measurement: The analyte is titrated into a solution of the pyrazinone sensor.

  - Analysis: The resulting UV-Vis spectral shifts are measured, and the binding constant (Keq) is calculated from the titration data. A larger ln Keq value corresponds to a stronger hydrogen-bond donor [25].

- Application: This method is suitable for weak to moderate donors, including various N-H and O-H containing compounds such as heterocycles, amides, sulfonamides, and alcohols that are common in pharmaceuticals [25].
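The analysis step of the protocol above can be sketched for the simplest case of 1:1 binding. The example below uses a Benesi-Hildebrand double-reciprocal fit, which is a swapped-in textbook linearization and not necessarily the fitting procedure used in [25]. It assumes the analyte is in large excess over the sensor, that concentrations are in mol/L, and that the data are noise-free synthetic values generated from an assumed ln Keq; real titrations are generally better served by nonlinear regression.

```python
import math


def fit_one_to_one_binding(analyte_conc, delta_absorbance):
    """Benesi-Hildebrand fit: 1/dA = 1/dA_max + (1/(dA_max*K)) * (1/[analyte]).

    Returns (K, dA_max) from an ordinary least-squares line through the
    double-reciprocal data.
    """
    x = [1.0 / conc for conc in analyte_conc]
    y = [1.0 / d_abs for d_abs in delta_absorbance]
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept / slope, 1.0 / intercept


# Synthetic titration generated from assumed parameters (not measured data)
assumed_ln_keq = 3.42
assumed_k = math.exp(assumed_ln_keq)
assumed_da_max = 0.50
concentrations = [0.005, 0.01, 0.02, 0.05, 0.1, 0.2]  # mol/L, hypothetical
signal = [assumed_da_max * assumed_k * c / (1.0 + assumed_k * c)
          for c in concentrations]

k_fit, da_max_fit = fit_one_to_one_binding(concentrations, signal)
ln_k_fit = math.log(k_fit)
print(f"fitted ln Keq = {ln_k_fit:.2f}")
```

The double-reciprocal form weights the lowest concentrations most heavily, which is one reason it serves here only as a transparent illustration of how Keq emerges from the titration curve.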

Table 2: Experimentally Determined Hydrogen-Bond Donor Strengths (ln Keq) for Selected Motifs

| Compound Class | Example Structure | ln Keq | Notes |
|---|---|---|---|
| Aliphatic Alcohols | Compound 44 / 45 | 0.86 / 0.94 | Very weak donors [25] |
| Benzyl Alcohol | Compound 50 | 1.93 | Stronger than aliphatic due to inductive effects [25] |
| Amines | Compound 1 | ~2.0 (weak) | Among the weakest successful titrations [25] |
| Primary Amide | Compound 13 | >2.41 | Stronger than secondary amides [25] |
| Imidazole | Unsubstituted | 3.42 | Strength decreases with alkyl substitution [25] |
| Imides | Compound 22 | Relatively strong | Enhanced by two electron-withdrawing carbonyls [25] |

Computational Prediction of A and B Parameters

Computational methods provide an efficient path to predicting hydrogen-bonding strength, avoiding laborious experimental measurements.

Prediction from Electrostatic Potential (pKBHX)

A robust black-box workflow for predicting site-specific hydrogen-bond basicity (pKBHX) uses the minimum electrostatic potential (Vmin) in the region of a hydrogen-bond acceptor's lone pairs [24] [26].

Computational Protocol: Vmin-Based pKBHX Prediction

- Step 1: Conformer Generation. A conformer search is run on the input molecule using the ETKDG algorithm in RDKit, followed by MMFF94 optimization [24].

- Step 2: Conformer Filtering. The conformational ensemble is filtered using the CREST screening protocol and GFN2-xTB energies to remove duplicates and high-energy conformers [24].

- Step 3: Neural Network Optimization. The remaining conformers are scored and optimized with the AIMNet2 neural network potential, with the lowest-energy conformer selected for the final calculation [24].

- Step 4: DFT Single-Point Calculation. A single density-functional-theory (DFT) calculation at the r2SCAN-3c level is performed on the optimized geometry to compute the electrostatic potential [24].

- Step 5: Locate Vmin and Scale. The electrostatic potential minima (Vmin) near each hydrogen-bond accepting atom are located by numerical optimization. These values are linearly scaled using functional-group-specific parameters to predict experimental pKBHX values [24]. This workflow achieves a mean absolute error of about 0.19 pKBHX units across diverse functional groups [24].

Figure 1: Computational workflow for predicting hydrogen-bond basicity (pKBHX) from the electrostatic potential.
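The last two stages of the workflow, selecting the lowest-energy conformer and applying a linear, functional-group-specific scaling to Vmin, can be sketched as follows. All names and numbers here are hypothetical: the slope and intercept values are placeholders rather than the fitted parameters from [24], and the Vmin values and energies are invented inputs standing in for the output of the DFT step.

```python
# HYPOTHETICAL (slope, intercept) pairs per functional group.
# Placeholders for illustration only; the fitted values are given in [24].
SCALING_PARAMETERS = {
    "amide": (-30.0, -1.0),
    "amine": (-25.0, -0.5),
}


def lowest_energy_conformer(conformers):
    """Return the conformer record with the minimum energy."""
    return min(conformers, key=lambda conf: conf["energy"])


def predict_pkbhx(v_min, functional_group):
    """Apply the linear map pKBHX = slope * Vmin + intercept for one site."""
    slope, intercept = SCALING_PARAMETERS[functional_group]
    return slope * v_min + intercept


# Invented example: two conformers, each with one acceptor site
conformers = [
    {"name": "conf_a", "energy": -1.20, "sites": [("amide", -0.10)]},
    {"name": "conf_b", "energy": -1.35, "sites": [("amide", -0.11)]},
]

best = lowest_energy_conformer(conformers)
predictions = [(group, predict_pkbhx(v_min, group))
               for group, v_min in best["sites"]]
for group, value in predictions:
    print(f"{best['name']} {group}: predicted pKBHX = {value:.2f}")
```

Storing the parameters per functional group reflects the protocol's premise that a single global line would not map Vmin onto pKBHX equally well for every acceptor type.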

Prediction from Atomic Properties and Quantum Chemical Topology

The Abraham A parameter correlates strongly with the computed partial charge on the most positive hydrogen atom in the molecule, though steric effects can also play a significant role [22]. In contrast, the S parameter, which represents polarity/polarizability, correlates with the molecular dipole moment, the partial charge on the most negative atom, and for single-ring aromatics, the molecular polarizability [22].

A Quantum Chemical Topology (QCT) approach has also shown success, linking experimentally measured pKBHX values to the change in the atomic energy of the hydrogen atom, ΔE(H), upon complexation. This method has achieved strong correlations for several common HBDs, including water (r² = 0.96), methanol (r² = 0.95), and 4-fluorophenol (r² = 0.91) [23].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Computational Tools for H-Bond Strength Analysis

| Item / Reagent | Function / Application | Context & Rationale |
|---|---|---|
| 4-Fluorophenol | Reference Hydrogen-Bond Donor | Standard donor for experimental pKBHX measurement in CCl₄ [24] [26]. |
| Carbon Tetrachloride (CCl₄) | Inert Solvent | Used in equilibrium constant measurements to minimize solvent interference [24] [22]. |
| Pyrazinone Sensor | Colorimetric H-Bond Acceptor | Enables H-bond donor strength (ln Keq) quantification via UV-Vis titration [25]. |
| RDKit | Open-Source Cheminformatics | Used for initial conformer generation with the ETKDG algorithm [24]. |
| AIMNet2 | Neural Network Potential | Accelerates geometry optimization, replacing costly DFT optimizations [24]. |
| r2SCAN-3c Functional | Density Functional Theory (DFT) | Provides a low-cost, high-accuracy method for the final electrostatic potential calculation [24]. |

Applications in Rational Molecular Design and Drug Discovery

Quantifying A and B parameters is not merely an academic exercise; it provides powerful insights for rational molecular design, particularly in medicinal chemistry.

Figure 2: The influence of hydrogen-bond donor and acceptor strength on key physicochemical and pharmacological properties of drug molecules.

A compelling case study from AstraZeneca illustrates this principle. During the optimization of IRAK4 inhibitors, researchers observed that a seemingly minor change—moving a nitrogen atom within a pyrrolopyrimidine scaffold—increased the hydrogen-bond acceptor strength (pKBHX) of two key sites by 0.61 and 0.15 units, respectively. This 4-fold increase in basicity for one site led to decreased lipophilicity, lower membrane permeability, and a higher efflux ratio, all undesirable for an orally bioavailable drug. In contrast, switching to a pyrrolotriazine scaffold lowered the pKBHX values, resulting in more favorable permeability and efflux profiles [26]. This demonstrates how quantitative prediction of hydrogen-bond basicity can directly guide scaffold selection and property-based design.
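The "4-fold" figure follows from the logarithmic definition of pKBHX: a shift of ΔpKBHX changes the association constant by a factor of 10^ΔpKBHX. A minimal check of that arithmetic, with a function name chosen here for illustration:

```python
def fold_change_in_k(delta_pkbhx):
    """Factor by which the association constant changes for a pKBHX shift."""
    return 10.0 ** delta_pkbhx


# The two shifts reported for the pyrrolopyrimidine sites
fold_site_1 = fold_change_in_k(0.61)
fold_site_2 = fold_change_in_k(0.15)
print(f"site 1: {fold_site_1:.2f}-fold, site 2: {fold_site_2:.2f}-fold")
```

The 0.61-unit shift corresponds to roughly a fourfold increase in K, while the 0.15-unit shift amounts to only about a 1.4-fold increase.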