Beyond Size: Strategies for Addressing Chemical Diversity in LIBS Training Sets for Enhanced Analytical Accuracy



Abstract

This article addresses the critical challenge of chemical diversity in Laser-Induced Breakdown Spectroscopy (LIBS) training sets, a key factor influencing the accuracy and reliability of analytical models. As LIBS sees expanding use in fields from drug development to Mars exploration, ensuring training libraries represent the vast chemical universe is paramount. We explore foundational concepts of chemical space, investigate methodologies like transfer learning and active learning to overcome data limitations, provide optimization techniques to tackle matrix effects, and present validation frameworks for comparative model assessment. This guide equips researchers and drug development professionals with practical strategies to build more robust, generalizable, and effective LIBS analytical methods.

Defining the Challenge: Chemical Space, Diversity, and the Limitations of Current LIBS Training Sets

Understanding Chemical Space and Why Diversity Matters in Spectroscopy

In analytical sciences, chemical space represents the total universe of all possible organic molecules, a theoretical collection estimated to include around 10^60 unique structures with molecular weights under 500 Da [1]. For researchers in spectroscopy and drug development, effectively navigating this vast space is critical for creating robust predictive models and ensuring comprehensive chemical analysis. The core challenge lies in the fact that our current analytical methods, while powerful, capture only a tiny fraction of this diversity. Non-targeted analysis (NTA) studies using liquid chromatography–high-resolution mass spectrometry (LC–HRMS) have been shown to cover only about 2% of the relevant chemical space in environmental and biological samples [1]. This limited coverage underscores why diversity in spectroscopy training sets isn't merely beneficial—it's essential for generating reliable, real-world applicable results.

Technical Support Center

Frequently Asked Questions (FAQs)

1. What is chemical space and why does its diversity matter in spectroscopy?

Chemical space encompasses all possible organic molecules that could theoretically exist. In practical spectroscopic terms, it refers to the chemical diversity relevant to your specific analysis, such as the human exposome (all environmental exposures) or particular drug classes [1] [2]. Diversity matters because non-diverse training sets create significant blind spots. If your spectral libraries or calibration sets don't adequately represent the chemical diversity you might encounter, your models will fail to accurately identify or quantify novel compounds. Research reveals that current implementations of mass spectrometry, for instance, confidently identify and quantify less than 1% of the broad chemical space because pure standards are unavailable for the remaining compounds [3].

2. My spectroscopic models perform well in validation but fail with real-world samples. What is the likely cause?

This common issue typically stems from a lack of chemical diversity in your training data. Your model has likely overfitted to a limited chemical domain and cannot generalize to the broader diversity encountered in actual samples. The problem is particularly acute in methods like non-targeted analysis, where the gap between the chemical space covered during method development and the sample's actual composition is vast [1]. To resolve this, you must expand your training set to include a more representative range of chemical structures, functional groups, and sample matrices.

3. How can I assess the chemical diversity of my current spectral library or training set?

Begin by auditing the structural and physicochemical properties of the compounds in your set. Use metrics like molecular weight, polarity, presence of key functional groups, and structural fingerprints. Advanced approaches involve creating Chemical Space Networks (CSNs), which are complex network models that visualize and quantify relationships between compounds based on similarity. These networks can reveal clusters, gaps, and the overall coverage of your chemical space [2]. Tools for calculating molecular descriptors and similarities are available in various cheminformatics software packages.

4. What are the practical steps to increase diversity in laser spectroscopy training sets?

- Strategic Compound Selection: Move beyond convenience sampling of available standards. Use chemical space mapping to identify underrepresented regions and prioritize compounds that fill these gaps.

- Leverage Multiple Sources: Incorporate compounds and spectra from diverse databases like PubChem, NORMAN, and CompTox [1].

- Data Augmentation: For techniques like Laser-Induced Breakdown Spectroscopy (LIBS), employ data preprocessing and augmentation strategies (e.g., adding noise, spectral variations) to effectively increase diversity [4].

- Collaborative Curation: Partner with other research groups to share and combine datasets, naturally increasing coverage.
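The data augmentation step listed above can be sketched as follows. This is a minimal illustration, not a method taken from the cited work: the function name `augment_spectrum`, the noise level, and the scaling range are all assumptions chosen for the example. Note that augmentation of this kind only simulates measurement variability; it does not add chemically new samples.

```python
import random

def augment_spectrum(intensities, noise_sd=0.01, scale_jitter=0.05, rng=None):
    """Return a perturbed copy of one spectrum (a list of non-negative intensities)."""
    rng = rng or random.Random()
    # One multiplicative factor per spectrum mimics shot-to-shot intensity drift.
    scale = 1.0 + rng.uniform(-scale_jitter, scale_jitter)
    peak = max(intensities) or 1.0
    augmented = []
    for value in intensities:
        # Additive Gaussian noise, sized relative to the strongest line.
        noisy = value * scale + rng.gauss(0.0, noise_sd * peak)
        augmented.append(max(noisy, 0.0))  # clip: intensities cannot be negative
    return augmented

# Toy spectrum with two emission lines (values are made up for illustration).
spectrum = [0.0, 0.2, 5.0, 0.3, 0.1, 2.5, 0.0]
rng = random.Random(42)  # fixed seed so the augmented set is reproducible
variants = [augment_spectrum(spectrum, rng=rng) for _ in range(5)]
```

Keeping the perturbations small relative to real shot-to-shot variation is a design choice; overly aggressive noise can teach a classifier features that never occur in measured spectra.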

5. How does a lack of diversity specifically impact different spectroscopic techniques?

- Mass Spectrometry (MS): Leads to an inability to identify "unknown unknowns" in non-targeted analysis, as current MS technology is predominantly reliant on comparison to previously seen reference spectra [3].

- Laser-Induced Breakdown Spectroscopy (LIBS): Results in poor generalization of machine learning classifiers for sample identification and discrimination when faced with new sample types or matrices [4].

- Near-Infrared (NIR) Spectroscopy: Causes prediction models for quality parameters (e.g., water content) to fail when the chemical or physical properties of new samples fall outside the range of the original calibration set [5].

Troubleshooting Guides

Problem: Poor Generalization of Machine Learning Models in LIBS Classification

Description: A classifier trained on LIBS spectra performs well on its training data but fails to correctly identify or classify new samples from a slightly different origin or composition.

Solution:

- Audit Training Set Diversity: Analyze the elemental composition and matrix types in your training set. Ensure it includes the full range of variations expected in real applications.

- Apply Robust Preprocessing: Implement preprocessing steps to mitigate matrix effects and enhance generalizable features. Common strategies include [4]:

  - Normalization (e.g., vector, area, internal standard)

  - Background correction

  - Feature selection to focus on the most discriminative spectral lines

- Expand the Training Set: Actively collect spectra from samples that represent the under-represented chemical domains or matrix types.

- Re-train with Expanded Data: Use the more diverse dataset to re-train your classifier (e.g., Support Vector Machines, Random Forests, PCA-LDA). Validate its performance on a completely independent and diverse test set.

Table 1: Common Preprocessing Steps for Improving LIBS Model Generalization [4]

| Step | Purpose | Common Techniques |
|---|---|---|
| Spectral Normalization | Minimizes signal fluctuations from pulse energy and sample surface | Total Area, Internal Standard, Vector Normalization |
| Background Correction | Removes continuum and dark noise | Polynomial Fitting, Wavelet Transformation |
| Feature Selection | Reduces dimensionality, focuses on key elements | Variance Threshold, Genetic Algorithms, PCA |
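The three normalization techniques named in Table 1 can be sketched in a few lines. This is an illustrative sketch under simple assumptions: each spectrum is a plain list of intensities, and the function names and the choice of `reference_index` are hypothetical rather than taken from any LIBS package.

```python
import math

def total_area_normalize(intensities):
    """Divide by the summed intensity so every spectrum has unit area."""
    total = sum(intensities)
    if total <= 0:
        raise ValueError("spectrum has no signal to normalize")
    return [v / total for v in intensities]

def vector_normalize(intensities):
    """Divide by the Euclidean norm so every spectrum has unit length."""
    norm = math.sqrt(sum(v * v for v in intensities))
    if norm == 0:
        raise ValueError("spectrum has no signal to normalize")
    return [v / norm for v in intensities]

def internal_standard_normalize(intensities, reference_index):
    """Divide by the intensity of a chosen reference line."""
    reference = intensities[reference_index]
    if reference <= 0:
        raise ValueError("reference line has no signal")
    return [v / reference for v in intensities]

# The same toy sample measured at two pulse energies (made-up values):
shot_a = [1.0, 4.0, 2.0, 8.0]
shot_b = [1.5 * v for v in shot_a]

area_a = total_area_normalize(shot_a)
area_b = total_area_normalize(shot_b)  # matches area_a after normalization
```

In this idealized case a purely multiplicative intensity change cancels out; real matrix effects are not purely multiplicative, which is why normalization is usually combined with the other steps in the table.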

Problem: Low Identification Rate in Non-Targeted Analysis with LC-HRMS

Description: Despite processing complex samples, your non-targeted workflow identifies a very low percentage of the chromatographic features detected (e.g., ≤5%), leaving many compounds unknown [1].

Solution:

- Evaluate Chemical Space Coverage: Compare the compounds you can confidently identify (confidence levels 1 and 2) against a comprehensive database like NORMAN SusDat (containing ~60,000 unique chemicals) to quantify your coverage gap [1].

- Optimize Experimental Parameters: The generic nature of NTA methods means no single setup captures everything. Critically evaluate and potentially adjust:

  - Sample preparation: Use multiple extraction techniques to cover a wider polarity range.

  - Chromatography: Test different LC columns (e.g., HILIC, RPLC) to separate different compound classes.

  - Data Acquisition: Employ both positive and negative ionization modes and consider combining data-dependent and data-independent acquisition modes.

- Diversify Spectral Libraries: The libraries used for spectral matching are a major bottleneck. Supplement commercial libraries with domain-specific and experimental databases.

- Report Transparently: Document every parameter in your workflow, including those that are often left unreported, because missing information hinders reproducibility and method improvement [1].
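The coverage evaluation in the first step above reduces to a set comparison once both lists use the same identifier scheme. The sketch below assumes that is already the case; the identifiers are placeholders, and `coverage_report` is a hypothetical helper, not part of any database client.

```python
def coverage_report(identified, suspect_list):
    """Compare confidently identified compounds against a suspect list."""
    identified = set(identified)
    suspects = set(suspect_list)
    matched = identified & suspects
    return {
        "matched": len(matched),
        # Fraction of the suspect list that the workflow actually reached.
        "coverage": len(matched) / len(suspects) if suspects else 0.0,
        # Identified compounds that the suspect list does not contain.
        "outside_list": sorted(identified - suspects),
    }

# Placeholder identifiers standing in for structure keys (hypothetical).
suspect_list = [f"CHEM-{i:03d}" for i in range(1, 101)]   # 100 suspects
identified = ["CHEM-003", "CHEM-017", "CHEM-042", "CHEM-250"]

report = coverage_report(identified, suspect_list)
```

Here three of the four identified compounds are on the 100-entry list, so the reported coverage is 3%, and the fourth compound is flagged as lying outside the list.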

Table 2: Key Experimental Parameters Affecting Chemical Space Coverage in LC-HRMS NTA [1]

| Workflow Stage | Parameter to Review | Impact on Diversity |
|---|---|---|
| Sample Prep | Extraction solvent, sorbent | Dictates range of physicochemical properties (polarity, volatility) captured. |
| Chromatography | Column chemistry, gradient | Influences separation of different compound classes. |
| MS Acquisition | Ionization polarity, mass analyzer, acquisition mode | Affects detection of ions with different affinities for positive/negative mode and data quality. |

Research Reagent Solutions

Table 3: Essential Materials for Comprehensive Chemical Space Analysis

| Item Name | Function/Benefit |
|---|---|
| NORMAN SusDat Database | A collaborative, open database containing structures of ~60,000 "suspect" chemicals of emerging concern, used to benchmark the coverage of an analytical method [1]. |
| PubChem Database | A public repository of over 100 million compounds, providing extensive chemical and structural data for diversity assessment and compound identification [1]. |
| Liquid Chromatography Columns (Multiple Chemistries) | Using a combination of columns (e.g., reversed-phase, HILIC, ion-pairing) is crucial to separate and retain a diverse range of molecules in a non-targeted workflow [1]. |
| Certified Reference Materials (Diverse Classes) | A wide array of pure analytical standards from different chemical classes (e.g., pharmaceuticals, pesticides, metabolites) is essential for building calibrated and identifiable spectral libraries [3]. |

Experimental Protocols & Workflows

Protocol: Building a Chemically Diverse Training Set for Spectral Prediction Models

This protocol is adapted from best practices in NIR spectroscopy and chemical space analysis [5] [2].

1. Define the Scope of the Chemical Space

- Clearly delineate the boundaries of your research question. Are you focused on a specific class of pharmaceuticals, all potential environmental contaminants, or a broad range of metabolites?

- Use existing knowledge and databases to list the key structural scaffolds, functional groups, and physicochemical properties (log P, molecular weight, etc.) that define this space.

2. Conduct a Gap Analysis

- Map the compounds for which you have existing spectra onto a chemical space network or a principal component analysis (PCA) plot based on molecular descriptors.

- Visually and statistically identify regions of the chemical space that are sparse or unrepresented in your current collection.

3. Curate and Acquire Standards

- Prioritize the acquisition of reference standards or well-characterized samples that fill the identified gaps. This may require strategic purchasing, synthesis, or collaboration.

- For an "easy" matrix, 10-20 well-chosen samples might suffice for an initial model. For complex applications, a minimum of 40-60 diverse samples is recommended [5].

4. Acquire Spectra Under Standardized Conditions

- Collect high-quality spectral data for all curated samples using consistent, documented instrumental parameters.

- For NIR models, correlate these spectra with reference values from a primary method (e.g., Karl Fischer titration for water content) to build the prediction model [5].

5. Validate with External Test Sets

- Test the performance of your model using a completely independent set of samples that were not used in training, ensuring they represent the diversity of the entire chemical space of interest.

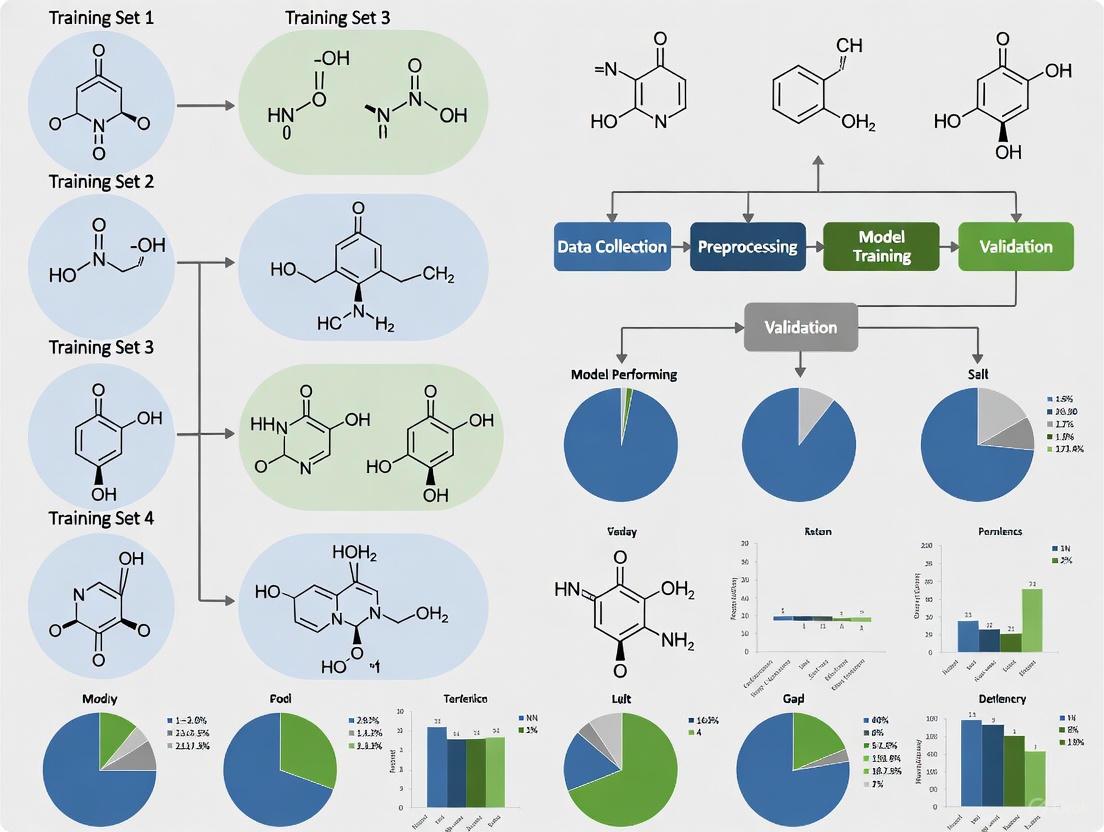

Diagram 1: Workflow for building a diverse training set.

Conceptual Framework: The Chemical Space Network (CSN) for Diversity Assessment

Chemical Space Networks (CSNs) provide a powerful, non-metric alternative to traditional coordinate-based representations of chemical space, which can be heavily influenced by the choice of molecular descriptors [2]. In a CSN:

- Nodes represent individual chemicals.

- Edges connect nodes where the pairwise molecular similarity (e.g., Tanimoto similarity) exceeds a defined threshold.

This network-based approach allows researchers to use tools from graph theory to understand the structure and diversity of their compound sets. Analyzing properties like assortativity and community structure can reveal whether a dataset is a meaningful, organized collection of related compounds or merely a random assembly, thereby guiding diversification efforts [2].

Diagram 2: A simplified Chemical Space Network (CSN) showing clusters and diversity gaps.
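The node-and-edge construction described above can be sketched with plain sets. In this illustration each fingerprint is assumed to be a set of "on" bit positions (toy values, not computed from real structures), the threshold of 0.4 is arbitrary, and the helper names are hypothetical. Connected components stand in for the clusters, and singleton components hint at sparsely covered regions.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def build_csn(fingerprints, threshold):
    """Adjacency sets: an edge wherever pairwise similarity exceeds the threshold."""
    adjacency = {name: set() for name in fingerprints}
    for u, v in combinations(fingerprints, 2):
        if tanimoto(fingerprints[u], fingerprints[v]) > threshold:
            adjacency[u].add(v)
            adjacency[v].add(u)
    return adjacency

def connected_components(adjacency):
    """Group nodes into clusters by depth-first traversal of the network."""
    seen, components = set(), []
    for start in adjacency:
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(adjacency[node] - component)
        seen |= component
        components.append(component)
    return components

# Toy fingerprints (made-up bit positions for five hypothetical compounds).
fingerprints = {
    "A": {1, 2, 3, 4}, "B": {1, 2, 3, 5},   # similarity 3/5 = 0.6
    "C": {10, 11, 12}, "D": {10, 11, 13},   # similarity 2/4 = 0.5
    "E": {20, 21},                          # shares no bits with the others
}
network = build_csn(fingerprints, threshold=0.4)
clusters = connected_components(network)
```

With these toy inputs the network splits into two pairs and one isolated node. The threshold strongly shapes the result, so it is worth scanning a few values before drawing conclusions about gaps.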

Technical Support Center

This support center provides troubleshooting guidance for researchers encountering the Cardinality vs. Diversity Paradox in the design of Linear Solvation Energy Relationship (LSER) training sets. A training set with high cardinality (a large number of data points) but low chemical diversity (limited variation in molecular structures and properties) can lead to models with poor predictive performance and limited applicability.

Troubleshooting Guides

Guide 1: Troubleshooting Poor Model Generalization

Problem: Your LSER model performs well on its training data but fails to accurately predict the solvation energy of new, seemingly similar compounds.

Diagnosis: This is a classic symptom of the Cardinality vs. Diversity Paradox. The model has overfit to a training set that lacks sufficient chemical diversity to represent the broader chemical space you are investigating [6].

Solution:

- Quantify Dataset Diversity: Calculate key diversity metrics for your training set and the new compounds causing prediction failures. Compare these values to identify coverage gaps.

- Augment the Training Set: Strategically select new compounds that address the identified diversity gaps, rather than adding more compounds similar to your existing ones. Prioritize molecules that increase the range of your descriptor values.
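The comparison in the first solution step can start with something as simple as a per-descriptor range check. The sketch below assumes each compound is a dictionary of descriptor values; the descriptor names and numbers are illustrative only, and `descriptor_ranges` and `out_of_range` are hypothetical helpers.

```python
def descriptor_ranges(rows):
    """Per-descriptor (min, max) over a list of descriptor dictionaries."""
    keys = rows[0].keys()
    return {k: (min(r[k] for r in rows), max(r[k] for r in rows)) for k in keys}

def out_of_range(compound, ranges):
    """Descriptors for which a compound falls outside the training range."""
    return [k for k, (low, high) in ranges.items()
            if not low <= compound[k] <= high]

# Made-up descriptor values for a small training set.
training = [
    {"A": 0.00, "B": 0.10, "V": 0.5},
    {"A": 0.10, "B": 0.45, "V": 0.9},
    {"A": 0.30, "B": 0.30, "V": 1.2},
]
ranges = descriptor_ranges(training)

# A compound the model predicted poorly (also made up).
problem_compound = {"A": 0.80, "B": 0.40, "V": 1.0}
gaps = out_of_range(problem_compound, ranges)
```

Here the problem compound lies outside the training range only for descriptor `A`, which points to where new training compounds would help most. A range check ignores gaps inside the range, so it is a first screen rather than a full coverage analysis.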

Guide 2: Resolving Bias in High-Throughput Screening Data

Problem: Your high-throughput screening generates a large volume of data (high cardinality), but the resulting model is biased towards certain molecular scaffolds, leading to misleading structure-activity relationships.

Diagnosis: The underlying compound library used for screening lacks chemical diversity, causing an overrepresentation of specific chemotypes and an underrepresentation of others [6].

Solution:

- Analyze Library Composition: Use chemoinformatics tools to profile the structural and physicochemical property space of your screening library.

- Apply Strategic Oversampling: For regions of chemical space that are relevant but poorly represented, use techniques like clustering and strategic compound acquisition to increase diversity, even if it temporarily reduces the total number of compounds [6].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between cardinality and diversity in an LSER training set? A1: Cardinality refers simply to the number of data points or compounds in your training set. Diversity, however, describes the breadth and variety of the chemical space covered by these compounds, measured through molecular descriptors (e.g., log P, polarizability, hydrogen bonding parameters). A set can have high cardinality but low diversity if it contains many similar molecules [6].

Q2: My dataset is very large. How can I quickly assess if a lack of diversity is a problem? A2: You can perform a principal component analysis (PCA) on your molecular descriptors. Plot the first two principal components. If the data points are clustered tightly in one or two regions, it indicates low diversity, even if the total number of points is high. A diverse set will be spread more evenly across the plot [4].

Q3: Are there machine learning techniques that can mitigate the effects of low diversity in a training set? A3: While some algorithms are robust to certain data imbalances, they cannot create information that is not present in the training data. The most reliable solution is to improve the diversity of the training set itself. Techniques like data augmentation (creating virtual compounds via small structural modifications) can be helpful but have limitations in exploring truly novel chemical space [4].

Q4: How does the concept of "granular computing" relate to this paradox? A4: Granular computing involves drawing together data points which are related through similarity, proximity, or functionality [6]. In the context of LSER training sets, it emphasizes the importance of summarizing information by grouping similar molecules. This helps in understanding and ensuring that the training set contains representative granules (clusters) from all relevant regions of the chemical space, rather than an overabundance of points from just a few granules.

Experimental Protocols

Protocol: Designing a Chemically Diverse LSER Training Set

Objective: To construct a training set that provides broad coverage of a defined chemical space, balancing data quantity (cardinality) with structural and property diversity.

Materials:

- Compound library (commercial or in-house)

- Chemoinformatics software (e.g., RDKit, OpenBabel)

- Molecular descriptor calculation software

- Statistical analysis software (e.g., R, Python with pandas/scikit-learn)

Methodology:

- Define the Chemical Space: Identify the boundaries of your research (e.g., "drug-like molecules under 500 Da"). Select a set of relevant molecular descriptors (e.g., π (dipolarity/polarizability), Σα₂ᴴ (total hydrogen-bond acidity), Σβ₂ᴴ (total hydrogen-bond basicity), molecular weight, etc.) [6].

- Calculate Descriptors: For all candidate compounds in your initial library, calculate the selected molecular descriptors.

- Apply a Diversity-Picking Algorithm:

- Perform clustering (e.g., k-means, hierarchical) based on the calculated descriptors to identify natural groupings in the data.

- Instead of picking compounds randomly, select a defined number of compounds from each cluster. This ensures representation from all regions of the chemical space.

- Alternatively, use a MaxMin algorithm, which iteratively selects the compound that is the most distant (least similar) from those already chosen for the training set.

- Validate Diversity: Calculate diversity metrics (see Table 1) for the final selected training set to confirm it meets the desired coverage.
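The MaxMin option in the methodology above can be sketched as follows. This is a minimal pure-Python illustration that assumes compounds are already represented as descriptor vectors and uses Euclidean distance; `maxmin_pick` is a hypothetical helper, not a library function, and the starting compound is chosen arbitrarily.

```python
import math

def maxmin_pick(points, n_pick, first=0):
    """Greedy MaxMin: repeatedly add the point farthest from the picked set."""
    n_pick = min(n_pick, len(points))
    picked = [first]
    # Distance from every point to its nearest already-picked point.
    min_dist = [math.dist(p, points[first]) for p in points]
    while len(picked) < n_pick:
        candidates = [i for i in range(len(points)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        for i, p in enumerate(points):
            min_dist[i] = min(min_dist[i], math.dist(p, points[nxt]))
    return picked

# Toy two-descriptor library: a dense cluster, a second pair, one outlier.
library = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1),
           (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
selected = maxmin_pick(library, n_pick=3)
```

Starting from index 0, the sketch selects indices 0, 5, and 3: one compound from each distinct region rather than three near-duplicates from the dense cluster. Descriptors on different scales should be standardized first, otherwise the largest-valued descriptor dominates the distance.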

Table 1: Key Quantitative Metrics for Assessing Training Set Diversity

| Metric | Formula/Description | Interpretation |
|---|---|---|
| Descriptor Range | \( \max(\text{descriptor}) - \min(\text{descriptor}) \) | A larger range for each descriptor indicates coverage of a wider spectrum of that molecular property. |
| Principal Component Analysis (PCA) Coverage | The area covered by the data points in the space of the first two principal components. | A larger, more uniform coverage indicates greater diversity. Tight clustering indicates low diversity. |
| Pairwise Distance Mean | \( \frac{1}{N(N-1)/2} \sum_{i=1}^{N-1} \sum_{j=i+1}^{N} d(x_i, x_j) \), where \( d \) is a molecular distance metric. | A higher mean pairwise distance indicates that molecules are, on average, more dissimilar from each other. |
| Intra-Cluster Density | The average similarity of molecules within their assigned clusters. | High density within many clusters may indicate redundancy and potential for cardinality reduction without losing information [6]. |
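As a worked illustration of the Pairwise Distance Mean row, the sketch below averages the distance over all N(N-1)/2 unordered pairs. It assumes Euclidean distance on descriptor vectors as the metric d; any other molecular distance could be substituted. The function name and the toy data are illustrative.

```python
import math
from itertools import combinations

def mean_pairwise_distance(points):
    """Average distance over all N(N-1)/2 unordered pairs of points."""
    pairs = list(combinations(points, 2))
    if not pairs:
        raise ValueError("need at least two points")
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)

# Two toy training sets of equal cardinality (made-up descriptor values).
tight_set = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0)]
spread_set = [(0.0, 0.0), (0.0, 10.0), (10.0, 0.0)]

tight_score = mean_pairwise_distance(tight_set)    # (1 + 1 + sqrt(2)) / 3
spread_score = mean_pairwise_distance(spread_set)  # ten times larger
```

Both sets contain three compounds, yet the second scores ten times higher, which is the cardinality-versus-diversity distinction in numeric form.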

Workflow and Relationship Visualizations

Diverse Training Set Design Flow

The Paradox's Impact on Models

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for LSER Training Set Construction

| Item | Function |
|---|---|
| Commercial Compound Libraries | Provide a large source of candidate molecules (high cardinality) for initial screening and selection. |
| Molecular Descriptor Calculator | Software used to compute quantitative descriptors that define a molecule's physicochemical properties for diversity analysis. Examples include RDKit and OpenBabel. |
| Clustering Algorithm | A computational method to group molecules based on similarity, which is crucial for ensuring that selected training compounds represent distinct regions of chemical space [6]. |
| Diversity Selection Software | Chemoinformatics platforms (e.g., using Python/R scripts) that implement algorithms like MaxMin to systematically select a diverse subset from a larger library. |
| Validated Solvation Property Data | A reliable database of experimentally measured solvation energies (or related properties) for benchmark compounds, used to validate the predictive power of the developed LSER model. |

In the pursuit of novel therapeutics, the concept of chemical diversity is paramount. Research into Linear Solvation Energy Relationship (LSER) training sets hinges on the ability to quantify and navigate the vastness of chemical space effectively. Molecular fingerprints provide a foundational method for this task by converting complex molecular structures into fixed-length numerical arrays, enabling computational comparison and analysis [7]. For scenarios demanding extreme sensitivity and specificity, such as detecting ultra-rare biomarkers, the Intrinsic Similarity (iSIM) method offers a powerful, amplification-free approach based on single-molecule kinetics [8]. This technical support center provides researchers with practical guides and FAQs for implementing these critical technologies.

Molecular Fingerprints: A Primer

What is a Molecular Fingerprint?

A molecular fingerprint is a fixed-length array of numbers where different elements indicate the presence or absence of specific structural features in a molecule [7]. This representation allows variable-sized molecules to be processed by models that require fixed-size inputs. If two molecules have similar fingerprints, it indicates they share many structural features and are likely to have similar chemical properties [7].

The Extended Connectivity Fingerprint (ECFP) is a common type. The ECFP algorithm operates iteratively:

- Iteration 1: Classifies atoms based on their immediate properties and bonds (e.g., "carbon atom bonded to two hydrogens and two heavy atoms").

- Iteration 2+: Identifies larger features by looking at circular neighborhoods around atoms, creating features that represent specific combinations of simpler features.

This process continues for a set number of iterations, typically two [7].
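The iterative idea can be illustrated with a deliberately simplified circular fingerprint. This is not the real ECFP algorithm: it ignores bond orders, charges, and the duplicate-removal step, and it uses only element and heavy-atom degree as the starting atom properties. The molecule encoding (a list of element symbols plus a list of bonded index pairs) and all function names are assumptions made for this sketch.

```python
import zlib

def stable_hash(obj):
    """Deterministic integer hash (Python's built-in str hash varies per run)."""
    return zlib.crc32(repr(obj).encode("utf-8"))

def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """Toy circular fingerprint: hash growing atom neighborhoods into bits."""
    neighbors = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)
    # Start: identify each atom by its own properties (element, degree).
    ids = [stable_hash((atoms[i], len(neighbors[i]))) for i in range(len(atoms))]
    features = set(ids)
    for _ in range(radius):
        # Each round folds the neighbors' identifiers into the atom's own,
        # so an identifier describes a neighborhood one bond larger.
        ids = [stable_hash((ids[i], tuple(sorted(ids[j] for j in neighbors[i]))))
               for i in range(len(atoms))]
        features.update(ids)
    bits = [0] * n_bits
    for feature in features:
        bits[feature % n_bits] = 1  # fold into a fixed-length array
    return bits

# Heavy-atom graphs of two small molecules (hydrogens omitted).
ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propane = circular_fingerprint(["C", "C", "C"], [(0, 1), (1, 2)])
```

Both molecules yield arrays of the same length even though real molecules vary in size, which is the property that makes fingerprints usable as model inputs. For actual work, a maintained cheminformatics toolkit should be used instead of this toy.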

Research Reagent Solutions: Molecular Fingerprinting

Table 1: Essential components for a molecular fingerprinting workflow.

| Component | Function | Example/Notes |
|---|---|---|
| Chemical Libraries | Source compounds for diversity analysis and screening. | Libraries can contain millions of compounds; their mutual relationships can be visualized with Chemical Library Networks (CLNs) [9]. |
| Computational Framework | Software to generate fingerprints and calculate similarities. | The RDKit toolkit is often used with the (extended) Tanimoto index for optimal similarity description [9]. |
| Machine Learning Model | A model to make predictions based on fingerprint inputs. | A simple fully connected MultitaskClassifier can make toxicity predictions from 1024-bit fingerprints [7]. |

Diagram 1: ECFP generation workflow.

Intrinsic Similarity (iSIM): Experimental Protocols

Intrinsic Similarity (iSIM), as the term is used in this guide, refers to intramolecular single-molecule recognition through equilibrium Poisson sampling (iSiMREPS), an amplification-free technique for detecting nucleic acid biomarkers with single-molecule sensitivity and virtually unlimited specificity [8]. It employs single-molecule Förster resonance energy transfer (smFRET) to generate kinetic fingerprints.

Key Research Reagents

Table 2: Essential materials for an iSiMREPS experiment.

| Item | Function |
|---|---|
| Anchor Strand | Surface-immobilizes the sensor assembly via an affinity tag (e.g., biotin) [8]. |
| Capture Probe (CP) | A fluorescent probe that strongly and stably binds the target molecule [8]. |
| Query Probe (QP) | A fluorescent probe that transiently binds the target, generating blinking FRET signals [8]. |
| Competitor (C) | Accelerates the dissociation of the QP, speeding up the kinetic fingerprinting [8]. |
| Invader Strands | A pair of oligonucleotides used to remove target-less sensor assemblies from the surface, reducing background [8]. |
| Formamide | A denaturant added to the imaging buffer to accelerate kinetics, reducing acquisition time [8]. |
| Oxygen Scavenger System | Included in the imaging solution to limit fluorophore photobleaching [8]. |
| Passivated Coverslip/Slide | A treated glass surface to which the sensor assembly is anchored, compatible with TIRF microscopy [8]. |

Detailed Methodology

1. Sensor Assembly and Immobilization A dynamic DNA nanoassembly is constructed from a surface-tethered anchor strand, a Capture Probe (CP), and a Query Probe (QP). The sensor is immobilized on a passivated glass surface suitable for Total Internal Reflection Fluorescence (TIRF) microscopy [8].

2. Target Binding and Imaging The sample is introduced to the sensor surface. The CP stably captures the target molecule (e.g., miRNA, ctDNA). The QP, which is also part of the assembly, transiently binds and dissociates from the target. This reversible binding, in the presence of the Competitor, generates characteristic alternating on/off smFRET signals—the kinetic fingerprint. Movies of these signals are recorded at an acquisition rate of ~10 Hz for a short period (~10 seconds per field of view) [8].

3. Data Analysis The recorded kinetic fingerprints are analyzed to distinguish specific target binding from non-specific background binding with near-perfect discrimination. This analysis enables the precise counting of target molecules present at ultra-low concentrations (e.g., limit of detection of ~1 fM for miR-141) [8].

Diagram 2: iSIM core detection process.
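One ingredient of the data analysis step, extracting dwell times from an on/off trace, can be sketched as below. The sketch assumes the raw smFRET signal has already been idealized into a 0/1 state per frame (for example by thresholding or hidden Markov modeling, neither of which is shown), and it is not the published analysis pipeline. The function name and the toy trace are illustrative.

```python
def dwell_times(trace, frame_rate_hz=10.0):
    """Split an idealized 0/1 trace into bound (on) and unbound (off) dwell times."""
    on_times, off_times = [], []
    if not trace:
        return on_times, off_times
    # Run-length encode the trace into (state, number_of_frames) pairs.
    runs = []
    state, length = trace[0], 1
    for current in trace[1:]:
        if current == state:
            length += 1
        else:
            runs.append((state, length))
            state, length = current, 1
    runs.append((state, length))
    # The first and last runs are cut off by the observation window,
    # so their true durations are unknown and they are skipped.
    for state, length in runs[1:-1]:
        (on_times if state else off_times).append(length / frame_rate_hz)
    return on_times, off_times

# Toy trace recorded at ~10 Hz (1 = high-FRET/bound, 0 = low-FRET/unbound).
trace = [0, 0, 1, 1, 1, 0, 0, 0, 0, 1, 1, 0, 1, 0, 0]
on_times, off_times = dwell_times(trace)
```

For this trace the bound dwells are 0.3 s, 0.2 s, and 0.1 s and the unbound dwells are 0.4 s and 0.1 s. Statistics such as the number of transitions and the median dwell times are the kind of features that can separate repetitive, target-specific binding from sporadic background events.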

Frequently Asked Questions (FAQs) and Troubleshooting

Molecular Fingerprints

Q: What are the main advantages of using ECFP fingerprints? A: ECFPs provide a fixed-size representation for variable-sized molecules, which is essential for many machine learning models. They are computationally efficient to generate and have proven effective for predicting chemical properties and biological activities in drug discovery contexts [7].

Q: How is the diversity of large chemical libraries measured? A: Diversity is quantified using fingerprint-based similarity indices. The extended Tanimoto index in combination with RDKit fingerprints has been found to offer an effective description of similarity for large libraries. This allows for the construction of Chemical Library Networks (CLNs) to visualize relationships between different libraries [9].

Intrinsic Similarity (iSIM) Experimental Setup

Q: My smFRET signal is too weak for reliable detection. What could be wrong? A: First, verify the illumination intensity and TIRF angle adjustment on your microscope; an intensity of ~50 W/cm² and a penetration depth of ~70–85 nm are typical. Second, ensure your oxygen scavenger system is functioning correctly to prevent rapid photobleaching. Third, check the integrity of your fluorophores (Cy3 and A647) and the efficiency of the FRET pair [8].

Q: I am observing a high non-specific background signal. How can I reduce it? A: Implement the pair of invader strands in your protocol. These are designed to selectively displace target-less sensor assemblies from the surface before imaging, which significantly reduces background. Also, ensure that the surface passivation is complete to minimize non-specific adsorption of probes [8].

Q: The kinetic fingerprinting process is too slow for my application. Can it be accelerated? A: Yes, the standard acquisition time for iSiMREPS has been reduced to about 10 seconds per field of view. This acceleration is achieved by adding formamide to the imaging buffer and using the intramolecular design with a Competitor, which together speed up the association and dissociation kinetics of the Query Probe [8].

Data Analysis and Interpretation

Q: How does iSIM achieve such high specificity in discriminating single-nucleotide variants? A: The specificity does not rely solely on thermodynamic hybridization. Instead, it leverages the characteristic kinetic fingerprints (dwell times, association/dissociation rates) generated by the transient binding of the Query Probe. A perfectly matched target produces a distinct kinetic signature compared to a closely related non-target (e.g., a wild-type vs. mutant sequence), enabling near-perfect discrimination at the single-molecule level [8].

Q: In a fingerprint-based model, how should I handle missing data from multi-assay experiments? A: Use a weights array. For assays not performed on certain molecules, set the corresponding weight for that sample and task to zero. This causes the missing data to be ignored during model fitting and evaluation. Weights close to, but not exactly, 1 can be used to balance the contribution of positive and negative samples across different tasks [7].

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Representation Bias in LSER Training Sets

Problem Statement: My Linear Solvation Energy Relationship (LSER) model performs well for common solvents but shows poor predictive accuracy for solvents with strong, specific hydrogen-bonding interactions.

Root Cause Analysis: This is typically caused by Representation Bias, where the training data fails to proportionally represent all relevant chemical groups. In LSER terms, this manifests as an underrepresentation of molecules with extreme values of hydrogen bond acidity (A) and basicity (B) descriptors, or a narrow range of McGowan's characteristic volume (Vx) [10].

Diagnostic Steps:

- Quantify Descriptor Range: Calculate the range and distribution of each LSER molecular descriptor (E, S, A, B, V, L) in your training set. Compare this to the descriptor space of your intended application domain.

- Performance Discrepancy Analysis: Test model performance on subsets of validation data binned by descriptor values (e.g., high A, low B, etc.). Significantly higher error in specific bins indicates underrepresentation.

- Apply Statistical Tests: Use statistical measures like Population Stability Index (PSI) to compare the distribution of descriptors between your training set and the real-world population of chemicals you aim to predict.
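The Population Stability Index mentioned in the last diagnostic step can be computed per descriptor as the sum over bins of (actual − expected) · ln(actual / expected). The sketch below assumes equal-width bins spanning the training range and a small floor `eps` for empty bins; both are choices made for this example, and the descriptor values are made up.

```python
import math

def population_stability_index(expected, actual, n_bins=5, eps=1e-6):
    """PSI of one descriptor: training (expected) vs. application (actual) values."""
    low, high = min(expected), max(expected)
    width = (high - low) / n_bins or 1.0

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            # Values outside the training range fall into the edge bins.
            index = min(max(int((v - low) / width), 0), n_bins - 1)
            counts[index] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hydrogen-bond acidity values (made up): training set vs. application domain.
training_A = [0.0, 0.05, 0.1, 0.15, 0.25, 0.3, 0.45, 0.5, 0.7, 1.0]
application_A = [0.65, 0.7, 0.85, 0.9, 0.95, 0.9, 0.85, 0.75]

psi_same = population_stability_index(training_A, training_A)
psi_shift = population_stability_index(training_A, application_A)
```

Identical distributions give a PSI of zero, while the shifted application set gives a large value. A commonly quoted rule of thumb treats values below 0.1 as stable and values above 0.25 as a significant shift, but these cut-offs are conventions rather than formal tests, and the result depends on the binning.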

Solution Steps:

- Strategic Data Augmentation: Proactively source data for molecules that fill gaps in your chemical space, focusing on regions with high A/B values or other underrepresented descriptors [11] [12].

- Apply Fairness-Aware Training: If retraining the model, use techniques like reweighting training samples from underrepresented regions of the chemical space to balance their influence on the model [13].

- Continuous Monitoring: Establish a dashboard to monitor the distribution of incoming prediction requests against your training set descriptor ranges. Flag significant distribution shifts for model review.
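One simple way to reweight samples is inverse-frequency weighting by descriptor bin. The sketch below assumes each training compound has already been assigned a bin label (the labels `low_A` and `high_A` are hypothetical); the weights would then be passed to whatever sample-weight argument the fitting routine exposes.

```python
from collections import Counter


def inverse_frequency_weights(bin_labels):
    """Give every bin the same total weight, regardless of its size."""
    counts = Counter(bin_labels)
    n_bins = len(counts)
    total = len(bin_labels)
    # weight = total / (n_bins * count), so each bin sums to total / n_bins
    return [total / (n_bins * counts[b]) for b in bin_labels]


# Eight compounds with low acidity, two with high acidity.
labels = ["low_A"] * 8 + ["high_A"] * 2
weights = inverse_frequency_weights(labels)
```

With this scheme the two rare `high_A` compounds carry as much total weight as the eight common ones, while the weights still sum to the number of samples.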

Guide 2: Addressing Temporal and Historical Bias in Solvation Parameter Databases

Problem Statement: The model's predictions for new, synthetically relevant compounds are consistently less accurate than for older, well-documented compounds.

Root Cause Analysis: This is often Historical Bias, where the training database is built on historical experimental data that over-represents certain classes of compounds (e.g., classical organic solvents) and lacks modern, complex chemical entities like macrocycles or complex natural product-inspired scaffolds [11] [14].

Diagnostic Steps:

- Temporal Analysis: Tag your training data by the year the compound was first reported or studied. Analyze model accuracy versus the "age" of the compound.

- Scaffold Analysis: Perform a molecular scaffold analysis of your training database. Calculate the percentage of unique scaffolds and compare it to a modern compound library to identify over-represented chemotypes.

- Check Data Provenance: Document the origins of your training data. Heavy reliance on a few legacy sources is a key indicator of potential historical bias.

Solution Steps:

- Incorporate Contemporary Data: Integrate data from recent high-throughput screening (HTS) campaigns and studies focused on under-explored chemical spaces, such as natural product derivatives [11] [12].

- Leverage Transfer Learning: Pre-train your model on the broad historical database, then fine-tune it on a smaller, carefully curated dataset of modern, relevant compounds to update its predictive capabilities.

- Implement Version Control: Maintain versioned copies of your training databases and models. This allows you to track performance changes and roll back if new data introduces instability [14].

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common types of bias that can affect my LSER model's generalizability?

The most common bias types relevant to chemical models are detailed in the table below.

| Type of Bias | Description | Impact on LSER Models |
|---|---|---|
| Representation Bias [13] | Training data fails to represent the full diversity of the target chemical space. | Poor prediction for solvents/solutes with descriptor values outside the training set range. |
| Historical Bias [14] | Training data reflects past, limited compound sets, not current chemical diversity. | Model is outdated and performs poorly on novel compound classes (e.g., macrocycles, targeted covalent inhibitors). |
| Measurement Bias [15] | Errors or inconsistencies in how experimental solvation data is collected or labeled. | Introduces noise and reduces the overall predictive accuracy and reliability of the model. |
| Aggregation Bias [14] | Combining data from different sources without accounting for systematic differences (e.g., measurement techniques). | Creates a model that is "averaged" and not optimal for any specific chemical sub-space. |

FAQ 2: Beyond simple accuracy metrics, how can I quantitatively measure bias in my training set?

Bias can be measured using specific statistical metrics applied to the model's outputs and the training data's composition [15].

| Metric | Definition | Application in LSER Context |
|---|---|---|
| Demographic Parity [15] | Checks if outcomes are independent of protected attributes. | Check if prediction accuracy is consistent across different molecular families (e.g., alkanes vs. alcohols). |
| Equalized Odds [15] | Requires that True Positive and False Positive Rates are equal across groups. | Ensure the model is equally good at identifying "high" and "low" solvation energy compounds for different chemical classes. |
| Disparate Impact [15] | Measures the ratio of positive outcomes between different groups. | Analyze if the model systematically predicts higher/lower solvation energy for one group of compounds versus another. |
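For a regression-style LSER model, a practical analogue of these group metrics is to compare the error per chemical family. The sketch below is one possible adaptation, not a standard definition: it reports the mean absolute error per group and the ratio of the worst to the best group. The group labels and values are invented for illustration.

```python
from collections import defaultdict


def groupwise_mae(y_true, y_pred, groups):
    """Mean absolute error computed separately for each chemical group."""
    errors = defaultdict(list)
    for t, p, g in zip(y_true, y_pred, groups):
        errors[g].append(abs(t - p))
    return {g: sum(v) / len(v) for g, v in errors.items()}


def error_disparity(mae_by_group):
    """Ratio of worst to best group MAE; 1.0 means equal treatment."""
    worst = max(mae_by_group.values())
    best = min(mae_by_group.values())
    return float("inf") if best == 0 else worst / best


y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.1, 1.9, 3.5, 3.5]
groups = ["alkane", "alkane", "alcohol", "alcohol"]

mae = groupwise_mae(y_true, y_pred, groups)
ratio = error_disparity(mae)
```

In this toy case the alcohols are predicted about five times worse than the alkanes, which would flag the alcohol family for closer inspection.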

FAQ 3: I have a limited budget for new experimental data. What is the most efficient way to improve my biased training set?

The most cost-effective strategy is targeted data acquisition based on an analysis of the gaps in your chemical descriptor space [11]. Instead of collecting data randomly:

- Perform a principal component analysis (PCA) on your current training set using the LSER descriptors.

- Identify the regions of the resulting chemical space that are sparsely populated.

- Prioritize experiments or data purchases for compounds that lie in these sparse regions, thereby maximizing the diversity gain per new data point. This approach is more efficient than a simple random expansion of the dataset [12].
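The prioritization step can be approximated without the PCA projection. The sketch below is a simplified stand-in: it ranks candidate compounds by their Euclidean distance to the nearest training compound in a (hypothetically pre-scaled) descriptor space, so that candidates lying in sparsely populated regions come first. The two-descriptor points are invented; the same function works on PCA scores if a projection is applied beforehand.

```python
import math


def nearest_distance(point, reference):
    """Distance from one point to its closest neighbour in the reference set."""
    return min(math.dist(point, r) for r in reference)


def rank_candidates(train, candidates):
    """Return candidate indices, most isolated (highest priority) first."""
    scored = [(nearest_distance(c, train), i) for i, c in enumerate(candidates)]
    return [i for _, i in sorted(scored, reverse=True)]


# Hypothetical scaled (A, B) descriptor pairs.
train = [(0.0, 0.0), (0.1, 0.1), (0.2, 0.0)]
candidates = [(0.1, 0.0), (0.9, 0.8), (0.4, 0.1)]

priority = rank_candidates(train, candidates)
```

Here the second candidate sits far from every training compound and is ranked first, while the first candidate, which is nearly a duplicate of existing data, is ranked last.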

FAQ 4: Our model is deployed but we've detected a bias issue. What are the immediate mitigation steps without a full retrain?

Post-hoc mitigation is possible. You can:

- Apply Threshold Adjustments: Implement different decision thresholds for different chemical classes to balance error rates [15].

- Use Output Calibration: Calibrate the model's predicted probabilities separately for different segments of your chemical space to ensure they are accurate.

- Deploy an Ensemble Model: Combine the predictions of your existing model with a smaller, specifically trained "corrector" model that is focused on the underrepresented class, effectively debiasing the output.
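The simplest possible "corrector" is a constant offset per chemical class, fitted on held-out residuals and added to the deployed model's output. The sketch below assumes that form; a real corrector could be any small model trained on the residuals, and the class names and numbers are illustrative only.

```python
from collections import defaultdict


def fit_class_offsets(y_true, y_pred, classes):
    """Mean residual (true minus predicted) per chemical class."""
    residuals = defaultdict(list)
    for t, p, c in zip(y_true, y_pred, classes):
        residuals[c].append(t - p)
    return {c: sum(r) / len(r) for c, r in residuals.items()}


def apply_offsets(y_pred, classes, offsets):
    """Shift each prediction by its class offset; unknown classes are unchanged."""
    return [p + offsets.get(c, 0.0) for p, c in zip(y_pred, classes)]


# Held-out data: the deployed model under-predicts acids by about 1 unit.
val_true = [2.0, 2.2, 5.0, 5.4]
val_pred = [2.0, 2.2, 4.0, 4.4]
val_class = ["alkane", "alkane", "acid", "acid"]

offsets = fit_class_offsets(val_true, val_pred, val_class)
corrected = apply_offsets([4.1, 2.1], ["acid", "alkane"], offsets)
```

The original model is untouched; only its outputs are adjusted, which makes the correction easy to roll back once a full retrain is available.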

Experimental Protocols for Bias Assessment

Protocol 1: Auditing an LSER Database for Representational Harm

Objective: To systematically quantify the diversity and representation of chemical functional groups within an LSER training database.

Materials:

- LSER database (e.g., Abraham database)

- Cheminformatics software (e.g., RDKit, OpenBabel)

- Standard statistical analysis software (e.g., R, Python with pandas)

Methodology:

- Data Preparation: Load the database and standardize chemical structures. Calculate molecular descriptors beyond LSER parameters, such as molecular weight, number of hydrogen bond donors/acceptors, and presence of key functional groups (e.g., carboxylic acids, amines, halogens).

- Diversity Analysis:

  - Use a dimensionality reduction technique (e.g., PCA or t-SNE) based on the LSER descriptors (E, S, A, B, V, L) to visualize the chemical space of your database.

  - Identify "data deserts" – sparse regions in the plot that correspond to real-world chemicals of interest.

- Functional Group Audit: Tally the frequency of each key functional group in the database. Compare these frequencies to their prevalence in a larger reference database (e.g., PubChem) to identify under- and over-represented groups.

- Reporting: Generate a report with visualizations of the chemical space and a table of functional group counts. This audit pinpoints the exact nature of representation bias.

Protocol 2: Implementing a Red Teaming Exercise for a Solvation Prediction Model

Objective: To proactively identify model failures and biases by testing on challenging, edge-case compounds before deployment.

Materials:

- Trained LSER prediction model

- A curated "challenge set" of compounds (see below)

- Model inference environment

Methodology:

- Challenge Set Curation: Assemble a set of 50-100 compounds that are chemically valid but likely to stress the model. This set should include:

  - Compounds with extreme LSER descriptor values (e.g., very high A or B).

  - Complex natural products or macrocycles not typically in training sets [11].

  - Compounds from chemical classes known to be underrepresented in the main training data.

- Execution: Run the model predictions on the challenge set.

- Analysis: Calculate the model's accuracy and mean absolute error (MAE) on the challenge set and compare it to the performance on a standard validation set. A significant performance drop indicates vulnerability to bias.

- Iteration: Use the results to inform the strategic expansion of the training set, specifically adding compounds similar to those the model failed on.

Visualization of Workflows

[Figure: Bias Mitigation Workflow]

[Figure: Chemical Space Analysis]

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Context of LSER & Bias Mitigation |
|---|---|
| Abraham LSER Descriptors [10] | The core set of molecular parameters (E, S, A, B, V, L) used to quantify a compound's solvation properties and define its position in chemical space. |
| Curated Compound Aggregator Libraries [11] | Platforms that consolidate commercially available compounds from multiple suppliers. Essential for sourcing specific molecules to fill identified gaps in chemical diversity. |
| Natural Product Extracts & Libraries [12] | Provide access to complex, evolutionarily validated chemical scaffolds often underrepresented in synthetic libraries, crucial for combating historical and representation bias. |
| Fairness Toolkits (e.g., AIF360) | Open-source software containing a suite of algorithms for measuring and mitigating bias in machine learning models, applicable to LSER-based predictive models [13] [15]. |
| Cheminformatics Software (e.g., RDKit) | Provides the computational tools for standardizing structures, calculating molecular descriptors, and analyzing chemical space diversity. |

Building Better Libraries: Methodologies for Expanding and Curating Chemically Diverse LIBS Sets

In the field of Linear Solvation Energy Relationships (LSERs), the predictive accuracy and applicability of models are fundamentally constrained by the chemical diversity of their training sets. A training set that inadequately samples the relevant chemical space can lead to biased models with poor external predictive power. Cheminformatics provides the necessary tools to quantify, analyze, and optimize this diversity. This technical support center outlines how modern computational tools, specifically the iSIM (instant similarity) framework and the BitBIRCH clustering algorithm, can be leveraged to diagnose and solve critical issues related to chemical diversity in library design for LSER research. These methods enable researchers to move beyond simple, often misleading, compound counts and to perform rigorous, similarity-based diversity assessments with high computational efficiency, which is crucial for handling large compound libraries [16] [17].

Essential Concepts & Tools

What are iSIM and BitBIRCH?

iSIM (instant similarity) is a computational framework that calculates the average pairwise similarity for an entire set of molecules with linear O(N) scaling, a significant improvement over the O(N²) cost of performing all pairwise comparisons [16] [17]. It arranges the molecular fingerprints (e.g., binary vectors) as the rows of a matrix and sums each column to obtain a vector ( K = [k_1, k_2, \ldots, k_M] ), where ( k_i ) is the number of "on" bits in column ( i ). These values directly yield the total coincidences of "on" bits (( a )), "off" bits (( d )), and mismatches (( b + c )) across all pairs in the set, which are the components of common similarity indices [16]. For example, the instantaneous Tanimoto (iT) is calculated as: ( iT = \frac{\sum_{i=1}^{M} \frac{k_i(k_i - 1)}{2}}{\sum_{i=1}^{M} \left[ \frac{k_i(k_i - 1)}{2} + k_i(N - k_i) \right]} ) [17]. This value is reported to match the average of all pairwise Tanimoto comparisons while being computed orders of magnitude faster [16] [17]; note that iT pools the counts over all pairs before taking the ratio, so small numerical differences from a mean of individually computed pairwise ratios can occur.

BitBIRCH is a clustering algorithm designed for large-scale chemical datasets. Inspired by the BIRCH (Balanced Iterative Reducing and Clustering using Hierarchies) algorithm, it uses a tree structure to minimize the number of comparisons needed for clustering [17]. Its key advantage is that it is designed specifically for binary fingerprint representations and uses the Tanimoto similarity, making it highly efficient for grouping molecules based on structural similarity in O(N) time, thus enabling the analysis of ultra-large libraries [17].

Table 1: Key Software Toolkits and Libraries for Cheminformatics Analysis

| Tool Name | Type/License | Key Functions Relevant to Diversity Analysis | API/Interface |
|---|---|---|---|
| RDKit [18] [19] [20] | Open-Source Toolkit | Molecule I/O, fingerprint generation (Morgan, RDKit), descriptor calculation, molecular depiction. | C++, Python |
| Chemistry Development Kit (CDK) [18] [19] | Open-Source Library | Chemical structure representation, molecular descriptor calculation, fingerprint generation, SAR analysis. | Java, R, Python |
| Open Babel [18] [19] | Open-Source Program | Chemical file format conversion, structure manipulation, descriptor calculation, substructure search. | C++, Python, Java |
| PaDEL-Descriptor [18] | Open-Source Software | Calculation of molecular descriptors and fingerprints for quantitative analysis. | Command-line, Python wrapper |
| OEChem TK [21] | Commercial Toolkit | Core chemistry handling, molecule file I/O, molecular property calculation, and filtering. | C++, Python, Java, .NET |

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

FAQ 1: My LSER model performs well on the training set but poorly on new compounds. Could this be a chemical diversity issue in my training library, and how can iSIM help diagnose this?

Yes, this is a classic symptom of a training set with insufficient chemical diversity or coverage of the chemical space relevant to your predictions. iSIM can diagnose this by quantifying the internal similarity of your training set. A very high average iSIM value (e.g., iT > 0.7) indicates that the molecules in your set are too similar to each other, creating a narrow model. Furthermore, you can use the concept of complementary similarity from the iSIM framework [17]. By calculating the iSIM of your training set after iteratively removing each molecule, you can identify molecules that are central (low complementary similarity) or peripheral (high complementary similarity) to your set. An over-reliance on a few central chemotypes would be revealed, guiding you to add compounds from the underrepresented, peripheral regions.
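Complementary similarity can be computed cheaply because removing one molecule only subtracts its bits from the column sums. The sketch below assumes binary fingerprints stored as lists of 0/1 integers; the function names are illustrative and the six-bit fingerprints are toy data.

```python
def isim_tanimoto(col_sums, n):
    """Instantaneous Tanimoto from column sums of n binary fingerprints."""
    a = sum(k * (k - 1) / 2 for k in col_sums)   # on-on coincidences
    bc = sum(k * (n - k) for k in col_sums)      # on-off mismatches
    return a / (a + bc) if (a + bc) else 0.0


def complementary_similarities(fps):
    """iT of the set with each molecule left out in turn."""
    n = len(fps)
    col_sums = [sum(col) for col in zip(*fps)]
    result = []
    for fp in fps:
        reduced = [k - bit for k, bit in zip(col_sums, fp)]
        result.append(isim_tanimoto(reduced, n - 1))
    return result


fps = [
    [1, 1, 1, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 1, 1],  # structurally unrelated molecule
]
comp = complementary_similarities(fps)
most_peripheral = max(range(len(fps)), key=comp.__getitem__)
most_central = min(range(len(fps)), key=comp.__getitem__)
```

Removing the unrelated fourth molecule leaves a much more homogeneous set, so it has the highest complementary similarity and is flagged as peripheral, in line with the interpretation above.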

FAQ 2: When using BitBIRCH to cluster a large library, the resulting clusters seem chemically unreasonable. What could be the cause?

This issue typically stems from two main sources:

- Inappropriate Fingerprint Choice: The molecular fingerprint you use defines chemical space. BitBIRCH's performance is sensitive to the type of fingerprint used [17]. A fingerprint that is too coarse (e.g., short MACCS keys) may fail to distinguish meaningful chemotypes. For clustering, more detailed fingerprints like Morgan fingerprints (equivalent to ECFP) are generally recommended.

- Poorly Chosen Similarity Threshold: BitBIRCH, like other clustering methods, requires a similarity threshold to decide when a molecule joins a cluster. A threshold that is too low will create overly broad, heterogeneous clusters, while one that is too high will generate an excessive number of small, fragmented clusters. You must experiment with different thresholds and validate the chemical coherence of the resulting clusters manually or via internal validation metrics.

FAQ 3: How can I efficiently determine which new compounds to add to my existing LSER training set to maximize its chemical diversity?

A combined iSIM and BitBIRCH workflow is highly efficient for this:

- Cluster your existing training set (A) and the candidate new compounds (B) together using BitBIRCH.

- Analyze the resulting clusters. Clusters that contain only molecules from candidate set B represent entirely new chemotypes not covered in your training set. These are high-priority compounds for inclusion.

- Quantify using iSIM. For clusters that contain a mix of A and B, calculate the complementary similarity of the B molecules relative to the entire cluster. Select the candidate molecules with the highest complementary similarity (the most "outlier" or unique) within these mixed clusters to expand the boundaries of your existing chemical space.
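The first two steps of this workflow can be illustrated with a much simpler clustering method. The sketch below does not implement BitBIRCH; it substitutes a basic, order-dependent leader clustering on Tanimoto similarity purely to show how clusters containing only candidate molecules are identified. All function names, the 0.65 threshold, and the toy fingerprints are assumptions.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two binary fingerprints (lists of 0/1)."""
    both = sum(x & y for x, y in zip(a, b))
    either = sum(x | y for x, y in zip(a, b))
    return both / either if either else 0.0


def leader_cluster(fps, threshold):
    """Assign each fingerprint to the first leader it resembles, else start a cluster."""
    leaders, labels = [], []
    for fp in fps:
        for cid, lead in enumerate(leaders):
            if tanimoto(fp, lead) >= threshold:
                labels.append(cid)
                break
        else:
            leaders.append(fp)
            labels.append(len(leaders) - 1)
    return labels


def candidate_only_clusters(train_fps, cand_fps, threshold=0.65):
    """Map cluster id -> candidate indices for clusters with no training molecule."""
    labels = leader_cluster(train_fps + cand_fps, threshold)
    train_clusters = set(labels[:len(train_fps)])
    novel = {}
    for i, cid in enumerate(labels[len(train_fps):]):
        if cid not in train_clusters:
            novel.setdefault(cid, []).append(i)
    return novel


train_fps = [[1, 1, 1, 0, 0, 0], [1, 1, 0, 0, 0, 0]]
cand_fps = [[1, 1, 1, 1, 0, 0], [0, 0, 0, 0, 1, 1], [0, 0, 0, 1, 1, 1]]
novel = candidate_only_clusters(train_fps, cand_fps)
```

In this toy example the first candidate joins the existing training cluster, while the other two form a cluster of their own and would be prioritized as a new chemotype.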

FAQ 4: Are iSIM calculations limited to binary fingerprints, or can they be used with continuous molecular descriptors?

The iSIM framework has been extended to handle real-value molecular descriptors [16]. The requirement is that the descriptor vectors are normalized (e.g., all values between 0 and 1). The core logic remains the same but operates on inner products between the molecular vectors and their "flipped" representations (( \mathbf{\bar{X}} = 1 - \mathbf{X} )) to compute the equivalents of the a, b, c, and d variables for continuous data, allowing for the efficient calculation of similarity indices over the entire set [16].

Common Error Messages and Solutions

Table 2: Common Implementation Issues and Resolutions

| Error / Problem | Likely Cause | Solution |
|---|---|---|
| Inconsistent iSIM results between your implementation and pairwise averages. | Incorrect handling of the fingerprint matrix or column sums. | For binary fingerprints, double-check the calculation of (a), (d), and mismatches from the column-sum vector (K). Ensure the formula for your chosen index (e.g., iT, iSM) is implemented exactly as defined [16]. |
| BitBIRCH fails to cluster or runs extremely slowly. | The input data format is incorrect, or the fingerprint is not a binary vector. | Ensure your molecules are represented as binary fingerprints (e.g., RDKit's Morgan fingerprint in bit-vector mode). Verify the input file format matches the algorithm's expectations. |
| Low iT (suggesting high diversity), but the library does not appear diverse on inspection. | The chosen fingerprint does not capture relevant chemical features for your LSER context. | The definition of diversity is representation-dependent [17]. Switch to a different fingerprint type (e.g., from path-based to circular fingerprints) or use a set of relevant physicochemical descriptors and recalculate. |

Experimental Protocols & Workflows

Standard Protocol: Quantifying Library Diversity with iSIM

Objective: To calculate the average internal Tanimoto similarity of a molecular library efficiently. Materials: A list of molecules in SMILES or SDF format; Cheminformatics toolkit (e.g., RDKit, CDK).

- Fingerprint Generation: Load the molecules and generate a binary fingerprint for each (e.g., RDKit Morgan fingerprint with radius 2 and a fixed length of 2048 bits).

- Construct Matrix: Create a matrix ( \mathbf{X} ) of size N x M, where N is the number of molecules and M is the fingerprint length.

- Column Summation: Sum the elements of each column to produce the vector ( K = [k_1, k_2, \ldots, k_M] ).

- Calculate iT:

  - Compute the total number of "on-on" pairs: ( A = \sum_{i=1}^{M} \frac{k_i(k_i - 1)}{2} ).

  - Compute the total number of "on-off" mismatches: ( BC = \sum_{i=1}^{M} k_i(N - k_i) ).

  - Compute the instantaneous Tanimoto: ( iT = \frac{A}{A + BC} ) [17].

- Interpretation: A lower iT value indicates a more diverse library. Compare this value across different training sets or against a reference library.
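The column-sum calculation can be checked against a brute-force loop over all pairs. The sketch below assumes fingerprints are already available as lists of 0/1 integers (fingerprint generation with a cheminformatics toolkit is omitted), and the function names are illustrative. The brute-force version pools the on-on and mismatch counts over every pair, which is the quantity the column-sum formula reproduces exactly.

```python
from itertools import combinations


def isim_tanimoto(fps):
    """O(N) instantaneous Tanimoto from column sums."""
    n = len(fps)
    col_sums = [sum(col) for col in zip(*fps)]
    a = sum(k * (k - 1) // 2 for k in col_sums)   # on-on pairs per column
    bc = sum(k * (n - k) for k in col_sums)       # on-off pairs per column
    return a / (a + bc)


def pooled_pairwise_tanimoto(fps):
    """O(N^2) reference: pool counts over every pair, then take the ratio."""
    a = bc = 0
    for x, y in combinations(fps, 2):
        a += sum(p & q for p, q in zip(x, y))
        bc += sum(p ^ q for p, q in zip(x, y))
    return a / (a + bc)


fps = [[1, 1, 0], [1, 0, 0], [0, 0, 1]]
fast = isim_tanimoto(fps)
slow = pooled_pairwise_tanimoto(fps)
```

Both routes give 1/7 for this toy set. Both functions assume at least one "on" bit is shared or mismatched somewhere in the set; an all-zero matrix would need an explicit guard.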

Standard Protocol: Partitioning a Library with BitBIRCH Clustering

Objective: To cluster a large molecular library into structurally similar groups. Materials: A list of molecules; Cheminformatics toolkit with BitBIRCH implementation.

- Fingerprint Generation: Generate binary fingerprints for all molecules (as in the iSIM protocol above).

- Configure BitBIRCH: Set the clustering threshold (e.g., Tanimoto similarity of 0.65). This is a critical parameter that controls cluster granularity.

- Execute Clustering: Run the BitBIRCH algorithm on the fingerprint matrix.

- Analyze Output: The output is an assignment of each molecule to a cluster. Analyze the size and chemotypes of the clusters.

  - For Representative Selection: From each cluster, select one or more representative molecules (e.g., the molecule closest to the cluster centroid) for inclusion in a diverse training set.

  - For Diversity Gap Analysis: Identify clusters that are over-represented (too many molecules) or under-represented (few or no molecules) to guide future library acquisition or synthesis.

Integrated Workflow for LSER Training Set Design and Validation

The following workflow diagram integrates iSIM and BitBIRCH to design and validate a chemically diverse LSER training set.

Performance and Comparison Data

Table 3: Computational Scaling of Similarity and Clustering Methods

| Method | Traditional Approach | iSIM / BitBIRCH Approach | Key Advantage |
|---|---|---|---|
| Average Similarity | O(N²) for all pairwise comparisons [16] [17] | O(N) via column-wise summation [16] [17] | Enables analysis of ultra-large libraries (millions of compounds) in feasible time. |
| Clustering | O(N²) for Taylor-Butina and Jarvis-Patrick [17] | O(N) via tree-based indexing with BitBIRCH [17] | Makes clustering of massive datasets tractable without extensive computational resources. |

Integrating Generative AI and Active Learning for Targeted Exploration of Chemical Space

FAQs and Troubleshooting Guides

General Workflow Questions

Q1: What is the core advantage of integrating Generative AI with Active Learning for chemical space exploration?

The primary advantage is the creation of a self-improving cycle that overcomes key limitations of using either method in isolation. The Generative AI, often a Variational Autoencoder (VAE), proposes novel molecules. The Active Learning component then uses computational oracles to evaluate these molecules, selecting the most informative ones to iteratively fine-tune the generative model. This synergy allows for targeted exploration of vast chemical spaces while focusing resources on regions with high predicted affinity, diversity, and synthetic accessibility [22].

Q2: My generative model is producing molecules with low synthetic accessibility or poor drug-likeness. How can I address this?

This is a common challenge. The recommended solution is to implement a multi-stage filtering process within your Active Learning cycle:

- Integrate Chemoinformatic Oracles: Right after molecule generation, employ fast computational filters to assess properties like drug-likeness and synthetic accessibility (SA) [22].

- Use a Nested AL Cycle: Structure your workflow with "inner" Active Learning cycles that use these chemoinformatic oracles. Only molecules passing these filters proceed to more computationally expensive "outer" cycles that involve molecular docking or physics-based simulations [22].

- Reinforcement Learning: Some models address SA by using reinforcement learning with SA estimators or by confining generation to the vicinity of training datasets known for good SA, though this may limit novelty [22].

Q3: In low-data regimes, my exploitative Active Learning model gets stuck on a single scaffold (analog bias). How can I promote diversity?

To combat analog bias and enhance scaffold diversity, consider shifting from a purely exploitative strategy to one that incorporates diversity maximization or uses paired-molecule approaches.

- Adopt a Diversity-Maximizing Strategy: Implement an Active Learning strategy that iteratively selects new molecules to maximize the diversity of the training set. The diversity can be calculated using feature vectors derived from a graph neural network, ensuring the set is representative of the broader chemical space of interest [23].

- Implement the ActiveDelta Approach: Instead of predicting absolute property values, train your model on paired molecular representations to predict property improvements. This approach has been shown to identify more chemically diverse inhibitors in terms of Murcko scaffolds compared to standard exploitative learning, as it more directly learns the path to optimization rather than exploiting a single scaffold [24].
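The paired-learning idea can be made concrete with a small data-preparation sketch. Two details here are assumptions rather than statements about the published method: molecules are represented by simple feature lists, and a pair is encoded by concatenating the two feature vectors. The training target for each ordered pair is the property difference.

```python
from itertools import permutations


def make_pairs(features, values):
    """Build (molecule_i + molecule_j) inputs with target value_j - value_i."""
    X, y = [], []
    for i, j in permutations(range(len(features)), 2):
        X.append(list(features[i]) + list(features[j]))
        y.append(values[j] - values[i])
    return X, y


def pair_with_best(features, values, candidates):
    """Pair the current best training molecule with each unlabeled candidate."""
    best = max(range(len(values)), key=values.__getitem__)
    return [list(features[best]) + list(c) for c in candidates]


# Hypothetical 2-bit features and potency values for three labeled molecules.
features = [[0, 1], [1, 0], [1, 1]]
values = [5.0, 6.5, 7.0]

X_train, y_train = make_pairs(features, values)
X_query = pair_with_best(features, values, [[0, 0]])
```

A model trained on `X_train` and `y_train` predicts improvements directly; scoring `X_query` then ranks candidates by their predicted gain over the current best compound.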

Q4: How can I ensure my model generates molecules that are novel but still similar enough to a known active compound for lead optimization?

A molecular transformer model regularized with a similarity kernel is designed for this exact purpose. This model is trained on billions of molecular pairs with a regularization term that explicitly correlates the probability of generating a target molecule with its similarity to a source molecule. This allows for an exhaustive, controlled exploration of the "near-neighborhood" chemical space around a lead compound, generating highly similar molecules based on precedented and chemically plausible transformations [25].

Technical and Implementation Issues

Q5: The correlation between my model's predictions (e.g., docking scores) and actual experimental affinity is weak. How can I improve target engagement?

To improve the reliability of your predictions, especially when target-specific data is limited, integrate physics-based simulations into your selection pipeline.

- Use Physics-Based Oracles: Replace or supplement data-driven affinity predictors with physics-based molecular modeling oracles, such as molecular docking, which offer greater reliability in low-data regimes [22].

- Implement Refinement Steps: After initial filtering, subject the top-generated candidates to more intensive molecular simulations, such as Monte Carlo simulations with Protein Energy Landscape Exploration (PELE) or absolute binding free energy (ABFE) calculations. These methods provide a more in-depth evaluation of binding interactions and stability, helping to select the most promising candidates for synthesis [22].

Q6: My transformer model generates molecules with low similarity to the source molecule during lead optimization. What is wrong?

The issue likely lies in the model's training. A standard molecular transformer learns the empirical distribution of transformations from its training data without an explicit constraint on similarity.

- Solution: Introduce a similarity-based regularization term into the training loss function. This term penalizes the model if the similarity between the generated target molecule and the source molecule does not align with the generation probability (Negative Log-Likelihood). This forces a direct relationship between the precedence of a transformation and the molecular similarity, ensuring the model generates more similar molecules [25].
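The exact regularizer used in [25] is not reproduced here. As a hedged illustration of the principle, the sketch below uses a hinge-style pairwise ranking term: for any two candidate targets of the same source molecule, the more similar one should receive the lower NLL, and violations are penalized. The function names, `margin`, and `weight` are assumptions.

```python
def similarity_ranking_penalty(nll, similarity, margin=0.0):
    """Average hinge penalty over pairs where NLL order contradicts similarity order."""
    penalty, n_pairs = 0.0, 0
    for i in range(len(nll)):
        for j in range(len(nll)):
            if similarity[i] > similarity[j]:
                # Target i is more similar, so its NLL should be lower.
                penalty += max(0.0, nll[i] - nll[j] + margin)
                n_pairs += 1
    return penalty / n_pairs if n_pairs else 0.0


def total_loss(nll, similarity, weight=1.0):
    """Mean NLL plus the weighted ranking regularizer."""
    base = sum(nll) / len(nll)
    return base + weight * similarity_ranking_penalty(nll, similarity)


similarity = [0.9, 0.6, 0.3]          # e.g. ECFP4 Tanimoto to the source
consistent = similarity_ranking_penalty([1.0, 2.0, 3.0], similarity)
inverted = similarity_ranking_penalty([3.0, 2.0, 1.0], similarity)
```

When the NLL ordering already follows similarity the penalty is zero; when it is inverted, every pair contributes, which pushes the model toward assigning higher probability to closer analogs.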

Q7: How do I handle the high computational cost of running molecular simulations on thousands of generated molecules?

The nested Active Learning cycle is specifically designed to address this. The workflow uses fast, cheap filters (chemoinformatic oracles) in the inner cycles to drastically reduce the number of molecules that advance to the computationally expensive molecular docking stage in the outer cycles. This iterative refinement ensures that only the most promising candidates undergo resource-intensive simulations, maximizing the efficiency of your computational budget [22].

Experimental Protocols & Workflows

Protocol 1: Nested Active Learning with a VAE Generator

This protocol is adapted from a workflow successfully used to generate novel, potent inhibitors for CDK2 and KRAS [22].

1. Data Preparation and Initialization

- Data Representation: Represent training molecules as SMILES strings, which are then tokenized and converted into one-hot encoding vectors for input into the VAE [22].

- Initial Training: First, pre-train the VAE on a large, general molecular dataset (e.g., PubChem) to learn the fundamentals of chemical structure. Then, perform an initial fine-tuning on a target-specific training set to bias the generator towards relevant chemical space [22].

2. Nested Active Learning Cycles

- Step 1 - Generation: Sample the fine-tuned VAE to generate a set of new molecules.

- Step 2 - Inner AL Cycle (Chemical Filters):

  - Validate the chemical correctness of generated molecules.

  - Evaluate them using chemoinformatic oracles for drug-likeness, synthetic accessibility (SA), and dissimilarity to the current training set.

  - Molecules passing these thresholds are added to a "temporal-specific" set, which is used to fine-tune the VAE. Repeat this inner cycle for a set number of iterations to accumulate chemically viable molecules [22].

- Step 3 - Outer AL Cycle (Affinity Filters):

  - After several inner cycles, evaluate the accumulated molecules in the temporal-specific set using a physics-based affinity oracle (e.g., molecular docking simulations).

  - Molecules meeting the docking score threshold are transferred to a "permanent-specific" set.

  - Use this permanent set to fine-tune the VAE, creating a powerful feedback loop that steers generation toward high-affinity structures [22].

- Step 4 - Iterate: Repeat Steps 1-3, conducting inner cycles of chemical exploration nested within outer cycles of affinity-based selection.

3. Candidate Selection and Validation

- After multiple outer AL cycles, apply stringent filtration to the permanent-specific set.

- Use advanced molecular modeling (e.g., PELE Monte Carlo simulations, Absolute Binding Free Energy calculations) to refine docking poses and predict binding affinities with high accuracy [22].

- Select top candidates for synthesis and experimental validation (e.g., in vitro activity assays).
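The control flow of the nested cycles can be sketched independently of any real generator or docking engine. Everything below is a toy stand-in: "molecules" are plain numbers, the generator is a Gaussian sampler, and the callables `generate`, `cheap_ok`, `affinity_score`, and `fine_tune` are hypothetical placeholders for the VAE, the chemoinformatic filters, the docking oracle, and the fine-tuning step. Clearing the temporal set after each outer cycle is also an assumption.

```python
import random


def nested_active_learning(generate, cheap_ok, affinity_score, fine_tune,
                           n_outer=2, n_inner=3, batch=20, score_cutoff=0.8):
    """Inner cycles use cheap filters; the costly oracle runs once per outer cycle."""
    temporal, permanent = [], []
    for _ in range(n_outer):
        for _ in range(n_inner):
            passed = [m for m in generate(batch) if cheap_ok(m)]
            temporal.extend(passed)
            fine_tune(temporal)          # steer generator with viable molecules
        hits = [m for m in temporal if affinity_score(m) >= score_cutoff]
        permanent.extend(hits)
        fine_tune(permanent)             # steer generator with high scorers
        temporal = []                    # assumption: start the next cycle fresh
    return permanent


# --- toy stand-ins, purely illustrative ---
rng = random.Random(0)
state = {"center": 0.5}
oracle_calls = []

def generate(n):
    return [rng.gauss(state["center"], 0.2) for _ in range(n)]

def cheap_ok(m):
    return 0.0 <= m <= 1.0               # stands in for validity / SA filters

def affinity_score(m):
    oracle_calls.append(m)               # count expensive evaluations
    return m

def fine_tune(molecules):
    if molecules:
        state["center"] = sum(molecules) / len(molecules)

selected = nested_active_learning(generate, cheap_ok, affinity_score, fine_tune)
```

The expensive oracle only ever sees molecules that passed the cheap filters, which is the budget-saving property the nested design is meant to provide.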

The workflow for this protocol is illustrated below.

Protocol 2: Exhaustive Local Exploration with a Similarity-Regularized Transformer

This protocol uses a transformer model to exhaustively sample the chemical space around a single source molecule, ideal for lead optimization [25].

1. Model Training

- Data Curation: Train a source-target molecular transformer on a massive dataset of molecular pairs (e.g., hundreds of billions of pairs from PubChem) [25].

- Similarity Regularization: Critically, incorporate a regularization term (e.g., a ranking loss) into the training loss function. This term forces a correlation between the model's Negative Log-Likelihood (NLL) for a generated molecule and its structural similarity (e.g., ECFP4 Tanimoto) to the source molecule [25].

2. Sampling and Exploration

- Beam Search: For a given source molecule, use beam search to identify all target molecules up to a user-defined NLL threshold.

- Exhaustive Enumeration: Due to the enforced similarity-NLL correlation, sampling to a specific NLL threshold corresponds to an approximately exhaustive enumeration of the "near-neighborhood" of the source molecule. The user controls the size and similarity of the explored space by setting the NLL threshold [25].

Performance Data

The table below summarizes quantitative results from key studies implementing these integrated approaches.

Table 1: Performance Metrics of Generative AI and Active Learning Integration in Drug Discovery

| Method / Study | Target / Dataset | Key Performance Results | Reference |
|---|---|---|---|
| VAE with Nested Active Learning | CDK2 | Generated novel scaffolds. Of 9 molecules synthesized, 8 showed in vitro activity, including 1 with nanomolar potency. | [22] |
| ActiveDelta (Paired Learning) | 99 Ki benchmark datasets | Outperformed standard exploitative active learning in identifying potent inhibitors and achieved greater Murcko scaffold diversity. | [24] |
| Similarity-Regularized Transformer | TTD Database (821 compounds) | Model regularization significantly improved the "Rank Score" and correlation between generation probability and molecular similarity. | [25] |
| Diversity-Maximizing Active Learning | Multiple molecular properties | Outperformed random sampling in constructing compact, representative training sets for graph neural network models. | [23] |

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description | Relevance to Workflow |

|---|---|---|

| Variational Autoencoder (VAE) | A generative model that maps molecules to a continuous latent space, allowing for smooth interpolation and controlled generation of novel structures. | Core generative component in the nested Active Learning workflow [22]. |

| Molecular Transformer | A sequence-to-sequence model treating molecular generation as a translation task, ideal for applying localized transformations to a source molecule. | Used for exhaustive local chemical space exploration when regularized for similarity [25]. |

| Chemical Fingerprints (ECFP4) | A vector representation of molecular structure that captures atom environments. Used to calculate molecular similarity. | Critical for calculating Tanimoto similarity for filtering and model regularization [25]. |

| Molecular Docking Software | A computational method that predicts the preferred orientation and binding affinity of a small molecule to a protein target. | Acts as the physics-based affinity oracle in the outer Active Learning cycle [22]. |

| PELE (Protein Energy Landscape Exploration) | An advanced Monte Carlo simulation algorithm used to study protein-ligand binding and dynamics. | Used for candidate refinement after initial docking to better evaluate binding poses and stability [22]. |

| PubChem / ChEMBL | Large, publicly accessible databases of chemical molecules and their biological activities. | Source for initial training data and for benchmarking generated molecules [26]. |

| ActiveDelta Framework | A machine learning approach that trains on paired molecular representations to directly predict property improvements. | Mitigates analog bias in exploitative active learning and enhances scaffold diversity [24]. |
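
To make the fingerprint-similarity entry above concrete, the following minimal sketch computes the Tanimoto coefficient for fingerprints represented as sets of on-bit indices and uses it as a similarity filter. The bit indices, the helper name `filter_by_similarity`, and the 0.5 cutoff are illustrative assumptions. Real ECFP4 fingerprints would be generated by a cheminformatics toolkit, which this sketch does not cover.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    if union == 0:
        # Assumed convention for this sketch: two empty fingerprints score 0.
        return 0.0
    return len(a & b) / union


def filter_by_similarity(source_fp, candidate_fps, min_similarity):
    """Return indices of candidates at least `min_similarity` similar to the source."""
    return [
        i for i, fp in enumerate(candidate_fps)
        if tanimoto(source_fp, fp) >= min_similarity
    ]


# Hypothetical on-bit indices, not real ECFP4 output.
source = {1, 5, 9, 42}
candidates = [{1, 5, 9, 42}, {1, 5, 9, 77}, {3, 8}]

kept = filter_by_similarity(source, candidates, min_similarity=0.5)
print(kept)
```

Representing fingerprints as sets keeps the arithmetic visible: the score is the number of shared bits divided by the number of bits set in either fingerprint.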

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center addresses common challenges researchers face when applying transfer learning (TL) to adapt analytical models from controlled laboratory standards to complex real-world samples. The guidance is framed within thesis research on addressing chemical diversity in Laser-Induced Breakdown Spectroscopy (LIBS) training sets.

Troubleshooting Common Experimental Issues

| Problem Description | Possible Root Cause | Proposed Solution | Key References |

|---|---|---|---|

| Poor Model Generalization: Model performs well on source lab data but fails on real-world target data. | Significant distribution shift or domain gap between the source and target domains. [27] | Implement a domain adaptation strategy using adversarial learning. Introduce a domain discriminator and use a gradient reversal layer to learn domain-invariant features. [27] | Simulation-to-Real Transfer [27] |

| Limited Fault/Sample Data: Insufficient labeled data in the target domain for effective model training. | The real-world process is high-cost, high-risk, or rare, making data collection difficult. [28] [27] [29] | Step 1: Use physics-based modeling to generate simulated source data. [27] Step 2: Employ a multi-scale collaborative adversarial network to align simulated and real-world features. [27] | Simulation-to-Real Transfer [27]; Pharmacokinetics Prediction [28] |

| Unclear Performance Gains: Difficulty in determining if transfer learning is providing a significant benefit. | Lack of a rigorous benchmarking protocol to isolate the impact of TL. [29] | Adopt a two-step TL framework with comparative benchmarks. [29] 1. Pretrain on a large, generic source dataset (e.g., GDSC with various drugs). [29] 2. Refine on a domain-specific dataset (e.g., HGCC for glioblastoma). [29] 3. Compare against models without TL and with 1-step TL on the final target dataset. [29] | Two-Step TL for Drug Response [29] |

| Model Focuses on Incorrect Features: The model learns spurious correlations instead of causally relevant features. | The feature representation learned in the source task is not optimal for the target task. [30] | Apply dual transfer learning. First, pre-train the model on a related but different imaging modality (e.g., histology). Then, fine-tune it on the primary target modality (e.g., confocal endomicroscopy). [30] This helps the network learn more robust, general-purpose feature detectors. [30] | Dual TL for Lung Cancer Diagnosis [30] |

Frequently Asked Questions (FAQs)

Q1: What are the primary categories of transfer learning relevant to chemical analysis? Transfer learning can be broadly categorized based on the relationship between the source and target domains and tasks [28]:

- Homogeneous Transfer Learning: The source and target domains use the same type of data (e.g., same molecular representations), but the prediction tasks are different. An example is a model that leverages knowledge from predicting one PK property to improve prediction of another. [28]

- Heterogeneous In-Domain Transfer Learning: Knowledge is transferred using different types of input data (e.g., 2D graphs vs. 3D spatial fingerprints) for a single prediction task. [28]

- Heterogeneous Cross-Domain Transfer Learning: Knowledge is transferred from entirely different domains, such as using a natural language model pre-trained on general text to predict drug labels and PK properties. [28]

Q2: How can I quantitatively assess the domain shift between my laboratory and real-world datasets before starting? The cited studies do not prescribe a specific metric, but the following procedure is consistent with the practices they describe:

- Procedure: Extract a set of informative features (e.g., elemental ratios, molecular descriptors) from both your source (lab) and target (real-world) datasets.

- Calculation: Compute distribution distance metrics, such as Maximum Mean Discrepancy (MMD) or Wasserstein distance, between the source and target feature sets. [27] A larger distance indicates a more significant domain shift, signaling the need for a robust domain adaptation strategy.
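
The calculation step can be sketched as follows for one of the two metrics mentioned, Maximum Mean Discrepancy. This is a minimal, standard-library-only illustration using the biased squared-MMD estimate with an RBF kernel. The feature vectors, the `gamma` value, and the function names are assumptions chosen for the example, not values from the cited work.

```python
import math


def rbf_kernel(x, y, gamma):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def mmd_squared(source, target, gamma=1.0):
    """Biased estimate of squared MMD between two samples of feature vectors."""
    def mean_kernel(xs, ys):
        total = sum(rbf_kernel(x, y, gamma) for x in xs for y in ys)
        return total / (len(xs) * len(ys))

    return (
        mean_kernel(source, source)
        + mean_kernel(target, target)
        - 2.0 * mean_kernel(source, target)
    )


# Hypothetical two-feature descriptors (e.g., an elemental ratio and a line intensity).
lab = [[0.10, 1.0], [0.12, 1.1], [0.09, 0.9]]
field_similar = [[0.11, 1.0], [0.10, 1.05], [0.12, 0.95]]
field_shifted = [[0.40, 2.0], [0.45, 2.2], [0.38, 1.9]]

print("similar target:", mmd_squared(lab, field_similar))
print("shifted target:", mmd_squared(lab, field_shifted))
```

The result depends on the kernel bandwidth, so `gamma` should be tuned to the scale of the features (a median-distance heuristic is a common choice), and features are normally standardized before comparison.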

Q3: My model is suffering from "negative transfer," where performance is worse than without TL. How can I mitigate this? Negative transfer occurs when the source and target tasks/domains are not sufficiently related. The solution is to improve task relatedness. [29]

- Strategy: Implement a two-step transfer learning framework.

- Protocol:

- Identify a Related Source: Instead of transferring directly from a generic source, first identify a "bridge" domain that is more closely related to your target. In drug response prediction, this meant pre-training on a different but mechanistically related drug (Oxaliplatin) before fine-tuning on the target drug (Temozolomide). [29]

- Refine on a Bridge Domain: Fine-tune your model on this intermediate, related dataset.

- Transfer to Final Target: Finally, fine-tune the now-specialized model on your small, target dataset. [29]

Q4: Can transfer learning be integrated with a physics-based or mechanistic modeling approach? Yes, this is a powerful hybrid approach. The core idea is to use physics-based simulations to generate a rich source domain for TL, overcoming the lack of real-world fault data. [27]

- Workflow:

- Physical Modeling: Construct a dynamic model based on first principles (e.g., Hertz contact theory for bearings) to simulate data under various health states and operating conditions. [27]

- Signal Transformation: Convert the simulated signals into a suitable format (e.g., time-frequency representations via wavelet transform). [27]

- Transfer Learning: Use the simulated data as the source domain and apply a domain adaptation framework (e.g., adversarial learning) to align the simulated features with real-world data features. [27]

Experimental Protocols & Methodologies

Detailed Protocol 1: Two-Step Transfer Learning for Drug Response Prediction

This protocol is adapted from a study predicting Temozolomide (TMZ) response in Glioblastoma (GBM) and is highly relevant for contexts with very small target datasets. [29]

- Objective: To improve the accuracy of a deep learning model in predicting drug response on a small target dataset by leveraging knowledge from larger, related datasets.

- Datasets:

- Source: Genomics of Drug Sensitivity in Cancer (GDSC). A large public dataset containing molecular data and drug response for various cancer types and drugs. [29]

- Bridge/Tuning: Human Glioblastoma Cell Culture (HGCC) dataset. A smaller, domain-specific dataset for the target cancer (GBM) and drug (TMZ). [29]

- Target: A small, specific dataset (e.g., GSE232173) for final validation. [29]

- Step-by-Step Procedure:

- Pretraining: Train separate deep learning models on the GDSC dataset for each of several drugs (e.g., TMZ, Oxaliplatin, Cyclophosphamide). [29]

- Source Model Selection: Evaluate the pretrained models from Step 1 on the bridge dataset (HGCC) to identify which source drug knowledge transfers best to the target drug (TMZ). [29]

- First Fine-Tuning (Step 1 TL): Take the best-performing model (e.g., the one pretrained on Oxaliplatin) and fine-tune it on the bridge dataset (HGCC). [29]

- Second Fine-Tuning (Step 2 TL): Further fine-tune the resulting model from Step 3 on the small target dataset (GSE232173). [29]

- Validation: Benchmark the performance of this two-step TL model against models trained without TL and with direct one-step TL from the source. [29]
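
The control flow of this protocol can be sketched with a deliberately tiny stand-in model. In the example below, a two-parameter linear regression replaces the deep network, and three synthetic, noise-free datasets play the roles of source, bridge, and target. All data, learning rates, and epoch counts are assumptions made for illustration, and the source-model selection step is omitted. The sketch shows only the pretrain, fine-tune, fine-tune sequence and the three-way benchmark.

```python
def make_data(slope, intercept, xs):
    """Synthetic (x, y) pairs on a line; stands in for a real dataset."""
    return [(x, slope * x + intercept) for x in xs]


def fine_tune(params, data, lr=0.1, epochs=5):
    """Full-batch gradient descent on MSE for y = w*x + b, starting from `params`."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2.0 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2.0 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def mse(params, data):
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


grid = [i / 10 for i in range(11)]
source = make_data(2.0, 0.0, grid)                    # large, generic source
bridge = make_data(2.5, 0.5, grid)                    # closer to the target
target_train = make_data(2.6, 0.6, [0.0, 0.5, 1.0])   # very small target set
target_test = make_data(2.6, 0.6, [0.25, 0.75])

pretrained = fine_tune((0.0, 0.0), source, epochs=2000)  # pretraining
bridged = fine_tune(pretrained, bridge, epochs=2000)     # first fine-tuning

# Benchmark: same small training budget on the target for all three variants.
scratch = fine_tune((0.0, 0.0), target_train, epochs=5)  # no TL
one_step = fine_tune(pretrained, target_train, epochs=5) # direct one-step TL
two_step = fine_tune(bridged, target_train, epochs=5)    # two-step TL

for name, params in [("no TL", scratch), ("1-step TL", one_step), ("2-step TL", two_step)]:
    print(f"{name}: test MSE = {mse(params, target_test):.5f}")
```

In this toy setting the bridge dataset is constructed to lie close to the target, so the two-step model starts nearest to the solution. With real data, that relatedness is exactly what the source model selection step has to establish.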

Detailed Protocol 2: Simulation-to-Real Transfer with Adversarial Learning

This protocol is designed for scenarios where real-world fault or target data is scarce, and physics-based modeling is feasible. [27]

- Objective: To diagnose faults in real-world systems by transferring knowledge from a physics-based simulation model.

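- Datasets:

- Source: Simulated vibration signals generated from the physics-based model. [27]

- Target: Real-world vibration signals (e.g., the CWRU Bearing Dataset). [27]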

- Step-by-Step Procedure:

- Source Data Generation: Build a numerical model of the system (e.g., a bearing) based on physical principles (e.g., Hertzian contact theory) and simulate vibration signals for different health states. [27]

- Signal Processing: Transform both simulated and real vibration signals into time-frequency images (e.g., using Continuous Wavelet Transform - CWT) to create a common input representation. [27]

- Model Architecture:

- Adversarial Domain Adaptation:

- Attach a domain discriminator to the feature extractor. Its goal is to distinguish whether features come from the simulation or real world. [27]

- Train the feature extractor to fool the discriminator, thereby learning features that are invariant to the source (simulation) and target (real) domains. [27]

- Collaborative Alignment: Implement independent domain discriminators at multiple semantic scales within the network to ensure comprehensive feature alignment. [27]
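
The gradient reversal idea behind the adversarial step can be illustrated without a deep learning framework. The sketch below uses a one-parameter feature extractor (f = w * x) and a logistic domain discriminator, with gradients derived by hand. It shows only the feature-extractor update, omitting the discriminator's own training step and the fault classifier. All values and function names are assumptions for illustration and do not reproduce the multi-scale network of the cited study.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def grl_backward(upstream_grad, lam=1.0):
    """Gradient reversal layer: identity going forward, -lam * gradient going backward."""
    return -lam * upstream_grad


def domain_loss(w, v, c, samples):
    """Mean binary cross-entropy of the discriminator; samples are (x, domain_label)."""
    total = 0.0
    for x, d in samples:
        p = sigmoid(v * (w * x) + c)
        total -= d * math.log(p) + (1 - d) * math.log(1.0 - p)
    return total / len(samples)


def feature_grad(w, v, c, samples):
    """Hand-derived d(domain_loss)/dw for the feature f = w * x."""
    return sum(
        (sigmoid(v * (w * x) + c) - d) * v * x for x, d in samples
    ) / len(samples)


# Domain label 0 = simulated, 1 = real (hypothetical scalar inputs).
samples = [(1.0, 0), (1.2, 0), (3.0, 1), (3.2, 1)]
w, v, c, lr = 1.0, 1.0, -2.0, 0.1

loss_before = domain_loss(w, v, c, samples)
# The feature extractor receives the reversed gradient, so an ordinary
# descent step on it is an ascent step on the discriminator's loss.
w_new = w - lr * grl_backward(feature_grad(w, v, c, samples), lam=1.0)
loss_after = domain_loss(w_new, v, c, samples)

print(f"discriminator loss before: {loss_before:.4f}, after: {loss_after:.4f}")
```

The discriminator's loss rises after the feature-extractor step, which is the intended effect: the features become harder to assign to a domain. In a full training loop this step would alternate with, or run jointly with, a normal descent step on the discriminator's own parameters.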

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Relevance in Transfer Learning Research |

|---|---|

| GDSC (Genomics of Drug Sensitivity in Cancer) Dataset | A large-scale public resource used as a source domain for pre-training models on drug response across multiple cancer types and compounds. [29] |

| CellVizio pCLE System | A confocal laser endomicroscopy device used to acquire real-time in vivo microscopic images; serves as a target domain data source for medical image classification tasks. [30] |

| CWRU Bearing Dataset | A benchmark dataset of real-world vibration signals from bearings; commonly used as the target domain for validating simulation-to-real transfer learning in fault diagnosis. [27] |

| Hertz Contact Theory Model | A physics-based model used to generate simulated vibration data for bearings; acts as a synthetic source domain when real fault data is unavailable. [27] |

| Kolmogorov-Arnold Network (KAN) | A modern neural network architecture with learnable activation functions on edges; can be used in a Multi-scale KAN Convolutional Network (MKANC) for enhanced nonlinear feature extraction from complex data. [27] |

| Wavelet Transform (e.g., CWT) | A signal processing tool used to convert 1D time-series signals (vibration, spectral) into 2D time-frequency representations, providing a richer input for feature extraction models. [27] |

The following table summarizes quantitative performance improvements achieved by transfer learning in various studies, providing benchmarks for expected outcomes.

| Application Domain | TL Method | Benchmark Performance | TL Performance Gain | Key Metric |

|---|---|---|---|---|

| PK/ADME Prediction [28] | Homogeneous Multi-task Graph Attention | Not Reported | Achieved MCC: 0.53 (Classification); AUC: 0.85 (Regression) | Matthews Correlation Coefficient (MCC), Area Under Curve (AUC) |

| Bearing Fault Diagnosis [27] | Simulation-to-Real Adversarial Learning | Traditional methods fail under cross-domain conditions. | Proposed framework achieves high diagnostic accuracy in the target domain. | Diagnostic Accuracy |

| GBM Drug Response (TMZ) [29] | Two-Step TL (Oxaliplatin as source) | MGMT biomarker: Limited predictive power. [29] | Superior to models without TL and with 1-step TL. [29] | Prediction Accuracy |

| Lung Cancer Classification [30] | Dual TL (Histology → pCLE) | Confocal TL only: Lower accuracy (e.g., ~90% for ResNet). [30] | AlexNet: 94.97% Accuracy, 0.98 AUC. [30] GoogLeNet: 91.43% Accuracy, 0.97 AUC. [30] | Accuracy, AUC |
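
For readers less familiar with the Matthews Correlation Coefficient reported in the table, the following minimal sketch computes it from binary confusion-matrix counts. The counts in the usage lines are invented for illustration, and returning 0 when the denominator vanishes is a common convention assumed here rather than something specified by the cited studies.

```python
import math


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient from binary confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # Assumed convention: undefined cases (an empty row or column) map to 0.
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical counts for three classifiers on a balanced test set.
print(mcc(tp=5, tn=5, fp=0, fn=0))   # perfect agreement
print(mcc(tp=5, tn=5, fp=5, fn=5))   # no better than chance
print(mcc(tp=0, tn=0, fp=5, fn=5))   # perfect disagreement
```

MCC ranges from -1 to 1 and uses all four cells of the confusion matrix, which makes it more informative than accuracy on imbalanced datasets.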

Workflow and Relationship Diagrams

[Diagram: TL Paradigm for Chemical Analysis]

[Diagram: Two-Step Transfer Learning Process]

Technical Support Center: FAQs & Troubleshooting

This section addresses common challenges researchers face when applying transfer learning to mitigate physical matrix effects in Laser-Induced Breakdown Spectroscopy (LIBS).

FAQ 1: Our pellet-based calibration model performs poorly on raw rock samples. What is the primary cause?