Beyond LSER: Comparing Modern Polarity Scales and QSPR Approaches for Drug Discovery

Abstract

This article provides a comprehensive analysis of Linear Solvation Energy Relationship (LSER) models in comparison with emerging polarity scales and Quantitative Structure-Property Relationship (QSPR) approaches relevant to pharmaceutical research. We explore foundational concepts of solvent polarity measurement, examine methodological applications in property prediction, address common challenges in model implementation, and validate approaches through comparative analysis. Specifically, we investigate innovative compartmentalized polarity scales like the PN parameter for ionic liquids and structure-based pharmacophore modeling techniques for G protein-coupled receptors (GPCRs), highlighting their advantages over traditional methods for predicting compound behavior in complex biological systems. This resource equips researchers with practical insights for selecting optimal computational approaches in drug development workflows.

Understanding Polarity Scales and QSPR Foundations in Pharmaceutical Research

Polarity describes an asymmetric distribution of properties: of electron density within a molecule, or of molecular components within a cell or membrane. Accurate measurement and modeling of polarity are crucial in pharmaceutical research because they directly influence drug design, efficacy, and ADMET properties (Absorption, Distribution, Metabolism, Excretion, and Toxicity). The evolution from traditional bulk solvent measurements to compartmentalized biological approaches represents a paradigm shift in how scientists quantify and use polarity information.

This evolution has been driven by the integration of Linear Solvation Energy Relationships (LSER) with advanced Quantitative Structure-Property Relationship (QSPR) modeling. While LSER parameters provide a framework for understanding solute-solvent interactions, modern QSPR approaches leverage topological descriptors and machine learning to predict physicochemical properties directly from molecular structure. The most significant advancement emerges from recognizing that polarity is not uniform within biological systems but is compartmentalized at subcellular and macromolecular levels, creating specialized microenvironments that profoundly influence biological activity and drug behavior.

Historical Foundations of Polarity Measurement

The Origins of Polarimetry

The scientific investigation of polarity began with optical polarization phenomena discovered in the late 1600s. Erasmus Bartholinus first observed double refraction in Iceland spar (calcite) in 1669, and Christiaan Huygens later determined that the two resulting beams exhibited directional properties, although the term "polarization" had not yet been coined [1]. The field then lay largely dormant for more than a century until Étienne-Louis Malus discovered polarization by reflection in 1808, when he viewed sunlight reflected from the windows of the Luxembourg Palace in Paris through a rotating calcite crystal analyzer [1].

Augustin Fresnel's subsequent work in the early 19th century established the theoretical foundation for polarization optics through his laws of reflection for obliquely incident polarized light. His identification and production of circular and elliptical polarization, along with his derivation of reflection coefficients for dielectric interfaces, earned him recognition as a founder of ellipsometry [1]. These optical discoveries paved the way for the first quantitative polarity measurements through polarimetry and ellipsometry.

Development of Quantitative Polarity Scales

The 20th century witnessed the development of empirical solvent polarity scales, which quantified solvent effects on chemical processes and spectroscopic properties. Key scales included the Kamlet-Taft parameters (π*, α, β), Reichardt's dye-based ET(30) scale, and solvatochromic comparison methods. These approaches enabled researchers to quantify solvent polarity through its effects on standard probe molecules, creating multidimensional parameters that could dissect specific solute-solvent interactions.
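To make the idea of a dye-based scale concrete, the sketch below converts the longest-wavelength absorption maximum of the betaine dye into an ET(30) value using the standard relation ET(30) [kcal/mol] = 28591 / λmax [nm], and then normalizes it against tetramethylsilane (30.7 kcal/mol) and water (63.1 kcal/mol). The function names are illustrative, and the 453 nm input is an approximate literature value for water used only as an example.

```python
def et30_from_lambda_max(lambda_max_nm: float) -> float:
    """Molar transition energy ET(30) in kcal/mol from the dye's
    absorption maximum in nm: ET(30) = 28591 / lambda_max."""
    if lambda_max_nm <= 0:
        raise ValueError("lambda_max_nm must be positive")
    return 28591.0 / lambda_max_nm


def normalized_et(et30_kcal_mol: float) -> float:
    """Dimensionless normalized value: 0 for tetramethylsilane (30.7)
    and 1 for water (63.1), so the span is 63.1 - 30.7 = 32.4."""
    return (et30_kcal_mol - 30.7) / 32.4


# Approximate absorption maximum of the betaine dye in water (assumed input).
et_water = et30_from_lambda_max(453.0)
print(f"ET(30) = {et_water:.1f} kcal/mol, ETN = {normalized_et(et_water):.2f}")
```

A shorter absorption wavelength gives a larger ET(30), which is why strongly hydrogen-bonding solvents sit at the top of this scale.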

Linear Solvation Energy Relationships (LSER) emerged as a powerful framework for predicting solvent-dependent properties by correlating them with linear combinations of solute and solvent parameters. The general LSER equation takes the form:

\[ \text{Property} = \text{Property}_0 + a\alpha + b\beta + s\pi^* + \ldots \]

where α represents hydrogen-bond donor acidity, β represents hydrogen-bond acceptor basicity, and π* represents dipolarity/polarizability. Property₀ is the intercept, and a, b, and s are fitted coefficients that weight each type of interaction. This approach allows quantitative prediction of how a molecular property responds to different solvent environments.
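As a minimal sketch of how the three-term form of this equation is evaluated, the snippet below computes a property from solvent parameters and regression coefficients. The class and function names are illustrative, and every numeric value is a made-up placeholder rather than a measured solvent parameter or a published fit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SolventParams:
    """Kamlet-Taft style solvent parameters."""
    alpha: float    # hydrogen-bond donor acidity
    beta: float     # hydrogen-bond acceptor basicity
    pi_star: float  # dipolarity/polarizability


def lser_property(p0: float, a: float, b: float, s: float,
                  solvent: SolventParams) -> float:
    """Evaluate Property = Property_0 + a*alpha + b*beta + s*pi_star."""
    return p0 + a * solvent.alpha + b * solvent.beta + s * solvent.pi_star


# Hypothetical solvent and coefficients, chosen only to show the arithmetic.
example_solvent = SolventParams(alpha=1.0, beta=0.5, pi_star=0.5)
value = lser_property(p0=10.0, a=2.0, b=-4.0, s=6.0, solvent=example_solvent)
print(value)
```

In practice the coefficients come from multilinear regression over many solvents, so the sign and size of each coefficient indicate which interaction the property is most sensitive to.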

Table 1: Traditional Solvent Polarity Parameters

| Parameter | Description | Measurement Method | Key Applications |
|---|---|---|---|
| ET(30) | Empirical polarity parameter based on dye solvatochromism | UV-Vis spectroscopy of betaine dye | General solvent polarity ranking |
| π* | Dipolarity/Polarizability | Solvatochromic comparison | Dipolar interactions |
| α | Hydrogen-bond donor acidity | Solvatochromic comparison | Proton donor strength |
| β | Hydrogen-bond acceptor basicity | Solvatochromic comparison | Proton acceptor strength |
| Log P | Octanol-water partition coefficient | Shake-flask/chromatography | Hydrophobicity estimation |

The Shift to Compartmentalized Polarity in Biological Systems

Discovery of Plasma Membrane Compartmentalization

The paradigm of polarity measurement underwent a fundamental transformation with the discovery that biological membranes exhibit intrinsic compartmentalization rather than uniform polarity distribution. Seminal research in Drosophila embryos revealed that the plasma membrane in the syncytial blastoderm is polarized into discrete domains with epithelial-like characteristics before cellularization [2].

Using fluorescence imaging with targeted membrane markers (GAP43-Venus, PH(PLCδ1)-Cerulean, and Toll-Venus), researchers observed distinct membrane domains: one apical-like region residing above individual nuclei and another lateral domain containing markers associated with basolateral membranes and junctions [2]. This polarity emerged without physical cell boundaries, challenging previous assumptions about membrane uniformity.

Restricted Diffusion in Membrane Domains

Fluorescence Recovery After Photobleaching (FRAP) and Fluorescence Loss In Photobleaching (FLIP) experiments demonstrated that molecules could diffuse within each membrane domain but exhibited minimal exchange between plasma membrane regions above adjacent nuclei [2]. This compartmentalization created functionally distinct microenvironments with different polarity characteristics.

Crucially, drug-induced F-actin depolymerization disrupted both the apicobasal-like polarity and the diffusion barriers, correlating with perturbations in spatial patterning of Toll signaling [2]. This established that intact cytoskeletal networks are essential for maintaining polarity compartmentalization and proper morphogen gradient formation.

Table 2: Key Findings in Biological Polarity Compartmentalization

| Discovery | Experimental Evidence | Biological Significance |
|---|---|---|
| Pre-cellularization polarity | Differential localization of membrane markers in Drosophila embryos | Polarity establishment precedes physical cell boundaries |
| Domain-restricted diffusion | FRAP/FLIP experiments showing limited molecular exchange between domains | Creates functionally specialized microenvironments |
| Cytoskeletal dependence | F-actin depolymerization disrupts both polarity and diffusion barriers | Active maintenance of compartmentalization |
| Signaling regulation | Correlation between intact polarity and proper Toll signaling patterns | Compartmentalization shapes morphogen gradients |

Integration with QSPR and Topological Approaches

Topological Indices as Polarity Descriptors

Quantitative Structure-Property Relationship (QSPR) modeling has changed how polarity-related properties are obtained by enabling their prediction directly from molecular structure. Topological indices (TIs), numerical descriptors derived from molecular graphs, have emerged as powerful tools for capturing structural features related to polarity [3] [4].

In these molecular graphs, atoms are represented as vertices and chemical bonds as edges. Degree-based topological indices quantify connectivity patterns, while distance-based indices capture broader structural relationships. Temperature-based indices, including the Product Connectivity Temperature Index, Harmonic Temperature Index, and Symmetric Division Temperature Index, have shown particular utility in predicting polarity-related properties [3].
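The following sketch shows how such indices can be computed from a hydrogen-suppressed molecular graph stored as an adjacency list. The degree-based (first Zagreb, Randić) and distance-based (Wiener) definitions are standard. The temperature-based functions assume the commonly cited vertex temperature T(u) = d(u) / (n - d(u)), where d(u) is the degree and n the number of vertices; that definition and the function names are assumptions here and should be checked against [3] before use.

```python
from collections import deque
from math import sqrt


def edge_list(adj):
    """Return each undirected edge once as a (u, v) pair with u < v."""
    return [(u, v) for u in adj for v in adj[u] if u < v]


def first_zagreb(adj):
    """Sum of squared vertex degrees."""
    return sum(len(nbrs) ** 2 for nbrs in adj.values())


def randic(adj):
    """Sum over edges of 1 / sqrt(d(u) * d(v))."""
    return sum(1.0 / sqrt(len(adj[u]) * len(adj[v])) for u, v in edge_list(adj))


def wiener(adj):
    """Sum of shortest-path distances over all unordered vertex pairs (BFS)."""
    total = 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2  # every pair was counted from both ends


def temperature(adj):
    """Assumed vertex temperature T(u) = d(u) / (n - d(u))."""
    n = len(adj)
    return {v: len(nbrs) / (n - len(nbrs)) for v, nbrs in adj.items()}


def harmonic_temperature(adj):
    """Sum over edges of 2 / (T(u) + T(v)), under the assumption above."""
    t = temperature(adj)
    return sum(2.0 / (t[u] + t[v]) for u, v in edge_list(adj))


def product_connectivity_temperature(adj):
    """Sum over edges of 1 / sqrt(T(u) * T(v)), under the assumption above."""
    t = temperature(adj)
    return sum(1.0 / sqrt(t[u] * t[v]) for u, v in edge_list(adj))


# n-Butane carbon skeleton: a path of four vertices.
butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
print(first_zagreb(butane), wiener(butane), round(randic(butane), 4))
```

An adjacency list keeps the example free of dependencies; in a real workflow the graph would normally be derived from a SMILES string with a cheminformatics toolkit.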

For anticancer drugs including Aminopterin, Daunorubicin, and Podophyllotoxin, topological indices have shown strong correlations with boiling point, molar refractivity, polar surface area, and molecular volume [3]. These QSPR models allow researchers to estimate polarity-related properties without resource-intensive experimental measurements.

Machine Learning Enhancement of QSPR Models

Modern QSPR modeling has incorporated machine learning algorithms to capture complex, non-linear relationships between molecular structure and physicochemical properties. Artificial Neural Networks (ANN) and Random Forest (RF) models have significantly improved prediction accuracy for polarity-related properties in pharmaceutical compounds [4].

These advanced computational approaches leverage both traditional topological indices and newer descriptors based on reverse vertex degrees and reduced reverse vertex degrees [4]. The integration of machine learning with topological descriptors has been successfully applied to antimalarial compounds, demonstrating high predictive accuracy for properties critical to drug efficacy and bioavailability.

Experimental Protocols for Modern Polarity Assessment

Protocol 1: Membrane Polarity and Compartmentalization Analysis

Objective: To characterize plasma membrane polarity domains and diffusion barriers in living cells.

Materials:

- Cells or embryos expressing fluorescent membrane markers (GAP43-Venus, PH(PLCδ1)-Cerulean, Toll-Venus)

- Confocal fluorescence microscope with photobleaching capability

- Appropriate environmental control chamber

- F-actin depolymerizing drugs (e.g., Latrunculin B) for perturbation studies

Methodology:

- Transfer living specimens to imaging chamber and maintain at appropriate physiological conditions

- Acquire high-resolution confocal images to establish steady-state distribution of membrane markers

- For FRAP analysis: Select a small region of interest (ROI) on the membrane and apply high-intensity laser pulse to bleach fluorescence

- Monitor recovery of fluorescence in the bleached area at regular intervals (e.g., every 5 seconds for 5 minutes)

- For FLIP analysis: Repeatedly photobleach a specific membrane region while monitoring fluorescence loss in distant areas

- Repeat experiments following F-actin depolymerization to assess cytoskeletal dependence

- Analyze fluorescence recovery/loss kinetics using appropriate modeling software

Data Analysis:

- Calculate diffusion coefficients from FRAP recovery curves

- Determine mobile/immobile fractions of membrane components

- Map diffusion barriers by analyzing fluorescence exchange between membrane domains

- Correlate polarity domain integrity with biological signaling outcomes
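The data-analysis steps above can be sketched as follows. The mobile fraction uses the usual ratio of recovered to bleached intensity, and the diffusion coefficient uses the Soumpasis relation D = 0.224 w² / t½, which assumes a uniform circular bleach spot of radius w and free two-dimensional diffusion. The recovery trace is synthetic (a single exponential with an arbitrary time constant), sampled every 5 seconds for 5 minutes to mirror the protocol; all function names and numbers are illustrative.

```python
from math import exp


def mobile_fraction(f_pre, f_0, f_plateau):
    """Fraction of bleached fluorescence that recovers."""
    return (f_plateau - f_0) / (f_pre - f_0)


def half_time(times, intensities, f_plateau):
    """Time at which recovery reaches halfway between the first
    post-bleach value and the plateau (linear interpolation)."""
    f_0 = intensities[0]
    target = f_0 + 0.5 * (f_plateau - f_0)
    for i in range(1, len(times)):
        y1, y2 = intensities[i - 1], intensities[i]
        if y1 <= target <= y2 and y2 > y1:
            frac = (target - y1) / (y2 - y1)
            return times[i - 1] + frac * (times[i] - times[i - 1])
    raise ValueError("recovery never reaches half of the plateau")


def diffusion_coefficient(bleach_radius_um, t_half_s):
    """Soumpasis estimate in um^2/s for a circular bleach spot."""
    return 0.224 * bleach_radius_um ** 2 / t_half_s


# Synthetic trace: pre-bleach 1.0, post-bleach 0.2, plateau 0.8, tau 20 s.
times = [5.0 * i for i in range(61)]  # 0 to 300 s
trace = [0.2 + 0.6 * (1.0 - exp(-t / 20.0)) for t in times]

plateau = sum(trace[-5:]) / 5  # average of the last frames
m_f = mobile_fraction(f_pre=1.0, f_0=trace[0], f_plateau=plateau)
t_half = half_time(times, trace, plateau)
d_est = diffusion_coefficient(bleach_radius_um=1.0, t_half_s=t_half)
print(f"mobile fraction {m_f:.2f}, t_half {t_half:.1f} s, D {d_est:.4f} um^2/s")
```

With 5-second sampling the interpolated half-time is only approximate; faster acquisition or a curve fit would tighten the estimate.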

Protocol 2: QSPR Modeling with Topological Indices

Objective: To develop predictive models for polarity-related physicochemical properties using topological indices.

Materials:

- Chemical structures of compounds of interest (in SMILES or similar format)

- Python programming environment with RDKit, Scikit-learn, or specialized QSPR packages

- Database of experimental physicochemical properties (e.g., ChemSpider)

- Graph visualization software (e.g., Graph Online)

Methodology:

- Convert chemical structures to molecular graphs (atoms as vertices, bonds as edges)

- Calculate topological indices using appropriate algorithms:

  - Degree-based indices (Zagreb indices, Randić index)

  - Distance-based indices (Wiener index)

  - Temperature-based indices (Product Connectivity, Harmonic, Symmetric Division)

  - Reverse degree-based indices

- Compile experimental property data (polar surface area, molar refractivity, etc.)

- Perform correlation analysis between topological indices and physicochemical properties

- Develop regression models (linear, multiple linear, machine learning)

- Validate models using cross-validation and external test sets
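The regression and validation steps above can be illustrated with a single-descriptor linear model and leave-one-out cross-validation. This is a dependency-free sketch; the descriptor and property values are synthetic placeholders, not data from [3] or [4], and a real study would typically use a library such as scikit-learn with several descriptors.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def loo_q2(xs, ys):
    """Leave-one-out Q^2 = 1 - PRESS / SST: each compound is predicted
    by a model fitted on all the other compounds."""
    mean_y = sum(ys) / len(ys)
    press = 0.0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        slope, intercept = fit_line(train_x, train_y)
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    sst = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / sst


# Synthetic example: one topological index (x) against one property (y).
index_values = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
property_values = [3.1, 4.9, 7.2, 8.8, 11.1, 12.9]

slope, intercept = fit_line(index_values, property_values)
q2 = loo_q2(index_values, property_values)
print(f"slope {slope:.3f}, intercept {intercept:.3f}, Q2 {q2:.3f}")
```

A Q² well below the fitted R² is a common warning sign that a model describes its training compounds better than it predicts new ones.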

Data Analysis:

- Evaluate model performance using R² values, root mean square error

- Identify topological indices with strongest predictive power for specific properties

- Compare traditional LSER parameters with topological descriptors

- Implement machine learning models (ANN, Random Forest) for improved prediction of complex relationships
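For the performance comparison described above, R² and root mean square error can be computed on the same held-out compounds for each candidate model. The sketch below does this for two hypothetical prediction sets; the labels "LSER" and "topological" and all numbers are placeholders for illustration only.

```python
from math import sqrt


def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    n = len(y_true)
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SSE / SST."""
    mean_y = sum(y_true) / len(y_true)
    sse = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    sst = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - sse / sst


# Hypothetical held-out observations and two sets of model predictions.
observed = [1.0, 2.0, 3.0, 4.0]
predictions = {
    "LSER": [1.2, 1.9, 3.3, 3.8],
    "topological": [1.0, 2.1, 2.9, 4.1],
}

for name, pred in predictions.items():
    print(f"{name}: R2 = {r_squared(observed, pred):.3f}, "
          f"RMSE = {rmse(observed, pred):.3f}")
```

Reporting both metrics helps because R² depends on the spread of the test set, whereas RMSE stays in the units of the property.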

Visualization of Key Concepts

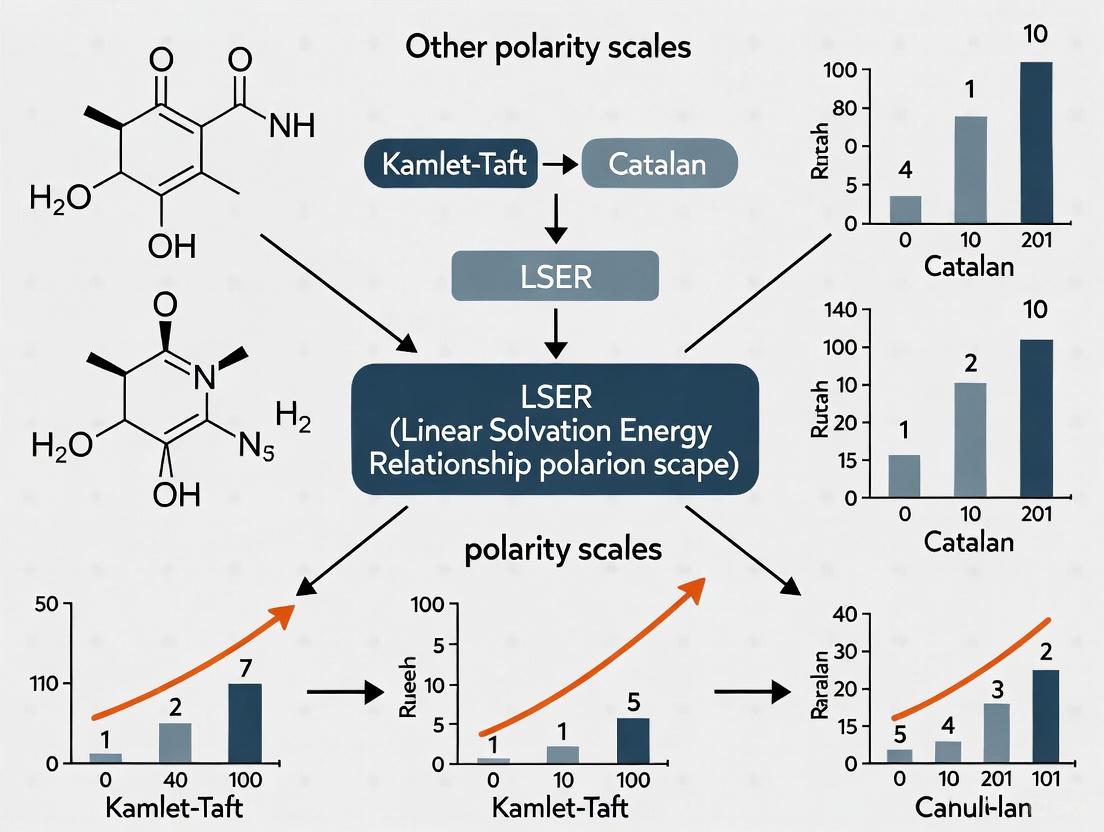

Evolution of Polarity Measurement Approaches

Plasma Membrane Compartmentalization

QSPR Workflow with Topological Indices

Research Reagent Solutions

Table 3: Essential Reagents for Modern Polarity Research

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Fluorescent Membrane Markers | GAP43-Venus, PH(PLCδ1)-Cerulean, Toll-Venus | Specific labeling of membrane domains and compartments |
| Cytoskeletal Perturbation Agents | Latrunculin B (F-actin depolymerizer) | Investigation of structural maintenance of polarity |
| Computational Chemistry Tools | RDKit, Python QSPR packages, Graph Online | Calculation of topological indices and molecular descriptors |
| Machine Learning Platforms | Scikit-learn, TensorFlow, PyTorch | Development of advanced QSPR prediction models |
| Polarity-Sensitive Dyes | Reichardt's dye, solvatochromic probes | Traditional polarity measurement and validation |

The evolution of polarity measurement from traditional bulk approaches to compartmentalized biological perspectives represents a fundamental transformation in chemical and pharmaceutical research. Where LSER parameters once provided the primary framework for understanding solvent effects, modern QSPR approaches now leverage topological indices and machine learning to predict polarity-related properties directly from molecular structure.

The most significant advancement comes from recognizing that biological systems exhibit intricate polarity compartmentalization at subcellular levels, creating specialized microenvironments that profoundly influence drug behavior and biological activity. The integration of these perspectives – traditional LSER, computational QSPR, and biological compartmentalization – provides a comprehensive framework for understanding and exploiting polarity in pharmaceutical development.

Future advances will likely focus on multi-scale modeling approaches that connect molecular-level polarity descriptors with macroscopic biological outcomes, further bridging the gap between computational prediction and biological reality in drug design and development.

Limitations of Conventional Polarity Scales for Complex Chemical Systems

In molecular thermodynamics and solvation science, "polarity" represents an overarching, complex concept intended to quantify the ability of a solvent or solute to engage in various intermolecular interactions. Conventional polarity scales, often derived from solvatochromic probe measurements or linear free-energy relationships, have provided valuable frameworks for predicting solubility, partitioning, and reactivity. However, these traditional approaches exhibit significant limitations when applied to contemporary challenges in chemical research, including the development of advanced materials, solvate ionic liquids, and pharmaceutical formulations with complex molecular architectures.

The fundamental issue with conventional polarity characterization lies in its reductionist nature. As noted in studies of aqueous solutions, "different polarity values provide different estimates for the same solvent" and "there is no absolute correct measure of polarity" [5]. This inherent limitation becomes critically problematic when attempting to predict behavior in complex, multi-component systems where specific solute-solvent interactions dominate macroscopic properties. The research community increasingly recognizes that a single-parameter polarity scale cannot adequately capture the nuanced interplay of intermolecular forces—including dispersion, dipolarity/polarizability, hydrogen-bond donation, and hydrogen-bond acceptance—that collectively determine solvation phenomena [6] [5].

This technical review examines the specific limitations of conventional polarity scales, highlighting how emerging approaches combining quantum chemical calculations with multi-parameter linear solvation energy relationships (LSER) are addressing these challenges, particularly in pharmaceutical and advanced materials applications.

Fundamental Theoretical Shortcomings of Conventional Approaches

Thermodynamic Inconsistency in Self-Solvation Cases

Conventional polarity scales and their implementation in LSER models demonstrate significant thermodynamic inconsistencies, particularly evident in self-solvation scenarios where solute and solvent are identical molecules. The Abraham LSER model, while widely successful for practical predictions, produces peculiar results when applied to hydrogen-bonded solutes. The model fails to maintain the expected equality of complementary hydrogen-bonding interaction energies when solute and solvent become identical, indicating a fundamental limitation in its parameterization approach [7].

This inconsistency arises because "the LSER descriptors and the corresponding LFER coefficients are typically determined by multilinear regression of experimental data" without enforcing thermodynamic constraints that must hold true for self-solvation cases [7]. The problem permeates beyond academic interest, as it affects predictive accuracy for systems with strong specific interactions like alcohols, amines, and carboxylic acids that frequently appear in pharmaceutical compounds and biological media.

Inadequate Separation of Interaction Contributions

Traditional polarity scales often conflate multiple interaction types into a single parameter, limiting their predictive capability for systems where specific interactions dominate. As demonstrated in aqueous solution studies, solvent features encompass at least three distinct aspects: polarity/dipolarity, hydrogen bond donor (HBD) acidity, and hydrogen bond acceptor (HBA) basicity [5]. These parameters vary independently in solutions of different compounds, yet conventional scales typically provide only composite measures.

The limitation becomes particularly evident in solvate ionic liquids (SILs), where polarity was found to be "an interesting outcome of the interaction between the cation, chelating species and anion" [8]. In these complex systems, the measured polarity parameters show non-intuitive relationships with molecular structure because conventional approaches cannot deconvolute the competing contributions of cation-probe, anion-probe, and cation-anion interactions to the overall solvation environment.

Practical Limitations in Contemporary Applications

Challenges with Complex Pharmaceutical Molecules

Conventional polarity parameters and prediction methods face significant challenges when applied to modern pharmaceutical compounds, which often feature complex molecular structures, multiple functional groups, and acid/base characteristics. As noted in studies of drug molecule partitioning, "popular prediction tools such as EpiSuite and SPARC provide unreliable values for large molecules" [9], highlighting how traditional QSPR approaches based on conventional polarity descriptors fail for structurally complex compounds.

The problem is particularly acute for drug molecules, which "are semi-volatile compounds with complex molecular structures" and are "often acids, bases, or zwitterions" [9]. These characteristics introduce multiple competing intermolecular interactions that cannot be captured by simplistic polarity scales. Furthermore, the "lack of experimental reference data raises questions about the accuracy of computed values" derived from these conventional approaches [9], creating circular validation problems in pharmaceutical development.

Table 1: Limitations of Conventional Polarity Scales for Drug Molecules

| Challenge Area | Specific Limitation | Impact on Predictive Capability |
|---|---|---|
| Structural Complexity | Inadequate descriptors for large, flexible molecules with multiple functional groups | Unreliable prediction of partitioning behavior for complex pharmaceuticals |
| Ionizable Compounds | Failure to account for protonation states and zwitterionic forms | Inaccurate solvation models for biologically relevant pH conditions |
| Data Availability | Limited experimental reference data for regulated substances | Compromised validation of prediction models for new chemical entities |

Limitations for Emerging Materials Systems

Solvate ionic liquids represent a class of materials where conventional polarity scales demonstrate significant limitations. These systems, typically composed of equimolar mixtures of lithium salts with chelating solvents like glymes or glycols, exhibit polarity behavior that cannot be predicted from their constituent components [8]. The measured polarity parameters in SILs show unusual trends that reflect "the unique nature of this class of 'solvents' in terms of the range of polarity observed" [8], highlighting the failure of conventional group-contribution or additive approaches.

Similar limitations appear in aqueous polymer solutions and biological media, where the "solvent features of water in solutions of various compounds are linearly related to each other" but in ways that cannot be captured by simple polarity parameters [5]. In these complex systems, solute molecules alter multiple solvent properties simultaneously, including dipolarity/polarizability, HBD acidity, and HBA basicity, through complex molecular-level interactions that conventional scales cannot resolve.

Methodological Limitations and Experimental Constraints

Probe-Dependent Measurements and Scale Proliferation

A fundamental methodological limitation of conventional polarity scales is their dependence on specific molecular probes, which leads to proliferation of competing scales and impedes universal comparison. As noted in aqueous solution research, "the use of molecular probes did not ensure a generalized/universal scale of solute-solvent interactions" because "the interactions of the probe with the solvent need not differentiate the specific and nonspecific solvent interactions, giving rise to polarity scales which were subject to the choice of probe molecules" [8].

This probe-dependence creates particular problems when attempting to characterize new materials systems like solvate ionic liquids, where researchers observed that "the use of Reichardt's dye was not feasible for all SIL samples studied, thus necessitating the use of Burgess' dye" [8]. Such limitations in experimental applicability further constrain the utility of conventional polarity scales for novel chemical systems.

Table 2: Methodological Limitations of Experimental Polarity Assessment

| Methodological Issue | Consequence | Emerging Solution Approach |
|---|---|---|
| Probe-Dependent Results | Different probes yield different polarity rankings for the same solvent | Multi-parameter approaches using homomorphic probes (e.g., Catalan's method) |
| Inapplicable Probes | Some dyes are unstable or insoluble in new solvent systems | Quantum-chemical calculations replacing experimental probes |
| Temperature Dependence | Limited data on temperature variation of polarity parameters | Computational methods enabling temperature-dependent prediction |

Data Scarcity and Regression Limitations

The development and application of conventional polarity scales face significant practical hurdles due to experimental data scarcity, particularly for complex and novel compounds. As observed in LSER research, "new, reliable experimental data on solvation or hydrogen-bonding quantities are becoming more and more scarce following the related scarcity of research groups on experimental thermodynamics worldwide" [7]. This scarcity creates a fundamental limitation for expanding conventional approaches to new chemical spaces.

Furthermore, the statistical foundations of traditional LSER models present inherent limitations. The models rely on "multilinear regression of experimental data" where "the model expansion is, thus, restricted by the availability of experimental data" [7]. This constraint creates circular dependencies that hinder predictive application to novel compounds lacking extensive experimental measurements, a particular challenge in pharmaceutical development where new chemical entities constantly emerge.

Emerging Solutions: Quantum-Chemical and Integrated Approaches

Quantum-Chemical LSER Descriptors

Recent research addresses the limitations of conventional polarity scales through the development of quantum-chemical (QC) LSER descriptors derived from computational chemistry. These approaches leverage "molecular descriptors based on quantum-chemical calculations" to create predictive methods "free, to a rather significant extent, from the above limitations" of conventional LSER models [6]. The QC-LSER methodology enables thermodynamically consistent reformulation of LSER models while providing a pathway for a priori prediction of solvation properties without extensive experimental data [7].

These new methods use "new molecular descriptors of electrostatic interactions derived from the distribution of molecular surface charges obtained from COSMO-type quantum chemical calculations" [7]. This represents a fundamental shift from empirical parameterization to first-principles computation, potentially overcoming the probe-dependence and data scarcity limitations of conventional polarity scales.

Diagram 1: Evolution from Conventional Polarity Scales to QC-LSER Approaches

COSMO-RS and LSER Integration

The integration of conductor-like screening model for real solvation (COSMO-RS) with LSER methodologies represents another promising approach to overcoming conventional limitations. COSMO-RS serves as "one of the best currently available a-priori predictive methods for solvation free energies" [10] and provides a pathway for "the development of simple enough but thermodynamically consistent linear solvation energy relationships" [7]. This integration enables first-principles prediction of hydrogen-bonding contributions to solvation enthalpies, addressing a key limitation of conventional LSER approaches [10].

The statistical thermodynamic formulation of COSMO-RS combined with LSER molecular descriptors facilitates "the direct interconnection of the quantum-mechanics based COSMO-RS model with Abraham's LSER model" [10], creating a hybrid framework that leverages the strengths of both approaches while mitigating their individual limitations.

Experimental Protocols for Advanced Polarity Assessment

Quantum-Chemical LSER Implementation Protocol

Objective: Implement QC-LSER methodology to predict solvation properties without experimental polarity parameters.

Methodology:

- Molecular Structure Optimization: Perform quantum-chemical geometry optimization using density functional theory (DFT) with appropriate basis sets.

- COSMO Calculations: Conduct COSMO calculations to obtain sigma surfaces and sigma profiles for each compound of interest.

- Descriptor Calculation: Compute new QC-LSER descriptors from molecular surface charge distributions, including electrostatic interaction parameters.

- Solvation Energy Prediction: Apply QC-LSER equations to predict solvation free energies, enthalpies, and entropies.

- Thermodynamic Validation: Verify thermodynamic consistency, particularly for self-solvation cases where solute and solvent are identical.

Key Advantages: This protocol enables "the extraction of valuable information on intermolecular interactions and its transfer in other LFER-type models, in acidity/basicity scales, or even in equation-of-state models" [7].
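The COSMO step of this protocol yields a set of surface segments, each with an area and a screening charge density σ, and the sigma profile is the area-weighted histogram of those σ values. The sketch below builds such a histogram from a list of (area, σ) pairs. The segment values, the σ range of ±0.025 e/Å², and the bin count are illustrative assumptions; in practice these data come from the output of a quantum-chemistry package, and the QC-LSER descriptors of [7] are derived from the profile by procedures not reproduced here.

```python
from math import floor


def sigma_profile(segments, sigma_min=-0.025, sigma_max=0.025, n_bins=50):
    """Area-weighted histogram of screening charge densities.

    segments: iterable of (area in A^2, sigma in e/A^2) pairs.
    Returns (bin_centers, areas), where areas[i] is the total surface
    area whose sigma falls into bin i.
    """
    width = (sigma_max - sigma_min) / n_bins
    areas = [0.0] * n_bins
    for area, sigma in segments:
        if not sigma_min <= sigma < sigma_max:
            raise ValueError(f"sigma {sigma} is outside the histogram range")
        index = min(floor((sigma - sigma_min) / width), n_bins - 1)
        areas[index] += area
    centers = [sigma_min + (i + 0.5) * width for i in range(n_bins)]
    return centers, areas


# Hypothetical surface segments for a small molecule.
example_segments = [
    (2.0, -0.0125),  # negative sigma region
    (3.0, 0.0005),   # near-neutral surface
    (1.5, 0.0005),
    (4.0, 0.0105),   # positive sigma region
]

centers, areas = sigma_profile(example_segments)
print(sum(areas), max(areas))
```

Because the histogram conserves total surface area, summing the profile is a quick sanity check that no segment was dropped.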

Multi-Parameter Solvatochromic Measurement Protocol

Objective: Characterize solvent features using multi-parameter approach to overcome single-parameter polarity scale limitations.

Methodology:

- Probe Selection: Employ a set of three solvatochromic dyes: 4-nitroanisole for dipolarity/polarizability (π*), 4-nitrophenol for HBA basicity (β), and Reichardt's carboxylated betaine dye for HBD acidity (α).

- Spectroscopic Measurement: Record UV-visible absorption spectra for each probe in the solvent system of interest.

- Parameter Calculation: Determine individual solvent parameters from spectral shifts using established correlation equations.

- Cross-Validation: Verify internal consistency using the established relationship: $\pi^*_{ij} = k_{\pi j} + k_{\alpha j}\,\alpha_{ij} + k_{\beta j}\,\beta_{ij}$.

Application Notes: This approach has been successfully applied to "over 60 various solutes including inorganic salts, free amino acids, small organic compounds, polymers, and a few proteins" [5].
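The cross-validation step of this protocol can be sketched as a residual check: given fitted constants for the linear relation among the three solvent features, compare the measured dipolarity/polarizability with the value implied by the measured HBD acidity and HBA basicity. Reading the k terms as fitted constants is an assumption made for this example, and the coefficient and measurement values are hypothetical placeholders, not values from [5].

```python
def predicted_pi_star(k_pi, k_alpha, k_beta, alpha, beta):
    """Value of pi* implied by the linear relation
    pi* = k_pi + k_alpha * alpha + k_beta * beta."""
    return k_pi + k_alpha * alpha + k_beta * beta


def consistency_residual(measured_pi_star, k_pi, k_alpha, k_beta, alpha, beta):
    """Measured minus predicted pi*; a large residual flags a measurement
    that does not follow the fitted relation."""
    return measured_pi_star - predicted_pi_star(k_pi, k_alpha, k_beta, alpha, beta)


# Hypothetical fitted constants and one hypothetical measurement.
residual = consistency_residual(
    measured_pi_star=1.09,
    k_pi=1.0, k_alpha=0.2, k_beta=-0.3,
    alpha=1.0, beta=0.5,
)
print(f"residual = {residual:.3f}")
```

What counts as an acceptable residual depends on the measurement uncertainty of the probes, so any threshold should be set from replicate measurements rather than fixed in advance.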

Essential Research Reagent Solutions

Table 3: Key Reagents for Advanced Polarity Assessment

| Reagent/Category | Function in Polarity Assessment | Application Notes |
|---|---|---|
| Catalan's Solvatochromic Probes | Multi-parameter polarity assessment using homomorphic probes | Enables separation of dipolarity, HBD acidity, and HBA basicity [8] |
| COSMOtherm Software Suite | Quantum-chemical calculation of sigma profiles and solvation properties | Implements COSMO-RS theory for a priori prediction; version 19 with TZVPD-Fine recommended [10] |
| Quantum Chemical Codes | Molecular structure optimization and electronic property calculation | Required for QC-LSER descriptor computation; DFT methods typically employed [7] [9] |
| Abraham LSER Database | Reference data for LSER coefficients and molecular descriptors | Foundation for traditional LSER with expanding quantum-chemical extensions [7] |
| Specialized Polarizers | Infrared polarization spectroscopy for species detection | BBO or TiO2 polarizers with high extinction ratios (>10⁻⁵) for IRPS measurements [11] |

Conventional polarity scales, while historically valuable for simple chemical systems, exhibit fundamental limitations when applied to complex contemporary challenges in pharmaceutical development and advanced materials. These limitations include thermodynamic inconsistencies, inadequate separation of interaction contributions, probe-dependent measurements, and data scarcity for complex molecules. The research community is actively addressing these challenges through quantum-chemical LSER approaches, COSMO-RS integration, and multi-parameter solvatochromic methods that provide more nuanced, predictive characterization of solvation phenomena. As chemical systems of interest grow increasingly complex, these advanced methodologies will become essential tools for accurate prediction and rational design across chemical, pharmaceutical, and materials sciences.

Quantitative Structure-Property Relationships (QSPR) are a core methodology in chemical and pharmaceutical sciences that mathematically relates descriptors of molecular structure to physicochemical properties or biological activities [12]. When the modeled endpoint is a biological activity, the approach is usually termed Quantitative Structure-Activity Relationship (QSAR) [12]. These models rest on the principle that molecular structure determines properties and activities, which lets researchers predict the behavior of untested compounds through statistical or machine learning methods [13]. The general form of a QSPR model is expressed as: Activity = f(physicochemical properties and/or structural properties) + error, where the error term encompasses both model bias and observational variability [12].

In the broader context of molecular descriptor research, QSPR approaches complement other established methodologies like Linear Solvation Energy Relationships (LSER), which quantify solute-solvent interactions using molecular descriptors such as volume, polarity, and hydrogen-bonding parameters [14]. While LSER models specifically address solvation-related thermodynamic properties through linear free energy relationships, QSPR encompasses a wider range of properties and often employs more diverse descriptor types and modeling techniques [15] [14]. This versatility makes QSPR invaluable across multiple disciplines, including drug discovery, toxicity prediction, risk assessment, and materials science [12].

Fundamental Concepts and Molecular Descriptors

Theoretical Basis and Key Assumptions

The foundational assumption underlying QSPR modeling is that similar molecules exhibit similar properties and activities [12]. This Structure-Activity Relationship (SAR) principle, however, comes with the "SAR paradox," which acknowledges that not all similar molecules display similar activities, indicating the complexity of molecular interactions [12]. Successful QSPR modeling depends on several critical factors: quality of input data, appropriate descriptor selection, suitable statistical methods, and rigorous validation protocols [12].

QSPR models can be categorized based on their mathematical approach (regression or classification) and the type of molecular representation they utilize [12]. Regression models predict continuous values (e.g., inhibition constants, partition coefficients), while classification models categorize compounds into discrete groups (e.g., active/inactive, toxic/non-toxic) [12]. The molecular representations range from simple two-dimensional structural fragments to complex three-dimensional molecular fields and quantum-chemical properties [15] [12].

Types of Molecular Descriptors

Molecular descriptors quantitatively capture structural features and are central to QSPR modeling. The table below summarizes major descriptor categories and their applications:

Table 1: Classification of Molecular Descriptors in QSPR Studies

| Descriptor Category | Representative Examples | Molecular Properties Captured | Common Applications |

|---|---|---|---|

| Empirical Descriptors | Abraham parameters (A, B, S, E, V), Kamlet-Taft parameters (α, β, π*) [15] [14] | Hydrogen bond acidity/basicity, dipolarity/polarizability, excess molar refraction | LSER models, solvation property prediction, partition coefficients |

| Theoretical Descriptors | COSMO-based descriptors (VCOSMO*, αCOSMO, βCOSMO, δCOSMO) [15] | Molecular volume, acidity, basicity, charge asymmetry | Solvation thermodynamics, prediction for ionic liquids |

| Topological Indices | Zagreb indices, Randić index, Harmonic index [16] [17] | Molecular branching, shape, connectivity | Predicting boiling points, molar volume, enthalpy of vaporization |

| 3-Dimensional Descriptors | CoMFA fields, molecular surface areas, volume descriptors [12] | Steric bulk, electrostatic potential fields | Receptor-ligand interactions, conformational-dependent properties |

| Quantum Chemical Descriptors | HOMO/LUMO energies, partial atomic charges, dipole moments [15] | Electronic distribution, reactivity, interaction energies | Reaction modeling, excited state properties |

Empirical descriptors, such as the Abraham parameters and Kamlet-Taft parameters, are derived from experimental measurements and have proven successful in predicting solvation-related properties [15] [14]. Theoretical descriptors, including those derived from quantum chemical computations like the recently developed DFT/COSMO-based descriptors, offer the advantage of being calculable purely from molecular structure without prior experimental data [15]. These computed descriptors have demonstrated strong correlation with established empirical scales (mostly R² > 0.8, and for some R² > 0.9) [15].

Topological indices represent another important descriptor class that quantifies molecular connectivity patterns. Studies on anti-hepatitis drugs have demonstrated that topological indices can effectively predict physicochemical properties including boiling points, molar volume, and vaporization enthalpy [16] [17]. For example, the first Zagreb index shows high correlation (0.961) with boiling points, while the harmonic index effectively estimates molar refraction (0.963) [17].
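To make the degree-based indices concrete, the sketch below computes the first and second Zagreb, Randić, and harmonic indices from a hydrogen-suppressed molecular graph using their standard graph-theoretical definitions. The function names and the 2-methylbutane example are illustrative choices, not taken from the cited studies.

```python
from math import sqrt


def vertex_degrees(edges):
    """Count how many bonds touch each atom in a hydrogen-suppressed graph."""
    deg = {}
    for u, v in edges:
        deg[u] = deg.get(u, 0) + 1
        deg[v] = deg.get(v, 0) + 1
    return deg


def topological_indices(edges):
    """Return four degree-based indices for a graph given as a list of bonds."""
    deg = vertex_degrees(edges)
    return {
        # First Zagreb index: sum of squared vertex degrees
        "first_zagreb": sum(d * d for d in deg.values()),
        # Second Zagreb index: sum over bonds of the product of end degrees
        "second_zagreb": sum(deg[u] * deg[v] for u, v in edges),
        # Randic index: sum over bonds of 1 / sqrt(d_u * d_v)
        "randic": sum(1.0 / sqrt(deg[u] * deg[v]) for u, v in edges),
        # Harmonic index: sum over bonds of 2 / (d_u + d_v)
        "harmonic": sum(2.0 / (deg[u] + deg[v]) for u, v in edges),
    }


# Illustrative example: carbon skeleton of 2-methylbutane (atoms numbered 0-4)
ISOPENTANE_EDGES = [(0, 1), (1, 2), (2, 3), (1, 4)]
indices = topological_indices(ISOPENTANE_EDGES)
print(indices)
```

For real drug molecules the edge list would normally come from a cheminformatics toolkit rather than being typed by hand; the index formulas stay the same.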

QSPR Methodologies and Modeling Techniques

Fundamental Workflow and Protocol Development

The QSPR modeling process follows a systematic workflow with four main stages: data collection and curation, descriptor calculation and selection, model building, and validation. Each stage is described below.

Data Collection and Curation Protocols

The initial phase of QSPR modeling requires careful data collection and curation. For a study on anti-hepatitis drugs, researchers obtained two-dimensional structures of 16 hepatitis medications and computed 14 different topological indices [17]. Experimental data for validation was collected from ChemSpider, including properties such as molecular weight, enthalpy, boiling point, density, vapor pressure, and logP [17]. Data curation typically involves handling missing values, removing outliers, and standardizing molecular representations (e.g., tautomer standardization, neutralization of charges) [13].

The dataset splitting methodology is crucial for developing robust models. Common approaches include random splits, time-based splits (for temporal validation), and activity-based splits to ensure representative distribution of activities in both training and test sets [12]. For the hepatitis drug study, researchers utilized specialized software tools including MATLAB for computation verification and SPSS for statistical analysis including linear regression equations and parameter calculations [17].
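One simple way to realize an activity-based split is to rank compounds by the measured response and send every k-th compound to the test set, so both sets span the full activity range. The sketch below assumes this particular scheme; the function name, the `test_every` parameter, and the compound records are hypothetical and are not drawn from the cited studies.

```python
def activity_based_split(records, test_every=4):
    """Rank (id, activity) pairs by activity and move every
    `test_every`-th ranked compound into the test set."""
    if test_every < 2:
        raise ValueError("test_every must be at least 2")
    ranked = sorted(records, key=lambda rec: rec[1])
    train, test = [], []
    for position, rec in enumerate(ranked):
        if position % test_every == test_every - 1:
            test.append(rec)
        else:
            train.append(rec)
    return train, test


# Hypothetical compound IDs and activity values, for illustration only
records = [
    ("cpd1", 5.2), ("cpd2", 7.9), ("cpd3", 6.1), ("cpd4", 4.3),
    ("cpd5", 8.4), ("cpd6", 6.8), ("cpd7", 5.7), ("cpd8", 7.2),
]
train_set, test_set = activity_based_split(records, test_every=4)
print("train:", train_set)
print("test:", test_set)
```

A random split would replace the ranking step with a seeded shuffle; a time-based split would sort by measurement date instead of activity.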

Descriptor Calculation and Selection Methods

Descriptor calculation methods vary significantly based on descriptor type. For topological indices, researchers typically represent molecular structures as graphs where atoms are vertices and bonds are edges [17] [12]. The edge partitioning technique is employed to compute vertex degrees, which serve as inputs for topological formulas [17]. For quantum chemical descriptors, low-cost Density Functional Theory (DFT) calculations with the COSMO (Conductor-like Screening Model) solvation approach have proven effective [15]. This methodology involves several steps:

- Geometry Optimization: Molecular structures are optimized using DFT methods to find the most stable conformation [15]

- COSMO Calculations: The screening charge density is computed using the COSMO approach, typically implemented in programs like the ADF/COSMO-RS module of Amsterdam Modeling Studio [15]

- Descriptor Calculation: Four primary descriptors are derived - VCOSMO (molecular volume), αCOSMO (hydrogen bond/Lewis acidity), βCOSMO (basicity), and δCOSMO (charge asymmetry of the nonpolar region) [15]

Descriptor selection and reduction techniques are employed to avoid overfitting and improve model interpretability. Methods include stepwise selection, genetic algorithms, and principal component analysis (PCA) [12]. The objective is to identify a minimal set of descriptors that maximally explains the variance in the target property while maintaining physical interpretability.

Model Building and Validation Frameworks

The core modeling phase involves selecting appropriate algorithms and validation strategies. The table below compares common QSPR modeling approaches:

Table 2: QSPR Modeling Techniques and Their Applications

| Modeling Technique | Mathematical Basis | Advantages | Limitations | Typical Applications |

|---|---|---|---|---|

| Multiple Linear Regression (MLR) | Linear combination of descriptors | Simple, interpretable, less prone to overfitting | Limited to linear relationships | Preliminary screening, property prediction |

| Partial Least Squares (PLS) | Latent variable projection | Handles correlated descriptors, works with many variables | Less interpretable than MLR | 3D-QSAR (CoMFA), spectral data |

| Decision Trees/Random Forests | Hierarchical splitting rules | Handles non-linearity, provides feature importance | Can overfit without proper tuning | Classification tasks, toxicity prediction |

| Support Vector Machines (SVM) | Maximum margin hyperplane | Effective in high dimensions, handles non-linearity | Black box, parameter sensitive | Activity classification, complex endpoints |

| Artificial Neural Networks (ANN) | Multi-layer interconnected nodes | Captures complex non-linear relationships | Black box, requires large datasets | Complex property prediction, multi-task learning |

Model validation is critical for ensuring predictive reliability. Standard validation protocols include [12]:

- Internal Validation: Typically using cross-validation techniques like leave-one-out (LOO) or leave-many-out (LMO) to assess model robustness

- External Validation: Using a completely independent test set not involved in model building to evaluate predictive performance

- Data Randomization (Y-scrambling): Verifying the absence of chance correlations by randomizing response variables and confirming model performance degradation

- Applicability Domain (AD) Assessment: Defining the chemical space where the model can reliably predict
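The internal-validation and Y-scrambling steps can be sketched for the simplest case, a one-descriptor linear model. The code below computes a leave-one-out q² as 1 − PRESS/SS_tot (with SS_tot taken about the mean of all responses, one common convention) and repeats the calculation on shuffled responses. The data are synthetic and the function names are illustrative.

```python
import random


def fit_line(x, y):
    """Ordinary least-squares slope and intercept for one descriptor."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def loo_q2(x, y):
    """Leave-one-out cross-validated q2 = 1 - PRESS / SS_tot."""
    mean_y = sum(y) / len(y)
    press = 0.0
    for i in range(len(y)):
        # Refit without compound i, then predict it
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - press / ss_tot


def y_scrambled_q2(x, y, n_rounds=50, seed=0):
    """q2 values obtained after randomly permuting the responses."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = list(y)
        rng.shuffle(shuffled)
        scores.append(loo_q2(x, shuffled))
    return scores


# Synthetic descriptor/response pairs, for illustration only
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [3.1, 4.9, 7.2, 8.8, 11.1, 12.9, 15.2, 16.8, 19.1, 20.9]

q2_real = loo_q2(x, y)
q2_scrambled = y_scrambled_q2(x, y)
mean_scrambled = sum(q2_scrambled) / len(q2_scrambled)
print(f"q2 (real) = {q2_real:.3f}, mean q2 (scrambled) = {mean_scrambled:.3f}")
```

A model that passes this check shows a clear gap between the real q² and the scrambled distribution; external validation and an applicability-domain check would still be needed before trusting predictions on new compounds.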

For the hepatitis drug study, researchers used correlation coefficients (r²) to evaluate the relationship between topological indices and physicochemical properties, finding that the harmonic index effectively predicted molar volume and molar refraction, while the first Zagreb index correlated strongly with boiling points [17].

Computational Tools and Research Reagents

Modern QSPR research relies on specialized software tools for descriptor calculation, model building, and validation. The following table outlines key resources:

Table 3: Essential Computational Tools for QSPR Research

| Tool Name | Type | Primary Function | Key Features | Access |

|---|---|---|---|---|

| QSPRpred [13] | Python package | QSPR model development and deployment | Modular API, automated serialization, includes data preprocessing in saved models | Open-source |

| Amsterdam Modeling Studio [15] | Quantum chemistry suite | DFT/COSMO calculations for theoretical descriptors | ADF/COSMO-RS module, geometry optimization, σ-profile generation | Commercial |

| MATLAB [17] | Numerical computing | Computation verification and algorithm development | Extensive mathematical toolbox, custom script development | Commercial |

| SPSS [17] | Statistical analysis | Regression analysis and statistical validation | User-friendly interface, comprehensive statistical tests | Commercial |

| DeepChem [13] | Python library | Deep learning for molecular modeling | Diverse featurizers, deep learning models, integration with TensorFlow/PyTorch | Open-source |

| KNIME [13] | Workflow platform | Visual workflow design for QSPR | GUI-based, extensive node library, integration with various tools | Open-source |

QSPRpred represents a recent advancement in QSPR software, addressing challenges in reproducibility and model deployment [13]. Its key innovation includes automated serialization that saves models with all required data pre-processing steps, enabling direct predictions from SMILES strings without manual intervention [13]. This addresses a critical gap in many existing tools where reproducing the preparation workflow for deployment remains challenging.

Experimental and Computational Reagents

Successful QSPR studies require both computational and experimental components. Key research reagents include:

- Reference Compound Sets: Well-characterized molecules with experimentally determined properties for model training and validation. For solvation studies, this includes compounds with established Abraham parameters or Kamlet-Taft parameters [15] [14]

- Quantum Chemical Methods: Density Functional Theory (DFT) methods with appropriate basis sets and solvation models like COSMO for theoretical descriptor calculation [15]

- Descriptor Calculation Algorithms: Implementations for topological indices (e.g., Zagreb, Randić, Harmonic indices) and other molecular descriptors [17]

- Validation Datasets: Curated collections of compounds with reliable experimental data for external validation, often from databases like ChemSpider [17]

For the development of new DFT/COSMO-based descriptors, researchers utilized sets of 128 non-ionic organic molecules and 47 ions composing ionic liquids, with properties validated against established empirical scales [15].

Advanced Applications and Comparative Analysis

Case Studies in Pharmaceutical Research

QSPR approaches have demonstrated significant utility in pharmaceutical research, particularly in predicting physicochemical properties critical for drug development. The hepatitis drug study revealed several important structure-property relationships [17]:

- The harmonic index showed strong correlation with molar volume and molar refraction, enabling prediction of molecular size and polarizability

- The first Zagreb index effectively predicted boiling points, important for understanding drug stability and purification

- The Randić index proved critical for determining LogP values, directly relevant to membrane permeability and bioavailability

- The second Zagreb index strongly correlated with enthalpy of vaporization, useful for predicting solvation effects

These findings provide pharmaceutical scientists with theoretical methods for obtaining crucial information about drug candidates without extensive laboratory testing [17]. Similar approaches have been successfully applied to other drug classes, including anti-tuberculosis medications, breast cancer drugs, and anxiety treatments [17].

Integration with LSER and Other Polarity Scales

In the broader context of molecular descriptor research, QSPR methodologies complement established approaches like Linear Solvation Energy Relationships (LSER). The integration between these frameworks enables richer thermodynamic insights and expands application possibilities [14].

The Partial Solvation Parameters (PSP) framework represents an innovative approach to bridge LSER and QSPR methodologies [14]. PSPs are designed with an equation-of-state thermodynamic basis that facilitates information exchange between different descriptor systems [14]. This integration enables researchers to:

- Extract thermodynamic information from LSER databases for use in QSPR models [14]

- Reconcile descriptors from different sources including quantum chemical calculations, LSER molecular descriptors, and empirical polarity scales [14]

- Estimate free energy changes upon formation of specific molecular interactions, particularly hydrogen bonds [14]

Recent research has verified that there is a thermodynamic basis for the linearity observed in LFER models, even for strong specific interactions like hydrogen bonding [14]. This theoretical foundation enhances confidence in applying these models for predictive purposes in drug discovery and materials science.

Emerging Trends and Methodological Advances

The field of QSPR continues to evolve with several emerging trends shaping future research directions. Integration of machine learning and artificial intelligence represents a significant advancement, with tools like QSPRpred offering streamlined workflows for model development, validation, and deployment [13]. The increasing emphasis on model reproducibility and transferability addresses critical limitations in earlier QSPR approaches, ensuring that models can be reliably applied in practical settings [13].

The development of novel descriptor types continues to expand the applicability of QSPR methods. Recent work on DFT/COSMO-based descriptors demonstrates how low-cost quantum chemical computations can generate theoretically sound descriptors that correlate well with empirical scales [15]. Similarly, advancements in proteochemometric modeling (PCM) extend traditional QSPR by incorporating protein target information, enabling predictions across protein families and enhancing applications in polypharmacology and off-target prediction [13].

QSPR methodologies provide a powerful framework for bridging molecular structure with biological activity and physicochemical properties. The core principle—that molecular structure determines properties—enables researchers to predict behavior for untested compounds, significantly accelerating discovery processes in pharmaceutical and chemical sciences. When positioned within the broader landscape of molecular descriptor research, QSPR complements established approaches like LSER while offering greater versatility in the types of properties and compounds that can be modeled.

The continuing development of computational tools, descriptor types, and modeling approaches ensures that QSPR will remain a cornerstone of molecular design and optimization. By integrating insights from theoretical chemistry, statistical modeling, and machine learning, QSPR methodologies provide researchers with powerful strategies to navigate complex structure-activity relationships and make informed decisions in drug discovery and materials design.

The accurate quantification of solvent polarity is a cornerstone of physical chemistry, with profound implications for predicting reaction rates, optimizing separation processes, and designing pharmaceutical compounds. Traditional polarity scales, such as the empirical ET(30) scale based on solvatochromic dye effects, have provided valuable insights but face significant limitations. These methods can be time-consuming, expensive, and difficult to apply universally, particularly for non-structured liquids like ionic liquids (ILs) where classical concepts like relative permittivity (εr) and dipole moment (μ) fall short [18]. Within the broader context of Linear Solvation Energy Relationships (LSER) and Quantitative Structure-Property Relationship (QSPR) approaches, the need for a predictive, accessible, and theoretically sound polarity framework is acute. QSPR models, which establish mathematical relationships between a compound's molecular descriptors and its macroscopic properties, are powerful tools in material science and drug discovery [19] [20] [3]. However, their predictive power is contingent on the availability of accurate and easily obtainable input parameters. This paper examines the development of a novel compartmentalized polarity scale, the PN scale, which addresses these challenges by dividing polarity into distinct surface and body contributions, leveraging easily measurable physicochemical properties [18] [21].

The PN Scale: A Compartmentalized Approach to Polarity

Theoretical Foundation

The PN scale represents a paradigm shift in polarity assessment by rejecting the notion of a single, monolithic polarity value. Instead, it proposes that the overall polarity of a liquid (PN) is a composite of two independent contributions:

- The Polarity of the Surface (s): Governed by molar surface entropy, this compartment reflects the anisotropic environment and specific interactions at the liquid-gas interface.

- The Polarity of the Body (P₂): Represented by a polarity coefficient derived from bulk properties, this compartment describes the isotropic environment within the liquid's volume [18].

This compartmentalization is crucial because molecular interactions at an interface can differ significantly from those in the bulk phase. The scale is founded on the ability to predict surface tension via an improved Lorentz-Lorenz equation, bridging fundamental electromagnetic theory with practical physicochemical measurements [18] [21].

Required Physicochemical Parameters

A key advantage of the PN scale is its reliance on standard, easily measurable properties. The following parameters are required for its calculation, demonstrated here for novel ether-functionalized Amino Acid Ionic Liquids (AAILs) [18]:

- Density (ρ): Relates to packing efficiency and intermolecular interactions.

- Surface Tension (γ): A critical property of the liquid-gas interface.

- Refractive Index (nD): Provides information on electronic polarization.

The experimental values for these parameters, determined using the standard addition method, are summarized in Table 1.

Table 1: Experimental Physicochemical Parameters for Anhydrous Ether-Functionalized AAILs at 298.15 K [18]

| Ionic Liquid | ρ (g·cm⁻³) | γ (mJ·m⁻²) | nD |

|---|---|---|---|

| [C₁OC₂mim][Ala] | 1.15423 | 50.9 | 1.5080 |

| [C₂OC₂mim][Ala] | 1.13190 | 48.9 | 1.4914 |

These parameters form the foundational dataset from which other thermodynamic and molecular properties, such as molecular volume (Vm), thermal expansion coefficient (α), and ultimately the PN scale components, are derived.
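As a rough illustration of how refractive index and density combine into a derived quantity, the sketch below evaluates the classical Lorentz-Lorenz molar refraction, R_m = (n² − 1)/(n² + 2) · M/ρ, for the two ionic liquids in Table 1. This is the textbook relation, not the improved Lorentz-Lorenz equation used for the PN scale in [18]. The molar masses are not given in this article; they are computed here from the ion formulas (C₇H₁₃N₂O⁺ or C₈H₁₅N₂O⁺ with C₃H₆NO₂⁻) and should be treated as assumptions.

```python
def molar_refraction(n_d, molar_mass, density):
    """Classical Lorentz-Lorenz molar refraction in cm^3/mol.

    n_d        : refractive index (dimensionless)
    molar_mass : g/mol
    density    : g/cm^3
    """
    n_squared = n_d ** 2
    return (n_squared - 1.0) / (n_squared + 2.0) * molar_mass / density


# Density and n_D from Table 1 (298.15 K); molar masses are assumptions
# calculated from the ion formulas, not values reported in the source.
IONIC_LIQUIDS = {
    "[C1OC2mim][Ala]": {"M": 229.28, "rho": 1.15423, "nD": 1.5080},
    "[C2OC2mim][Ala]": {"M": 243.31, "rho": 1.13190, "nD": 1.4914},
}

refractions = {
    name: molar_refraction(p["nD"], p["M"], p["rho"])
    for name, p in IONIC_LIQUIDS.items()
}
for name, r_m in refractions.items():
    print(f"{name}: R_m = {r_m:.2f} cm^3/mol")
```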

Experimental Protocols for PN Scale Determination

Synthesis and Characterization of Model Compounds

The development and validation of the PN scale were demonstrated using novel, environmentally friendly ether-functionalized AAILs [18].

- Synthesis: Two ILs, 1-(2-methoxyethyl)-3-methylimidazolium alanine ([C₁OC₂mim][Ala]) and 1-(2-ethoxyethyl)-3-methylimidazolium alanine ([C₂OC₂mim][Ala]), were synthesized via a neutralization method. The ether functionalization was chosen to reduce viscosity without sacrificing thermal stability, while the amino acid anions lower toxicity [18].

- Structural Confirmation: The structures of the synthesized ILs were confirmed using Nuclear Magnetic Resonance (NMR) spectroscopy, ensuring the integrity of the target molecules before property measurement [18].

Measurement of Density, Surface Tension, and Refractive Index

A detailed methodology was employed to obtain high-quality data for the PN scale calculation [18].

- Procedure:

  - Prepare samples with varying, known water contents.

  - Measure density, surface tension, and refractive index for each sample across a temperature range (e.g., 288.15 to 328.15 K at 5 K intervals).

  - Plot each parameter against water content. The data typically forms straight lines with correlation coefficients (r²) > 0.99.

  - Extrapolate the y-axis intercept of these plots to obtain the experimental value for the anhydrous ionic liquid (Table 1).

- Data Treatment: The use of the standard addition method and extrapolation to zero water content minimizes the confounding effects of trace water, a common impurity in ILs, ensuring accuracy for the anhydrous system.
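The extrapolation step amounts to a straight-line fit whose intercept is the estimate for the anhydrous liquid. The sketch below shows that calculation with ordinary least squares. The water contents and densities are invented for illustration and are not measurements from [18]; the function name is likewise hypothetical.

```python
def extrapolate_to_anhydrous(water_content, measured):
    """Fit measured = intercept + slope * water_content by least squares.

    Returns (intercept, slope, r_squared); the intercept is the
    extrapolated property value at zero water content.
    """
    n = len(water_content)
    mean_x = sum(water_content) / n
    mean_y = sum(measured) / n
    sxx = sum((x - mean_x) ** 2 for x in water_content)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(water_content, measured))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(water_content, measured))
    ss_tot = sum((y - mean_y) ** 2 for y in measured)
    return intercept, slope, 1.0 - ss_res / ss_tot


# Hypothetical data: water mass fraction vs. density (g/cm^3) at one temperature
water_fraction = [0.002, 0.004, 0.006, 0.008, 0.010]
density = [1.1530, 1.1521, 1.1510, 1.1499, 1.1490]

rho_anhydrous, slope, r2 = extrapolate_to_anhydrous(water_fraction, density)
print(f"anhydrous density = {rho_anhydrous:.5f} g/cm^3 (r2 = {r2:.4f})")
```

The same function would be applied separately to surface tension and refractive index, and repeated at each temperature.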

Calculation of Molecular Descriptors and PN Value

The experimentally determined parameters are used to calculate a series of intermediate molecular descriptors, which feed into the final PN value.

- Molecular Volume (Vm): Calculated using the formula Vm = M / (N·ρ), where M is molar mass, N is Avogadro's constant, and ρ is density. At 298.15 K, Vm was 0.3300 nm³ for [C₁OC₂mim][Ala] and 0.3571 nm³ for [C₂OC₂mim][Ala] [18].

- Strength of Intermolecular Interactions: Analyzed through derived properties such as standard entropy, lattice energy, and association enthalpy.

- Compartmentalized Polarity: The surface polarity (s) is determined using molar surface entropy. The body polarity (P₂) is calculated as a polarity coefficient via the improved Lorentz-Lorenz equation.

- Overall Polarity (PN): The final PN value is a composite of the s and P₂ compartments.
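The molecular volume step is the only one in this list whose formula is stated explicitly, so it can be checked directly. The sketch below applies Vm = M/(N·ρ) to the Table 1 densities. The molar masses are assumptions computed from the ion formulas (they are not quoted in this article), which is why the results agree with the reported 0.3300 and 0.3571 nm³ only to about three decimal places.

```python
AVOGADRO = 6.02214076e23  # mol^-1
NM3_PER_CM3 = 1e21        # 1 cm^3 = 1e21 nm^3


def molecular_volume_nm3(molar_mass, density):
    """V_m = M / (N * rho), with M in g/mol and rho in g/cm^3; result in nm^3."""
    volume_cm3 = molar_mass / (AVOGADRO * density)
    return volume_cm3 * NM3_PER_CM3


# Densities from Table 1 (298.15 K); molar masses are assumed values
# calculated from the ion formulas, not taken from the source.
vm_c1 = molecular_volume_nm3(molar_mass=229.28, density=1.15423)
vm_c2 = molecular_volume_nm3(molar_mass=243.31, density=1.13190)

print(f"[C1OC2mim][Ala]: Vm = {vm_c1:.4f} nm^3")
print(f"[C2OC2mim][Ala]: Vm = {vm_c2:.4f} nm^3")
```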

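In summary, the pathway runs from synthesis and structural confirmation, through measurement of ρ, γ, and nD with extrapolation to zero water content, to the calculation of Vm, the surface polarity (s), the body polarity (P₂), and finally the overall PN value.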

The Researcher's Toolkit: Essential Reagents and Materials

Table 2 outlines key reagents, instruments, and computational tools used in the development and application of the PN scale and related QSPR analyses.

Table 2: Research Reagent Solutions for Polarity and QSPR Studies

| Item Name | Function / Relevance |

|---|---|

| Ether-Functionalized AAILs (e.g., [C₁OC₂mim][Ala]) | Model compounds for demonstrating the PN scale; combine low toxicity, reduced viscosity, and high thermal stability [18]. |

| NMR Spectrometer | Essential for confirming the chemical structure of synthesized ionic liquids or novel compounds prior to analysis [18]. |

| Density Meter / Digital Densimeter | Precisely measures density (ρ), a fundamental input parameter for the PN scale and molecular volume calculations [18]. |

| Surface Tensiometer | Directly measures surface tension (γ), which is critical for determining the surface polarity compartment of the PN scale [18]. |

| Refractometer | Measures refractive index (nD), a key property used in the Lorentz-Lorenz equation for calculating the body polarity compartment [18]. |

| Topological Indices (e.g., Temperature Indices) | Mathematical descriptors of molecular structure used in QSPR models to predict physicochemical properties like boiling point and polar surface area [3]. |

| Support Vector Regression (SVR) | A machine learning algorithm used to build robust QSPR models for predicting properties such as triplet yield in singlet fission materials [19] [3]. |

Integration with QSPR and Comparison to LSER Approaches

The PN scale offers a compelling alternative and complement to traditional LSER and QSPR methodologies.

- LSER Approach: Linear Solvation Energy Relationships model solvent effects based on multiple parameters (e.g., π*, α, β) representing different interaction types. While highly successful, these often require extensive experimental data from solvatochromic probes for parameterization.

- QSPR Paradigm: QSPR models correlate structural descriptors with properties. The PN scale aligns perfectly with this paradigm by providing a new, experimentally accessible macroscopic descriptor (PN) that can be directly correlated with biological activity or chemical reactivity without requiring complex spectroscopic measurements.

- Synergy with Topological Indices: QSPR studies increasingly rely on topological indices (TIs) as molecular descriptors. For example, recent research has used Temperature Indices and SVR to predict properties like boiling point, molar refractivity, and polar surface area for cancer drugs [3]. The PN value can serve as a complementary descriptor that encapsulates specific solvent-solute interaction information not fully captured by pure structural indices.

Conceptually, the PN scale sits between the two frameworks: like LSER parameters it is obtained from experiment, and like a QSPR descriptor it can be fed directly into property prediction models.

The PN scale marks a significant advancement in the quantification of solvent polarity. Its compartmentalized nature provides a more nuanced and physically realistic model of liquid environments by distinguishing between surface and bulk interactions. From a practical standpoint, its reliance on straightforward physicochemical measurements makes it a highly accessible and versatile tool for researchers in fields ranging from materials science to pharmaceutical development. When integrated into the broader framework of QSPR modeling, either as a standalone descriptor or in conjunction with topological indices, the PN scale enhances our ability to predict and optimize the properties of new chemical entities rationally. This new framework not only facilitates a deeper understanding of intermolecular interactions but also accelerates the design of task-specific materials and drugs with tailored properties.

Role of Polarity Prediction in Bioactive Compound Discovery and Optimization

Polarity stands as a fundamental molecular property that profoundly influences the behavior of bioactive compounds in biological systems. It governs critical processes including solubility, membrane permeability, target binding, and metabolic stability—factors that collectively determine a compound's ultimate success or failure as a therapeutic agent [22]. In modern drug discovery, predicting and optimizing polarity has evolved from empirical observation to a sophisticated computational science, enabling researchers to navigate the complex trade-offs between potency and pharmacokinetics [23].

The strategic importance of polarity prediction extends throughout the drug discovery pipeline. During lead generation, computational tools rapidly screen vast chemical spaces to identify compounds with optimal polarity characteristics. In lead optimization, researchers deliberately fine-tune molecular structures to achieve the precise polarity balance required for effective drug-like behavior [22]. This whitepaper examines the computational frameworks, particularly Quantitative Structure-Property Relationship (QSPR) and Linear Solvation Energy Relationship (LSER) approaches, that enable accurate polarity prediction and its application in bioactive compound development. We present a technical analysis of these methodologies within the context of a broader thesis comparing LSER against other polarity scales and QSPR approaches, providing researchers with actionable protocols and frameworks for implementation.

Computational Frameworks for Polarity Prediction

QSPR Methodology and Implementation

Quantitative Structure-Property Relationship (QSPR) modeling represents a powerful computational approach that establishes mathematical relationships between molecular descriptors and physicochemical properties, including polarity-dependent properties [24]. QSPR operates on the fundamental principle that the structure of a molecule encodes information about its properties, enabling the prediction of properties for novel compounds without the need for resource-intensive experimental measurements [25].

The standard QSPR workflow encompasses several well-defined stages, described below:

Data Curation and Preparation: The initial phase involves assembling high-quality experimental data for model training. As emphasized in recent literature, rigorous data curation is essential—addressing issues such as structural standardization, removal of duplicates, and handling of mixed solvents or inorganics [26]. For polarity-relevant properties, common experimental endpoints include partition coefficients (LogP), solubility, and chromatographic retention parameters [24].

Molecular Descriptor Calculation: Following data curation, molecular descriptors are computed to numerically represent structural features. Modern QSPR implementations leverage extensive descriptor libraries, with the Mordred library providing 1,825 molecular descriptors that capture electronic, topological, and geometric properties relevant to polarity [24]. These include octanol-water partition coefficients (LogP), dipole moments, polar surface areas, and hydrogen bonding parameters [23].

Model Training and Validation: Machine learning algorithms establish mathematical relationships between descriptors and target properties. Current open-source QSPR platforms support multiple algorithms including extreme gradient boosting (XGBoost), random forests, support vector machines, and neural networks [24]. Model validation follows OECD guidelines, employing internal cross-validation and external test sets to ensure predictive reliability [27] [26].

LSER Fundamentals and Theoretical Basis

Linear Solvation Energy Relationship (LSER) modeling provides a complementary approach to polarity prediction with a stronger theoretical foundation in solvation thermodynamics. The LSER framework characterizes solute-solvent interactions through a set of empirically-derived parameters that capture specific intermolecular interactions [28].

The fundamental LSER equation for predicting partition coefficients (log K) is:

log K = c + eE + sS + aA + bB + vV

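Here the uppercase terms are solute descriptors, and the lowercase coefficients (c, e, s, a, b, v) are system constants fitted by regression for a given solvent system or partitioning process: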

- E represents excess molar refractivity

- S represents dipolarity/polarizability

- A represents hydrogen-bond acidity

- B represents hydrogen-bond basicity

- V represents McGowan characteristic volume
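Evaluating the LSER equation is a simple weighted sum once the system coefficients and solute descriptors are known. The sketch below applies it to benzene in the octanol-water system. The coefficients and descriptors are widely quoted literature values recalled here for illustration; they do not come from this article and should be verified against a primary source before reuse. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    E: float  # excess molar refractivity
    S: float  # dipolarity/polarizability
    A: float  # hydrogen-bond acidity
    B: float  # hydrogen-bond basicity
    V: float  # McGowan characteristic volume


@dataclass(frozen=True)
class SystemCoefficients:
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float


def lser_log_k(system, solute):
    """log K = c + eE + sS + aA + bB + vV"""
    return (system.c
            + system.e * solute.E
            + system.s * solute.S
            + system.a * solute.A
            + system.b * solute.B
            + system.v * solute.V)


# Assumed illustrative values (commonly cited, not taken from this article):
# octanol-water system coefficients and benzene solute descriptors.
OCTANOL_WATER = SystemCoefficients(c=0.088, e=0.562, s=-1.054,
                                   a=0.034, b=-3.460, v=3.814)
BENZENE = SoluteDescriptors(E=0.610, S=0.52, A=0.00, B=0.14, V=0.7164)

log_p_benzene = lser_log_k(OCTANOL_WATER, BENZENE)
print(f"Predicted log P (benzene) = {log_p_benzene:.2f}")
```

The signs of the coefficients carry the chemical interpretation: with these values, a large negative b means hydrogen-bond basicity pushes a solute toward water, while a large positive v means molecular size pushes it toward octanol.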

This approach has demonstrated significant utility in predicting polarity-dependent properties, with one study reporting squared correlation coefficients (r²) above 0.87 for predicting Ostwald solubility coefficients of trans-stilbene across 44 organic solvents [28]. The strength of LSER lies in its direct parameterization of specific intermolecular forces that govern polarity and solvation, providing chemically intuitive insights that complement purely statistical QSPR models.

Comparative Analysis: LSER versus Descriptor-Based QSPR

The following table summarizes the key distinctions between LSER and descriptor-based QSPR approaches for polarity prediction:

Table 1: Comparison of LSER and QSPR Approaches for Polarity Prediction

| Feature | LSER Approach | Descriptor-Based QSPR |
|---|---|---|
| Theoretical Basis | Solvation thermodynamics; specific interaction parameters | Statistical correlation; diverse mathematical descriptors |
| Descriptor Interpretation | Direct chemical meaning (H-bonding, polarity, volume) | Varies from chemically intuitive to mathematical abstractions |
| Experimental Requirements | Requires experimental parameterization for solvation systems | Can utilize existing databases; less experimental input needed |
| Transferability | Limited to systems with parameterized interactions | Highly transferable across diverse chemical spaces |
| Implementation Complexity | Moderate; parameters well-established for common systems | Low to high depending on descriptor selection and model complexity |
| Chemical Insight Generation | High; directly identifies specific intermolecular interactions | Variable; requires descriptor interpretation and analysis |
| Applicability Domain | Defined by parameterized interactions and compounds | Defined by chemical space of training set and descriptor ranges |

This comparative analysis reveals complementary strengths: LSER provides superior mechanistic interpretation of polarity phenomena, while QSPR offers greater flexibility and predictive scope across diverse chemical spaces [28]. The choice between approaches depends on the specific research context—LSER excels in understanding specific solvation interactions, while QSPR offers broader screening capabilities for novel compounds.

Experimental Protocols and Implementation

QSPR-Based Polarity Prediction Protocol

Objective: Predict octanol-water partition coefficients (LogP) for a series of novel bioactive compounds using QSPR methodology.

Materials and Computational Tools:

Table 2: Research Reagent Solutions for QSPR Implementation

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular descriptor calculation | Open-source |
| Mordred | Descriptor Package | Calculation of 1,825 molecular descriptors | Open-source |
| QSPRmodeler | Modeling Framework | Machine learning model development | Open-source |
| ChEMBL | Database | Bioactivity and property data | Public |
| PubChem | Database | Chemical structures and properties | Public |

Step-by-Step Procedure:

Data Collection and Curation:

- Retrieve experimental LogP values for 200-500 diverse compounds from reliable sources such as PubChem or ChEMBL [29].

- Apply rigorous curation: remove duplicates, standardize structures, and address measurement outliers.

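- Split data into training (80%) and test (20%) sets using a rational division method, that is, one that selects compounds by their position in descriptor space (for example, Kennard-Stone) rather than by purely random assignment.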
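The protocol does not name a specific division method, so the following is only a minimal sketch of one commonly used rational division scheme, the Kennard-Stone algorithm, written with the standard library. The function name `kennard_stone_split` and the toy descriptor values are assumptions for illustration; in practice the points would be scaled descriptor vectors.

```python
import math


def kennard_stone_split(points, train_fraction=0.8):
    """Split row indices into (train, test) with the Kennard-Stone algorithm.

    points: list of equal-length numeric tuples, one per compound.
    """
    n = len(points)
    if n < 3:
        raise ValueError("need at least three compounds to split")
    # Roughly train_fraction of the data, leaving at least one test compound.
    n_train = min(n - 1, max(2, round(train_fraction * n)))

    dist = [[math.dist(p, q) for q in points] for p in points]

    # Seed the training set with the two most distant compounds.
    first, second = max(
        ((i, j) for i in range(n) for j in range(i + 1, n)),
        key=lambda pair: dist[pair[0]][pair[1]],
    )
    train = [first, second]
    remaining = set(range(n)) - {first, second}
    # Distance from each unselected compound to its nearest selected compound.
    nearest = {k: min(dist[k][first], dist[k][second]) for k in remaining}

    while len(train) < n_train:
        # Add the compound that is farthest from everything selected so far.
        chosen = max(sorted(remaining), key=lambda k: nearest[k])
        train.append(chosen)
        remaining.remove(chosen)
        for k in remaining:
            nearest[k] = min(nearest[k], dist[k][chosen])

    return train, sorted(remaining)


# Toy two-descriptor data (made-up values, already on comparable scales).
toy_points = [(0.1, 0.2), (0.9, 0.8), (0.5, 0.5), (0.2, 0.9), (0.8, 0.1),
              (0.4, 0.6), (0.6, 0.4), (0.3, 0.3), (0.7, 0.7), (0.5, 0.1)]
train_idx, test_idx = kennard_stone_split(toy_points)
print("train:", train_idx)
print("test:", test_idx)
```

Because the training set is built outward from the extremes of descriptor space, the held-out compounds tend to fall inside the region the model has seen, which is the motivation for preferring this kind of split over random assignment.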

Descriptor Calculation:

- Generate molecular structures from SMILES strings using RDKit.

- Calculate molecular descriptors using Mordred, including topological indices, electronic parameters, and constitutional descriptors [24].

- Apply feature selection to reduce dimensionality: remove constant descriptors and highly correlated pairs (r > 0.95).
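The feature-selection step above can be sketched in plain Python as follows. This is an illustrative filter rather than the routine used by any particular package: the function names and the toy descriptor table are assumptions, and the sketch compares the absolute value of Pearson's r against the threshold so that strongly anti-correlated pairs are also treated as redundant.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length, non-constant sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def filter_descriptors(table, r_max=0.95):
    """Return the names of descriptors kept after two filters.

    table: dict mapping descriptor name -> list of values (one per compound).
    1. Constant descriptors are dropped.
    2. A descriptor is dropped if |r| with an already-kept descriptor exceeds r_max,
       so the first member of each correlated pair (in table order) survives.
    """
    varying = {name: col for name, col in table.items() if max(col) > min(col)}
    kept = []
    for name, col in varying.items():
        if all(abs(pearson_r(col, varying[other])) <= r_max for other in kept):
            kept.append(name)
    return kept


# Made-up descriptor values for four compounds.
toy_table = {
    "logp": [1.0, 2.0, 3.0, 4.0],
    "const": [5.0, 5.0, 5.0, 5.0],       # constant: removed
    "logp_x2": [2.0, 4.0, 6.0, 8.1],     # nearly collinear with logp: removed
    "tpsa": [40.0, 10.0, 30.0, 20.0],    # weakly correlated with logp: kept
}
print(filter_descriptors(toy_table))
```

Which member of a correlated pair is kept depends on column order here; a real workflow might instead keep the descriptor that correlates better with the target property.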

Model Training:

- Implement multiple machine learning algorithms (random forest, XGBoost, neural networks) using QSPRmodeler.

- Optimize hyperparameters through cross-validation grid search.

- Train models using training set compounds and descriptors.

Validation and Application:

- Evaluate model performance on test set using metrics including R², RMSE, and MAE.

- Apply validated model to predict LogP for novel compounds of interest.

- Define applicability domain to identify extrapolations beyond model scope.
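The test-set metrics named in the validation step can be computed as in the sketch below. The function name and the example values are illustrative assumptions. R² is computed here as the coefficient of determination (1 minus the ratio of residual to total sum of squares), which on an external test set can differ from the squared Pearson correlation and can even be negative for a poor model.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return R^2 (coefficient of determination), RMSE, and MAE for a test set."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mean_true = sum(y_true) / n
    ss_res = sum(r * r for r in residuals)                 # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)     # total sum of squares
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
    }


# Made-up experimental and predicted LogP values for four test compounds.
experimental = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.0, 2.5, 4.0]
for name, value in regression_metrics(experimental, predicted).items():
    print(f"{name}: {value:.3f}")
```

Reporting RMSE and MAE alongside R² gives the error in LogP units, which is usually easier to relate to experimental uncertainty than a correlation measure alone.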

This protocol has demonstrated robust performance in pharmaceutical applications, with validated QSPR models achieving squared correlation coefficients (r²) of 0.58-0.90 for various biological activity endpoints [23] [24].

LSER Implementation for Solubility Prediction

Objective: Predict gas-solvent partition coefficients for trans-stilbene derivatives across organic solvents using LSER methodology.

Materials:

- Experimental solubility data for model development

- LSER parameters for solvents of interest

- Computational environment for multilinear regression analysis

Step-by-Step Procedure:

Data Compilation:

- Collect experimental Ostwald solubility coefficients for trans-stilbene in 44 organic solvents from literature sources [28].

- Compile LSER parameters (E, S, A, B, V) for each solvent from established databases.

Model Development:

- Perform multilinear regression to determine equation coefficients: log K = c + eE + sS + aA + bB + vV

- Validate model significance through F-testing and coefficient p-values.

- Assess multicollinearity among LSER parameters using variance inflation factors.
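The regression step can be sketched without external libraries by solving the normal equations directly. This is a minimal illustration under stated assumptions: the function names are hypothetical, the descriptor rows are synthetic rather than real LSER parameters, and a production analysis would normally use a statistics package that also reports the F-statistic and coefficient p-values mentioned above.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("singular system; descriptors may be collinear")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def fit_lser(descriptor_rows, log_k):
    """Least-squares fit of log K = c + eE + sS + aA + bB + vV.

    descriptor_rows: list of (E, S, A, B, V) tuples, one per data point.
    Returns the coefficients [c, e, s, a, b, v].
    """
    X = [[1.0] + list(row) for row in descriptor_rows]  # leading 1.0 = intercept
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * y for r, y in zip(X, log_k)) for i in range(p)]
    return solve_linear_system(xtx, xty)


# Synthetic rows generated from known coefficients, used only to check the fit.
true_coef = [0.2, 0.5, -1.0, 0.3, -2.0, 3.0]
rows = [(0, 0, 0, 0, 0), (1, 0, 0, 0, 0), (0, 1, 0, 0, 0), (0, 0, 1, 0, 0),
        (0, 0, 0, 1, 0), (0, 0, 0, 0, 1), (1, 1, 1, 1, 1), (2, 1, 0, 1, 2)]
targets = [true_coef[0] + sum(c * d for c, d in zip(true_coef[1:], row))
           for row in rows]
fitted = fit_lser(rows, targets)
print([round(value, 6) for value in fitted])
```

The same fitting routine could be reused for the multicollinearity check: regressing each descriptor on the remaining ones gives an R² for that descriptor, and its variance inflation factor is 1 / (1 - R²).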

Interpretation and Application:

- Analyze coefficient magnitudes to identify dominant solubility interactions.

- Apply validated model to predict solubility in novel solvents.

- Compare predictions with experimental values to refine model accuracy.

This approach has demonstrated strong predictive performance, with reported r² values of 0.84-0.90 for training sets and 0.86-0.87 for test sets in trans-stilbene solubility prediction [28].

Applications in Bioactive Compound Optimization

Lead Generation and Virtual Screening

Polarity prediction serves as a critical filter in virtual screening campaigns, enabling efficient prioritization of compounds with optimal physicochemical profiles. In practice, QSPR models rapidly evaluate massive chemical libraries (10⁵-10⁷ compounds), identifying candidates with desirable polarity characteristics for further evaluation [26]. This approach significantly enriches hit rates compared to random screening, with reported success rates of 1-40% for QSPR-based virtual screening versus 0.01-0.1% for conventional high-throughput screening [26].

A notable application involves the discovery of non-nucleoside inhibitors of HIV reverse transcriptase (NNRTIs), where polarity-informed screening identified initial lead compounds with activities at low-µM concentrations that were subsequently optimized to low-nM inhibitors [23]. In these campaigns, computed LogP values served as key descriptors in scoring functions, highlighting the critical role of polarity optimization in lead identification.

Lead Optimization and Property Balancing

During lead optimization, polarity prediction guides structural modifications to achieve the delicate balance between permeability and solubility—a fundamental challenge in drug development [22]. Researchers systematically modify molecular structures through strategies including:

- Bioisosteric replacement to fine-tune hydrogen bonding capacity

- Side chain optimization to modulate lipophilicity

- Scaffold hopping to explore diverse polarity profiles

The integration of polarity prediction with other molecular properties creates a comprehensive optimization framework in which each structural modification is re-evaluated against the predicted property profile.

This iterative process continues until compounds achieve the optimal polarity profile for the intended therapeutic application, successfully balancing the often-conflicting demands of solubility and membrane permeability [22].

Validation and Best Practices

Model Validation Strategies

Robust validation represents a critical component of reliable polarity prediction. The following approaches ensure model reliability and applicability:

- External Validation: The most critical validation method, using compounds not included in model training. A study of 44 QSAR models demonstrated that R² alone is insufficient for model validation, highlighting the need for multiple validation metrics [27].

- Applicability Domain Definition: Characterizing the chemical space where models provide reliable predictions, typically based on descriptor ranges and molecular similarity to training compounds.

- Progressive Validation: Implementing a tiered strategy from internal cross-validation to external testing and ultimate experimental confirmation.
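As one simple illustration of an applicability domain based on descriptor ranges, the sketch below flags query compounds whose descriptors fall outside the minimum and maximum values seen in training. The function names and numbers are assumptions for illustration; range checks are only one option, and similarity-based or leverage-based definitions are stricter alternatives.

```python
def descriptor_ranges(training_rows):
    """Return a (min, max) pair for each descriptor column of the training set."""
    return [(min(column), max(column)) for column in zip(*training_rows)]


def in_domain(query_row, ranges):
    """True if every descriptor of the query lies within the training range."""
    return all(low <= value <= high
               for value, (low, high) in zip(query_row, ranges))


# Made-up training descriptors: (LogP, polar surface area) per compound.
training = [(1.0, 40.0), (3.5, 90.0), (2.2, 60.0)]
ranges = descriptor_ranges(training)

for query in [(2.0, 50.0), (5.0, 50.0)]:
    status = "inside" if in_domain(query, ranges) else "outside"
    print(query, "is", status, "the descriptor-range domain")
```

A prediction for a compound flagged as outside the domain is an extrapolation and would typically be reported with a warning or held back for experimental confirmation.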

Data Quality and Curation

High-quality input data forms the foundation of predictive polarity models. Best practices include:

- Structural Standardization: Normalizing representation of tautomers, stereochemistry, and protonation states [26].

- Experimental Accuracy: Prioritizing data from standardized protocols with documented experimental conditions.

- Outlier Detection: Identifying and investigating compounds with large prediction errors to refine models and identify limitations.

Polarity prediction stands as an indispensable component of modern bioactive compound discovery and optimization, with QSPR and LSER approaches providing complementary frameworks for property assessment. QSPR methodologies offer broad applicability across diverse chemical spaces, while LSER provides superior mechanistic insight into specific solute-solvent interactions. The integration of these approaches, supported by robust validation and implementation protocols, enables researchers to efficiently navigate the complex optimization landscape and accelerate the development of novel therapeutic agents with optimal physicochemical profiles.

As the field advances, the convergence of these computational approaches with experimental validation creates a powerful paradigm for polarity-informed drug design—systematically addressing one of the most fundamental challenges in pharmaceutical development. Through the strategic application of these methodologies, researchers can continue to enhance the efficiency and success rates of the drug discovery process.

Practical Implementation of Advanced Polarity and QSPR Methods

Structure-Based Pharmacophore Modeling for Targets with Limited Ligand Data

Structure-based pharmacophore modeling is a pivotal methodology in computer-aided drug design, particularly for targets with scarce ligand information. This section details the theoretical foundation, methodological framework, and practical implementation of target-focused pharmacophore modeling approaches that extract essential interaction features directly from macromolecular structures. By leveraging energy grid calculations and density-based clustering algorithms, researchers can identify key pharmacophoric features (hydrogen bond donors and acceptors, hydrophobic regions, and ionic interactions) without depending on known ligands. The section also places these approaches within the broader landscape of Linear Solvation Energy Relationships (LSER) and other Quantitative Structure-Property Relationship (QSPR) methodologies, highlighting comparative advantages for underexplored therapeutic targets. Integrating these computational strategies enables rational drug design against novel biological targets where traditional ligand-based methods fail.

The Challenge of Limited Ligand Data

Conventional pharmacophore modeling approaches predominantly fall into two categories: ligand-based methods that require multiple known active compounds, and structure-based methods derived from existing ligand-target complexes [30]. Both methodologies depend on the availability of ligand information, creating a significant research gap for emerging therapeutic targets, underexplored protein classes, and novel allosteric sites where such data is scarce or nonexistent [30]. This limitation is particularly problematic in early-stage drug discovery against newly identified disease targets.

Theoretical Framework: LSER and QSPR Context

Target-focused pharmacophore modeling exists within the broader ecosystem of molecular descriptor systems and polarity scales, including the well-established Linear Solvation-Energy Relationships (LSER) or Abraham solvation parameter model [14]. LSER correlates free-energy-related properties of solutes with six molecular descriptors: McGowan's characteristic volume (Vx), gas-liquid partition coefficient (L), excess molar refraction (E), dipolarity/polarizability (S), hydrogen bond acidity (A), and hydrogen bond basicity (B) [14]. While LSER provides a robust framework for predicting solvation properties, its transfer to direct pharmacophore feature identification for drug binding sites presents challenges. Target-focused pharmacophore modeling complements these QSPR approaches by deriving interaction potentials directly from the 3D structure of the biological target itself, creating a bridge between empirical polarity scales and structure-based drug design [30] [14].

Methodological Foundations

Core Principles of Target-Focused Pharmacophore Modeling