Beyond Basic Predictions: Mastering Strong Specific Interactions in LSER Models for Advanced Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on handling strong, specific interactions within Linear Solvation Energy Relationship (LSER) models. It covers the foundational theory of these challenging interactions, details advanced methodological approaches for their integration, and offers practical troubleshooting strategies for model optimization. Furthermore, it explores rigorous validation techniques and comparative analyses with other predictive models, positioning robust LSERs as a critical tool for improving the accuracy of property prediction in rational drug design.

Deconstructing the Challenge: A Primer on Strong Specific Interactions in LSERs

FAQs on Strong Specific Interactions

1. What are "strong specific interactions" and why are they important in LSER models? Strong specific interactions are highly directional, attractive forces between molecules that significantly influence solubility, partitioning, and chemical reactivity. In LSER research, they are crucial because they account for the "hydrogen-bonding" term in the model, directly impacting the accuracy of predicting partition coefficients and solvation energies for pharmaceuticals and other chemicals [1]. Accurately characterizing these interactions allows for better prediction of a molecule's behavior in biological and environmental systems.

2. How does hydrogen bonding differ from a standard dipole-dipole interaction? While both are electrostatic, hydrogen bonding is a stronger, more specific interaction that occurs when a hydrogen atom is covalently bonded to a highly electronegative atom (N, O, or F) and attracts a lone pair on another electronegative atom [2]. A standard dipole-dipole interaction is a more general attraction between the partial positive end of one polar molecule and the partial negative end of another, without the requirement of a hydrogen atom bonded to N, O, or F [3].

3. What experimental techniques can confirm the presence of hydrogen bonding in a cocrystal? Vibrational spectroscopic techniques like FT-IR and FT-Raman are primary tools. Hydrogen bond formation typically shows up as a redshift (shift to lower wavenumber) and broadening of the stretching band of the donor group (e.g., O-H), which reflects a weakened, lengthened donor bond [4]. Complementary methods include:

- Powder X-ray Diffraction (PXRD): To characterize the crystal structure and identify the crystalline phase of the material, such as a cocrystal.

- Differential Scanning Calorimetry (DSC): To identify the thermal events (e.g., melting point) unique to the cocrystal, confirming its formation [4].

4. Our LSER predictions for a new API are inaccurate. Could unaccounted halogen bonding be the cause? Yes. Traditional LSER descriptors (A and B) primarily account for hydrogen-bonding acidity and basicity. If your active pharmaceutical ingredient (API) contains halogens (e.g., Cl, Br, I) in a molecular context that allows them to act as electrophilic sites (so-called "sigma-holes"), they can engage in significant halogen bonding [1]. This specific interaction is not explicitly captured by the standard Abraham LSER parameters and could lead to deviations between predicted and experimental partition coefficients. For accurate modeling, you may need to explore advanced quantum chemical descriptors that can quantify this behavior [1].

5. Why is the preparation of a caffeine-citric acid (CAF-CA) cocrystal a classic example for studying interactions? Caffeine has multiple oxygen and nitrogen atoms that act as excellent hydrogen bond acceptors but is a poor hydrogen bond donor. Citric acid, with its carboxylic acid and hydroxyl groups, is a strong hydrogen bond donor. Together, they form a stable cocrystal through specific intermolecular hydrogen bonds, such as O–H···N and O–H···O, making it an ideal model system to study the "best-donor-best-acceptor" rule in supramolecular chemistry [4].

Troubleshooting Guides

Problem: Inconsistent or Low Yield in Pharmaceutical Cocrystal Formation

Potential Cause and Solution:

- Cause: Incorrect stoichiometry or insufficient activation energy for the molecular components to interact and form the new crystalline phase.

- Solution:

- Validate Reactant Ratios: Use analytical techniques to confirm the purity and stoichiometry of your starting materials (CAF and CA). Even slight deviations can lead to different polymorphs or incomplete reaction.

- Optimize Slurry Crystallization Protocol:

- Ensure the solvent (e.g., ethanol) is pure and anhydrous.

- Extend the stirring time (e.g., to 48 hours or more) to allow the system to reach thermodynamic equilibrium.

- Control the temperature precisely, as it can dictate which polymorph is formed.

- Characterize the Product: Use PXRD and DSC to confirm the successful formation of the desired CAF-CA cocrystal (Form II) and rule out the presence of unreacted starting materials or other polymorphs [4].

Problem: Poor Correlation Between Experimental and Predicted Solvation Free Energies in LSER Models

Potential Cause and Solution:

- Cause: The standard LSER descriptors (A and B) may not fully capture the specific hydrogen-bonding strength of your compounds, especially for complex, multi-sited molecules.

- Solution:

- Recalibrate with QC-LSER Descriptors: For more robust predictions, derive new quantum chemical-linear solvation energy relationship (QC-LSER) descriptors. These are based on the molecular surface charge distribution (σ-profiles) obtained from DFT calculations.

- Calculate New Descriptors: Determine the proton donor capacity \( \alpha_G \) and proton acceptor capacity \( \beta_G \) for your molecules. For a solute (1) and solvent (2), the hydrogen-bonding contribution to the free energy can be predicted as \( c(\alpha_{G1}\beta_{G2} + \beta_{G1}\alpha_{G2}) \), where \( c \) is a universal constant [1].

- Verify with Model Systems: Test the new predictive scheme against known experimental data or Abraham's LSER model estimations to validate its accuracy for your specific set of compounds [1].
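As a rough illustration of the QC-LSER expression in this troubleshooting entry, the sketch below evaluates c(αG1·βG2 + βG1·αG2) for one solute-solvent pair. The function name, the descriptor values, and the value passed for c are placeholders invented for the example, not values from [1]. Take the actual constant and its sign convention from the source you calibrate against.

```python
def hb_free_energy_term(alpha_g1, beta_g1, alpha_g2, beta_g2, c):
    """Return c * (alpha_G1 * beta_G2 + beta_G1 * alpha_G2).

    Index 1 is the solute and index 2 is the solvent. The constant c is
    passed in explicitly because its value is not given in this article.
    """
    return c * (alpha_g1 * beta_g2 + beta_g1 * alpha_g2)


# Hypothetical descriptor values, chosen only to show the arithmetic.
solute = {"alpha_g": 0.5, "beta_g": 1.0}
solvent = {"alpha_g": 2.0, "beta_g": 1.5}
c_placeholder = 1.0  # assumption: stand-in for the universal constant

term = hb_free_energy_term(
    solute["alpha_g"], solute["beta_g"],
    solvent["alpha_g"], solvent["beta_g"],
    c_placeholder,
)
print(f"Hydrogen-bonding term (in units of c): {term:.2f}")
```

The expression is symmetric in the two species, so swapping the solute and solvent roles gives the same value. The cross terms make explicit that a donor only contributes when the partner molecule can accept, and vice versa.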

Quantitative Data on Interaction Strengths

Table 1: Representative Energy Ranges of Strong Specific Interactions

| Interaction Type | Typical Energy Range (kJ/mol) | Key Characteristics |

|---|---|---|

| Hydrogen Bonding | ~9 to >30 [2] | Directional; requires H bonded to N, O, or F. |

| Ion-Dipole | ~50 to >200 (highly variable) [2] | Stronger than dipole-dipole; key in solvation. |

| Dipole-Dipole | 5-20 (weaker than H-bond) [2] | Occurs between any polar molecules. |

Table 2: Experimental Hydrogen Bond Interactions in CAF-CA Cocrystal

The following table summarizes key intermolecular hydrogen bonds identified in the caffeine-citric acid (CAF-CA) cocrystal, showcasing specific atom-level interactions and their strengths [4].

| Bonding Type | Specific Interaction | Reported Interaction Energy (kcal/mol) |

|---|---|---|

| Intermolecular | O26–H27···N24–C22 | Not Specified |

| Intermolecular | O39–H40···O52=C51 | Not Specified |

| Intermolecular | O43–H44···O86=C83 | Not Specified |

| Intermolecular (Strongest) | O88–H89···O41 | -12.4247 |

Detailed Experimental Protocol: Cocrystal Formation via Slurry Crystallization

This protocol is adapted from the synthesis of the caffeine-citric acid (CAF-CA) cocrystal, a model system for studying hydrogen bonding [4].

Objective: To prepare and characterize a 1:1 cocrystal of caffeine (CAF) and citric acid (CA) to study strong, specific hydrogen-bonding interactions.

Materials and Equipment:

- Reactants: Caffeine (CAF), Citric Acid (CA).

- Solvent: Anhydrous Ethanol.

- Glassware: 10 ml glass vial with cap, vacuum filtration setup.

- Equipment: Magnetic stirrer and stir bar, analytical balance, oven or hotplate for drying.

Procedure:

- Weighing: Charge a 10 ml glass vial with 1.9 g of CAF and 2.0 g of CA.

- Slurry Formation: Add 3 ml of anhydrous ethanol to the vial to form a slurry.

- Reaction: Cap the vial and allow the reaction to stir at room temperature for 48 hours.

- Isolation: After 48 hours, isolate the solid product by vacuum filtration.

- Drying: Air-dry the filtered solids at room temperature until the solvent odor is no longer detectable [4].
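The charged masses can be checked against the intended 1:1 stoichiometry with a few lines of arithmetic. This is a minimal sketch that assumes anhydrous citric acid (C6H8O7). If the monohydrate is used instead, the molar mass and therefore the ratio change.

```python
# Molar masses in g/mol. Citric acid is ASSUMED to be the anhydrous form;
# the protocol text does not state the hydration state.
M_CAFFEINE = 194.19     # C8H10N4O2
M_CITRIC_ACID = 192.12  # C6H8O7 (anhydrous)


def moles(mass_g, molar_mass_g_per_mol):
    """Convert a mass in grams to an amount in moles."""
    return mass_g / molar_mass_g_per_mol


n_caf = moles(1.9, M_CAFFEINE)    # 1.9 g caffeine, as charged in the procedure
n_ca = moles(2.0, M_CITRIC_ACID)  # 2.0 g citric acid, as charged in the procedure
ratio = n_ca / n_caf

print(f"CAF: {n_caf * 1000:.2f} mmol")
print(f"CA:  {n_ca * 1000:.2f} mmol")
print(f"CA/CAF molar ratio: {ratio:.3f}")
```

Under the anhydrous assumption the charge is close to equimolar, with a small molar excess of citric acid.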

Characterization and Analysis:

- Powder X-Ray Diffraction (PXRD):

- Purpose: To confirm the formation of a new, distinct crystalline phase (the cocrystal) and rule out the presence of physical mixtures of the starting components.

- Method: Collect the PXRD pattern of the product and compare it to the simulated patterns of pure CAF and CA. The appearance of new, characteristic peaks confirms cocrystal formation [4].

- Differential Scanning Calorimetry (DSC):

- Purpose: To identify the unique thermal fingerprint (e.g., melting point) of the cocrystal, which will be different from that of the individual components.

- Method: Load 2-4 mg of the sample into a DSC pan. Heat the sample from -25°C to 200°C at a rate of 10°C per minute under a nitrogen purge. The resulting thermogram will show a distinct melting endotherm for the CAF-CA cocrystal [4].

- Vibrational Spectroscopy (FT-IR and FT-Raman):

- Purpose: To provide direct evidence of hydrogen bond formation through shifts in vibrational frequencies.

- Method: Record FT-IR and FT-Raman spectra of the cocrystal and the pure components. Key evidence for hydrogen bonding includes a redshift and broadening of the O-H stretching vibration of citric acid, indicating a weakening and lengthening of the bond due to the interaction [4].

Visualization of Concepts and Workflows

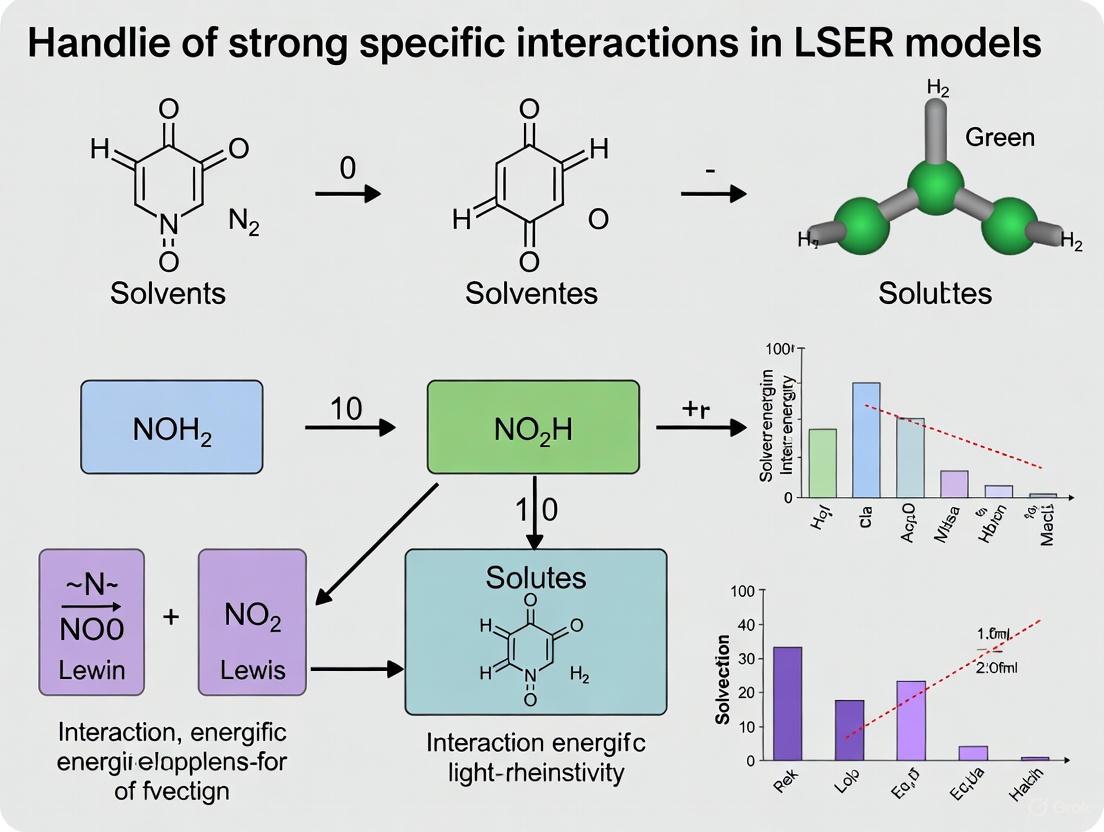

This diagram outlines the key experimental and analytical steps for forming and characterizing a cocrystal, such as CAF-CA, to study hydrogen bonding.

This diagram shows how hydrogen bonding is incorporated as a specific component within a broader LSER model, governed by acidity and basicity descriptors.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials for Studying Strong Interactions

| Item Name | Function / Relevance | Example from Context |

|---|---|---|

| Caffeine (CAF) | Model Active Pharmaceutical Ingredient (API) with multiple H-bond acceptor sites but poor donor ability. | Used in CAF-CA cocrystal to study heteromeric synthon formation [4]. |

| Citric Acid (CA) | Coformer with strong hydrogen bond donor groups (carboxylic acid and hydroxyl). | Forms strong O–H···O and O–H···N bonds with caffeine [4]. |

| Anhydrous Ethanol | Solvent for slurry crystallization. | Facilitates molecular mobility and interaction between CAF and CA without reacting [4]. |

| Quantum Chemical Software | For calculating molecular descriptors (e.g., σ-profiles, αG, βG) and interaction energies. | Used to derive QC-LSER descriptors for more robust prediction of HB free energies [1]. |

Technical Support Center: Troubleshooting LSER Models

Frequently Asked Questions (FAQs)

Q1: My LSER model shows poor predictive accuracy for compounds involved in hydrogen bonding. What could be wrong? The standard LSER model treats solute descriptors as purely additive, which can fail for molecules with strong, specific interactions like hydrogen bonding. This additivity assumption does not fully capture the cooperative or competitive nature of these forces, especially in complex systems like drug molecules [5]. The error often lies in the hydrogen-bonding descriptors (A and B), which may not adequately represent the actual free energy change for these interactions in your specific system [6].

Q2: How can I troubleshoot systematic errors in my calculated partition coefficients (log P)? Systematic errors often originate from the limitations of the experimental data used to train the LSER model. To troubleshoot:

- Benchmark Your Model: Compare your predictions against a high-quality, chemically diverse validation set. A significant drop in accuracy (e.g., increase in RMSE) for certain compound classes indicates descriptor limitations [7].

- Check Descriptor Provenance: Determine if solute descriptors (E, S, A, B, V) were obtained experimentally or predicted computationally. Predictions from QSPR tools, while convenient, introduce additional error and are a common source of inaccuracy [7].

- Analyze Residuals: Plot the residuals (difference between predicted and experimental values) against each solute descriptor. A non-random pattern, particularly against the A (acidity) or B (basicity) descriptors, points to a failure in modeling hydrogen-bonding interactions [5].
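The residual check can be sketched in a few lines. The example below computes the Pearson correlation between the residuals and each descriptor. All data values are invented for illustration, and the helper names are hypothetical. A linear correlation only catches linear trends, so it complements a visual inspection of the residual plots rather than replacing it.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def residual_trends(experimental, predicted, descriptors):
    """Correlate residuals (predicted - experimental) with each descriptor."""
    residuals = [p - e for p, e in zip(predicted, experimental)]
    return {name: pearson_r(values, residuals) for name, values in descriptors.items()}


# Hypothetical log P data for five solutes; two of them are H-bond donors.
experimental = [1.2, 2.5, 0.8, 3.1, 1.9]
predicted = [1.3, 2.4, 1.4, 3.0, 2.6]
descriptors = {
    "A": [0.0, 0.0, 0.6, 0.0, 0.7],  # hydrogen-bond acidity
    "V": [0.9, 1.1, 0.8, 0.7, 1.0],  # McGowan volume
}

trends = residual_trends(experimental, predicted, descriptors)
for name, r in trends.items():
    print(f"residuals vs {name}: r = {r:+.2f}")
```

In this invented data set the residuals track A closely while showing almost no trend against V, which is the pattern Q2 describes as pointing to a hydrogen-bonding problem.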

Q3: What experimental factors can lead to unreliable LSER solute descriptors? Unreliable descriptors often stem from experimental artifacts, particularly in gas chromatography (GC) measurements used to determine them [8].

- Adsorption Effects: For polar solutes, adsorption on the solid support or at the gas-liquid interface in a GC column can skew retention data, leading to incorrect log L16 values [8].

- Stationary Phase Issues: The use of inappropriate or impure stationary phases, or those with poor thermal stability, can corrupt the fundamental partition coefficient data from which descriptors are derived [8].

- Temperature Extrapolation: Measuring retention times at high temperatures and extrapolating to standard conditions (e.g., 298 K) is error-prone. The quality of the result is highly dependent on the extrapolation method used [8].

Q4: Are there alternative models that better handle strong specific interactions? Yes, the Partial Solvation Parameter (PSP) approach is designed to address some LSER limitations. PSPs are grounded in equation-of-state thermodynamics, providing a more coherent framework for mixtures and interfaces [6] [5]. A key advantage is the direct calculation of the Gibbs free energy change (ΔGHB) for hydrogen bond formation, offering a more thermodynamically sound treatment of these specific interactions [5]. The parameters can also be converted back to classical LSER descriptors, facilitating comparison [5].

Troubleshooting Guide: Common LSER Experimental Issues

Issue: Inconsistent Solute Descriptors for Hydrogen-Bonding Compounds

- Symptoms: Large variations in reported A (acidity) and B (basicity) values for the same compound across different databases or publications; poor model performance for drugs and complex organics.

- Underlying Cause: The self-association of solute molecules is often neglected during the experimental determination of LSER descriptors. For complex molecules with multiple functional groups, this can lead to significant errors [5].

- Solution:

- Utilize inverse gas chromatography (IGC) to measure descriptors under controlled conditions specific to your research context [5].

- Apply corrections for self-association in the data analysis phase if possible [5].

- Consider adopting the PSP framework, which is designed to extract more thermodynamically meaningful information from LSER-type data [6].

Issue: Poor Model Transferability Between Systems

- Symptoms: An LSER model calibrated for one polymer-solvent system (e.g., LDPE-water) performs poorly when applied to another (e.g., PDMS-water), even for similar compounds.

- Underlying Cause: The chemical diversity of the training set is a major factor in a model's applicability domain. A model trained on a narrow range of interactions will not generalize well [7].

- Solution:

- Ensure your training set encompasses a wide range of interaction types (polar, non-polar, acidic, basic).

- When building a model for a new system, benchmark it against established LSER system parameters for similar phases to identify potential biases in its interaction capabilities (e.g., a polymer's inherent basicity) [7].

- Recalibrate the model constants for your specific two-phase system instead of relying on generic parameters [7].

Issue: Low Predictive Accuracy for Non-Volatile Compounds

- Symptoms: Inability to generate descriptors or predictions for heavy, less volatile compounds.

- Underlying Cause: Direct experimental determination of the key descriptor log L16 is impossible for compounds less volatile than the n-hexadecane standard, and difficult even for slightly more volatile compounds [8].

- Solution:

- Use specialized stationary phases like apolane (a branched C87 alkane) that allow for measurements at higher temperatures [8].

- Employ relative methods and extrapolation techniques, though these introduce uncertainty [8].

- Use predictive methods for log L16 as a last resort, with a clear understanding of the potential error introduced [8].

Experimental Protocols for Key LSER Methodologies

Protocol 1: Determining LSER Solute Descriptors via Gas Chromatography

This protocol outlines the steps for determining the log L16 descriptor, a foundation for other parameters [8].

- Column Preparation: Use a gas chromatography column coated with a non-polar stationary phase, ideally n-hexadecane or apolane. A high stationary phase loading (up to 20%) is recommended to minimize adsorption effects [8].

- Dead Time Determination: Accurately measure the column's dead time (tm) using a non-retained compound.

- Retention Time Measurement: For the solute of interest, measure its retention time (tR) isocratically at a controlled temperature, ideally 298.2 K. For non-volatile compounds, measure at higher temperatures and extrapolate using a validated procedure [8].

- Partition Coefficient Calculation: Calculate the gas-liquid partition coefficient. For capillary columns, use a relative method with a reference compound (e.g., n-hexane) and the relationship log L16 = log (tR,X - tm) + C, where C is a constant derived from the reference compound's known log L16 [8].

- Data Validation: Ensure chromatographic peaks are symmetric. Asymmetric peaks for polar solutes indicate adsorption, invalidating the results [8].
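The relative method in Protocol 1 reduces to two small calculations: derive C from the reference compound, then apply it to the solute. The sketch below assumes both compounds are run on the same column under identical conditions. The retention times are invented, and the reference log L16 of 2.668 for n-hexane is an illustrative value that should be checked against your descriptor database before use.

```python
from math import log10


def adjusted_retention(t_r, t_m):
    """Adjusted retention time tR - tm; must be positive for a retained compound."""
    adjusted = t_r - t_m
    if adjusted <= 0:
        raise ValueError("Retention time must exceed the column dead time.")
    return adjusted


def reference_constant(log_l16_ref, t_r_ref, t_m):
    """C = log L16(reference) - log(tR,ref - tm)."""
    return log_l16_ref - log10(adjusted_retention(t_r_ref, t_m))


def log_l16_relative(t_r_solute, t_m, c):
    """log L16 = log(tR,X - tm) + C for the solute of interest."""
    return log10(adjusted_retention(t_r_solute, t_m)) + c


# Hypothetical retention data in minutes, same column and temperature.
t_m = 1.00          # dead time from a non-retained compound
t_r_ref = 2.00      # reference compound (n-hexane)
t_r_solute = 11.00  # solute of interest
LOG_L16_REF = 2.668  # illustrative reference value; verify before use

c = reference_constant(LOG_L16_REF, t_r_ref, t_m)
log_l16_solute = log_l16_relative(t_r_solute, t_m, c)
print(f"C = {c:.3f}, log L16 (solute) = {log_l16_solute:.3f}")
```

With these numbers the solute's adjusted retention time is ten times that of the reference, so its log L16 comes out exactly one log unit higher. The explicit check on the adjusted retention time guards against a mis-measured dead time, which would otherwise produce a math error or a meaningless value.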

Protocol 2: Validating an LSER Model with an Independent Set

This protocol is crucial for assessing the real-world predictive power of a developed LSER model [7].

- Data Set Splitting: Before model development, randomly assign approximately 70-80% of the full experimental dataset to a training set. The remaining 20-30% is held back as an independent validation set [7].

- Model Training: Develop the LSER model (e.g., log K = c + eE + sS + aA + bB + vV) using only the data from the training set.

- Blind Prediction: Use the finalized model to predict the partition coefficients for all compounds in the independent validation set.

- Performance Calculation: Calculate standard regression statistics (R² and RMSE) by comparing the model's predictions against the actual experimental data for the validation set [7].

- Benchmarking: Compare these statistics with those from the training set. A significant performance drop indicates overfitting and poor model generalizability [7].
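The validation steps above can be sketched end to end with the standard library alone. To keep the example short, the fit is reduced to a one-descriptor stand-in (log K = c + vV) solved in closed form. A real LSER fit would regress on all five descriptors with a multiple linear regression routine. The data are synthetic, and all function names are hypothetical.

```python
import random
from math import sqrt


def split_dataset(records, train_fraction=0.8, seed=0):
    """Randomly split records into training and validation sets."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_train = round(train_fraction * len(shuffled))
    return shuffled[:n_train], shuffled[n_train:]


def fit_one_descriptor(records, key="V"):
    """Ordinary least squares for log K = c + v*V (stand-in for a full LSER fit)."""
    xs = [r[key] for r in records]
    ys = [r["log_k"] for r in records]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    return lambda r: intercept + slope * r[key]


def evaluate(model, records):
    """Return R^2 and RMSE of model predictions against observed log K."""
    obs = [r["log_k"] for r in records]
    pred = [model(r) for r in records]
    ss_res = sum((o - p) ** 2 for o, p in zip(obs, pred))
    mean_obs = sum(obs) / len(obs)
    ss_tot = sum((o - mean_obs) ** 2 for o in obs)
    return {"r2": 1 - ss_res / ss_tot, "rmse": sqrt(ss_res / len(obs))}


# Synthetic data: 20 solutes with a known trend plus Gaussian noise.
rng = random.Random(1)
records = []
for _ in range(20):
    v = rng.uniform(0.5, 1.5)
    records.append({"V": v, "log_k": -0.5 + 3.9 * v + rng.gauss(0.0, 0.1)})

train, validation = split_dataset(records)      # split before any fitting
model = fit_one_descriptor(train)               # training data only
train_stats = evaluate(model, train)
validation_stats = evaluate(model, validation)  # blind prediction
print("training:  ", train_stats)
print("validation:", validation_stats)
```

Fixing the random seed makes the split reproducible, which matters when the validation statistics are later compared across model variants. With only a handful of validation compounds R² becomes unstable, so RMSE is usually the more informative of the two numbers for small hold-out sets.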

Research Reagent Solutions

The following table details key materials and computational tools used in LSER-related research.

| Item/Reagent | Function in LSER Research | Key Considerations |

|---|---|---|

| n-Hexadecane Stationary Phase [8] | The standard non-polar phase for determining the foundational log L16 solute descriptor. | High loading ratios reduce adsorption effects. Difficult to use for non-volatile solutes [8]. |

| Apolane (C87H176) Stationary Phase [8] | A branched alkane stationary phase for GC that allows determination of log L16 at higher temperatures. | Enables work with less volatile compounds, but film stability at high temperatures can be an issue [8]. |

| Inverse Gas Chromatography (IGC) [5] | An experimental technique to measure thermodynamic properties and determine LSER descriptors for novel materials (e.g., drugs, polymers). | Provides data for the specific material under study. Only a few probe gases are needed for reasonable PSP/LSER estimates [5]. |

| Abraham LSER Descriptor Database [7] [5] | A curated database of experimentally derived solute descriptors (E, S, A, B, V, L). | Essential for model development. Users must verify descriptor provenance (experimental vs. predicted) [7]. |

| Partial Solvation Parameters (PSP) [6] [5] | A thermodynamically-grounded framework that can be derived from LSER descriptors to better model hydrogen bonding. | Offers a unified approach for bulk and interface thermodynamics. Allows calculation of free energy change for hydrogen bonds [5]. |

Visualizing LSER Limitations and Solutions

The following diagrams illustrate the core limitations of standard LSER models and a potential troubleshooting workflow.

LSER Additivity Limitation

LSER Troubleshooting Path

FAQs: Addressing Core Experimental Challenges

FAQ 1: What is the fundamental difference between kinetic and thermodynamic solubility assays, and when should each be used?

Kinetic solubility is the maximum solubility of the fastest precipitating species of a compound, typically measured by first dissolving a compound in an organic solvent like DMSO and then diluting it in an aqueous buffer. It is performed in high throughput with shorter incubation times and analyzed using plate readers, such as nephelometric or direct UV assays. It is most useful for rapid compound assessment, guiding structure optimization, and diagnosing bioassay issues early in drug discovery. In contrast, thermodynamic solubility is the saturation solubility at equilibrium with excess solid material and is considered the "true" solubility of a compound. It is measured by incubating excess solid compound with buffer for extended periods (often days) before filtration and quantitation (e.g., via HPLC). This method is critical for preformulation work and understanding a compound's fundamental properties [9] [10].

FAQ 2: How do computational models for predicting membrane permeability, such as Molecular Dynamics (MD), compare to in vitro assays like PAMPA?

Computational models, particularly umbrella sampling Molecular Dynamics (MD), provide an atomistic description of the passive permeability process, offering detailed insights into the underlying molecular mechanism. These models can be validated and fine-tuned using data from in vitro parallel artificial membrane permeability assays (PAMPA). When calibrated this way, MD models have shown substantially improved agreement with PAMPA data compared to alternative computational methods. While PAMPA provides an efficient, experimental measure of permeability, MD simulations offer a powerful, complementary strategy that can elucidate the specific molecular features governing a compound's ability to cross lipid bilayers [11] [12].

FAQ 3: My Quantitative Structure-Property Relationship (QSPR) model for logP is overfitting, especially with a small dataset. What strategies can improve its robustness?

Overfitting is a common challenge in QSPR modeling, particularly with small datasets and a high number of molecular descriptors. A powerful strategy is to use transformed descriptor frameworks like Arithmetic Residuals in K-groups Analysis (ARKA) descriptors. ARKA descriptors condense a preselected set of molecular descriptors into a more compact and informative form (typically two descriptors: ARKA1, linked to lipophilicity, and ARKA2, linked to hydrophilicity). This dimensionality reduction helps retain essential chemical information while mitigating overfitting and improving model generalizability for new, unseen compounds [13].

FAQ 4: Why might a compound with a high predicted logP value show unexpectedly low membrane permeability in a cellular assay?

A high logP value indicates high lipophilicity, which is generally favorable for passive membrane diffusion. However, several factors can lead to discrepancies:

- Molecular Size and Specific Chemical Groups: Permeability is influenced by more than just lipophilicity. Size, charge, and the presence of specific chemical groups can significantly hinder diffusion [12].

- Efflux Transport: The compound might be a substrate for active efflux transporters (e.g., P-glycoprotein), which pump the drug out of cells, reducing its apparent permeability [11].

- Metabolism: The compound could be rapidly metabolized within the cell before it can be measured [11].

- Incorrect logP Prediction: The computational model used to predict logP may be inaccurate for that specific chemical scaffold, giving a false sense of its true lipophilicity [14].

Troubleshooting Guides for Common Experimental Issues

Troubleshooting Guide: Inconsistent Solubility Measurements

| Symptom | Possible Cause | Solution |

|---|---|---|

| Low measured kinetic solubility, but good thermodynamic solubility. | Precipitate formation from a meta-stable, high-energy species in the kinetic assay. | Confirm the solid form used in the thermodynamic assay is the most stable crystal polymorph [10]. |

| High variability in nephelometric solubility readings. | Inconsistent detection of undissolved particulate matter due to operator or equipment variance. | Switch to a direct UV assay, where dissolved material is quantitated after filtration to remove particles, providing a more direct measurement [9]. |

| Solubility results are inconsistent with bioassay performance. | Compound precipitation in the bioassay buffer, masking its true activity. | Use kinetic solubility data to guide the design of bioassay vehicle formulations, ensuring the test compound remains dissolved throughout the experiment [9]. |

Troubleshooting Guide: Poor Correlation Between Predicted and Experimental logP

| Symptom | Possible Cause | Solution |

|---|---|---|

| Large errors for specific compound classes (e.g., with dimerization). | Standard descriptors or models fail to capture key intramolecular interactions or specific molecular features. | Utilize simpler, optimized molecular descriptors like the optimized 3D-MoRSE (opt3DM), which incorporate 3D structural information and have been shown to achieve high accuracy (RMSE ~0.31) [14]. |

| QSPR model performs poorly on new, unseen compounds. | Model overfitting or the new compounds are outside the model's "applicability domain" (structural space it was trained on). | Implement the ARKA descriptor framework to reduce dimensionality and enhance model robustness. Always analyze the applicability domain of your QSPR model before using it for prediction [13]. |

| Discrepancy between different computational methods (e.g., QC vs. ML). | Underlying limitations of the method; e.g., COSMO-RS can overestimate hydrophilicity for molecules with dimerization effects. | For complex molecules, consider consensus modeling or leverage machine learning methods that have been shown to outperform some quantum chemical (QC) and molecular dynamics (MD) approaches in blind challenges [14]. |

Performance Comparison of logP Prediction Methods

This table summarizes the root mean square error (RMSE) of various logP prediction methods as reported in competitive SAMPL challenges, providing a benchmark for model selection.

| Prediction Method | SAMPL6 Challenge (RMSE) | SAMPL9 Challenge (RMSE) | Key Characteristics |

|---|---|---|---|

| MD (CGenFF/Nequilibrium) [14] | 0.82 | - | Physics-based, computationally intensive |

| QC (COSMO-RS) [14] | 0.38 | - | Can overestimate hydrophilicity for some dimers |

| Deep Learning (Ulrich et al.) [14] | 0.33 | - | Uses data augmentation with tautomers |

| ML-QSPR (Lui et al.) [14] | 0.49 | - | Outperformed MD methods in its challenge |

| ML with opt3DM [14] | 0.31 | Competitive results | Simple, fast, and highly accurate descriptor |

| D-MPNN (Graph Neural Network) [14] | - | 1.02 | High model complexity, longer training times |

Comparison of Solubility Assay Types in Drug Discovery

This table compares the two main types of solubility assays to guide appropriate experimental design.

| Assay Parameter | Kinetic Solubility | Thermodynamic Solubility |

|---|---|---|

| Definition | Maximum solubility of the fastest precipitating species [10] | Saturation solubility at equilibrium with the most stable solid form [10] |

| Throughput | High [9] | Moderate [9] |

| Incubation Time | Short (minutes to hours) [9] [10] | Long (hours to days) [9] [10] |

| Starting Material | DMSO stock solution [9] | Solid powder [9] |

| Primary Use Case | Rapid compound assessment, bioassay diagnosis, guiding chemistry design [9] | Preformulation, determining "true" solubility for development candidates [9] [15] |

Experimental Protocols for Key Assays

Protocol: High-Throughput Kinetic Solubility via Nephelometry

Principle: A DMSO stock solution of the test compound is diluted into aqueous buffer. Undissolved particles are detected via light scattering (nephelometry) as the solution is serially diluted to determine the solubility limit [9].

Procedure:

- Sample Preparation: Prepare a concentrated stock solution of the test compound in DMSO (e.g., 10 mM).

- Dilution: Using an automated liquid handler, transfer a small aliquot of the DMSO stock into a microtiter plate containing aqueous buffer (e.g., phosphate-buffered saline, pH 7.4). The final DMSO concentration should be kept low (typically ≤1%) to minimize cosolvent effects.

- Incubation: Allow the plate to incubate at room temperature for a short, standardized period (e.g., 60 minutes).

- Measurement: Read the plate using a nephelometer. A sharp increase in light scattering signal indicates the presence of undissolved particles.

- Data Analysis: The solubility is reported as the concentration at the last clear well before a significant increase in nephelometric signal is observed [9] [10].
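The data-analysis step can be expressed as a simple scan over the wells. In the sketch below, a well counts as turbid when its signal exceeds a multiple of the buffer-only baseline. The threshold factor of 3, the function name, and the plate readings are all assumptions made for illustration, since laboratories define "significant increase" in different ways.

```python
def kinetic_solubility(concentrations_um, signals, baseline, threshold_factor=3.0):
    """Return the highest concentration that is still clear.

    Wells are scanned from low to high concentration and the scan stops at the
    first well whose signal exceeds baseline * threshold_factor. Returns None
    if even the lowest concentration is turbid.
    """
    threshold = baseline * threshold_factor
    last_clear = None
    for conc, signal in sorted(zip(concentrations_um, signals)):
        if signal > threshold:
            break
        last_clear = conc
    return last_clear


# Hypothetical plate row: concentrations in micromolar, signals in arbitrary units.
concentrations = [3.1, 6.25, 12.5, 25.0, 50.0, 100.0]
signals = [105, 98, 110, 120, 850, 2400]
buffer_baseline = 100  # mean signal of buffer-only wells (assumed)

solubility = kinetic_solubility(concentrations, signals, buffer_baseline)
print(f"Kinetic solubility: {solubility} uM (last clear well)")
```

Because the result is tied to the dilution series, the reported value is a lower bound: the true precipitation onset lies somewhere between the last clear well and the first turbid one.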

Protocol: Determining Thermodynamic (Equilibrium) Solubility

Principle: Excess solid compound is agitated in buffer for a prolonged period to achieve a saturated solution at equilibrium. The concentration of the dissolved compound is then quantitated after removing the undissolved material [9] [15].

Procedure:

- Solid Dispensing: Weigh an amount of solid test compound (in its most stable crystalline form) that exceeds its anticipated solubility into a vial or microtiter plate well.

- Buffer Addition: Add a known volume of the desired buffer to the solid.

- Equilibration: Agitate the suspension for a sufficient time (e.g., 24-48 hours) at a constant temperature to reach equilibrium.

- Separation: Separate the dissolved compound from the undissolved solid by filtration (e.g., using a 96-well filter plate) or centrifugation.

- Quantitation: Dilute the filtrate/supernatant as needed and quantify the concentration of the dissolved compound using a suitable method, such as HPLC with UV detection [9].

Experimental Workflows and Signaling Pathways

Diagram 1: Integrated Drug Property Screening Workflow

Diagram 2: Addressing Strong Interactions in LSER Models

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function in Experiment |

|---|---|

| DMSO (Dimethyl Sulfoxide) | A common polar aprotic solvent used to prepare high-concentration stock solutions of test compounds for kinetic solubility assays and initial bioactivity screening [9] [10]. |

| PAMPA Plate (Parallel Artificial Membrane Permeability Assay) | A multi-well plate system incorporating an artificial lipid membrane to simulate passive, transcellular drug permeability in a high-throughput manner [11]. |

| Octanol-Water Partitioning System | The standard solvent system for experimentally determining the partition coefficient (logP), a key measure of compound lipophilicity [13]. |

| AlvaDesc Software | A comprehensive tool for calculating a vast array of molecular descriptors from chemical structures, which serve as inputs for building QSPR models [13]. |

| RDKit Library | An open-source cheminformatics toolkit used for programmatically calculating molecular descriptors, handling SMILES strings, and performing other chemoinformatic tasks [14]. |

Troubleshooting Guide: When Your LSER Model Fails

This guide helps researchers diagnose and correct common failures in Linear Solvation Energy Relationship (LSER) models, especially when applied to systems dominated by strong, specific interactions.

1. Problem: Poor Model Predictivity for Hydrogen-Bonding Compounds

- Question: "My LSER model works well for most solutes but fails dramatically for strong acids or bases. Why?"

- Diagnosis: This is a classic sign of model failure caused by specific interactions that are either unaccounted for or improperly parameterized. The standard LSER solute descriptors (A for acidity, B for basicity) or system coefficients (a, b) may be insufficient for the chemical space of your dataset [6]. The linear free-energy relationship can break down when very strong acid-base interactions are present [6].

- Solution: Re-evaluate the applicability domain of your training set. Ensure it includes a sufficient number of compounds with a wide range of A and B values. For systems with very strong interactions, consider that the model's linearity has thermodynamic limits that may be exceeded [6].

2. Problem: Inaccurate Partition Coefficient Predictions for Polymers

- Question: "My LSER model predicts the log K for Low-Density Polyethylene (LDPE)/water poorly. What's wrong?"

- Diagnosis: The model may be using incorrect or non-robust system parameters. Sorption into LDPE is driven mainly by cavity formation and dispersion (vV), with a smaller favorable contribution from π-/n-electron interactions (eE), while the hydrogen-bonding (aA, bB) and dipolarity/polarizability (sS) terms can be significant negative contributors [7].

- Solution: Implement a rigorously validated LSER model. For LDPE/water, one established model is [7]:

log Ki,LDPE/W = −0.529 + 1.098E − 1.557S − 2.991A − 4.617B + 3.886V
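As a minimal sketch, the LDPE/water equation above can be evaluated directly from a solute's descriptors. The function name and the example descriptor values are illustrative assumptions, not data from [7].

```python
# Coefficients of the LDPE/water LSER equation quoted above [7].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557,
              "a": -2.991, "b": -4.617, "v": 3.886}

def predict_log_k_ldpe_water(E, S, A, B, V, coeffs=LDPE_WATER):
    """Return log K(LDPE/water) = c + eE + sS + aA + bB + vV."""
    return (coeffs["c"] + coeffs["e"] * E + coeffs["s"] * S
            + coeffs["a"] * A + coeffs["b"] * B + coeffs["v"] * V)

# Hypothetical descriptor values, chosen only to illustrate the arithmetic.
example = predict_log_k_ldpe_water(E=0.5, S=0.5, A=0.0, B=0.1, V=1.0)
print(round(example, 3))
```

Keeping the coefficients in a dictionary makes it straightforward to swap in the system parameters of another phase without touching the function.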

3. Problem: Failure to Compare Sorption Behavior Across Different Polymers

- Question: "How can I use LSER to understand why my compound sorbs differently to LDPE versus another polymer?"

- Diagnosis: The system coefficients for each polymer are not being compared directly. These coefficients encode the chemical nature of the sorption phase [7] [6].

- Solution: Compare the LSER system parameters for the polymers in question. For example, benchmarking reveals that LDPE is more hydrophobic and has weaker hydrogen bond accepting abilities than polymers like polyacrylate (PA) or polyoxymethylene (POM). Polar polymers like PA and POM exhibit stronger sorption for polar, non-hydrophobic compounds up to a log K range of 3-4 [7]. This quantitative comparison explains differences in sorption behavior based on chemical interactions.

LSER Model Failure: Key Experimental Data

The following tables summarize quantitative data from case studies where specific interactions led to model failure or required specialized models.

Table 1: LSER Model for LDPE/Water Partitioning [7]

| Model Statistic | Value / Equation |

|---|---|

| LSER Equation | log Ki,LDPE/W = −0.529 + 1.098E − 1.557S − 2.991A − 4.617B + 3.886V |

| Training Set (n) | 156 |

| Coefficient of Determination (R²) | 0.991 |

| Root Mean Square Error (RMSE) | 0.264 |

| Validation Set (n) | 52 |

| R² (Validation, exp. descriptors) | 0.985 |

| RMSE (Validation, exp. descriptors) | 0.352 |

| RMSE (Validation, predicted descriptors) | 0.511 |

Table 2: Comparative Sorption Characteristics of Polymers via LSER [7] [16]

| Polymer | Dominant Sorption Mechanisms | Key Characteristics vs. LDPE |

|---|---|---|

| LDPE | Cavity formation/dispersion (vV), π-/n-electron interactions (eE) | Baseline; more hydrophobic, weaker H-bond acceptance [7]. |

| Single-Walled Carbon Nanotubes (SWCNTs) | Cavity formation/dispersion (vV), π-/n-electron interactions (eE) | More polarizable, less polar, more hydrophobic than AC [16]. |

| Activated Carbon (AC) | Cavity formation/dispersion (vV) | Has fewer hydrophobic and fewer hydrophilic sites than CNTs; nonspecific interactions are weaker than for SWCNTs [16]. |

| Polyacrylate (PA) | Hydrogen-bonding (aA, bB), polar interactions (sS) | Stronger sorption for polar, non-hydrophobic compounds [7]. |

Experimental Protocol: Developing a Robust LSER Model

This methodology outlines the key steps for building a reliable LSER model, highlighting where failures often occur.

1. Phase System Selection and Characterization

- Objective: Define the two-phase system (e.g., polymer/water, solvent/air) for which the partition coefficient (log P or log K) will be modeled.

- Procedure: Precisely control the temperature and composition of the phases. For polymer/water systems, ensure the polymer is well-characterized (e.g., crystallinity, amorphous fraction).

2. Curating a Chemically Diverse Training Set

- Objective: Assemble a set of solute compounds that broadly covers the chemical space of interest.

- Procedure: Select solutes with a wide range of experimental LSER molecular descriptors (Vx, E, S, A, B, L). Critical Step: The set must adequately populate the range of each descriptor, especially hydrogen-bonding (A, B) and polarity (S), to avoid model failure for specific interactions [6].

3. Measuring Partition Coefficients and Model Fitting

- Objective: Obtain experimental partition data and derive the system-specific LSER coefficients.

- Procedure:

- Experimentally measure the partition coefficient for each solute in the training set.

- Perform multiple linear regression of the measured log K values against the solute descriptors to obtain the system coefficients (c, e, s, a, b, v).

- Validation: Hold back a portion of the data (~30%) as an independent validation set to test the model's predictive power, as shown in Table 1 [7].
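The fitting and hold-out steps above can be sketched as follows. This is an illustration under stated assumptions: the descriptors and "measured" log K values are synthetic, the generating coefficients are arbitrary placeholders, and NumPy's least-squares solver stands in for whatever regression software is actually used.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic solute descriptors (columns: E, S, A, B, V); placeholders, not measured data.
n_solutes = 60
descriptors = rng.uniform(0.0, 1.5, size=(n_solutes, 5))

# Synthetic "measured" log K values from assumed coefficients (c, e, s, a, b, v) plus noise.
true_coeffs = np.array([-0.5, 1.0, -1.5, -3.0, -4.5, 4.0])
design = np.column_stack([np.ones(n_solutes), descriptors])
log_k = design @ true_coeffs + rng.normal(0.0, 0.05, n_solutes)

# Hold back ~30% of the solutes as an independent validation set.
order = rng.permutation(n_solutes)
n_val = int(round(0.3 * n_solutes))
val_idx, train_idx = order[:n_val], order[n_val:]

# Multiple linear regression on the training set only.
fitted, *_ = np.linalg.lstsq(design[train_idx], log_k[train_idx], rcond=None)

def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

rmse_train = rmse(log_k[train_idx], design[train_idx] @ fitted)
rmse_val = rmse(log_k[val_idx], design[val_idx] @ fitted)
print(dict(zip("cesabv", np.round(fitted, 3))), rmse_train, rmse_val)
```

A validation RMSE much larger than the training RMSE would be a first hint that the training set does not cover the chemical space of the held-out solutes.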

4. Model Application and Domain Checking

- Objective: Safely use the model for prediction.

- Procedure: For a new solute, ensure its molecular descriptors fall within the range of the training set's chemical space. Predictions for compounds outside this applicability domain are unreliable and a common source of failure.
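A simple way to sketch the domain check is a per-descriptor range test. This is a deliberate simplification (a rectangular box around the training data); the helper names and descriptor values below are hypothetical.

```python
def descriptor_ranges(training_set):
    """Return {descriptor: (min, max)} over a list of descriptor dicts."""
    names = training_set[0].keys()
    return {k: (min(s[k] for s in training_set), max(s[k] for s in training_set))
            for k in names}

def out_of_domain(solute, ranges):
    """List the descriptors of `solute` that fall outside the training ranges."""
    return [k for k, (lo, hi) in ranges.items() if not lo <= solute[k] <= hi]

# Hypothetical training-set descriptors (illustrative values only).
training = [
    {"E": 0.2, "S": 0.1, "A": 0.0, "B": 0.1, "V": 0.8},
    {"E": 0.8, "S": 0.9, "A": 0.6, "B": 0.5, "V": 1.2},
    {"E": 1.1, "S": 0.5, "A": 0.3, "B": 0.8, "V": 1.6},
]
ranges = descriptor_ranges(training)

# A new solute whose acidity exceeds anything in the training set.
new_solute = {"E": 0.6, "S": 0.7, "A": 0.9, "B": 0.4, "V": 1.0}
print(out_of_domain(new_solute, ranges))
```

An empty list means every descriptor lies within the training ranges; any returned name flags a prediction that should be treated as an extrapolation.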

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for LSER and Sorption Experiments

| Item | Function in the Experiment |

|---|---|

| Low-Density Polyethylene (LDPE) | A model non-polar, semi-crystalline polymer phase for studying hydrophobic sorption and establishing baseline LSER system parameters [7]. |

| Single-Walled Carbon Nanotubes (SWCNTs) | An adsorbent with high polarizability and strong nonspecific interactions (eE, vV) for comparative studies with polymeric materials [16]. |

| Activated Carbon (AC) | A standard porous adsorbent with a complex surface; used as a benchmark to compare the sorption characteristics of new materials like CNTs [16]. |

| Polydimethylsiloxane (PDMS) | A common polymeric phase in passive sampling devices; its LSER system parameters are used to understand differences in sorption behavior compared to LDPE [7]. |

| Polyacrylate (PA) | A polar polymer used to study and model sorption driven by strong hydrogen-bonding and polar interactions [7]. |

Workflow Diagram: LSER Model Development & Failure Analysis


Frequently Asked Questions (FAQs)

Q1: What is the thermodynamic basis for LSER model linearity, and when does it break down? The linearity of LSER models is rooted in free-energy relationships. The model's success, even for specific interactions like hydrogen bonding, suggests a thermodynamic consistency where the free energy of transfer is a linear function of the molecular descriptors [6]. However, this linearity can break down if the training set includes solute-solvent pairs with extremely strong specific interactions that deviate from the linear free-energy relationship established by the rest of the data [6].

Q2: How can I use LSER to estimate the hydrogen-bonding contribution to solvation free energy?

In the standard LSER equation for partition coefficients (e.g., log P = c + eE + sS + aA + bB + vV), the terms aA and bB together represent the hydrogen-bonding contribution to the free energy of transfer. The term aA estimates the contribution from an acidic (hydrogen-bond donating) solute interacting with a basic solvent, because the coefficient a reflects the hydrogen-bond basicity of the solvent system. Likewise, bB estimates the contribution from a basic solute interacting with an acidic solvent, with b reflecting the system's hydrogen-bond acidity [6].
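To make the decomposition concrete, the sketch below splits a predicted log P into its individual terms and sums the two hydrogen-bonding terms. The system coefficients are the LDPE/water values quoted earlier in this article [7]; the solute descriptors are hypothetical.

```python
def lser_term_contributions(coeffs, descriptors):
    """Split log P = c + eE + sS + aA + bB + vV into its individual terms."""
    terms = {"c": coeffs["c"]}
    for lower, upper in zip("esabv", "ESABV"):
        terms[lower + upper] = coeffs[lower] * descriptors[upper]
    return terms

# System coefficients: LDPE/water equation from this article [7].
coeffs = {"c": -0.529, "e": 1.098, "s": -1.557,
          "a": -2.991, "b": -4.617, "v": 3.886}
# Hypothetical solute descriptors, for illustration only.
solute = {"E": 0.8, "S": 0.9, "A": 0.6, "B": 0.3, "V": 0.8}

terms = lser_term_contributions(coeffs, solute)
h_bond = terms["aA"] + terms["bB"]  # combined hydrogen-bonding contribution
log_p = sum(terms.values())
print(terms, round(h_bond, 3), round(log_p, 3))
```

Comparing `h_bond` with `log_p` shows at a glance how much of the predicted partitioning is attributed to hydrogen bonding for a given solute.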

Q3: My model failed. Is it possible to extract useful thermodynamic information from a failed LSER model?

Yes, a failed model can be highly informative. Systematically analyzing the residuals (the difference between predicted and experimental values) can reveal patterns. For example, if all high-acidity compounds are poorly predicted, it strongly indicates that the model's parameterization for hydrogen-bond acidity (A descriptor or a coefficient) is inadequate for your system. This diagnosis directs you to focus your experimental efforts on better characterizing those specific interactions [6].

Advanced Modeling Techniques: Integrating Specific Interactions into Practical LSER Frameworks

Technical Support Center

Troubleshooting Guides

This section addresses common experimental challenges researchers face when working with and expanding the Linear Solvation Energy Relationship (LSER) parameter set.

Issue 1: Poor Correlation in Multivariate LSER Models

Problem: A model built using the solvation parameter model ( SP = c + eE + sS + aA + bB + vV ) shows poor statistical correlation for a set of test compounds.

- Potential Cause 1: Inadequate Descriptor Set. The traditional five descriptors (excess molar refraction E, dipolarity/polarizability S, acidity A, basicity B, and McGowan volume V) may not fully capture the strong, specific intermolecular interactions present in your analyte set [17].

- Solution: Investigate the introduction of new, more specific descriptors for acidity and basicity. The residuals of your initial model can help identify which types of compounds are poorly predicted, guiding the development of these new descriptors [17].

Experimental Protocol:

- Calculate the residuals (difference between experimental and predicted values) for all compounds in your training set.

- Group compounds with the largest residuals and analyze their common structural features. For instance, you may find a subclass of strong organic acids is consistently poorly modeled.

- Propose a new descriptor, A_spec, designed to quantify the property of this subclass.

- Recalibrate the model using the expanded descriptor set (SP = c + eE + sS + aA + bB + vV + a_spec A_spec) and validate its performance on a new, external test set of compounds.

- Potential Cause 2: Insufficient or Non-Diverse Training Set. The small set of compounds used to characterize the system's polarity does not adequately represent the chemical space of your analytes [17].

- Solution: Ensure your training set is composed of a sufficient number (e.g., >20) of reference compounds with appropriately diverse polarities. These compounds should have known and varied descriptor values to cover a wide range of interaction types [17].
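The first two steps of the residual-driven protocol under Potential Cause 1 can be sketched as below. The class labels and numerical values are hypothetical placeholders; in practice the grouping would come from your own structural analysis.

```python
from collections import defaultdict
from statistics import mean

def mean_residual_by_class(records):
    """Group residuals (experimental - predicted) by a structural class label."""
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec["cls"]].append(rec["exp"] - rec["pred"])
    return {cls: mean(vals) for cls, vals in grouped.items()}

# Hypothetical model results; labels and values are placeholders.
records = [
    {"cls": "alkylbenzene", "exp": 2.10, "pred": 2.05},
    {"cls": "alkylbenzene", "exp": 2.60, "pred": 2.66},
    {"cls": "strong acid", "exp": 0.40, "pred": 1.30},
    {"cls": "strong acid", "exp": 0.10, "pred": 1.10},
]

summary = mean_residual_by_class(records)
# The class with the largest systematic bias is the candidate for a new descriptor.
worst = max(summary, key=lambda cls: abs(summary[cls]))
print(summary, worst)
```

A class whose mean residual is far from zero points to a systematic, rather than random, failure, which is the situation a descriptor such as A_spec is meant to address.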

Issue 2: Inconsistent Retention Time Predictions in Chromatography

Problem: When using a polarity parameter p to predict retention behavior in Reversed-Phase Liquid Chromatography (RPLC) with the model log k = (log k)0 + p(P_mN - P_sN), predictions are inaccurate for acidic compounds.

- Potential Cause: The solute polarity parameter p may not fully account for the hydrogen-bond acidity of the compounds when the mobile phase composition changes [17].

- Solution: Correlate the p values with the full set of solvation descriptors, including the effective hydrogen-bond acidity A. The cited study showed that when octanol-water partition coefficients (log P_o/w) were corrected with a term considering solute acidity, good correlations with p were observed [17].

- Experimental Protocol:

- Determine the p value for your analytes from retention data in a reference chromatographic system.

- For compounds with poor prediction, obtain or calculate their hydrogen-bond acidity descriptor A.

- Develop a corrected model, for example: p_corrected = p + f(A), where f(A) is a function of the acidity descriptor.

- Use the corrected polarity parameter to predict retention in new systems.
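The retention model and the acidity correction from this issue can be written as two small functions. This is only a sketch: the text does not specify the form of f(A), so the default below is a no-op placeholder, and all numerical values are hypothetical.

```python
def predict_log_k(log_k0, p, P_mN, P_sN):
    """Retention model from the text: log k = (log k)0 + p (P_mN - P_sN)."""
    return log_k0 + p * (P_mN - P_sN)

def corrected_p(p, A, f=lambda A: 0.0):
    """p_corrected = p + f(A).

    The functional form of f(A) is not given in the text; the default
    returns 0 and must be replaced by a correlation fitted to your data.
    """
    return p + f(A)

# Hypothetical system and solute parameters, for illustration only.
log_k = predict_log_k(log_k0=-1.0, p=2.0, P_mN=0.8, P_sN=0.2)

# Hypothetical linear correction f(A) = 0.5 * A, used purely as an example.
p_corr = corrected_p(2.0, A=0.6, f=lambda A: 0.5 * A)
print(log_k, p_corr)
```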

Issue 3: Transferring Retention Data Between Chromatographic Systems Fails

Problem: A model developed on one column/solvent system does not accurately predict retention when applied to a new system.

- Cause: The polarity parameters (p, P_mN, P_sN) have residual dependencies on the specific mobile and stationary phases, meaning the solute's p-value is not entirely system-independent [17].

- Solution: Re-characterize the new chromatographic system with a small, diverse training set of compounds. Establish a simple correlation between the experimental p-values in the new system and the reference p-values from your database [17].

- Experimental Protocol:

- Select a training set of 10-15 reference compounds with known and diverse p values from your database.

- Run these compounds on the new chromatographic system to determine their experimental p_new values.

- Plot p_ref vs. p_new and establish a correlation equation (e.g., linear regression).

- Use this equation to convert reference p-values for all other solutes to the new system's values for accurate retention prediction.
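The transfer protocol above amounts to a one-variable linear regression of p_new on p_ref. A minimal sketch, assuming a linear relationship is adequate and using hypothetical p-values for ten reference compounds:

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical p-values for ten reference compounds (database vs. new system).
p_ref = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0]
p_new = [0.62, 1.08, 1.57, 2.03, 2.55, 2.98, 3.49, 4.01, 4.47, 4.96]

slope, intercept = fit_line(p_ref, p_new)

def to_new_system(p_reference):
    """Convert a database p-value to the new chromatographic system."""
    return slope * p_reference + intercept

print(slope, intercept, to_new_system(2.2))
```

Inspecting the scatter around the fitted line is worthwhile before relying on it: compounds that deviate strongly may carry exactly the system-specific interactions this issue describes.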

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of expanding the LSER parameter set with new acidity/basicity descriptors? A1: The traditional five-parameter LSER model is powerful but can struggle with strong, specific intermolecular interactions. Introducing specialized descriptors for particular subclasses of acids or bases allows for a more nuanced and accurate prediction of physicochemical properties, such as chromatographic retention or solubility, for these compounds [17].

Q2: My research involves predicting retention in RPLC. How can the solute polarity parameter p be related to the fundamental LSER model?

A2: The solute polarity parameter p can be analyzed on the basis of the linear solvation relationship. It has been shown to correlate with the five molecular parameters (E, S, A, B, V) through the general solvation parameter model. This means p effectively summarizes the polar interactions of a solute, making it a valuable single parameter for retention modeling that integrates these fundamental properties [17].

Q3: Can I use a compound's octanol-water partition coefficient (log P_o/w) to estimate its polarity parameter p?

A3: Yes, but with an important consideration. Good correlations between p and log P_o/w have been observed when log P_o/w is corrected with a term that accounts for the solute's hydrogen-bond acidity. This highlights the critical influence of acidity on chromatographic retention and the need to consider it explicitly in models [17].

Q4: What is the minimum number of compounds needed to characterize a new chromatographic column for retention prediction?

A4: While the exact number can vary, the methodology requires only a small training set of compounds with appropriately diverse polarities. By measuring their retention in the new system, you can establish a correlation to reference p-values, effectively transferring the existing database of parameters to the new column [17].

Experimental Protocols & Data

Table 1: Key Molecular Descriptors in Solvation Parameter Model

| Descriptor Symbol | Description | Role in LSER Model |

|---|---|---|

| E | Excess molar refraction | Capability to interact with solute π- and n-electron pairs [17] |

| S | Solute dipolarity/polarizability | Measures dipole-dipole and induction interactions [17] |

| A | Effective hydrogen-bond acidity | Measures the solute's ability to donate a hydrogen bond (interacts with a basic phase) [17] |

| B | Effective hydrogen-bond basicity | Measures the solute's ability to accept a hydrogen bond (interacts with an acidic phase) [17] |

| V | McGowan volume | Characterizes the hydrophobicity and dispersion interactions; represents the cavity term [17] |

Table 2: Example Protocol for Determining a New Acidity Descriptor (A_spec)

| Step | Action | Purpose & Notes |

|---|---|---|

| 1 | Identify Model Failure | Group compounds with large prediction residuals from a standard LSER model. |

| 2 | Structural Analysis | Identify common functional group or structural motif among the outliers (e.g., strong organic acids). |

| 3 | Descriptor Proposal | Propose a quantitative measure for the identified property (e.g., A_spec as a function of pKa or calculated atomic charge). |

| 4 | Data Collection | Obtain or calculate the new A_spec descriptor for all compounds in the extended training set. |

| 5 | Model Recalibration | Perform multilinear regression with the expanded descriptor set: SP = c + eE + sS + aA + bB + vV + a_specA_spec. |

| 6 | Validation | Test the new model's predictive power on a separate, external validation set of compounds. |

Workflow Visualization

Diagram 1: New descriptor development workflow.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for LSER Experiments

| Reagent / Material | Function in Research | Context in LSER |

|---|---|---|

| Spherisorb ODS-2 Column | A common stationary phase used in Reversed-Phase Liquid Chromatography (RPLC) for acquiring retention data (log k) [17]. | Used to characterize the system's polarity parameters (P_sN, (log k)0) and test the predictive power of LSER models [17]. |

| Acetonitrile (ACN) | A common organic solvent used in mobile phases for RPLC [17]. | Its volume fraction (φ) is used to calculate the mobile phase polarity parameter (P_mN), a key variable in the retention model [17]. |

| Methanol (MeOH) | An alternative organic solvent for RPLC mobile phases, with different elution properties than acetonitrile [17]. | Allows for the testing of model transferability between different solvent systems and investigation of solvent-specific effects on descriptors [17]. |

| Formic Acid / Buffer | pH modifiers added to the mobile phase to control ionization of analytes [18]. | Critical for studying ionizable compounds, as pH significantly affects the hydrogen-bond acidity (A) and basicity (B) of solutes, thereby impacting retention [18]. |

| Reference Compound Set | A small, diverse set of compounds with well-established LSER descriptor values (e.g., alkylbenzenes, phenols, anilines) [17]. | Serves as a training set to characterize new chromatographic systems and validate the performance of expanded LSER models [17]. |

Frequently Asked Questions (FAQs)

Q: What is interaction energy analysis, and why is it critical for LSER models? A: Interaction energy analysis quantifies the strength and nature of non-covalent forces between molecular systems, such as dispersion or electrostatics [19]. For LSER models, which relate solute-solvent interactions to molecular properties, precise interaction energies are essential. They provide the fundamental data needed to calibrate and validate these models, especially for handling strong, specific interactions that classical force fields might misrepresent [20].

Q: My calculation failed with a "SCF convergence" error. What steps can I take? A: SCF (Self-Consistent Field) convergence failures are common. Follow this troubleshooting guide:

- Step 1: Check Initial Geometry: Ensure your molecular geometry is physically reasonable and does not contain unrealistically close atomic contacts.

- Step 2: Loosen Convergence Criteria: Temporarily use a looser SCF convergence threshold (e.g., 10^-4) to get an initial wavefunction.

- Step 3: Use a Smearing Technique: Applying a small electronic temperature (e.g., 500 K) can help the calculation escape metastable states, particularly for systems with metallic character or small HOMO-LUMO gaps.

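- Step 4: Employ a Different Algorithm: If the default DIIS (Direct Inversion in the Iterative Subspace) procedure fails, switch to a more robust scheme, such as DIIS combined with level shifting or damping, or a second-order (quadratically convergent) SCF solver. Once the calculation converges, a wavefunction stability analysis can confirm that the solution is a true minimum.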

Q: How do I know if my basis set is large enough for accurate LSER parameterization? A: Perform a basis set superposition error (BSSE) analysis using the counterpoise correction method [19]. The table below summarizes key metrics to check. If BSSE constitutes more than a few percent of your total binding energy, consider using a larger basis set.

| Metric | Target Value for Convergence | Action if Target Not Met |

|---|---|---|

| BSSE-Corrected Energy | Change < 1 kJ/mol from larger basis set | Use larger basis set with more diffuse functions |

| Energy Decomposition | Electrostatic & dispersion components stable | Use basis set with higher angular momentum functions |

| Interaction Energy | Variation < 2% across basis sets of increasing size | Increase the basis set size until the variation falls below the target; only then consider the calculation converged |
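The checks in the table can be automated with a few lines of code. In this sketch the 2% and 1 kJ/mol targets come from the table, the "variation" is interpreted as the change between the two largest basis sets, and the 5% BSSE threshold is an assumed reading of "a few percent"; all energies are hypothetical.

```python
def bsse_fraction(bsse, binding_energy):
    """BSSE as a fraction of the magnitude of the binding energy."""
    return abs(bsse) / abs(binding_energy)

def relative_variation(energies):
    """Relative change between the two largest basis sets in the series."""
    prev, last = energies[-2], energies[-1]
    return abs(last - prev) / abs(last)

# Hypothetical interaction energies (kJ/mol) for basis sets of increasing size.
series = [-24.1, -21.3, -20.9]
bsse = 0.6  # hypothetical counterpoise correction for the largest basis (kJ/mol)

converged = (relative_variation(series) < 0.02          # < 2% variation (table)
             and abs(series[-1] - series[-2]) < 1.0)    # < 1 kJ/mol change (table)
bsse_ok = bsse_fraction(bsse, series[-1]) < 0.05        # assumed "few percent" limit
print(converged, bsse_ok)
```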

Q: What are the best practices for decomposing interaction energies to inform LSER parameters? A: Energy decomposition analysis (EDA) breaks down the total interaction energy into physically meaningful components like electrostatics, exchange-repulsion, dispersion, and charge transfer [19]. For LSER research, this is invaluable. Correlate the magnitudes of these components with specific LSER descriptors (e.g., electrostatic energy with the dipolarity/polarizability descriptor S, written π* in Kamlet-Taft notation, and dispersion energy with the gas-hexadecane partition descriptor L, which largely reflects dispersion). Such correlations give your LSER model's predictions a quantum-mechanical basis.

Q: Our system is too large for a full QM treatment. What are our options? A: For large systems relevant to drug development, you can use fragment-based quantum mechanical methods. The Divide-and-Conquer (D&C) approach is particularly effective [20]. It partitions the entire system into smaller, manageable fragments (or subsystems), each with its own local environment buffer. The quantum mechanical equations are solved for each subsystem independently, and the results are assembled to give the total energy and properties of the full system, so that the computational cost scales approximately linearly with system size [20].

Experimental Protocols & Methodologies

Protocol 1: Calculating Interaction Energies with Counterpoise Correction This protocol details the "supermolecular approach" for calculating accurate intermolecular interaction energies, corrected for Basis Set Superposition Error (BSSE) [19].

- Geometry Optimization: Optimize the geometry of the molecular complex (AB) and the isolated monomers (A and B) using a cost-effective method (e.g., DFT with a medium-sized basis set).

- Single-Point Energy Calculation: Perform a single-point energy calculation on the complex and the monomers at the optimized geometry using a high-level theory (e.g., MP2, CCSD(T), or double-hybrid DFT) and a larger basis set.

- E(AB): Energy of the complex in its full basis set.

- E(A): Energy of monomer A in its own basis set.

- E(B): Energy of monomer B in its own basis set.

- Counterpoise Calculation: To correct for BSSE, recalculate each monomer energy in the full basis set of the complex, that is, with the other monomer's atoms replaced by "ghost" atoms that carry basis functions but no nuclei or electrons.

- E(A in AB's basis): Energy of monomer A using the full (AB) basis set.

- E(B in AB's basis): Energy of monomer B using the full (AB) basis set.

- Compute BSSE-Corrected Interaction Energy:

- Uncorrected ΔE = E(AB) - E(A) - E(B)

- BSSE = [E(A) - E(A in AB's basis)] + [E(B) - E(B in AB's basis)]

- Corrected ΔE = Uncorrected ΔE + BSSE
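The arithmetic of Protocol 1 is simple enough to wrap in one function. The sketch below follows the three formulas above exactly; the input energies are hypothetical values in hartree, and the function name is an illustrative choice.

```python
HARTREE_TO_KJ_PER_MOL = 2625.4996  # unit conversion factor

def counterpoise(e_ab, e_a, e_b, e_a_ghost, e_b_ghost):
    """Return (uncorrected dE, BSSE, corrected dE) in the units of the inputs.

    e_a_ghost and e_b_ghost are the monomer energies computed in the
    full basis set of the complex (ghost atoms present).
    """
    uncorrected = e_ab - e_a - e_b
    bsse = (e_a - e_a_ghost) + (e_b - e_b_ghost)
    corrected = uncorrected + bsse
    return uncorrected, bsse, corrected

# Hypothetical total energies in hartree, for illustration only.
uncorrected, bsse, corrected = counterpoise(
    e_ab=-152.1400, e_a=-76.0650, e_b=-76.0650,
    e_a_ghost=-76.0660, e_b_ghost=-76.0658,
)
print(corrected * HARTREE_TO_KJ_PER_MOL)  # corrected interaction energy in kJ/mol
```

Note that the corrected value equals E(AB) minus the two ghost-basis monomer energies, which is a convenient consistency check on the bookkeeping.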

Protocol 2: Energy Decomposition Analysis (EDA) This protocol breaks down the total interaction energy into physically intuitive components, which can be directly mapped to LSER parameters [19].

- Prepare the System: Use the optimized geometry of the complex from Protocol 1.

- Select an EDA Method: Choose a specific EDA scheme (e.g., Morokuma, LMO-EDA, or SAPT).

- Run the EDA Calculation: Execute the calculation, which will typically output energy terms such as:

- Electrostatic: Classical interaction between the unperturbed charge distributions.

- Exchange-Repulsion: Pauli repulsion due to overlapping electron clouds.

- Dispersion: Attractive correlation due to fluctuating dipoles.

- Charge Transfer: Stabilization from electron donation between fragments.

- Polarization: Energy lowering due to distortion of electron densities.

- Correlate with LSER Parameters: Use the decomposed energy terms to interpret and refine LSER descriptors. For instance, a strong correlation between the electrostatic component and the dipolarity/polarizability descriptor S (π* in Kamlet-Taft notation) would provide a quantum-mechanical justification for its value in your model.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational "reagents" and resources essential for performing robust interaction energy calculations.

| Resource / Tool | Function & Explanation |

|---|---|

| Divide-and-Conquer (D&C) Algorithm | A linear-scaling QM method that partitions a large system into smaller fragments, making QM calculations on large biological systems tractable [20]. |

| Supermolecular Approach | The standard method for interaction energy calculation, involving separate energy computations for the complex and isolated monomers [19]. |

| Counterpoise Correction | A crucial procedure to correct for Basis Set Superposition Error (BSSE), which artificially lowers the energy of the complex relative to the isolated monomers and therefore overestimates binding [19]. |

| Energy Decomposition Analysis (EDA) | A set of methods that dissect the total interaction energy into components (electrostatics, dispersion, etc.), providing deep insight into the nature of binding [19]. |

| GPU-Accelerated Computing | The use of graphics processing units to dramatically speed up the evaluation of molecular interactions, reducing computation time from days to hours [19]. |

Data Presentation: Comparison of QM Methods for Interaction Energies

The table below summarizes different quantum mechanical methods, helping you select the most appropriate one for your LSER research based on the trade-off between accuracy and computational cost.

| Method | Typical Accuracy (kJ/mol) | Computational Cost | Best Use Case for LSER | Key Limitations |

|---|---|---|---|---|

| Semiempirical (e.g., PM6) | ±20-50 | Low | Rapid screening of large molecular datasets; initial geometry optimization | Low accuracy; poor for dispersion-dominated interactions [20] |

| Density Functional Theory (DFT) | ±5-20 | Medium | Balanced accuracy/cost for most specific interactions (H-bonding, polarity) | Performance depends heavily on functional; standard functionals poor for dispersion [20] |

| MP2 | ±2-10 | High | Accurate treatment of dispersion forces; reliable for most interaction types | High cost; can overbind dispersive systems; BSSE can be significant |

| CCSD(T) | < 2 | Very High | "Gold standard" for final validation on small model systems | Prohibitively expensive for large systems; not for routine use [20] |

| DFT-D (Dispersion Corrected) | ±3-10 | Medium | General-purpose for LSER work, includes missing dispersion in DFT | Correction is often empirical; not a single universal method |

Troubleshooting Guide: FAQs on Hybrid ML-LSER Implementation

This section addresses common challenges researchers face when developing and applying hybrid Machine Learning-Linear Solvation Energy Relationship models.

FAQ 1: Why does my hybrid model show poor generalization for hydrogen-bonding compounds despite good training accuracy?

- Problem: This often stems from thermodynamic inconsistency in handling strong specific interactions like hydrogen bonding, especially during self-solvation where solute and solvent are identical. The model fails to achieve the expected equality of complementary interaction energies [21].

- Solution:

- Implement a thermodynamically consistent reformulation of the LSER model using quantum chemical (QC) calculations to derive new molecular descriptors [21].

- Use QC-based descriptors from molecular surface charge distributions (e.g., sigma profiles from COSMO-RS) to replace traditionally fitted LSER descriptors S, A, and B, ensuring a sounder physical basis for hydrogen-bonding interactions [21].

FAQ 2: How can I improve the prediction of solvation enthalpy and free energy for solutes with conformational flexibility?

- Problem: Standard LSER descriptors are often determined via global optimization and may not adequately capture conformational changes a solute undergoes upon solvation, leading to errors in predicted ΔH and ΔG [21].

- Solution:

- Leverage QC-LSER methodologies that account for conformational changes by using charge-density distributions from the solute's structure. This provides a more nuanced descriptor set that adapts to the solute's state in different solvents [21].

- Combine the LSER model with equation-of-state thermodynamics, using tools like Partial Solvation Parameters (PSP), to better extract and transfer hydrogen-bonding information (free energy, ΔG, and enthalpy, ΔH) across different conditions [6].

FAQ 3: My model's performance is limited by scarce experimental data for LSER descriptor determination. What are my options?

- Problem: The expansion of traditional LSER models is restricted by the availability of experimental data for multilinear regression to determine solute descriptors and system-specific coefficients [21].

- Solution:

- Adopt a QC-LSER approach where descriptors are obtained solely from the molecular structure via quantum chemical calculations, eliminating the dependency on extensive experimental data for each new compound [21].

- For property prediction (e.g., polymer-water partition coefficients), use QSPR prediction tools to generate the required LSER solute descriptors directly from the chemical structure when experimental descriptors are unavailable [7].

FAQ 4: How do I interpret the contribution of different intermolecular interactions in my hybrid model's predictions?

- Problem: While the LSER model provides a linear equation, the interpretation of the lower-case system coefficients (e.g., a, b) as purely solvent-specific descriptors can be challenging, as they are typically obtained through fitting processes [6].

- Solution:

- Use interpretable machine learning techniques like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations). These methods can be applied to the hybrid model to identify and quantify the influence of individual molecular descriptors (E, S, A, B, V, L) on the final prediction, providing clarity on the role of different interactions [22].
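For the purely linear LSER part of a hybrid model, SHAP-style attributions have a closed form when the descriptors are treated as independent: each contribution is the coefficient times the descriptor's deviation from a background mean. The sketch below illustrates this without the SHAP library; a non-linear ML component would require the library itself. The coefficients are the LDPE/water values from this article [7], while the background means and solute descriptors are hypothetical.

```python
def linear_attributions(coeffs, x, background_mean):
    """Per-descriptor contribution relative to the background-mean prediction."""
    return {k: coeffs[k] * (x[k] - background_mean[k]) for k in coeffs}

# LDPE/water system coefficients from this article [7].
intercept = -0.529
coeffs = {"E": 1.098, "S": -1.557, "A": -2.991, "B": -4.617, "V": 3.886}

def predict(d):
    """Linear LSER prediction for a descriptor dict."""
    return intercept + sum(coeffs[k] * d[k] for k in coeffs)

# Hypothetical background (dataset mean) and solute descriptors.
background_mean = {"E": 0.6, "S": 0.6, "A": 0.2, "B": 0.4, "V": 1.0}
solute = {"E": 0.8, "S": 0.9, "A": 0.6, "B": 0.3, "V": 0.8}

attr = linear_attributions(coeffs, solute, background_mean)
# Additivity: attributions sum to the prediction minus the baseline prediction.
gap = predict(solute) - predict(background_mean)
print(attr, round(gap, 4))
```

The sign and size of each entry in `attr` show which interaction term pushes the prediction above or below the dataset-average behavior.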

Experimental Protocols & Data

Protocol 1: Developing a QC-LSER Model for Hydrogen-Bonding Calculations

Objective: To derive a thermodynamically consistent LSER model for solvation properties using quantum chemical calculations [21].

- Molecular Structure Input: Obtain or draw the 3D molecular structure of the solute and solvent of interest.

- Quantum Chemical Calculation: Perform a COSMO-type quantum chemical calculation (e.g., using DFT methods) to generate the sigma profile (distribution of molecular surface charge densities) for each molecule.

- Descriptor Calculation: From the sigma profile, calculate new QC-based molecular descriptors intended to replace the traditional LSER parameters S (dipolarity/polarizability), A (hydrogen-bond acidity), and B (hydrogen-bond basicity).

- Model Formulation: Integrate these new descriptors into the standard LSER equations for the target property (e.g., log K for a partition coefficient or ΔH for solvation enthalpy).

- Validation: Correlate the model's predictions against high-quality experimental solvation data, including self-solvation cases, to validate its consistency and accuracy [21].

Protocol 2: Implementing a Hybrid Supervised-Reinforcement Learning Model for Predictive Maintenance

Objective: To enhance the prediction of a system's Remaining Useful Life (RUL) by combining supervised and reinforcement learning, a hybrid approach applicable to optimizing computational experiments [23].

- Data Preparation: Utilize a time-series dataset from system sensors (e.g., C-MAPSS aircraft engine data). Pre-process data to preserve vital temporal relationships.

- Supervised Learning Setup: Train a Multi-Layer Perceptron (MLP) network to make initial RUL predictions based on the input sensor data.

- Reinforcement Learning Integration: Use a Q-learning algorithm where:

- The state is defined by the system's condition and MLP output.

- The actions involve adjusting prediction strategies.

- The reward is based on the accuracy of the RUL prediction.

- Model Training and Comparison: Train the hybrid model and compare its performance against standalone models (e.g., SVR, CNN, LSTM) using accuracy and precision metrics. The reported hybrid model achieved a 15% increase in accuracy over single supervised learning algorithms [23].
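The reinforcement learning step above relies on the standard tabular Q-learning update. The sketch below shows only that update rule. The three-state, three-action layout and the reward value are hypothetical choices made for illustration and are not taken from [23].

```python
def q_update(q_table, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """One tabular Q-learning step:
    Q(s, a) <- Q(s, a) + alpha * (r + gamma * max_a' Q(s', a') - Q(s, a))
    """
    best_next = max(q_table[next_state])
    td_target = reward + gamma * best_next
    q_table[state][action] += alpha * (td_target - q_table[state][action])
    return q_table[state][action]

# Hypothetical setup: 3 coarse states (MLP under-, well-, or over-predicting RUL)
# and 3 actions (lower, keep, or raise the prediction).
n_states, n_actions = 3, 3
Q = [[0.0] * n_actions for _ in range(n_states)]

# Illustrative reward: negative absolute RUL error after the adjustment.
new_value = q_update(Q, state=0, action=2, reward=-5.0, next_state=1)
print(new_value)  # -0.5 for an all-zero table with alpha=0.1, gamma=0.9
```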

Table 1: Performance Comparison of Predictive Models from Literature

| Model Type | Application Area | Key Performance Metrics | Reference |

|---|---|---|---|

| Hybrid MLP + Q-learning | RUL Prediction (Aircraft Engines) | 15% accuracy increase vs. single ML algorithms (SVR, MLP, CNN, LSTM); 4% accuracy increase vs. other hybrid algorithms (CNN-LSTM) [23]. | [23] |

| LSER Model | LDPE-Water Partitioning | R² = 0.991, RMSE = 0.264 (Training, n=156); R² = 0.985, RMSE = 0.352 (Validation with experimental descriptors) [7]. | [7] |

| LSTM-ANN Hybrid | Power Load Forecasting (Microgrids) | R²: 0.8852, MSE: 0.0043, outperforming GRU, SVM, ARIMA, and SARIMA [24]. | [24] |

| Optimized CatBoost/AdaBoost | Solar Radiation Forecasting | RMSE reduced by 6–82% after hyperparameter tuning with Nelder-Mead and feature selection with LIME [22]. | [22] |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Hybrid ML-LSER Research

| Item / Resource | Function / Description | Relevance to Hybrid ML-LSER |

|---|---|---|

| LSER Database | A comprehensive, freely accessible database of solute descriptors and system coefficients [6] [21]. | The foundational source of experimental data for training, validating, and benchmarking hybrid models. |

| Quantum Chemical Software | Software suites (e.g., for DFT calculations) to compute molecular charge-density distributions and sigma profiles [21]. | Essential for calculating thermodynamically consistent, QC-based molecular descriptors to replace or augment traditional LSER parameters. |

| Partial Solvation Parameters (PSP) | A thermodynamic framework with parameters (σd, σp, σa, σb) based on equation-of-state thermodynamics [6]. | Facilitates the extraction and transfer of hydrogen-bonding information (ΔG, ΔH) from the LSER database for use in other thermodynamic models. |

| Interpretability Libraries (SHAP/LIME) | Python libraries for model interpretability. SHAP provides a unified measure of feature importance, while LIME gives local, model-agnostic explanations [22]. | Crucial for deciphering the "black box" nature of complex ML models, helping researchers understand which molecular interactions drive predictions. |

Workflow Visualization

Below is a workflow for developing a thermodynamically consistent QC-LSER model, integrating quantum chemistry and machine learning.

Diagram 1: QC-LSER model development workflow.

The following diagram illustrates the architecture of a hybrid supervised-reinforcement learning model for predictive tasks.

Diagram 2: Hybrid supervised-RL model architecture.

Frequently Asked Questions: Core Concepts

What is the thermodynamic basis for LSER linearity, even for strong interactions like hydrogen bonding? The observed linearity in LSER models, including for hydrogen bonding, has a solid foundation in equation-of-state thermodynamics combined with the statistics of hydrogen bonding. This theoretical basis verifies that free-energy-related properties can be expressed as a linear combination of interaction-specific contributions, even for these specific interactions [6].

How can I estimate the hydrogen-bonding contribution to the free energy of solvation? Within the LSER framework, the hydrogen-bonding contribution to the free energy of solvation for a solute (1) in a solvent (2) can be estimated from the products of the solute's descriptors and the system's coefficients: specifically, through the terms A₁a₂ (for acidity) and B₁b₂ (for basicity) [6].
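As a rough sketch of this estimate, the snippet below evaluates the two hydrogen-bonding terms and converts their sum to a free-energy contribution. It assumes the terms belong to a log K equation and that the usual relation ΔG = -RT ln(10) · log K applies. The descriptor and coefficient values are hypothetical placeholders, not database entries.

```python
import math

R_KJ = 8.314462618e-3  # gas constant in kJ/(mol K)

def hb_terms(A, B, a, b):
    """Return the acidity (a*A) and basicity (b*B) terms of a log K equation."""
    return a * A, b * B

def log_k_to_delta_g(log_k_part, temperature=298.15):
    """Convert a log K contribution to kJ/mol, assuming dG = -RT ln(10) log K."""
    return -math.log(10.0) * R_KJ * temperature * log_k_part

# Hypothetical solute descriptors (A1, B1) and system coefficients (a2, b2).
A1, B1 = 0.60, 0.30
a2, b2 = 3.0, 1.0

acid_term, base_term = hb_terms(A1, B1, a2, b2)
dg_hb = log_k_to_delta_g(acid_term + base_term)
print(acid_term, base_term, round(dg_hb, 2))
```

A negative value indicates that hydrogen bonding favors transfer of the solute into the solvent under the stated sign convention.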

My model performance is poor for zwitterionic drug molecules. What should I consider? Drug molecules are often ionizable and may exist as acids, bases, or zwitterions. For accurate LSER modeling of partitioning, the calculation must be performed for the neutral form of the molecule, and the result must account for how much of the compound is actually present in that form. Calculate or obtain the pKa values of your compounds to determine the fractional population of each species at the relevant pH [25].
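For a compound with a single ionizable group, the neutral fraction follows from the Henderson-Hasselbalch relation. The sketch below covers only monoprotic acids and bases. Zwitterions and polyprotic compounds require microscopic ionization constants, which this sketch does not handle. The function name and the pKa values are illustrative assumptions.

```python
def neutral_fraction(pka, ph, kind):
    """Fraction of a monoprotic compound present as the neutral species.

    kind="acid": HA <-> A-  + H+   (neutral form is HA)
    kind="base": BH+ <-> B  + H+   (neutral form is B)
    """
    if kind == "acid":
        return 1.0 / (1.0 + 10.0 ** (ph - pka))
    if kind == "base":
        return 1.0 / (1.0 + 10.0 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")

# Hypothetical pKa values evaluated at pH 7.4.
f_acid = neutral_fraction(pka=4.4, ph=7.4, kind="acid")  # about 0.1 % neutral
f_base = neutral_fraction(pka=9.4, ph=7.4, kind="base")  # about 1 % neutral
print(f_acid, f_base)
```

If only the neutral species is assumed to partition, an apparent (pH-dependent) value can be approximated as the neutral-form log K plus log10 of this fraction.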

Troubleshooting Guide: Common LSER Modeling Issues

| Problem Area | Specific Issue | Possible Cause | Solution & Checks |

|---|---|---|---|

| Data Quality | High prediction error for certain chemical classes. | Lack of chemical diversity in training set; over-reliance on predicted descriptors [7]. | 1. Curate a diverse training set: Ensure it covers a wide range of structures and interactions [7]. 2. Use experimental descriptors: For final model validation, use experimental LSER solute descriptors where possible [7]. |

| Model Parameters | The model's coefficients (a, b, s, etc.) lack physicochemical intuition. | Coefficients are determined purely by statistical fitting without thermodynamic grounding [6]. | Interpret coefficients via a thermodynamic framework like Partial Solvation Parameters (PSP) to connect them to physical interactions [6]. |

| Handling Complex Molecules | Unreliable predictions for large, complex drug molecules. | Standard prediction tools (e.g., EpiSuite, SPARC) can be unreliable for large molecules [25]. | Use quantum mechanical (QM) methods: While computationally expensive, QM methods can more reliably calculate solvation energies and descriptors for complex structures [25]. |

| Phase Definition | Inaccurate partition coefficients for polymer-water systems. | Treating a semi-crystalline polymer (like LDPE) as a homogeneous phase [7]. | Account for polymer morphology: For polymers like LDPE, convert partition coefficients to consider only the amorphous fraction as the effective phase volume [7]. |
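The amorphous-fraction correction in the last row of the table can be sketched as a simple shift of log K. This assumes that the solute resides only in the amorphous phase, so the concentration there equals the bulk polymer concentration divided by the amorphous volume fraction. The function name and the numerical inputs are hypothetical.

```python
import math

def log_k_amorphous(log_k_bulk, amorphous_fraction):
    """Rescale a bulk polymer/water log K to the amorphous phase only.

    Assumes the crystalline fraction takes up no solute, so that
    K_amorphous = K_bulk / amorphous_fraction.
    """
    if not 0.0 < amorphous_fraction <= 1.0:
        raise ValueError("amorphous_fraction must be in (0, 1]")
    return log_k_bulk - math.log10(amorphous_fraction)

# Illustrative inputs: bulk log K of 2.0 and a 50 % amorphous polymer.
corrected = log_k_amorphous(2.0, 0.5)
print(round(corrected, 3))  # 2.301
```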

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key components and resources required for developing and validating LSER models.

| Item / Resource | Function & Application in LSER Modeling |

|---|---|

| Abraham Solute Descriptors (Vx, E, S, A, B, L) | Core molecular descriptors that quantify a compound's McGowan characteristic volume (Vx), excess molar refraction (E), dipolarity/polarizability (S), hydrogen-bond acidity (A), hydrogen-bond basicity (B), and logarithmic gas-hexadecane partition coefficient (L) [6]. |

| LSER Database | A freely accessible, curated database containing a wealth of experimental partition coefficients and pre-calculated solute descriptors, serving as a primary source for training data [6]. |

| Reference Partitioning Systems | Well-characterized systems like octanol/water (KOW), hexadecane/air (KHdA/L), and air/water (KAW) are used to benchmark and calibrate models [25]. |

| Quantum Chemical (QM) Software | Used to calculate partition coefficients and solvation free energies (ΔGsolv) for molecules where experimental data is lacking or difficult to obtain [25]. |

| openOCHEM Platform | A public online platform used to develop robust predictive models via consensus approaches, combining multiple algorithms to improve prediction accuracy [26]. |

Experimental Protocol & Workflow Visualization

The following diagram outlines the logical workflow for building a robust LSER model, from data collection to final validation.

The table below summarizes the core LSER equations and provides an example of a high-performance model from recent literature for benchmarking.

| Model Type & Purpose | LSER Equation | Key Performance Metrics | Context & Application |

|---|---|---|---|

| General Form (Condensed Phases) | $\log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x$ [6] | N/A | Used for predicting partition coefficients ($P$) between two condensed phases, e.g., water-to-organic solvent [6]. |

| General Form (Gas-to-Solvent) | $\log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L$ [6] | N/A | Used for predicting gas-to-organic solvent partition coefficients ($K_S$) [6]. |

| Specific Model (LDPE/Water) | $\log K_{i,\mathrm{LDPE/W}} = -0.529 + 1.098E - 1.557S - 2.991A - 4.617B + 3.886V$ [7] | n=156, R²=0.991, RMSE=0.264 [7] | A robust, validated model for predicting the partitioning of neutral compounds between low-density polyethylene and water [7]. |
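To show how the LDPE/water equation in the table is applied, the following sketch evaluates it for a given set of solute descriptors. The coefficients are those quoted from [7]. The function name and the descriptor values are hypothetical and do not describe a real compound.

```python
# System coefficients of the LDPE/water model as quoted in the table [7].
LDPE_WATER = {"c": -0.529, "e": 1.098, "s": -1.557, "a": -2.991, "b": -4.617, "v": 3.886}

def log_k_ldpe_water(E, S, A, B, V):
    """Evaluate log K(LDPE/water) = c + eE + sS + aA + bB + vV."""
    m = LDPE_WATER
    return m["c"] + m["e"] * E + m["s"] * S + m["a"] * A + m["b"] * B + m["v"] * V

# Hypothetical descriptor set, chosen only to make the arithmetic easy to follow.
example = log_k_ldpe_water(E=1.0, S=1.0, A=1.0, B=1.0, V=1.0)
print(round(example, 3))  # -4.71
```

The large negative a and b coefficients mean that hydrogen-bond donor and acceptor strength both push a solute toward the water phase, while molecular volume pushes it toward the polymer.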

Frequently Asked Questions (FAQs)

Q: What is High-Throughput Screening (HTS) and how is it used in discovery? A: High-Throughput Screening (HTS) is a method used to automatically and rapidly test thousands or even millions of chemical, biological, or material samples. It helps researchers find active compounds, such as potential new drugs or better-performing materials, by using robotics and specialized software to process over 10,000 samples in a single day, a task that might take weeks with traditional methods [27].

Q: What are the common types of assays used in HTS? A: HTS utilizes various assay formats, which are biological tests designed to measure activity. Common adaptations include assays that use light measurements—such as fluorescence, absorbance, or luminescence—to detect active samples. These assays are optimized for sensitivity, dynamic range, and stability, and are scaled to run in microtiter plates with 96, 384, or even 1536 wells [28] [27].

Q: What is a good Z'-factor score and why is it important? A: The Z'-factor is a statistical value used to check the reliability and quality of an HTS assay. A Z'-factor above 0.5 is generally considered to indicate a good, robust assay. It is a critical parameter during assay development and optimization to minimize false positives and ensure data quality [27].
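The Z'-factor is commonly computed from the means and standard deviations of the positive and negative control wells as Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. The sketch below applies that definition. The control readings are made-up numbers for illustration, and the function name is an assumption.

```python
from statistics import mean, stdev

def z_prime(positive_controls, negative_controls):
    """Z'-factor from control-well signals (sample standard deviations)."""
    spread = stdev(positive_controls) + stdev(negative_controls)
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - 3.0 * spread / window

# Made-up plate-reader signals for the two control groups.
pos = [100, 102, 98, 101, 99]
neg = [10, 12, 8, 11, 9]

z = z_prime(pos, neg)
print(round(z, 3))  # 0.895, above the 0.5 threshold
```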

Q: What are the main challenges in HTS and how can they be addressed? A: Key challenges in HTS include:

- False Positives: Some compounds can give misleading results. This is often addressed by using control tests and secondary assays to validate initial "hits" [27].

- High Costs: Setting up an HTS facility can be expensive. Costs can be mitigated through collaborative networks, shared access programs, and using virtual screening powered by AI to reduce the number of physical tests needed [27].

- Data Overload: A single HTS run can produce terabytes of data. Solutions involve using machine learning tools to highlight promising results and cloud-based platforms for data analysis [27].

Troubleshooting Guides

Issue 1: High Rate of False Positives in Screening Data

Problem: A significant number of compounds are flagged as active ("hits") but are later found to be inactive in follow-up tests, wasting time and resources.

Solutions:

- Implement Robust Counter-Screens: Use detergent-based or other specific control assays designed to identify and weed out compounds that cause interference or non-specific binding [27].

- Concentration-Response Curves (CRCs): For initial hits, perform dose-response testing to confirm activity and determine the potency (IC50/EC50) of the compound.

- Orthogonal Assays: Use a different, independent assay technology to measure the same biological activity. Confirmation across multiple platforms increases confidence in a true hit [28].

- Check Assay Health: Re-evaluate the Z'-factor of your primary assay. A decline may indicate issues with reagent stability or pipetting precision.

Issue 2: Poor Assay Performance and Low Signal-to-Noise Ratio

Problem: The assay shows a weak signal, a high background, or poor distinction between positive and negative controls.

Solutions:

- Re-optimize Reagent Concentrations: Titrate key assay components (e.g., enzymes, substrates, cells) to find the optimal concentrations that maximize the signal window [28].

- Review Incubation Times: Ensure that reaction incubations (e.g., for enzyme activity or cell-based responses) are sufficient for signal development.

- Check Liquid Handling: Calibrate automated liquid handlers to ensure accurate and precise dispensing of samples and reagents, which is critical for reproducibility in microtiter plates [27].

- Instrumentation Check: Verify that plate readers and detectors are properly calibrated and that the correct optical filters are being used for fluorescence or luminescence measurements.

Experimental Protocols & Data

Standard Protocol for a Cell-Based HTS Assay

Objective: To identify compounds that inhibit a specific pathway in a cell-based model.

Summary Workflow: The process begins with library preparation, followed by automated dispensing of cells and compounds into assay plates. After incubation, a detection reagent is added, and the plates are read. The resulting data is then analyzed to identify active "hit" compounds [27].

Detailed Methodology: