Advancing Predictive Toxicology: Strategies for Enhancing LSER Model Accuracy and Precision in Drug Development

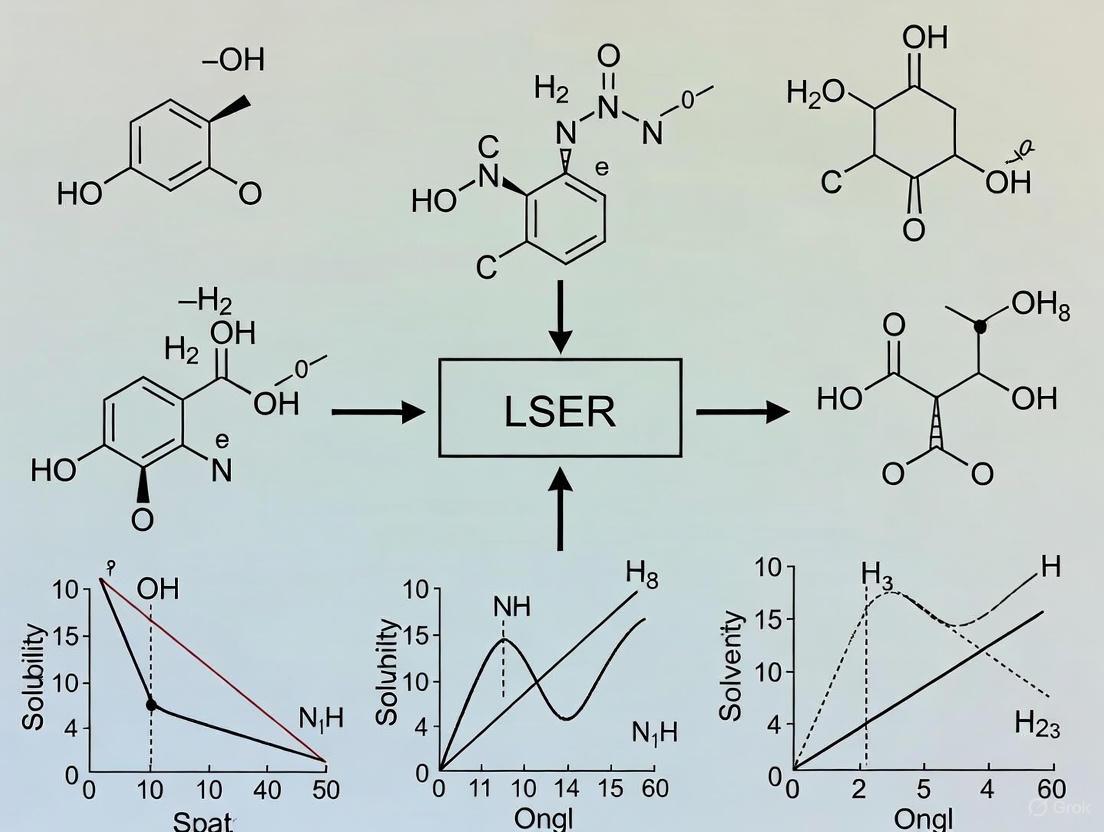


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on improving the accuracy and precision of Linear Solvation Energy Relationship (LSER) models. Covering foundational principles, advanced methodological applications, troubleshooting for common pitfalls, and rigorous validation protocols, it synthesizes current knowledge and emerging trends. By exploring the integration of LSER with equation-of-state thermodynamics, addressing challenges with polar compounds, and validating models against complex environmental contaminants, this resource aims to equip scientists with practical strategies to enhance the predictive power of LSER for critical applications like pharmacokinetics and chemical safety assessment.

Deconstructing the LSER Framework: From Core Principles to Thermodynamic Basis

FAQs: Core Concepts and Definitions

What are Molecular Descriptors and why are they critical for LSER models?

Molecular descriptors are numerical quantities that capture specific characteristics of a molecule's structure. In the context of Linear Solvation Energy Relationships (LSERs), they translate a molecule's chemical information into a form that can be used in mathematical models to predict its behavior and properties. The accuracy and predictive power of an LSER model are directly dependent on the effectiveness of the chosen descriptors in representing the key molecular interactions governing the system under study [1].

What is the difference between degree-based and distance-based topological descriptors?

Topological descriptors are a major class of molecular descriptors derived from the molecular graph, where atoms are represented as vertices and bonds as edges.

- Degree-based descriptors are calculated using the vertex degree, which is the number of connections (bonds) an atom has. Examples include the Randić index and Zagreb indices [1].

- Distance-based descriptors rely on the shortest path distance between pairs of vertices in the molecular graph. The Wiener index is a classic example of a distance-based index [1].

Recent work develops enhanced descriptors that combine vertex degrees and distances to capture complex structural characteristics more effectively, which improves the performance of quantitative structure-property relationship (QSPR) models [1].
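The degree-based and distance-based indices named above can be computed directly from a hydrogen-suppressed molecular graph. The following is a minimal sketch, assuming the molecule is supplied as an adjacency dictionary; the function names and the 2-methylbutane example are illustrative choices, not taken from the cited work.

```python
from collections import deque
from math import sqrt

def degrees(adj):
    """Vertex degree = number of bonded heavy-atom neighbours."""
    return {v: len(nbrs) for v, nbrs in adj.items()}

def edges(adj):
    """Each bond exactly once, as a sorted (u, v) pair."""
    return {tuple(sorted((u, v))) for u in adj for v in adj[u]}

def first_zagreb(adj):
    """Degree-based: sum of squared vertex degrees."""
    return sum(d * d for d in degrees(adj).values())

def second_zagreb(adj):
    """Degree-based: sum over bonds of deg(u) * deg(v)."""
    deg = degrees(adj)
    return sum(deg[u] * deg[v] for u, v in edges(adj))

def randic(adj):
    """Degree-based: sum over bonds of 1 / sqrt(deg(u) * deg(v))."""
    deg = degrees(adj)
    return sum(1 / sqrt(deg[u] * deg[v]) for u, v in edges(adj))

def wiener(adj):
    """Distance-based: sum of shortest-path lengths over all vertex pairs."""
    total = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:  # breadth-first search from src
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2  # every pair was counted twice

# Carbon skeleton of 2-methylbutane: chain 1-2-3-4 with carbon 5 on carbon 2.
isopentane = {1: [2], 2: [1, 3, 5], 3: [2, 4], 4: [3], 5: [2]}

descriptors = {
    "first_zagreb": first_zagreb(isopentane),
    "second_zagreb": second_zagreb(isopentane),
    "randic": randic(isopentane),
    "wiener": wiener(isopentane),
}
print(descriptors)
```

The enhanced degree-distance descriptors discussed in [1] are not reproduced here; this sketch only covers the classical indices they build on.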

My LSER model performance has plateaued. How can enhanced descriptors help?

If your model's performance has stalled, it may be due to the limitations of conventional descriptors in capturing the full complexity of your molecular structures. Enhanced descriptors address this by integrating multiple aspects of molecular structure. For instance, novel descriptors that combine degree-distance invariants with perspectives like neighbourhood degree and reverse degree have shown strong correlations with chemical properties, independent of molecular size and structural effects. These advanced descriptors can capture structural nuances that simpler indices miss, potentially breaking through accuracy plateaus in your research [1].

Troubleshooting Guides

Problem: Poor Model Accuracy and Predictivity

Symptoms

- Low R² values in both training and validation sets.

- High prediction errors for new compounds.

- Model fails to capture trends within a homologous series.

Investigation and Resolution Protocol

| Step | Action | Expected Outcome & Diagnostic Tips |
|---|---|---|
| 1 | Interrogate Your Descriptors | Confirm the descriptors are relevant to the property being modeled. For solvation-related properties, ensure descriptors reflect polarity, hydrogen bonding, and dispersion forces. |
| 2 | Evaluate Descriptor Diversity | Check for high correlation (multicollinearity) between descriptors. A good model should use a set of descriptors that capture independent aspects of molecular structure. |
| 3 | Incorporate Enhanced Descriptors | Replace or supplement basic descriptors with advanced ones. Test newly developed degree-distance descriptors that integrate neighborhood and reverse degree concepts, which have demonstrated improved efficacy in QSPR modeling [1]. |
| 4 | Validate Model with a Congeneric Set | Use a well-defined set of isomers (e.g., structural isomers of saturated hydrocarbons like hexane). If the model cannot accurately distinguish between them, the descriptors lack sufficient structural resolution [1]. |
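Step 2 of the table (checking for multicollinearity) can be sketched as a pairwise Pearson correlation screen. This is a minimal illustration, assuming a 0.9 cutoff (an arbitrary choice, not a value from the source). The Wiener and first Zagreb values are those of the five hexane isomers; the third column is a made-up placeholder included only to show an uncorrelated descriptor.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def collinear_pairs(descriptors, threshold=0.9):
    """Return (name_a, name_b, r) for every pair with |r| >= threshold."""
    names = list(descriptors)
    flagged = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            r = pearson(descriptors[a], descriptors[b])
            if abs(r) >= threshold:
                flagged.append((a, b, round(r, 3)))
    return flagged

# Order: n-hexane, 2-methylpentane, 3-methylpentane,
#        2,3-dimethylbutane, 2,2-dimethylbutane
table = {
    "wiener": [35, 32, 31, 29, 28],
    "first_zagreb": [18, 20, 20, 22, 24],
    "placeholder": [0.2, 0.9, 0.1, 0.8, 0.4],  # hypothetical values
}

flagged = collinear_pairs(table)
print(flagged)
```

A flagged pair suggests that one of the two descriptors adds little independent information; which one to drop is a modeling decision that the screen itself does not make.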

Problem: Model Fails to Generalize to New Data

Symptoms

- Excellent performance on the training set but poor performance on the test set or external validation compounds.

- The model is overfitted to the specific data used to build it.

Investigation and Resolution Protocol

| Step | Action | Expected Outcome & Diagnostic Tips |
|---|---|---|
| 1 | Apply Regularization Techniques | Use methods like Ridge or Lasso regression to penalize overly complex models and reduce overfitting. |
| 2 | Simplify the Descriptor Space | Reduce the number of descriptors. Use feature selection algorithms to retain only the most statistically significant descriptors for prediction. |
| 3 | Utilize Quadratic Modeling | Move beyond simple linear relationships. Employ QSPR quadratic modelling, which has been shown to successfully capture non-linear relationships using enhanced topological descriptors, leading to more robust and generalizable models [1]. |
| 4 | Expand Training Data Diversity | Ensure the training set encompasses the full chemical space to which the model will be applied, including variations in size, branching, and functional groups. |

Experimental Protocols for Descriptor Validation

Protocol 1: Benchmarking New Descriptors Using a Carboxylic Acid Dataset

This protocol outlines a method for evaluating the performance of newly developed molecular descriptors against established ones.

1. Objective: To assess the correlation efficacy of novel topological descriptors compared to existing descriptors by modeling properties of carboxylic acids.

2. Materials and Data

- A curated dataset of carboxylic acids with experimentally measured physicochemical properties (e.g., boiling point, log P) [1].

- Calculated values for both established descriptors (e.g., Wiener index, Zagreb indices) and the new enhanced descriptors.

3. Methodology

- Step 1: Data Preparation: Compile the dataset, ensuring a range of molecular sizes and structures.

- Step 2: Descriptor Calculation: Compute the values for all descriptors for each molecule in the dataset.

- Step 3: Model Building: Develop separate QSPR models for each property using linear and quadratic regression techniques.

- Step 4: Correlation Analysis: Statistically compare the correlation coefficients (R²) and predictive errors of the models using the new descriptors versus those using traditional descriptors.

4. Expected Outcome: The newly derived descriptors are expected to exhibit stronger correlations and higher predictive accuracy, demonstrating their utility and independence from simple size-effects compared to existing descriptors [1].
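Steps 3 and 4 of this protocol (linear versus quadratic model building and comparison of R²) can be sketched with a small least-squares routine. The data below are synthetic, generated from a known quadratic purely for illustration; they are not carboxylic acid measurements, and the helper names are assumptions.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x

def polyfit(x, y, degree):
    """Least-squares polynomial coefficients (lowest power first)."""
    powers = range(degree + 1)
    a = [[sum(xi ** (i + j) for xi in x) for j in powers] for i in powers]
    b = [sum(yi * xi ** i for xi, yi in zip(x, y)) for i in powers]
    return solve(a, b)

def r_squared(x, y, coeffs):
    """Coefficient of determination of the fitted polynomial on (x, y)."""
    pred = [sum(c * xi ** k for k, c in enumerate(coeffs)) for xi in x]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, pred))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

# Synthetic descriptor/property pairs: y = 2 + 3x - 0.25x^2 (illustrative only).
x = [1, 2, 3, 4, 5, 6]
y = [4.75, 7.0, 8.75, 10.0, 10.75, 11.0]

r2_lin = r_squared(x, y, polyfit(x, y, 1))
r2_quad = r_squared(x, y, polyfit(x, y, 2))
print(f"linear R2 = {r2_lin:.4f}, quadratic R2 = {r2_quad:.4f}")
```

On training data the quadratic fit can never score a lower R² than the linear one, so a fair comparison between descriptor sets should also look at prediction errors on held-out compounds.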

Protocol 2: Assessing Structural Sensitivity with Isomer Sets

This protocol tests a descriptor's ability to capture subtle structural differences that affect molecular properties.

1. Objective: To determine if a descriptor can accurately predict property variations among structural isomers of a saturated hydrocarbon.

2. Materials and Data

- A set of structural isomers of a saturated hydrocarbon (e.g., all isomers of hexane, C₆H₁₄) [1].

- A target property that varies with branching (e.g., octane number, boiling point).

3. Methodology

- Step 1: Structure Enumeration: Identify and sketch all possible structural isomers.

- Step 2: Descriptor Calculation: Calculate the candidate descriptor for each isomer graph.

- Step 3: Model Construction: Build a simple model linking the descriptor to the target property.

- Step 4: Validation: Check if the model correctly ranks the isomers according to their known property values.

4. Expected Outcome: A powerful descriptor will show a clear trend with the property, successfully differentiating between isomers based on their degree of branching, even when molecular size is constant [1].
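As a minimal sketch of this protocol, the snippet below computes the Wiener index for the five hexane isomers and checks, via Spearman rank correlation, whether it orders them like their boiling points. The boiling points are approximate literature values entered by hand and should be verified before reuse; the Wiener index stands in for whatever candidate descriptor is being tested.

```python
from collections import deque

def adjacency(edge_list):
    """Build an adjacency dictionary from a list of bonds."""
    adj = {}
    for u, v in edge_list:
        adj.setdefault(u, []).append(v)
        adj.setdefault(v, []).append(u)
    return adj

def wiener(adj):
    """Sum of shortest-path distances over all pairs of vertices."""
    total = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2

def ranks(values):
    """Rank 1 = largest value; assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=True)
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result

def spearman(x, y):
    """Spearman rank correlation for tie-free data."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n * n - 1))

# Carbon skeletons as bond lists; boiling points in deg C (approximate).
isomers = {
    "n-hexane": ([(1, 2), (2, 3), (3, 4), (4, 5), (5, 6)], 68.7),
    "2-methylpentane": ([(1, 2), (2, 3), (3, 4), (4, 5), (2, 6)], 60.3),
    "3-methylpentane": ([(1, 2), (2, 3), (3, 4), (4, 5), (3, 6)], 63.3),
    "2,3-dimethylbutane": ([(1, 2), (2, 3), (3, 4), (2, 5), (3, 6)], 58.0),
    "2,2-dimethylbutane": ([(1, 2), (2, 3), (3, 4), (2, 5), (2, 6)], 49.7),
}

w_values = [wiener(adjacency(bonds)) for bonds, _ in isomers.values()]
bp_values = [bp for _, bp in isomers.values()]
rho = spearman(w_values, bp_values)
print(dict(zip(isomers, w_values)), f"Spearman rho = {rho:.2f}")
```

With these inputs the Wiener index follows the overall branching trend but swaps 2-methylpentane and 3-methylpentane, which is the kind of limited structural resolution the protocol is meant to expose.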

Research Reagent Solutions: The Computational Toolkit

| Item Name | Function/Brief Explanation |
|---|---|
| Topological Descriptor Software | Software tools that can generate a wide array of descriptors from a molecular structure, including degree-based, distance-based, and integrated indices. |
| Quadratic Regression Module | Statistical software or built-in functions capable of performing quadratic (non-linear) QSPR modeling to capture complex structure-property relationships [1]. |
| Benzenoid Hydrocarbon Structures | Polycyclic benzenoid hydrocarbons serve as ideal benchmark structures for testing new descriptors on complex graphs with "convex cuts" [1]. |
| Taguchi Method Design Suite | Software that facilitates the design of experiments (DOE) using the Taguchi method, allowing for systematic optimization of parameters with minimal experimental runs [2]. |

Workflow and Relationship Diagrams

Diagram 1: LSER Model Optimization Pathway

Diagram 2: Enhanced Descriptor Development Logic

Technical Support Center

Troubleshooting Guides & FAQs

This technical support center addresses common challenges researchers face when working with the Linear Solvation Energy Relationship (LSER) model. The following guides and protocols are designed to help you improve the accuracy and precision of your solvation thermodynamics research.

FAQ 1: What is the thermodynamic basis for the linearity of LSER models, especially for strong specific interactions like hydrogen bonding?

The observed linearity of LSER models, even for strong specific interactions, finds its thermodynamic basis in the combination of equation-of-state solvation thermodynamics with the statistical thermodynamics of hydrogen bonding [3] [4].

- Fundamental Principle: The LSER model's linear free-energy relationships correlate a solute's free-energy-related properties with its molecular descriptors. The hydrogen-bonding contribution to the solvation free energy can be placed on a firm thermodynamic basis, making predictive calculations possible using the known acidity (A) and basicity (B) molecular descriptors [4].

- Addressing Non-Ideality: The LFER linearity has a thermodynamic foundation that can be verified by examining the contribution of strong specific interactions in the solute/solvent system. This involves reconciling the LSER framework with equation-of-state properties [3].

- Practical Implication: This understanding allows for the extraction of valid thermodynamic information on intermolecular interactions for solute/solvent systems where both LSER descriptors and LFER coefficients are available. For instance, the hydrogen bonding contribution to the free energy of solvation can be estimated from the products A₁a₂ and B₁b₂ [3].
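For orientation, the two linear free-energy relationships usually written for the LSER model are sketched below, using the solute descriptors (E, S, A, B, Vx, L) and system coefficients (c, e, s, a, b, v, l) tabulated in this article. The subscript convention (1 for the solute, 2 for the solvent) is an assumption made here to match the products quoted above.

```latex
\begin{align}
\log P   &= c + e\,E + s\,S + a\,A + b\,B + v\,V_x \\
\log K_S &= c + e\,E + s\,S + a\,A + b\,B + l\,L \\
\text{hydrogen-bonding part} &= a_2 A_1 + b_2 B_1
\end{align}
```

The first form is used for partitioning between two condensed phases and the second for gas-to-solvent transfer; the last line isolates the two terms that carry the hydrogen-bonding contribution.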

FAQ 2: How can I extract thermodynamically meaningful hydrogen-bonding parameters (like ΔG) from LSER descriptors?

The hydrogen-bonding contribution to solvation free energy can be derived from LSER descriptors. The key is to utilize the Partial Solvation Parameter (PSP) framework, which is designed to facilitate the extraction of this information [3].

- Methodology: The PSP framework uses the two hydrogen-bonding parameters, σa and σb (reflecting acidity and basicity), to estimate a key quantity: the free energy change upon the formation of a hydrogen bond, ΔGhb [3].

- Extended Calculations: Due to its equation-of-state basis, this framework also allows for the estimation of the corresponding changes in enthalpy (ΔHhb) and entropy (ΔShb) upon hydrogen bond formation [3].

- Data Source: This extraction is performed using the rich thermodynamic information available in the freely accessible LSER database [3].

FAQ 3: Why do my LSER predictions for solvation enthalpy show inconsistencies with experimental data?

Inconsistencies in predicting solvation enthalpies ($\Delta H_S$) can arise from the incorrect application of the LSER model's linear relationship for enthalpy [3].

- Correct Formulation: Solvation enthalpies are handled in LSER by a linear relationship of the form $\Delta H_S = c_H + e_H E + s_H S + a_H A + b_H B + l_H L$ [3].

- Troubleshooting Steps:

  - Verify Coefficients: Ensure you are using the correct solvent-specific coefficients ($c_H$, $e_H$, $s_H$, $a_H$, $b_H$, $l_H$) for the condensed phase you are studying.

  - Check Descriptor Integrity: Confirm the accuracy of the solute's molecular descriptors (E, S, A, B, L). Errors in these values will propagate into enthalpy calculations.

  - Cross-Reference Models: The information on hydrogen bonding from the free-energy equations (e.g., from the products A₁a₂ and B₁b₂) should be consistent with the information used for estimating the hydrogen bonding change in enthalpy from Equation (3) [3].
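One way to act on these troubleshooting steps is to evaluate the enthalpy relationship term by term, so that a suspicious coefficient or descriptor shows up as an outlying contribution. The sketch below assumes coefficients and descriptors are held in plain dictionaries; all numeric values are hypothetical placeholders, not data from the LSER database.

```python
def solvation_enthalpy(coeffs, solute):
    """Evaluate dH_S = c + e*E + s*S + a*A + b*B + l*L.

    Returns the total and a dictionary of the individual contributions.
    """
    terms = {"c": coeffs["c"]}
    for coef, desc in (("e", "E"), ("s", "S"), ("a", "A"), ("b", "B"), ("l", "L")):
        terms[coef + desc] = coeffs[coef] * solute[desc]
    return sum(terms.values()), terms

# Hypothetical solvent coefficients (kJ/mol) and solute descriptors.
coeffs = {"c": -6.0, "e": 3.0, "s": -10.0, "a": -30.0, "b": -5.0, "l": -8.0}
solute = {"E": 0.2, "S": 0.4, "A": 0.3, "B": 0.5, "L": 2.0}

total, terms = solvation_enthalpy(coeffs, solute)
hb_part = terms["aA"] + terms["bB"]  # hydrogen-bonding share of the enthalpy
print(f"dH_S = {total:.2f} kJ/mol, of which hydrogen bonding = {hb_part:.2f}")
for name, value in terms.items():
    print(f"  {name:>2}: {value:8.2f}")
```

Printing the per-term breakdown makes it easier to compare the hydrogen-bonding share with the corresponding aA and bB products from the free-energy equation.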

Experimental Protocols & Data Presentation

Table 1: Core LSER Molecular Descriptors and Their Thermodynamic Interpretation

This table summarizes the key solute descriptors used in the LSER model and their physicochemical meanings, which are crucial for designing experiments and interpreting results [3].

| Descriptor Symbol | Name | Thermodynamic Interpretation and Role |
|---|---|---|
| Vx | McGowan's Characteristic Volume | Represents the endoergic cavity formation energy; correlated with size and volume of the solute [3]. |
| L | Logarithm of the Gas-Liquid Partition Coefficient in n-hexadecane | A measure of the solute's dispersion interactions; determined experimentally at 298 K [3]. |
| E | Excess Molar Refraction | Models polarizability contributions from n- and π-electrons [3]. |
| S | Dipolarity/Polarizability | Describes the solute's ability to engage in dipole-dipole and dipole-induced dipole interactions [3]. |
| A | Hydrogen Bond Acidity | Quantifies the solute's ability to donate a hydrogen bond [3]. |
| B | Hydrogen Bond Basicity | Quantifies the solute's ability to accept a hydrogen bond [3]. |

Table 2: LSER Equation Coefficients as System Descriptors

The coefficients in the LSER equations are solvent-specific and represent the complementary effect of the phase on solute-solvent interactions [3].

| Coefficient Symbol (e.g., in log(P) equation) | Physicochemical Meaning | Determination Method |
|---|---|---|
| c | Constant term for the system | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |
| e | Solvent's complementary response to the solute's excess molar refraction (E) | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |
| s | Solvent's complementary response to the solute's dipolarity/polarizability (S) | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |
| a | Solvent's complementary response to the solute's hydrogen bond acidity (A) | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |
| b | Solvent's complementary response to the solute's hydrogen bond basicity (B) | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |
| v | Solvent's complementary response to the solute's characteristic volume (Vx) | Determined via multiple linear regression fitting of experimental data for a variety of solutes in the solvent [3]. |

Protocol: Extracting Hydrogen-Bonding Free Energy using the PSP Framework

This protocol outlines the methodology for deriving hydrogen-bonding free energy (ΔGhb) from LSER descriptors, based on the Partial Solvation Parameters (PSP) approach [3].

Objective: To calculate the free energy change upon hydrogen bond formation from experimental LSER data.

Background: The PSPs (σa and σb) provide a bridge between the LSER database and equation-of-state thermodynamics, enabling the extraction of thermodynamically meaningful hydrogen-bonding information [3].

Procedure:

- Data Acquisition: Obtain the necessary Abraham solute parameters (A and B) for your compound of interest from a validated LSER database [3].

- PSP Calculation: Utilize the established relationships between the LSER descriptors and the Partial Solvation Parameters to calculate the hydrogen-bonding PSPs, σa and σb. Note: The specific mathematical relationships for this step are defined in the foundational literature and may require specialized software or computational scripts [3].

- Free Energy Calculation: Input the calculated σa and σb values into the equation-of-state thermodynamic model to compute the free energy change, ΔGhb [3].

- Validation: Compare the calculated ΔGhb values with available experimental data or benchmark calculations to ensure accuracy.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for LSER Methodology

A list of key materials and computational tools essential for conducting research involving the LSER model.

| Item | Function in LSER Research |
|---|---|
| LSER Database | A freely accessible database containing a wealth of thermodynamic information and pre-compiled solute descriptors (Vx, L, E, S, A, B) for a vast array of compounds [3]. |
| Reference Solvent Sets | A carefully selected set of solvents with well-characterized LSER coefficients (e.g., a, b, s, v). These are used in chromatographic or partitioning experiments to determine unknown solute descriptors [3]. |
| Partial Solvation Parameters (PSP) Framework | A thermodynamic framework that acts as a tool for extracting and transferring information on intermolecular interactions from the LSER database for use in equation-of-state developments and other thermodynamic calculations [3]. |
| Quantum Chemistry Software | Software used for computational determination or verification of molecular descriptors (like E, S, A, B), especially for novel compounds not yet in the LSER database [3]. |

Workflow Visualization

Diagram 1: LSER-PSP Thermodynamic Information Flow

This diagram illustrates the process of extracting thermodynamic properties from experimental data using the combined LSER and Partial Solvation Parameter (PSP) framework.

Diagram 2: LSER Model Linearity Verification

This workflow outlines the logical process for verifying the thermodynamic basis of LSER linearity in a research project.

Extracting Thermodynamic Information from LSER Databases and Coefficients

Troubleshooting Guides

Guide 1: Resolving Inconsistencies in Hydrogen-Bonding Contribution Calculations

Problem: Significant discrepancies observed between calculated and experimental hydrogen-bonding contributions to solvation free energy.

Symptoms:

- LSER-calculated HB contributions (aA + bB) do not match values derived from experimental solvation data

- Poor correlation when transferring HB parameters to thermodynamic models like SAFT or COSMO-RS

- Inconsistent results for self-solvation cases where solute and solvent are identical

Diagnosis and Solutions:

Table 1: Troubleshooting Hydrogen-Bonding Calculation Issues

| Problem Cause | Diagnostic Steps | Solution | Preventive Measures |
|---|---|---|---|
| Improper descriptor application | Verify A and B descriptors for your solute in the UFZ-LSER database [5]; Check if coefficients a and b are available for your solvent system [3] | Use consolidated descriptors from multiple sources; For unavailable coefficients, use predictive methods [6] | Always cross-reference descriptors with multiple sources when possible |
| Limitation of LSER linearity assumption | Compare HB contributions from different models (COSMO-RS, PSP) for the same system [7] | Implement Partial Solvation Parameters (PSP) as intermediary between LSER and equation-of-state models [3] | Understand the thermodynamic basis of LSER linearity, especially for strong specific interactions [3] |
| Regression artifacts in LFER coefficients | Check if aA = bB for self-solvation cases; significant differences indicate regression artifacts [6] | Use quantum-chemical LSER descriptors to derive more consistent HB parameters [6] | Validate coefficients with known reference systems before application |

Experimental Protocol Validation:

- For solvation free energy measurements: Use Equation (1) from recent research connecting solvation constant (KGS) with measurable thermodynamic properties [6]

- For solvation enthalpy: Apply the LSER relationship $\Delta H_S = c_H + e_H E + s_H S + a_H A + b_H B + l_H L$ and verify each term's contribution [3]

- Cross-validate with COSMO-RS predictions at TZVPD-Fine level for comparable accuracy [7]

Guide 2: Addressing Experimental Variability in Partition Coefficient Measurements

Problem: High variability in experimentally determined log KOW values affecting LSER parameterization.

Symptoms:

- Log KOW values for the same compound varying by >1 log unit across different experimental methods

- Poor predictability of LSER models for new chemical classes

- Inconsistent solute descriptors derived from experimental partition coefficients

Diagnosis and Solutions:

Table 2: Managing Experimental Variability in Partition Coefficients

| Variability Source | Impact on LSER | Solution | Validation Approach |
|---|---|---|---|
| Different experimental methods | Shake-flask, generator column, and slow-stirring methods yield different log KOW values for the same compound [8] | Apply iterative consensus modeling - use mean of ≥5 valid data points from different independent methods [8] | Statistical analysis of variability; consolidated log KOW should show variability <0.2 log units |
| Solute concentration issues | KOW becomes concentration-dependent at >0.01 mol/L, violating infinite dilution requirement [8] | Ensure measurements at appropriate dilution; verify linearity of partitioning with concentration | Measure at multiple concentrations and extrapolate to infinite dilution |
| Speciation and ionization | Observed distribution coefficient log D differs from true log KOW for ionizable compounds [8] | Control pH carefully; apply Henderson-Hasselbalch correction for ionizable compounds | Measure pH-dependent distribution and extrapolate to neutral species domain |
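The Henderson-Hasselbalch correction mentioned in the last row can be sketched for the simplest case. The snippet assumes a monoprotic acid or base whose ionized form does not partition into octanol, which is a common simplification rather than something stated in the source; the function names and example values are illustrative.

```python
from math import log10

def _ionization_term(pka, ph, kind):
    """log10(1 + 10^x), where x depends on whether the solute is an acid or base."""
    if kind == "acid":
        return log10(1 + 10 ** (ph - pka))
    if kind == "base":
        return log10(1 + 10 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")

def log_d(log_kow, pka, ph, kind="acid"):
    """Apparent distribution coefficient expected at a given pH."""
    return log_kow - _ionization_term(pka, ph, kind)

def neutral_log_kow(log_d_obs, pka, ph, kind="acid"):
    """Back out the neutral-species log KOW from an observed log D."""
    return log_d_obs + _ionization_term(pka, ph, kind)

# Hypothetical acid: log KOW = 3.0, pKa = 4.5.
for ph in (2.0, 4.5, 7.4):
    print(f"pH {ph}: log D = {log_d(3.0, 4.5, ph):.2f}")
```

At pH equal to pKa the apparent value drops by log10(2), about 0.30 log units, which already matches the shake-flask repeatability quoted in this guide; this is why pH control matters for ionizable solutes.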

Experimental Protocol for Robust log KOW Determination:

- Method Selection: Choose method based on expected log KOW range:

  - -2 to 4: Shake-flask (OECD TG 107)

  - 1 to 6: Generator column (EPA OPPTS 830.7560)

  - >4.5 to 8.2: Slow-stirring (OECD TG 123) [8]

- Consensus Building: Apply weight-of-evidence approach combining experimental and computational estimates

- Quality Control: Accept repeatability of ±0.3 log units for shake-flask, ±0.5 for HPLC methods [8]
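The method ranges and the consensus rule from this guide can be encoded as a small helper. This is a sketch under stated assumptions: the sample standard deviation is used as the measure of "variability" (the source does not define it), and the five log KOW values are made up for illustration.

```python
from statistics import mean, stdev

# (method, lower bound, upper bound, lower bound inclusive?)
METHOD_RANGES = [
    ("shake-flask (OECD TG 107)", -2.0, 4.0, True),
    ("generator column (EPA OPPTS 830.7560)", 1.0, 6.0, True),
    ("slow-stirring (OECD TG 123)", 4.5, 8.2, False),  # listed as ">4.5 to 8.2"
]

def candidate_methods(expected_log_kow):
    """Methods whose stated working range covers the expected log KOW."""
    chosen = []
    for name, lo, hi, lo_inclusive in METHOD_RANGES:
        above = expected_log_kow >= lo if lo_inclusive else expected_log_kow > lo
        if above and expected_log_kow <= hi:
            chosen.append(name)
    return chosen

def consolidated_log_kow(values, min_points=5):
    """Mean and sample standard deviation of independent log KOW estimates."""
    if len(values) < min_points:
        raise ValueError(f"need at least {min_points} independent estimates")
    return mean(values), stdev(values)

estimates = [3.1, 3.3, 3.2, 3.0, 3.4]  # hypothetical independent estimates
consensus, spread = consolidated_log_kow(estimates)
print(candidate_methods(consensus))
print(f"consolidated log KOW = {consensus:.2f} (spread {spread:.2f})")
```

If the spread exceeds the 0.2 log unit target, the individual estimates deserve a second look before the consolidated value is used for LSER parameterization.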

Frequently Asked Questions

Q1: How can I extract meaningful hydrogen-bonding free energies from LSER parameters for use in equation-of-state models?

The products aA and bB in LSER equations represent the combined hydrogen-bonding contribution to solvation free energy but cannot be directly separated into individual HB interaction free energies. However, recent advances provide two approaches:

Quantum-Chemical LSER Descriptors: Implement new molecular descriptors (α, β) derived from quantum-chemical calculations that directly relate to HB interaction free energy through $-\Delta G_{12}^{hb} = 5.71\,(\alpha_1 \beta_2 + \beta_1 \alpha_2)$ kJ/mol at 25 °C [6]

Partial Solvation Parameters (PSP): Use PSPs (σa, σb, σd, σp) as intermediaries between LSER and equation-of-state models. These provide:

- Direct estimation of free energy change upon hydrogen bond formation (ΔGʰᵇ)

- Capability to estimate enthalpy (ΔHʰᵇ) and entropy (ΔSʰᵇ) changes [3]
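The quantum-chemical descriptor relation quoted above is simple enough to evaluate directly. The sketch below uses hypothetical α and β values; it also shows that the 5.71 kJ/mol prefactor numerically coincides with RT ln 10 at 298.15 K, which is an observation made here rather than a statement from the source.

```python
from math import log

R = 8.314462618  # gas constant, J/(mol K)

def rt_ln10_kj(temperature_k=298.15):
    """RT ln(10) in kJ/mol at the given temperature."""
    return R * temperature_k * log(10) / 1000

def hb_free_energy(alpha1, beta1, alpha2, beta2, prefactor=5.71):
    """Hydrogen-bonding free energy in kJ/mol (negative = stabilizing).

    Implements -dG_hb = prefactor * (alpha1*beta2 + beta1*alpha2) at 25 deg C.
    """
    return -prefactor * (alpha1 * beta2 + beta1 * alpha2)

# Hypothetical descriptors for a solute (1) and a solvent (2).
dg_cross = hb_free_energy(alpha1=1.0, beta1=0.5, alpha2=0.2, beta2=0.8)
# Self-solvation: both donor-acceptor products are equal by construction.
dg_self = hb_free_energy(alpha1=1.0, beta1=0.5, alpha2=1.0, beta2=0.5)

print(f"RT ln10 at 25 C = {rt_ln10_kj():.3f} kJ/mol")
print(f"cross-interaction dG_hb = {dg_cross:.2f} kJ/mol")
print(f"self-solvation dG_hb = {dg_self:.2f} kJ/mol")
```

Because the expression is symmetric in the two molecules, the self-solvation case automatically gives equal acid-base and base-acid contributions, which is the consistency property the troubleshooting tables ask for.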

Q2: What are the best practices for validating LSER-derived thermodynamic parameters before use in predictive modeling?

Establish a multi-tier validation framework:

Tier 1: Cross-Model Comparison

- Compare LSER predictions with COSMO-RS calculations for the same systems

- For solvation enthalpies, ensure COSMO-RS calculations at TZVPD-Fine level for optimal accuracy [7]

- Discrepancies >5 kJ/mol warrant further investigation

Tier 2: Experimental Verification

- For partition coefficients, use consolidated log KOW values from multiple independent methods [8]

- Design validation experiments using systems with well-characterized HB interactions (alcohols, carboxylic acids)

Tier 3: Internal Consistency Checks

- Verify that for self-solvation, acid-base interactions are symmetric (aA ≈ bB for similar systems) [6]

- Check thermodynamic consistency between free energy and enthalpy contributions
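Two of the checks in this framework lend themselves to automation: the 5 kJ/mol discrepancy threshold from Tier 1 and the self-solvation symmetry test from Tier 3. The following is a minimal sketch with hypothetical numbers and hypothetical system names; it assumes both models report values in kJ/mol for the same set of systems.

```python
def flag_discrepancies(lser, cosmo, tolerance_kj=5.0):
    """Return {system: lser - cosmo} where the two models differ by more than tolerance."""
    flagged = {}
    for name, value in lser.items():
        if name in cosmo and abs(value - cosmo[name]) > tolerance_kj:
            flagged[name] = value - cosmo[name]
    return flagged

def self_solvation_asymmetry(a, A, b, B):
    """|aA - bB| for a solute dissolved in itself; ideally close to zero."""
    return abs(a * A - b * B)

# Hypothetical solvation enthalpies (kJ/mol) from the two approaches.
lser_values = {"system_1": -50.0, "system_2": -31.0}
cosmo_values = {"system_1": -43.5, "system_2": -29.0}

outliers = flag_discrepancies(lser_values, cosmo_values)
asymmetry = self_solvation_asymmetry(a=3.0, A=0.4, b=2.5, B=0.5)

print("needs further investigation:", outliers)
print(f"self-solvation asymmetry |aA - bB| = {asymmetry:.2f}")
```

What counts as an acceptable asymmetry is not specified in the source, so the second function only reports the difference and leaves the cutoff to the user.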

Q3: How can I handle systems where LSER coefficients are not available for my solvent of interest?

For solvents without established LSER coefficients, implement this decision framework:

Step 1: Analog Identification

- Identify solvents with similar chemical functionality and polarity

- Use Abraham descriptors or σ-profiles to quantify similarity

Step 2: Predictive Approaches

- Use quantum-chemical LSER descriptors derived from σ-profiles [6]

- Implement Partial Solvation Parameters with equation-of-state basis for extrapolation [3]

Step 3: Experimental Parameterization

- If experimental resources allow, determine system-specific coefficients by measuring log P or log Ks for 20-30 reference solutes with diverse descriptors

- Focus on solutes covering wide range of A, B, S, Vx values for robust regression
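Step 3 amounts to a multiple linear regression of measured log P values on the solute descriptors. The sketch below solves the normal equations in plain Python on a synthetic data set: the eight descriptor rows and the "true" coefficients are invented so that recovery can be checked, and a real parameterization would use the 20-30 reference solutes recommended above together with a dedicated statistics package.

```python
def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x

def fit_lser(descriptors, log_p):
    """Least-squares estimate of (c, e, s, a, b, v) from (E, S, A, B, Vx) rows."""
    rows = [[1.0, *d] for d in descriptors]  # leading 1.0 carries the constant c
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, log_p)) for i in range(k)]
    return solve(xtx, xty)

# Hypothetical solute descriptors (E, S, A, B, Vx), chosen to vary independently.
solutes = [
    (0.00, 0.00, 0.00, 0.00, 0.95),
    (0.61, 0.52, 0.00, 0.14, 0.72),
    (0.25, 0.42, 0.37, 0.48, 0.45),
    (0.80, 0.89, 0.60, 0.30, 0.78),
    (0.18, 0.70, 0.04, 0.49, 0.55),
    (0.87, 1.11, 0.00, 0.28, 0.89),
    (0.22, 0.60, 0.00, 0.45, 0.75),
    (0.96, 0.96, 0.26, 0.41, 0.82),
]
true_coeffs = [0.09, 0.56, -1.05, 0.03, -3.46, 3.81]  # illustrative c, e, s, a, b, v

# Noise-free synthetic "measurements" generated from the illustrative coefficients.
log_p = [sum(c * x for c, x in zip(true_coeffs, [1.0, *d])) for d in solutes]

fitted = fit_lser(solutes, log_p)
print([round(value, 3) for value in fitted])
```

With noise-free synthetic input the fit returns the generating coefficients; with real measurements the residuals and coefficient uncertainties would need to be examined before the coefficients are trusted.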

Table 3: Key Research Reagent Solutions for LSER Thermodynamic Studies

| Resource Category | Specific Tools/Methods | Application in LSER Research | Critical Considerations |
|---|---|---|---|
| Primary Databases | UFZ-LSER Database [5] | Source of solute descriptors (Vx, L, E, S, A, B) and solvent system coefficients | Always check domain of applicability; valid primarily for neutral chemicals [5] |
| Computational Tools | COSMO-RS (via COSMOtherm) [7] | A priori prediction of solvation properties; comparison with LSER predictions | Use TZVPD-Fine level for optimal accuracy in HB contribution estimation [7] |
| Experimental Validation | Consolidated log KOW approach [8] | Reference data for LSER parameterization and validation | Combine ≥5 independent estimates (experimental and computational) to reduce uncertainty |
| Specialized Descriptors | Quantum-chemical LSER descriptors [6] | More consistent HB interaction energies and free energies | Derived from σ-profiles available in COSMObase or via DFT calculations |

Experimental Workflows and Pathways

Diagram 1: LSER Parameter Extraction and Validation Workflow

Diagram 2: Hydrogen-Bonding Contribution Analysis Pathways

FAQs: Navigating the Arbitrariness in Intermolecular Interaction Models

FAQ 1: Why is there inherent arbitrariness in classifying intermolecular interactions, and how does this impact the LSER model?

The division of intermolecular interactions into distinct classes (e.g., dispersive, polar, hydrogen bonding) is not absolute or universally accepted, as it is fundamentally based on the strength and nature of the interacting species [3]. This introduces an inherent arbitrariness, which significantly impedes the direct exchange of rich thermodynamic information between different databases and modeling approaches, including the Linear Solvation Energy Relationship (LSER) model [3]. This lack of a unified framework makes it challenging to compare descriptors and system coefficients from different thermodynamic scales or QSPR-type databases directly.

FAQ 2: How can I ensure thermodynamic consistency when extracting hydrogen-bonding free energies from LSER equations?

A major challenge is using the LSER products (e.g., A₁a₂ and B₁b₂ for acidity and basicity) to validly estimate the free energy change upon the formation of specific acid-base hydrogen bonds [3]. The current use of LSER equations can lead to thermodynamic inconsistency, especially for self-solvation of hydrogen-bonded solutes, where the solute and solvent are identical, and the complementary interaction energies should be equal [9]. A thermodynamically consistent reformulation of the model, potentially using new quantum chemical (QC) descriptors, is required for reliable extraction [9].

FAQ 3: What computational methods can help quantify the relative importance of different interactions in a complex system?

Advanced quantum mechanical methods can deconstruct the total interaction energy into physically meaningful components. For instance, the Local Energy Decomposition (LED) scheme used with domain-based local pair natural orbital coupled cluster (DLPNO-CCSD(T)) calculations can quantify the contribution of London dispersion, electrostatics, and other forces to the stability of a system, such as a DNA duplex [10]. This helps move beyond qualitative classifications to quantitative allocations of interaction energy.

FAQ 4: How do weak interactions contribute significantly to stability in complex biological systems?

Although often classified as "weak," interactions such as hydrophobic effects and van der Waals forces are crucial for holding together cellular interaction networks [11]. While strong, stoichiometric complexes exist, computational analyses of interactomes show that the removal of weak, transient interactions can cause the entire network to fragment into disconnected subnetworks [11]. In DNA, London dispersion effects are essential for the stability of the duplex structure [10].

Troubleshooting Guides: Resolving Key Issues in LSER and Interaction Analysis

Issue: Discrepancies in Hydrogen-Bonding Strength Between LSER and Equation-of-State Models

Problem Description

Researchers encounter significant discrepancies when transferring hydrogen-bonding parameters (e.g., free energy, enthalpy) derived from the Abraham LSER model to other thermodynamic frameworks, such as SAFT or NRHB equation-of-state models [3] [9]. This leads to inaccurate predictions of phase equilibria and activity coefficients.

Investigation & Diagnostic Steps

- Verify Data Source Consistency: Check if the LSER coefficients and molecular descriptors were obtained from the same database version and fitting procedure. Inconsistencies often arise from using parameters regressed from different experimental datasets [3].

- Check for Thermodynamic Consistency in Self-Solvation: Test the parameters for a case where the solute and solvent are the same molecule (e.g., water in water). The current LSER model often fails this test, indicating a fundamental inconsistency in how complementary interactions are defined [9].

- Compare with ab initio Calculations: Perform or consult high-level quantum chemical calculations (e.g., COSMO-RS, DLPNO-CCSD(T)) to obtain an independent benchmark for the hydrogen-bonding energy of a simple dimer. Compare this value to that implied by the LSER products (A₁a₂, B₁b₂) [10] [9].

Resolution Protocols

- Adopt a Reformed QC-LSER Approach: Implement new molecular descriptors derived from quantum chemical surface charge distributions (e.g., COSMO sigma profiles) [9]. These provide a more rigorous and consistent basis for defining electrostatic and hydrogen-bonding contributions.

- Use Partial Solvation Parameters (PSP): Transition to using PSPs, which are designed with an equation-of-state thermodynamic basis to facilitate the extraction and transfer of information from the LSER database [3]. PSPs can be estimated over a range of conditions and provide separate parameters for dispersion (σd), polar (σp), acidity (σa), and basicity (σb).

- Calibrate Model Parameters: Use the hydrogen-bonding enthalpies and free energies derived from the reformed QC-LSER or PSP methods as external input for your equation-of-state model, ensuring consistency across platforms [9].

Issue: Different Experimental Methods Yield Contradictory Rankings of Interaction Importance

Problem Description: In the study of DNA duplex stability, different experimental techniques (e.g., AFM rupture force measurements vs. solution calorimetry) provide seemingly contradictory results: one suggests that hydrogen bonding is most critical, the other that base stacking is dominant [10].

Investigation & Diagnostic Steps

- Understand the Experimental Observable: Recognize that each technique measures a different proxy for stability. AFM measures mechanical rupture forces, while calorimetry measures solution free-energy parameters; the two are influenced by different ensembles of contributions (ionic concentration, entropy, etc.) [10].

- Deconstruct the Total Interaction Energy Computationally: Use advanced quantum mechanical energy decomposition analysis (EDA) or symmetry-adapted perturbation theory (SAPT) on a realistic model of the system to quantify the intrinsic contribution of each interaction type (electrostatic, dispersion, charge-transfer) without experimental confounding factors [10].

Resolution Protocols

- Reconcile Findings with Computational Insight: Accept that both experimental results can be valid but highlight different aspects of stability. Computational results can bridge this gap; for example, QM calculations on DNA confirm that Watson-Crick base pairing is the largest intrinsic stabilizing contribution, while stacking, though smaller, is essential for the duplex structure [10].

- Contextualize the Results: Frame the interpretation of any single experiment within its specific limitations and the nature of the property it directly measures. Avoid over-generalizing findings from one methodological domain to all others.

Issue: Accurately Quantifying Interacting Molecules from Super-Resolution Microscopy Data

Problem Description: In two-color Single-Molecule Localization Microscopy (SMLM) data, it is challenging to distinguish true biomolecular interactions from random colocalization due to finite localization precision (20-30 nm) and the stochastic nature of the data [12].

Investigation & Diagnostic Steps

- Calculate Proximity Probability: For each putative pair of localizations (A and B) from two channels, calculate the probability that their true separation distance lies within an expected interaction range, given the observed distance and the localization precisions (σA, σB) [12].

- Model the System as a Bipartite Graph: Represent all localizations from channel A and B as two sets of nodes in a graph. Connect nodes with an edge if their proximity probability is greater than zero, and use the probability as the edge weight [12].

- Identify the Most Probable Configuration: Apply a graph matching algorithm (like the Gaussian Mixture Optimization approach mentioned) to select the set of pairs that maximizes the total probability, respecting the constraint that each molecule pairs with at most one partner [12].

Resolution Protocols

- Correct for Spurious Colocalization: Perform Monte Carlo simulations of non-interacting particles at the same density as your experiment. Use the average number of random pairs from these simulations as a background estimate and subtract it from the counted pairs in your experimental data [12].

- Validate with Controls: Always include a negative control system with known non-interacting proteins to empirically determine the background colocalization rate under your specific imaging conditions.
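The background-correction step can be sketched in a few lines. This is a minimal illustration, not the algorithm of [12]: it assumes a square field of view, uniformly random non-interacting particles, and a plain distance cutoff in place of the probabilistic pairing. The function names, the 50 nm cutoff, and the example numbers are all hypothetical.

```python
import math
import random


def random_points(n, side_nm, rng):
    """Place n points uniformly at random in a square field of view."""
    return [(rng.uniform(0.0, side_nm), rng.uniform(0.0, side_nm)) for _ in range(n)]


def count_cross_pairs(points_a, points_b, max_dist_nm):
    """Count A-B pairs separated by at most max_dist_nm (plain distance cutoff)."""
    return sum(
        1
        for ax, ay in points_a
        for bx, by in points_b
        if math.hypot(ax - bx, ay - by) <= max_dist_nm
    )


def background_pairs(n_a, n_b, side_nm, max_dist_nm, n_sim=200, seed=0):
    """Average number of chance pairs over n_sim simulations of non-interacting particles."""
    rng = random.Random(seed)
    total = 0
    for _ in range(n_sim):
        total += count_cross_pairs(
            random_points(n_a, side_nm, rng),
            random_points(n_b, side_nm, rng),
            max_dist_nm,
        )
    return total / n_sim


def corrected_pair_count(observed_pairs, background):
    """Subtract the simulated background from the observed count, clamped at zero."""
    return max(0.0, observed_pairs - background)


# Hypothetical example: 20 molecules per channel in a 1 um x 1 um field, 50 nm cutoff.
bg = background_pairs(n_a=20, n_b=20, side_nm=1000.0, max_dist_nm=50.0)
print(f"background pairs: {bg:.2f}")
print(f"corrected count:  {corrected_pair_count(12, bg):.2f}")
```

For sparse fields, the simulated background should land close to the analytical estimate n_a · n_b · π · r² / area, which offers a quick sanity check on the simulation.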

Quantitative Data on Intermolecular Interactions

Table 1: Experimentally Derived Rupture Forces and Computed Interaction Energies for DNA Components

| Interaction Type | System / Method | Measured Energy / Force | Key Contribution |

|---|---|---|---|

| Hydrogen Bonding | G-C Base Pair (AFM) [10] | 20 pN rupture force | Electrostatic & London dispersion [10] |

| Hydrogen Bonding | A-T Base Pair (AFM) [10] | 14 pN rupture force | Electrostatic & London dispersion [10] |

| Stacking | DNA Bases (AFM) [10] | 2 pN rupture force | London dispersion [10] |

| Base Pairing | G-C vs. A-T (QM) [10] | Stronger than stacking | Major stability contributor |

| London Dispersion | DNA Duplex (QM/HFLD) [10] | Essential for stability | Crucial for duplex integrity |

Table 2: Performance of a Probabilistic Pair-Counting Algorithm in SMLM [12]

| Molecular Density (μm⁻²) | Localization Precision (nm) | Recall of Correct Pairs | Identification Error |

|---|---|---|---|

| 5 - 10 | 20 - 30 | ~90% | A few percent |

| Up to ~55 | 1 - 50 | >95% (typical) | A few percent |

Experimental Protocols

Objective: To predict the stability of organic molecular crystals and obtain a data-driven assessment of the contribution of different chemical groups to the lattice energy.

Materials:

- Curated Dataset: A set of molecular crystals from the Cambridge Structural Database (CSD).

- Software: DFT code (e.g., for PBE-D2 calculations), machine learning environment (e.g., scikit-learn), and atom-centered descriptor generator (e.g., for SOAP descriptors).

Methodology:

- Geometry Optimization: Optimize the crystal structure and its isolated molecular components in the gas phase using DFT.

- Compute Lattice Energy: Calculate the lattice (binding) energy, Δc, using the equation: Δc = Ecrystal - Emolecule_gas [13].

- Featurization: Represent each atom in the crystal and the gas-phase molecule using a symmetry-adapted descriptor (e.g., Smooth Overlap of Atomic Positions, SOAP).

- Build a Regression Model: Train a machine learning model (e.g., ridge regression) to predict the per-atom energy directly from the solid-phase atomic descriptors.

- Interpret Contributions: The trained model's weights allow you to estimate the contribution (δa) of each atom, or group of atoms, to the total lattice energy, identifying key stabilizing moieties.

Objective: To quantify the absolute number and proportion of interacting molecules from two-color SMLM datasets.

Materials:

- Imaging System: SMLM setup with two spectrally distinct fluorescent labels.

- Software: Custom probabilistic analysis code (as described in [12]).

Methodology:

- Data Acquisition: Obtain a two-color SMLM dataset of the target proteins, generating localization lists with coordinates and their precisions (σ).

- Calculate Pair Probabilities: For every possible pair of localizations (A from channel 1, B from channel 2), compute the proximity probability (P_prox) that their true distance is within the expected interaction range.

- Find the Most Likely Configuration: Construct a bipartite graph where localizations are nodes and P_prox values are edge weights. Use an optimization algorithm to select the set of pairs that maximizes the total probability, ensuring one-to-one matching.

- Background Correction: Simulate multiple datasets with the same molecular densities but no interactions. Calculate the average number of random pairs from these simulations and subtract this background from the counted pairs in the experimental data.

- Validation: Benchmark the pipeline on simulated data with known ground truth to confirm accuracy under your experimental conditions (density, precision).
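The one-to-one matching step can be illustrated with an exhaustive search over a tiny weight matrix. This is a sketch under stated assumptions rather than the optimization used in [12]: "total probability" is taken here to mean the sum of the edge weights, the P_prox values are invented, and the brute-force search is only practical for a handful of localizations (a dedicated assignment solver would be needed for real data).

```python
from itertools import permutations


def best_matching(weights):
    """Find the one-to-one pairing that maximizes the summed edge weight.

    weights[i][j] is the proximity probability between localization i
    (channel A) and localization j (channel B). Pairs with zero weight are
    treated as "no edge" and left unmatched. Exhaustive search: tiny inputs only.
    """
    n_a, n_b = len(weights), len(weights[0])
    transposed = n_a > n_b
    # Work with the smaller set as rows so every row can be assigned a column.
    w = [list(col) for col in zip(*weights)] if transposed else weights
    rows, cols = len(w), len(w[0])

    best_total, best_pairs = -1.0, []
    for perm in permutations(range(cols), rows):
        pairs = [(i, j) for i, j in enumerate(perm) if w[i][j] > 0.0]
        total = sum(w[i][j] for i, j in pairs)
        if total > best_total:
            best_total, best_pairs = total, pairs

    if transposed:
        best_pairs = [(j, i) for i, j in best_pairs]
    return sorted(best_pairs), best_total


# Hypothetical P_prox values: 2 localizations in channel A, 3 in channel B.
p_prox = [
    [0.9, 0.6, 0.0],
    [0.8, 0.1, 0.0],
]
pairs, total = best_matching(p_prox)
print(pairs, round(total, 3))
```

In this example a greedy choice would take the 0.9 edge first and end with a total of 1.0, whereas the exhaustive search pairs A0 with B1 and A1 with B0 for a total of 1.4. That difference is the reason a global matching is preferred over nearest-neighbor pairing.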

Research Reagent Solutions: Essential Computational Tools

Table 3: Key Computational Tools for Analyzing Intermolecular Interactions

| Tool / Reagent | Function / Description | Application in Troubleshooting |

|---|---|---|

| LSER Database [3] | A comprehensive database of solute descriptors and solvent coefficients for partition coefficient prediction. | The primary source for solvation parameters; requires careful handling for thermodynamic consistency. |

| Partial Solvation Parameters (PSP) [3] | An equation-of-state-based framework with descriptors (σd, σp, σa, σb) for dispersion, polar, acidity, and basicity interactions. | Facilitates extraction and transfer of thermodynamic information from LSER for use in other models. |

| QC-LSER Descriptors [9] | New molecular descriptors derived from quantum chemical surface charge distributions (e.g., from COSMO). | Provides a path for thermodynamically consistent reformulation of the LSER model. |

| HFLD/LED Scheme [10] | A quantum chemical method (Hartree–Fock plus London Dispersion with Local Energy Decomposition) for non-covalent interactions. | Quantifies the role of specific interaction components (e.g., dispersion) in complex systems like DNA. |

| SOAP Descriptors [13] | Symmetry-adapted atomic descriptors that encode the geometric environment of an atom. | Enables machine learning models to predict crystal lattice energies and assign atomic contributions. |

| Probabilistic Interaction Model [12] | An algorithm for counting interacting pairs from SMLM data based on localization precision and stoichiometry. | Corrects for spurious colocalization to quantify absolute numbers of bound complexes. |

Workflow and Relationship Diagrams

Diagram 1: A troubleshooting map outlining the core challenges stemming from the inherent arbitrariness in classifying intermolecular interactions and the corresponding solutions discussed in this guide.

Advanced Applications and Integration with Modern Thermodynamic Frameworks

Leveraging Partial Solvation Parameters (PSP) for Enhanced Predictions

Troubleshooting Guide & FAQs

This section addresses common challenges researchers face when determining and applying Partial Solvation Parameters (PSP) in pharmaceutical development.

FAQ 1: What are the primary advantages of using PSP over the Hansen Solubility Parameter (HSP) or Linear Solvation Energy Relationship (LSER) models?

PSP offers a more sound and versatile thermodynamic foundation compared to classical models. A key advantage is its ability to differentiate between the acidity and basicity of a molecule, which the Hansen Solubility Parameter does not. Furthermore, the PSP framework provides a unified approach that allows parameters to be readily converted to either classical solubility or LSER parameters, enabling better integration and comparison across different research databases and methodologies [14].

FAQ 2: My PSP predictions for a new drug compound are inaccurate. What could be the source of error?

Inaccuracies can stem from several sources in the experimental data. A common issue is the use of compiled datasets where different labs used non-standardized methods and experimental conditions. This introduces significant variability. To improve accuracy, ensure data is obtained using consistent, standardized protocols, ideally from a single source trained to perform all experiments uniformly [14].

FAQ 3: How can I calculate the hydrogen-bonding contribution to the cohesive energy density using PSPs?

The hydrogen-bonding contribution to the cohesive energy density (ced_HB) can be calculated using the acidity (σ_Ga) and basicity (σ_Gb) PSPs. The formula is derived from the number of hydrogen bonds per mole and the associated energy [14]:

ced_HB = - (r1 * ν11 * E_HB) / V_m

Where:

- r1 is a parameter calculated from the McGowan volume, V_x.

- ν11 is the total number of hydrogen bonds per mol.

- E_HB is the hydrogen-bonding energy, calculated as -30,450 * A * B (where A and B are the LSER descriptors).

- V_m is the molar volume.
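As a worked illustration, the formula above can be evaluated directly. The sketch below simply transcribes the two expressions quoted in this FAQ; the input values are hypothetical placeholders, units follow whatever convention the source [14] uses, and the calculation of r1 and ν11 themselves is not shown because the text does not specify it.

```python
def hydrogen_bond_energy(A, B):
    """E_HB = -30,450 * A * B, as quoted in the text (A, B are LSER descriptors)."""
    return -30450.0 * A * B


def ced_hb(r1, nu11, A, B, V_m):
    """ced_HB = -(r1 * nu11 * E_HB) / V_m, as quoted in the text."""
    return -(r1 * nu11 * hydrogen_bond_energy(A, B)) / V_m


# Hypothetical inputs, for illustration only.
print(hydrogen_bond_energy(A=0.5, B=0.4))
print(ced_hb(r1=1.0, nu11=0.5, A=0.5, B=0.4, V_m=100.0))
```

Because E_HB is negative for positive A and B, the leading minus sign makes ced_HB positive, as expected for a cohesive energy density contribution.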

FAQ 4: Can PSPs be used to predict the components of a drug's surface energy?

Yes, a specific benefit of the Partial Solvation Parameter approach is that it can be used to calculate the different surface energy contributions of a drug substance, providing valuable insight for formulations [14].

Experimental Protocols

Protocol 1: Determination of Drug PSPs Using Inverse Gas Chromatography (IGC)

This methodology details the experimental determination of Partial Solvation Parameters for drug compounds, a critical step for enhancing the accuracy of solvation models [14].

- Objective: To obtain experimental PSPs for a drug substance through Inverse Gas Chromatography, which will serve as input for predicting solubility and surface energy.

- Materials and Equipment:

- Gas chromatograph equipped with a suitable detector.

- Capillary column.

- Sample of the drug substance to be analyzed.

- Series of probe gases (e.g., alkanes, solvents with known properties). Research indicates that only a few probe gases are needed to get reasonable estimates of the drug PSPs [14].

- Procedure:

- Sample Preparation: Pack the drug substance into the capillary column as the stationary phase.

- System Calibration: Ensure the gas chromatograph system is properly calibrated.

- Data Collection: Inject a series of probe gases into the column and record their retention times and volumes.

- Data Analysis: Use the retention data to calculate the activity coefficients at infinite dilution for the various probes.

- PSP Calculation: The raw data from IGC is used to calculate the four key PSPs (dispersion, polarity, acidity, and basicity) for the drug, mapping the LSER descriptors according to established equations [14].

Protocol 2: Predicting Drug Solubility in Various Solvents Using Experimental PSPs

This protocol outlines how to use determined PSPs to predict a drug's solubility in different organic solvents, a key application in pre-formulation studies [14].

- Objective: To predict the solubility of a drug in a range of organic solvents using its previously determined PSPs.

- Materials and Equipment:

- Experimentally determined PSPs for the drug (from Protocol 1).

- Database of PSPs or LSER descriptors for the target solvents.

- Computational software for thermodynamic calculations.

- Procedure:

- Data Compilation: Obtain the PSPs for the solvents of interest.

- Activity Coefficient Calculation: For each drug-solvent pair, calculate the activity coefficient. The activity coefficient is considered a product of combinatorial and residual contributions. The residual part includes separate terms for dispersion, polar, and hydrogen-bonding interactions [14].

- Solubility Prediction: Use the calculated activity coefficients to predict the mole fraction solubility of the drug in each solvent.

- Validation: Where possible, validate the predictions with experimental solubility data.
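The final solubility step can be sketched as follows. The protocol does not state the working equation, so this example assumes the standard solid-liquid equilibrium relation ln x = ln x_ideal - ln γ, with the ideal solubility taken from the melting properties and heat-capacity corrections neglected. The function names and the melting data are hypothetical, and the activity coefficient is treated as a fixed input even though it generally depends on composition.

```python
import math

R = 8.314462618  # gas constant, J/(mol K)


def ideal_solubility(dH_fus, T_m, T):
    """Ideal mole-fraction solubility: ln x = -(dH_fus / R) * (1/T - 1/T_m).

    Assumption: heat-capacity terms are neglected.
    """
    return math.exp(-(dH_fus / R) * (1.0 / T - 1.0 / T_m))


def predicted_solubility(dH_fus, T_m, T, ln_gamma):
    """Mole-fraction solubility corrected by the activity coefficient: x = x_ideal / gamma."""
    return ideal_solubility(dH_fus, T_m, T) * math.exp(-ln_gamma)


# Hypothetical drug: dH_fus = 25 kJ/mol, T_m = 400 K, evaluated at 298.15 K.
x_ideal = ideal_solubility(25000.0, 400.0, 298.15)
x_real = predicted_solubility(25000.0, 400.0, 298.15, ln_gamma=math.log(2.0))
print(f"ideal: {x_ideal:.4f}, with gamma = 2: {x_real:.4f}")
```

When γ varies strongly with composition, the relation would have to be solved iteratively, recomputing γ at each trial mole fraction.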

Data Presentation

Table 1: Partial Solvation Parameter Definitions and Calculations

This table summarizes the core definitions and working equations for the four Partial Solvation Parameters [14].

| Parameter Type | Symbol | Molecular Descriptor Mapping | Equation |

|---|---|---|---|

| Dispersion PSP | σ_d | McGowan Volume (V_x) & Excess Refractivity (E) | σ_d = 100 * (3.1 * V_x + E) / V_m |

| Polarity PSP | σ_p | Polarity (S) | σ_p = 100 * S / V_m |

| Acidity PSP | σ_Ga | Acidity (A) | σ_Ga = 100 * A / V_m |

| Basicity PSP | σ_Gb | Basicity (B) | σ_Gb = 100 * B / V_m |
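The four mappings in Table 1 can be collected in one small helper. The sketch below transcribes the equations exactly as tabulated here; the class and function names are hypothetical, the descriptor values are placeholders, and the original reference [14] should be consulted for units and any refinements to these working equations.

```python
from dataclasses import dataclass


@dataclass
class PartialSolvationParameters:
    sigma_d: float   # dispersion PSP
    sigma_p: float   # polarity PSP
    sigma_Ga: float  # acidity PSP
    sigma_Gb: float  # basicity PSP


def psps_from_lser(V_x, E, S, A, B, V_m):
    """Map LSER descriptors to PSPs using the equations as written in Table 1."""
    scale = 100.0 / V_m
    return PartialSolvationParameters(
        sigma_d=scale * (3.1 * V_x + E),
        sigma_p=scale * S,
        sigma_Ga=scale * A,
        sigma_Gb=scale * B,
    )


# Hypothetical descriptor values, for illustration only.
print(psps_from_lser(V_x=1.0, E=0.5, S=0.6, A=0.3, B=0.4, V_m=100.0))
```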

Table 2: Key Formulae for Hydrogen-Bonding and Mixture Thermodynamics

This table provides essential formulae for calculating hydrogen-bonding interactions and activity coefficients in mixtures using the PSP framework [14].

| Property | Formula | Variables |

|---|---|---|

| Hydrogen-Bond Gibbs Energy | -G_HB = 2 * V_m * σ_Ga * σ_Gb | V_m: molar volume; σ_Ga, σ_Gb: acidity/basicity PSPs |

| Hydrogen-Bond Enthalpy | E_HB = -30,450 * A * B | A, B: LSER descriptors |

| Combinatorial Activity Coefficient (Flory-Huggins) | ln(γ₁ᶜ) = ln(φ₁/x₁) + (1 - r₁/r₂) * φ₂ | φ: volume fraction; x: mole fraction; r: volume parameter |
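The Flory-Huggins and hydrogen-bond Gibbs energy formulae in Table 2 can be evaluated as shown below. This is a minimal sketch: the volume fractions are assumed to follow the usual definition φ_i = x_i r_i / (x_1 r_1 + x_2 r_2), which the table does not spell out, and all names and numbers are illustrative.

```python
import math


def volume_fractions(x1, r1, r2):
    """Volume fractions of a binary mixture, assuming phi_i = x_i r_i / sum_j(x_j r_j)."""
    x2 = 1.0 - x1
    denom = x1 * r1 + x2 * r2
    return x1 * r1 / denom, x2 * r2 / denom


def ln_gamma1_combinatorial(x1, r1, r2):
    """ln(gamma_1^c) = ln(phi_1 / x_1) + (1 - r_1 / r_2) * phi_2 (Table 2)."""
    phi1, phi2 = volume_fractions(x1, r1, r2)
    return math.log(phi1 / x1) + (1.0 - r1 / r2) * phi2


def hydrogen_bond_gibbs_energy(V_m, sigma_Ga, sigma_Gb):
    """G_HB from -G_HB = 2 * V_m * sigma_Ga * sigma_Gb (Table 2)."""
    return -2.0 * V_m * sigma_Ga * sigma_Gb


# Hypothetical binary mixture: the solvent (2) is twice the size of the solute (1).
print(ln_gamma1_combinatorial(x1=0.5, r1=1.0, r2=2.0))
print(hydrogen_bond_gibbs_energy(V_m=100.0, sigma_Ga=0.3, sigma_Gb=0.4))
```

When both components have the same volume parameter, φ₁ equals x₁ and the combinatorial term vanishes, which is a convenient check on any implementation.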

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for PSP Determination

This table lists key materials used in the experimental determination of Partial Solvation Parameters.

| Item | Function in PSP Research |

|---|---|

| Inverse Gas Chromatograph | The primary instrument used to obtain raw retention data of probe gases on a drug stationary phase, which is essential for calculating experimental PSPs [14]. |

| Probe Gases | A series of characterized chemical vapors (e.g., n-alkanes, solvents of varying polarity). Their interactions with the drug sample reveal its surface energy and solvation properties [14]. |

| Drug Substance | The compound of interest, which is prepared as the stationary phase within the chromatographic column for analysis [14]. |

| Computational Software (e.g., for COSMO-RS) | Used for quantum chemical calculations to predict σ-profiles and derive PSPs, offering an alternative or complementary method to experimental IGC [14]. |

Workflow and Relationship Diagrams

PSP Determination Workflow

PSPs in Solubility Prediction

Integrating LSER with Equation-of-State Thermodynamics

Troubleshooting Guides

Issue 1: Discrepancies in Hydrogen-Bonding Energy Calculations

Problem Description: When extracting hydrogen-bonding free energy (ΔG_hb) from LSER data, the calculated values are inconsistent with experimental results or exhibit high uncertainty, particularly for systems with strong specific interactions.

Diagnosis and Solution:

| Diagnostic Step | Possible Cause | Recommended Action |

|---|---|---|

| Check descriptor-product consistency | Incorrect pairing of solute descriptors (A, B) with system coefficients (a, b) | Verify that the product A1a2 represents acid(1)-base(2) interaction and B1b2 represents base(1)-acid(2) interaction [3]. |

| Assess data quality for regression | LFER coefficients (a, b) determined from limited experimental data | Use solvents with extensively fitted coefficients; consult the LSER database for systems with high data density [3]. |

| Evaluate temperature dependence | Incorrect assumption of temperature-independent parameters | Implement temperature-dependent PSPs (σa, σb) via equation-of-state thermodynamics for ΔH_hb and ΔS_hb estimation [3]. |

| Probe physical consistency | Violation of fundamental thermodynamic constraints | Apply physics-informed regularization (e.g., enforcing C_V > 0, K_T > 0) during parameter estimation [15]. |

Validation Experiment:

- Objective: Validate calculated ΔG_hb against isothermal titration calorimetry (ITC) measurements.

- Protocol: Perform LSER analysis for a test solute in multiple solvents. For each system, calculate ΔG_hb from the LSER products (A1a2, B1b2) and compare with directly measured ΔG from ITC. A significant deviation (>1 kcal/mol) indicates a need to re-evaluate the LSER coefficients or molecular descriptors.
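The comparison in this protocol can be sketched as follows. The conversion from LSER products to a free energy is an assumption made for illustration: because the LSER terms contribute to a base-10 logarithm of an equilibrium constant, the hydrogen-bonding part is taken as ΔG_hb = -ln(10) · R · T · (A1a2 + B1b2). The descriptor values, coefficients, and ITC numbers are invented, and the function names are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol K)


def delta_g_hb(A1, a2, B1, b2, T=298.15):
    """Hydrogen-bonding free energy (kcal/mol) implied by the LSER products.

    Assumption: the A1*a2 + B1*b2 contribution to log10(K) is converted
    with the factor -ln(10) * R * T.
    """
    return -math.log(10) * R_KCAL * T * (A1 * a2 + B1 * b2)


def flag_deviations(systems, threshold=1.0):
    """Return the systems whose LSER-derived value deviates from ITC by more than threshold."""
    flagged = []
    for s in systems:
        dg = delta_g_hb(s["A1"], s["a2"], s["B1"], s["b2"], s.get("T", 298.15))
        if abs(dg - s["dG_itc"]) > threshold:
            flagged.append((s["solvent"], round(dg, 2), s["dG_itc"]))
    return flagged


# Hypothetical test solute in two solvents, with made-up ITC values.
systems = [
    {"solvent": "solvent_1", "A1": 0.5, "a2": 3.0, "B1": 0.4, "b2": 2.0, "dG_itc": -3.0},
    {"solvent": "solvent_2", "A1": 0.5, "a2": 3.0, "B1": 0.4, "b2": 2.0, "dG_itc": -5.0},
]
print(flag_deviations(systems))
```

Only the second system exceeds the 1 kcal/mol threshold here, so it would be the one to revisit.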

Issue 2: Poor Predictions for Solute Transfer Between Condensed Phases

Problem Description: The LSER model (log(P) = c_p + e_p E + s_p S + a_p A + b_p B + v_p Vx) yields inaccurate predictions for partition coefficients (P) between water and organic solvents or alkane-to-polar solvent systems.

Diagnosis and Solution:

| Diagnostic Step | Possible Cause | Recommended Action |

|---|---|---|

| Analyze residual patterns | Systematic error due to missing interaction term | Include every descriptor of the applicable equation (E, S, A, B, and Vx for condensed-phase transfer; L takes the place of Vx for gas-to-solvent systems); avoid omitting E [3]. |

| Check for descriptor cross-correlation | High multicollinearity between independent variables (e.g., S and E) | Apply regularized regression techniques or use latent variable models to handle correlated descriptors. |

| Verify system coefficient provenance | Use of system coefficients (e.g., vp, ep) fitted from a different class of compounds | Ensure system coefficients are derived from a diverse training set relevant to your solute class. |

| Inspect Vx descriptor accuracy | Error in McGowan’s characteristic volume calculation | Recompute Vx using accurate atomic contribution parameters and 3D molecular geometry. |

Validation Experiment:

- Objective: Determine the dominant source of error in a flawed partition coefficient prediction.

- Protocol: Measure the partition coefficient for a small set of reference solutes with well-established descriptors in the solvent system of interest. Compare predictions from the full model versus models with individual descriptors omitted. The model with the largest performance drop when a descriptor is removed indicates the most critical missing interaction term for your system.
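The descriptor-omission comparison can be sketched as a simple ablation. This example is a simplification of the protocol: each term is zeroed without refitting the remaining coefficients, whereas a full analysis would refit a reduced model. The coefficients, descriptor values, and reference data are synthetic placeholders.

```python
import math

TERMS = ("E", "S", "A", "B", "V")


def predict_log_p(coeffs, solute, omit=None):
    """log P = c + e*E + s*S + a*A + b*B + v*V, optionally leaving one term out."""
    value = coeffs["c"]
    for term in TERMS:
        if term != omit:
            value += coeffs[term.lower()] * solute[term]
    return value


def rmse(coeffs, solutes, omit=None):
    """Root-mean-square error against the measured log P values."""
    errors = [(predict_log_p(coeffs, s, omit) - s["logP_exp"]) ** 2 for s in solutes]
    return math.sqrt(sum(errors) / len(errors))


def ablation(coeffs, solutes):
    """RMSE increase caused by dropping each descriptor term (no refitting)."""
    full = rmse(coeffs, solutes)
    return {term: rmse(coeffs, solutes, omit=term) - full for term in TERMS}


# Hypothetical system coefficients and reference solutes (synthetic data).
coeffs = {"c": 0.1, "e": 0.5, "s": -1.0, "a": 0.0, "b": -3.5, "v": 3.8}
solutes = [
    {"E": 0.8, "S": 0.9, "A": 0.0, "B": 0.5, "V": 1.0},
    {"E": 0.6, "S": 0.5, "A": 0.3, "B": 0.3, "V": 0.8},
]
for s in solutes:
    s["logP_exp"] = predict_log_p(coeffs, s)  # stand-in for measured values

drops = ablation(coeffs, solutes)
print(max(drops, key=drops.get), drops)
```

The term with the largest RMSE increase is the one the predictions depend on most for this solute set; with real measurements, the same ranking points to the interaction that deserves the closest scrutiny.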

Issue 3: Failure of Linearity for Strong Specific Interactions

Problem Description: The fundamental LFER linearity breaks down for solute/solvent systems dominated by strong hydrogen bonding or acid-base interactions, leading to poor model fits.

Diagnosis and Solution:

| Diagnostic Step | Possible Cause | Recommended Action |

|---|---|---|

| Scrutinize the LSER equation | Improper application of the gas-phase vs. condensed-phase equation | Use log(K_S) = c_k + e_k E + s_k S + a_k A + b_k B + l_k L for gas-to-solvent systems and log(P) for condensed-phase transfer [3]. |

| Examine data for non-linear clusters | Distinct solvation regimes for different classes of solutes | Segment the data by chemical class and develop separate, cluster-specific models if physically justified. |

| Investigate compensatory effects | Inaccurate assumption of additive energy terms | Implement a joint learning framework (e.g., EOSNN) that can capture non-additive interactions from diverse data sources [15]. |

| Probe combinatorial binding | Formation of multi-site hydrogen bonding not captured by A/B | Consider advanced models that explicitly account for cooperative effects, beyond the simple A×a and B×b products. |

Frequently Asked Questions (FAQs)

Q1: What is the core thermodynamic justification for the linearity of LSER models, even for strong interactions? The linearity arises from the separable nature of the different interaction energy terms within the solvation process. The LSER framework treats the overall solvation free energy as a sum of approximately independent contributions from cavity formation (Vx), dispersion (L), polarity/polarizability (E, S), and hydrogen bonding (A, B). Each term is a product of a solute-specific descriptor (e.g., A, B) and a solvent-specific coefficient (e.g., a, b), which represents the complementary property of the solvent. This additivity is thermodynamically sound when the underlying interactions are not strongly coupled [3].

Q2: How can I extract meaningful enthalpy (ΔH) and entropy (ΔS) changes from the LSER database, which primarily provides free energy (ΔG) data? While the standard LSER provides log-based relationships for partition coefficients (related to ΔG), an analogous linear form exists for solvation enthalpies: ΔH_S = c_H + e_H E + s_H S + a_H A + b_H B + l_H L [3]. The coefficients for this equation are less commonly tabulated. A more robust approach is to use the Partial Solvation Parameter (PSP) framework. PSPs are built on EOS thermodynamics, allowing for the direct estimation of ΔG_hb, ΔH_hb, and ΔS_hb for hydrogen bonding based on the acidity and basicity PSPs (σa and σb) across a range of temperatures [3].

Q3: My experimental data is a mixture of P-V-T data from static compression and P-V-ΔE data from shock experiments. Can I still integrate this with LSER? Yes, but it requires a flexible, partially supervised learning approach. Traditional semi-empirical EOS models often fail here due to their need for complete data. Modern machine learning methods, like the proposed EOSNN, are designed to learn jointly from multiple data sources with different limitations. These models can be trained on diverse inputs, including your P-V-T and P-V-ΔE data, to infer a complete EOS surface, which can then be reconciled with LSER descriptors [15].

Q4: How can I quantify the uncertainty in my LSER-based predictions, especially when extrapolating? Uncertainty quantification is a key challenge in traditional LSER and EOS models. Advanced probabilistic deep learning models offer a solution by accounting for both aleatoric uncertainty (noise inherent in the data) and epistemic uncertainty (uncertainty in the model itself due to a lack of data). Implementing an uncertainty-aware model, such as a physics-regularized neural network, allows you to produce predictions with confidence intervals, making it clear when the model is extrapolating beyond reliable bounds [15].

Q5: What are the common pitfalls in transitioning from the Kamlet-Taft LSER to the Abraham LSER? The main pitfall is the inconsistent mapping of descriptors. While Kamlet-Taft's α and β are solvent acidity and basicity descriptors, Abraham's A and B are solute acidity and basicity descriptors. Furthermore, the system coefficients (a, b in Abraham; π*, α, β in Kamlet-Taft) have different physical meanings and scales. Do not assume a direct correlation. Always use a consistent set of descriptors and corresponding coefficients from a single model framework, and consult established cross-correlation studies if translation is necessary [3].

Experimental Data & Protocols

| Descriptor | Symbol | Thermodynamic Interpretation | Typical Experimental Source |

|---|---|---|---|

| McGowan Volume | Vx | Measures the endoergic cost of forming a cavity in the solvent | Calculated from atomic volumes and bond counts |

| Gas-Hexadecane Partition Coeff. | L | Characterizes dispersion (London) interactions | GC retention on non-polar stationary phases |

| Excess Molar Refraction | E | Captures polarizability due to π/n electrons | Measured from refractive index deviation |

| Dipolarity/Polarizability | S | Represents dipole-dipole & dipole-induced dipole interactions | Solvatochromic comparison methods |

| Hydrogen-Bond Acidity | A | Quantifies the solute's ability to donate a H-bond | Partitioning in carefully chosen solvent systems |

| Hydrogen-Bond Basicity | B | Quantifies the solute's ability to accept a H-bond | Partitioning in carefully chosen solvent systems |

Table 2: Comparison of EOS Modeling Approaches for Integration with LSER

| Model Type | Key Strengths | Key Limitations | Suitability for LSER Integration |

|---|---|---|---|

| Semi-Empirical (e.g., MGD) | Physically intuitive parameters; well-understood [15]. | Relies on strong assumptions (e.g., constant γ); poor flexibility [15]. | Moderate; good if assumptions hold, difficult otherwise. |

| Gaussian Process (GP) | Built-in uncertainty quantification; can incorporate physical constraints [15]. | Sensitive to kernel choice; poor scalability (O(n³)); requires complete data [15]. | High for small, clean datasets; low for large/mixed data. |

| Physics-Informed Neural Network (e.g., EOSNN) | High flexibility; works with mixed/partial data; scalable; can enforce physical laws [15]. | "Black box" nature; requires significant computational resources for training [15]. | Very High; can jointly learn from EOS and LSER data directly. |

Core Protocol: Validating LSER-EOS Integration with a Physics-Informed Neural Network

Objective: To train a unified model that accurately predicts thermodynamic properties by jointly learning from EOS surfaces and LSER-based descriptors.

Materials:

- Data: Combined dataset of P-V-T-E points from ab initio calculations and experimental solute partition coefficients with known LSER descriptors.

- Software: Python with deep learning libraries (e.g., PyTorch, TensorFlow) and a differential equation solver.

Methodology:

- Model Architecture: Implement a feed-forward neural network that takes volume (V) and temperature (T) as primary inputs.

- Physics-Informed Loss: Define a composite loss function (ℒ) that includes:

- Data Loss (ℒ_data): Mean squared error between predictions and observed P and E values.

- Physics Loss (ℒ_physics): Penalty for violating thermodynamic identities derived from EOS, e.g., P = −(∂E/∂V)_T.

- LSER Regularization (ℒ_LSER): Penalty for deviation of the model's predicted solvation free energies from the values projected by the LSER linear model for a set of reference solutes.

- Training: Minimize the total loss ℒ = ℒ_data + λ_physics ℒ_physics + λ_LSER ℒ_LSER, where λ_physics and λ_LSER are regularization hyperparameters.

- Validation: Assess the model on its ability to predict P-V-T-E relations outside the training set and accurately compute partition coefficients for novel solutes.
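The structure of the composite loss can be sketched without a deep learning library. The example below is not the EOSNN implementation of [15]: a hand-written toy function stands in for the neural network, the derivative in the physics term is taken by central finite differences rather than automatic differentiation, and the LSER term receives pre-computed pairs of model and LSER free energies because the protocol does not specify how the network produces them. All names and numbers are hypothetical.

```python
def toy_model(V, T, k=2.0, V0=1.0):
    """Toy stand-in for the network: returns (P, E) at volume V and temperature T.

    T is accepted for interface compatibility but unused in this toy form.
    """
    E = 0.5 * k * (V - V0) ** 2
    P = -k * (V - V0)
    return P, E


def composite_loss(model, data, lser_pairs, lam_physics=1.0, lam_lser=1.0, dV=1e-4):
    """Total loss = data loss + lam_physics * physics loss + lam_lser * LSER loss.

    data:       list of (V, T, P_obs, E_obs) tuples
    lser_pairs: list of (model_free_energy, lser_free_energy) for reference solutes
    """
    data_loss = 0.0
    physics_loss = 0.0
    for V, T, P_obs, E_obs in data:
        P, E = model(V, T)
        data_loss += (P - P_obs) ** 2 + (E - E_obs) ** 2
        # Residual of the identity quoted in the protocol, P = -(dE/dV)_T.
        dE_dV = (model(V + dV, T)[1] - model(V - dV, T)[1]) / (2.0 * dV)
        physics_loss += (P + dE_dV) ** 2
    data_loss /= len(data)
    physics_loss /= len(data)
    lser_loss = sum((g_m - g_l) ** 2 for g_m, g_l in lser_pairs) / len(lser_pairs)
    total = data_loss + lam_physics * physics_loss + lam_lser * lser_loss
    return total, data_loss, physics_loss, lser_loss


# Synthetic observations generated from the toy model itself.
data = [(V, 300.0, *toy_model(V, 300.0)) for V in (0.9, 1.0, 1.1)]
lser_pairs = [(-10.0, -10.0), (-4.2, -4.0)]
print(composite_loss(toy_model, data, lser_pairs))
```

In an actual training loop the three terms would be computed on network outputs and minimized with a gradient-based optimizer; the weights λ_physics and λ_LSER then control how strongly the physical and LSER constraints compete with the data fit.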

Workflow Visualization

Diagram 1: LSER-EOS Integration Workflow

Diagram 2: Troubleshooting Prediction Inaccuracy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for LSER-EOS Research

| Item Name | Function / Role in Research | Specification / Notes |

|---|---|---|

| Abraham Descriptor Dataset | Provides the core solute parameters (Vx, E, S, A, B) for LSER calculations. | Use the freely accessible LSER database. Ensure descriptors are for the correct temperature [3]. |

| Reference Solvent Set | A set of solvents with well-established LFER system coefficients for method calibration. | Should include apolar (alkanes), polar aprotic (e.g., DMSO), and protic (e.g., water, alcohols) solvents [3]. |

| Partial Solvation Parameters (PSP) | A thermodynamic framework to bridge LSER information with EOS models. | Used to estimate σa, σb, σd, σp and subsequently ΔG_hb, ΔH_hb, ΔS_hb [3]. |

| EOSNN Software Framework | A physics-informed neural network for joint learning of EOS from diverse data. | Allows for integration of incomplete P-V-T and P-V-ΔE data with physical constraints [15]. |

| Uncertainty Quantification Module | Tool to compute both aleatoric and epistemic uncertainties in predictions. | Critical for assessing model reliability, especially when extrapolating [15]. |

Estimating Free Energy and Enthalpy of Hydrogen Bonding from LSER Data

Frequently Asked Questions (FAQs)

FAQ 1: How can I quickly estimate hydrogen-bonding interaction energies for my LSER model? A new method combining quantum chemical calculations with the LSER approach allows for the straightforward prediction of hydrogen-bonding interaction energies. Each molecule is characterized by its proton donor capacity (α) and proton acceptor capacity (β). The hydrogen-bonding interaction energy between two molecules, 1 and 2, is calculated as: ΔE = c(α₁β₂ + α₂β₁), where c is a universal constant equal to 5.71 kJ/mol at 25°C. For identical molecules, the self-association energy is 2cαβ. These α and β descriptors are derived from molecular surface charge distributions obtained via DFT calculations, making them available even for unsynthesized compounds [16].
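The relation in FAQ 1 is simple enough to evaluate directly. The sketch below transcribes it as written, returning the value with the sign the formula gives; the α and β values are hypothetical, and in practice they would come from the DFT-based surface charge distributions described in [16]. As a side check, the quoted constant of 5.71 kJ/mol coincides numerically with ln(10) · R · T at 298.15 K.

```python
import math

C_KJ_PER_MOL = 5.71  # universal constant quoted in the text for 25 °C


def hb_interaction_energy(alpha1, beta1, alpha2, beta2, c=C_KJ_PER_MOL):
    """Delta E = c * (alpha1 * beta2 + alpha2 * beta1), in kJ/mol."""
    return c * (alpha1 * beta2 + alpha2 * beta1)


def self_association_energy(alpha, beta, c=C_KJ_PER_MOL):
    """Identical molecules: Delta E = 2 * c * alpha * beta."""
    return hb_interaction_energy(alpha, beta, alpha, beta, c)


# Hypothetical descriptors: molecule 1 is a pure donor, molecule 2 a pure acceptor.
print(hb_interaction_energy(alpha1=1.0, beta1=0.0, alpha2=0.0, beta2=1.0))
print(self_association_energy(alpha=1.0, beta=0.5))

# Numerical check of the constant against ln(10) * R * T at 298.15 K.
print(round(math.log(10) * 8.314462618 * 298.15 / 1000.0, 3))
```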

FAQ 2: My LSER model's performance dropped for polar compounds. What could be the issue?

A common pitfall is applying log-linear models that are robust only for nonpolar compounds. If your dataset includes mono- or bipolar compounds with significant hydrogen-bonding propensity, a log-linear model may correlate noticeably less well (e.g., R²=0.930, RMSE=0.742). For such cases, a full LSER model is superior because it explicitly accounts for hydrogen-bonding acidity (A) and basicity (B) terms. Ensure your model uses the complete LSER equation, such as: logK = constant + eE + sS + aA + bB + vV [17].

FAQ 3: Are there computational tools for predicting hydrogen-bonding strengths and hydration free energy? Yes, open-source tools like Jazzy are available for this purpose. Jazzy predicts atomic and molecular hydrogen-bond strengths and the free energy of hydration for small molecules. It calculates a free energy of hydration (ΔG_hydr) as the sum of three terms: a polar term (from donor and acceptor strengths), an apolar term (based on surface area and ring count), and an interaction term. It allows for the visualization of atomic hydrogen-bond strengths, supporting the design of compounds with desired properties [18].

Troubleshooting Guides

Problem: Inaccurate Prediction of Partition Coefficients for Hydrogen-Bonding Compounds

- Symptoms: Your LSER model shows significant deviations between experimental and predicted logK values for polar compounds, while predictions for nonpolar compounds remain accurate.

- Possible Causes & Solutions:

- Cause 1: Use of an oversimplified log-linear model. A model like logK_LDPE/water = 1.18*logK_O/W - 1.33 works well only for nonpolar, low hydrogen-bonding compounds (n=115, R²=0.985) [17].

- Solution: Transition to a full LSER model. For instance, a robust LSER for LDPE/water partitioning is logK = -0.529 + 1.098E - 1.557S - 2.991A - 4.617B + 3.886V [17]. This model, which includes hydrogen-bond acidity (A) and basicity (B) parameters, demonstrated high accuracy (n=156, R²=0.991, RMSE=0.264) across a chemically diverse compound set [17].

- Cause 2: Incorrect molecular descriptors, particularly for hydrogen-bonding.

- Solution: Adopt a consistent method for calculating α and β descriptors. The method based on COSMO-RS sigma-profiles is recommended, as it provides a quantum-chemical account of the hydrogen-bonding contribution and can handle conformational populations [16].
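To make the difference between the two models concrete, the sketch below evaluates both the log-linear and the full LSER equations quoted from [17]. The coefficients come from the text above; the class name, function names, and the descriptor values in the usage example are illustrative placeholders, not measured data for any real compound.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    """Abraham-type solute descriptors used by the LSER equation."""
    E: float  # excess molar refraction
    S: float  # dipolarity/polarizability
    A: float  # hydrogen-bond acidity
    B: float  # hydrogen-bond basicity
    V: float  # McGowan characteristic volume


def logk_ldpe_water_lser(d: SoluteDescriptors) -> float:
    """Full LSER for LDPE/water partitioning, coefficients as quoted from [17]."""
    return (-0.529 + 1.098 * d.E - 1.557 * d.S
            - 2.991 * d.A - 4.617 * d.B + 3.886 * d.V)


def logk_ldpe_water_loglinear(logk_ow: float) -> float:
    """Log-linear model from [17]; reported as reliable only for nonpolar compounds."""
    return 1.18 * logk_ow - 1.33


# Placeholder inputs chosen only to show the call pattern (not real data).
example = SoluteDescriptors(E=0.8, S=0.9, A=0.6, B=0.3, V=0.8)
difference = logk_ldpe_water_lser(example) - logk_ldpe_water_loglinear(1.5)
print(f"LSER minus log-linear prediction: {difference:.2f} log units")
```

A large gap between the two predictions for a compound with non-zero A or B is a hint that the log-linear model is being applied outside its reliable range.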

Problem: Experimentally Determining Reliable Partition Coefficients for Model Calibration

- Symptoms: High experimental variance in measured polymer/water partition coefficients, especially for polar molecules, leading to poor model calibration.

- Possible Causes & Solutions:

- Cause: The material state of the polymer can influence sorption. For instance, sorption of polar compounds into non-purified, pristine LDPE can be up to 0.3 log units lower than into purified, solvent-extracted LDPE [17].

- Solution: Standardize polymer purification before experiments. For worst-case (maximum) leaching estimates in risk assessments, use partition coefficients derived from purified polymers and ignore kinetic information to simulate equilibrium conditions [17].

The table below compares different computational approaches relevant to estimating hydrogen-bonding energies and solvation properties.

Table 1: Comparison of Computational Methods for Hydrogen-Bonding and Solvation Properties

| Method / Tool | Primary Application | Key Outputs | Key Inputs / Descriptors | Underlying Principle |
|---|---|---|---|---|
| Novel α/β Method [16] | Predicting H-bond interaction energies | Hydrogen-bonding interaction energy (ΔE) | Acidity (α) and basicity (β) descriptors from COSMO | Linear relationship: ΔE = c(α₁β₂ + α₂β₁) |
| LSER Model [17] | Predicting partition coefficients | logK (e.g., logK_LDPE/W) | E, S, A, B, V solute descriptors | Multivariate linear regression using solvation parameters |
| Jazzy Tool [18] | Predicting H-bond strengths & hydration free energy | Atomic/molecular H-bond strengths, ΔG_hydr | Partial charges, van der Waals radii (via kallisto) | Sum of polar, apolar, and interaction terms |

Experimental Protocols

Protocol 1: Calculating Hydrogen-Bonding Interaction Energies Using the α/β Method

This protocol describes how to calculate hydrogen-bonding interaction energies for use in LSERs or other thermodynamic models [16].

- Molecular Descriptor Calculation: For each molecule, perform a DFT calculation with a continuum solvation model (like COSMO) to obtain the surface charge distribution (sigma-profile).

- Acidity/Basicity Determination: From the sigma-profile, calculate the molecular descriptors for proton donor capacity (acidity, α) and proton acceptor capacity (basicity, β).

- Energy Calculation: For an interaction between two molecules (1 and 2), calculate the overall hydrogen-bonding interaction energy (in kJ/mol) using the formula: ΔE = 5.71 × (α₁β₂ + α₂β₁).

- Validation: For self-associating molecules, the self-association energy can be used for validation and is given by ΔE_self = 2 × 5.71 × αβ.
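The energy and validation steps of Protocol 1 can be sketched in a few lines. The constant 5.71 kJ/mol and both formulas are taken from the protocol text; the function names are hypothetical, and the α and β values must come from the user's own COSMO-based calculation.

```python
HB_CONSTANT_KJ_PER_MOL = 5.71  # constant quoted in Protocol 1 [16]


def hb_interaction_energy(alpha1, beta1, alpha2, beta2):
    """Hydrogen-bonding interaction energy between molecules 1 and 2 (kJ/mol).

    Implements dE = 5.71 * (alpha1*beta2 + alpha2*beta1).
    """
    return HB_CONSTANT_KJ_PER_MOL * (alpha1 * beta2 + alpha2 * beta1)


def hb_self_association_energy(alpha, beta):
    """Self-association energy, dE_self = 2 * 5.71 * alpha * beta (kJ/mol)."""
    return hb_interaction_energy(alpha, beta, alpha, beta)
```

Expressing the self-association case as a call to the general function makes the factor of 2 explicit: it arises because both cross terms are identical when the two molecules are the same.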

Protocol 2: Building a Robust LSER Model for Partitioning

This protocol outlines the steps for developing a linear solvation energy relationship for partition coefficients, incorporating hydrogen-bonding effects [17].

- Data Collection: Compile an experimental dataset of partition coefficients (e.g., logK_LDPE/W) for a chemically diverse set of compounds. The set should span a wide range of molecular weight, hydrophobicity, and hydrogen-bonding propensity.

- Descriptor Acquisition: For each compound, obtain the five core LSER descriptors: excess molar refractivity (E), dipolarity/polarizability (S), hydrogen-bond acidity (A), hydrogen-bond basicity (B), and McGowan's characteristic molecular volume (V).

- Model Calibration: Perform a multiple linear regression of the experimental logK values against the five descriptors (E, S, A, B, V) to obtain the model coefficients.

- Model Validation: Validate the model using a test set of compounds not included in the calibration. Compare its performance against simpler models (e.g., log-linear octanol-water models) to demonstrate superiority, particularly for polar compounds.
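The calibration and validation steps of Protocol 2 can be sketched with ordinary least squares in NumPy. This is a minimal illustration under stated assumptions: the data are synthetic, generated from the LDPE/water coefficients quoted from [17] plus random noise, and the function names, noise level, descriptor ranges, and the 120/36 split are arbitrary choices for the example rather than part of the published procedure.

```python
import numpy as np


def fit_lser(descriptors, log_k):
    """Least-squares fit of logK = c + eE + sS + aA + bB + vV.

    descriptors: array of shape (n, 5) with columns E, S, A, B, V.
    Returns the six coefficients [c, e, s, a, b, v].
    """
    descriptors = np.asarray(descriptors, dtype=float)
    design = np.column_stack([np.ones(len(descriptors)), descriptors])
    coeffs, *_ = np.linalg.lstsq(design, np.asarray(log_k, dtype=float), rcond=None)
    return coeffs


def predict_lser(coeffs, descriptors):
    """Evaluate the fitted LSER for an (n, 5) descriptor array."""
    return coeffs[0] + np.asarray(descriptors, dtype=float) @ coeffs[1:]


# Synthetic stand-in for an experimental dataset (NOT real measurements).
rng = np.random.default_rng(0)
true_coeffs = np.array([-0.529, 1.098, -1.557, -2.991, -4.617, 3.886])
descriptors = rng.uniform(0.0, 2.0, size=(156, 5))
log_k = predict_lser(true_coeffs, descriptors) + rng.normal(0.0, 0.05, size=156)

# Calibrate on one subset, validate on compounds held out of the fit.
train, test = slice(0, 120), slice(120, 156)
coeffs = fit_lser(descriptors[train], log_k[train])
residuals = predict_lser(coeffs, descriptors[test]) - log_k[test]
test_rmse = float(np.sqrt(np.mean(residuals ** 2)))
print("fitted coefficients:", np.round(coeffs, 3))
print("held-out RMSE:", round(test_rmse, 3))
```

With real data, the held-out set should be chosen to cover polar as well as nonpolar compounds, so that the validation step actually tests the hydrogen-bonding terms.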

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item | Function in Research |
|---|---|
| Purified LDPE Material | A standardized polymer substrate for experimental determination of partition coefficients, crucial for generating high-quality calibration data for LSER models [17]. |
| COSMO-RS Software | A quantum chemistry-based method used to generate the sigma-profiles and molecular surface charge densities required for calculating the α and β hydrogen-bonding descriptors [16]. |
| Jazzy Open-Source Tool | A computational tool for the fast prediction of hydrogen-bond strengths and free energy of hydration, useful for featurization and interactive compound design [18]. |
| DFT Calculation Package | Software (e.g., Gaussian, ORCA) for performing the underlying quantum chemical calculations to obtain electron densities and partial charges needed for descriptors in tools like Jazzy or the α/β method [18] [16]. |

Workflow Diagram

The diagram below illustrates the integrated workflow for using experimental data and computational tools to build and refine LSER models with accurate hydrogen-bonding energy terms.

Workflow for LSER Model Development

Welcome to the LSER Research Support Center

This support center is designed for researchers and scientists working to improve the accuracy and precision of the Linear Solvation Energy Relationship (LSER) model, especially when dealing with polar and hydrogen-bonding compounds. Here you will find targeted troubleshooting guides, detailed experimental protocols, and essential resources to advance your solvation thermodynamics research.

Frequently Asked Questions & Troubleshooting

Q1: What are the key limitations of traditional LSER models when dealing with polar and hydrogen-bonding compounds?

Traditional LSER models, while highly successful, face specific challenges with polar and hydrogen-bonding interactions:

- Parameter Ambiguity: The model's solvent-specific coefficients (e.g., s₂, a₂, b₂ in the solvation free energy equation) are typically determined via multilinear regression. This can make it difficult to isolate and unambiguously interpret the specific physical contributions from polar and hydrogen-bonding interactions [19].

- Data Dependency: Extending the LSER model to new solvents requires a substantial amount of critically compiled experimental data to reliably determine the six required parameters, which can be a bottleneck for research on novel compounds [19].

- Inconsistent Coefficients: The coefficients obtained for the solvation free energy equation and for the solvation enthalpy equation are often "very different," complicating a unified molecular-level understanding of these interactions [19].

Q2: How can COSMO-based quantum chemical calculations help overcome these limitations?

COSMO (Conductor-like Screening Model) calculations provide a powerful, prediction-oriented alternative to purely empirical correlations.

- Novel Molecular Descriptors: COSMO-type calculations are used to develop new, quantum chemically-based molecular descriptors for a solute's electrostatic interactions. These descriptors are derived from molecular surface charge distributions (σ-profiles) and offer a more fundamental characterization of a molecule's potential for polar and hydrogen-bonding interactions [19] [16].

- Reduced Parameter Set: The new method based on these descriptors requires only one to three solvent-specific parameters for predicting solvation free energies, significantly simplifying the model compared to the six parameters needed in the traditional LSER approach [19].

- Prediction of Hydrogen-Bonding Energies: A specific application allows for the straightforward prediction of hydrogen-bonding interaction energies using acidity (α) and basicity (β) descriptors derived from COSMO calculations. The interaction energy between two molecules is given by a universal constant multiplied by (α₁β₂ + α₂β₁) [16].

Q3: What is a practical protocol for implementing this new COSMO-based approach?

The following table outlines a general workflow for deriving and using the new descriptors [19] [16].

| Step | Action | Key Details |
|---|---|---|
| 1. Input Structure | Generate a 3D molecular structure for the compound of interest. | Ensure the structure is energetically minimized. |
| 2. Quantum Chemical Calculation | Perform a DFT/COSMO calculation. | Use an appropriate density functional and basis set to compute the molecule's σ-profile (surface charge distribution). |
| 3. Descriptor Extraction | Calculate the new molecular descriptors from the σ-profile. | This yields descriptors for the solute's electrostatic, dispersion, and hydrogen-bonding character. For HB, extract α (acidity) and β (basicity). |
| 4. Model Application | Input descriptors into the new solvation model. | Use the descriptors with the corresponding solvent-specific parameters to predict solvation free energies or hydrogen-bonding interaction energies. |

Q4: How do I integrate these new methods with existing LSER-based research frameworks?

The COSMO-based approach is designed to be complementary to the established LSER model.

- Reference Data: The new model often uses Abraham's LSER model to provide reference solvation free energy data for determining its own solvent-specific parameters [19].

- Information Transfer: The descriptors and partial solvation parameters (PSP) generated by the new method can be translated into formats analogous to Hansen Solubility Parameters, thereby enlarging the application range of both frameworks [19].

- Hybrid Strategy: Researchers can use the LSER model for well-characterized systems while employing the COSMO-based method for new, data-sparse compounds or to gain deeper mechanistic insight into specific interaction contributions.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and theoretical "reagents" essential for work in this field.

| Item / Concept | Function & Explanation |
|---|---|
| σ-Profile (Sigma-Profile) | A quantum-chemically derived histogram of a molecule's surface charge density. It serves as the fundamental descriptor for a molecule's polarity and hydrogen-bonding propensity in COSMO-based models [19] [16]. |
| Acidity (α) & Basicity (β) Descriptors | Molecular descriptors quantifying a molecule's capacity to donate (α) or accept (β) a proton in a hydrogen bond. They are used to predict hydrogen-bonding interaction energies [16]. |
| Partial Solvation Parameters (PSP) | Parameters analogous to Hansen Solubility Parameters, derived from solvation enthalpy and free-energy information. They help characterize a solvent's interaction capacity and extend the range of solubility predictions [19]. |
| Abraham's LSER Descriptors (E, S, A, B, V, L) | The established set of empirical molecular descriptors (excess molar refraction, dipolarity/polarizability, hydrogen-bond acidity, hydrogen-bond basicity, McGowan's characteristic volume, and the gas/n-hexadecane partition coefficient) used in the traditional LSER model for correlating solvation data [19]. |

Experimental & Computational Workflows

Workflow for Advanced Solvation Modeling

This diagram illustrates the integrated workflow for moving beyond traditional log-linear models by combining LSER and COSMO-based approaches.

Identifying and Overcoming Common LSER Pitfalls and Model Limitations

Critical Analysis of Weak Correlations for Polar Compounds

Linear Solvation Energy Relationships (LSERs) are a quantitative approach for predicting partition coefficients and solvation properties in pharmaceutical and environmental research. The standard Abraham LSER model correlates a compound's free-energy-related properties with its molecular descriptors through the equation: logK = c + eE + sS + aA + bB + vV [19] [3]. The descriptors are E (excess molar refraction), S (dipolarity/polarizability), A (hydrogen-bond acidity), B (hydrogen-bond basicity), and V (McGowan's characteristic volume) [3].