A Practical Guide to Determining LSER Solute Descriptors for Drug Discovery and Biomolecular Research

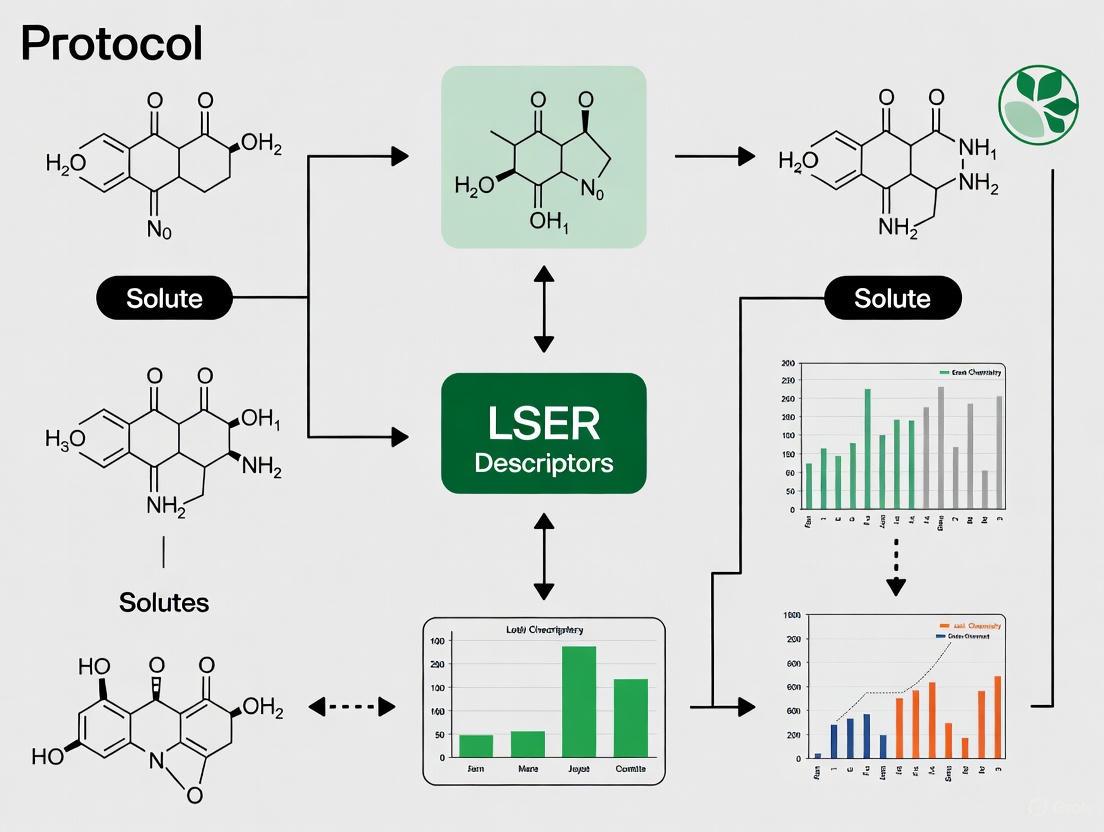


Abstract

This article provides a comprehensive protocol for determining Linear Solvation Energy Relationship (LSER) solute descriptors, a critical tool for predicting solute partitioning and biomolecular interactions. Tailored for researchers and drug development professionals, it covers the foundational theory of the Abraham solvation parameter model, details established and emerging computational methods for descriptor determination, and offers strategies for troubleshooting and validating results. By integrating traditional thermodynamics with modern machine learning approaches, this guide serves as a vital resource for accelerating solvent selection, predicting pharmacokinetic properties, and enabling rational design in chemical and pharmaceutical development.

Understanding LSER Fundamentals: The Abraham Solvation Parameter Model and Its Six Key Descriptors

Linear Solvation Energy Relationships (LSERs) represent a powerful quantitative approach for predicting the partitioning behavior and solubility of chemical compounds. Evolving from early solvent polarity scales, the LSER framework, formalized by Abraham, uses a set of solute-specific descriptors to model and predict complex physicochemical properties across diverse biological and environmental systems. This makes LSERs an indispensable tool for researchers in drug development and environmental chemistry, enabling robust predictions of a molecule's behavior without exhaustive laboratory experimentation for every new compound.

Theoretical Foundation: The LSER Equation

The predictive power of LSERs is encapsulated in a multiple linear regression equation that relates a free-energy related property (e.g., log of a partition coefficient) to fundamental molecular interactions:

SP = c + eE + sS + aA + bB + vV

The following table defines the descriptors and system constants in the LSER model:

Table 1: Components of the Abraham LSER Equation

| Symbol | Type | Description | Represents |
|---|---|---|---|
| SP | Dependent Variable | Solute Property | The log of a measured property (e.g., log K) |
| c | System Constant | Regression Constant | System-specific intercept |
| E | Solute Descriptor | Excess Molar Refractivity | The solute's ability to interact via π- and n-electron pairs |
| S | Solute Descriptor | Dipolarity/Polarizability | The solute's dipole moment and polarizability |
| A | Solute Descriptor | Overall Hydrogen-Bond Acidity | The solute's ability to donate a hydrogen bond |
| B | Solute Descriptor | Overall Hydrogen-Bond Basicity | The solute's ability to accept a hydrogen bond |
| V | Solute Descriptor | McGowan's Characteristic Volume | The solute's molecular size |
| e, s, a, b, v | System Constants | System Coefficients | Quantify the system's sensitivity to each interaction |
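As a minimal sketch of how the equation is evaluated, the Python below computes SP for one solute in one system. The benzene descriptors are those listed in this article's descriptor table; the system constants are an assumption, chosen to resemble commonly quoted water-octanol values, and should be checked against a primary source before any real use. The class and function names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    E: float  # excess molar refractivity
    S: float  # dipolarity/polarizability
    A: float  # overall hydrogen-bond acidity
    B: float  # overall hydrogen-bond basicity
    V: float  # McGowan characteristic volume


@dataclass(frozen=True)
class SystemConstants:
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float


def predict_sp(solute: SoluteDescriptors, system: SystemConstants) -> float:
    """Evaluate SP = c + eE + sS + aA + bB + vV."""
    return (
        system.c
        + system.e * solute.E
        + system.s * solute.S
        + system.a * solute.A
        + system.b * solute.B
        + system.v * solute.V
    )


# Benzene descriptors as listed in this article's table of selected compounds.
benzene = SoluteDescriptors(E=0.610, S=0.520, A=0.000, B=0.140, V=0.716)

# ASSUMPTION: illustrative constants resembling published water-octanol values.
octanol_water = SystemConstants(c=0.088, e=0.562, s=-1.054, a=0.034, b=-3.460, v=3.814)

log_p = predict_sp(benzene, octanol_water)
print(f"Predicted log P for benzene: {log_p:.2f}")
```

Keeping the solute and the system in separate objects mirrors the model itself: the same descriptor set can be reused with any system whose constants are known.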

Experimental Protocols for Determining Solute Descriptors

Determining the five solute descriptors (E, S, A, B, V) requires a combination of experimental measurements and computational methods. The following protocols outline the standard methodologies.

Protocol 3.1: Determination of Descriptor V (McGowan's Characteristic Volume)

Principle: The McGowan Characteristic Volume is calculated from the molecular structure and represents the size of the molecule, which influences cavity formation in the solvent.

Materials:

- Software: Molecular modeling software (e.g., Avogadro, ChemDraw) or a standardized calculation spreadsheet.

- Data: Molecular structure and table of atomic volumes.

Procedure:

- Draw Molecular Structure: Create a precise 2D or 3D model of the solute molecule.

- Sum Atomic Volumes: Sum the characteristic atomic volumes for all atoms in the molecule. Standard values are available in the literature (e.g., carbon = 16.35, hydrogen = 8.71, oxygen = 12.43 cm³/mol).

- Apply Correction: Subtract 6.56 cm³/mol for each bond in the molecule, counting every bond once regardless of whether it is single, double, or triple. This accounts for the overlap between connected atoms.

- Record Value: Divide the result by 100 to obtain the V descriptor, which is conventionally reported in units of (cm³ mol⁻¹)/100.
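The arithmetic of this protocol can be sketched in a few lines of Python. The sketch uses only the three atomic volumes quoted above and counts every bond once whatever its order, which is the usual McGowan convention. It also assumes the standard shortcut that a connected molecule with N atoms and R rings has N − 1 + R bonds; the function name and arguments are illustrative, not part of any published tool.

```python
# Characteristic atomic volumes in cm^3/mol (values quoted in the protocol above).
ATOMIC_VOLUMES = {"C": 16.35, "H": 8.71, "O": 12.43}
BOND_CORRECTION = 6.56  # cm^3/mol subtracted for every bond, regardless of bond order


def mcgowan_volume(atom_counts: dict, n_rings: int = 0) -> float:
    """Return V in (cm^3/mol)/100 for a single connected molecule.

    ASSUMPTION: the bond count is taken as N_atoms - 1 + N_rings, which holds
    for any connected molecular graph when each bond is counted once.
    """
    n_atoms = sum(atom_counts.values())
    n_bonds = n_atoms - 1 + n_rings
    total = sum(ATOMIC_VOLUMES[element] * n for element, n in atom_counts.items())
    return (total - BOND_CORRECTION * n_bonds) / 100.0


v_benzene = mcgowan_volume({"C": 6, "H": 6}, n_rings=1)    # 12 bonds
v_butanol = mcgowan_volume({"C": 4, "H": 10, "O": 1})      # 14 bonds
print(f"benzene V = {v_benzene:.4f}, butan-1-ol V = {v_butanol:.4f}")
```

Both results round to the V values listed for benzene and butan-1-ol in the article's table of selected compounds, which is a convenient check on the bond-counting convention.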

Protocol 3.2: Determination of Descriptor E (Excess Molar Refractivity)

Principle: The E descriptor is derived from the measured refractive index of the solute and indicates its polarizability.

Materials:

- Instrument: Refractometer.

- Reagents: Solute of high purity, standard solvent (if measurement is not done on neat liquid).

Procedure:

- Calibrate Instrument: Calibrate the refractometer with a standard, such as deionized water.

- Measure Refractive Index: If the solute is a liquid at room temperature, measure its refractive index (n_D) directly. For solids, measure the refractive index of a solution and extrapolate.

- Calculate Molar Refraction: Compute the molar refraction (R) using the Lorentz-Lorenz equation: \( R = \frac{n_D^2 - 1}{n_D^2 + 2} \times \frac{MW}{\rho} \), where MW is the molar mass and ρ is the density.

- Calculate E Descriptor: The E descriptor is the molar refraction of the solute minus that of an alkane of the same characteristic volume, divided by 10: \( E = (R_{solute} - R_{alkane}) / 10 \).
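The two calculation steps of this protocol can be written as a short Python sketch. It follows the formulas exactly as stated in this protocol; the input numbers (refractive index, molar mass, density, and especially the reference alkane refraction) are hypothetical placeholders, and the function names are illustrative.

```python
def molar_refraction(n_d: float, molar_mass: float, density: float) -> float:
    """Lorentz-Lorenz molar refraction in cm^3/mol.

    molar_mass in g/mol, density in g/cm^3, n_d is the measured refractive index.
    """
    f_n = (n_d ** 2 - 1.0) / (n_d ** 2 + 2.0)
    return f_n * molar_mass / density


def excess_molar_refractivity(r_solute: float, r_alkane: float) -> float:
    """E = (R_solute - R_alkane) / 10, as defined in the protocol above."""
    return (r_solute - r_alkane) / 10.0


# HYPOTHETICAL inputs, chosen only to exercise the functions.
r_solute = molar_refraction(n_d=1.5, molar_mass=78.11, density=0.8765)
r_alkane = 20.0  # placeholder for the refraction of an alkane of equal volume
e_descriptor = excess_molar_refractivity(r_solute, r_alkane)
print(f"R = {r_solute:.2f} cm^3/mol, E = {e_descriptor:.3f}")
```

The reference alkane value has to come from a separate correlation or table; the sketch deliberately leaves it as an input rather than guessing how it is obtained.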

Protocol 3.3: Determination of Descriptors S, A, and B via Gas-Chromatographic (GC) Method

Principle: Descriptors S (dipolarity), A (hydrogen-bond acidity), and B (hydrogen-bond basicity) are determined by measuring gas-liquid partition coefficients (log K) on multiple stationary phases with characterized LSER system constants.

Materials:

- Equipment: Gas Chromatograph with Flame Ionization Detector (FID).

- Columns: A set of at least 6-8 different capillary GC columns with known LSER system constants (e.g., squalane, OV-225, Triton X-305).

- Reagents: Solute of interest, unretained marker (e.g., methane) for dead-time measurement, high-purity solvents for dilution.

Procedure:

- Prepare Solute Solution: Dissolve the solute in a volatile solvent to create a dilute injection solution.

- Measure Retention Times:

- For each column, inject the solute and an unretained marker (e.g., methane).

- Record the retention time of the solute (tR) and the unretained marker (tM).

- Calculate Partition Coefficient:

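- Calculate the specific retention volume, \( V_g \), from the adjusted retention time (tR − tM), the carrier-gas flow rate, and the mass of stationary phase.

- The gas-liquid partition coefficient K is proportional to \( V_g \) (through the stationary-phase density and the column temperature), so log K is obtained from log \( V_g \) after this conversion.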

- Perform Multivariate Regression:

- Compile the log K values measured on all columns.

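- Using a statistical software package, perform a multiple linear regression of the measured log K values against the known system constants (e, s, a, b, l) for each column, treating the solute descriptors as the unknowns. E is already known from Protocol 3.2, so its term (together with the intercept c) can be subtracted from log K before fitting.

- The regression yields the solute's descriptors S, A, and B as fitted coefficients, together with L, the descriptor that multiplies the l constant in the gas-phase form of the equation.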
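The regression step can be sketched with NumPy's least-squares solver. Everything numerical here is hypothetical: the six rows of system constants are invented for illustration, and the log K values are generated from an assumed set of descriptors so that the fit can be checked. The sketch treats E as known and solves for S, A, B, and L from the gas-phase form log K = c + eE + sS + aA + bB + lL.

```python
import numpy as np

# HYPOTHETICAL system constants (c, e, s, a, b, l), one row per stationary phase.
columns = np.array([
    [-0.20,  0.10, 0.05, 0.00, 0.00, 0.58],
    [-0.35,  0.05, 0.60, 0.40, 0.00, 0.52],
    [-0.50,  0.00, 1.20, 1.60, 0.00, 0.47],
    [-0.40,  0.15, 1.40, 2.10, 0.20, 0.44],
    [-0.30, -0.05, 0.90, 0.30, 0.60, 0.50],
    [-0.45,  0.08, 1.70, 0.90, 0.35, 0.42],
])


def fit_descriptors(log_k: np.ndarray, constants: np.ndarray, e_known: float) -> dict:
    """Solve for S, A, B, L by ordinary least squares, with E fixed."""
    c, e = constants[:, 0], constants[:, 1]
    design = constants[:, 2:]                 # columns: s, a, b, l
    target = log_k - c - e * e_known          # move the known terms to the left side
    coeffs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return dict(zip(("S", "A", "B", "L"), (float(x) for x in coeffs)))


# Synthetic, noise-free "measurements" built from assumed descriptors.
E_KNOWN = 0.61
assumed = np.array([0.52, 0.00, 0.14, 2.79])  # S, A, B, L (hypothetical)
log_k_measured = columns[:, 0] + columns[:, 1] * E_KNOWN + columns[:, 2:] @ assumed

fitted = fit_descriptors(log_k_measured, columns, E_KNOWN)
print({name: round(value, 3) for name, value in fitted.items()})
```

With real data the measurements carry noise, so using more columns than unknowns (six here for four unknowns) is what allows the residuals to say anything about the quality of the fit.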

Table 2: Research Reagent Solutions for LSER Descriptor Determination

| Item | Function/Application |
|---|---|
| Gas Chromatograph with FID | Primary instrument for measuring gas-liquid partition coefficients for determining S, A, and B descriptors. |
| Diverse GC Stationary Phases | A set of columns with different polarities and interaction properties (e.g., Squalane, OV-225) to probe specific solute-solvent interactions [1]. |
| Refractometer | Measures the refractive index of a solute, which is essential for calculating the E descriptor (Excess Molar Refractivity). |
| Molecular Modeling Software | Used to construct and visualize molecular structures for the calculation of the V descriptor (McGowan's Characteristic Volume). |
| UFZ-LSER Database | Key computational resource for accessing known descriptor values, system parameters, and performing calculations like biopartitioning and sorbed concentration [1]. |

Application Notes: Calculating Key Properties

The UFZ-LSER database provides practical tools for applying solute descriptors to predict critical properties in drug development [1]. The workflow for using these tools is outlined below.

LSER Application Workflow

Note 4.1: Calculating Biopartitioning

Purpose: To predict the distribution of a neutral solute within biological compartments (e.g., muscle, fat, storage lipids, proteins).

Procedure:

- Access Tool: Navigate to the "Calculate the biopartitioning" section of the UFZ-LSER database [1].

- Input Composition: Define the volume percentages of key biological phases (e.g., muscle protein, water, lipids, carbohydrates).

- Input Solute Data: Enter the solute's LSER descriptors (E, S, A, B, V).

- Execute Calculation: The tool computes the fraction of solute partitioned into each biological phase based on the system's LSER equation.
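To make the idea behind this calculation concrete, the sketch below distributes a solute over several phases at equilibrium using a simple mass balance: each phase's share is proportional to its volume fraction times its phase-water partition coefficient. This is an assumption about the underlying principle, not a description of the database tool's actual implementation, and the composition and log K values are hypothetical. In practice each log K would come from the LSER equation for that phase.

```python
def phase_fractions(volume_fractions: dict, log_k_phase_water: dict) -> dict:
    """Fraction of total solute found in each phase at equilibrium.

    volume_fractions: phase -> volume fraction of the tissue (should sum to 1)
    log_k_phase_water: phase -> log10 of the phase/water partition coefficient
                       (water itself has log K = 0)
    """
    weights = {
        phase: fraction * 10 ** log_k_phase_water[phase]
        for phase, fraction in volume_fractions.items()
    }
    total = sum(weights.values())
    return {phase: weight / total for phase, weight in weights.items()}


# HYPOTHETICAL tissue composition and partition coefficients.
composition = {"water": 0.75, "lipid": 0.05, "protein": 0.20}
log_k = {"water": 0.0, "lipid": 2.0, "protein": 1.0}

fractions = phase_fractions(composition, log_k)
for phase, value in fractions.items():
    print(f"{phase}: {value:.3f}")
```

Even at 5% of the volume, the lipid phase holds most of the solute in this example, which illustrates why the composition inputs matter as much as the descriptors.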

Note 4.2: Calculating Permeability through Caco-2/MDCK Monolayers

Purpose: To predict intestinal absorption (Caco-2) or renal/brain barrier permeability (MDCK) for neutral molecules.

Procedure:

- Access Tool: Navigate to the "Calculate the permeability through a Caco-2/MDCK monolayer" module [1].

- Input Solute Data: Enter the solute's LSER descriptors.

- Account for Ionization (if applicable): For ionizable compounds, enter the fraction of neutral species at the experimental pH.

- Execute Calculation: The tool provides a predicted permeability value, which can be exported for further analysis.
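The neutral fraction requested in the ionization step can be estimated from the Henderson-Hasselbalch relationship. The sketch below assumes a single ionizable group (monoprotic acid or base) and ideal behavior; the function name and the example pKa values are illustrative.

```python
def neutral_fraction(ph: float, pka: float, kind: str) -> float:
    """Fraction of a monoprotic compound present as the neutral species.

    kind: "acid" (neutral form is HA) or "base" (neutral form is B).
    ASSUMPTION: one ionizable group and ideal dilute-solution behavior.
    """
    if kind == "acid":
        return 1.0 / (1.0 + 10 ** (ph - pka))
    if kind == "base":
        return 1.0 / (1.0 + 10 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")


# Hypothetical examples at pH 7.4.
f_acid = neutral_fraction(ph=7.4, pka=4.4, kind="acid")
f_base = neutral_fraction(ph=7.4, pka=7.4, kind="base")
print(f"acid: {f_acid:.4f}, base: {f_base:.2f}")
```

Compounds with several ionizable groups need a full speciation calculation instead, which this sketch does not attempt.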

Note 4.3: Key Considerations for Accurate Predictions

- Domain of Applicability: The LSER model is primarily validated for neutral chemicals. Predictions for ions or metal complexes may be unreliable [1].

- Descriptor Accuracy: The quality of all predictions is contingent on the accuracy of the input solute descriptors.

- Freely Dissolved Analyte: The "Calculate the concentration of freely dissolved analyte" tool is explicitly marked for neutral molecules only [1].

Data Presentation and Analysis

The following table provides a sample of solute descriptors for common compounds, illustrating how molecular structure influences these values. These data are crucial for understanding and predicting partitioning behavior.

Table 3: Experimental LSER Solute Descriptors for Selected Compounds (from UFZ-LSER Database) [1]

| Compound Name | E | S | A | B | V |
|---|---|---|---|---|---|
| Benzene | 0.610 | 0.520 | 0.000 | 0.140 | 0.716 |
| Chloroform | 0.425 | 0.490 | 0.150 | 0.020 | 0.616 |
| Ethyl Acetate | 0.106 | 0.620 | 0.000 | 0.450 | 0.747 |
| Aniline | 0.955 | 0.820 | 0.260 | 0.410 | 0.816 |
| Butan-1-ol | 0.224 | 0.420 | 0.370 | 0.480 | 0.730 |

The logical relationship and dependencies between the solute's molecular properties, its experimentally determined descriptors, and the final predicted biological activities are summarized in the following diagram.

LSER Predictive Logic

The Abraham solvation parameter model is a widely adopted linear free energy relationship (LFER) that quantitatively describes the partitioning of solutes between different phases. The model's predictive power resides in six solute descriptors—Vx, L, E, S, A, and B—which encode fundamental aspects of a molecule's interaction potential. These descriptors allow for the prediction of a wide array of physicochemical and biological properties, including partition coefficients, solubility, chromatographic retention, and toxicity. This application note provides a detailed deconstruction of each descriptor, presents validated protocols for their experimental determination, and illustrates their practical application within pharmaceutical and environmental sciences.

The Abraham solvation parameter model defines solute transfer between phases using two primary equations [2]:

For partitioning between two condensed phases:

log P = c + eE + sS + aA + bB + vV (1)

For partitioning between a gas phase and a condensed phase:

log K = c + eE + sS + aA + bB + lL (2)

Here, the uppercase letters (E, S, A, B, V, L) are the solute descriptors, representing intrinsic properties of the solute. The lowercase letters (c, e, s, a, b, v, l) are the system coefficients, characterizing the solvent system or process. The model's versatility allows it to be applied to processes ranging from water-to-solvent partitioning and chromatographic retention to skin permeability and biological activity [2] [3].

Deconstruction of the Solute Descriptors

The six solute descriptors quantitatively capture the key molecular interactions governing solvation and partitioning. Their definitions and physicochemical significance are summarized in the table below.

Table 1: The Six Abraham Solute Descriptors: Definitions and Interpretations

| Descriptor | Full Name | Definition & Interpretation | Molecular Interactions Encoded |
|---|---|---|---|
| V or Vx | McGowan Characteristic Volume | Molecular volume, calculated from atomic contributions and bond counts [4]. Units: (cm³ mol⁻¹)/100. | Size/Cavity Formation: Energy required to create a cavity in the solvent to accommodate the solute. |
| L | Gas-Hexadecane Partition Coefficient | Logarithm of the solute's gas-to-hexadecane partition coefficient at 298 K [4]. | Dispersion Interactions: Solute-solvent dispersion (London) forces in an aliphatic hydrocarbon environment. |
| E | Excess Molar Refractivity | Molar refraction in excess of a hypothetical non-polar, non-π-conjugated alkane of similar size [2]. Units: (cm³ mol⁻¹)/10. | Polarizability from n- and π-electrons: Captures interactions from lone pairs and π-electrons. |
| S | Dipolarity/Polarizability | A combined measure of the solute's ability to stabilize a charge or a dipole [5]. | Dipolarity & Polarizability: Solute-solvent interactions via dipole-dipole and dipole-induced dipole forces. |
| A | Overall Hydrogen-Bond Acidity | The solute's effective or summation hydrogen-bond donating ability [5]. | Hydrogen-Bond Donating Ability: Strength of the solute (acid) - solvent (base) hydrogen bonds. |
| B | Overall Hydrogen-Bond Basicity | The solute's effective or summation hydrogen-bond accepting ability [5]. | Hydrogen-Bond Accepting Ability: Strength of the solute (base) - solvent (acid) hydrogen bonds. |

The following diagram illustrates the logical relationship between the descriptors and the molecular properties they represent.

Diagram 1: Molecular interactions captured by Abraham descriptors.

Experimental Protocols for Descriptor Determination

A solute's descriptors can be determined by measuring its behavior in multiple systems with known Abraham model coefficients and solving the resulting set of equations.

Protocol: Determination of Descriptors for a Simple, Neutral Solute

This protocol outlines the steps for determining descriptors for a solute that does not ionize or self-associate in solution [2].

1. Compilation of Experimental Data:

- Gather experimental values for the target solute across various partitioning systems. A minimum of 6-8 diverse data points is typically required.

- Recommended Property Types:

- log P (Water-Solvent Partition): Measured for 3-4 different organic solvents (e.g., wet octanol, hexane, toluene, diethyl ether) [2].

- log K (Gas-Solvent Partition): Measured for 2-3 different organic solvents.

- log S (Solubility): Molar solubility in water and at least 3-4 organic solvents, expressed as log(molar solubility ratio relative to water) [2].

2. Descriptor Calculation Workflow: The sequential process for determining the final set of descriptors is shown below.

Diagram 2: Workflow for descriptor determination.

3. Data Regression and Validation:

- Input the compiled experimental data and the known system coefficients for each measurement into statistical software.

- Perform a multiple linear regression to solve for the unknown descriptors (typically L, S, A, and B).

- Validate the result by ensuring the back-calculated properties agree with the experimental data within an acceptable error (e.g., standard deviation of ~0.10 log units or less) [2].
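The validation criterion can be checked with a few lines of Python. The sketch computes the standard deviation of the residuals between experimental and back-calculated values, corrected for the number of fitted descriptors, and compares it with the ~0.10 log unit guideline mentioned above. The data values are hypothetical, and the choice of a degrees-of-freedom correction is an assumption about how the standard deviation is defined.

```python
import math


def residual_sd(observed: list, predicted: list, n_fitted: int) -> float:
    """Standard deviation of residuals with (n - n_fitted) degrees of freedom."""
    residuals = [o - p for o, p in zip(observed, predicted)]
    dof = len(residuals) - n_fitted
    if dof <= 0:
        raise ValueError("need more data points than fitted descriptors")
    return math.sqrt(sum(r * r for r in residuals) / dof)


# HYPOTHETICAL experimental and back-calculated log values for eight systems.
observed = [2.13, 0.11, 3.40, 1.25, 2.75, 0.90, 1.60, 2.05]
predicted = [2.10, 0.18, 3.35, 1.30, 2.80, 0.85, 1.66, 2.00]

sd = residual_sd(observed, predicted, n_fitted=4)  # e.g., L, S, A, B fitted
acceptable = sd <= 0.10
print(f"SD = {sd:.3f} log units, acceptable: {acceptable}")
```

The guard on degrees of freedom reflects the requirement for 6-8 data points: with only as many measurements as unknowns, the fit is exact and the residuals say nothing about descriptor quality.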

Specialized Protocols

Protocol for Carboxylic Acids (Dimerizing Solutes): For solutes like trans-cinnamic acid that dimerize in non-polar solvents, separate descriptor sets for the monomer and dimer must be determined [2].

- Monomer Descriptors: Use solubility and partition coefficient data only from polar solvents (e.g., alcohols, acetone) where the solute exists predominantly as a monomer.

- Dimer Descriptors: Use solubility data only from non-polar, aprotic solvents (e.g., cyclohexane, benzene) where the solute exists predominantly as a dimer. The dimer is treated as a distinct chemical entity.

Protocol for Hydrocarbons using Gas Chromatography (GC): For alkanes, the descriptors E, S, A, and B are zero or close to zero, which simplifies the calculation [4] [6].

- Calculate Vx from molecular structure.

- The only unknown descriptor is L.

- Use a single set of measured Kováts Retention Indices (KRI) on a non-polar stationary phase (e.g., squalane).

- Use a pre-established correlation (KRI vs. L) for a series of alkanes to calculate the L descriptor for the target solute directly [6].
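The correlation step can be sketched as a straight-line fit of L against the Kováts index for a series of n-alkanes, followed by interpolation for the target solute. By definition the Kováts index of an n-alkane is 100 times its carbon number. The L values below are an assumption taken from commonly tabulated data and should be verified against the cited sources; the target index of 750 is hypothetical.

```python
# n-Alkane calibration points: (Kovats index, L descriptor).
# ASSUMPTION: L values for hexane through decane as commonly tabulated.
calibration = [(600, 2.668), (700, 3.173), (800, 3.677), (900, 4.182), (1000, 4.686)]


def fit_line(points: list) -> tuple:
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


slope, intercept = fit_line(calibration)


def l_from_kri(kri: float) -> float:
    """Estimate L for a hydrocarbon from its Kovats index on the same phase."""
    return slope * kri + intercept


l_target = l_from_kri(750)  # hypothetical branched alkane eluting between C7 and C8
print(f"slope = {slope:.6f}, intercept = {intercept:.4f}, L(750) = {l_target:.3f}")
```

The fit is only meaningful for indices measured on the same stationary phase and at the same temperature as the calibration series.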

Protocol for Pharmaceuticals using HPLC: High-throughput determination of S, A, and B descriptors for drug-like molecules can be achieved using reversed-phase HPLC [5].

- Column Selection: Utilize a minimum of 3-4 HPLC columns with different bonded phases (e.g., C18, cyano, phenyl).

- Measurement: Determine the solute's retention factor (log k) on each column.

- Regression: Relate the measured log k values to the system coefficients of the HPLC columns to solve for the solute's S, A, and B descriptors. The E descriptor is often obtained from prediction, and Vx is calculated.

The Scientist's Toolkit: Research Reagents & Materials

Table 2: Essential Materials for Abraham Descriptor Research

| Material / Reagent | Function & Application in Descriptor Determination |
|---|---|
| n-Hexadecane | The reference solvent for defining the L descriptor [4]. Used in gas-liquid partition experiments. |
| Squalane GC Column | A non-polar stationary phase used in GC to measure retention data for determining the L descriptor of non-polar solutes like alkanes [6]. |
| Diverse Organic Solvents (e.g., octanol, hexane, ether, chloroform) | Used in water-solvent partition (log P) and solubility studies to probe different interaction potentials (dispersion, dipole, H-bonding) [2] [7]. |
| Characterized HPLC Columns (e.g., C18, Cyano, Phenyl) | Columns with different surface chemistries act as distinct partitioning systems. Measuring retention on these columns allows for the high-throughput determination of S, A, and B for pharmaceuticals [5]. |
| Solutes with Known Descriptors | A training set of reference compounds with well-established descriptor values is crucial for developing new Abraham model correlations for novel solvents or systems [4]. |

Applications in Pharmaceutical and Medical Device Development

The Abraham model is a powerful tool for addressing complex challenges in pharmaceutical and medical device industries [3].

- Establishing Equivalent Solvents: The model can objectively identify less toxic or more sustainable solvents with similar solubilizing properties to a standard solvent, aiding in "green chemistry" initiatives and replacement strategies [3] [7].

- Developing Drug Product Simulating Solvents: For extractables and leachables (E&L) studies, the model helps formulate solvents that mimic the hydrophobicity and hydrogen-bonding activity of a drug product, ensuring relevant extraction conditions [3].

- Understanding Extraction Efficiency: It provides a quantitative basis for understanding and predicting the extraction power of various solvents towards different polymeric materials used in medical devices and container closure systems [3].

- Chromatographic Retention Prediction: By modeling HPLC retention times, the model can assist in the identification of unknown E&L compounds, a critical step in safety assessments [3].

Linear Solvation Energy Relationships (LSERs), specifically the Abraham solvation parameter model, represent one of the most successful predictive frameworks in molecular thermodynamics [8] [9]. These models are built on linear free energy relationships (LFERs) that correlate solute transfer between phases using fundamental molecular descriptors [10]. The remarkable robustness of LSERs stems from their solid thermodynamic foundation, which maintains linearity even when accounting for strong specific interactions like hydrogen bonding [8]. This application note explores the thermodynamic principles underlying LSER linearity and provides detailed protocols for determining LSER solute descriptors, framed within broader research on solvation thermodynamics.

The LSER model utilizes two primary equations for quantifying solute partitioning. For transfer between two condensed phases, the relationship is expressed as:

\( \log P = c_p + e_p E + s_p S + a_p A + b_p B + v_p V_x \) [8] [9]

For gas-to-solvent partitioning, the equation becomes:

\( \log K_S = c_k + e_k E + s_k S + a_k A + b_k B + l_k L \) [8] [9]

In these equations, the uppercase letters (E, S, A, B, V, L) represent solute-specific molecular descriptors, while the lowercase letters (e, s, a, b, v, l, c) are solvent-specific system coefficients that embody the complementary effect of the solvent phase on solute-solvent interactions [8].

Thermodynamic Foundation of LSER Linearity

Theoretical Basis for Linear Relationships

The persistent linearity observed in LSER equations, even for systems with strong specific interactions like hydrogen bonding, finds its theoretical basis in solvation thermodynamics and statistical mechanics [8]. When the free-energy functions corresponding to the diabatic states of a solute-solvent system are approximated as parabolas of equal curvature, the resulting adiabatic ground-state surface naturally gives rise to linear relationships between activation free energy (ΔG‡) and reaction free energy (ΔG⁰) [10]. This parabolic approximation provides the mathematical foundation for the observed linearity in free-energy relationships across diverse chemical systems.

The division of the Gibbs energy into hydrogen-bonding (ΔGhb) and non-hydrogen-bonding (ΔG-LF) components within equation-of-state frameworks further validates the thermodynamic consistency of LSERs [9]. The hydrogen-bonding contribution follows Veytsman's statistics, while the non-hydrogen-bonding term incorporates all other intermolecular interactions, creating a comprehensive theoretical structure that supports the empirical success of LSER models.

Molecular Descriptors and Their Thermodynamic Significance

Table 1: LSER Molecular Descriptors and Their Thermodynamic Interpretation

| Descriptor | Symbol | Thermodynamic Property | Intermolecular Interactions Represented |
|---|---|---|---|
| Excess Molar Refraction | E | Polarizability due to π- and n-electrons | Dispersion interactions with polarizable solvents |
| Dipolarity/Polarizability | S | Dipole moment and molecular polarizability | Keesom-type (dipole-dipole) and Debye-type (dipole-induced dipole) interactions |
| Hydrogen Bond Acidity | A | Hydrogen bond donor strength | Free energy of complexation with hydrogen bond acceptor solvents |
| Hydrogen Bond Basicity | B | Hydrogen bond acceptor strength | Free energy of complexation with hydrogen bond donor solvents |
| McGowan's Characteristic Volume | Vx | Molecular size and volume | Cavity formation energy and dispersion interactions |
| Gas-Hexadecane Partition Coefficient | L | General hydrophobicity/lipophilicity | Composite of all intermolecular interactions in apolar environment |

Each LSER descriptor quantifies a specific aspect of solute-solvent interactions, contributing additively to the overall free energy of solvation or partitioning [8] [9]. The success of the model lies in this additive contribution approach, where each term represents a distinct, theoretically grounded interaction type that collectively captures the complexity of solvation phenomena.

Experimental Protocols for LSER Descriptor Determination

Core Experimental Methodology

The determination of LSER solute descriptors relies on measuring partition coefficients across multiple well-characterized systems and applying multilinear regression. The following protocol outlines the standard approach for experimental determination of Abraham solute descriptors:

Protocol 1: Experimental Determination of Abraham Solute Descriptors

Sample Preparation

- Prepare standard solutions of the target solute in high-purity water, n-hexane, and octanol at concentrations suitable for analytical detection

- Ensure chemical stability of solute in all solvent systems through preliminary stability studies

- Use saturated solutions for solubility determinations, verified by the presence of excess solid phase

Partition Coefficient Measurement

- Employ shake-flask method for liquid-liquid partitioning systems (e.g., octanol-water)

- Utilize headspace gas chromatography for gas-liquid partitioning systems

- Maintain constant temperature (typically 298 K) using calibrated water baths or incubators

- Allow sufficient time for equilibrium establishment (typically 24-48 hours with agitation)

- Verify equilibrium through forward and reverse approaches

Analytical Quantification

- Apply appropriate analytical methods (HPLC, GC, spectrophotometry) based on solute properties

- Use internal standards to account for procedural losses and matrix effects

- Perform triplicate measurements for each partition system to assess reproducibility

- Include system suitability controls with reference compounds of known descriptors

Data Regression and Descriptor Calculation

- Compile measured partition coefficients (log P) for multiple systems (minimum 5-6 diverse systems)

- Assemble system parameters (e, s, a, b, v, c) for each partitioning system from reference databases

- Apply weighted multilinear regression to solve the system of LSER equations

- Validate descriptor set by predicting additional partition coefficients in test systems

- Calculate confidence intervals for each descriptor through error propagation analysis

This experimental approach requires careful measurement of partition coefficients across systems with complementary selectivity to ensure well-conditioned regression and minimize parameter covariance [11] [12].
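The weighted regression and error-propagation steps of Protocol 1 can be sketched with NumPy. The sketch assumes that E and Vx are already known, so the unknowns are S, A, and B, and that each measurement comes with an estimated standard deviation used as its weight. All system coefficients, uncertainties, and descriptor values below are hypothetical, and the standard errors reflect only the assumed measurement uncertainties, not any lack of fit.

```python
import numpy as np


def weighted_fit(design: np.ndarray, target: np.ndarray, sigma: np.ndarray):
    """Weighted least squares: returns fitted coefficients and their standard errors.

    design: rows of system coefficients (s, a, b) for each partition system
    target: log P minus the known terms (c + e*E + v*Vx) for each system
    sigma:  assumed standard deviation of each measurement
    """
    w = 1.0 / np.asarray(sigma, dtype=float)
    xw = design * w[:, None]          # scale each row by 1/sigma
    yw = target * w
    coeffs, *_ = np.linalg.lstsq(xw, yw, rcond=None)
    covariance = np.linalg.inv(xw.T @ xw)
    return coeffs, np.sqrt(np.diag(covariance))


# HYPOTHETICAL (s, a, b) coefficients for six partition systems.
design = np.array([
    [-1.05,  0.03, -3.46],
    [-1.62, -3.59, -4.87],
    [-0.72, -3.01, -4.82],
    [-0.40, -3.11, -3.42],
    [-0.60, -0.90, -5.00],
    [-1.30, -2.20, -4.10],
])
assumed = np.array([0.42, 0.37, 0.48])            # S, A, B used to build test data
target = design @ assumed                          # noise-free synthetic targets
sigma = np.array([0.05, 0.05, 0.10, 0.10, 0.05, 0.20])

coeffs, stderr = weighted_fit(design, target, sigma)
for name, value, err in zip(("S", "A", "B"), coeffs, stderr):
    print(f"{name} = {value:.3f} +/- {1.96 * err:.3f} (approx. 95% interval)")
```

Systems with larger assumed uncertainty pull less on the fit, which is the practical reason for weighting when shake-flask and headspace measurements of different quality are combined.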

Computational Protocol for Descriptor Prediction

For compounds lacking experimental data, computational methods provide an alternative route for descriptor estimation. The following protocol outlines the use of machine learning approaches, specifically the AbraLlama model, for predicting solute descriptors:

Protocol 2: Computational Prediction of LSER Descriptors Using AbraLlama-Solute

Input Preparation

- Generate canonical SMILES representation of target compound using cheminformatics tools (e.g., OpenBabel, RDKit)

- Verify SMILES syntax and molecular structure correctness

- For mixtures, prepare separate SMILES for each component

Model Execution

- Access AbraLlama-Solute model through Hugging Face platform

- Input SMILES string into the prediction interface

- Execute prediction for all five descriptors (E, S, A, B, V) simultaneously

- Record predicted values with associated confidence estimates

Result Validation

- Compare predictions with similar compounds from experimental databases (UFZ-LSER database)

- Assess chemical reasonableness of predicted values (e.g., A=0 for compounds without hydrogen bond donors)

- Perform consensus prediction using multiple algorithms (SoluteGC, SoluteML) when available

- Flag compounds with high prediction uncertainty for experimental verification

The AbraLlama model, fine-tuned from the ChemLLaMA large language model specifically for cheminformatics tasks, demonstrates high accuracy in predicting solute descriptors directly from SMILES representations [12]. This approach significantly expands the applicability of LSER methods to compounds without extensive experimental data.
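The result-validation steps of Protocol 2 can be sketched without reference to any particular model's API. The code below takes descriptor predictions that have already been obtained from several predictors, forms a consensus, flags descriptors on which the predictors disagree, and applies the A ≈ 0 reasonableness check for compounds without hydrogen-bond donors. The model labels, the predicted values, and both thresholds are hypothetical.

```python
from statistics import mean

DESCRIPTORS = ("E", "S", "A", "B", "V")


def consensus(predictions: dict, max_spread: float = 0.15):
    """Return per-descriptor mean and spread, plus descriptors whose spread is too large.

    predictions: model label -> {descriptor: predicted value}
    max_spread:  HYPOTHETICAL tolerance on (max - min) across models
    """
    summary, flagged = {}, []
    for d in DESCRIPTORS:
        values = [p[d] for p in predictions.values()]
        spread = max(values) - min(values)
        summary[d] = {"mean": mean(values), "spread": spread}
        if spread > max_spread:
            flagged.append(d)
    return summary, flagged


def acidity_is_reasonable(summary: dict, has_hbond_donor: bool, tol: float = 0.05) -> bool:
    """A should be near zero when the structure has no hydrogen-bond donor."""
    return has_hbond_donor or summary["A"]["mean"] <= tol


# HYPOTHETICAL predictions from three unnamed predictors for a donor-free compound.
predictions = {
    "model_a": {"E": 0.61, "S": 0.52, "A": 0.00, "B": 0.14, "V": 0.716},
    "model_b": {"E": 0.60, "S": 0.55, "A": 0.02, "B": 0.15, "V": 0.716},
    "model_c": {"E": 0.63, "S": 0.80, "A": 0.00, "B": 0.13, "V": 0.716},
}

summary, flagged = consensus(predictions)
print("Flag for experimental verification:", flagged)
print("A consistent with structure:", acidity_is_reasonable(summary, has_hbond_donor=False))
```

Using the spread across predictors as the uncertainty signal is one simple choice; a model's own confidence estimate, where available, could be used in the same place.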

Advanced Integration: LSER and Equation-of-State Thermodynamics

Partial Solvation Parameters (PSP) Framework

The Partial Solvation Parameters (PSP) approach provides a crucial bridge between LSER descriptors and equation-of-state thermodynamics, enabling the extraction of thermodynamically meaningful information from LSER databases [8] [13]. The PSP framework defines four fundamental parameters that correspond to specific interaction types:

Table 2: Correspondence Between LSER Descriptors and Partial Solvation Parameters

| PSP Parameter | Symbol | LSER Equivalent | Thermodynamic Interpretation |
|---|---|---|---|
| Dispersion PSP | σd | L, Vx | Quantifies London dispersion interactions related to molecular size and polarizability |
| Polar PSP | σp | E, S | Represents Keesom and Debye interactions from permanent and induced dipoles |
| Acidic Hydrogen-Bonding PSP | σa | A | Measures hydrogen bond donor strength (Lewis acidity) |
| Basic Hydrogen-Bonding PSP | σb | B | Measures hydrogen bond acceptor strength (Lewis basicity) |

The direct correspondence between PSPs and LSER molecular descriptors (σd and σp each draw on a pair of descriptors, while σa and σb each map onto a single descriptor) enables information exchange between solvation parameter approaches and equation-of-state models [13]. This integration facilitates the estimation of interaction energies over broad ranges of temperature and pressure, significantly expanding the application domain of LSER-derived parameters.

LSER-Equation of State Integration Protocol

Protocol 3: Integrating LSER Descriptors with Equation-of-State Models

PSP Parameter Calculation

- Convert experimental or predicted LSER descriptors to PSPs using established transformation equations [13]

- Calculate dispersion PSP (σd) from McGowan volume (Vx) and hexadecane partition coefficient (L)

- Determine polar PSP (σp) from excess molar refraction (E) and dipolarity/polarizability (S)

- Derive acidic PSP (σa) directly from hydrogen bond acidity descriptor (A)

- Derive basic PSP (σb) directly from hydrogen bond basicity descriptor (B)

Hydrogen-Bonding Free Energy Estimation

- Calculate free energy change upon hydrogen bond formation (ΔGhb) from σa and σb values

- Estimate corresponding enthalpy (ΔHhb) and entropy (ΔShb) changes using temperature-dependent relationships

- Incorporate hydrogen-bonding contribution into equation-of-state framework (e.g., LFHB, SAFT)

Phase Equilibrium Calculation

- Implement PSP-derived parameters in statistical associating fluid theory (SAFT) or lattice-fluid hydrogen-bonding (LFHB) equations of state

- Calculate activity coefficients, vapor-liquid equilibria, and other thermodynamic properties

- Validate predictions against experimental data for systems with similar interaction characteristics

This integrated approach enables the transfer of LSER information to predictive thermodynamic models applicable over wide ranges of conditions, overcoming the temperature limitations of standard LSER correlations [8] [9].

Research Toolkit for LSER Applications

Table 3: Research Reagent Solutions for LSER Studies

| Resource | Type | Function | Access |
|---|---|---|---|
| UFZ-LSER Database | Database | Repository of experimental LSER solute descriptors for >6,800 compounds | https://www.ufz.de/lserd |
| AbraLlama-Solute | ML Model | Predicts Abraham solute descriptors (E, S, A, B, V) from SMILES strings | Hugging Face Platform |
| AbraLlama-Solvent | ML Model | Predicts modified Abraham solvent parameters (e₀, s₀, a₀, b₀, v₀) | Hugging Face Platform |
| COSMO-RS | Computational Method | A priori prediction of solvation properties and hydrogen-bonding contributions | Commercial Software |
| Modified Solvent Parameters | Dataset | Enables direct comparison of solvent characteristics without intercept complications | Figshare Repository |

Experimental Reference Systems

For experimental determination of LSER descriptors, the following reference partitioning systems provide well-characterized solvent parameters with complementary selectivity:

- n-Hexadecane/water (for L and Vx determination)

- Octanol/water (benchmark system for drug partitioning)

- Diisopropylether/water (hydrogen bond acidity selectivity)

- Cyclohexane/water (dispersion interaction characterization)

- Propylene glycol dipelargonate/water (comprehensive interaction profiling)

These systems collectively provide diverse interaction environments that ensure well-conditioned regression for descriptor determination [11] [12].

LSER Workflow Visualization

Diagram 1: LSER Research Workflow for Thermodynamic Property Prediction. This workflow illustrates the integrated computational and experimental approach for determining LSER descriptors and their application in predictive thermodynamics.

The thermodynamic basis of LSER linearity rests on robust theoretical foundations, with the parabolic approximation of free-energy profiles providing mathematical justification for the observed linear relationships [8] [10]. The integration of LSER descriptors with equation-of-state thermodynamics through the PSP framework creates a powerful predictive tool that transcends the temperature and pressure limitations of conventional LSER approaches [13] [9]. The experimental and computational protocols detailed in this application note provide researchers with comprehensive methodologies for determining LSER solute descriptors and leveraging them in thermodynamic predictions. As machine learning approaches like AbraLlama continue to advance, the accessibility and applicability of LSER methods will further expand, solidifying their role as essential tools in molecular thermodynamics and drug development research [12].

The Critical Role of LSER Databases as a Source of Thermodynamic Information

Linear Solvation Energy Relationships (LSERs), specifically the Abraham solvation parameter model, represent one of the most successful predictive frameworks in molecular thermodynamics [8]. This approach provides a quantitative method for understanding solute-solvent interactions that are fundamental to countless chemical, biological, and environmental processes [8]. The model's power lies in its ability to distill complex intermolecular interactions into a set of six empirically determined molecular descriptors that comprehensively characterize solute behavior [14]. These descriptors capture the contribution of different interaction types - dispersion forces, dipolarity/polarizability, and hydrogen-bonding capacity - allowing for the prediction of free-energy related properties such as partition coefficients and solubility [9]. The wealth of thermodynamic information encoded in LSER databases has become indispensable for researchers across multiple disciplines, from drug development to environmental chemistry [8] [15].

The LSER model operates on the principle that free-energy related properties can be described through linear relationships that separate solute properties from system (solvent or phase) properties [9]. For the transfer of neutral compounds between two condensed phases, the model is expressed as:

log(SP) = c + eE + sS + aA + bB + vV [14]

where SP is a free-energy related property such as a partition coefficient or retention factor. The capital letters represent solute-specific descriptors, while the lower-case letters are system constants that describe the complementary interactions of the system with the solute descriptors [14]. This separation of solute and system parameters enables the prediction of solute behavior in any system for which the constants are known, without requiring additional experiments [14].
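
As a minimal sketch of how the equation above is evaluated once the system constants are known, the snippet below computes log(SP) for one solute in one system. The class and function names are hypothetical, and the numerical values (octanol/water system constants and phenol descriptors) are approximate, commonly quoted figures included only for illustration; they should be checked against a curated source such as the UFZ-LSER database before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    """Solute descriptors (capital letters in the LSER equation)."""
    E: float
    S: float
    A: float
    B: float
    V: float


@dataclass(frozen=True)
class SystemConstants:
    """System constants (lower-case letters in the LSER equation)."""
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float


def predict_log_sp(solute: SoluteDescriptors, system: SystemConstants) -> float:
    """Evaluate log(SP) = c + eE + sS + aA + bB + vV."""
    return (system.c
            + system.e * solute.E
            + system.s * solute.S
            + system.a * solute.A
            + system.b * solute.B
            + system.v * solute.V)


# Illustrative values only (assumed, not taken from this article).
octanol_water = SystemConstants(c=0.088, e=0.562, s=-1.054, a=0.034, b=-3.460, v=3.814)
phenol = SoluteDescriptors(E=0.805, S=0.89, A=0.60, B=0.30, V=0.7751)

log_p = predict_log_sp(phenol, octanol_water)
print(f"Predicted log P (illustrative): {log_p:.2f}")
```

Keeping solute and system parameters in separate objects mirrors the separation the model itself makes: the same solute object can be reused with any system for which constants are available.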

LSER Molecular Descriptors: Fundamentals and Thermodynamic Interpretation

The LSER model employs six key descriptors that provide comprehensive characterization of a solute's interaction potential. These descriptors are experimental quantities that collectively capture a molecule's capability for various types of intermolecular interactions, offering rich thermodynamic information about solute behavior in different environments [14].

Table 1: LSER Solute Molecular Descriptors and Their Thermodynamic Significance

| Descriptor | Symbol | Thermodynamic Interpretation | Experimental Determination Methods |

|---|---|---|---|

| McGowan's Characteristic Volume | V | Related to the energy cost of cavity formation in the solvent | Calculation from molecular structure [14] |

| Excess Molar Refraction | E | Measures dispersion interactions from n- and π-electrons | Refractive index at 20°C for sodium D-line [14] |

| Dipolarity/Polarizability | S | Characterizes dipole-dipole and dipole-induced dipole interactions | Combination of GC retention data and liquid-liquid partition constants [14] |

| Hydrogen-Bond Acidity | A | Overall hydrogen-bond donating capacity | Gas chromatography, liquid-liquid partition, or NMR spectroscopy [14] |

| Hydrogen-Bond Basicity | B | Overall hydrogen-bond accepting capacity | Biphasic partition systems (e.g., water-organic solvent) [14] |

| Gas-Hexadecane Partition Coefficient | L | Describes dispersion interactions and cavity formation | Gas chromatography with n-hexadecane stationary phase [14] |

Of these six descriptors, only McGowan's characteristic volume (V) can be obtained solely by calculation from a known structure [14]. The excess molar refraction (E) can be calculated for liquids from the characteristic volume and an experimental refractive index [14]. The remaining descriptors (S, A, B, L) are primarily experimental quantities determined through various chromatographic and partition methods, making robust experimental protocols essential for their accurate determination [14].
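
Because V is the one descriptor obtainable purely from structure, its calculation is easy to sketch. The example below assumes the usual McGowan scheme: sum atomic volume increments, subtract a fixed correction per bond (counted once regardless of bond order), and divide by 100. The increment values are quoted from the commonly cited tabulation and should be verified before use; the function name and the bond-counting shortcut (bonds = atoms - 1 + rings, valid for a single connected molecule) are assumptions of this sketch.

```python
# Atomic increments in cm^3/mol (assumed from the commonly cited McGowan table).
MCGOWAN_ATOM_VOLUMES = {
    "C": 16.35, "H": 8.71, "N": 14.39, "O": 12.43,
    "F": 10.48, "Cl": 20.95, "Br": 26.21, "S": 22.91,
}
BOND_CORRECTION = 6.56  # cm^3/mol subtracted per bond, irrespective of bond order


def mcgowan_volume(atom_counts: dict, n_rings: int = 0) -> float:
    """Return V in units of (cm^3/mol)/100 for one connected molecule."""
    n_atoms = sum(atom_counts.values())
    # For a connected molecular graph: bonds = atoms - 1 + number of rings.
    n_bonds = n_atoms - 1 + n_rings
    atom_sum = sum(MCGOWAN_ATOM_VOLUMES[element] * count
                   for element, count in atom_counts.items())
    return (atom_sum - BOND_CORRECTION * n_bonds) / 100.0


# Phenol, C6H6O, one ring.
phenol_v = mcgowan_volume({"C": 6, "H": 6, "O": 1}, n_rings=1)
# n-Hexane, C6H14, acyclic.
hexane_v = mcgowan_volume({"C": 6, "H": 14})
print(f"V(phenol) = {phenol_v:.4f}, V(n-hexane) = {hexane_v:.4f}")
```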

Experimental Protocols for Determining LSER Solute Descriptors

Determination of Hydrogen-Bond Basicity (B Descriptor)

The hydrogen-bond basicity descriptor (B) is particularly challenging to determine and requires carefully controlled experimental conditions.

Materials and Reagents:

- HPLC-grade water

- High-purity organic solvents (e.g., n-hexane, ethyl acetate, diethyl ether)

- Certified reference compounds with well-established descriptor values

- Reversed-phase liquid chromatography system with C18 column

- Thermostated separation vessels

- Gas chromatograph with flame ionization detector

Procedure:

- System Selection: Employ biphasic systems where one phase is aqueous, such as reversed-phase liquid chromatography, micellar electrokinetic chromatography, or water-organic solvent liquid-liquid partition [14].

- Partition Experiments: For liquid-liquid partition, equilibrate the solute between water and an immiscible organic solvent in thermostated vessels at 25°C for 24 hours with constant agitation.

- Phase Separation: After equilibration, allow phases to separate completely and analyze each phase quantitatively using appropriate analytical methods (e.g., GC-FID, HPLC-UV).

- Measurement: Determine the partition coefficient as P = [solute]org / [solute]water.

- Data Analysis: Use the measured partition coefficients for multiple reference systems, together with the LSER equation given earlier (log(SP) = c + eE + sS + aA + bB + vV), to solve for the B descriptor through multilinear regression.
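
The data-analysis step can be sketched for the simplest case, in which E, S, A, and V of the solute are already known and only B is fitted. With a single unknown, the least-squares solution has a closed form. All system constants and descriptor values below are hypothetical placeholders, and the "measured" log P values are generated synthetically from an assumed B of 0.30 so that the round trip can be checked; nothing here is experimental data.

```python
def log_p_without_b(system: dict, known: dict) -> float:
    """Contribution of every term except b*B to log P."""
    return (system["c"] + system["e"] * known["E"] + system["s"] * known["S"]
            + system["a"] * known["A"] + system["v"] * known["V"])


def solve_b(systems: list, log_p_values: list, known: dict) -> float:
    """Least-squares estimate of B from several biphasic systems.

    Minimizes sum_i (residual_i - b_i * B)^2, which gives
    B = sum(b_i * residual_i) / sum(b_i^2).
    """
    numerator = 0.0
    denominator = 0.0
    for system, log_p in zip(systems, log_p_values):
        residual = log_p - log_p_without_b(system, known)
        numerator += system["b"] * residual
        denominator += system["b"] ** 2
    return numerator / denominator


# Hypothetical system constants for three water/organic systems.
systems = [
    {"c": 0.10, "e": 0.55, "s": -1.00, "a": 0.05, "b": -3.40, "v": 3.80},
    {"c": 0.30, "e": 0.60, "s": -1.60, "a": -3.50, "b": -4.80, "v": 4.30},
    {"c": 0.20, "e": 0.20, "s": -0.50, "a": -1.20, "b": -5.00, "v": 4.20},
]
known = {"E": 0.80, "S": 0.90, "A": 0.60, "V": 0.78}  # hypothetical solute

# Synthetic "measurements" built from an assumed true B of 0.30.
b_true = 0.30
log_p_values = [log_p_without_b(s, known) + s["b"] * b_true for s in systems]

b_estimate = solve_b(systems, log_p_values, known)
print(f"Recovered B = {b_estimate:.3f}")
```

With real data the residuals will not vanish, and the spread of B values implied by individual systems gives a first indication of measurement quality.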

Quality Control:

- Use at least 3 different biphasic systems with varying hydrogen-bond donating characteristics, so that the b system constants differ appreciably between systems

- Include reference compounds with well-established B values in each experiment

- Maintain constant temperature (±0.1°C) throughout partitioning

- Verify mass balance of solute (95-105% recovery)

Determination of Gas-Hexadecane Partition Coefficient (L Descriptor)

The L descriptor is preferably determined by gas chromatography on an n-hexadecane stationary phase at 25°C.

Materials and Reagents:

- n-Hexadecane stationary phase of high purity (>99%)

- Inert gas chromatographic support material

- Deactivated fused silica columns

- High-purity helium carrier gas

- Temperature-controlled gas chromatography oven (±0.1°C precision)

- Certified reference alkanes for retention index calibration

Procedure:

- Column Preparation: Coat capillary columns with the n-hexadecane stationary phase and operate them isothermally at a temperature appropriate for the solute volatility.

- Retention Measurement: Inject solutes and measure retention factors (k) at 25°C, using n-alkanes as retention markers.

- Calculation: Calculate the L descriptor directly from log k values after appropriate calibration.

- Alternative Method: For compounds not amenable to gas chromatography at 25°C, determine L from back-calculation using retention factors measured on low-polarity stationary phases at elevated temperatures, extrapolating to 25°C using established thermodynamic relationships.
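
The text does not specify which thermodynamic relationship is used for the extrapolation to 25°C. The sketch below assumes a van't Hoff-type dependence, in which log k is linear in 1/T over the measured range (constant transfer enthalpy and phase ratio). The function names and the synthetic retention data are hypothetical; converting the extrapolated log k into L still requires the calibration described in the procedure.

```python
def fit_line(x: list, y: list) -> tuple:
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def extrapolate_log_k(temps_celsius: list, log_k: list, target_celsius: float = 25.0) -> float:
    """Extrapolate log k to the target temperature assuming log k = a + b / T."""
    inverse_t = [1.0 / (t + 273.15) for t in temps_celsius]
    slope, intercept = fit_line(inverse_t, log_k)
    return intercept + slope / (target_celsius + 273.15)


# Synthetic data generated from log k = -4.0 + 1500 / T (not measurements).
temps = [60.0, 80.0, 100.0, 120.0]
log_k_measured = [-4.0 + 1500.0 / (t + 273.15) for t in temps]

log_k_25 = extrapolate_log_k(temps, log_k_measured)
print(f"Extrapolated log k at 25 C: {log_k_25:.3f}")
```

Long extrapolations amplify small errors in the slope, so the elevated-temperature measurements should span as wide a range as the solute stability allows.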

Critical Considerations:

- Avoid using polar stationary phases for L descriptor determination as they may introduce mixed retention mechanisms [14]

- Ensure solute does not undergo degradation at analysis temperatures

- Verify linearity of retention index behavior for homologue series

- Use appropriate reference compounds to validate system performance

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Reagents and Materials for LSER Descriptor Determination

| Category | Specific Items | Function/Application | Quality Specifications |

|---|---|---|---|

| Chromatographic Phases | n-Hexadecane, poly(ethylene glycol), poly(siloxane) | Stationary phases for GC determination of L, S, and A descriptors | >99% purity, low bleeding characteristics [14] |

| Partition Solvents | Water, n-octanol, alkanes (hexane, heptane), diethyl ether, ethyl acetate | Biphasic systems for determining S, A, and B descriptors | HPLC grade, low UV absorbance, purity >99% [14] |

| Reference Compounds | n-Alkanes, alkylbenzenes, ketones, alcohols, ethers | System calibration and descriptor validation | Certified reference materials, >98% purity [14] |

| Analytical Instruments | Gas chromatograph with FID, HPLC system with UV detection, automated titrator | Quantification of solute concentrations in partition experiments | Calibration certified, temperature control ±0.1°C [14] |

Workflow for LSER Descriptor Determination

Diagram: Experimental workflow for determining LSER solute descriptors.

Data Validation and Quality Control in LSER Databases

The accuracy of LSER predictions depends critically on the quality of the underlying descriptor data. Inconsistent descriptor values for the same compound across different literature sources present a significant challenge [14]. The Wayne State University (WSU) compound descriptor database addresses this issue by acquiring experimental data for descriptor calculation in a single laboratory with consistent quality control and calibration protocols [14]. This approach minimizes experimental uncertainty and provides screening tools to identify problematic data associated with secondary compound-system interactions [14].

Common Sources of Error in Descriptor Determination:

Mixed Retention Mechanisms: In chromatographic systems, retention factors may not exclusively reflect the intended intermolecular interactions when multiple mechanisms are operative [14]. This is particularly problematic for n-alkanes on polar stationary phases where interfacial adsorption can contribute significantly to retention [14].

Electrostatic Interactions: For compounds containing protonatable functional groups on silica-based stationary phases, electrostatic interactions with ionized silanol groups can cause significant errors as these interactions are falsely attributed to descriptor values for the neutral compound [14].

Steric Resistance: Bulky compounds may not fully penetrate the solvated stationary phase in liquid chromatography, resulting in lower retention and consequently inaccurate descriptor values [14].

Validation Protocols:

- Cross-validate descriptors using multiple determination methods

- Compare predicted versus experimental partition coefficients for systems not used in descriptor determination

- Assess internal consistency through thermodynamic relationships between different descriptors

- Utilize statistical outlier detection methods to identify potentially erroneous values
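
Two of the validation steps above, comparing predicted with experimental values and flagging outliers, can be sketched as follows. The text does not prescribe a particular outlier test; this example assumes a robust rule based on the median absolute deviation (MAD) with a threshold of three robust standard deviations. The function name and the sample values are hypothetical.

```python
import math
import statistics


def validation_report(experimental: list, predicted: list, threshold: float = 3.0) -> tuple:
    """Return (RMSE, indices of suspected outliers) for paired log values."""
    residuals = [e - p for e, p in zip(experimental, predicted)]
    rmse = math.sqrt(sum(r * r for r in residuals) / len(residuals))

    # Robust spread estimate: 1.4826 * MAD approximates one standard deviation
    # for normally distributed residuals.
    median_residual = statistics.median(residuals)
    mad = statistics.median([abs(r - median_residual) for r in residuals])
    robust_sigma = 1.4826 * mad

    outliers = [
        index for index, r in enumerate(residuals)
        if robust_sigma > 0 and abs(r - median_residual) > threshold * robust_sigma
    ]
    return rmse, outliers


# Hypothetical log P values for six compounds in a system not used for fitting.
experimental = [1.50, 2.10, 0.85, 3.20, 1.95, 2.60]
predicted = [1.46, 2.15, 0.80, 3.25, 2.90, 2.58]

rmse, outliers = validation_report(experimental, predicted)
print(f"RMSE = {rmse:.2f}, suspected outliers at indices {outliers}")
```

A flagged compound is a prompt to re-examine the underlying measurements (for example, for the mixed retention or electrostatic effects listed above), not an automatic reason to discard the value.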

Advanced Applications and Integration with Modern Thermodynamic Models

The thermodynamic information contained in LSER databases has proven valuable beyond traditional partition coefficient prediction. Recent research has focused on integrating LSER descriptors with equation-of-state models and quantum-chemical approaches to create more powerful predictive frameworks [8] [9].

The Partial Solvation Parameters (PSP) approach represents one such development, designed to facilitate the exchange of thermodynamic information between LSER databases and equation-of-state developments [8]. PSPs maintain an equation-of-state thermodynamic basis that permits estimation over a broad range of external conditions, with hydrogen-bonding PSPs (σa and σb) used to estimate the free energy change upon hydrogen bond formation (ΔGhb) [8].

Similarly, efforts to interconnect the quantum-mechanics based COSMO-RS (Conductor Screening Model for Realistic Solvation) with the LSER approach have shown promise [9]. Comparative studies have demonstrated "a rather good agreement" between COSMO-RS predictions of hydrogen-bonding contribution to solvation enthalpy and corresponding LSER predictions for most studied systems [9]. This convergence of approaches suggests a path toward developing a COSMO-LSER equation-of-state framework that would leverage the strengths of both methods [9].

Recent work has also explored the development of novel quantum chemical-LSER (QC-LSER) descriptors that combine quantum chemical calculations with the LSER approach for predicting hydrogen-bonding interaction free energies [16]. These developments are particularly useful for solvation studies in chemical and biochemical systems and for equation-of-state developments in molecular thermodynamics [16].

The critical role of LSER databases as sources of thermodynamic information continues to expand as new applications and integration with computational methods emerge. The robust experimental protocols for descriptor determination, comprehensive validation procedures, and ongoing methodological developments ensure that these databases remain indispensable tools for researchers predicting solute behavior in complex chemical and biological systems.

A Step-by-Step Protocol: From Experimental Data to Computational Prediction of Solute Descriptors

Partition coefficients are fundamental physicochemical parameters that quantify the equilibrium distribution of a solute between two immiscible phases. Within the context of Linear Solvation Energy Relationships (LSERs), the accurate experimental determination of these coefficients is a critical step for deriving solute descriptors, which in turn enable the prediction of a vast array of environmental, biological, and pharmaceutical properties [8]. These descriptors - Vx, L, E, S, A, and B - encapsulate a solute's characteristic volume, gas-liquid partitioning, excess molar refraction, dipolarity/polarizability, hydrogen-bond acidity, and hydrogen-bond basicity, respectively [8]. This protocol details the core experimental methodologies for measuring gas-liquid and water-solvent partition coefficients, which are essential for populating the LSER database and refining its predictive power [1].

Theoretical Foundation and LSER Context

The LSER model correlates free-energy-related properties using two primary equations. For solute transfer between two condensed phases (e.g., water and an organic solvent), the model is expressed as:

log(P) = cₚ + eₚE + sₚS + aₚA + bₚB + vₚVx [8]

where P is the partition coefficient and the lower-case letters are the system-specific LSER coefficients. For gas-to-solvent partitioning, the equation is:

log(KS) = cₖ + eₖE + sₖS + aₖA + bₖB + lₖL [8]

Here, L is the logarithm of the hexadecane/air partition coefficient, a key parameter often determined via experimental methods [17]. The experimental determination of partition coefficients like P and KS for a diverse set of solutes allows for the back-calculation and validation of these molecular descriptors, creating a robust, self-consistent database for predictive toxicology and pharmacokinetics [1] [18].

Experimental Protocols

Protocol 1: Shake-Flask Method for Water-Solvent Partition Coefficients

The shake-flask method is a direct experimental approach for determining the partition coefficient of a solute between water and a water-immiscible organic solvent, most commonly n-octanol (log KOW).

Materials and Equipment

- Solvents: High-purity water (e.g., HPLC-grade) and n-octanol, pre-saturated with each other before use.

- Solute: A stock solution of the analyte of known concentration.

- Glassware: Separatory funnels or glass vials with PTFE-lined caps.

- Apparatus: Mechanical shaker, centrifuge, and an analytical instrument (e.g., HPLC, GC, or UV-Vis spectrophotometer).

Step-by-Step Procedure

- Preparation: Pre-saturate the water and n-octanol by mixing them thoroughly and allowing the phases to separate. Use the saturated phases for the experiment.

- Equilibration: Accurately measure known volumes of the aqueous and organic phases (e.g., 10 mL each) into a glass vial. Add a known amount of the solute stock solution. Seal the vial and agitate it vigorously for a predetermined time (e.g., 1 hour) using a mechanical shaker at a constant temperature (e.g., 25 ± 1°C) to establish equilibrium [19].

- Phase Separation: After agitation, allow the phases to separate completely. This may be facilitated by centrifugation [19].

- Analysis: Carefully separate the two phases. Determine the concentration of the solute in each phase using a suitable analytical method. Chromatographic methods (HPLC, GC) are preferred for their specificity [19].

- Calculation: The partition coefficient, P, is calculated as the ratio of the equilibrium concentration of the solute in the organic phase to that in the aqueous phase: P = [A]org / [A]aq.

- Validation: The total mass of solute recovered in both phases should be compared to the mass introduced to account for any adsorption or degradation [19]. The OECD guideline recommends multiple runs with different phase volume ratios, with the resulting log P values falling within a range of ± 0.3 units [19].
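
The calculation and validation steps can be sketched in a few lines. The function names, units (mg/L for concentrations, L for volumes, mg for mass), and sample numbers are hypothetical. The consistency check interprets the ± 0.3 criterion as "every run lies within 0.3 log units of the mean", which is an assumption of this sketch rather than a quotation of the guideline.

```python
import math


def shake_flask_log_p(conc_org: float, conc_aq: float) -> float:
    """log P from equilibrium concentrations in the organic and aqueous phases."""
    return math.log10(conc_org / conc_aq)


def recovery_percent(conc_org: float, vol_org: float,
                     conc_aq: float, vol_aq: float, mass_added: float) -> float:
    """Mass balance: solute recovered in both phases relative to the mass introduced."""
    recovered = conc_org * vol_org + conc_aq * vol_aq
    return 100.0 * recovered / mass_added


def runs_consistent(log_p_values: list, tolerance: float = 0.3) -> bool:
    """True if every run lies within the tolerance of the mean log P."""
    mean = sum(log_p_values) / len(log_p_values)
    return all(abs(value - mean) <= tolerance for value in log_p_values)


# Hypothetical run: 10 mL of each phase, 1.0 mg of solute added.
log_p = shake_flask_log_p(conc_org=95.0, conc_aq=3.0)
recovery = recovery_percent(conc_org=95.0, vol_org=0.010,
                            conc_aq=3.0, vol_aq=0.010, mass_added=1.0)
print(f"log P = {log_p:.2f}, recovery = {recovery:.0f}%")
print("Runs consistent:", runs_consistent([1.50, 1.45, 1.62]))
```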

Diagram: Standardized workflow for the shake-flask method.

Protocol 2: Determining Gas-Liquid Partition Coefficients

Gas-liquid partitioning, characterized by Henry's Law constant (KH) or the air-water partition coefficient (KAW), is crucial for understanding the volatility of a substance.

Materials and Equipment

- Headspace Vials: Sealed vials with PTFE/silicone septa.

- Thermostatted Water Bath: For precise temperature control.

- Apparatus: Gas-tight syringes and a GC system equipped with a headspace autosampler or appropriate detector (e.g., Mass Spectrometer).

Step-by-Step Procedure

- Sample Preparation: Place a known volume of an aqueous solution of the solute into a headspace vial. Seal the vial immediately to prevent any gas exchange.

- Equilibration: Incubate the vial in a thermostatted water bath at a constant temperature (e.g., 25°C) until equilibrium between the aqueous and gas phases is reached.

- Sampling: Using a gas-tight syringe or an automated headspace sampler, extract a defined volume of the headspace (gas phase).

- Analysis: Inject the headspace sample into a Gas Chromatograph (GC) for quantitative analysis of the solute concentration in the gas phase, [A]air.

- Calculation: The air-water partition coefficient, KAW, is calculated as KAW = [A]air / [A]aq. Henry's Law constant (KH) is often reported in different units (e.g., Pa·m³/mol) and may require conversion [20] [17].
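
The calculation step can be sketched as below. Because the procedure measures only the headspace concentration, this example assumes the equilibrium aqueous concentration is obtained by mass balance from the total amount of solute placed in the sealed vial. It also shows the standard conversion between a Henry's Law constant in Pa·m³/mol and the dimensionless KAW (KAW = KH / RT). Function names and sample values are hypothetical.

```python
GAS_CONSTANT = 8.314462618  # J/(mol*K), equivalent to Pa*m^3/(mol*K)


def kaw_from_headspace(conc_air: float, n_total: float,
                       vol_air: float, vol_aq: float) -> float:
    """Dimensionless KAW = [A]air / [A]aq.

    conc_air in mol/m^3, n_total in mol, volumes in m^3. The aqueous
    concentration is inferred by mass balance (an assumption of this sketch).
    """
    conc_aq = (n_total - conc_air * vol_air) / vol_aq
    if conc_aq <= 0:
        raise ValueError("Headspace amount exceeds the total solute in the vial")
    return conc_air / conc_aq


def kaw_from_henry(kh_pa_m3_per_mol: float, temp_kelvin: float = 298.15) -> float:
    """Convert Henry's Law constant (Pa*m^3/mol) to dimensionless KAW."""
    return kh_pa_m3_per_mol / (GAS_CONSTANT * temp_kelvin)


# Hypothetical 20 mL vial: 10 mL solution, 10 mL headspace, 1 micromole of solute.
kaw = kaw_from_headspace(conc_air=0.02, n_total=1.0e-6,
                         vol_air=1.0e-5, vol_aq=1.0e-5)
print(f"KAW = {kaw:.3f}")
print(f"KAW from KH = 500 Pa*m^3/mol at 25 C: {kaw_from_henry(500.0):.3f}")
```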

Diagram: Workflow for determining the air-water partition coefficient.

Data Presentation and Analysis

Table 1: Key partition coefficients and their applications in LSER and environmental modeling.

| Partition Coefficient | Symbol | Phases | Primary Application in LSER / Context |

|---|---|---|---|

| Octanol-Water [20] [19] | KOW / log P | n-octanol / Water | Measures lipophilicity; foundational for determining the LSER solute descriptors Vx, S, A, B [8]. |

| Air-Water / Henry's Law [20] | KAW / KH | Air / Water | Quantifies volatility; related to the gas-liquid partitioning constant L [17]. |

| Hexadecane-Air [17] | KHdA / L | n-hexadecane / Air | Directly provides the LSER solute descriptor L; measures dispersion interactions [8] [17]. |

| Organic Carbon-Water [20] | KOC | Organic carbon / Water | Predicts environmental sorption to soils and sediments. |

| Distribution Coefficient [20] | D | Organic solvent / Water (at specific pH) | Accounts for ionization; essential for ionizable solutes (acids, bases, zwitterions). |

Critical Experimental Parameters

Table 2: Critical parameters and their impact on measurement accuracy and LSER descriptor determination.

| Parameter | Impact on Measurement | Recommendation for LSER Studies |

|---|---|---|

| Temperature [20] [19] | Affects equilibrium constant. A 1°C change can significantly alter the measured value. | Maintain constant temperature (± 0.5°C), typically 20-25°C. Report temperature precisely [19]. |

| Phase Purity & Saturation [19] | Impurities or unsaturated solvents shift equilibrium and introduce error. | Pre-saturate immiscible solvents before use. Use high-purity reagents. |

| Solute Concentration | High concentrations can cause non-ideal behavior (association, saturation). | Use dilute solutions to ensure ideal behavior and infinite dilution conditions. |

| Ionization State (pKa) [20] [17] | For ionizable compounds, the partition coefficient (P) is pH-dependent. | For log P, ensure the solute is in its neutral form. Use the distribution coefficient D for pH-specific values [20]. |

| Mass Balance Verification [19] | Confirms no solute loss via adsorption, degradation, or volatilization. | Mandatory step. Recovery should be 100% ± 10%. Data from recoveries outside this range should be treated with caution. |

The Scientist's Toolkit

Table 3: Essential research reagents and materials for partition coefficient experiments.

| Item | Function / Application |

|---|---|

| n-Octanol (saturated with water) [19] | Standard organic solvent for measuring lipophilicity (log KOW), a key parameter in LSER and QSAR. |

| n-Hexadecane [17] | A non-polar solvent used to determine the LSER solute descriptor L (log KHdA), which characterizes dispersion interactions. |

| Inert Headspace Vials & Septa | Essential for gas-liquid partitioning experiments to prevent contamination and loss of volatile analytes. |

| Centrifuge [19] | Used for complete and rapid separation of emulsified liquid phases after shaking (e.g., in shake-flask method). |

| Gas Chromatograph (GC) with FID/MS | Preferred analytical method for volatile and semi-volatile solutes in both liquid and gas phases [17]. |

| High-Performance Liquid Chromatograph (HPLC) [19] | Preferred analytical method for non-volatile, thermally labile solutes in the shake-flask method. |

| Thermostatted Shaker / Water Bath | Provides controlled agitation and constant temperature during the equilibration process, critical for reproducible results. |

Leveraging Chromatographic Retention Data for Descriptor Determination (HILIC & Reversed-Phase)

The determination of Linear Solvation Energy Relationship (LSER) solute descriptors is a critical methodology for quantitatively predicting the chromatographic behavior and physicochemical properties of novel compounds. These descriptors, central to the Abraham solvation parameter model, provide a powerful framework for understanding molecular interactions in different chromatographic systems, notably Reversed-Phase Liquid Chromatography (RPLC) and Hydrophilic Interaction Liquid Chromatography (HILIC) [21] [22]. For researchers in drug development, this approach offers a reliable protocol for estimating retention times, solubility, and other key properties essential for candidate selection and optimization.

This application note details experimental protocols for determining LSER solute descriptors from HILIC and RPLC retention data. We provide comprehensive guidelines for data collection, processing, and descriptor calculation, specifically addressing the challenges of analyzing both neutral and ionizable compounds.

Theoretical Foundations of the LSER Model

The Abraham solvation parameter model defines solute transfer between two phases using a linear free energy relationship (LFER) [21] [4]. For chromatography, the model is expressed as:

log k = c + e·E + s·S + a·A + b·B + v·V [21]

In this equation:

- k is the retention factor

- c is a system constant

- The uppercase letters (E, S, A, B, V) are solute descriptors representing specific molecular properties

- The lowercase coefficients (e, s, a, b, v) are system parameters reflecting the complementary properties of the chromatographic system

The solute descriptors are defined as follows [21] [4]:

- E: The solute's excess molar refraction

- S: Solute dipolarity/polarizability

- A: Overall hydrogen-bond acidity

- B: Overall hydrogen-bond basicity

- V: McGowan characteristic volume

For ionizable compounds, the model can be extended to include terms for the degree of ionization. A modified LSER model includes the D descriptor, which accounts for the ionization state based on the mobile phase pH and analyte pKa [22]. This descriptor can be further separated into D+ for bases and D- for acids to improve accuracy for ionizable compounds [22].

Experimental Protocols for Descriptor Determination

Stationary and Mobile Phase Selection

Stationary Phases:

- HILIC Mode: Select polar stationary phases with well-characterized properties. Options include bare silica, zwitterionic (e.g., sulfobetaine), amide, diol, or cyano phases [23] [24]. Different phases exhibit varying contributions from partitioning, polar interactions, and ion-exchange mechanisms [23].

- RPLC Mode: Use conventional non-polar phases such as C18, C8, or phenyl columns [21] [25].

Mobile Phase Preparation:

- HILIC: Prepare mobile phases with acetonitrile/water or methanol/water mixtures containing 3-40% water [21]. Include 10-20 mM volatile buffers (e.g., ammonium acetate) for pH control [25]. For zwitterionic columns, prepare mobile phases using plastic solvent bottles (e.g., PFA) instead of borosilicate glass to prevent ion leaching that causes retention time irreproducibility [26].

- RPLC: Use standard aqueous-organic mixtures with appropriate buffers for pH control.

Table 1: Research Reagent Solutions for LSER Descriptor Determination

| Reagent Category | Specific Examples | Function in Protocol |

|---|---|---|

| HILIC Stationary Phases | Bare Silica (e.g., BEH HILIC), Zwitterionic (e.g., ZIC-HILIC), Amide (e.g., TSKgel Amide-80) | Provides polar surface for retention; different phases offer distinct blends of partitioning and ionic interactions [23] [24] [25]. |

| RPLC Stationary Phases | C18, C8, Phenyl, Butylimidazolium-based | Provides hydrophobic surface for reversed-phase retention [21] [22]. |

| Organic Modifiers | Acetonitrile (MeCN), Methanol (MeOH) | Primary mobile phase component; affects retention mechanism and selectivity [21] [23]. |

| Buffers & Salts | Ammonium Acetate, Ammonium Formate | Controls mobile phase pH and ionic strength; minimizes unwanted ionic interactions [25] [27]. |

| Solvent Reservoirs | PFA (perfluoroalkoxy alkane, a tetrafluoroethylene copolymer) Bottles | Prevents leaching of ions that alter the water layer on HILIC phases and cause retention time drift [26]. |

Retention Measurement and Data Collection

- Probe Solute Selection: Assemble a diverse set of 30-40 reference compounds with well-established solute descriptors [22] [4]. Include both neutral and ionizable compounds (acids and bases) to adequately characterize the system.

- Chromatographic Conditions:

- Retention Factor Calculation: For each compound and mobile phase composition, calculate the retention factor: k = (tᵣ - t₀)/t₀, where tᵣ is the compound retention time and t₀ is the column dead time.

- Data Recording: Record log k values for each compound at multiple mobile phase compositions. For ionizable compounds, note the mobile phase pH and the analyte pKa (preferably measured in the mobile phase) for calculating D+ and D- descriptors [22].
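
The retention factor calculation above is simple enough to sketch directly. The function names and the sample retention times are hypothetical; the guard clauses reflect the fact that log k is undefined when a compound elutes at or before the dead time.

```python
import math


def retention_factor(t_r: float, t_0: float) -> float:
    """k = (tR - t0) / t0, with both times in the same units."""
    if t_0 <= 0:
        raise ValueError("Dead time t0 must be positive")
    return (t_r - t_0) / t_0


def log_retention_factor(t_r: float, t_0: float) -> float:
    """log10 of the retention factor; requires tR > t0."""
    k = retention_factor(t_r, t_0)
    if k <= 0:
        raise ValueError("Compound is unretained or excluded; log k is undefined")
    return math.log10(k)


# Hypothetical example: tR = 4.8 min, t0 = 1.2 min.
k = retention_factor(4.8, 1.2)
log_k = log_retention_factor(4.8, 1.2)
print(f"k = {k:.2f}, log k = {log_k:.3f}")
```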

System Characterization and Descriptor Calculation

- System Coefficient Determination: For each chromatographic system (stationary phase/mobile phase combination), perform multiple linear regression using the known solute descriptors of the reference compounds and their measured log k values to determine the system coefficients (e, s, a, b, v, c) [21] [4].

- Descriptor Calculation for New Compounds:

- Measure retention factors for the new compound across multiple chromatographic systems with known system coefficients.

- Use multiple linear regression to solve for the solute descriptors (E, S, A, B, V) that best fit the retention data across all systems [4].

- For ionizable compounds, include the D+ or D- term in the regression model [22].
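
Both regression steps above can be sketched with NumPy's least-squares solver. The first function fits the system coefficients from reference compounds with known descriptors; the second inverts the problem for a new compound measured on several characterized systems. The function names are hypothetical, and the data are synthetic and noise-free, generated only to confirm that each fit recovers the values used to create them. The D+ and D- terms for ionizable compounds are omitted from this sketch.

```python
import numpy as np


def fit_system_coefficients(descriptors: np.ndarray, log_k: np.ndarray) -> np.ndarray:
    """Fit (c, e, s, a, b, v) from reference compounds.

    descriptors: shape (n_compounds, 5), columns E, S, A, B, V.
    log_k: shape (n_compounds,).
    """
    design = np.column_stack([np.ones(len(descriptors)), descriptors])
    coefficients, *_ = np.linalg.lstsq(design, log_k, rcond=None)
    return coefficients


def solve_solute_descriptors(system_coefficients: np.ndarray, log_k: np.ndarray) -> np.ndarray:
    """Estimate (E, S, A, B, V) for one compound measured on several systems.

    system_coefficients: shape (n_systems, 6), columns c, e, s, a, b, v.
    log_k: shape (n_systems,).
    """
    system_coefficients = np.asarray(system_coefficients, dtype=float)
    # Move the intercept to the left-hand side, then solve for the descriptors.
    response = np.asarray(log_k, dtype=float) - system_coefficients[:, 0]
    descriptors, *_ = np.linalg.lstsq(system_coefficients[:, 1:], response, rcond=None)
    return descriptors


rng = np.random.default_rng(0)

# Step 1: synthetic reference set of 35 compounds on one system.
true_coefficients = np.array([-0.5, 0.3, -0.8, -0.4, -2.0, 2.5])
reference_descriptors = rng.uniform(0.0, 1.5, size=(35, 5))
reference_log_k = true_coefficients[0] + reference_descriptors @ true_coefficients[1:]
fitted_coefficients = fit_system_coefficients(reference_descriptors, reference_log_k)

# Step 2: synthetic new compound measured on 8 characterized systems.
systems = rng.normal(size=(8, 6))
true_descriptors = np.array([0.80, 0.90, 0.60, 0.30, 0.78])
new_log_k = systems[:, 0] + systems[:, 1:] @ true_descriptors
estimated_descriptors = solve_solute_descriptors(systems, new_log_k)

print(np.round(fitted_coefficients, 3))
print(np.round(estimated_descriptors, 3))
```

In practice V is usually fixed at its calculated value and E is often taken from refractive index data, which leaves fewer unknowns and a better conditioned fit than solving for all five descriptors at once.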

Figure 1: Experimental workflow for LSER solute descriptor determination using chromatographic retention data. HILIC-specific considerations are highlighted in red. MLR = Multiple Linear Regression.

Data Analysis and Interpretation

System Coefficient Interpretation

The system coefficients obtained from the multiple linear regression provide insights into the molecular interactions governing retention in each chromatographic system [21]:

- A positive v-coefficient indicates that larger molecular volume increases retention (common in RPLC)

- A negative v-coefficient suggests that larger size reduces retention (observed in HILIC with acetonitrile-rich mobile phases)

- Positive a- and b-coefficients indicate that hydrogen-bonding interactions strengthen retention

- Negative a- and b-coefficients suggest that hydrogen-bonding with the mobile phase reduces retention

Table 2: Representative System Coefficients for Different Chromatographic Modes

| System Coefficient | HILIC (Zwitterionic Phase with MeCN) | Reversed-Phase (C18) | Interpretation |

|---|---|---|---|

| v (Molecular Volume) | Negative [21] | Positive [21] | In HILIC, larger size reduces retention; in RPLC, it increases retention. |

| b (H-Bond Basicity) | Positive (MeCN) / Negative (MeOH) [21] | Negative [21] | H-bond basicity increases retention in HILIC-MeCN but decreases it in RPLC. |

| a (H-Bond Acidity) | Positive [21] | Variable | H-bond acidity generally increases retention in HILIC. |

| s (Polarity) | Positive [21] | Variable | Dipole-type interactions increase retention in HILIC. |

| Ion-Exchange Contribution | Slope ~ -1 (High) to 0 (Low) [23] | Not significant | Varies by HILIC phase; high for pentafluorophenyl, low for pentahydroxyl phases [23]. |

Special Considerations for HILIC Systems

HILIC retention involves multiple mechanisms that must be considered when interpreting data:

Partitioning Mechanism: The primary retention mechanism in HILIC involves partitioning between the organic-rich mobile phase and a water-rich layer adsorbed on the stationary phase [21] [23]. This mechanism predominates on phases with high water uptake capacity (e.g., bare silica, amide, diol phases).

Ionic Interactions: Many HILIC phases exhibit significant ion-exchange characteristics [23]. To evaluate this contribution, measure retention at different buffer concentrations and plot log k versus log[buffer concentration]. A slope approaching -1 indicates dominant ion-exchange retention, while a slope near 0 indicates minimal ionic interactions [23].

Organic Modifier Effects: The choice of organic modifier (acetonitrile vs. methanol) significantly impacts selectivity in HILIC [21]. With acetonitrile, solute hydrogen-bond basicity enhances retention, while with methanol, this contribution may become negative [21].
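
The buffer-concentration test for ionic interactions described above amounts to a straight-line fit of log k against log[buffer concentration]. A minimal sketch follows; the function names are hypothetical and the retention data are synthetic, constructed so that k is inversely proportional to buffer concentration (the pure ion-exchange limit).

```python
import math


def fit_line(x: list, y: list) -> tuple:
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def ion_exchange_slope(buffer_mm: list, retention_factors: list) -> float:
    """Slope of log k versus log[buffer]; near -1 suggests dominant ion exchange."""
    log_conc = [math.log10(c) for c in buffer_mm]
    log_k = [math.log10(k) for k in retention_factors]
    slope, _intercept = fit_line(log_conc, log_k)
    return slope


# Synthetic data: k = 20 / [buffer], i.e. ideal ion-exchange behaviour.
buffer_mm = [5.0, 10.0, 20.0, 40.0]
k_values = [20.0 / c for c in buffer_mm]

slope = ion_exchange_slope(buffer_mm, k_values)
print(f"Slope of log k vs log[buffer]: {slope:.2f}")
```

Intermediate slopes indicate mixed retention; how close to -1 or 0 a slope must be to be called "dominant" or "minimal" is a judgment the source leaves open.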

Figure 2: Complex retention mechanisms in HILIC chromatography and their relationship with different stationary phases and analyte types. The dominant mechanism varies significantly with stationary phase chemistry.

Addressing Ionizable Compounds

For ionizable analytes, the standard LSER model requires modification to account for pH-dependent ionization:

Calculate Degree of Ionization: For each ionizable compound, calculate the D descriptor as the fraction ionized at the mobile-phase pH. For an acid, D = 10^(pH - pKa) / (1 + 10^(pH - pKa)) [22]; for a base, the exponent is reversed to (pKa - pH). Use separate D+ and D- terms for basic and acidic compounds, respectively [22].

Mobile Phase Considerations: Note that pKa values are solvent-dependent and may shift significantly in high-organic mobile phases compared to aqueous values [22].

Extended LSER Model: Use the extended model including the D term: log k = c + e·E + s·S + a·A + b·B + v·V + d·D [22]
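As a rough illustration of the ionization correction, the sketch below computes the ionized fraction for an acid and for a base and then evaluates the extended model. The base form (exponent pKa - pH) follows from standard acid-base equilibria rather than from the cited source, and all function names and coefficient values are hypothetical placeholders.

```python
def ionized_fraction_acid(pH, pKa):
    """Fraction of an acid present as the anion (candidate D- term)."""
    ratio = 10.0 ** (pH - pKa)
    return ratio / (1.0 + ratio)

def ionized_fraction_base(pH, pKa):
    """Fraction of a base present as the cation (candidate D+ term).
    pKa refers to the conjugate acid; this form is an assumption."""
    ratio = 10.0 ** (pKa - pH)
    return ratio / (1.0 + ratio)

def extended_lser_log_k(coef, desc):
    """log k = c + e*E + s*S + a*A + b*B + v*V + d*D."""
    return coef["c"] + sum(coef[name.lower()] * desc[name] for name in "ESABVD")

# Hypothetical system coefficients and solute descriptors (illustration only).
coef = {"c": -0.5, "e": 0.1, "s": 0.4, "a": 0.8, "b": 1.2, "v": -0.6, "d": 0.9}
desc = {"E": 0.7, "S": 0.9, "A": 0.6, "B": 0.4, "V": 0.9,
        "D": ionized_fraction_acid(pH=5.2, pKa=4.2)}
log_k = extended_lser_log_k(coef, desc)
```

Because pKa shifts in high-organic mobile phases, the pKa passed to these functions should be the value appropriate to the mobile phase, not the aqueous value.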

Applications in Drug Development

The solute descriptors obtained through these protocols enable prediction of various properties critical to pharmaceutical development:

Chromatographic Method Development: Descriptors predict retention times and selectivity for new compounds, streamlining method development [21] [23].

Solubility Prediction: LSER descriptors facilitate prediction of aqueous solubility and solubility in pharmaceutically relevant solvents [4].

Partition Coefficient Estimation: Log P and other partition coefficients can be accurately predicted using the solute descriptors [4].

Absorption and Permeability Modeling: Descriptors correlate with membrane permeability and can support prediction of absorption characteristics [4].

This application note provides comprehensive protocols for determining LSER solute descriptors using HILIC and reversed-phase chromatographic retention data. The methodologies described enable robust characterization of molecular properties for both neutral and ionizable compounds, with special considerations for the complex retention mechanisms in HILIC chromatography. When properly implemented, this approach provides valuable descriptors that support various predictive modeling efforts in drug discovery and development.

The integration of these protocols into a broader thesis on descriptor determination methodologies offers a systematic approach to molecular characterization that bridges chromatographic behavior with fundamental solvation properties. Following the detailed experimental guidelines and data analysis procedures outlined herein will ensure reliable, reproducible descriptor determination applicable across diverse compound classes.

The precise prediction of physicochemical properties is a cornerstone of environmental science and chemical risk assessment, particularly in the context of Linear Solvation Energy Relationship (LSER) models for determining solute descriptors [28]. These descriptors are crucial for predicting partition coefficients, such as the octanol-water partition coefficient (Kow) and octanol-air partition coefficient (Koa), which characterize the bioaccumulation potential of chemicals [28]. However, experimentally determined solute descriptors are available for only about 8,000 chemicals, a minuscule fraction of the over 182 million registered chemicals [28]. This disparity creates a pressing need for robust computational methods to predict these descriptors accurately, especially for complex chemical structures with multiple functional groups where traditional quantitative structure-property relationship (QSPR) models struggle [28].

The integration of quantum mechanical (QM) calculations with molecular mechanical (MM) simulations has emerged as a powerful multiscale computational tool for studying chemical reactions in complex environments [29]. While direct ab initio QM/MM molecular dynamics simulations provide high accuracy, they are prohibitively time-consuming for adequate statistical sampling [29]. This application note details hybrid protocols that leverage machine learning to bridge the accuracy of quantum chemistry with the computational efficiency of molecular dynamics, providing researchers with practical methodologies for advancing LSER solute descriptor research.

Computational Methodologies

Foundational QM/MM Framework

The QM/MM approach partitions the system into a QM region, where bond breaking and formation occur and which is treated with quantum mechanical methods, and an MM region, which represents the complex environment and is treated with molecular mechanical force fields [29]. This hybrid scheme allows realistic modeling of chemical processes in solution or enzymes while maintaining computational feasibility. The fundamental LSER equations for predicting solute properties (SP) utilize solute descriptors as follows [28]:

- Eq. (1) for Condensed Phase Systems: SP = c + eE + sS + aA + bB + vV

- Eq. (2) for Air-Included Systems: SP = c + eE + sS + aA + bB + lL

- Eq. (3) for General Application: SP = c + sS + aA + bB + vV + lL

where the uppercase letters represent solute descriptors (excess molar refraction E, dipolarity/polarizability S, hydrogen-bond acidity A, hydrogen-bond basicity B, McGowan characteristic volume V, and the logarithmic hexadecane-air partition coefficient L), and the lowercase letters represent system constants [28].
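To make the bookkeeping concrete, the following sketch evaluates Eq. (1) and Eq. (2) for a single solute. The class and function names are illustrative, and the system constants and descriptor values are hypothetical numbers, not fitted values from any published system.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SoluteDescriptors:
    E: float  # excess molar refraction
    S: float  # dipolarity/polarizability
    A: float  # hydrogen-bond acidity
    B: float  # hydrogen-bond basicity
    V: float  # McGowan characteristic volume
    L: float  # logarithmic hexadecane-air partition coefficient

def sp_condensed(coef, sol):
    """Eq. (1): SP = c + eE + sS + aA + bB + vV."""
    return (coef["c"] + coef["e"] * sol.E + coef["s"] * sol.S
            + coef["a"] * sol.A + coef["b"] * sol.B + coef["v"] * sol.V)

def sp_air(coef, sol):
    """Eq. (2): SP = c + eE + sS + aA + bB + lL."""
    return (coef["c"] + coef["e"] * sol.E + coef["s"] * sol.S
            + coef["a"] * sol.A + coef["b"] * sol.B + coef["l"] * sol.L)

# Hypothetical solute and system constants (illustration only).
solute = SoluteDescriptors(E=0.8, S=0.9, A=0.6, B=0.3, V=0.8, L=3.8)
condensed_system = {"c": 0.1, "e": 0.5, "s": -1.0, "a": 0.0, "b": -3.5, "v": 3.8}
air_system = {"c": -0.2, "e": 0.1, "s": 1.0, "a": 2.0, "b": 1.0, "l": 0.5}

sp1 = sp_condensed(condensed_system, solute)
sp2 = sp_air(air_system, solute)
```

Keeping the solute descriptors in one object and each system's constants in another mirrors the separation the LSER model itself makes between solute and system.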

Deep Learning for Solute Descriptor Prediction

Protocol: Deep Neural Network (DNN) for Solute Descriptor Prediction

- Objective: To accurately predict LSER solute descriptors for chemicals, overcoming limitations of traditional fragmental QSPR approaches for complex structures [28].

- Curated Dataset:

  - Begin with the Abraham Absolv dataset of 7,881 chemicals [28].

  - Filter to chemicals with available S descriptors, reducing to 7,241 chemicals [28].

  - Exclude metals, organometallics, and gases (e.g., argon, nitrogen, methane) [28].

  - Implement error-checking for descriptor values outside plausible ranges, finalizing with approximately 6,364 chemicals [28].

- Model Development:

  - Singletask Models: Develop individual DNN models for each solute descriptor (E, S, A, B, V, L). These are preferred over multitask models for smaller datasets [28].

  - Graph Representations: Use graph representations of chemicals as input features for the DNN [28].

  - Data Augmentation: Employ tautomer-based data augmentation strategies to improve DNN training [28].

  - Performance Validation: Achieve root mean square errors (RMSE) between 0.11 and 0.46 for different solute descriptors [28].

- Implementation:
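The curation and validation steps listed above can be sketched in a few lines. This is only an illustration under stated assumptions, not the pipeline used in [28]: the record layout, the `cls` field, and the plausibility bounds are hypothetical, and the network itself is omitted.

```python
import math

DESCRIPTORS = ("E", "S", "A", "B", "V", "L")

# Hypothetical plausibility bounds, for illustration only.
PLAUSIBLE = {"E": (-2.0, 10.0), "S": (-1.0, 10.0), "A": (0.0, 5.0),
             "B": (0.0, 10.0), "V": (0.0, 20.0), "L": (-5.0, 50.0)}
EXCLUDED_CLASSES = {"metal", "organometallic", "gas"}

def curate(records):
    """Keep records that have S, are not excluded, and pass range checks."""
    kept = []
    for rec in records:
        if rec.get("S") is None:
            continue  # S descriptor is required
        if rec.get("cls") in EXCLUDED_CLASSES:
            continue  # metals, organometallics, gases are dropped
        in_range = all(
            rec.get(d) is None or PLAUSIBLE[d][0] <= rec[d] <= PLAUSIBLE[d][1]
            for d in DESCRIPTORS
        )
        if in_range:
            kept.append(rec)
    return kept

def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = sum((p - o) ** 2 for p, o in zip(predicted, observed))
    return math.sqrt(squared / len(predicted))

# Hypothetical records: only the first one survives curation.
records = [
    {"name": "x1", "cls": "organic", "E": 0.8, "S": 0.9, "A": 0.6, "B": 0.3, "V": 0.8, "L": 3.8},
    {"name": "x2", "cls": "organic", "E": 0.5, "S": None, "A": 0.0, "B": 0.1, "V": 0.7, "L": 2.9},
    {"name": "x3", "cls": "gas", "E": 0.0, "S": 0.0, "A": 0.0, "B": 0.0, "V": 0.2, "L": -0.7},
    {"name": "x4", "cls": "organic", "E": 0.6, "S": 0.7, "A": 99.0, "B": 0.2, "V": 0.9, "L": 4.1},
]
curated = curate(records)
```

In a singletask setup, `rmse` would be evaluated separately for each descriptor on held-out chemicals.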

Neural Network-Driven QM/MM Molecular Dynamics

Protocol: Adaptive QM/MM-NN Molecular Dynamics

- Objective: To perform direct MD simulations approximating ab initio QM/MM accuracy at a fraction of the computational cost, enabling statistical sampling for free energy calculations [29].

- Initial Setup:

- Neural Network Training:

- Iterative MD Scheme:

  - Cycle 1: Perform MD simulations on the NN-predicted potential energy surface [29].

  - Adaptive Selection: Identify configurations encountered during MD that were excluded from the initial NN training database and may cause sampling difficulties [29].

  - Database Update: Add these new configurations to the database, recalculate ab initio QM/MM energies, and retrain the NN [29].

  - Cycle Repetition: Repeat MD simulations and NN updates for 2-4 cycles until convergence, reproducing results at the ab initio QM/MM level [29].

- Output Applications:

Quantitative Data Presentation

The following tables summarize key quantitative data for comparing traditional and hybrid computational approaches in LSER and molecular dynamics.

Table 1: Performance Comparison of Solute Descriptor Prediction Methods [28]

| Prediction Method | Model Type | RMSE Range for Descriptors | Key Application Strength |

|---|---|---|---|

| Deep Neural Network (DNN) | Singletask/Graph-Based | 0.11 - 0.46 | Complex structures with multiple functional groups |

| QSPR (LSERD) | Fragmental-based | Not Specified | Simple chemical structures with one functional group |

| ACD/Absolv | Fragmental-based | Not Specified | Simple chemical structures with one functional group |

| Partition Coefficient Dataset | Dataset Size (Chemicals) | Typical RMSE (log units) |

|---|---|---|

| Octanol-Water (Kow) | 12,010 | ~1.0 |

| Water-Air (Kwa) | 696 | ~1.3 |

| Octanol-Air (Koa) | Not Specified | Comparable to other methods |

Experimental Protocols

Protocol for Free Energy Calculation via Adaptive QM/MM-NN MD

This protocol details the calculation of free energy changes for a chemical reaction in solution using the adaptive QM/MM-NN method.

System Preparation:

- Solute: Create initial coordinates for the reactant molecule(s). Determine the quantum mechanical (QM) region, encompassing all atoms directly involved in bond breaking/formation.

- Solvent: Embed the solute in a pre-equilibrated box of water molecules (or other solvent) representing the molecular mechanical (MM) region.

- Force Field Assignment: Assign appropriate MM force field parameters to the solvent and any non-QM solute atoms.

Initial Sampling and NN Training:

- SQM/MM MD: Perform molecular dynamics simulation using a semiempirical QM/MM method (e.g., AM1/MM or SCC-DFTB/MM). Ensure sampling covers the relevant reaction coordinate.

- Configuration Selection: Extract 500-1000 configurations from the SQM/MM MD trajectory.

- Ab Initio Single-Point Calculations: For each selected configuration, perform a single-point energy calculation at the target ab initio QM/MM level (e.g., DFT/MM).

- Neural Network Training: Train the QM/MM-NN model using the SQM/MM configurations as input and the energy difference (ΔE = Eab initio - ESQM) as the target output.
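The training target described above is a correction, ΔE = E(ab initio) - E(SQM), rather than the full energy. The toy sketch below shows that idea on a one-dimensional coordinate. It swaps the neural network for a cubic polynomial fit, uses analytic stand-ins for both energy levels, and uses 50 training points instead of the 500-1000 configurations of the protocol; all of these are assumptions made to keep the example small.

```python
import numpy as np

def e_sqm(x):
    """Stand-in for the cheap semiempirical QM/MM energy."""
    return x ** 2

def e_ab_initio(x):
    """Stand-in for the expensive ab initio QM/MM energy."""
    return x ** 2 + 0.3 * x ** 3 - 0.1 * x

def train_delta_model(x_train, degree=3):
    """Fit the correction dE = E_ab_initio - E_sqm (polynomial, not an NN)."""
    delta = e_ab_initio(x_train) - e_sqm(x_train)
    return np.polyfit(x_train, delta, degree)

def corrected_energy(x, coeffs):
    """Low-level energy plus the learned correction."""
    return e_sqm(x) + np.polyval(coeffs, x)

x_train = np.linspace(-1.5, 1.5, 50)   # configurations from the SQM/MM trajectory
coeffs = train_delta_model(x_train)

x_test = np.array([-1.0, 0.25, 1.2])
max_err = np.max(np.abs(corrected_energy(x_test, coeffs) - e_ab_initio(x_test)))
```

Learning the difference is attractive because it is usually smoother and smaller in magnitude than the total energy, so fewer high-level single points are needed.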

Iterative Adaptive MD:

- Cycle Initiation: Launch an MD simulation on the NN-corrected potential energy surface.

- Configuration Monitoring: During the MD, identify new configurations where the NN prediction has high uncertainty or is an outlier compared to the training set.

- Database Update: Add these new configurations to the training database and compute their ab initio QM/MM energies.

- Model Retraining: Retrain the QM/MM-NN model with the expanded database.

- Convergence Check: Repeat the configuration monitoring, database update, and model retraining steps for 2-4 cycles until the free energy profile and key geometric parameters remain stable between cycles.
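The control flow of the adaptive cycle can be sketched as follows. This is a schematic under heavy assumptions: the MD run is replaced by uniform random draws that ignore the fitted surface, the ab initio calculation by an analytic function, the neural network by a polynomial fit, and the outlier test by a simple nearest-neighbour distance threshold. All names are hypothetical.

```python
import numpy as np

def reference_energy(x):
    """Stand-in for an ab initio QM/MM single-point energy."""
    return np.sin(x) + 0.1 * x ** 2

def fit_model(x, y, degree=5):
    """Stand-in for NN training: least-squares polynomial fit."""
    return np.polyfit(x, y, degree)

def run_md(model, rng, n_steps=200):
    """Stand-in for MD on the model surface (the toy sampler ignores `model`)."""
    return rng.uniform(-3.0, 3.0, n_steps)

def select_new(configs, train_x, min_dist=0.2):
    """Flag configurations far from every training point."""
    nearest = np.abs(configs[:, None] - train_x[None, :]).min(axis=1)
    return configs[nearest > min_dist]

def adaptive_training(max_cycles=4, seed=0):
    rng = np.random.default_rng(seed)
    train_x = np.linspace(-1.0, 1.0, 20)        # initial database
    train_y = reference_energy(train_x)
    n_flagged = []                              # new configurations per cycle
    for _ in range(max_cycles):
        model = fit_model(train_x, train_y)
        visited = run_md(model, rng)
        new_x = select_new(visited, train_x)
        n_flagged.append(len(new_x))
        if len(new_x) == 0:
            break                               # nothing new: treat as converged
        train_x = np.concatenate([train_x, new_x])
        train_y = np.concatenate([train_y, reference_energy(new_x)])
    return fit_model(train_x, train_y), train_x, n_flagged

model, train_x, n_flagged = adaptive_training()
```

In a real application the stopping rule would be the stability of the free energy profile and key geometric parameters between cycles, not the absence of flagged configurations.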

Free Energy Analysis:

- Umbrella Sampling: Use the final NN-predicted PES to run umbrella sampling simulations along the predefined reaction coordinate.

- WHAM Analysis: Apply the Weighted Histogram Analysis Method (WHAM) to the umbrella sampling data to compute the potential of mean force (PMF), which is the free energy profile for the reaction.
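For orientation, a compact version of the WHAM self-consistency iteration for harmonic umbrella windows is sketched below. It is tested here on synthetic data only: the underlying potential is an assumed harmonic well, so each biased window can be sampled exactly from a Gaussian. The function name, parameters, and all numerical settings are illustrative choices, not values from the protocol.

```python
import numpy as np

def wham(samples, centers, k_spring, kT, edges, max_iter=5000, tol=1e-8):
    """Return bin midpoints and the PMF (in units of kT's energy unit)."""
    centers = np.asarray(centers, dtype=float)
    edges = np.asarray(edges, dtype=float)
    mids = 0.5 * (edges[:-1] + edges[1:])
    counts = np.array([np.histogram(s, bins=edges)[0] for s in samples], dtype=float)
    n_tot = counts.sum(axis=1)                      # in-range samples per window
    # Boltzmann factor of each window's harmonic bias at each bin midpoint
    boltz = np.exp(-0.5 * k_spring * (mids[None, :] - centers[:, None]) ** 2 / kT)
    numer = counts.sum(axis=0)
    f = np.zeros(len(centers))                      # window free energies
    for _ in range(max_iter):
        denom = (n_tot[:, None] * np.exp(f[:, None] / kT) * boltz).sum(axis=0)
        p = numer / denom                           # unbiased probability estimate
        f_new = -kT * np.log((boltz * p[None, :]).sum(axis=1))
        f_new -= f_new[0]                           # fix the arbitrary offset
        converged = np.max(np.abs(f_new - f)) < tol
        f = f_new
        if converged:
            break
    denom = (n_tot[:, None] * np.exp(f[:, None] / kT) * boltz).sum(axis=0)
    p = numer / denom
    with np.errstate(divide="ignore"):
        pmf = -kT * np.log(p)                       # empty bins become +inf
    pmf -= pmf[np.isfinite(pmf)].min()
    return mids, pmf

# Synthetic test: true potential U(x) = 0.5 * a * x**2 with harmonic umbrellas.
rng = np.random.default_rng(0)
a, k_spring, kT = 2.0, 10.0, 1.0
centers = np.linspace(-2.0, 2.0, 9)
samples = [rng.normal(k_spring * c / (a + k_spring), np.sqrt(kT / (a + k_spring)), 5000)
           for c in centers]
mids, pmf = wham(samples, centers, k_spring, kT, np.linspace(-2.0, 2.0, 41))
```

On this synthetic data the recovered PMF should follow x² (in units of kT) in the well-sampled region, up to statistical noise and the arbitrary vertical offset.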

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Hybrid QM/MM and LSER Research

| Item / Software | Type | Primary Function |

|---|---|---|

| Abraham Absolv Dataset | Dataset | A curated collection of ~7,800 chemicals with experimentally determined solute descriptors for model training and validation [28]. |

| LSERD Platform | Software (Online) | A free online platform using a fragmental QSPR approach to predict solute descriptors for LSER models [28]. |

| ACD/Percepta (Absolv) | Software (Commercial) | A commercial software package providing predictions of solute descriptors, useful for benchmarking against new methods [28]. |

| Semiempirical Methods (AM1, PM3, SCC-DFTB) | Computational Method | Fast, approximate QM methods used for initial configuration sampling and MD in QM/MM simulations to reduce computational cost [29]. |

| Ab Initio QM Methods (DFT) | Computational Method | Higher-accuracy quantum chemical methods (e.g., Density Functional Theory) used for target energy calculations and training data generation [29]. |

| Neural Network Potentials (e.g., QM/MM-NN) | Computational Model | Machine learning models trained to predict high-level QM energies from low-level inputs, enabling accurate and efficient MD simulations [29]. |

Workflow Visualization

Figure 1: Adaptive QM/MM-NN workflow for free energy calculation. The process begins with initial sampling and neural network training (green), followed by an iterative refinement cycle (blue) that continues until convergence is achieved.

Figure 2: Solute descriptor prediction and LSER application ecosystem. Experimental data feeds both traditional QSPR and modern DNN approaches, which in turn supply descriptors to the core LSER model for various physicochemical applications.